Operating system: CentOS Stream release 8

Hadoop version: 3.3.6

Cluster role planning

Hadoop cluster components:

| Cluster | Roles |
| --- | --- |
| HDFS cluster | NameNode, DataNode, SecondaryNameNode |
| YARN cluster | ResourceManager, NodeManager |

Node assignment:

| Node | Roles |
| --- | --- |
| node1.hadoop.study | NameNode, DataNode, ResourceManager, NodeManager |
| node2.hadoop.study | SecondaryNameNode, DataNode, NodeManager |
| node3.hadoop.study | DataNode, NodeManager |

Environment preparation

Create three virtual machines running CentOS Stream 8:

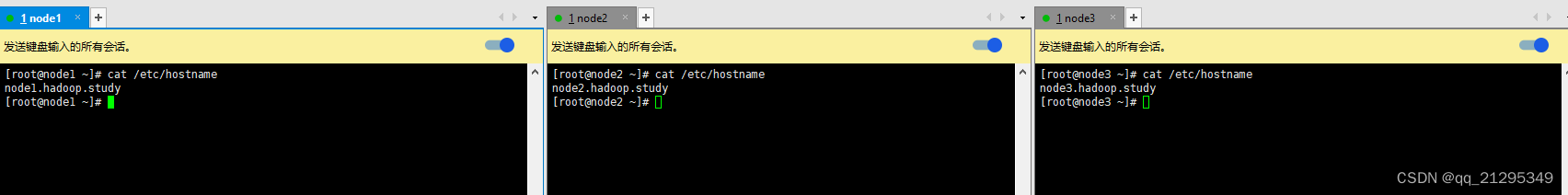

On each VM, edit /etc/hostname so that the three machines are named node1.hadoop.study, node2.hadoop.study, and node3.hadoop.study respectively.

Edit /etc/hosts on every node and append the following lines to the end of the file:

```text
192.168.80.131 node1.hadoop.study
192.168.80.132 node2.hadoop.study
192.168.80.133 node3.hadoop.study
```
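Appending by hand is easy to repeat by accident on a rerun. A minimal sketch of an idempotent version, written as a shell function so it can be tried on a scratch file first; the name `add_hosts_entries` is hypothetical, and the IPs are the ones listed above:

```shell
# Sketch: append the cluster's IP/hostname mappings to a hosts file,
# skipping lines that are already present, so it is safe to rerun.
# Hypothetical helper; defaults to /etc/hosts, or pass a scratch file.
add_hosts_entries() {
  local hosts_file="${1:-/etc/hosts}" entry
  for entry in \
    "192.168.80.131 node1.hadoop.study" \
    "192.168.80.132 node2.hadoop.study" \
    "192.168.80.133 node3.hadoop.study"; do
    # -x: whole-line match, -F: fixed string (dots are not regex wildcards)
    if ! grep -qxF "$entry" "$hosts_file" 2>/dev/null; then
      printf '%s\n' "$entry" >> "$hosts_file"
    fi
  done
}
```

On each node, running `add_hosts_entries` as root updates `/etc/hosts`; running it twice leaves a single copy of each line.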

Set up passwordless SSH on node1 so that it can connect directly to node1 (itself included), node2, and node3:

```shell
ssh-keygen
ssh-copy-id root@node1.hadoop.study
ssh-copy-id root@node2.hadoop.study
ssh-copy-id root@node3.hadoop.study
```
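To confirm the keys were copied, each host can be probed with `ssh -o BatchMode=yes`, which fails instead of prompting for a password. A sketch, with `check_passwordless_ssh` as a hypothetical helper name:

```shell
# Sketch: confirm node1 can log in to every node without a password.
# BatchMode=yes makes ssh fail instead of prompting, so a missing key
# shows up as FAILED rather than as a password prompt.
check_passwordless_ssh() {
  local host failed=0
  for host in node1.hadoop.study node2.hadoop.study node3.hadoop.study; do
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "root@${host}" true; then
      echo "OK      ${host}"
    else
      echo "FAILED  ${host}"
      failed=$((failed + 1))
    fi
  done
  # Exit status is the number of hosts that could not be reached.
  return "$failed"
}
```

Run it on node1 after the `ssh-copy-id` commands; every line should start with `OK`.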

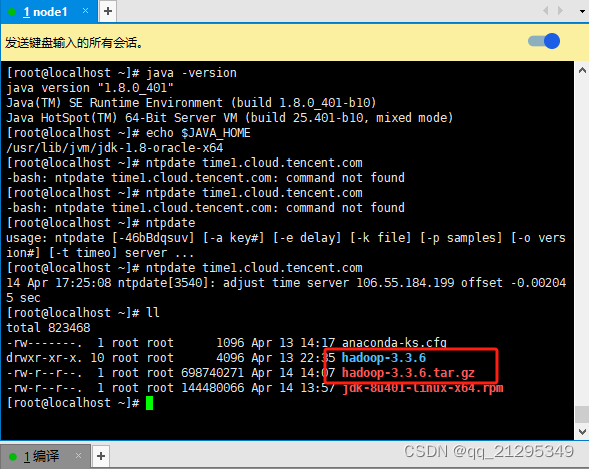

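Install Oracle JDK 8 (on every node, since hadoop-env.sh points each node at a local JDK):

Download Oracle JDK 8 from the "Java Downloads | Oracle" page. It is installed with rpm here, so download the x64 RPM package.

Install:

```shell
rpm -ivh jdk-8u401-linux-x64.rpm
```

Verify: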
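For example, `java -version` should report a 1.8 release (it prints to stderr, not stdout). A minimal sketch of that check as a function; `check_java8` is a hypothetical name, and it assumes the `java` on `PATH` is the JDK just installed:

```shell
# Sketch: confirm the java on PATH is a 1.8 release.
# java -version writes to stderr, hence the 2>&1.
check_java8() {
  local v
  v=$(java -version 2>&1 | head -n 1)
  echo "$v"
  case "$v" in
    *'version "1.8.'*) return 0 ;;
    *) return 1 ;;
  esac
}
```

Run `check_java8` on each node; `ls /usr/lib/jvm/` should also list the directory used as `JAVA_HOME` later (`jdk-1.8-oracle-x64` in this guide).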

Disable the firewall (on every node):

```shell
systemctl stop firewalld.service
systemctl disable firewalld.service
```
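To confirm the firewall is both stopped now and disabled at boot, `systemctl is-active` and `systemctl is-enabled` can be queried with `--quiet`, which reports through the exit code only. A sketch; `check_firewall_off` is a hypothetical name:

```shell
# Sketch: confirm firewalld is stopped and will not start at boot.
# With --quiet, systemctl prints nothing and signals the state via exit code.
check_firewall_off() {
  if systemctl is-active --quiet firewalld; then
    echo "firewalld is still running"; return 1
  fi
  if systemctl is-enabled --quiet firewalld; then
    echo "firewalld is still enabled at boot"; return 1
  fi
  echo "firewalld is stopped and disabled"
}
```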

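Install ntpdate (provided by the `wntp` package from the wlnmp repository) and synchronize the clocks of the cluster nodes (on every node):

```shell
rpm -ivh http://mirrors.wlnmp.com/centos/wlnmp-release-centos.noarch.rpm
yum install -y wntp
ntpdate time1.cloud.tencent.com
```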
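`ntpdate` makes a one-off adjustment. To see whether the nodes now agree, a sketch that reads each node's epoch time over SSH from node1 and prints the spread in seconds; it assumes the passwordless SSH set up earlier, and `max_clock_skew` is a hypothetical name:

```shell
# Sketch: print the largest clock difference between nodes, in seconds.
# The ssh round trip adds roughly a second of noise, so 0 or 1 means "in sync".
max_clock_skew() {
  local host t min= max=
  for host in node1.hadoop.study node2.hadoop.study node3.hadoop.study; do
    t=$(ssh -o BatchMode=yes "root@${host}" date +%s) || return 1
    if [ -z "$min" ] || [ "$t" -lt "$min" ]; then min=$t; fi
    if [ -z "$max" ] || [ "$t" -gt "$max" ]; then max=$t; fi
  done
  echo $((max - min))
}
```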

Upload the compiled Hadoop tarball to /root on node1 and extract it:

```shell
tar -zxvf hadoop-3.3.6.tar.gz
```

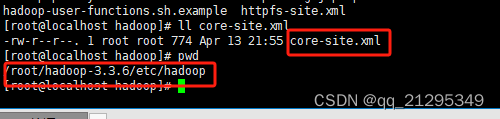

Edit `etc/hadoop/core-site.xml` (relative to the Hadoop directory, `/root/hadoop-3.3.6`). File contents:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <!-- NameNode address; no port is given, so the default RPC port 8020 is used -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://node1.hadoop.study</value>
  </property>
  <!-- Base directory for Hadoop's local data (NameNode metadata, DataNode blocks, etc.) -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/root/data/hadoop-3.3.6</value>
  </property>
  <!-- User identity used when browsing HDFS from the web UI -->
  <property>
    <name>hadoop.http.staticuser.user</name>
    <value>root</value>
  </property>
</configuration>
```

Edit `etc/hadoop/hdfs-site.xml`:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <!-- SecondaryNameNode HTTP address; start-dfs.sh starts the
       SecondaryNameNode on this host (node2). 9868 is the default port. -->
  <property>
    <name>dfs.namenode.secondary.http-address</name>
    <value>node2.hadoop.study:9868</value>
  </property>
</configuration>
```

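Edit `etc/hadoop/yarn-site.xml`:

```xml
<?xml version="1.0"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->
<configuration>

<!-- Site specific YARN configuration properties -->

  <!-- Host that runs the ResourceManager -->
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>node1.hadoop.study</value>
  </property>
  <!-- Auxiliary service the NodeManagers need for the MapReduce shuffle -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <!-- Smallest and largest memory (MB) the scheduler grants to a single container -->
  <property>
    <name>yarn.scheduler.minimum-allocation-mb</name>
    <value>128</value>
  </property>
  <property>
    <name>yarn.scheduler.maximum-allocation-mb</name>
    <value>1024</value>
  </property>
</configuration>
```

Note: by default MapReduce requests 1536 MB for its ApplicationMaster container (`yarn.app.mapreduce.am.resource.mb`), which exceeds the 1024 MB maximum above, so the ResourceManager may reject MapReduce jobs. If that happens, raise `yarn.scheduler.maximum-allocation-mb` or lower `yarn.app.mapreduce.am.resource.mb` in mapred-site.xml.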

Edit `etc/hadoop/mapred-site.xml`:

```xml
<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <!-- Run MapReduce jobs on YARN -->
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <!-- Tell the ApplicationMaster, map tasks, and reduce tasks where MapReduce is installed -->
  <property>
    <name>yarn.app.mapreduce.am.env</name>
    <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>
  </property>
  <property>
    <name>mapreduce.map.env</name>
    <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>
  </property>
  <property>
    <name>mapreduce.reduce.env</name>
    <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>
  </property>
</configuration>
```

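Edit `etc/hadoop/hadoop-env.sh` and append the following to the end of the file:

```shell
# JDK installed from the Oracle RPM
export JAVA_HOME=/usr/lib/jvm/jdk-1.8-oracle-x64
export HADOOP_HOME=/root/hadoop-3.3.6
# The daemons are started as root; without these variables the
# Hadoop 3 start scripts refuse to operate on them as root
export HDFS_NAMENODE_USER=root
export HDFS_DATANODE_USER=root
export HDFS_SECONDARYNAMENODE_USER=root
export YARN_RESOURCEMANAGER_USER=root
export YARN_NODEMANAGER_USER=root
```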
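Once `hadoop-env.sh` sets `JAVA_HOME`, `hdfs getconf -confKey <key>` prints the value Hadoop actually loads from the XML files, which catches typos in file or property names. A sketch checking keys from core-site.xml and hdfs-site.xml; `check_conf_key` and `check_hdfs_conf` are hypothetical helpers, and `HDFS` defaults to the launcher under the `HADOOP_HOME` above:

```shell
# Sketch: compare the values Hadoop loads with the ones written above.
# Assumption: Hadoop is unpacked at /root/hadoop-3.3.6 (override with HDFS=...).
HDFS="${HDFS:-/root/hadoop-3.3.6/bin/hdfs}"

# $1 = property name, $2 = expected value
check_conf_key() {
  local actual
  actual=$("$HDFS" getconf -confKey "$1" 2>/dev/null)
  if [ "$actual" = "$2" ]; then
    echo "OK   $1 = $actual"
  else
    echo "DIFF $1: expected '$2', got '$actual'"
    return 1
  fi
}

check_hdfs_conf() {
  local rc=0
  check_conf_key fs.defaultFS hdfs://node1.hadoop.study || rc=1
  check_conf_key dfs.namenode.secondary.http-address node2.hadoop.study:9868 || rc=1
  check_conf_key hadoop.tmp.dir /root/data/hadoop-3.3.6 || rc=1
  return "$rc"
}
```

Running `check_hdfs_conf` on node1 should print three `OK` lines.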

Edit `etc/hadoop/workers`: delete the existing content (by default a single `localhost` line) and add:

```text
node1.hadoop.study
node2.hadoop.study
node3.hadoop.study
```
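The same edit can be scripted. A sketch that overwrites the file with a heredoc; `write_workers` is a hypothetical helper and its default path assumes Hadoop in `/root/hadoop-3.3.6`:

```shell
# Sketch: replace the workers file with the three hostnames.
# '>' truncates the file, which drops the default "localhost" entry.
write_workers() {
  local workers_file="${1:-/root/hadoop-3.3.6/etc/hadoop/workers}"
  cat > "$workers_file" <<'EOF'
node1.hadoop.study
node2.hadoop.study
node3.hadoop.study
EOF
}
```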

Copy the entire Hadoop directory to node2 and node3 (from /root on node1):

```shell
scp -r hadoop-3.3.6 node2.hadoop.study:/root
scp -r hadoop-3.3.6 node3.hadoop.study:/root
```

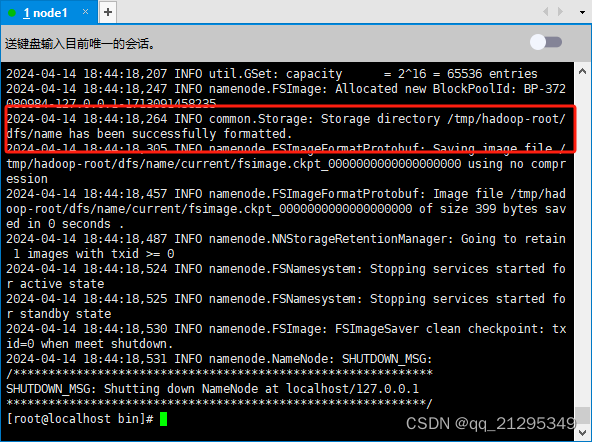

Before HDFS is started for the first time, it must be formatted.

Run this on the NameNode node, i.e. node1:

```shell
cd /root/hadoop-3.3.6/bin
./hdfs namenode -format
```
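Formatting is meant to happen only once: it generates a new clusterID, and DataNodes that still hold the old one refuse to register. A minimal sketch of a guard that skips formatting when metadata already exists; it assumes `dfs.namenode.name.dir` is left at its default, `${hadoop.tmp.dir}/dfs/name` (here `/root/data/hadoop-3.3.6/dfs/name`), and `format_namenode_once` is a hypothetical name:

```shell
# Sketch: format the NameNode only if it has never been formatted.
# Assumption: default dfs.namenode.name.dir under hadoop.tmp.dir from core-site.xml.
HDFS="${HDFS:-/root/hadoop-3.3.6/bin/hdfs}"
NAME_DIR="${NAME_DIR:-/root/data/hadoop-3.3.6/dfs/name}"

format_namenode_once() {
  if [ -f "${NAME_DIR}/current/VERSION" ]; then
    echo "already formatted: ${NAME_DIR}/current/VERSION exists, skipping"
    return 0
  fi
  # -nonInteractive: abort instead of prompting if the name directory exists
  "$HDFS" namenode -format -nonInteractive
}
```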

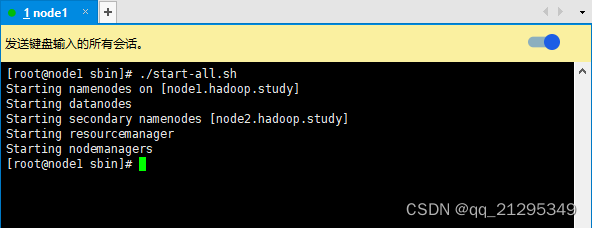

Start the HDFS and YARN clusters (run on node1):

```shell
cd /root/hadoop-3.3.6/sbin
./start-all.sh
```

Verify:

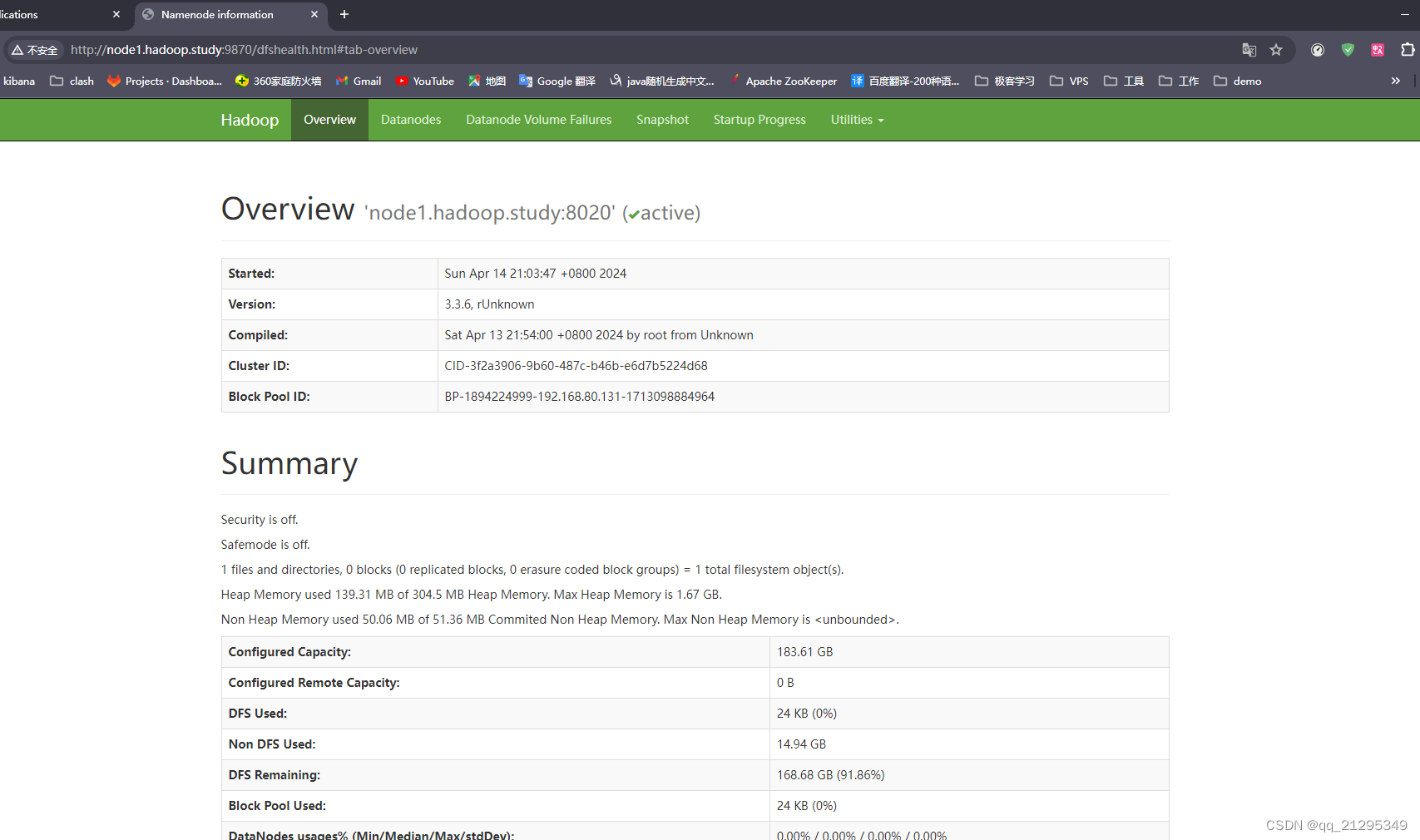

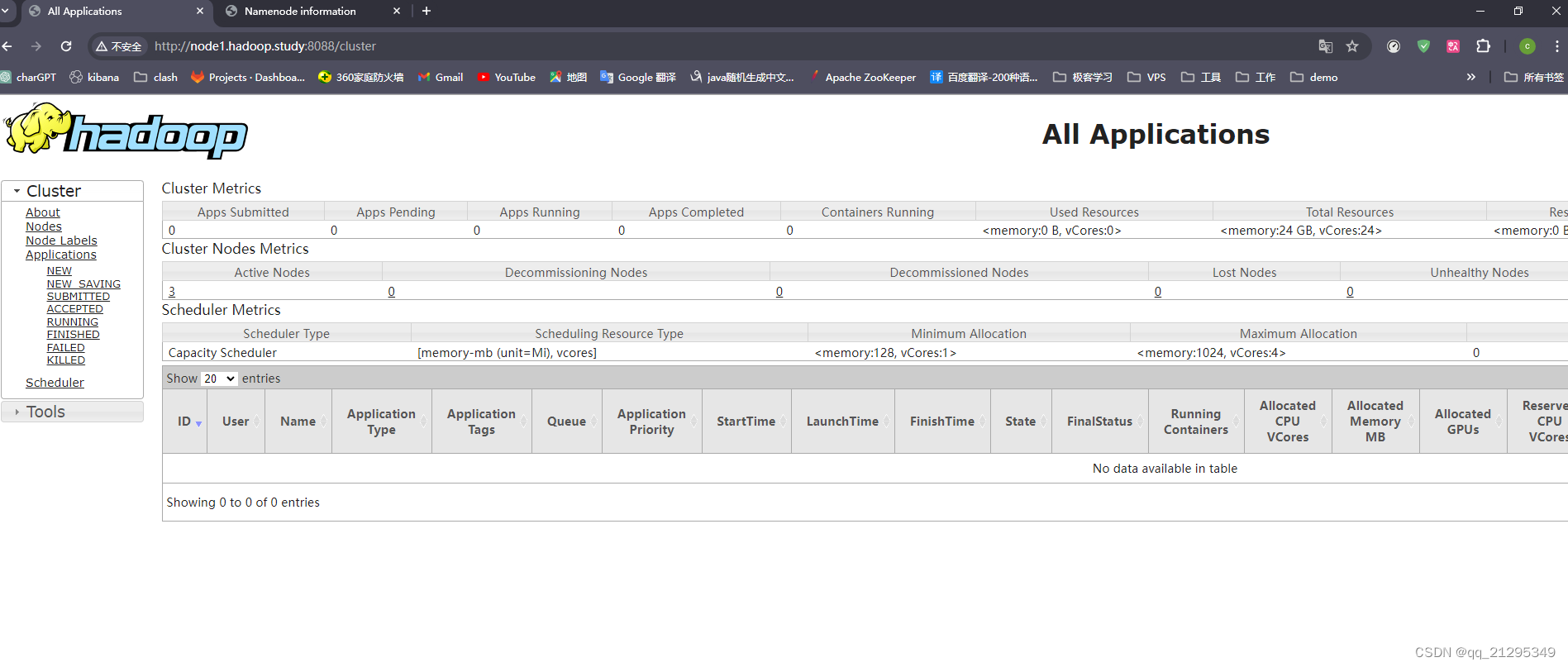
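Running `jps` on each node should list the daemons from the role plan above. A sketch that checks this from node1 over SSH; `check_daemons` and `expected_daemons` are hypothetical, and `JPS` assumes `jps` sits under the `JAVA_HOME` from hadoop-env.sh, since a non-interactive SSH session may not have it on `PATH`:

```shell
# Sketch: check that each node runs the daemons assigned in the role plan.
# Assumption: jps is under JAVA_HOME from hadoop-env.sh (override with JPS=...).
JPS="${JPS:-/usr/lib/jvm/jdk-1.8-oracle-x64/bin/jps}"

# Expected daemons per host, taken from the node assignment table.
expected_daemons() {
  case "$1" in
    node1.hadoop.study) echo "NameNode DataNode ResourceManager NodeManager" ;;
    node2.hadoop.study) echo "SecondaryNameNode DataNode NodeManager" ;;
    node3.hadoop.study) echo "DataNode NodeManager" ;;
  esac
}

# Prints one MISSING line per absent daemon; exit status = number missing.
check_daemons() {
  local host out d missing=0
  for host in node1.hadoop.study node2.hadoop.study node3.hadoop.study; do
    out=$(ssh -o BatchMode=yes "root@${host}" "$JPS" 2>/dev/null)
    for d in $(expected_daemons "$host"); do
      # jps prints "<pid> <name>"; match the name exactly so that
      # SecondaryNameNode does not count as NameNode
      if ! printf '%s\n' "$out" | awk '{print $2}' | grep -qx -- "$d"; then
        echo "MISSING ${d} on ${host}"
        missing=$((missing + 1))
      fi
    done
  done
  return "$missing"
}
```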

Access the web UIs

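To use the hostnames from a Windows machine, add the same three entries to its hosts file (C:\Windows\System32\drivers\etc\hosts), or open the UIs directly by the IP addresses of the NameNode and ResourceManager.

PS: if the pages cannot be reached, a proxy may be the cause.

NameNode: http://node1.hadoop.study:9870/

ResourceManager: http://node1.hadoop.study:8088/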

| Daemon | Web Interface | Notes |
| --- | --- | --- |
| NameNode | http://nn_host:port/ | Default HTTP port is 9870. |
| ResourceManager | http://rm_host:port/ | Default HTTP port is 8088. |
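From node1, which already has the hosts entries, `curl` can confirm both UIs respond before trying a browser. A sketch; `check_web_ui` is a hypothetical name, and any 2xx or 3xx status counts as up, since a UI may answer `/` with a redirect:

```shell
# Sketch: check that both web UIs answer over HTTP.
# -w '%{http_code}' prints only the status code; 000 means no connection.
check_web_ui() {
  local url code rc=0
  for url in http://node1.hadoop.study:9870/ http://node1.hadoop.study:8088/; do
    code=$(curl -s -o /dev/null -w '%{http_code}' --max-time 5 "$url")
    echo "${code} ${url}"
    case "$code" in
      2??|3??) ;;
      *) rc=1 ;;
    esac
  done
  return "$rc"
}
```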

Add the `bin` and `sbin` directories under the Hadoop directory to PATH for convenience.

Add the corresponding `export` lines to `~/.bash_profile`, then run `source ~/.bash_profile` to make them take effect.
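A minimal sketch of lines that could go into `.bash_profile`, written as a function that appends them only once; it assumes Hadoop lives in `/root/hadoop-3.3.6` (the `HADOOP_HOME` in hadoop-env.sh), and both `add_hadoop_to_path` and its marker comment are hypothetical:

```shell
# Sketch: append HADOOP_HOME and PATH exports to a profile file once.
# Assumption: Hadoop is unpacked at /root/hadoop-3.3.6.
add_hadoop_to_path() {
  local profile="${1:-$HOME/.bash_profile}"
  # The marker line lets repeated runs detect the block and skip it.
  if grep -qF '# hadoop-3.3.6 PATH' "$profile" 2>/dev/null; then
    return 0
  fi
  # Quoted 'EOF' keeps $PATH and $HADOOP_HOME literal in the file.
  cat >> "$profile" <<'EOF'
# hadoop-3.3.6 PATH
export HADOOP_HOME=/root/hadoop-3.3.6
export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin
EOF
}
```

After `add_hadoop_to_path` and `source ~/.bash_profile`, commands such as `start-all.sh` and `hdfs` work from any directory.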
