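To use the SURF class and its related functions, you need to add two namespaces: Emgu.CV.Features2D and Emgu.CV.XFeatures2D. The cuda module can also be used for acceleration, but CUDA has to be installed separately, and at the time of writing the graphics drivers that CUDA supported were not compatible with Windows 10, so here I will only briefly cover the general (non-CUDA) approach.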

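The procedure is simple: first compute the feature points (keypoints and their descriptors) for both images, then match them.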
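To make the matching step concrete, here is a minimal sketch in plain C# of what a brute-force 2-nearest-neighbour match followed by a ratio test does conceptually. It uses ordinary float arrays instead of Emgu CV types, and the names `MatchSketch`, `Match` and `L2` are hypothetical helpers made up for this illustration; this is not Emgu CV's actual implementation, only the idea behind pairing a k = 2 match with a uniqueness threshold such as 0.8.

```csharp
using System;
using System.Collections.Generic;

// Illustrative only: a plain-array stand-in for a brute-force k = 2 match
// followed by a ratio test. All names here are hypothetical, not Emgu CV API.
public static class MatchSketch
{
    // Euclidean (L2) distance between two descriptors of equal length.
    static double L2(float[] p, float[] q)
    {
        double sum = 0;
        for (int i = 0; i < p.Length; i++)
        {
            double d = p[i] - q[i];
            sum += d * d;
        }
        return Math.Sqrt(sum);
    }

    // For each observed descriptor, find its two nearest model descriptors
    // and keep the match only if best <= ratio * secondBest.
    // Returns (observed index, model index) pairs for the kept matches.
    public static List<(int Observed, int Model)> Match(
        float[][] model, float[][] observed, double ratio)
    {
        var kept = new List<(int Observed, int Model)>();
        for (int o = 0; o < observed.Length; o++)
        {
            int bestIdx = -1;
            double best = double.MaxValue;
            double second = double.MaxValue;
            for (int m = 0; m < model.Length; m++)
            {
                double d = L2(observed[o], model[m]);
                if (d < best)
                {
                    second = best;
                    best = d;
                    bestIdx = m;
                }
                else if (d < second)
                {
                    second = d;
                }
            }
            // A match needs two neighbours to be judged; ambiguous ones are dropped.
            if (bestIdx >= 0 && second < double.MaxValue && best <= ratio * second)
            {
                kept.Add((o, bestIdx));
            }
        }
        return kept;
    }
}
```

Calling `MatchSketch.Match(model, observed, 0.8)` keeps an observed descriptor only when its best match is clearly closer than the runner-up; a descriptor that is equally close to two model descriptors is discarded as ambiguous.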

public partial class Form1 : Form

{

public Form1()

{

InitializeComponent();

Image<Bgra, byte> a = new Image<Bgra, byte>("IMG_20150829_192403.JPG").Resize(0.4,Inter.Area); //模板

Image<Bgra, byte> b = new Image<Bgra, byte>("DSC_0437.JPG").Resize(0.4, Inter.Area); //待匹配的图像

Mat homography = null;

Mat mask = null;

VectorOfKeyPoint modelKeyPoints = new VectorOfKeyPoint();

VectorOfKeyPoint observedKeyPoints = new VectorOfKeyPoint();

VectorOfVectorOfDMatch matches = new VectorOfVectorOfDMatch();

UMat a1 = a.Mat.ToUMat(AccessType.Read);

UMat b1 = b.Mat.ToUMat(AccessType.Read);

SURF surf = new SURF(300);

UMat modelDescriptors = new UMat();

UMat observedDescriptors = new UMat();

surf.DetectAndCompute(a1, null, modelKeyPoints, modelDescriptors, false); //进行检测和计算,把opencv中的两部分和到一起了,分开用也可以

surf.DetectAndCompute(b1, null, observedKeyPoints, observedDescriptors, false);

BFMatcher matcher = new BFMatcher(DistanceType.L2); //开始进行匹配

matcher.Add(modelDescriptors);

matcher.KnnMatch(observedDescriptors, matches, 2, null);

mask = new Mat(matches.Size, 1, DepthType.Cv8U, 1);

mask.SetTo(new MCvScalar(255));

Features2DToolbox.VoteForUniqueness(matches, 0.8, mask); //去除重复的匹配

int Count = CvInvoke.CountNonZero(mask); //用于寻找模板在图中的位置

if (Count >= 4)

{

Count = Features2DToolbox.VoteForSizeAndOrientation(modelKeyPoints, observedKeyPoints, matches, mask, 1.5, 20);

if (Count >= 4)

homography = Features2DToolbox.GetHomographyMatrixFromMatchedFeatures(modelKeyPoints, observedKeyPoints, matches, mask, 2);

}

Mat result = new Mat();

Features2DToolbox.DrawMatches(a.Convert<Gray, byte>().Mat, modelKeyPoints, b.Convert<Gray, byte>().Mat, observedKeyPoints,matches, result, new MCvScalar(255, 0, 255), new MCvScalar(0, 255, 255), mask);

//绘制匹配的关系图

if (homography != null) //如果在图中找到了模板,就把它画出来

{

Rectangle rect = new Rectangle(Point.Empty, a.Size);

PointF[] points = new PointF[]

{

new PointF(rect.Left, rect.Bottom),

new PointF(rect.Right, rect.Bottom),

new PointF(rect.Right, rect.Top),

new PointF(rect.Left, rect.Top)

};

points = CvInvoke.PerspectiveTransform(points, homography);

Point[] points2 = Array.ConvertAll<PointF, Point>(points, Point.Round);

VectorOfPoint vp = new VectorOfPoint(points2);

CvInvoke.Polylines(result, vp, true, new MCvScalar(255, 0, 0, 255), 15);

}

imageBox1.Image = result;

}

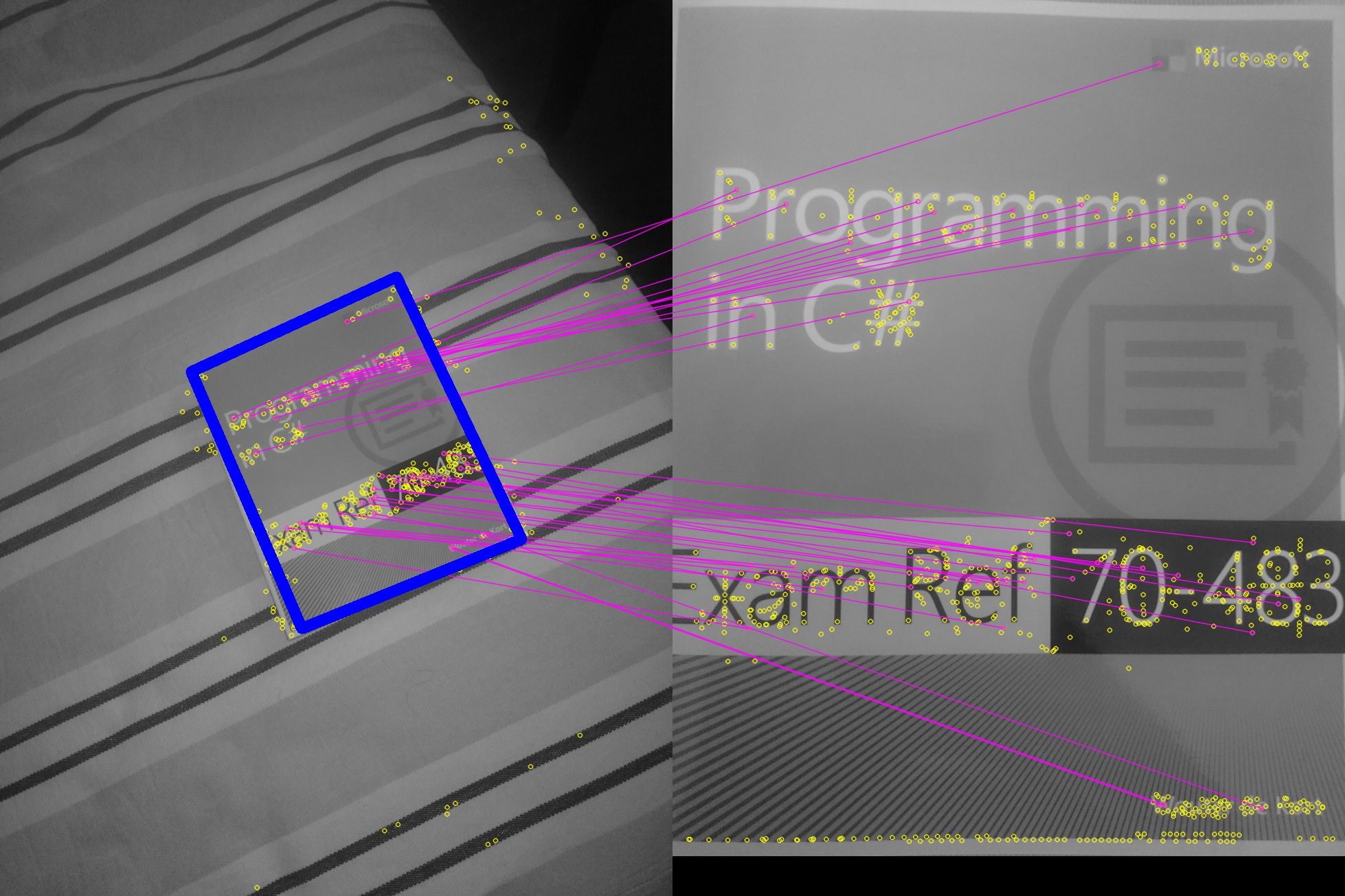

}效果图:
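The listing uses CvInvoke.PerspectiveTransform to map the four corners of the template into the observed image. As a rough illustration of the arithmetic behind that step, the sketch below applies a 3x3 homography to a single point using homogeneous coordinates. It works on a plain `double[,]` rather than an Emgu CV `Mat`, and `HomographySketch` and `Project` are hypothetical names introduced only for this example.

```csharp
using System;

// Illustrative only: applies a 3x3 homography to one 2D point.
// The class and method names are hypothetical, not part of Emgu CV.
public static class HomographySketch
{
    // Treats (x, y) as the homogeneous point (x, y, 1), multiplies it by h,
    // then divides by the third component to get back to 2D coordinates.
    public static (double X, double Y) Project(double[,] h, double x, double y)
    {
        double w = h[2, 0] * x + h[2, 1] * y + h[2, 2];
        double px = (h[0, 0] * x + h[0, 1] * y + h[0, 2]) / w;
        double py = (h[1, 0] * x + h[1, 1] * y + h[1, 2]) / w;
        return (px, py);
    }
}
```

For example, with a homography that scales by 2 and translates by (10, 20), `Project` maps the point (3, 4) to (16, 28). Doing this for each corner of the template rectangle gives the quadrilateral that the listing draws with CvInvoke.Polylines.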
