一、启动日志start_log清洗到DWD

用到的函数:get_json_object

1)person表xjson字段内容为:

Xjson=[{"name":"大郎","sex":"男","age":"25"},{"name":"西门庆","sex":"男","age":"47"}]

2)取出第一个json对象

SELECT get_json_object(xjson,"$.[0]") FROM person;

结果是:{"name":"大郎","sex":"男","age":"25"}

3)取出第一个json的age字段的值

SELECT get_json_object(xjson,"$.[0].age") FROM person;

结果是:25启动日志表(start_log)

rop table if exists dwd_start_log;

CREATE EXTERNAL TABLE dwd_start_log(

`mid_id` string,

`user_id` string,

`version_code` string,

`version_name` string,

`lang` string,

`source` string,

`os` string,

`area` string,

`model` string,

`brand` string,

`sdk_version` string,

`gmail` string,

`height_width` string,

`app_time` string,

`network` string,

`lng` string,

`lat` string,

`entry` string,

`open_ad_type` string,

`action` string,

`loading_time` string,

`detail` string,

`extend1` string

)

PARTITIONED BY (dt string)

stored as parquet

location '/warehouse/gmall/dwd/dwd_start_log/'

TBLPROPERTIES('parquet.compression'='lzo');

#!/bin/bash

# 定义变量方便修改

APP=gmall

# 如果是输入的日期按照取输入日期;如果没输入日期取当前时间的前一天

if [ -n "$1" ] ;then

do_date=$1

else

do_date=`date -d "-1 day" +%F`

fi

sql="

set hive.exec.dynamic.partition.mode=nonstrict;

insert overwrite table "$APP".dwd_start_log

PARTITION (dt='$do_date')

select

get_json_object(line,'$.mid') mid_id,

get_json_object(line,'$.uid') user_id,

get_json_object(line,'$.vc') version_code,

get_json_object(line,'$.vn') version_name,

get_json_object(line,'$.l') lang,

get_json_object(line,'$.sr') source,

get_json_object(line,'$.os') os,

get_json_object(line,'$.ar') area,

get_json_object(line,'$.md') model,

get_json_object(line,'$.ba') brand,

get_json_object(line,'$.sv') sdk_version,

get_json_object(line,'$.g') gmail,

get_json_object(line,'$.hw') height_width,

get_json_object(line,'$.t') app_time,

get_json_object(line,'$.nw') network,

get_json_object(line,'$.ln') lng,

get_json_object(line,'$.la') lat,

get_json_object(line,'$.entry') entry,

get_json_object(line,'$.open_ad_type') open_ad_type,

get_json_object(line,'$.action') action,

get_json_object(line,'$.loading_time') loading_time,

get_json_object(line,'$.detail') detail,

get_json_object(line,'$.extend1') extend1

from "$APP".ods_start_log

where dt='$do_date';

"

$hive -e "$sql"

事件表(event_log)

事件日志中需要编写UDF,UDTF进行过滤

ods_event_log中拿出一条数据,事件中包含事件

1605927018899|{"cm":

{"ln":"-39.8","sv":"V2.9.8",

"os":"8.2.2","g":"RR02OCPX@gmail.com",

"mid":"0","nw":"3G","l":"es","vc":"8",

"hw":"640*1136","ar":"MX","uid":"0",

"t":"1605921511845","la":"28.5",

"md":"Huawei-8","vn":"1.2.7",

"ba":"Huawei","sr":"F"},

ap":"app",

"et":[{"ett":"1605904328376","en":"newsdetail",

"kv":{"entry":"1",

"goodsid":"0",

"news_staytime":"18",

"loading_time":"4",

"action":"4",

"showtype":"3",

"category":"27",

"type1":"433"}},

{"ett":"1605850106419","en":"notification",

"kv":{"ap_time":"1605914557494",

"action":"3",

"type":"4",

"content":""}},

{"ett":"1605905731874",

"en":"active_background",

"kv":{"active_source":"1"}}]}

drop table if exists dwd_base_event_log;

CREATE EXTERNAL TABLE dwd_base_event_log(

`mid_id` string,

`user_id` string,

`version_code` string,

`version_name` string,

`lang` string,

`source` string,

`os` string,

`area` string,

`model` string,

`brand` string,

`sdk_version` string,

`gmail` string,

`height_width` string,

`app_time` string,

`network` string,

`lng` string,

`lat` string,

`event_name` string,

`event_json` string,

`server_time` string)

PARTITIONED BY (`dt` string)

stored as parquet

location '/warehouse/gmall/dwd/dwd_base_event_log/'

TBLPROPERTIES('parquet.compression'='lzo');

写脚本导入数据ods_to_dwd_base_log.sh

#!/bin/bash

# 定义变量方便修改

APP=gmall

hive=/opt/module/hive/bin/hive

# 如果是输入的日期按照取输入日期;如果没输入日期取当前时间的前一天

if [ -n "$1" ] ;then

do_date=$1

else

do_date=`date -d "-1 day" +%F`

fi

sql="

use gmall;

insert overwrite table "$APP".dwd_base_event_log partition(dt='$do_date')

select

base_analizer(line,'mid') as mid_id,

base_analizer(line,'uid') as user_id,

base_analizer(line,'vc') as version_code,

base_analizer(line,'vn') as version_name,

base_analizer(line,'l') as lang,

base_analizer(line,'sr') as source,

base_analizer(line,'os') as os,

base_analizer(line,'ar') as area,

base_analizer(line,'md') as model,

base_analizer(line,'ba') as brand,

base_analizer(line,'sv') as sdk_version,

base_analizer(line,'g') as gmail,

base_analizer(line,'hw') as height_width,

base_analizer(line,'t') as app_time,

base_analizer(line,'nw') as network,

base_analizer(line,'ln') as lng,

base_analizer(line,'la') as lat,

event_name,

event_json,

base_analizer(line,'st') as server_time

from "$APP".ods_event_log lateral view flat_analizer(base_analizer(line,'et')) tem_flat as event_name,event_json

where dt='$do_date' and base_analizer(line,'et')<>'';

"

#cdh

# sudu -u hive hive -e "$sql"

$hive -e "$sql"

DWD层用户行为事件表获取

说明:因为事件表已经拆分开,所以这里需要建设10张表来进行存储, (数据来源 dwd_base_event_log)

商品点击表 dwd_display_log

商品详情页表

drop table if exists dwd_newsdetail_log;

CREATE EXTERNAL TABLE dwd_newsdetail_log(

`mid_id` string,

`user_id` string,

`version_code` string,

`version_name` string,

`lang` string,

`source` string,

`os` string,

`area` string,

`model` string,

`brand` string,

`sdk_version` string,

`gmail` string,

`height_width` string,

`app_time` string,

`network` string,

`lng` string,

`lat` string,

`entry` string,

`action` string,

`goodsid` string,

`showtype` string,

`news_staytime` string,

`loading_time` string,

`type1` string,

`category` string,

`server_time` string)

PARTITIONED BY (dt string)

stored as parquet

location '/warehouse/gmall/dwd/dwd_newsdetail_log/'

TBLPROPERTIES('parquet.compression'='lzo');

hive (gmall)>

insert overwrite table dwd_newsdetail_log PARTITION (dt='2020-03-29')

select

mid_id,

user_id,

version_code,

version_name,

lang,

source,

os,

area,

model,

brand,

sdk_version,

gmail,

height_width,

app_time,

network,

lng,

lat,

get_json_object(event_json,'$.kv.entry') entry,

get_json_object(event_json,'$.kv.action') action,

get_json_object(event_json,'$.kv.goodsid') goodsid,

get_json_object(event_json,'$.kv.showtype') showtype,

get_json_object(event_json,'$.kv.news_staytime') news_staytime,

get_json_object(event_json,'$.kv.loading_time') loading_time,

get_json_object(event_json,'$.kv.type1') type1,

get_json_object(event_json,'$.kv.category') category,

server_time

from dwd_base_event_log

where dt='2020-03-29' and event_name='newsdetail';

点赞表

评论表

收藏表

消息通知表

用户后台活跃表

二、数据仓库DWD层——业务数据

本层主要表类型:

- 存储各业务表的全量快照

- 存储各业务表的拉链表

每一张维度表基本都是从ods层中筛选字段进行导入,有些导入不是很复杂,但是有些导入会涉及多个join,多个join也意味着资源高、效率低的缺点,这一块可以多了解优化,面试必备技巧。下面就要进行业务层DWD层的建模了

商品维度表(全量数据快照)

活动维度表(全量数据快照)

可以看到红色字段通过left join 进行筛选

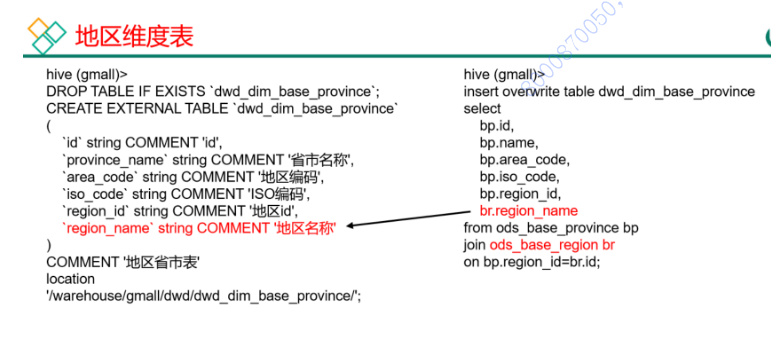

地区维度表(全量数据快照)

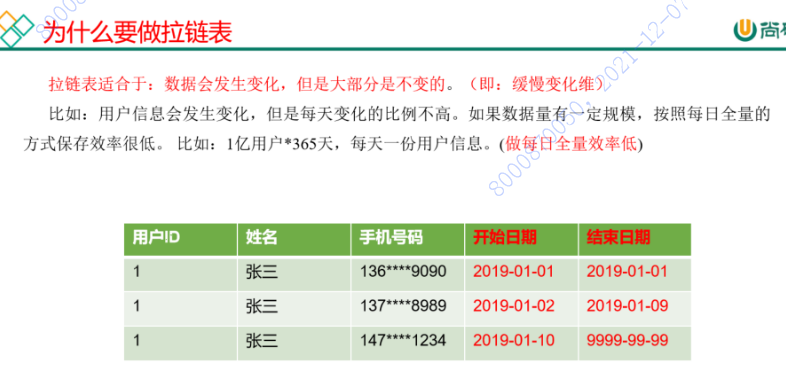

用户维度表(拉链表)

用户表中的数据每日既有可能新增,也有可能修改,但修改频率并不高,属于缓慢变化维度,此处采用拉链表存储用户维度数据。

INSERT OVERWRITE INTO dwd_dim_user_info_his_tmp

SELECT *

FROM (

SELECT id, name, birthday, gender, email

, user_level, create_time, operate_time, '2020-03-29' AS start_date, '9999-99-99' AS end_date

FROM ods_user_info

WHERE dt = '2020-03-29'

UNION ALL

SELECT uh.id, uh.name, uh.birthday, uh.gender, uh.email

, uh.user_level, uh.create_time, uh.operate_time, uh.start_date

, if(ui.id IS NOT NULL

AND uh.end_date = '9999-99-99', date_add(ui.dt, -1), uh.end_date) AS end_date

FROM dwd_dim_user_info_his uh

LEFT JOIN (

SELECT *

FROM ods_user_info

WHERE dt = '2020-03-29'

) ui

ON uh.id = ui.id

) his

ORDER BY his.id, start_date;

INSERT OVERWRITE INTO dwd_dim_user_info_his

SELECT *

FROM dwd_dim_user_info_his_tmp;订单明细事实表(事务型快照事实表)

drop table if exists dwd_fact_order_detail;

create external table dwd_fact_order_detail (

`id` string COMMENT '订单编号',

`order_id` string COMMENT '订单号',

`user_id` string COMMENT '用户id',

`sku_id` string COMMENT 'sku商品id',

`sku_name` string COMMENT '商品名称',

`order_price` decimal(10,2) COMMENT '商品价格',

`sku_num` bigint COMMENT '商品数量',

`create_time` string COMMENT '创建时间',

`province_id` string COMMENT '省份ID',

`total_amount` decimal(20,2) COMMENT '商品总金额'

)

PARTITIONED BY (`dt` string)

stored as parquet

location '/warehouse/gmall/dwd/dwd_fact_order_detail/'

tblproperties ("parquet.compression"="lzo"); insert overwrite table dwd_fact_order_detail partition(dt='2020-03-29')

select

od.id,

od.order_id,

od.user_id,

od.sku_id,

od.sku_name,

od.order_price,

od.sku_num,

od.create_time,

oi.province_id,

od.order_price*od.sku_num

from

(

select * from ods_order_detail where dt='2020-03-29'

) od

join

(

select * from ods_order_info where dt='2020-03-29'

) oi

on od.order_id=oi.id; 支付事实表 (事务型快照事实表)

退款事实表(事务型快照事实表)

把ODS层ods_order_refund_info表数据导入到DWD层退款事实表,在导入过程中可以做适当的清洗。

drop table if exists dwd_fact_order_refund_info;

create external table dwd_fact_order_refund_info(

`id` string COMMENT '编号',

`user_id` string COMMENT '用户ID',

`order_id` string COMMENT '订单ID',

`sku_id` string COMMENT '商品ID',

`refund_type` string COMMENT '退款类型',

`refund_num` bigint COMMENT '退款件数',

`refund_amount` decimal(16,2) COMMENT '退款金额',

`refund_reason_type` string COMMENT '退款原因类型',

`create_time` string COMMENT '退款时间'

) COMMENT '退款事实表'

PARTITIONED BY (`dt` string)

row format delimited fields terminated by '\t'

stored as parquet

location '/warehouse/gmall/dwd/dwd_fact_order_refund_info/'

tblproperties ("parquet.compression"="lzo");

insert overwrite table dwd_fact_order_refund_info partition(dt='2020-03-29')

select

id,

user_id,

order_id,

sku_id,

refund_type,

refund_num,

refund_amount,

refund_reason_type,

create_time

from ods_order_refund_info

where dt='2020-03-29';

订单事实表(累积型快照事实表,动态分区)

设计

黄色为历史分区,以创建日期分区存放

绿色为增量数据,根据创建时间可以划分出来的分区

蓝色为合并后的分区

从黄色部分取和绿色划分相同的分区进行数据合并,再动态写回去

insert overwrite table bule partition(dt)

select coalesce(green.columns,yellow.columns),coalesce(green.dt,yellow.dt)

from yellow outer full join

green on yellow.key = green.key and green.dt = '${dt}'

where yellow.dt in(

select distinct substring(replace(create_time,'-'),1,7) –## '20201001'

from green where dt = '${dt}'

)

订单生命周期:创建时间=>支付时间=>取消时间=>完成时间=>退款时间=>退款完成时间。

由于ODS层订单表只有创建时间和操作时间两个状态,不能表达所有时间含义,所以需要关联订单状态表。订单事实表里面增加了活动id,所以需要关联活动订单表。

drop table if exists dwd_fact_order_info;

create external table dwd_fact_order_info (

`id` string COMMENT '订单编号',

`order_status` string COMMENT '订单状态',

`user_id` string COMMENT '用户id',

`out_trade_no` string COMMENT '支付流水号',

`create_time` string COMMENT '创建时间(未支付状态)',

`payment_time` string COMMENT '支付时间(已支付状态)',

`cancel_time` string COMMENT '取消时间(已取消状态)',

`finish_time` string COMMENT '完成时间(已完成状态)',

`refund_time` string COMMENT '退款时间(退款中状态)',

`refund_finish_time` string COMMENT '退款完成时间(退款完成状态)',

`province_id` string COMMENT '省份ID',

`activity_id` string COMMENT '活动ID',

`original_total_amount` string COMMENT '原价金额',

`benefit_reduce_amount` string COMMENT '优惠金额',

`feight_fee` string COMMENT '运费',

`final_total_amount` decimal(10,2) COMMENT '订单金额'

)

PARTITIONED BY (`dt` string)

stored as parquet

location '/warehouse/gmall/dwd/dwd_fact_order_info/'

tblproperties ("parquet.compression"="lzo");

set hive.exec.dynamic.partition.mode=nonstrict;

insert overwrite table dwd_fact_order_info partition(dt)

select

if(new.id is null,old.id,new.id),

if(new.order_status is null,old.order_status,new.order_status),

if(new.user_id is null,old.user_id,new.user_id),

if(new.out_trade_no is null,old.out_trade_no,new.out_trade_no),

if(new.tms['1001'] is null,old.create_time,new.tms['1001']),

if(new.tms['1002'] is null,old.payment_time,new.tms['1002']),

if(new.tms['1003'] is null,old.cancel_time,new.tms['1003']),

if(new.tms['1004'] is null,old.finish_time,new.tms['1004']),

if(new.tms['1005'] is null,old.refund_time,new.tms['1005']),

if(new.tms['1006'] is null,old.refund_finish_time,new.tms['1006']),

if(new.province_id is null,old.province_id,new.province_id),

if(new.activity_id is null,old.activity_id,new.activity_id),

if(new.original_total_amount is null,old.original_total_amount,new.original_total_amount),

if(new.benefit_reduce_amount is null,old.benefit_reduce_amount,new.benefit_reduce_amount),

if(new.feight_fee is null,old.feight_fee,new.feight_fee),

if(new.final_total_amount is null,old.final_total_amount,new.final_total_amount),

date_format(if(new.tms['1001'] is null,old.create_time,new.tms['1001']),'yyyy-MM-dd')

from (

select

id,

order_status,

user_id,

out_trade_no,

create_time,

payment_time,

cancel_time,

finish_time,

refund_time,

refund_finish_time,

province_id,

activity_id,

original_total_amount,

benefit_reduce_amount,

feight_fee,

final_total_amount

from dwd_fact_order_info

where dt

in

(

select

date_format(create_time,'yyyy-MM-dd')

from ods_order_info

where dt='2020-03-10'

)

)old

full outer join

(

select

info.id,

info.order_status,

info.user_id,

info.out_trade_no,

info.province_id,

act.activity_id,

log.tms,

info.original_total_amount,

info.benefit_reduce_amount,

info.feight_fee,

info.final_total_amount

from

(

select

order_id,

str_to_map(concat_ws(',',collect_set(concat(order_status,'=',operate_time))),',','=') tms

from ods_order_status_log

where dt='2020-03-10'

group by order_id

)log

join (

select * from ods_order_info where dt='2020-03-10'

)info

on log.order_id=info.id

left join

(

select * from ods_activity_order where dt='2020-03-10'

)act

on log.order_id=act.order_id

)new

on old.id=new.id;

insert overwrite table dwd_order_info partition(dt)

select

nvl(new.id,old.id),

nvl(new.order_status,old.order_status),

nvl(new.user_id,old.user_id),

nvl(new.province_id,old.province_id),

nvl(new.payment_way,old.payment_way),

nvl(new.delivery_address,old.delivery_address),

nvl(new.out_trade_no,old.out_trade_no),

nvl(new.tracking_no,old.tracking_no),

nvl(new.create_time,old.create_time),

nvl(new.payment_time,old.payment_time),

nvl(new.cancel_time,old.cancel_time),

nvl(new.finish_time,old.finish_time),

nvl(new.refund_time,old.refund_time),

nvl(new.refund_finish_time,old.refund_finish_time),

nvl(new.expire_time,old.expire_time),

nvl(new.feight_fee,old.feight_fee),

nvl(new.feight_fee_reduce,old.feight_fee_reduce),

nvl(new.activity_reduce_amount,old.activity_reduce_amount),

nvl(new.coupon_reduce_amount,old.coupon_reduce_amount),

nvl(new.original_amount,old.original_amount),

nvl(new.final_amount,old.final_amount),

case

when new.cancel_time is not null then date_format(new.cancel_time,'yyyy-MM-dd')

when new.finish_time is not null and date_add(date_format(new.finish_time,'yyyy-MM-dd'),7)='2020-06-15' and new.refund_time is null then '2020-06-15'

when new.refund_finish_time is not null then date_format(new.refund_finish_time,'yyyy-MM-dd')

when new.expire_time is not null then date_format(new.expire_time,'yyyy-MM-dd')

else '9999-99-99'

end

--COALESCE(new.cancel_time,new.finish_time,new.refund_finish_time,new.expire_time,'9999-99-99')

from

(

select

id,

order_status,

user_id,

province_id,

payment_way,

delivery_address,

out_trade_no,

tracking_no,

create_time,

payment_time,

cancel_time,

finish_time,

refund_time,

refund_finish_time,

expire_time,

feight_fee,

feight_fee_reduce,

activity_reduce_amount,

coupon_reduce_amount,

original_amount,

final_amount

from dwd_order_info

where dt='9999-99-99'

)old

full outer join

(

select

oi.id,

oi.order_status,

oi.user_id,

oi.province_id,

oi.payment_way,

oi.delivery_address,

oi.out_trade_no,

oi.tracking_no,

oi.create_time,

times.ts['1002'] payment_time,

times.ts['1003'] cancel_time,

times.ts['1004'] finish_time,

times.ts['1005'] refund_time,

times.ts['1006'] refund_finish_time,

oi.expire_time,

feight_fee,

feight_fee_reduce,

activity_reduce_amount,

coupon_reduce_amount,

original_amount,

final_amount

from

(

select

*

from ods_order_info

where dt='2020-06-15'

)oi

left join

(

select

order_id,

str_to_map(concat_ws(',',collect_set(concat(order_status,'=',operate_time))),',','=') ts

from ods_order_status_log

where dt='2020-06-15'

group by order_id

)times

on oi.id=times.order_id

)new

on old.id=new.id;常用函数

select concat_ws('-','a','b');

a-b

str_to_map('1001=2020-03-29,1002=2020-03-29', ',' , '=')

输出

{"1001":"2020-03-29","1002":"2020-03-29"}

hive (gmall)> select order_id, concat(order_status,'=', operate_time) from ods_order_status_log where dt='2020-03-29';

3210 1001=2020-03-29 00:00:00.0

3211 1001=2020-03-29 00:00:00.0

hive (gmall)> select order_id, collect_set(concat(order_status,'=',operate_time)) from ods_order_status_log where dt='2020-03-29' group by order_id;

3210 ["1001=2020-03-29 00:00:00.0","1002=2020-03-29 00:00:00.0","1005=2020-03-29 00:00:00.0"]

3211 ["1001=2020-03-29 00:00:00.0","1002=2020-03-29 00:00:00.0","1004=2020-03-29

hive (gmall)>

select order_id, concat_ws(',', collect_set(concat(order_status,'=',operate_time))) from ods_order_status_log where dt='2020-03-29' group by order_id;

3210 1001=2020-03-29 00:00:00.0,1002=2020-03-29 00:00:00.0,1005=2020-03-29 00:00:00.0

3211 1001=2020-03-29 00:00:00.0,1002=2020-03-29 00:00:00.0,1004=2020-03-29 00:00:00.0

hive (gmall)>

select order_id, str_to_map(concat_ws(',',collect_set(concat(order_status,'=',operate_time))), ',' , '=') from ods_order_status_log where dt='2020-03-29' group by order_id;

3210 {"1001":"2020-03-29 00:00:00.0","1002":"2020-03-29 00:00:00.0","1005":"2020-03-29 00:00:00.0"}

3211 {"1001":"2020-03-29 00:00:00.0","1002":"2020-03-29 00:00:00.0","1004":"2020-03-29 00:00:00.0"}

789

789

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?