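Reference: Linux 5.10 kernel source

Scheduling period

Three parameters

In CFS, the theoretical scheduling period is controlled mainly by three parameters: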

/*
 * Targeted preemption latency for CPU-bound tasks:
 *
 * NOTE: this latency value is not the same as the concept of
 * 'timeslice length' - timeslices in CFS are of variable length
 * and have no persistent notion like in traditional, time-slice
 * based scheduling concepts.
 *
 * (to see the precise effective timeslice length of your workload,
 *  run vmstat and monitor the context-switches (cs) field)
 *
 * (default: 6ms * (1 + ilog(ncpus)), units: nanoseconds)
 */
unsigned int sysctl_sched_latency = 6000000ULL;
static unsigned int normalized_sysctl_sched_latency = 6000000ULL;

/*
 * Minimal preemption granularity for CPU-bound tasks:
 *
 * (default: 0.75 msec * (1 + ilog(ncpus)), units: nanoseconds)
 */
unsigned int sysctl_sched_min_granularity = 750000ULL;
static unsigned int normalized_sysctl_sched_min_granularity = 750000ULL;

/*
 * This value is kept at sysctl_sched_latency/sysctl_sched_min_granularity
 */
static unsigned int sched_nr_latency = 8;

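kernel/sched/fair.c defines the defaults for these three values:

| Parameter | Default |
|---|---|
| sched_nr_latency | 8 |
| sched_latency | 6 ms |
| sched_min_granularity | 0.75 ms |

sched_nr_latency starts at 8 and is derived from the other two parameters:

sched_nr_latency = sched_latency / sched_min_granularity

sched_latency and sched_min_granularity are exported through /proc, and their effective values scale with the system's CPU count: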

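[root@244:rg#] cat /proc/sys/kernel/sched_latency_ns

24000000 # scheduling period: 24 ms

[root@244:rg#] cat /proc/sys/kernel/sched_min_granularity_ns

10000000 # minimum granularity: 10 ms

kernel/sched/fair.c also defines how the scaling factor is computed. Systems generally use the SCHED_TUNABLESCALING_LOG mode. The CPU count fed into the calculation is capped at 8, so the factor stops growing beyond 8 CPUs. My server has 48 logical cores, which gives factor = 1 + ilog2(8) = 4, so the latency reported by the system is 6 ms * 4 = 24 ms.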

static unsigned int get_update_sysctl_factor(void)
{
	unsigned int cpus = min_t(unsigned int, num_online_cpus(), 8);
	unsigned int factor;

	switch (sysctl_sched_tunable_scaling) {
	case SCHED_TUNABLESCALING_NONE:
		factor = 1;
		break;
	case SCHED_TUNABLESCALING_LINEAR:
		factor = cpus;
		break;
	case SCHED_TUNABLESCALING_LOG:
	default:
		factor = 1 + ilog2(cpus);
		break;
	}

	return factor;
}

/* include/linux/sched/sysctl.h */
enum sched_tunable_scaling {
	SCHED_TUNABLESCALING_NONE,
	SCHED_TUNABLESCALING_LOG,
	SCHED_TUNABLESCALING_LINEAR,
	SCHED_TUNABLESCALING_END,
};
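To make the scaling concrete, here is a minimal userspace sketch of the factor computation. It is not kernel code: `enum scaling_mode`, `ilog2_u32()`, `scaling_factor()` and `scaled_tunable_ns()` are hypothetical names standing in for the kernel enum, `ilog2()` and `get_update_sysctl_factor()`. The cap of 8 CPUs and the 6 ms base value are taken from the source above.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical userspace mirror of enum sched_tunable_scaling. */
enum scaling_mode { SCALING_NONE, SCALING_LOG, SCALING_LINEAR };

/* Floor of log2(v); v must be non-zero. Stand-in for the kernel's ilog2(). */
static unsigned int ilog2_u32(uint32_t v)
{
	unsigned int log = 0;

	assert(v != 0);
	while (v >>= 1)
		log++;
	return log;
}

/*
 * Same logic as get_update_sysctl_factor(): the CPU count is capped at 8
 * before the factor is derived. online_cpus must be at least 1.
 */
static unsigned int scaling_factor(unsigned int online_cpus,
				   enum scaling_mode mode)
{
	unsigned int cpus = online_cpus < 8 ? online_cpus : 8;

	switch (mode) {
	case SCALING_NONE:
		return 1;
	case SCALING_LINEAR:
		return cpus;
	case SCALING_LOG:
	default:
		return 1 + ilog2_u32(cpus);
	}
}

/* Scale a normalized tunable (for example the 6 ms base latency). */
static uint64_t scaled_tunable_ns(uint64_t normalized_ns, unsigned int factor)
{
	return normalized_ns * factor;
}
```

For the 48-core server described above, `scaling_factor(48, SCALING_LOG)` yields 4, and `scaled_tunable_ns(6000000, 4)` yields 24000000 ns, which matches the value read from /proc.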

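With the values set on my system (sched_latency = 24 ms, sched_min_granularity = 10 ms), plain integer division gives sched_nr_latency = 24 / 10 = 2. Note, however, that sched_nr_latency is only recomputed when one of these sysctls is written, and the kernel's handler then uses DIV_ROUND_UP, which rounds 24 / 10 up to 3.

Computing the scheduling period P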

/*
 * The idea is to set a period in which each task runs once.
 *
 * When there are too many tasks (sched_nr_latency) we have to stretch
 * this period because otherwise the slices get too small.
 *
 * p = (nr <= nl) ? l : l*nr/nl
 */
static u64 __sched_period(unsigned long nr_running)
{
	if (unlikely(nr_running > sched_nr_latency))
		return nr_running * sysctl_sched_min_granularity;
	else
		return sysctl_sched_latency;
}

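The formula for the CFS scheduling period is simple:

$$P = \begin{cases} sched\_latency, & nr\_running \le sched\_nr\_latency \\ nr\_running \times sched\_min\_granularity, & nr\_running > sched\_nr\_latency \end{cases}$$

With the defaults in the code:

When the rq holds at most 8 tasks, the scheduling period is 6 ms; with more than 8 tasks, it is 0.75 ms * nr_running.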
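The period rule can be sketched in userspace as follows. This is an illustration, not the kernel implementation: `div_round_up()` and `sched_period_ns()` are hypothetical names, and the sketch derives the task-count threshold from the two tunables on every call (rounding up, as the kernel's `DIV_ROUND_UP` does), whereas the kernel keeps it in the separate variable `sched_nr_latency`.

```c
#include <assert.h>
#include <stdint.h>

/* Round-up integer division, like the kernel's DIV_ROUND_UP(). */
static uint64_t div_round_up(uint64_t n, uint64_t d)
{
	assert(d != 0);
	return (n + d - 1) / d;
}

/*
 * Userspace mirror of __sched_period(): keep the target latency while
 * the queue is short, otherwise give every task one minimum granularity.
 * Assumption: the threshold is recomputed here from the two tunables.
 */
static uint64_t sched_period_ns(unsigned long nr_running,
				uint64_t latency_ns, uint64_t min_gran_ns)
{
	uint64_t nr_latency = div_round_up(latency_ns, min_gran_ns);

	if (nr_running > nr_latency)
		return nr_running * min_gran_ns;
	return latency_ns;
}
```

With the code defaults (6 ms and 0.75 ms), 8 runnable tasks still fit in a 6 ms period, while 9 tasks stretch it to 6.75 ms. With 24 ms and 10 ms, the threshold under this sketch is 3 tasks, after which the period grows by 10 ms per task.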

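Time slices of CFS scheduling entities

Time slice calculation

Question: how does CFS ensure that every task on an rq gets to run at least once within one scheduling period?

The CFS scheduler deliberately plays down the notion of a time slice, but its behavior can still be understood as being regulated through time slices.

CFS computes the time slice from the weight of each scheduling entity:

- Let the weight of the i-th scheduling entity (se) be $w_i$.

- The total weight of the rq is the sum of the weights of all its entities, written $rw$.

- The rq holds $n$ scheduling entities.

- The scheduling period is written $P$; by default it is typically 6 ms.

Scheduling entities can be organized in two ways, which are considered separately: group scheduling and non-group scheduling.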

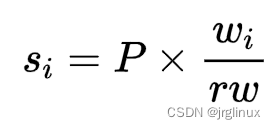

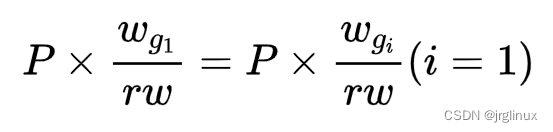

Without group scheduling, the i-th scheduling entity receives the time slice $slice_i = P \cdot w_i / rw$:

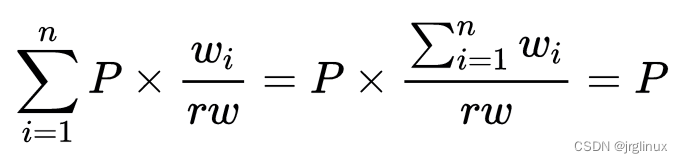

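Proof: summing the time slices of the n scheduling entities gives

$$\sum_{i=1}^{n} slice_i = \sum_{i=1}^{n} P \cdot \frac{w_i}{rw} = \frac{P}{rw} \sum_{i=1}^{n} w_i = P$$

So every task on the rq is guaranteed to run once within one scheduling period.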
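The proportional split can be checked with a small userspace sketch. The helper names `slice_ns()` and `total_slices_ns()` are hypothetical, and plain integer division is used for readability, so the sum can fall short of P by less than one nanosecond per entity.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Slice of one entity: P * w_i / rw, in nanoseconds (integer division). */
static uint64_t slice_ns(uint64_t period_ns, uint64_t weight,
			 uint64_t rq_weight)
{
	assert(rq_weight != 0 && weight <= rq_weight);
	return period_ns * weight / rq_weight;
}

/* Sum of all slices on one queue; rw is the sum of the given weights. */
static uint64_t total_slices_ns(uint64_t period_ns, const uint64_t *weights,
				size_t n)
{
	uint64_t rw = 0, total = 0;
	size_t i;

	for (i = 0; i < n; i++)
		rw += weights[i];
	for (i = 0; i < n; i++)
		total += slice_ns(period_ns, weights[i], rw);
	return total;
}
```

Two nice-0 tasks (weight 1024 each) split a 6 ms period into 3 ms each. With unequal weights the slices differ, but their sum still stays within rounding distance of P.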

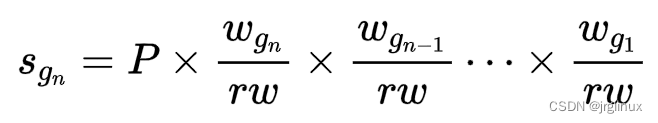

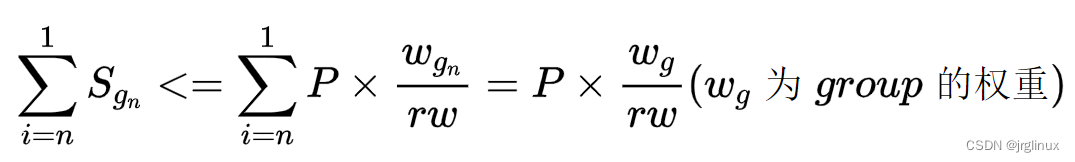

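With group scheduling, assume the entities are linked level by level through their parent pointers, up to the root entity Se1.

Then the time slice of the scheduling entity numbered Se n is $slice_n = P \cdot \prod_{k=1}^{n} w_k / rw_k$, where $w_k$ is the weight of Se k and $rw_k$ is the total weight of the queue that Se k sits on: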

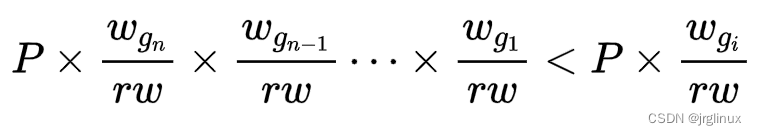

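Proof: within a group, the share of the period taken by the time slices of its scheduling entities does not exceed the share that the group's weight takes of rw.

Because the weight w of a scheduling entity never exceeds the total weight of the queue it sits on, any non-parent entity j in the group, with weight $w_j$ on a group queue of total weight $rw_g$, satisfies:

$$slice_j = slice_{parent} \cdot \frac{w_j}{rw_g} \le slice_{parent}$$

For the parent entity, with weight $w_{parent}$ on the rq:

$$slice_{parent} = P \cdot \frac{w_{parent}}{rw}$$

So the time slices of the scheduling entities inside the group sum to:

$$\sum_{j} slice_j = slice_{parent} \cdot \frac{\sum_{j} w_j}{rw_g} = slice_{parent} = P \cdot \frac{w_{parent}}{rw} \le P$$

This guarantees that the scheduling entities inside the group can also each run once within the scheduling period. When n = 1, the group case reduces to the non-group case.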
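The hierarchical case can be sketched the same way. This is a simplified illustration in the spirit of the `for_each_sched_entity()` loop in the kernel's `sched_slice()`, not a copy of it; `struct level` and `group_slice_ns()` are hypothetical names, and the path is passed in explicitly instead of being walked through parent pointers.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* One level of the hierarchy: an entity and the queue it is enqueued on. */
struct level {
	uint64_t weight;    /* w_k: weight of the entity at this level */
	uint64_t rq_weight; /* rw_k: total weight of that entity's queue */
};

/*
 * Scale the period by w_k / rw_k at every level between the task and the
 * root. With depth == 1 this is the non-group formula P * w / rw.
 */
static uint64_t group_slice_ns(uint64_t period_ns, const struct level *path,
			       size_t depth)
{
	uint64_t slice = period_ns;
	size_t k;

	for (k = 0; k < depth; k++) {
		assert(path[k].rq_weight != 0);
		assert(path[k].weight <= path[k].rq_weight);
		slice = slice * path[k].weight / path[k].rq_weight;
	}
	return slice;
}
```

For example, a task holding half the weight of its group queue, inside a group that holds half the weight of the root queue, ends up with a quarter of a 6 ms period, that is 1.5 ms.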

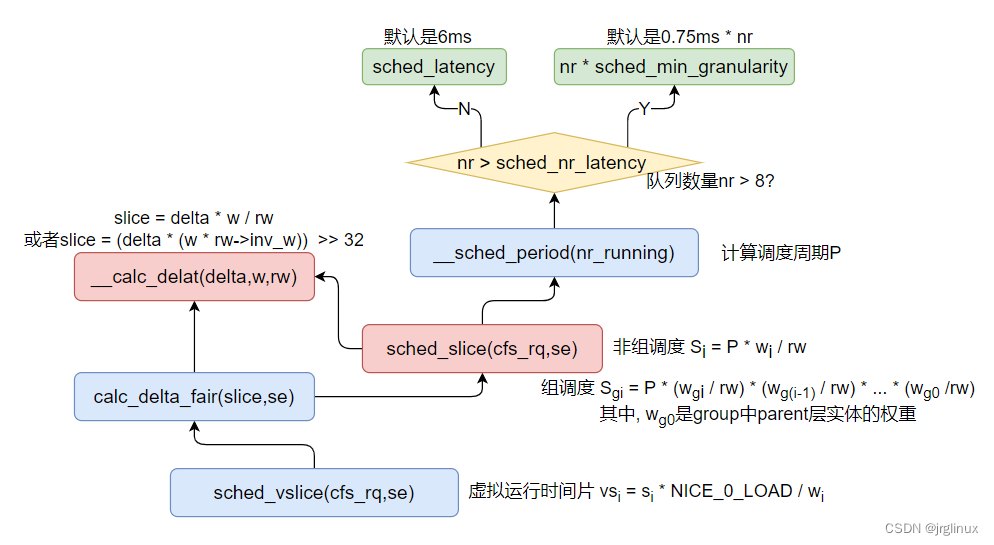

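Function call relationships

The function call relationships and formulas in fair.c are summarized in the figure below:

Virtual runtime

sched_vslice() computes the virtual time slice. Its formula shows that the virtual slice is the scheduling time slice rescaled by NICE_0_LOAD (the weight of a nice-0 scheduling entity, 1024 on 32-bit systems) relative to the entity's own weight w: vslice = slice * NICE_0_LOAD / w.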

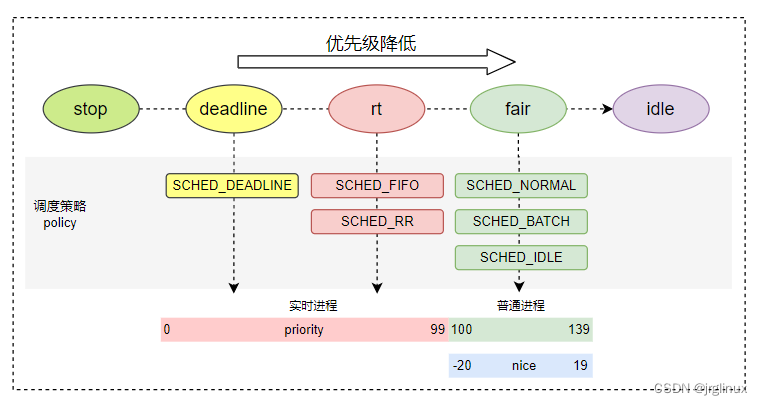
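The conversion from a wall-clock slice to a virtual slice can be sketched as below. `NICE_0_WEIGHT` and `vslice_ns()` are hypothetical names for this illustration; the value 1024 is the nice-0 weight quoted above, and exact integer division replaces the kernel's multiply-and-shift arithmetic.

```c
#include <assert.h>
#include <stdint.h>

#define NICE_0_WEIGHT 1024ULL /* weight of a nice-0 entity, as quoted above */

/* Virtual slice: wall-clock slice rescaled by NICE_0_WEIGHT / weight. */
static uint64_t vslice_ns(uint64_t slice_ns, uint64_t weight)
{
	assert(weight != 0);
	return slice_ns * NICE_0_WEIGHT / weight;
}
```

A nice-0 entity sees its virtual slice equal to its real slice. An entity with twice that weight accumulates virtual time at half the rate, and a lighter entity accumulates it faster.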

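Mapping priority to weight

The nice value determines the priority through a linear mapping: static_prio = nice + 120 (the kernel's NICE_TO_PRIO() macro).

How, then, is the weight derived?

Policy 1: each step down in nice value earns a process roughly 10% more CPU time, and each step up gives up roughly 10% of CPU time.

To implement Policy 1, the kernel defines the following weight array: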

/*
 * Nice levels are multiplicative, with a gentle 10% change for every
 * nice level changed. I.e. when a CPU-bound task goes from nice 0 to
 * nice 1, it will get ~10% less CPU time than another CPU-bound task
 * that remained on nice 0.
 *
 * The "10% effect" is relative and cumulative: from _any_ nice level,
 * if you go up 1 level, it's -10% CPU usage, if you go down 1 level
 * it's +10% CPU usage. (to achieve that we use a multiplier of 1.25.
 * If a task goes up by ~10% and another task goes down by ~10% then
 * the relative distance between them is ~25%.)
 */
const int sched_prio_to_weight[40] = {
 /* -20 */     88761,     71755,     56483,     46273,     36291,
 /* -15 */     29154,     23254,     18705,     14949,     11916,
 /* -10 */      9548,      7620,      6100,      4904,      3906,
 /*  -5 */      3121,      2501,      1991,      1586,      1277,
 /*   0 */      1024,       820,       655,       526,       423,
 /*   5 */       335,       272,       215,       172,       137,
 /*  10 */       110,        87,        70,        56,        45,
 /*  15 */        36,        29,        23,        18,        15,
};

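Adjacent entries in the array differ by a factor of roughly 1.25. For example, suppose two processes A and B both run at nice 0, so each gets the same CPU share of 50%. The table gives a weight of 1024 for nice 0, so each share is 1024 / (1024 + 1024) = 0.5, or 50%. If B's nice value is raised by 1 (which lowers its priority), its CPU share should drop by about 10%: A should end up with about 55% of the total CPU time and B with about 45%. Raising the nice value by 1 lowers the weight to 1024 / 1.25 ≈ 820. A's share is then 1024 / (1024 + 820) ≈ 0.55 and B's share is 820 / (1024 + 820) ≈ 0.45, a gap of about 10 percentage points. That is why the kernel uses this particular set of weights.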
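The worked example can be reproduced with a short userspace sketch. The table values are copied from `sched_prio_to_weight[]` above; `prio_to_weight`, `nice_to_weight()` and `share_permille()` are hypothetical names for this illustration, and shares are reported in per mille with integer truncation.

```c
#include <assert.h>
#include <stdint.h>

/* Weights for nice -20..19, copied from sched_prio_to_weight[] above. */
static const int prio_to_weight[40] = {
	88761, 71755, 56483, 46273, 36291,
	29154, 23254, 18705, 14949, 11916,
	 9548,  7620,  6100,  4904,  3906,
	 3121,  2501,  1991,  1586,  1277,
	 1024,   820,   655,   526,   423,
	  335,   272,   215,   172,   137,
	  110,    87,    70,    56,    45,
	   36,    29,    23,    18,    15,
};

/* Look up the weight for a nice value in [-20, 19]. */
static int nice_to_weight(int nice)
{
	assert(nice >= -20 && nice <= 19);
	return prio_to_weight[nice + 20];
}

/* CPU share of task A, in per mille, when A and B compete on one CPU. */
static unsigned int share_permille(int nice_a, int nice_b)
{
	uint64_t wa = (uint64_t)nice_to_weight(nice_a);
	uint64_t wb = (uint64_t)nice_to_weight(nice_b);

	return (unsigned int)(wa * 1000 / (wa + wb));
}
```

Calling `share_permille(0, 1)` and `share_permille(1, 0)` gives 555 and 444 per mille, which corresponds to the roughly 55% and 45% split in the example.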

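Inverse weight lookup table

The time slice formula was already given in the time slice calculation section, and the principle is simple. The code in fair.c nevertheless looks quite complicated, because the kernel avoids division: every computation that divides by a weight is rewritten as a multiplication followed by a shift.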

/*
 * Inverse (2^32/x) values of the sched_prio_to_weight[] array, precalculated.
 *
 * In cases where the weight does not change often, we can use the
 * precalculated inverse to speed up arithmetics by turning divisions
 * into multiplications:
 */
const u32 sched_prio_to_wmult[40] = {
 /* -20 */     48388,     59856,     76040,     92818,    118348,
 /* -15 */    147320,    184698,    229616,    287308,    360437,
 /* -10 */    449829,    563644,    704093,    875809,   1099582,
 /*  -5 */   1376151,   1717300,   2157191,   2708050,   3363326,
 /*   0 */   4194304,   5237765,   6557202,   8165337,  10153587,
 /*   5 */  12820798,  15790321,  19976592,  24970740,  31350126,
 /*  10 */  39045157,  49367440,  61356676,  76695844,  95443717,
 /*  15 */ 119304647, 148102320, 186737708, 238609294, 286331153,
};

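To simplify division by w, the inverse 2^32 / w is computed in advance, so that at run time the division becomes a multiplication by the wmult[] entry followed by >> 32. That is the purpose of the sched_prio_to_wmult[] table. When __calc_delta() divides by rw, it likewise first inverts rw (the variable is named lw in the code):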

#define WMULT_CONST	(~0U)
#define WMULT_SHIFT	32

static void __update_inv_weight(struct load_weight *lw)
{
	unsigned long w;

	if (likely(lw->inv_weight))
		return;

	w = scale_load_down(lw->weight);

	if (BITS_PER_LONG > 32 && unlikely(w >= WMULT_CONST))
		lw->inv_weight = 1;
	else if (unlikely(!w))
		lw->inv_weight = WMULT_CONST;
	else
		lw->inv_weight = WMULT_CONST / w;
}
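The multiply-and-shift trick can be tried out in userspace with the sketch below. It is a simplified illustration modeled on the idea behind `__calc_delta()`, not the kernel function itself: `calc_delta()` here takes plain 32-bit weights instead of a `struct load_weight`, recomputes the inverse on every call instead of caching it, and `mul_u64_u32_shr()` is a portable reimplementation written for this example.

```c
#include <assert.h>
#include <stdint.h>

#define WMULT_CONST 0xffffffffU /* equals (~0U) for a 32-bit unsigned int */
#define WMULT_SHIFT 32

/* (a * mul) >> shift without overflowing 64 bits; requires shift <= 32. */
static uint64_t mul_u64_u32_shr(uint64_t a, uint32_t mul, unsigned int shift)
{
	uint32_t ah = (uint32_t)(a >> 32);
	uint32_t al = (uint32_t)a;
	uint64_t ret = ((uint64_t)al * mul) >> shift;

	if (ah)
		ret += ((uint64_t)ah * mul) << (32 - shift);
	return ret;
}

/*
 * Approximate delta * weight / lw_weight with one inverse lookup,
 * multiplications and shifts instead of a 64-bit division by lw_weight.
 */
static uint64_t calc_delta(uint64_t delta, uint32_t weight, uint32_t lw_weight)
{
	/* Inverse of the divisor, as in __update_inv_weight(). */
	uint32_t inv = lw_weight ? WMULT_CONST / lw_weight : WMULT_CONST;
	uint64_t fact = (uint64_t)weight * inv;
	unsigned int shift = WMULT_SHIFT;

	/* Keep fact within 32 bits, trading a little precision for range. */
	while (fact >> 32) {
		fact >>= 1;
		shift--;
	}
	return mul_u64_u32_shr(delta, (uint32_t)fact, shift);
}
```

Because the inverse is truncated, the result can come out a nanosecond or two below the exact quotient: `calc_delta(6000000, 1024, 2048)` lands just under the exact 3000000 ns. That loss is the price of replacing the division on the hot path.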
