import requests

from bs4 import BeautifulSoup

import traceback

import re

def getHTMLtext(url, code="utf-8"):

try:

r =requests.get(url)

r.raise_for_status()

r.encoding = code

print("test")

return r.text

except:

return ""

def getStockList(list,stockURL):

html = getHTMLtext(stockURL,"GB2312")

print("getstockList start")

soup = BeautifulSoup(html,'html.parser')

a = soup.find_all('a')

for i in a:

try:

href = i.attrs['href']

list.append(re.findall(r"[s][hz]\d{6}",href)[0])

except:

continue

def getStockInfo(list,stockURL,filePath):

count = 0

for stock in list:

url = stockURL + stock +".html"

html =getHTMLtext(url)

try:

if html=="":

continue

infoDict = {}

soup = BeautifulSoup(html,"html.parser")

stockInfo = soup.find('div',attrs={'class':'stock-bets'})

name = stockInfo.find_all(attrs={'class':'bets-name'})[0]

infoDict.update({'股票名称': name.next.split()[0]})

keylist =stockInfo.find_all('dt')

vauleList = stockInfo.find_all('dd')

for i in range(len(keylist)):

key =keylist[i].text

vaule = vauleList[i].text

infoDict[key]= vaule

with open(filePath,'a',encoding='utf-8') as f:

f.write( str(infoDict) + '\n')

count= count+1

print("\r当前进度: {:.2f}%".format(count*100/len(list,end="")))

except:

count =count +1

print("\r当前进度: {:.2f}%".format(count*100/len(list)),end="")

continue

def main():

print("start")

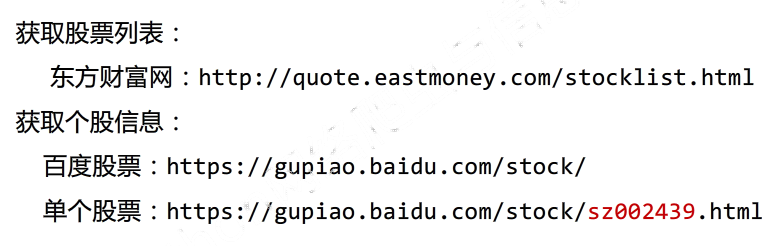

stock_list_url='http://quote.eastmoney.com/stocklist.html'

stock_info_url = 'https://gupiao.baidu.com/stock/'

output_file = 'D:/BaiduStockInfo.txt'

slist=[]

getStockList(slist,stock_list_url)

getStockInfo(slist,stock_info_url,output_file)

print("end")

main()
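The code extraction in `getStockList` relies on the pattern `[s][hz]\d{6}`: a literal `s`, then `h` (Shanghai) or `z` (Shenzhen), then six digits. The sketch below isolates that step so it can be tried without network access. The helper name `extract_codes` and the sample URLs are hypothetical, and the de-duplication is an addition of this sketch, useful in case a list page links the same stock more than once.

```python
import re

# Equivalent to the original r"[s][hz]\d{6}": "s", then "h" or "z", then six digits.
STOCK_CODE = re.compile(r"s[hz]\d{6}")


def extract_codes(hrefs):
    """Hypothetical helper: return the first stock code in each href,
    skipping links without one and dropping repeats (first-seen order kept)."""
    seen = set()
    codes = []
    for href in hrefs:
        match = STOCK_CODE.search(href)  # first match, like findall(...)[0]
        if match is None:
            continue  # not a stock link
        code = match.group(0)
        if code not in seen:
            seen.add(code)
            codes.append(code)
    return codes


if __name__ == "__main__":
    # Hypothetical sample links
    sample = [
        "http://quote.eastmoney.com/sh600000.html",
        "http://quote.eastmoney.com/sz000001.html",
        "http://quote.eastmoney.com/sh600000.html",  # repeated link
        "http://www.eastmoney.com/",                 # no code
    ]
    print(extract_codes(sample))  # ['sh600000', 'sz000001']
```

Using `search` instead of `findall(...)[0]` returns `None` for a link without a code, so no `try`/`except IndexError` is needed.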

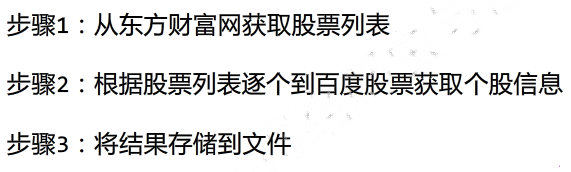
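`getStockInfo` turns each `<dt>` label and the `<dd>` value at the same position into a dictionary entry. As a rough illustration of that pairing, here is a sketch that uses the standard library's `html.parser.HTMLParser` in place of BeautifulSoup (a swapped-in technique, not the original's). Unlike the original, it scans the whole fragment rather than only the `stock-bets` div, strips surrounding whitespace, and assumes every `<dt>` and `<dd>` is explicitly closed. The class `DtDdParser`, the function `parse_pairs`, and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class DtDdParser(HTMLParser):
    """Hypothetical parser: collect the text of every <dt> and <dd>, in document order."""

    def __init__(self):
        super().__init__()
        self.keys = []
        self.values = []
        self._current = None  # "dt", "dd", or None when outside both
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("dt", "dd"):
            self._current = tag
            self._buffer = []

    def handle_data(self, data):
        # text from nested tags (e.g. <span>) inside a dt/dd is kept too
        if self._current is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            text = "".join(self._buffer).strip()
            (self.keys if tag == "dt" else self.values).append(text)
            self._current = None


def parse_pairs(html):
    """Pair each <dt> label with the <dd> value at the same position."""
    parser = DtDdParser()
    parser.feed(html)
    parser.close()
    # zip stops at the shorter list, so a missing <dd> cannot raise IndexError
    return dict(zip(parser.keys, parser.values))


if __name__ == "__main__":
    sample = ('<div class="stock-bets"><dl><dt>Open</dt><dd>10.00</dd>'
              '<dt>Volume</dt><dd> 12345 </dd></dl></div>')
    print(parse_pairs(sample))  # {'Open': '10.00', 'Volume': '12345'}
```

Pairing with `zip` means a page with more labels than values yields a shorter dictionary instead of an exception.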

Python 3.x Web Scraping Study: Notes on a Targeted Stock-Data Crawler
