
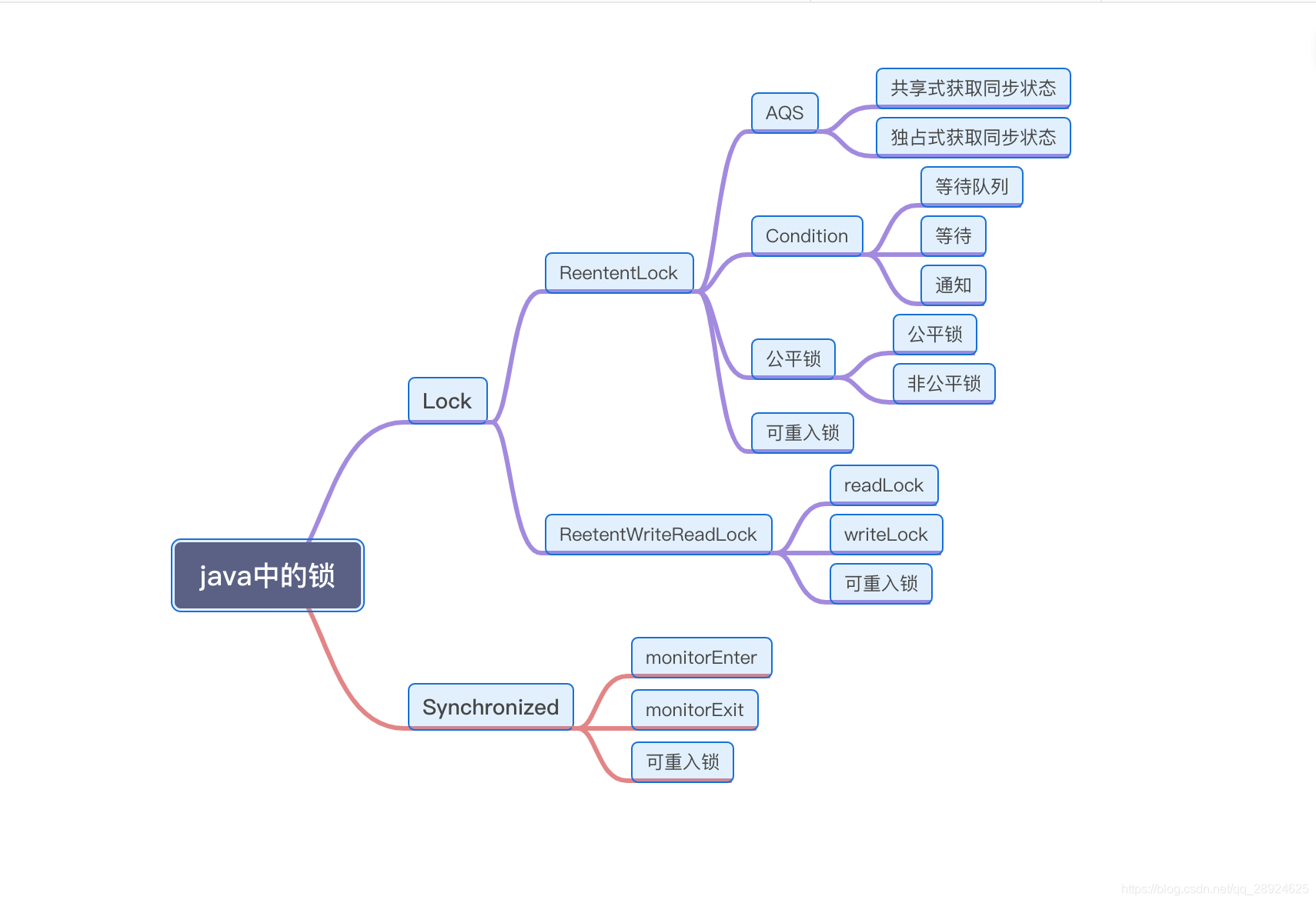

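A mind map I drew to summarize the contents of this article

1. Lock: definition and API

1.1 Definition of Lock

A lock controls how multiple threads access a shared resource. Before Lock was introduced (in Java 5), Java implemented locking through the synchronized keyword.

Lock is an interface that defines the basic operations for acquiring and releasing a lock.

1.2 The Lock API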

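| Method | Description |
|---|---|
| void lock() | Acquires the lock. The call blocks until the lock is obtained; when the method returns, the caller holds the lock |
| void lockInterruptibly() throws InterruptedException | Acquires the lock interruptibly. Unlike lock(), this method responds to interruption: the current thread can be interrupted while it is waiting for the lock |
| boolean tryLock() | Tries to acquire the lock without blocking. The call returns immediately; true means the lock was acquired |
| boolean tryLock(long time, TimeUnit unit) throws InterruptedException | Acquires the lock with a timeout. The call returns in one of three cases: ① the current thread acquires the lock before the timeout; ② the current thread is interrupted by another thread; ③ the timeout elapses |
| void unlock() | Releases the lock |
| Condition newCondition() | Returns a wait/notify component bound to this lock. A thread must hold the lock before it calls await() on the Condition; the call then releases the lock and blocks |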
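The usual calling pattern for these methods can be sketched as follows. This is a minimal illustration, not code from the original article: the class `LockedCounter` and its method names are made up for the example, and `ReentrantLock` is used as the `Lock` implementation.

```java
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical counter guarded by a Lock; the class name is illustrative only. */
class LockedCounter {
    private final Lock lock = new ReentrantLock();
    private int value;

    /** Blocking acquisition: lock() before the try block, unlock() in finally. */
    int increment() {
        lock.lock();
        try {
            return ++value;
        } finally {
            lock.unlock();
        }
    }

    /** Timed acquisition: gives up if the lock is not obtained within the timeout. */
    boolean tryIncrement(long timeout, TimeUnit unit) {
        boolean acquired;
        try {
            acquired = lock.tryLock(timeout, unit);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // keep the interrupt status for callers
            return false;
        }
        if (!acquired) {
            return false;
        }
        try {
            value++;
            return true;
        } finally {
            lock.unlock();
        }
    }

    /** Reads the current value under the lock. */
    int get() {
        lock.lock();
        try {
            return value;
        } finally {
            lock.unlock();
        }
    }
}
```

Calling lock() outside the try block means that unlock() in finally only runs once the lock is really held.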

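1.3 Lock versus synchronized: pros and cons

The synchronized keyword acquires the lock implicitly (through the object's monitor), but it also fixes how the lock is acquired and released: acquire first, then release, in a block-structured way. This simplifies synchronization management, but it is less flexible than acquiring and releasing locks explicitly.

Consider a scenario: acquire lock A, then lock B; once B is held, release A and acquire C; once C is held, release B and acquire D. This pattern is hard to express with synchronized, but straightforward with Lock.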
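The A, B, C, D scenario is often called hand-over-hand locking (lock coupling). A rough sketch on a sorted linked list is given here; the class `HandOverHandList` and its node layout are assumptions made for the illustration and do not come from the original article.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical sorted list that traverses with hand-over-hand locking. */
class HandOverHandList {
    private static final class Node {
        final int value;
        Node next;                        // guarded by this node's lock
        final Lock lock = new ReentrantLock();
        Node(int value) { this.value = value; }
    }

    // Sentinel head, so every real node has a predecessor that can be locked.
    private final Node head = new Node(Integer.MIN_VALUE);

    /** Inserts in ascending order while holding at most two node locks at a time. */
    void add(int value) {
        Node pred = head;
        pred.lock.lock();
        try {
            Node curr = pred.next;
            while (curr != null && curr.value < value) {
                curr.lock.lock();     // acquire the next lock first...
                pred.lock.unlock();   // ...then release the previous one
                pred = curr;
                curr = pred.next;
            }
            Node node = new Node(value);
            node.next = curr;
            pred.next = node;
        } finally {
            pred.lock.unlock();       // pred is always the lock currently held
        }
    }

    /** Collects the values in list order using the same coupling scheme. */
    List<Integer> snapshot() {
        List<Integer> out = new ArrayList<>();
        Node pred = head;
        pred.lock.lock();
        try {
            for (Node curr = pred.next; curr != null; curr = pred.next) {
                curr.lock.lock();
                pred.lock.unlock();
                pred = curr;
                out.add(curr.value);
            }
        } finally {
            pred.lock.unlock();
        }
        return out;
    }
}
```

The acquire and release calls overlap instead of nesting, which is exactly what a synchronized block cannot express.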

The Lock interface offers features that the synchronized keyword lacks:

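| Feature | Description |
|---|---|
| Non-blocking attempt to acquire the lock | The current thread tries to acquire the lock; if no other thread holds it at that moment, the thread acquires and holds the lock |
| Interruptible lock acquisition | Unlike with synchronized, a thread waiting for the lock can respond to interruption: when it is interrupted, InterruptedException is thrown and the wait ends |
| Lock acquisition with a timeout | The thread tries to acquire the lock before a given deadline; if the deadline passes and the lock is still unavailable, the call returns |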
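The interruptible case can be demonstrated with a small sketch. The class name `InterruptibleAcquireDemo` is hypothetical; `hasQueuedThread` is a `ReentrantLock` monitoring method used here only to wait until the worker is really queued.

```java
import java.util.concurrent.atomic.AtomicBoolean;
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical demo: a thread blocked in lockInterruptibly() reacts to interrupt(). */
class InterruptibleAcquireDemo {
    /** Returns true if the worker left lockInterruptibly() through InterruptedException. */
    static boolean run() {
        ReentrantLock lock = new ReentrantLock();
        AtomicBoolean sawInterrupt = new AtomicBoolean(false);
        lock.lock(); // the calling thread keeps the lock, so the worker has to wait
        try {
            Thread worker = new Thread(() -> {
                try {
                    lock.lockInterruptibly();
                    lock.unlock(); // not reached here: the lock stays held until join() returns
                } catch (InterruptedException e) {
                    sawInterrupt.set(true);
                }
            });
            worker.start();
            while (!lock.hasQueuedThread(worker)) {
                Thread.yield(); // wait until the worker sits in the synchronization queue
            }
            worker.interrupt();
            worker.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        } finally {
            lock.unlock();
        }
        return sawInterrupt.get();
    }
}
```

A thread blocked on a synchronized monitor would stay blocked in the same situation, because entering a monitor does not respond to interrupts.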

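2. AQS: the queued synchronizer

The queued synchronizer, AbstractQueuedSynchronizer, is the base framework for building locks and other synchronization components. It uses an int member variable to represent the synchronization state and a built-in FIFO (first in, first out) queue to line up the threads competing for the resource.

The main way to use the synchronizer is inheritance: a subclass extends AQS and implements its overridable methods to manage the synchronization state. The state itself is read and modified through three methods: getState(), setState(int newState), and compareAndSetState(int expect, int update).

Why the synchronizer exists:

The relationship between a lock and the synchronizer: a lock faces the user; it defines the interface through which users interact with it and hides the implementation details. The synchronizer faces the implementer of the lock; it simplifies writing a lock and shields the implementer from low-level work such as managing the synchronization state and queuing, parking, and waking threads.

2.1 The queued synchronizer API

AQS is designed around the template method pattern: the user extends AQS and overrides specific methods. The overridable methods are tryAcquire(int), tryRelease(int), tryAcquireShared(int), tryReleaseShared(int), and isHeldExclusively().

The template methods the synchronizer provides fall into three groups:

- Acquiring and releasing the synchronization state exclusively

- Acquiring and releasing the synchronization state in shared mode

- Querying the threads waiting in the synchronization queue

Writing a custom lock is a good way to deepen our understanding of AQS.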

package chenyi.demo.lock;

import java.util.concurrent.TimeUnit;

import java.util.concurrent.locks.AbstractQueuedSynchronizer;

import java.util.concurrent.locks.Condition;

import java.util.concurrent.locks.Lock;

/**

*

* @author chenyi

*/

public class MyFirstLock implements Lock {

private static class Sync extends AbstractQueuedSynchronizer{

// Try to acquire the lock

@Override

protected boolean tryAcquire(int acquires) {

if (compareAndSetState(0,1)){

setExclusiveOwnerThread(Thread.currentThread());

return true;

}

return false;

}

// Release the lock

@Override

protected boolean tryRelease(int arg) {

if (getState() == 0){

throw new IllegalMonitorStateException();

}

setExclusiveOwnerThread(null);

setState(0);

return true;

}

// Whether the lock is currently held

@Override

protected boolean isHeldExclusively() {

return getState() == 1;

}

Condition newCondition(){

return new ConditionObject();

}

}

private final Sync sync = new Sync();

@Override

public void lock() {

sync.acquire(1);

}

@Override

public void lockInterruptibly() throws InterruptedException {

sync.acquireInterruptibly(1);

}

@Override

public boolean tryLock() {

return sync.tryAcquire(1);

}

@Override

public boolean tryLock(long time, TimeUnit unit) throws InterruptedException {

return sync.tryAcquireNanos(1,unit.toNanos(time));

}

@Override

public void unlock() {

sync.release(1);

}

@Override

public Condition newCondition() {

return sync.newCondition();

}

}

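The custom lock above controls acquisition and release through its Sync class, but the real work is still done by AQS. When extending AQS for this lock, we override the methods shown in Sync: tryAcquire, tryRelease, and isHeldExclusively.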
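MyFirstLock only exercises the exclusive methods. As a complement, here is a rough sketch of the shared-mode pair, tryAcquireShared and tryReleaseShared, in the form of a one-shot latch. The class `OneShotLatch` and its `demo` helper are assumptions made for this illustration; the idea is similar to the latch example in the AQS Javadoc.

```java
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.locks.AbstractQueuedSynchronizer;

/** Hypothetical one-shot latch: state 0 means closed, state 1 means open. */
class OneShotLatch {
    private static final class Sync extends AbstractQueuedSynchronizer {
        @Override
        protected int tryAcquireShared(int ignored) {
            return getState() == 1 ? 1 : -1; // a negative value tells AQS to queue the thread
        }

        @Override
        protected boolean tryReleaseShared(int ignored) {
            setState(1);
            return true; // true tells AQS to wake the queued threads
        }
    }

    private final Sync sync = new Sync();

    void await() throws InterruptedException {
        sync.acquireSharedInterruptibly(1);
    }

    void open() {
        sync.releaseShared(1);
    }

    /** Starts two waiting threads, opens the latch, and reports how many got through. */
    static int demo() {
        OneShotLatch latch = new OneShotLatch();
        AtomicInteger passed = new AtomicInteger();
        Thread[] waiters = new Thread[2];
        for (int i = 0; i < waiters.length; i++) {
            waiters[i] = new Thread(() -> {
                try {
                    latch.await();
                    passed.incrementAndGet();
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
            });
            waiters[i].start();
        }
        latch.open();
        for (Thread t : waiters) {
            try {
                t.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return passed.get();
    }
}
```

Because the acquisition is shared, every waiting thread passes once the latch is open; none of them excludes the others.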

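2.2 How AQS is implemented

2.2.1 How AQS works

- The synchronizer relies on an internal synchronization queue (a FIFO doubly linked queue) to manage the synchronization state. When the current thread fails to acquire the state, the synchronizer wraps the thread, its wait status, and related information in a node (Node), appends the node to the tail of the queue, and blocks the thread. When the state is released, the synchronizer wakes the thread in the first waiting node so that it can try again.

- Each Node in the synchronization queue stores a reference to the thread that failed to acquire the state, its wait status, and its predecessor and successor nodes. The node's fields and status values are as follows:

First, the source code: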

/**

* Wait queue node class.

*

* <p>The wait queue is a variant of a "CLH" (Craig, Landin, and

* Hagersten) lock queue. CLH locks are normally used for

* spinlocks. We instead use them for blocking synchronizers, but

* use the same basic tactic of holding some of the control

* information about a thread in the predecessor of its node. A

* "status" field in each node keeps track of whether a thread

* should block. A node is signalled when its predecessor

* releases. Each node of the queue otherwise serves as a

* specific-notification-style monitor holding a single waiting

* thread. The status field does NOT control whether threads are

* granted locks etc though. A thread may try to acquire if it is

* first in the queue. But being first does not guarantee success;

* it only gives the right to contend. So the currently released

* contender thread may need to rewait.

*

* <p>To enqueue into a CLH lock, you atomically splice it in as new

* tail. To dequeue, you just set the head field.

* <pre>

* +------+ prev +-----+ +-----+

* head | | <---- | | <---- | | tail

* +------+ +-----+ +-----+

* </pre>

*

* <p>Insertion into a CLH queue requires only a single atomic

* operation on "tail", so there is a simple atomic point of

* demarcation from unqueued to queued. Similarly, dequeuing

* involves only updating the "head". However, it takes a bit

* more work for nodes to determine who their successors are,

* in part to deal with possible cancellation due to timeouts

* and interrupts.

*

* <p>The "prev" links (not used in original CLH locks), are mainly

* needed to handle cancellation. If a node is cancelled, its

* successor is (normally) relinked to a non-cancelled

* predecessor. For explanation of similar mechanics in the case

* of spin locks, see the papers by Scott and Scherer at

* http://www.cs.rochester.edu/u/scott/synchronization/

*

* <p>We also use "next" links to implement blocking mechanics.

* The thread id for each node is kept in its own node, so a

* predecessor signals the next node to wake up by traversing

* next link to determine which thread it is. Determination of

* successor must avoid races with newly queued nodes to set

* the "next" fields of their predecessors. This is solved

* when necessary by checking backwards from the atomically

* updated "tail" when a node's successor appears to be null.

* (Or, said differently, the next-links are an optimization

* so that we don't usually need a backward scan.)

*

* <p>Cancellation introduces some conservatism to the basic

* algorithms. Since we must poll for cancellation of other

* nodes, we can miss noticing whether a cancelled node is

* ahead or behind us. This is dealt with by always unparking

* successors upon cancellation, allowing them to stabilize on

* a new predecessor, unless we can identify an uncancelled

* predecessor who will carry this responsibility.

*

* <p>CLH queues need a dummy header node to get started. But

* we don't create them on construction, because it would be wasted

* effort if there is never contention. Instead, the node

* is constructed and head and tail pointers are set upon first

* contention.

*

* <p>Threads waiting on Conditions use the same nodes, but

* use an additional link. Conditions only need to link nodes

* in simple (non-concurrent) linked queues because they are

* only accessed when exclusively held. Upon await, a node is

* inserted into a condition queue. Upon signal, the node is

* transferred to the main queue. A special value of status

* field is used to mark which queue a node is on.

*

* <p>Thanks go to Dave Dice, Mark Moir, Victor Luchangco, Bill

* Scherer and Michael Scott, along with members of JSR-166

* expert group, for helpful ideas, discussions, and critiques

* on the design of this class.

*/

static final class Node {

/** Marker to indicate a node is waiting in shared mode */

static final Node SHARED = new Node();

/** Marker to indicate a node is waiting in exclusive mode */

static final Node EXCLUSIVE = null;

/** waitStatus value to indicate thread has cancelled */

static final int CANCELLED = 1;

/** waitStatus value to indicate successor's thread needs unparking */

static final int SIGNAL = -1;

/** waitStatus value to indicate thread is waiting on condition */

static final int CONDITION = -2;

/**

* waitStatus value to indicate the next acquireShared should

* unconditionally propagate

*/

static final int PROPAGATE = -3;

/**

* Status field, taking on only the values:

* SIGNAL: The successor of this node is (or will soon be)

* blocked (via park), so the current node must

* unpark its successor when it releases or

* cancels. To avoid races, acquire methods must

* first indicate they need a signal,

* then retry the atomic acquire, and then,

* on failure, block.

* CANCELLED: This node is cancelled due to timeout or interrupt.

* Nodes never leave this state. In particular,

* a thread with cancelled node never again blocks.

* CONDITION: This node is currently on a condition queue.

* It will not be used as a sync queue node

* until transferred, at which time the status

* will be set to 0. (Use of this value here has

* nothing to do with the other uses of the

* field, but simplifies mechanics.)

* PROPAGATE: A releaseShared should be propagated to other

* nodes. This is set (for head node only) in

* doReleaseShared to ensure propagation

* continues, even if other operations have

* since intervened.

* 0: None of the above

*

* The values are arranged numerically to simplify use.

* Non-negative values mean that a node doesn't need to

* signal. So, most code doesn't need to check for particular

* values, just for sign.

*

* The field is initialized to 0 for normal sync nodes, and

* CONDITION for condition nodes. It is modified using CAS

* (or when possible, unconditional volatile writes).

*/

volatile int waitStatus;

/**

* Link to predecessor node that current node/thread relies on

* for checking waitStatus. Assigned during enqueuing, and nulled

* out (for sake of GC) only upon dequeuing. Also, upon

* cancellation of a predecessor, we short-circuit while

* finding a non-cancelled one, which will always exist

* because the head node is never cancelled: A node becomes

* head only as a result of successful acquire. A

* cancelled thread never succeeds in acquiring, and a thread only

* cancels itself, not any other node.

*/

volatile Node prev;

/**

* Link to the successor node that the current node/thread

* unparks upon release. Assigned during enqueuing, adjusted

* when bypassing cancelled predecessors, and nulled out (for

* sake of GC) when dequeued. The enq operation does not

* assign next field of a predecessor until after attachment,

* so seeing a null next field does not necessarily mean that

* node is at end of queue. However, if a next field appears

* to be null, we can scan prev's from the tail to

* double-check. The next field of cancelled nodes is set to

* point to the node itself instead of null, to make life

* easier for isOnSyncQueue.

*/

volatile Node next;

/**

* The thread that enqueued this node. Initialized on

* construction and nulled out after use.

*/

volatile Thread thread;

/**

* Link to next node waiting on condition, or the special

* value SHARED. Because condition queues are accessed only

* when holding in exclusive mode, we just need a simple

* linked queue to hold nodes while they are waiting on

* conditions. They are then transferred to the queue to

* re-acquire. And because conditions can only be exclusive,

* we save a field by using special value to indicate shared

* mode.

*/

Node nextWaiter;

/**

* Returns true if node is waiting in shared mode.

*/

final boolean isShared() {

return nextWaiter == SHARED;

}

/**

* Returns previous node, or throws NullPointerException if null.

* Use when predecessor cannot be null. The null check could

* be elided, but is present to help the VM.

*

* @return the predecessor of this node

*/

final Node predecessor() throws NullPointerException {

Node p = prev;

if (p == null)

throw new NullPointerException();

else

return p;

}

Node() { // Used to establish initial head or SHARED marker

}

Node(Thread thread, Node mode) { // Used by addWaiter

this.nextWaiter = mode;

this.thread = thread;

}

Node(Thread thread, int waitStatus) { // Used by Condition

this.waitStatus = waitStatus;

this.thread = thread;

}

}

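| Field and type | Meaning |
|---|---|
| int waitStatus | Wait status. ① CANCELLED (1): the thread waiting in the synchronization queue timed out or was interrupted and must be removed from the queue; a node never leaves this state. ② SIGNAL (-1): the successor's thread is waiting; when the current node's thread releases the synchronization state or is cancelled, it notifies the successor so that the successor's thread can run. ③ CONDITION (-2): the node is in a wait queue and its thread is waiting on a Condition; after another thread calls signal() on that Condition, the node moves from the wait queue to the synchronization queue and joins the competition for the state. ④ PROPAGATE (-3): the next shared acquisition will be propagated unconditionally. ⑤ 0: initial state (the source defines no named constant for it) |
| Node prev | Predecessor node; set when the node is appended to the tail of the synchronization queue |
| Node next | Successor node |
| Node nextWaiter | Next node in the wait queue. If the current node is shared, this field holds the SHARED constant; in other words, the node mode (exclusive or shared) and the wait-queue successor share one field |
| Thread thread | The thread trying to acquire the synchronization state |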

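Nodes are the building blocks of the synchronization queue. AQS keeps references to the head node and the tail node; a thread that fails to acquire the synchronization state is wrapped in a node and appended to the tail of the queue.

2.2.2 Acquiring and releasing the synchronization state exclusively

A concrete implementation is FairSync in ReentrantLock (the fair lock), which acquires and releases the synchronization state exclusively.

The acquire() method, which obtains the synchronization state: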

public final void acquire(int arg) {

if (!tryAcquire(arg) &&

acquireQueued(addWaiter(Node.EXCLUSIVE), arg))

selfInterrupt();

}

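acquire first calls tryAcquire to see whether the synchronization state can be obtained. If it cannot, addWaiter() creates a node for the current thread and appends it to the tail of the queue, and acquireQueued() then makes the queued node retry until the acquisition succeeds, parking the thread in between.

Below are the implementations of addWaiter and acquireQueued, together with the enq helper: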

private Node addWaiter(Node mode) {

Node node = new Node(Thread.currentThread(), mode);

// Try the fast path of enq; backup to full enq on failure

Node pred = tail;

if (pred != null) {

node.prev = pred;

if (compareAndSetTail(pred, node)) {

pred.next = node;

return node;

}

}

enq(node);

return node;

}

private Node enq(final Node node) {

for (;;) {

Node t = tail;

if (t == null) { // Must initialize

if (compareAndSetHead(new Node()))

tail = head;

} else {

node.prev = t;

if (compareAndSetTail(t, node)) {

t.next = node;

return t;

}

}

}

}

final boolean acquireQueued(final Node node, int arg) {

boolean failed = true;

try {

boolean interrupted = false;

for (;;) {

final Node p = node.predecessor();

if (p == head && tryAcquire(arg)) {

setHead(node);

p.next = null; // help GC

failed = false;

return interrupted;

}

if (shouldParkAfterFailedAcquire(p, node) &&

parkAndCheckInterrupt())

interrupted = true;

}

} finally {

if (failed)

cancelAcquire(node);

}

}

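The code above uses compareAndSetTail so that nodes are appended safely under contention. In enq(final Node node), the synchronizer retries in an infinite loop until the node has been added correctly: the thread returns from the method only after a CAS has installed the node as the tail.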
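The retry loop can be modelled outside AQS with AtomicReference. The sketch below is a simplified model rather than the real AQS code: `CasQueueSketch`, its `Node` class, and the `demo` helper are invented for the illustration.

```java
import java.util.concurrent.atomic.AtomicReference;

/** Simplified model of the CAS-based tail append; not the real AQS implementation. */
class CasQueueSketch {
    static final class Node {
        final String name;
        volatile Node prev;
        volatile Node next;
        Node(String name) { this.name = name; }
    }

    private final AtomicReference<Node> head = new AtomicReference<>();
    private final AtomicReference<Node> tail = new AtomicReference<>();

    /** Appends a node, retrying until the CAS on the tail succeeds. */
    void enqueue(Node node) {
        for (;;) {
            Node t = tail.get();
            if (t == null) {
                // Lazily install a dummy head on first use, then retry the append
                Node dummy = new Node("dummy");
                if (head.compareAndSet(null, dummy)) {
                    tail.set(dummy);
                }
            } else {
                node.prev = t;                       // link backwards before publishing
                if (tail.compareAndSet(t, node)) {   // only one thread wins per round
                    t.next = node;
                    return;
                }
            }
        }
    }

    /** Counts real nodes by walking prev links from the tail; the dummy is skipped. */
    int length() {
        int n = 0;
        for (Node p = tail.get(); p != null && p.prev != null; p = p.prev) {
            n++;
        }
        return n;
    }

    /** Appends from several threads at once and returns the resulting queue length. */
    static int demo(int threads, int perThread) {
        CasQueueSketch queue = new CasQueueSketch();
        Thread[] workers = new Thread[threads];
        for (int i = 0; i < threads; i++) {
            workers[i] = new Thread(() -> {
                for (int j = 0; j < perThread; j++) {
                    queue.enqueue(new Node("n"));
                }
            });
            workers[i].start();
        }
        for (Thread t : workers) {
            try {
                t.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return queue.length();
    }
}
```

No append is lost under contention: a thread whose CAS fails simply reads the new tail and tries again.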

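The flow for acquiring the synchronization state exclusively is the call flow of acquire(int arg): try tryAcquire; on failure, enqueue through addWaiter; then, in acquireQueued, a node whose predecessor is the head retries tryAcquire, and otherwise its thread parks until it is woken.

Releasing the synchronization state (tryRelease from ReentrantLock's Sync):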

protected final boolean tryRelease(int releases) {

int c = getState() - releases;

if (Thread.currentThread() != getExclusiveOwnerThread())

throw new IllegalMonitorStateException();

boolean free = false;

if (c == 0) {

free = true;

setExclusiveOwnerThread(null);

}

setState(c);

return free;

}

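The method checks that the current thread is the one holding the lock and throws IllegalMonitorStateException otherwise. It then writes the decremented state; only when the state reaches 0 does it clear the owner thread and return true.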
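The ownership check is easy to observe from the outside. A minimal sketch, with the hypothetical class name `ReleaseOwnershipDemo`:

```java
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical demo of the ownership check performed on release. */
class ReleaseOwnershipDemo {
    /** Calls unlock() on a lock the current thread does not hold. */
    static boolean unlockWithoutHoldingThrows() {
        ReentrantLock lock = new ReentrantLock();
        try {
            lock.unlock();
            return false;
        } catch (IllegalMonitorStateException expected) {
            return true; // the current thread is not the owner
        }
    }

    /** After a matching lock()/unlock() pair the lock is free again. */
    static boolean freeAfterUnlock() {
        ReentrantLock lock = new ReentrantLock();
        lock.lock();
        lock.unlock();
        return !lock.isLocked();
    }
}
```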

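2.2.3 Acquiring and releasing the synchronization state in shared mode

The main difference between shared and exclusive acquisition is whether several threads can hold the synchronization state at the same moment. In exclusive mode only one thread can hold the lock at a time, while in shared mode several threads can hold it simultaneously.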
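The difference can be made concrete by letting three threads each make one non-blocking attempt, once on a Semaphore with two permits and once on a ReentrantLock. The class `SharedVsExclusiveDemo` and its helper are hypothetical; the threads deliberately never release what they acquire, so that the counts stay stable.

```java
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.locks.ReentrantLock;
import java.util.function.BooleanSupplier;

/** Hypothetical comparison of shared and exclusive acquisition. */
class SharedVsExclusiveDemo {
    /** Runs one attempt per thread and counts how many attempts succeeded. */
    static int successes(int threads, BooleanSupplier attempt) {
        AtomicInteger ok = new AtomicInteger();
        Thread[] workers = new Thread[threads];
        for (int i = 0; i < threads; i++) {
            workers[i] = new Thread(() -> {
                if (attempt.getAsBoolean()) {
                    ok.incrementAndGet(); // intentionally never released
                }
            });
            workers[i].start();
        }
        for (Thread t : workers) {
            try {
                t.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return ok.get();
    }

    /** Shared mode: two permits, so two of the three threads get in. */
    static int sharedHolders() {
        Semaphore permits = new Semaphore(2);
        return successes(3, permits::tryAcquire);
    }

    /** Exclusive mode: only one of the three threads gets the lock. */
    static int exclusiveHolders() {
        ReentrantLock lock = new ReentrantLock();
        return successes(3, lock::tryLock);
    }
}
```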

Next, let's look at the source code for acquiring the synchronization state in shared mode, using Semaphore as the concrete implementation.

public final void acquireShared(int arg) {

if (tryAcquireShared(arg) < 0)

doAcquireShared(arg);

}

// Semaphore's concrete implementation (the fair version, FairSync)

protected int tryAcquireShared(int acquires) {

for (;;) {

if (hasQueuedPredecessors())

return -1;

int available = getState();

int remaining = available - acquires;

if (remaining < 0 ||

compareAndSetState(available, remaining))

return remaining;

}

}

// The default implementation in AQS

private void doAcquireShared(int arg) {

final Node node = addWaiter(Node.SHARED);

boolean failed = true;

try {

boolean interrupted = false;

for (;;) {

final Node p = node.predecessor();

if (p == head) {

int r = tryAcquireShared(arg);

if (r >= 0) {

setHeadAndPropagate(node, r);

p.next = null; // help GC

if (interrupted)

selfInterrupt();

failed = false;

return;

}

}

if (shouldParkAfterFailedAcquire(p, node) &&

parkAndCheckInterrupt())

interrupted = true;

}

} finally {

if (failed)

cancelAcquire(node);

}

}

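Likewise, acquireShared first calls tryAcquireShared to try to obtain the synchronization state. If the return value is less than 0, it calls doAcquireShared, which enqueues the thread and keeps trying. Inside doAcquireShared, a node attempts the acquisition only when its predecessor is the head node; once the attempt succeeds (the return value is greater than or equal to 0), it leaves the loop.

As with exclusive mode, a state acquired in shared mode must also be released.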

public final boolean releaseShared(int arg) {

if (tryReleaseShared(arg)) {

doReleaseShared();

return true;

}

return false;

}

private void doReleaseShared() {

/*

* Ensure that a release propagates, even if there are other

* in-progress acquires/releases. This proceeds in the usual

* way of trying to unparkSuccessor of head if it needs

* signal. But if it does not, status is set to PROPAGATE to

* ensure that upon release, propagation continues.

* Additionally, we must loop in case a new node is added

* while we are doing this. Also, unlike other uses of

* unparkSuccessor, we need to know if CAS to reset status

* fails, if so rechecking.

*/

for (;;) {

Node h = head;

if (h != null && h != tail) {

int ws = h.waitStatus;

if (ws == Node.SIGNAL) {

if (!compareAndSetWaitStatus(h, Node.SIGNAL, 0))

continue; // loop to recheck cases

unparkSuccessor(h);

}

else if (ws == 0 &&

!compareAndSetWaitStatus(h, 0, Node.PROPAGATE))

continue; // loop on failed CAS

}

if (h == head) // loop if head changed

break;

}

}

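Releasing in shared mode needs CAS to stay thread-safe: because several threads can hold the state at once, several threads may also release it concurrently.

2.2.4 Exclusive acquisition with a timeout

Exclusive acquisition with a timeout is implemented by the doAcquireNanos method: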

private boolean doAcquireNanos(int arg, long nanosTimeout)

throws InterruptedException {

if (nanosTimeout <= 0L)

return false;

final long deadline = System.nanoTime() + nanosTimeout;

final Node node = addWaiter(Node.EXCLUSIVE);

boolean failed = true;

try {

for (;;) {

final Node p = node.predecessor();

if (p == head && tryAcquire(arg)) {

setHead(node);

p.next = null; // help GC

failed = false;

return true;

}

nanosTimeout = deadline - System.nanoTime();

if (nanosTimeout <= 0L)

return false;

if (shouldParkAfterFailedAcquire(p, node) &&

nanosTimeout > spinForTimeoutThreshold)

LockSupport.parkNanos(this, nanosTimeout);

if (Thread.interrupted())

throw new InterruptedException();

}

} finally {

if (failed)

cancelAcquire(node);

}

}

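A timeout is set here: once it expires, the attempt to acquire the lock ends and the method returns false. Until then the thread keeps looping. On each pass it first checks whether its predecessor is the head node and, if so, tries to acquire; it also recomputes the remaining wait time. When an attempt fails and the remaining time is greater than spinForTimeoutThreshold (1000 nanoseconds), the thread parks for the remaining time through LockSupport.parkNanos. If less than that remains, the thread does not park and simply spins, because such a short park could not be timed accurately.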
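From the caller's side, this path is reached through tryLock(long, TimeUnit). The sketch below, with the hypothetical class name `TimedAcquireDemo`, measures how long a timed attempt takes while another thread holds the lock.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical demo: a timed tryLock gives up once the timeout has elapsed. */
class TimedAcquireDemo {
    /** Returns {1 if acquired else 0, elapsed milliseconds}. */
    static long[] tryWhileHeld(long timeoutMillis) {
        ReentrantLock lock = new ReentrantLock();
        CountDownLatch held = new CountDownLatch(1);
        CountDownLatch done = new CountDownLatch(1);
        Thread holder = new Thread(() -> {
            lock.lock();
            try {
                held.countDown(); // tell the caller that the lock is taken
                done.await();     // keep holding until the caller has finished
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            } finally {
                lock.unlock();
            }
        });
        holder.start();
        try {
            held.await();
            long start = System.nanoTime();
            boolean acquired = lock.tryLock(timeoutMillis, TimeUnit.MILLISECONDS);
            long elapsedMillis = TimeUnit.NANOSECONDS.toMillis(System.nanoTime() - start);
            if (acquired) {
                lock.unlock();
            }
            return new long[] { acquired ? 1 : 0, elapsedMillis };
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return new long[] { 0, -1 };
        } finally {
            done.countDown(); // let the holder release the lock and exit
        }
    }
}
```

The attempt fails, and the elapsed time is at least the requested timeout, since the method only returns false after the deadline has passed.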

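3. Reentrant locks

ReentrantLock is a lock that supports re-entry: a thread may lock the same resource repeatedly.

Unlike the synchronized keyword, ReentrantLock does not re-enter implicitly, but a thread that already holds the lock can call lock() again and acquire the lock without being blocked.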

protected final boolean tryAcquire(int acquires) {

final Thread current = Thread.currentThread();

int c = getState();

if (c == 0) {

if (!hasQueuedPredecessors() &&

compareAndSetState(0, acquires)) {

setExclusiveOwnerThread(current);

return true;

}

}

else if (current == getExclusiveOwnerThread()) {

int nextc = c + acquires;

if (nextc < 0)

throw new Error("Maximum lock count exceeded");

setState(nextc);

return true;

}

return false;

}

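3.1 How re-entry is implemented

Re-entry means that any thread that has acquired the lock can acquire it again without being blocked by it. Two problems have to be solved for this:

- Acquiring the lock again. The lock has to recognize whether the requesting thread is the thread that currently holds it; if so, the acquisition succeeds again.

- Releasing the lock for good. If a thread has acquired the lock n times, other threads can acquire it only after the n-th release, that is, once state is back to 0. This requires the lock to keep a count that is incremented on every acquisition and represents how many times the lock has been re-acquired; each release decrements the count, and a count of 0 means the lock has been released.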
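The counting described above can be watched through getHoldCount(), a monitoring method on ReentrantLock. A minimal sketch with the hypothetical class name `ReentrancyDemo`:

```java
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical demo: the hold count rises on each lock() and falls on each unlock(). */
class ReentrancyDemo {
    /** Returns the hold count observed after lock, lock, unlock, unlock. */
    static int[] holdCounts() {
        ReentrantLock lock = new ReentrantLock();
        int[] counts = new int[4];
        lock.lock();
        counts[0] = lock.getHoldCount(); // first acquisition
        lock.lock();
        counts[1] = lock.getHoldCount(); // re-entry by the same thread, no blocking
        lock.unlock();
        counts[2] = lock.getHoldCount(); // still held once
        lock.unlock();
        counts[3] = lock.getHoldCount(); // fully released
        return counts;
    }
}
```

The four values are 1, 2, 1 and 0: only the second unlock() makes the lock available to other threads.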

The corresponding method in ReentrantLock:

final boolean nonfairTryAcquire(int acquires) {

final Thread current = Thread.currentThread();

int c = getState();

if (c == 0) {

if (compareAndSetState(0, acquires)) {

setExclusiveOwnerThread(current);

return true;

}

}

else if (current == getExclusiveOwnerThread()) {

int nextc = c + acquires;

if (nextc < 0) // overflow

throw new Error("Maximum lock count exceeded");

setState(nextc);

return true;

}

return false;

}

The code that releases the lock:

protected final boolean tryRelease(int releases) {

int c = getState() - releases;

if (Thread.currentThread() != getExclusiveOwnerThread())

throw new IllegalMonitorStateException();

boolean free = false;

if (c == 0) {

free = true;

setExclusiveOwnerThread(null);

}

setState(c);

return free;

}

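3.2 The difference between fair and non-fair locks

Fairness concerns lock acquisition. If a lock is fair, the order in which it is granted follows the order in which it was requested, that is, FIFO.

Let's look at the implementations in ReentrantLock directly.

The fair lock: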

protected final boolean tryAcquire(int acquires) {

final Thread current = Thread.currentThread();

int c = getState();

if (c == 0) {

if (!hasQueuedPredecessors() &&

compareAndSetState(0, acquires)) {

setExclusiveOwnerThread(current);

return true;

}

}

else if (current == getExclusiveOwnerThread()) {

int nextc = c + acquires;

if (nextc < 0)

throw new Error("Maximum lock count exceeded");

setState(nextc);

return true;

}

return false;

}

The non-fair lock:

final boolean nonfairTryAcquire(int acquires) {

final Thread current = Thread.currentThread();

int c = getState();

if (c == 0) {

if (compareAndSetState(0, acquires)) {

setExclusiveOwnerThread(current);

return true;

}

}

else if (current == getExclusiveOwnerThread()) {

int nextc = c + acquires;

if (nextc < 0) // overflow

throw new Error("Maximum lock count exceeded");

setState(nextc);

return true;

}

return false;

}

Comparing the two, the difference lies in the call to hasQueuedPredecessors:

public final boolean hasQueuedPredecessors() {

// The correctness of this depends on head being initialized

// before tail and on head.next being accurate if the current

// thread is first in queue.

Node t = tail; // Read fields in reverse initialization order

Node h = head;

Node s;

return h != t &&

((s = h.next) == null || s.thread != Thread.currentThread());

}

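It checks whether some other thread is queued ahead of the current thread, that is, whether another thread has been waiting longer.

A non-fair lock tries to take the synchronization state right away. A fair lock first checks whether another thread is queued ahead of the current one and attempts the acquisition only if there is none.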

package chenyi.demo.lock;

import org.junit.Test;

import java.util.ArrayList;

import java.util.Collection;

import java.util.Collections;

import java.util.concurrent.locks.Lock;

import java.util.concurrent.locks.ReentrantLock;

import java.util.stream.Collectors;

public class TestLock {

private static ReentrantLock2 fairLock = new ReentrantLock2(true);

private static ReentrantLock2 unfairLock = new ReentrantLock2(false);

@Test

public void testLockFair() throws InterruptedException {

testLock(fairLock);

}

@Test

public void testLocknonFair() throws InterruptedException {

testLock(unfairLock);

}

private void testLock(ReentrantLock2 lock) throws InterruptedException {

// Five identical workers: each takes the lock and prints the ids of the queued threads twice

Thread[] threads = new Thread[5];

for (int i = 0; i < threads.length; i++) {

threads[i] = new Thread(() -> {

lock.lock();

try {

System.out.println(Thread.currentThread().getId() + " == waiting threads == " + lock.getQueuedThreads123());

System.out.println(Thread.currentThread().getId() + " == waiting threads == " + lock.getQueuedThreads123());

} finally {

lock.unlock();

}

});

}

for (Thread t : threads) {

t.start();

}

// Wait for every worker to finish instead of sleeping for a fixed 6 seconds

for (Thread t : threads) {

t.join();

}

}

public static class ReentrantLock2 extends ReentrantLock{

public ReentrantLock2(boolean fair) {

super(fair);

}

@Override

public Collection<Thread> getQueuedThreads(){

ArrayList<Thread> threads = new ArrayList<>(super.getQueuedThreads());

Collections.reverse(threads);

return threads;

}

public Collection<Long> getQueuedThreads123(){

ArrayList<Thread> threads = new ArrayList<>(super.getQueuedThreads());

Collections.reverse(threads);

return threads.stream().map(thread -> thread.getId()).collect(Collectors.toList());

}

}

}

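The test above is meant to compare the non-fair lock with the fair lock. In my experiments, however, I did not observe the non-fair lock jumping ahead of the threads already waiting in the queue.

Given more time, one could try to reproduce it under heavier concurrency.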
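One possible way to probe the difference under contention is to count how often the lock changes hands between two threads that both loop over lock() and unlock(). This is only a sketch: `FairnessProbe` and `ownerChanges` are hypothetical names, and the numbers depend on the machine and the scheduler, so no particular result is guaranteed.

```java
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical probe: counts how often lock ownership moves to a different thread. */
class FairnessProbe {
    static int ownerChanges(boolean fair, int iterationsPerThread) {
        ReentrantLock lock = new ReentrantLock(fair);
        Thread[] lastOwner = new Thread[1]; // guarded by lock
        int[] changes = new int[1];         // guarded by lock
        Runnable task = () -> {
            for (int i = 0; i < iterationsPerThread; i++) {
                lock.lock();
                try {
                    if (lastOwner[0] != Thread.currentThread()) {
                        lastOwner[0] = Thread.currentThread();
                        changes[0]++;
                    }
                } finally {
                    lock.unlock();
                }
            }
        };
        Thread a = new Thread(task);
        Thread b = new Thread(task);
        a.start();
        b.start();
        try {
            a.join();
            b.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return changes[0];
    }

    public static void main(String[] args) {
        System.out.println("fair:     " + ownerChanges(true, 100_000));
        System.out.println("non-fair: " + ownerChanges(false, 100_000));
    }
}
```

With a fair lock the count is typically much higher, because a releasing thread that asks for the lock again has to queue behind the waiter. With a non-fair lock the releasing thread can often take the lock again before the waiter has been woken.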

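A non-fair lock can starve threads, so why is it the default? Because a fair lock causes more thread context switches than a non-fair one: on every acquisition it has to check whether the thread is at the front of the queue and inspect the node status, so the lock goes to the longest-waiting thread, which must first be woken, whereas a non-fair lock lets a thread that is already running take the lock immediately.

4. The Condition interface

Every Java object has a set of monitor methods (defined in the Object class), mainly wait(), wait(long timeout), notify(), and notifyAll(). Combined with the synchronized keyword, these methods implement the wait/notify pattern.

The Condition interface provides monitor methods similar to those of Object and works together with Lock to implement the wait/notify pattern.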

ReentrantLock reentrantLock = new ReentrantLock();

Condition condition = reentrantLock.newCondition();

@Test

public void testcondition() throws InterruptedException {

new Thread(()->{

reentrantLock.lock();

try {

System.out.println("About to await---");

condition.await();

System.out.println("Woken up, continuing--- ");

} catch (InterruptedException e) {

e.printStackTrace();

}finally {

reentrantLock.unlock();

}

}).start();

new Thread(()->{

reentrantLock.lock();

try {

System.out.println("Going to wake it up---");

condition.signal();

} finally {

reentrantLock.unlock();

}

}).start();

Thread.sleep(3000);

}

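4.1 The Condition interface API

- await(): the calling thread releases the lock and blocks until another thread wakes it by calling signal() or signalAll(), or until it is interrupted.

- awaitUninterruptibly(): like await(), but it does not respond to interruption; the thread keeps waiting until it is signalled.

- awaitNanos(long nanosTimeout): waits with a timeout; once the time has elapsed, the wait ends.

- awaitUntil(Date deadline): waits until the deadline; once it has passed, the method returns.

- signal(): wakes one thread waiting on the Condition; that thread must reacquire the associated lock before it returns from await.

- signalAll(): wakes all threads waiting in the Condition's queue.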
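A classic use of these methods is a bounded buffer with two conditions on one lock. The sketch below is illustrative only: `BoundedBufferSketch` and its `demo` helper are hypothetical names. Each await() call sits in a while loop, so the waiting thread rechecks its condition after every wake-up.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.concurrent.locks.Condition;
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical bounded buffer built on one Lock and two Conditions. */
class BoundedBufferSketch<T> {
    private final Lock lock = new ReentrantLock();
    private final Condition notFull = lock.newCondition();
    private final Condition notEmpty = lock.newCondition();
    private final Deque<T> items = new ArrayDeque<>();
    private final int capacity;

    BoundedBufferSketch(int capacity) {
        this.capacity = capacity;
    }

    /** Blocks while the buffer is full, then stores the item. */
    void put(T item) throws InterruptedException {
        lock.lock();
        try {
            while (items.size() == capacity) {
                notFull.await(); // releases the lock while waiting
            }
            items.addLast(item);
            notEmpty.signal();
        } finally {
            lock.unlock();
        }
    }

    /** Blocks while the buffer is empty, then removes the oldest item. */
    T take() throws InterruptedException {
        lock.lock();
        try {
            while (items.isEmpty()) {
                notEmpty.await();
            }
            T item = items.removeFirst();
            notFull.signal();
            return item;
        } finally {
            lock.unlock();
        }
    }

    /** A producer puts 1..5 into a buffer of capacity 2; the caller takes and sums them. */
    static int demo() {
        BoundedBufferSketch<Integer> buffer = new BoundedBufferSketch<>(2);
        Thread producer = new Thread(() -> {
            try {
                for (int i = 1; i <= 5; i++) {
                    buffer.put(i);
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        producer.start();
        int sum = 0;
        try {
            for (int i = 0; i < 5; i++) {
                sum += buffer.take();
            }
            producer.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return sum;
    }
}
```

Using two conditions lets a producer wake only consumers and a consumer wake only producers, which a single Object monitor cannot distinguish.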

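4.2 How the Condition interface is implemented

ConditionObject is an inner class of the synchronizer AQS, because operations on a Condition require holding the associated lock. Each Condition object contains a queue, and this queue is the key to how the Condition implements wait/notify.

4.2.1 The wait queue

The wait queue is a FIFO queue. Each node in it holds a reference to a thread, namely a thread waiting on the Condition object. When a thread calls Condition.await(), it releases the lock, is wrapped in a node that joins the wait queue, and enters the waiting state. Nodes in the wait queue and in the synchronization queue are of the same type: Node, the static inner class of AQS.

A Condition holds a first node and a last node. When the current thread calls Condition.await(), a node is built from the current thread and appended to the tail of the wait queue.

4.2.2 Waiting

Calling await() on a Condition puts the current thread into the wait queue and releases the lock, and the thread's state changes to waiting. By the time await() returns, the current thread is guaranteed to hold the lock associated with the Condition again.

Source of the await method in ConditionObject: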

public final void await() throws InterruptedException {

if (Thread.interrupted())

throw new InterruptedException();

Node node = addConditionWaiter();

int savedState = fullyRelease(node);

int interruptMode = 0;

while (!isOnSyncQueue(node)) {

LockSupport.park(this);

if ((interruptMode = checkInterruptWhileWaiting(node)) != 0)

break;

}

if (acquireQueued(node, savedState) && interruptMode != THROW_IE)

interruptMode = REINTERRUPT;

if (node.nextWaiter != null) // clean up if cancelled

unlinkCancelledWaiters();

if (interruptMode != 0)

reportInterruptAfterWait(interruptMode);

}

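The thread that calls this method has successfully acquired the lock, which means it is the head node of the synchronization queue. The method wraps the current thread in a node and adds it to the wait queue, then releases the synchronization state and wakes the successor in the synchronization queue; after that the current thread enters the waiting state.

When a node in the wait queue is woken, its thread tries to acquire the synchronization state. If the thread was woken not by Condition.signal() but because the waiting thread was interrupted, InterruptedException is thrown.

4.2.3 Notifying

Calling signal() on a Condition wakes the node that has waited longest in the wait queue (the first node). Before the wake-up, the node is moved to the synchronization queue.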

public final void signal() {

if (!isHeldExclusively())

throw new IllegalMonitorStateException();

Node first = firstWaiter;

if (first != null)

doSignal(first);

}

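The caller must be the thread that holds the lock; the isHeldExclusively() check enforces this. The method then takes the first node of the wait queue, moves it to the synchronization queue, and the node's thread is woken through LockSupport.

The woken thread leaves the while loop in await() (isOnSyncQueue(Node node) now returns true because the node is already in the synchronization queue) and then calls the synchronizer's acquireQueued method to join the competition for the synchronization state.

Once it has acquired the synchronization state (in other words, the lock), the woken thread returns from the await() call it made earlier.

signalAll() performs the equivalent of signal() on every node in the wait queue. The effect is that all waiting nodes are moved to the synchronization queue and the thread of each node is woken.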
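The transfer from the wait queue to the synchronization queue can be observed with the monitoring methods getWaitQueueLength(Condition) and getQueueLength() on ReentrantLock. The following is a sketch under stated assumptions: `SignalAllTransferDemo` is a hypothetical name, and getQueueLength() is documented as an estimate, although it should be stable here because the caller holds the lock while reading it.

```java
import java.util.concurrent.locks.Condition;
import java.util.concurrent.locks.LockSupport;
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical demo: signalAll() moves waiters from the wait queue to the sync queue. */
class SignalAllTransferDemo {
    /** Returns {waiters before signalAll, waiters after, threads queued for the lock after}. */
    static int[] run(int waiters) {
        ReentrantLock lock = new ReentrantLock();
        Condition condition = lock.newCondition();
        boolean[] ready = new boolean[1]; // guarded by lock
        Thread[] threads = new Thread[waiters];
        for (int i = 0; i < waiters; i++) {
            threads[i] = new Thread(() -> {
                lock.lock();
                try {
                    while (!ready[0]) {
                        condition.awaitUninterruptibly();
                    }
                } finally {
                    lock.unlock();
                }
            });
            threads[i].start();
        }
        int[] result = new int[3];
        for (;;) {
            lock.lock(); // the monitoring methods require the caller to hold the lock
            try {
                if (lock.getWaitQueueLength(condition) == waiters) {
                    result[0] = lock.getWaitQueueLength(condition);
                    ready[0] = true;
                    condition.signalAll();
                    result[1] = lock.getWaitQueueLength(condition);
                    result[2] = lock.getQueueLength();
                    break;
                }
            } finally {
                lock.unlock();
            }
            LockSupport.parkNanos(1_000_000L); // brief pause before polling again
        }
        for (Thread t : threads) {
            try {
                t.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return result;
    }
}
```

While the signalling thread still holds the lock, the former waiters are already in the synchronization queue; they return from their await call only after they reacquire the lock one by one.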

Reference: 《Java并发编程的艺术》 (The Art of Java Concurrency Programming), Chapter 5
