Tmall Repeat Buyer Prediction Challenge: Feature Optimization

Tianchi competition page: link

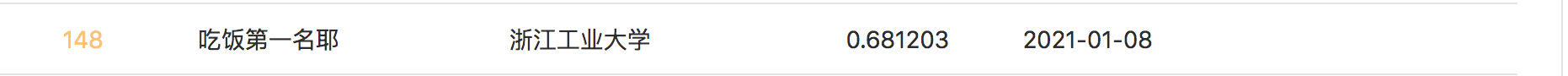

Results

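Background theory

- See also: feature optimization

- Feature selection, also called variable selection or attribute selection, is the process of choosing a subset of relevant features (attributes, indicators) for building a model.

- Goals

  - Simplify the model so that researchers and users can understand it more easily

  - Shorten training time

  - Improve generalization and reduce overfitting (that is, reduce variance)

- The key assumption behind feature selection is that the training data contains many redundant or irrelevant features, so removing them loses little or no information.

- Feature selection involves generating candidate feature subsets, evaluating them, and validating the result.

- Feature search algorithms

  - Exhaustive search: brute force over every subset

  - Greedy search: adds or drops one feature per step, so the number of steps grows linearly with the feature count

  - Simulated annealing: randomized search that occasionally accepts a worse subset to escape local optima

  - Genetic algorithm: evolves a population of candidate subsets through recombination and mutation

  - Neighborhood search: explores subsets close to the current one

- Feature selection methods

  - Filter: scores features with statistics, independently of any model

  - Wrapper: evaluates candidate subsets by training a model on them

  - Embedded: selection happens as part of model training

  - See also: feature selection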
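As a rough illustration of the greedy search listed above, used as a wrapper method, the following sketch adds one feature at a time, keeping whichever candidate raises cross-validated AUC the most. The synthetic data, the `greedy_forward_selection` helper, and the choice of logistic regression are all assumptions made for the example; none of them come from the competition code.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

# Synthetic stand-in data (an assumption; not the Tmall feature table)
X, y = make_classification(n_samples=300, n_features=8, n_informative=3,
                           n_redundant=0, random_state=0)


def greedy_forward_selection(X, y, n_select):
    """Hypothetical helper: greedily add the feature that most improves CV AUC."""
    selected = []
    remaining = list(range(X.shape[1]))
    clf = LogisticRegression(max_iter=1000)
    while len(selected) < n_select:
        best_score, best_feature = -np.inf, None
        for j in remaining:
            cols = selected + [j]
            # Wrapper step: score the candidate subset by training a model on it
            score = cross_val_score(clf, X[:, cols], y, cv=3,
                                    scoring="roc_auc").mean()
            if score > best_score:
                best_score, best_feature = score, j
        selected.append(best_feature)
        remaining.remove(best_feature)
    return selected


chosen = greedy_forward_selection(X, y, n_select=3)
print("Selected feature indices:", chosen)
```

Each round scans every remaining feature, so the number of rounds is linear in the number of features to select, while the total number of model fits grows faster than that.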

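1. Read the data and impute missing values

import numpy as np

import pandas as pd

import warnings

warnings.filterwarnings("ignore")

# Combined feature table; rows with a missing label form the test set
all_data_test = pd.read_csv('../datasets/all_feature.csv')

# Keep only model inputs: drop the target, the prediction column, demographics, and raw behavior-path columns
features_columns = [c for c in all_data_test.columns if c not in ['label', 'prob', 'age_range', 'gender',

    'item_path_x', 'cat_path_x', 'seller_path', 'brand_path_x',

    'time_stamp_path_x', 'action_type_path_x', 'item_path_y', 'cat_path_y', 'user_path', 'brand_path_y',

    'time_stamp_path_y', 'action_type_path_y']]

x_train = all_data_test[~all_data_test['label'].isna()][features_columns]

y_train = all_data_test[~all_data_test['label'].isna()]['label']

x_test = all_data_test[all_data_test['label'].isna()][features_columns]

# Subsample to keep the experiments fast; the logged shapes in later steps come from a 10000-row run
train = x_train.head(2000)

target = y_train.head(2000)

test = x_test.head(200)

from sklearn.impute import SimpleImputer

# Fit the median imputer on the training sample only, then apply it to both sets
imputer = SimpleImputer(strategy="median")

imputer = imputer.fit(train)

train_imputer = imputer.transform(train)

test_imputer = imputer.transform(test)

# The steps below pass `train`; use `train_imputer` instead if `train` still contains NaNs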
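To make the imputation step concrete, here is a minimal sketch on a toy array. The arrays `train_demo` and `test_demo` are made up for illustration. It shows that the medians are learned from the training rows only and then reused to fill gaps in the test rows.

```python
import numpy as np
from sklearn.impute import SimpleImputer

# Toy data (hypothetical): two columns with some missing entries
train_demo = np.array([[1.0, 10.0],
                       [np.nan, 20.0],
                       [3.0, np.nan],
                       [5.0, 40.0]])
test_demo = np.array([[np.nan, np.nan]])

# Learn per-column medians from the training rows, ignoring NaNs
imputer_demo = SimpleImputer(strategy="median").fit(train_demo)
print(imputer_demo.statistics_)  # per-column medians: 3.0 and 20.0

# The test row is filled with the training medians, not its own statistics
test_filled = imputer_demo.transform(test_demo)
print(test_filled)
```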

2. Build the validation function

from sklearn.model_selection import cross_val_score

from sklearn.ensemble import RandomForestClassifier

def feature_selection(train, train_sel, target):

    # Compare 5-fold cross-validated AUC on the full and the selected feature sets
    clf = RandomForestClassifier(n_estimators=100, max_depth=5, random_state=0, n_jobs=-1)

    scores = cross_val_score(clf, train, target, cv=5, scoring="roc_auc")

    scores_sel = cross_val_score(clf, train_sel, target, cv=5, scoring="roc_auc")

    print("No Select AUC: %0.2f" % (scores.mean()))

    # Mean AUC on the selected feature subset
    print("Features Select AUC: %0.2f" % (scores_sel.mean()))
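When the two AUC values need to be compared in code rather than read from the console, a variant that returns them may be handy. The sketch below is one possible shape for that. The `compare_auc` name, the synthetic data, and the smaller forest are assumptions for the example.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score


def compare_auc(X_full, X_sel, y, cv=5):
    """Hypothetical helper: return mean CV AUC for the full and the reduced feature set."""
    clf = RandomForestClassifier(n_estimators=50, max_depth=5, random_state=0)
    auc_full = cross_val_score(clf, X_full, y, cv=cv, scoring="roc_auc").mean()
    auc_sel = cross_val_score(clf, X_sel, y, cv=cv, scoring="roc_auc").mean()
    return auc_full, auc_sel


# Synthetic stand-in data (an assumption; not the Tmall feature table)
X_demo, y_demo = make_classification(n_samples=300, n_features=10,
                                     n_informative=3, random_state=0)

# Pretend the first five columns are the "selected" subset
auc_full, auc_sel = compare_auc(X_demo, X_demo[:, :5], y_demo)
print("full: %.3f, selected: %.3f" % (auc_full, auc_sel))
```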

3. Remove low-variance features: Filter -> Variance Threshold

from sklearn.feature_selection import VarianceThreshold

# Keep only features whose variance exceeds 0.4
sel = VarianceThreshold(threshold=0.4)

sel = sel.fit(train)

train_sel = sel.transform(train)

print('Training data shape before feature selection', train.shape)

print('Training data shape after feature selection', train_sel.shape)

feature_selection(train, train_sel, target)

'''

Training data shape before feature selection (10000, 153)

Training data shape after feature selection (10000, 61)

No Select AUC: 0.61

Features Select AUC: 0.61

'''
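The transformed array no longer carries column names, so it can help to ask the selector which columns survived. A minimal sketch on a made-up frame (`df_demo` and its column names are hypothetical):

```python
import pandas as pd
from sklearn.feature_selection import VarianceThreshold

# Toy frame (hypothetical): one constant, one nearly constant, one spread-out column
df_demo = pd.DataFrame({
    "constant": [1, 1, 1, 1],
    "low_var": [0, 0, 0, 1],
    "high_var": [0, 5, 10, 15],
})

sel_demo = VarianceThreshold(threshold=0.4).fit(df_demo)

# variances_ holds the per-column variance; get_support() is the boolean keep-mask
print(dict(zip(df_demo.columns, sel_demo.variances_)))
kept = df_demo.columns[sel_demo.get_support()].tolist()
print(kept)  # ['high_var']
```

Because the threshold is applied to raw variances, the result depends on each feature's scale. A feature measured in large units can pass the filter simply because of its units.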

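4. Select the best features with univariate statistical tests: Filter -> SelectKBest

from sklearn.feature_selection import SelectKBest

from sklearn.feature_selection import mutual_info_classif

# from sklearn.feature_selection import chi2

# mutual_info_classif estimates the mutual information between each feature and the target;
# larger values mean a stronger dependency, and 0 means the two are independent
# See https://blog.csdn.net/iizhuzhu/article/details/105031532

sel = SelectKBest(mutual_info_classif, k=30)

# chi2 is an alternative score function; it requires non-negative feature values
# sel = SelectKBest(chi2, k=30)

sel = sel.fit(train, target)

train_sel = sel.transform(train)

print('Training data shape before feature selection', train.shape)

print('Training data shape after feature selection', train_sel.shape)

feature_selection(train, train_sel, target)

'''

Training data shape before feature selection (10000, 153)

Training data shape after feature selection (10000, 30)

No Select AUC: 0.61

Features Select AUC: 0.61

'''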
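To see why particular features were kept, the fitted selector's `scores_` can be paired with the column names. The sketch below uses synthetic data and invented column names (`f0` to `f5`). Fixing `random_state` through `functools.partial` is an assumption added here, because the mutual information estimate is otherwise randomized.

```python
from functools import partial

import pandas as pd
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, mutual_info_classif

# Synthetic stand-in data with hypothetical column names
X_arr, y_demo = make_classification(n_samples=300, n_features=6, n_informative=2,
                                    n_redundant=0, random_state=0)
X_demo = pd.DataFrame(X_arr, columns=[f"f{i}" for i in range(6)])

# Fix the estimator's seed so repeated runs give the same scores
score_func = partial(mutual_info_classif, random_state=0)
sel_demo = SelectKBest(score_func, k=2).fit(X_demo, y_demo)

# Rank every feature by its estimated mutual information with the target
ranking = pd.Series(sel_demo.scores_, index=X_demo.columns).sort_values(ascending=False)
print(ranking)

kept = X_demo.columns[sel_demo.get_support()].tolist()
print("Kept:", kept)
```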

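5. Recursive feature elimination with cross-validation: RFE (RFECV)

from sklearn.feature_selection import RFECV

from sklearn.ensemble import RandomForestClassifier

clf = RandomForestClassifier(n_estimators=100, max_depth=2, random_state=0, n_jobs=-1)

# Drop one feature per iteration (step=1) and choose the feature count by 2-fold cross-validation
sel = RFECV(clf, step=1, cv=2)

sel = sel.fit(train, target)

train_sel = sel.transform(train)

print('Training data shape before feature selection', train.shape)

print('Training data shape after feature selection', train_sel.shape)

feature_selection(train, train_sel, target)

'''

Training data shape before feature selection (10000, 153)

Training data shape after feature selection (10000, 28)

No Select AUC: 0.61

Features Select AUC: 0.61

'''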
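After fitting, `RFECV` exposes how many features it settled on and the order in which the others were eliminated. The following sketch inspects those attributes on synthetic data. Passing `scoring="roc_auc"` is an assumption made here so that elimination optimizes the same metric as the validation function. By default `RFECV` uses the estimator's own `score` method, which is accuracy for classifiers.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFECV

# Synthetic stand-in data (an assumption; not the Tmall feature table)
X_demo, y_demo = make_classification(n_samples=200, n_features=8, n_informative=3,
                                     n_redundant=0, random_state=0)

clf_demo = RandomForestClassifier(n_estimators=20, max_depth=2, random_state=0)
rfecv_demo = RFECV(clf_demo, step=1, cv=3, scoring="roc_auc").fit(X_demo, y_demo)

print("Number of features kept:", rfecv_demo.n_features_)
print("Keep-mask:", rfecv_demo.support_)
# Rank 1 marks a kept feature; larger ranks were eliminated earlier
print("Elimination ranking:", rfecv_demo.ranking_)
```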

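6. Select features with a model: SelectFromModel

from sklearn.feature_selection import SelectFromModel

from sklearn.linear_model import LogisticRegression

from sklearn.preprocessing import Normalizer

# Normalizer rescales each sample (row) to unit L2 norm
nor = Normalizer()

train_nor = nor.fit_transform(train)

# C is the inverse of the regularization strength: smaller C means stronger regularization
LR_2 = LogisticRegression(penalty='l2', C=5)

LR_2 = LR_2.fit(train_nor, target)

# Keep the features whose absolute coefficient reaches the selector's default threshold
model = SelectFromModel(LR_2, prefit=True)

train_sel = model.transform(train_nor)

print('Training data shape before feature selection', train.shape)

print('Training data shape after feature selection', train_sel.shape)

feature_selection(train, train_sel, target)

print('L2-regularized coefficients', LR_2.coef_[0][:10])

'''

Training data shape before feature selection (10000, 153)

Training data shape after feature selection (10000, 26)

No Select AUC: 0.61

Features Select AUC: 0.61

L2-regularized coefficients

array([-0.84219198, 0.0902854 , 0.99287625, 0.22331248, 1.67471924,

0.25893806, 0.28578223, 0.21691174, 0.00349268, 1.26646791])

'''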
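An L2 penalty shrinks coefficients but rarely makes them exactly zero, so the selector has to rely on a magnitude threshold. As a contrast, the sketch below swaps in an L1 penalty, which tends to zero out weak coefficients outright. The synthetic data, `StandardScaler`, the `liblinear` solver, and `C=0.05` are assumptions chosen for this example and are not part of the original pipeline.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data (an assumption; not the Tmall feature table)
X_demo, y_demo = make_classification(n_samples=300, n_features=20, n_informative=3,
                                     n_redundant=0, random_state=0)

# Put all columns on a comparable scale before penalizing coefficients
X_scaled = StandardScaler().fit_transform(X_demo)

# L1-penalized logistic regression; a small C means a strong penalty
lr_l1 = LogisticRegression(penalty="l1", C=0.05, solver="liblinear")
lr_l1 = lr_l1.fit(X_scaled, y_demo)

sel_demo = SelectFromModel(lr_l1, prefit=True)
X_sel = sel_demo.transform(X_scaled)

print("Non-zero coefficients:", int(np.sum(lr_l1.coef_[0] != 0)))
print("Shape after selection:", X_sel.shape)
```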

from sklearn.ensemble import ExtraTreesClassifier

from sklearn.feature_selection import SelectFromModel

clf = ExtraTreesClassifier(n_estimators=50)

clf = clf.fit(train, target)

# Keep the features whose importance reaches the selector's default threshold
model = SelectFromModel(clf, prefit=True)

# Transform the same data the trees were fitted on
train_sel = model.transform(train)

print('Training data shape before feature selection', train.shape)

print('Training data shape after feature selection', train_sel.shape)

feature_selection(train, train_sel, target)

print('Tree feature importances', clf.feature_importances_[:10])

'''

Training data shape before feature selection (10000, 153)

Training data shape after feature selection (10000, 56)

No Select AUC: 0.61

Features Select AUC: 0.61

Tree feature importances

array([0.01291039, 0.0071561 , 0.00673732, 0.0064681 , 0.00594268,

0.00701712, 0.00724747, 0.00779039, 0.00513536, 0. ])

'''
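Raw importance arrays are hard to read without feature names. The sketch below pairs importances with column names and shows how the `threshold` argument of `SelectFromModel` changes how many features survive. The data, the column names, and the choice of `threshold="median"` are assumptions made for illustration.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.feature_selection import SelectFromModel

# Synthetic stand-in data with hypothetical column names
X_arr, y_demo = make_classification(n_samples=300, n_features=8, n_informative=3,
                                    n_redundant=0, random_state=0)
X_demo = pd.DataFrame(X_arr, columns=[f"f{i}" for i in range(8)])

# Let the selector fit the trees itself; keep features at or above the median importance
sel_demo = SelectFromModel(ExtraTreesClassifier(n_estimators=50, random_state=0),
                           threshold="median").fit(X_demo, y_demo)

# Importances of the fitted forest, labeled and sorted from most to least important
importances = pd.Series(sel_demo.estimator_.feature_importances_,
                        index=X_demo.columns).sort_values(ascending=False)
print(importances)

kept = X_demo.columns[sel_demo.get_support()].tolist()
print("Kept:", kept)
```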
