Experiment requirements

Use Flink CDC to connect to MySQL and synchronize database changes to HBase in real time.

MySQL table creation statement

CREATE TABLE `ums_member` (
  `id` bigint(20) NOT NULL AUTO_INCREMENT,
  `member_level_id` bigint(20) DEFAULT NULL COMMENT 'Member level ID',
  `username` varchar(64) DEFAULT NULL COMMENT 'Username',
  `password` varchar(64) DEFAULT NULL COMMENT 'Password',
  `nickname` varchar(64) DEFAULT NULL COMMENT 'Nickname',
  `phone` varchar(64) DEFAULT NULL COMMENT 'Mobile phone number',
  `status` int(1) DEFAULT NULL COMMENT 'Account status: 0 -> disabled; 1 -> enabled',
  `create_time` datetime DEFAULT NULL COMMENT 'Registration time',
  `icon` varchar(500) DEFAULT NULL COMMENT 'Avatar',
  `gender` int(1) DEFAULT NULL COMMENT 'Gender: 0 -> unknown; 1 -> male; 2 -> female',
  `birthday` date DEFAULT NULL COMMENT 'Birthday',
  `city` varchar(64) DEFAULT NULL COMMENT 'City',
  `job` varchar(100) DEFAULT NULL COMMENT 'Occupation',
  `personalized_signature` varchar(200) DEFAULT NULL COMMENT 'Personal signature',
  `source_type` int(1) DEFAULT NULL COMMENT 'User source',
  `integration` int(11) DEFAULT NULL COMMENT 'Points',
  `growth` int(11) DEFAULT NULL COMMENT 'Growth value',
  `luckey_count` int(11) DEFAULT NULL COMMENT 'Remaining lottery draws',
  `history_integration` int(11) DEFAULT NULL COMMENT 'Historical points total',
  `modify_time` datetime DEFAULT NULL,
  PRIMARY KEY (`id`),
  UNIQUE KEY `idx_username` (`username`),
  UNIQUE KEY `idx_phone` (`phone`)
) ENGINE=InnoDB AUTO_INCREMENT=10 DEFAULT CHARSET=utf8 COMMENT='Member table';

Insert statement

INSERT INTO ums_member
  (member_level_id, username, password, nickname, phone, status, create_time, icon, gender, birthday, city, job, personalized_signature, source_type, integration, growth, luckey_count, history_integration, modify_time)
VALUES
  (null, 'alis', 'password123', 'User One', '01234567890', 1, '2023-07-10 12:34:56', 'avatar1.jpg', 1, '1990-01-01', 'New York', 'Engineer', 'I love coding!', 1, 100, 200, 3, 500, '2023-07-10 12:34:56'),
  (null, 'rose', 'pass567', 'User Two', '19876543210', 1, '2023-07-09 10:20:30', 'avatar2.jpg', 2, '1985-05-20', 'London', 'Teacher', 'Learning is fun!', 2, 150, 300, 2, 800, '2023-07-09 10:20:30'),
  (null, 'jack', 'testpwd', 'User Three', '34567891230', 1, '2023-07-08 08:08:08', 'avatar3.jpg', 1, '1995-12-15', 'Tokyo', 'Artist', 'Art is life!', 1, 200, 100, 1, 400, '2023-07-08 08:08:08'),
  (null, 'lisa', 'pass987', 'User Four', '12112223334', 1, '2023-07-07 09:45:30', 'avatar4.jpg', 2, '1992-09-10', 'Paris', 'Designer', 'Be creative!', 2, 120, 250, 4, 600, '2023-07-07 09:45:30'),
  (null, 'arthas', 'test123', 'User Five', '15556667778', 1, '2023-07-06 15:30:20', 'avatar5.jpg', 1, '1998-03-25', 'Berlin', 'Writer', 'Words have power!', 1, 180, 350, 5, 900, '2023-07-06 15:30:20');

HBase statements

hbase:011:0> create 'dim_user_info', 'f'

hbase:011:0> scan 'dim_user_info'

hbase:011:0> truncate 'dim_user_info'

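The first command creates the HBase table dim_user_info with the column family f; scan lists its contents and truncate empties it.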

IDEA project dependencies (pom.xml)

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>org.example</groupId>
    <artifactId>flink-dw</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <flink.version>1.13.6</flink.version>
    </properties>

    <dependencies>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-java_2.11</artifactId>
            <version>${flink.version}</version>
            <!-- <scope>provided</scope>-->
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-java</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-clients_2.11</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-table-api-java-bridge_2.11</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-table-planner-blink_2.11</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>com.ververica</groupId>
            <artifactId>flink-connector-mysql-cdc</artifactId>
            <version>2.2.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-shaded-hadoop-2-uber</artifactId>
            <version>2.7.5-10.0</version>
        </dependency>
        <dependency>
            <groupId>log4j</groupId>
            <artifactId>log4j</artifactId>
            <version>1.2.17</version>
        </dependency>
        <dependency>
            <groupId>mysql</groupId>
            <artifactId>mysql-connector-java</artifactId>
            <version>8.0.21</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-connector-hbase-2.2_2.11</artifactId>
            <version>${flink.version}</version>
        </dependency>
    </dependencies>

    <build>
        <extensions>
            <extension>
                <groupId>org.apache.maven.wagon</groupId>
                <artifactId>wagon-ssh</artifactId>
                <version>2.8</version>
            </extension>
        </extensions>
        <plugins>
            <plugin>
                <groupId>org.codehaus.mojo</groupId>
                <artifactId>wagon-maven-plugin</artifactId>
                <version>1.0</version>
                <configuration>
                    <!-- Location of the local jar to upload -->
                    <fromFile>target/${project.build.finalName}.jar</fromFile>
                    <!-- Remote destination the jar is copied to -->
                    <url>scp://root:root@hadoop10:/opt/app</url>
                </configuration>
            </plugin>
            <plugin>
                <artifactId>maven-assembly-plugin</artifactId>
                <configuration>
                    <descriptorRefs>
                        <descriptorRef>jar-with-dependencies</descriptorRef>
                    </descriptorRefs>
                </configuration>
                <executions>
                    <execution>
                        <id>make-assembly</id>
                        <phase>package</phase>
                        <goals>
                            <goal>single</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
        </plugins>
    </build>
</project>

Test code

package demo;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.table.api.bridge.java.StreamTableEnvironment;

public class UserInfo2Hbase {

    public static void main(String[] args) {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        StreamTableEnvironment tenv = StreamTableEnvironment.create(env);

        // Source: the MySQL table db1.ums_member, read through the mysql-cdc connector.
        // Only the columns needed downstream are declared.
        tenv.executeSql("CREATE TABLE ums_member (\n" +
                "  id BIGINT,\n" +
                "  username STRING,\n" +
                "  phone STRING,\n" +
                "  status INT,\n" +
                "  create_time TIMESTAMP(3),\n" +
                "  gender INT,\n" +
                "  birthday DATE,\n" +
                "  city STRING,\n" +
                "  job STRING,\n" +
                "  source_type INT,\n" +
                "  PRIMARY KEY (id) NOT ENFORCED\n" +
                ") WITH (\n" +
                "  'connector' = 'mysql-cdc',\n" +
                "  'hostname' = 'hadoop10',\n" +
                "  'port' = '3306',\n" +
                "  'username' = 'root',\n" +
                "  'password' = '0000',\n" +
                "  'database-name' = 'db1',\n" +
                // Uncomment to skip the initial snapshot and read only new binlog events.
                //"  'scan.startup.mode' = 'latest-offset',\n" +
                "  'scan.incremental.snapshot.enabled' = 'false',\n" +
                "  'table-name' = 'ums_member')").print();

        // Sink: the HBase table dim_user_info. The row key is username and all
        // other columns are stored in column family f.
        tenv.executeSql("CREATE TABLE dim_user_info (\n" +
                "  username STRING,\n" +
                "  f ROW<id INT, phone STRING, status INT, create_time TIMESTAMP(3), gender INT, birthday DATE, city STRING, job STRING, source_type INT>,\n" +
                "  PRIMARY KEY (username) NOT ENFORCED\n" +
                ") WITH (\n" +
                "  'connector' = 'hbase-2.2',\n" +
                "  'table-name' = 'dim_user_info',\n" +
                "  'zookeeper.quorum' = 'hadoop10:2181'\n" +
                ")");

        // Continuously copy changes from MySQL into HBase. id is BIGINT in the
        // source and INT in the sink, hence the CAST.
        tenv.executeSql("INSERT INTO dim_user_info\n" +
                "SELECT username,\n" +
                "  ROW(CAST(id AS INT), phone, status, create_time, gender, birthday, city, job, source_type)\n" +
                "FROM ums_member").print();

        // Debugging aid: print the CDC stream to the console instead.
        //tenv.executeSql("select * from ums_member").print();
    }
}

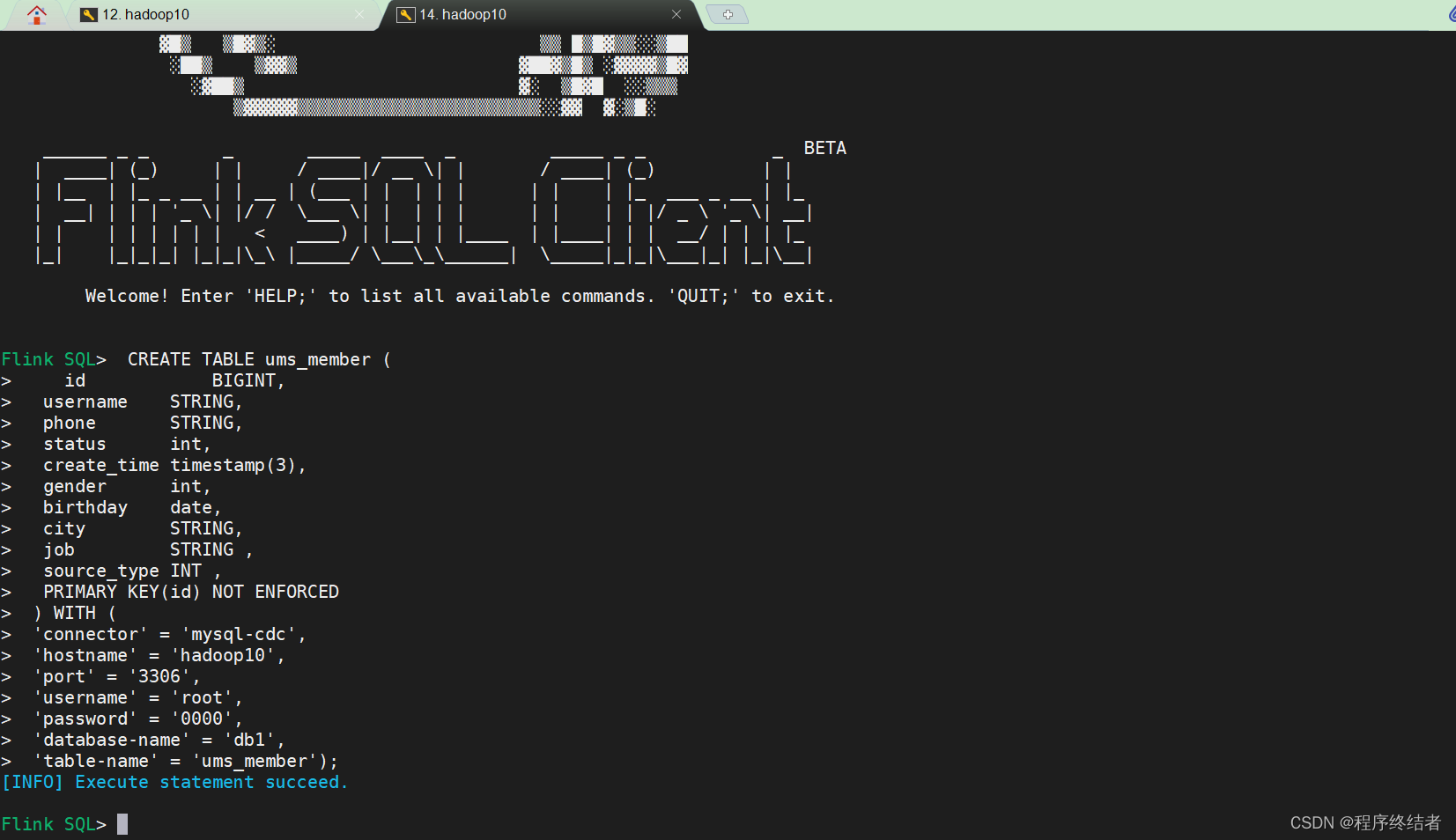

Running the experiment

Start the Flink SQL Client, which opens with the large squirrel banner:

[root@hadoop10 ~]# sql-client.sh

No default environment specified.

Searching for '/opt/installs/flink/conf/sql-client-defaults.yaml'...not found.

Command history file path: /root/.flink-sql-history

[ASCII-art squirrel and "Flink SQL Client BETA" banner]

Welcome! Enter 'HELP;' to list all available commands. 'QUIT;' to exit.

Flink SQL>

The following statement was entered at the SQL Client prompt:

CREATE TABLE ums_member (
  id BIGINT,
  username STRING,
  phone STRING,
  status INT,
  create_time TIMESTAMP(3),
  gender INT,
  birthday DATE,
  city STRING,
  job STRING,
  source_type INT,
  PRIMARY KEY (id) NOT ENFORCED
) WITH (
  'connector' = 'mysql-cdc',
  'hostname' = 'hadoop10',
  'port' = '3306',
  'username' = 'root',
  'password' = '0000',
  'database-name' = 'db1',
  'table-name' = 'ums_member');

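Result:

The query below, run in sql-client.sh, successfully reads the rows inserted into MySQL. From this point on, any change made to the data in MySQL shows up in this view through the Flink CDC connection.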

Flink SQL> select * from ums_member;

[INFO] Result retrieval cancelled.

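I ran into a few pitfalls at this step: dependency conflicts and mismatched dependency versions.

java.lang.NoSuchMethodError: com.mysql.cj.CharsetMapping.getJavaEncodingForMysqlCharset(Ljava/lang/String;)Ljava/lang/String;

This is only one of the errors. I did not capture screenshots of the others, but most of them were dependency problems.

The way to troubleshoot is to reconcile the dependencies declared in Maven with the jars in flink/lib. I also consulted several articles while doing this.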
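A NoSuchMethodError generally means that the class found at run time comes from a different library version than the one the calling code was compiled against. As a rough aid for the check described above, the sketch below prints the jar from which a class was actually loaded. The class name WhichJar and its locate helper are hypothetical names introduced for illustration; the two MySQL class names in main are only examples taken from the error message and the stack traces.

```java
import java.security.CodeSource;

public class WhichJar {

    /** Returns the location a class was loaded from, or a marker if it cannot be determined. */
    static String locate(String className) {
        try {
            // Load without initializing, so static initializers do not run.
            Class<?> clazz = Class.forName(className, false, WhichJar.class.getClassLoader());
            CodeSource source = clazz.getProtectionDomain().getCodeSource();
            // Classes loaded by the JDK bootstrap loader have no code source.
            return source == null ? "<bootstrap>" : String.valueOf(source.getLocation());
        } catch (ClassNotFoundException | LinkageError e) {
            return "<not on classpath>";
        }
    }

    public static void main(String[] args) {
        // Pass class names as arguments, or fall back to the MySQL driver classes.
        String[] names = args.length > 0 ? args : new String[] {
            "com.mysql.cj.CharsetMapping",
            "com.mysql.cj.jdbc.Driver"
        };
        for (String name : names) {
            System.out.println(name + " -> " + locate(name));
        }
    }
}
```

Run it with the same classpath as the failing job (for example from the IDEA project, or with the jars from flink/lib). If the printed jar is not the MySQL driver version you expect, that points to the conflicting dependency.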

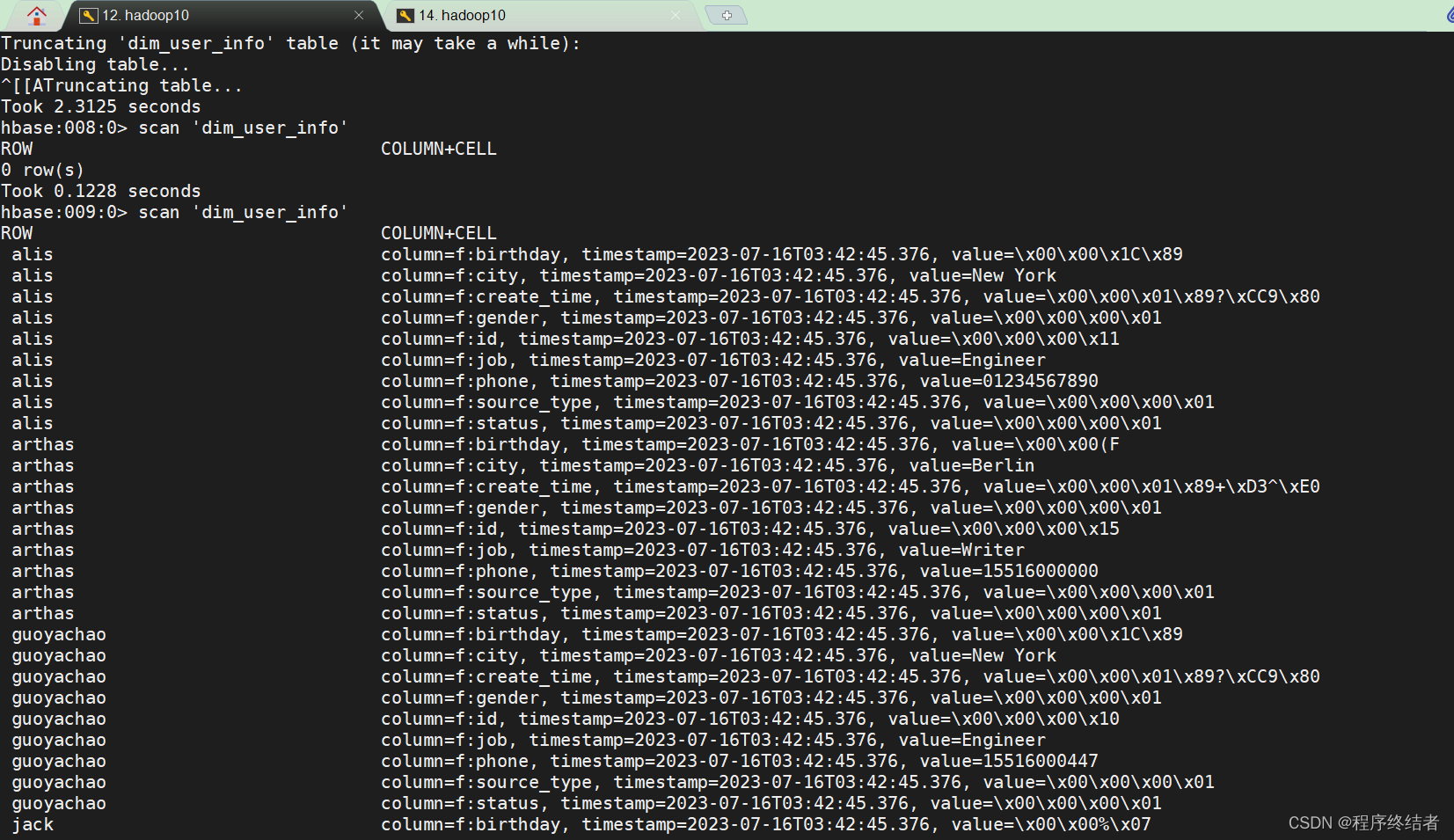

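The black terminal window below shows the output of scan in the HBase shell, where you can see that the HBase table is updated as well. First start the test code above in IDEA. Then truncate and scan the table and modify the data in MySQL to see the Flink CDC synchronization in action.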
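To make the synchronization behaviour easier to picture, here is a simplified, Flink-free sketch of how a changelog stream can be folded into a key-value table. It assumes the usual four changelog row kinds (+I, -U, +U, -D) and treats +I/+U as a put and -U/-D as a delete on the row key. The class ChangelogToKeyValueSketch, its Change type and the sample events are hypothetical, and the real job has additional operators between source and sink, so treat this as a mental model rather than the connector's implementation.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class ChangelogToKeyValueSketch {

    /** The four changelog row kinds: +I, -U, +U, -D. */
    enum Kind { INSERT, UPDATE_BEFORE, UPDATE_AFTER, DELETE }

    /** One change event: row kind, row key (username) and a single value (city). */
    static final class Change {
        final Kind kind;
        final String rowKey;
        final String city;

        Change(Kind kind, String rowKey, String city) {
            this.kind = kind;
            this.rowKey = rowKey;
            this.city = city;
        }
    }

    /** Applies the events in order: +I/+U become a put, -U/-D become a delete. */
    static Map<String, String> apply(List<Change> changes) {
        Map<String, String> table = new TreeMap<>();
        for (Change c : changes) {
            switch (c.kind) {
                case INSERT:
                case UPDATE_AFTER:
                    table.put(c.rowKey, c.city);
                    break;
                default:
                    table.remove(c.rowKey);
            }
        }
        return table;
    }

    public static void main(String[] args) {
        List<Change> changes = new ArrayList<>();
        changes.add(new Change(Kind.INSERT, "alis", "New York"));
        changes.add(new Change(Kind.INSERT, "rose", "London"));
        // UPDATE ums_member SET city = 'Boston' WHERE username = 'alis'
        changes.add(new Change(Kind.UPDATE_BEFORE, "alis", "New York"));
        changes.add(new Change(Kind.UPDATE_AFTER, "alis", "Boston"));
        // DELETE FROM ums_member WHERE username = 'rose'
        changes.add(new Change(Kind.DELETE, "rose", "London"));
        System.out.println(apply(changes)); // prints {alis=Boston}
    }
}
```

Because the sink table is keyed on username, changing a username in MySQL would appear here as a delete of the old key followed by a put of the new one.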

One of the errors reported in IDEA:

[ERROR] 2023-07-21 17:26:22,480(55973) --> [Thread-48] org.apache.flink.runtime.source.coordinator.SourceCoordinator.start(SourceCoordinator.java:132): Failed to create Source Enumerator for source Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username])

org.apache.flink.util.FlinkRuntimeException: com.zaxxer.hikari.pool.HikariPool$PoolInitializationException: Failed to initialize pool: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at com.ververica.cdc.connectors.mysql.debezium.DebeziumUtils.openJdbcConnection(DebeziumUtils.java:65)

at com.ververica.cdc.connectors.mysql.MySqlValidator.validate(MySqlValidator.java:68)

at com.ververica.cdc.connectors.mysql.source.MySqlSource.createEnumerator(MySqlSource.java:174)

at org.apache.flink.runtime.source.coordinator.SourceCoordinator.start(SourceCoordinator.java:129)

at org.apache.flink.runtime.operators.coordination.RecreateOnResetOperatorCoordinator$DeferrableCoordinator.resetAndStart(RecreateOnResetOperatorCoordinator.java:381)

at org.apache.flink.runtime.operators.coordination.RecreateOnResetOperatorCoordinator.lambda$resetToCheckpoint$6(RecreateOnResetOperatorCoordinator.java:136)

at java.util.concurrent.CompletableFuture.uniRun(CompletableFuture.java:719)

at java.util.concurrent.CompletableFuture$UniRun.tryFire(CompletableFuture.java:701)

at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:488)

at java.util.concurrent.CompletableFuture.complete(CompletableFuture.java:1975)

at org.apache.flink.runtime.operators.coordination.ComponentClosingUtils.lambda$closeAsyncWithTimeout$0(ComponentClosingUtils.java:71)

at java.lang.Thread.run(Thread.java:750)

Caused by: com.zaxxer.hikari.pool.HikariPool$PoolInitializationException: Failed to initialize pool: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at com.zaxxer.hikari.pool.HikariPool.throwPoolInitializationException(HikariPool.java:596)

at com.zaxxer.hikari.pool.HikariPool.checkFailFast(HikariPool.java:582)

at com.zaxxer.hikari.pool.HikariPool.<init>(HikariPool.java:115)

at com.zaxxer.hikari.HikariDataSource.<init>(HikariDataSource.java:81)

at com.ververica.cdc.connectors.mysql.source.connection.PooledDataSourceFactory.createPooledDataSource(PooledDataSourceFactory.java:63)

at com.ververica.cdc.connectors.mysql.source.connection.JdbcConnectionPools.getOrCreateConnectionPool(JdbcConnectionPools.java:51)

at com.ververica.cdc.connectors.mysql.source.connection.JdbcConnectionFactory.connect(JdbcConnectionFactory.java:53)

at io.debezium.jdbc.JdbcConnection.connection(JdbcConnection.java:872)

at io.debezium.jdbc.JdbcConnection.connection(JdbcConnection.java:867)

at io.debezium.jdbc.JdbcConnection.connect(JdbcConnection.java:413)

at com.ververica.cdc.connectors.mysql.debezium.DebeziumUtils.openJdbcConnection(DebeziumUtils.java:62)

... 11 more

Caused by: com.mysql.cj.jdbc.exceptions.CommunicationsException: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at com.mysql.cj.jdbc.exceptions.SQLError.createCommunicationsException(SQLError.java:174)

at com.mysql.cj.jdbc.exceptions.SQLExceptionsMapping.translateException(SQLExceptionsMapping.java:64)

at com.mysql.cj.jdbc.ConnectionImpl.createNewIO(ConnectionImpl.java:836)

at com.mysql.cj.jdbc.ConnectionImpl.<init>(ConnectionImpl.java:456)

at com.mysql.cj.jdbc.ConnectionImpl.getInstance(ConnectionImpl.java:246)

at com.mysql.cj.jdbc.NonRegisteringDriver.connect(NonRegisteringDriver.java:197)

at com.zaxxer.hikari.util.DriverDataSource.getConnection(DriverDataSource.java:138)

at com.zaxxer.hikari.pool.PoolBase.newConnection(PoolBase.java:364)

at com.zaxxer.hikari.pool.PoolBase.newPoolEntry(PoolBase.java:206)

at com.zaxxer.hikari.pool.HikariPool.createPoolEntry(HikariPool.java:476)

at com.zaxxer.hikari.pool.HikariPool.checkFailFast(HikariPool.java:561)

... 20 more

Caused by: com.mysql.cj.exceptions.CJCommunicationsException: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at com.mysql.cj.exceptions.ExceptionFactory.createException(ExceptionFactory.java:61)

at com.mysql.cj.exceptions.ExceptionFactory.createException(ExceptionFactory.java:105)

at com.mysql.cj.exceptions.ExceptionFactory.createException(ExceptionFactory.java:151)

at com.mysql.cj.exceptions.ExceptionFactory.createCommunicationsException(ExceptionFactory.java:167)

at com.mysql.cj.protocol.a.NativeProtocol.negotiateSSLConnection(NativeProtocol.java:334)

at com.mysql.cj.protocol.a.NativeAuthenticationProvider.connect(NativeAuthenticationProvider.java:164)

at com.mysql.cj.protocol.a.NativeProtocol.connect(NativeProtocol.java:1342)

at com.mysql.cj.NativeSession.connect(NativeSession.java:157)

at com.mysql.cj.jdbc.ConnectionImpl.connectOneTryOnly(ConnectionImpl.java:956)

at com.mysql.cj.jdbc.ConnectionImpl.createNewIO(ConnectionImpl.java:826)

... 28 more

Caused by: javax.net.ssl.SSLHandshakeException: No appropriate protocol (protocol is disabled or cipher suites are inappropriate)

at sun.security.ssl.HandshakeContext.<init>(HandshakeContext.java:171)

at sun.security.ssl.ClientHandshakeContext.<init>(ClientHandshakeContext.java:106)

at sun.security.ssl.TransportContext.kickstart(TransportContext.java:238)

at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:410)

at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:389)

at com.mysql.cj.protocol.ExportControlled.performTlsHandshake(ExportControlled.java:336)

at com.mysql.cj.protocol.StandardSocketFactory.performTlsHandshake(StandardSocketFactory.java:188)

at com.mysql.cj.protocol.a.NativeSocketConnection.performTlsHandshake(NativeSocketConnection.java:99)

at com.mysql.cj.protocol.a.NativeProtocol.negotiateSSLConnection(NativeProtocol.java:325)

... 33 more
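The root cause at the bottom of this chain is the SSLHandshakeException "No appropriate protocol". A common reason for it is that the JDK has disabled the older TLS versions that the MySQL server offers, so the driver's TLS handshake fails. One workaround that is often used in test environments is to turn SSL off for the JDBC connection. The sketch below builds a source DDL with one extra option, assuming the MySQL CDC connector version in use supports the `jdbc.properties.*` passthrough options (check the documentation of your version). The class MysqlCdcDdlSketch and the method sourceDdl are hypothetical names, and the column list is shortened for brevity.

```java
public class MysqlCdcDdlSketch {

    /** Builds the source DDL; when disableSsl is true an extra JDBC property is appended. */
    static String sourceDdl(boolean disableSsl) {
        StringBuilder ddl = new StringBuilder()
            .append("CREATE TABLE ums_member (\n")
            .append("  id BIGINT,\n")
            .append("  username STRING,\n")
            .append("  PRIMARY KEY (id) NOT ENFORCED\n")
            .append(") WITH (\n")
            .append("  'connector' = 'mysql-cdc',\n")
            .append("  'hostname' = 'hadoop10',\n")
            .append("  'port' = '3306',\n")
            .append("  'username' = 'root',\n")
            .append("  'password' = '0000',\n")
            .append("  'database-name' = 'db1',\n");
        if (disableSsl) {
            // Assumption: the connector forwards this as useSSL=false to the MySQL JDBC driver.
            ddl.append("  'jdbc.properties.useSSL' = 'false',\n");
        }
        return ddl.append("  'table-name' = 'ums_member'\n)").toString();
    }

    public static void main(String[] args) {
        // The resulting string would be passed to tenv.executeSql(...) in the test code.
        System.out.println(sourceDdl(true));
    }
}
```

Disabling SSL is only reasonable on a trusted test network. Upgrading the MySQL server or its TLS configuration is the alternative when encryption is required.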

[WARN ] 2023-07-21 17:26:22,484(55977) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (3/8)#7] org.apache.hadoop.hbase.client.ConnectionImplementation.retrieveClusterId(ConnectionImplementation.java:576): Retrieve cluster id failed

java.lang.InterruptedException

at java.util.concurrent.CompletableFuture.reportGet(CompletableFuture.java:347)

at java.util.concurrent.CompletableFuture.get(CompletableFuture.java:1908)

at org.apache.hadoop.hbase.client.ConnectionImplementation.retrieveClusterId(ConnectionImplementation.java:574)

at org.apache.hadoop.hbase.client.ConnectionImplementation.<init>(ConnectionImplementation.java:307)

at sun.reflect.GeneratedConstructorAccessor40.newInstance(Unknown Source)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.hadoop.hbase.client.ConnectionFactory.lambda$createConnection$0(ConnectionFactory.java:230)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1754)

at org.apache.hadoop.hbase.security.User$SecureHadoopUser.runAs(User.java:347)

at org.apache.hadoop.hbase.client.ConnectionFactory.createConnection(ConnectionFactory.java:228)

at org.apache.hadoop.hbase.client.ConnectionFactory.createConnection(ConnectionFactory.java:128)

at org.apache.flink.connector.hbase.sink.HBaseSinkFunction.open(HBaseSinkFunction.java:116)

at org.apache.flink.api.common.functions.util.FunctionUtils.openFunction(FunctionUtils.java:34)

at org.apache.flink.streaming.api.operators.AbstractUdfStreamOperator.open(AbstractUdfStreamOperator.java:102)

at org.apache.flink.table.runtime.operators.sink.SinkOperator.open(SinkOperator.java:58)

at org.apache.flink.streaming.runtime.tasks.OperatorChain.initializeStateAndOpenOperators(OperatorChain.java:442)

at org.apache.flink.streaming.runtime.tasks.StreamTask.restoreGates(StreamTask.java:585)

at org.apache.flink.streaming.runtime.tasks.StreamTaskActionExecutor$1.call(StreamTaskActionExecutor.java:55)

at org.apache.flink.streaming.runtime.tasks.StreamTask.executeRestore(StreamTask.java:565)

at org.apache.flink.streaming.runtime.tasks.StreamTask.runWithCleanUpOnFail(StreamTask.java:650)

at org.apache.flink.streaming.runtime.tasks.StreamTask.restore(StreamTask.java:540)

at org.apache.flink.runtime.taskmanager.Task.doRun(Task.java:759)

at org.apache.flink.runtime.taskmanager.Task.run(Task.java:566)

at java.lang.Thread.run(Thread.java:750)

... (the same "Retrieve cluster id failed" warning with an identical java.lang.InterruptedException stack trace was logged for sink subtasks 5/8, 4/8 and 2/8)

[WARN ] 2023-07-21 17:26:22,492(55985) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (6/8)#7] org.apache.hadoop.hbase.client.ConnectionImplementation.retrieveClusterId(ConnectionImplementation.java:576): Retrieve cluster id failed

[WARN ] 2023-07-21 17:26:22,496(55989) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (8/8)#7] org.apache.hadoop.hbase.client.ConnectionImplementation.retrieveClusterId(ConnectionImplementation.java:576): Retrieve cluster id failed

[WARN ] 2023-07-21 17:26:22,496(55989) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (1/8)#7] org.apache.hadoop.hbase.client.ConnectionImplementation.retrieveClusterId(ConnectionImplementation.java:576): Retrieve cluster id failed

[WARN ] 2023-07-21 17:26:22,497(55990) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (7/8)#7] org.apache.hadoop.hbase.client.ConnectionImplementation.retrieveClusterId(ConnectionImplementation.java:576): Retrieve cluster id failed

[WARN ] 2023-07-21 17:26:23,501(56994) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (5/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,501(56994) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (1/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,502(56995) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (5/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,502(56995) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (1/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,502(56995) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (2/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,503(56996) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (2/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,504(56997) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (4/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,505(56998) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (4/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,506(56999) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (6/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,506(56999) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (6/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,507(57000) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (3/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,508(57001) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (3/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,511(57004) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (1/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,511(57004) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (2/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,514(57007) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (6/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,519(57012) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (7/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,520(57013) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (7/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,523(57016) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (8/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,523(57016) --> [Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username]) (8/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,529(57022) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (3/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,531(57024) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (8/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,533(57026) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (4/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,536(57029) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (5/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,536(57029) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (7/8)#8] org.apache.flink.runtime.metrics.groups.TaskMetricGroup.getOrAddOperator(TaskMetricGroup.java:154): The operator name Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) exceeded the 80 characters length limit and was truncated.

[WARN ] 2023-07-21 17:26:23,558(57051) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (1/8)#8] org.apache.flink.connector.hbase.util.HBaseConfigurationUtil.getHBaseConfiguration(HBaseConfigurationUtil.java:79): Could not find HBase configuration via any of the supported methods (Flink configuration, environment variables).

[WARN ] 2023-07-21 17:26:23,558(57051) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (2/8)#8] org.apache.flink.connector.hbase.util.HBaseConfigurationUtil.getHBaseConfiguration(HBaseConfigurationUtil.java:79): Could not find HBase configuration via any of the supported methods (Flink configuration, environment variables).

[WARN ] 2023-07-21 17:26:23,559(57052) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (6/8)#8] org.apache.flink.connector.hbase.util.HBaseConfigurationUtil.getHBaseConfiguration(HBaseConfigurationUtil.java:79): Could not find HBase configuration via any of the supported methods (Flink configuration, environment variables).

[WARN ] 2023-07-21 17:26:23,602(57095) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (8/8)#8] org.apache.flink.connector.hbase.util.HBaseConfigurationUtil.getHBaseConfiguration(HBaseConfigurationUtil.java:79): Could not find HBase configuration via any of the supported methods (Flink configuration, environment variables).

[WARN ] 2023-07-21 17:26:23,626(57119) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (3/8)#8] org.apache.flink.connector.hbase.util.HBaseConfigurationUtil.getHBaseConfiguration(HBaseConfigurationUtil.java:79): Could not find HBase configuration via any of the supported methods (Flink configuration, environment variables).

[WARN ] 2023-07-21 17:26:23,627(57120) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (4/8)#8] org.apache.flink.connector.hbase.util.HBaseConfigurationUtil.getHBaseConfiguration(HBaseConfigurationUtil.java:79): Could not find HBase configuration via any of the supported methods (Flink configuration, environment variables).

[WARN ] 2023-07-21 17:26:23,627(57120) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (7/8)#8] org.apache.flink.connector.hbase.util.HBaseConfigurationUtil.getHBaseConfiguration(HBaseConfigurationUtil.java:79): Could not find HBase configuration via any of the supported methods (Flink configuration, environment variables).

[WARN ] 2023-07-21 17:26:23,629(57122) --> [SinkMaterializer -> Sink: Sink(table=[default_catalog.default_database.dim_user_info], fields=[username, EXPR$1], upsertMaterialize=[true]) (5/8)#8] org.apache.flink.connector.hbase.util.HBaseConfigurationUtil.getHBaseConfiguration(HBaseConfigurationUtil.java:79): Could not find HBase configuration via any of the supported methods (Flink configuration, environment variables).

[ERROR] 2023-07-21 17:26:24,496(57989) --> [Thread-57] com.zaxxer.hikari.pool.HikariPool.throwPoolInitializationException(HikariPool.java:594): connection-pool-hadoop10:3306 - Exception during pool initialization.

com.mysql.cj.jdbc.exceptions.CommunicationsException: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at com.mysql.cj.jdbc.exceptions.SQLError.createCommunicationsException(SQLError.java:174)

at com.mysql.cj.jdbc.exceptions.SQLExceptionsMapping.translateException(SQLExceptionsMapping.java:64)

at com.mysql.cj.jdbc.ConnectionImpl.createNewIO(ConnectionImpl.java:836)

at com.mysql.cj.jdbc.ConnectionImpl.<init>(ConnectionImpl.java:456)

at com.mysql.cj.jdbc.ConnectionImpl.getInstance(ConnectionImpl.java:246)

at com.mysql.cj.jdbc.NonRegisteringDriver.connect(NonRegisteringDriver.java:197)

at com.zaxxer.hikari.util.DriverDataSource.getConnection(DriverDataSource.java:138)

at com.zaxxer.hikari.pool.PoolBase.newConnection(PoolBase.java:364)

at com.zaxxer.hikari.pool.PoolBase.newPoolEntry(PoolBase.java:206)

at com.zaxxer.hikari.pool.HikariPool.createPoolEntry(HikariPool.java:476)

at com.zaxxer.hikari.pool.HikariPool.checkFailFast(HikariPool.java:561)

at com.zaxxer.hikari.pool.HikariPool.<init>(HikariPool.java:115)

at com.zaxxer.hikari.HikariDataSource.<init>(HikariDataSource.java:81)

at com.ververica.cdc.connectors.mysql.source.connection.PooledDataSourceFactory.createPooledDataSource(PooledDataSourceFactory.java:63)

at com.ververica.cdc.connectors.mysql.source.connection.JdbcConnectionPools.getOrCreateConnectionPool(JdbcConnectionPools.java:51)

at com.ververica.cdc.connectors.mysql.source.connection.JdbcConnectionFactory.connect(JdbcConnectionFactory.java:53)

at io.debezium.jdbc.JdbcConnection.connection(JdbcConnection.java:872)

at io.debezium.jdbc.JdbcConnection.connection(JdbcConnection.java:867)

at io.debezium.jdbc.JdbcConnection.connect(JdbcConnection.java:413)

at com.ververica.cdc.connectors.mysql.debezium.DebeziumUtils.openJdbcConnection(DebeziumUtils.java:62)

at com.ververica.cdc.connectors.mysql.MySqlValidator.validate(MySqlValidator.java:68)

at com.ververica.cdc.connectors.mysql.source.MySqlSource.createEnumerator(MySqlSource.java:174)

at org.apache.flink.runtime.source.coordinator.SourceCoordinator.start(SourceCoordinator.java:129)

at org.apache.flink.runtime.operators.coordination.RecreateOnResetOperatorCoordinator$DeferrableCoordinator.resetAndStart(RecreateOnResetOperatorCoordinator.java:381)

at org.apache.flink.runtime.operators.coordination.RecreateOnResetOperatorCoordinator.lambda$resetToCheckpoint$6(RecreateOnResetOperatorCoordinator.java:136)

at java.util.concurrent.CompletableFuture.uniRun(CompletableFuture.java:719)

at java.util.concurrent.CompletableFuture$UniRun.tryFire(CompletableFuture.java:701)

at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:488)

at java.util.concurrent.CompletableFuture.complete(CompletableFuture.java:1975)

at org.apache.flink.runtime.operators.coordination.ComponentClosingUtils.lambda$closeAsyncWithTimeout$0(ComponentClosingUtils.java:71)

at java.lang.Thread.run(Thread.java:750)

Caused by: com.mysql.cj.exceptions.CJCommunicationsException: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at com.mysql.cj.exceptions.ExceptionFactory.createException(ExceptionFactory.java:61)

at com.mysql.cj.exceptions.ExceptionFactory.createException(ExceptionFactory.java:105)

at com.mysql.cj.exceptions.ExceptionFactory.createException(ExceptionFactory.java:151)

at com.mysql.cj.exceptions.ExceptionFactory.createCommunicationsException(ExceptionFactory.java:167)

at com.mysql.cj.protocol.a.NativeProtocol.negotiateSSLConnection(NativeProtocol.java:334)

at com.mysql.cj.protocol.a.NativeAuthenticationProvider.connect(NativeAuthenticationProvider.java:164)

at com.mysql.cj.protocol.a.NativeProtocol.connect(NativeProtocol.java:1342)

at com.mysql.cj.NativeSession.connect(NativeSession.java:157)

at com.mysql.cj.jdbc.ConnectionImpl.connectOneTryOnly(ConnectionImpl.java:956)

at com.mysql.cj.jdbc.ConnectionImpl.createNewIO(ConnectionImpl.java:826)

... 28 more

Caused by: javax.net.ssl.SSLHandshakeException: No appropriate protocol (protocol is disabled or cipher suites are inappropriate)

at sun.security.ssl.HandshakeContext.<init>(HandshakeContext.java:171)

at sun.security.ssl.ClientHandshakeContext.<init>(ClientHandshakeContext.java:106)

at sun.security.ssl.TransportContext.kickstart(TransportContext.java:238)

at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:410)

at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:389)

at com.mysql.cj.protocol.ExportControlled.performTlsHandshake(ExportControlled.java:336)

at com.mysql.cj.protocol.StandardSocketFactory.performTlsHandshake(StandardSocketFactory.java:188)

at com.mysql.cj.protocol.a.NativeSocketConnection.performTlsHandshake(NativeSocketConnection.java:99)

at com.mysql.cj.protocol.a.NativeProtocol.negotiateSSLConnection(NativeProtocol.java:325)

... 33 more

[ERROR] 2023-07-21 17:26:24,496(57989) --> [Thread-57] com.ververica.cdc.connectors.mysql.debezium.DebeziumUtils.openJdbcConnection(DebeziumUtils.java:64): Failed to open MySQL connection

com.zaxxer.hikari.pool.HikariPool$PoolInitializationException: Failed to initialize pool: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at com.zaxxer.hikari.pool.HikariPool.throwPoolInitializationException(HikariPool.java:596)

at com.zaxxer.hikari.pool.HikariPool.checkFailFast(HikariPool.java:582)

at com.zaxxer.hikari.pool.HikariPool.<init>(HikariPool.java:115)

at com.zaxxer.hikari.HikariDataSource.<init>(HikariDataSource.java:81)

at com.ververica.cdc.connectors.mysql.source.connection.PooledDataSourceFactory.createPooledDataSource(PooledDataSourceFactory.java:63)

at com.ververica.cdc.connectors.mysql.source.connection.JdbcConnectionPools.getOrCreateConnectionPool(JdbcConnectionPools.java:51)

at com.ververica.cdc.connectors.mysql.source.connection.JdbcConnectionFactory.connect(JdbcConnectionFactory.java:53)

at io.debezium.jdbc.JdbcConnection.connection(JdbcConnection.java:872)

at io.debezium.jdbc.JdbcConnection.connection(JdbcConnection.java:867)

at io.debezium.jdbc.JdbcConnection.connect(JdbcConnection.java:413)

at com.ververica.cdc.connectors.mysql.debezium.DebeziumUtils.openJdbcConnection(DebeziumUtils.java:62)

at com.ververica.cdc.connectors.mysql.MySqlValidator.validate(MySqlValidator.java:68)

at com.ververica.cdc.connectors.mysql.source.MySqlSource.createEnumerator(MySqlSource.java:174)

at org.apache.flink.runtime.source.coordinator.SourceCoordinator.start(SourceCoordinator.java:129)

at org.apache.flink.runtime.operators.coordination.RecreateOnResetOperatorCoordinator$DeferrableCoordinator.resetAndStart(RecreateOnResetOperatorCoordinator.java:381)

at org.apache.flink.runtime.operators.coordination.RecreateOnResetOperatorCoordinator.lambda$resetToCheckpoint$6(RecreateOnResetOperatorCoordinator.java:136)

at java.util.concurrent.CompletableFuture.uniRun(CompletableFuture.java:719)

at java.util.concurrent.CompletableFuture$UniRun.tryFire(CompletableFuture.java:701)

at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:488)

at java.util.concurrent.CompletableFuture.complete(CompletableFuture.java:1975)

at org.apache.flink.runtime.operators.coordination.ComponentClosingUtils.lambda$closeAsyncWithTimeout$0(ComponentClosingUtils.java:71)

at java.lang.Thread.run(Thread.java:750)

Caused by: com.mysql.cj.jdbc.exceptions.CommunicationsException: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at com.mysql.cj.jdbc.exceptions.SQLError.createCommunicationsException(SQLError.java:174)

at com.mysql.cj.jdbc.exceptions.SQLExceptionsMapping.translateException(SQLExceptionsMapping.java:64)

at com.mysql.cj.jdbc.ConnectionImpl.createNewIO(ConnectionImpl.java:836)

at com.mysql.cj.jdbc.ConnectionImpl.<init>(ConnectionImpl.java:456)

at com.mysql.cj.jdbc.ConnectionImpl.getInstance(ConnectionImpl.java:246)

at com.mysql.cj.jdbc.NonRegisteringDriver.connect(NonRegisteringDriver.java:197)

at com.zaxxer.hikari.util.DriverDataSource.getConnection(DriverDataSource.java:138)

at com.zaxxer.hikari.pool.PoolBase.newConnection(PoolBase.java:364)

at com.zaxxer.hikari.pool.PoolBase.newPoolEntry(PoolBase.java:206)

at com.zaxxer.hikari.pool.HikariPool.createPoolEntry(HikariPool.java:476)

at com.zaxxer.hikari.pool.HikariPool.checkFailFast(HikariPool.java:561)

... 20 more

Caused by: com.mysql.cj.exceptions.CJCommunicationsException: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at com.mysql.cj.exceptions.ExceptionFactory.createException(ExceptionFactory.java:61)

at com.mysql.cj.exceptions.ExceptionFactory.createException(ExceptionFactory.java:105)

at com.mysql.cj.exceptions.ExceptionFactory.createException(ExceptionFactory.java:151)

at com.mysql.cj.exceptions.ExceptionFactory.createCommunicationsException(ExceptionFactory.java:167)

at com.mysql.cj.protocol.a.NativeProtocol.negotiateSSLConnection(NativeProtocol.java:334)

at com.mysql.cj.protocol.a.NativeAuthenticationProvider.connect(NativeAuthenticationProvider.java:164)

at com.mysql.cj.protocol.a.NativeProtocol.connect(NativeProtocol.java:1342)

at com.mysql.cj.NativeSession.connect(NativeSession.java:157)

at com.mysql.cj.jdbc.ConnectionImpl.connectOneTryOnly(ConnectionImpl.java:956)

at com.mysql.cj.jdbc.ConnectionImpl.createNewIO(ConnectionImpl.java:826)

... 28 more

Caused by: javax.net.ssl.SSLHandshakeException: No appropriate protocol (protocol is disabled or cipher suites are inappropriate)

at sun.security.ssl.HandshakeContext.<init>(HandshakeContext.java:171)

at sun.security.ssl.ClientHandshakeContext.<init>(ClientHandshakeContext.java:106)

at sun.security.ssl.TransportContext.kickstart(TransportContext.java:238)

at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:410)

at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:389)

at com.mysql.cj.protocol.ExportControlled.performTlsHandshake(ExportControlled.java:336)

at com.mysql.cj.protocol.StandardSocketFactory.performTlsHandshake(StandardSocketFactory.java:188)

at com.mysql.cj.protocol.a.NativeSocketConnection.performTlsHandshake(NativeSocketConnection.java:99)

at com.mysql.cj.protocol.a.NativeProtocol.negotiateSSLConnection(NativeProtocol.java:325)

... 33 more

[ERROR] 2023-07-21 17:26:24,497(57990) --> [Thread-57] org.apache.flink.runtime.source.coordinator.SourceCoordinator.start(SourceCoordinator.java:132): Failed to create Source Enumerator for source Source: TableSourceScan(table=[[default_catalog, default_database, ums_member]], fields=[id, username, phone, status, create_time, gender, birthday, city, job, source_type]) -> Calc(select=[username, ROW(CAST(id), phone, status, create_time, gender, birthday, city, job, source_type) AS EXPR$1]) -> NotNullEnforcer(fields=[username])

org.apache.flink.util.FlinkRuntimeException: com.zaxxer.hikari.pool.HikariPool$PoolInitializationException: Failed to initialize pool: Communications link failure

The last packet sent successfully to the server was 0 milliseconds ago. The driver has not received any packets from the server.

at com.ververica.cdc.connectors.mysql.debezium.DebeziumUtils.openJdbcConnection(DebeziumUtils.java:65)