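The setup consists of three parts: JDK, ZooKeeper, and Kafka.

JDK setup

Installing and configuring the JDK is well covered elsewhere, so it is not repeated here; search online if you need a walkthrough.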
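Before moving on, it can help to confirm which JDK the machine actually uses. The following is a minimal sketch, not part of the original setup: the class name JdkCheck and the helper majorVersion are made up for illustration, and the remark about JAVA_HOME reflects how the ZooKeeper Windows scripts are understood to locate Java.

```java
// Sketch: report the JDK that the current `java` command resolves to.
// JdkCheck and majorVersion are hypothetical names used only for this example.
class JdkCheck {
    /** Extracts the major version from strings such as "1.8.0_292", "11.0.2" or "17". */
    static int majorVersion(String version) {
        String[] parts = version.split("[._-]");
        int first = Integer.parseInt(parts[0]);
        // Before Java 9 the scheme was 1.x, so "1.8.0_292" means Java 8.
        if (first == 1 && parts.length > 1) {
            return Integer.parseInt(parts[1]);
        }
        return first;
    }

    public static void main(String[] args) {
        String version = System.getProperty("java.version");
        System.out.println("java.version = " + version + " (major " + majorVersion(version) + ")");
        // Assumption: the ZooKeeper Windows scripts find Java through JAVA_HOME,
        // so a null value here is worth fixing before continuing.
        System.out.println("JAVA_HOME    = " + System.getenv("JAVA_HOME"));
    }
}
```

If JAVA_HOME prints as null, set it as a system environment variable pointing at the JDK installation directory.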

ZooKeeper configuration

Baidu Netdisk link: https://pan.baidu.com/s/1RChj7LrF5W2ddbrkkhrhLg  Extraction code: am4f

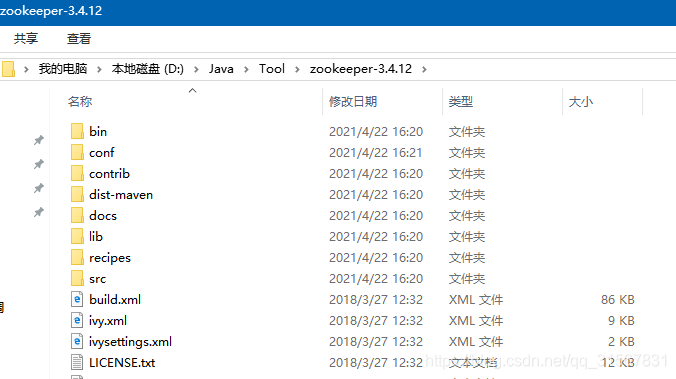

1. After downloading, extract the archive to a directory, e.g. D:\Java\Tool\zookeeper-3.4.12

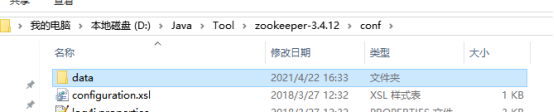

2. In the zookeeper-3.4.12 directory, create a new folder and give it a name (e.g. data). The resulting path is D:\Java\Tool\zookeeper-3.4.12\data

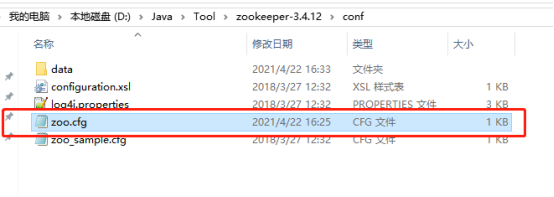

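3. Go to the ZooKeeper configuration directory, e.g. D:\Java\Tool\zookeeper-3.4.12\conf, copy zoo_sample.cfg, and rename the copy to zoo.cfg.

4. Open zoo.cfg in any text editor (e.g. Notepad), then find and edit the line dataDir=D:\\Java\\Tool\\zookeeper-3.4.12\\data

Note that the path uses double backslashes (\\). zoo.cfg is read as a Java properties file, where a single backslash is treated as an escape character.

5. Add the system environment variables:

5.1 Add a system variable ZOOKEEPER_HOME = D:\Java\Tool\zookeeper-3.4.12

5.2 Edit the Path system variable and append %ZOOKEEPER_HOME%\bin.

6. If needed, change the default ZooKeeper port in zoo.cfg through the clientPort setting (left unchanged, the port is 2181).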

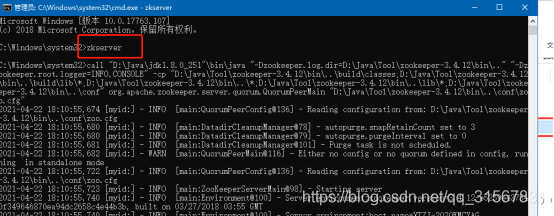

7. Run ZooKeeper

In a command prompt, run: zkServer

The ZooKeeper setup is complete.
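The double-backslash note in step 4 follows from how java.util.Properties parses its input. The sketch below loads three spellings of the same dataDir line and prints what the parser returns for each; the class name ZooCfgPathDemo and the helper readDataDir are illustrative only, and the forward-slash variant is shown as an alternative that Java generally accepts on Windows, not as something the original steps use.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Sketch: how a properties parser treats backslashes in a Windows path.
// ZooCfgPathDemo and readDataDir are hypothetical names for this example.
class ZooCfgPathDemo {
    /** Parses one properties line and returns the value stored under dataDir. */
    static String readDataDir(String cfgLine) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(cfgLine));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props.getProperty("dataDir");
    }

    public static void main(String[] args) {
        // Java string literals need their own escaping: "\\" below is ONE backslash in the file.
        String single = "dataDir=D:\\Java\\Tool\\zookeeper-3.4.12\\data";
        String doubled = "dataDir=D:\\\\Java\\\\Tool\\\\zookeeper-3.4.12\\\\data";
        String forward = "dataDir=D:/Java/Tool/zookeeper-3.4.12/data";

        System.out.println(readDataDir(single));   // D:JavaToolzookeeper-3.4.12data
        System.out.println(readDataDir(doubled));  // D:\Java\Tool\zookeeper-3.4.12\data
        System.out.println(readDataDir(forward));  // D:/Java/Tool/zookeeper-3.4.12/data
    }
}
```

With single backslashes the separators are silently dropped, which is why the data directory ends up somewhere unexpected.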

Kafka configuration

Baidu Netdisk link: https://pan.baidu.com/s/1WdhTPLmKeQ-TzIrt9tfTQg  Extraction code: m254

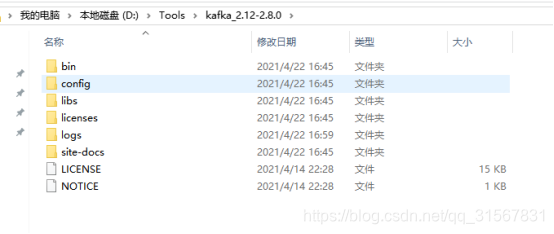

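1. After downloading, extract the archive, e.g. to D:\Tools\kafka_2.12-2.8.0

2. Create an empty folder named logs, e.g. D:\Tools\kafka_2.12-2.8.0\logs

3. Go to the config directory and edit server.properties (e.g. open it with WordPad).

3.1 Find and edit log.dirs=D:\\Tools\\kafka_2.12-2.8.0\\logs (this is a properties file, so each backslash in the path must be doubled).

3.2 Find and edit zookeeper.connect=localhost:2181, which points Kafka at the ZooKeeper instance running on the local machine.

3.3 By default, Kafka listens on port 9092 and connects to ZooKeeper on its default port, 2181.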

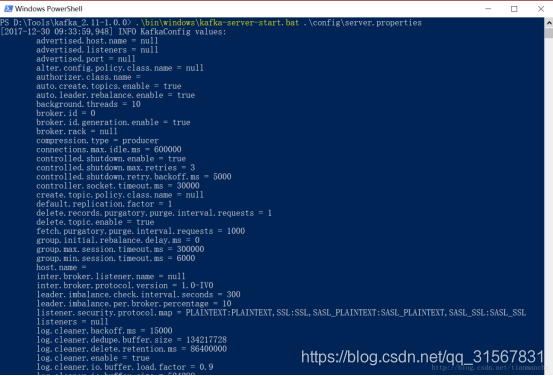

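4. Before starting the Kafka server, make sure the ZooKeeper instance is up and running (that is, leave the ZooKeeper window open).

5. In D:\Tools\kafka_2.12-2.8.0, hold Shift and right-click, then choose "Open PowerShell window here" (if that option is missing, choose "Open command window here").

6. Run: .\bin\windows\kafka-server-start.bat .\config\server.properties

The Kafka setup is complete.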
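Since Kafka depends on ZooKeeper already running, a quick way to check both services is to test whether their ports accept TCP connections. This is a rough sketch under the defaults described above (localhost:2181 and localhost:9092); StartupOrderCheck, parseHostPort, and isListening are hypothetical names, and the parser assumes a single host:port entry without a chroot suffix.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Sketch: probe the ZooKeeper and Kafka ports to confirm both are up.
// All names here are illustrative and not part of Kafka or ZooKeeper.
class StartupOrderCheck {
    /** Splits a single "host:port" entry (the form used by zookeeper.connect). */
    static InetSocketAddress parseHostPort(String hostPort) {
        int colon = hostPort.lastIndexOf(':');
        String host = hostPort.substring(0, colon);
        int port = Integer.parseInt(hostPort.substring(colon + 1));
        return InetSocketAddress.createUnresolved(host, port);
    }

    /** Returns true if a TCP connection to the address succeeds within timeoutMs. */
    static boolean isListening(InetSocketAddress address, int timeoutMs) {
        try (Socket socket = new Socket()) {
            // Resolve the host name only at connect time.
            socket.connect(new InetSocketAddress(address.getHostString(), address.getPort()), timeoutMs);
            return true;
        } catch (IOException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        InetSocketAddress zookeeper = parseHostPort("localhost:2181");
        InetSocketAddress kafka = parseHostPort("localhost:9092");
        System.out.println("ZooKeeper listening: " + isListening(zookeeper, 2000));
        System.out.println("Kafka listening:     " + isListening(kafka, 2000));
    }
}
```

An open port only shows that something is listening there; it does not prove the broker has finished registering with ZooKeeper.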

Producer implementation

1. First add the dependencies to the project

    <dependency>
        <groupId>org.apache.kafka</groupId>
        <artifactId>kafka-clients</artifactId>
        <version>0.8.2.1</version>
    </dependency>
    <dependency>
        <groupId>org.apache.kafka</groupId>
        <artifactId>kafka_2.11</artifactId>
        <version>0.8.2.1</version>
    </dependency>

2. Add a producer test class

Here is the source code:

    package com.csg.commonInterFace.kafka;

    import kafka.javaapi.producer.Producer;
    import kafka.producer.KeyedMessage;
    import kafka.producer.ProducerConfig;

    import java.util.Properties;

    /**
     * @program: kafkaTest
     * @description: kafkaTest
     * @author: 小李
     * @create: 2021-04-19 14:17
     **/
    public class RunKafkaProduce {

        private final Producer<String, String> producer;

        public final static String TOPIC = "logstest";

        private RunKafkaProduce() {
            Properties props = new Properties();
            // Kafka broker list, given as host:port pairs
            props.put("metadata.broker.list", "127.0.0.1:9092");
            // Serializer class for message values
            props.put("serializer.class", "kafka.serializer.StringEncoder");
            // Serializer class for message keys
            props.put("key.serializer.class", "kafka.serializer.StringEncoder");
            // -1: wait until all in-sync replicas have acknowledged the write
            props.put("request.required.acks", "-1");
            producer = new Producer<String, String>(new ProducerConfig(props));
        }

        void produce() {
            int messageNo = 1;
            final int COUNT = 11;
            int messageCount = 0;
            // Sends messages with keys 1 through COUNT - 1
            while (messageNo < COUNT) {
                String key = String.valueOf(messageNo);
                String data = "hello kafka! :" + key;
                producer.send(new KeyedMessage<String, String>(TOPIC, key, data));
                System.out.println(data);
                messageNo++;
                messageCount++;
            }
            System.out.println("The producer sent " + messageCount + " messages in total!");
            // Release the producer's connections once all messages are sent
            producer.close();
        }

        public static void main(String[] args) {
            new RunKafkaProduce().produce();
        }
    }

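3. Run the producer with ZooKeeper and Kafka already started. It prints each message as it is sent, followed by the total count.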
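To see what the producer loop generates without needing a running broker, the loop can be reproduced on its own. This is only a sketch of the counting logic in produce(); ProducerLoopSketch and payloads are made-up names, and nothing here talks to Kafka.

```java
import java.util.ArrayList;
import java.util.List;

// Sketch: the message payloads that produce() builds, without sending them.
// ProducerLoopSketch and payloads are hypothetical names for this example.
class ProducerLoopSketch {
    /** Builds the payloads for keys 1 .. count - 1, mirroring the while loop in produce(). */
    static List<String> payloads(int count) {
        List<String> result = new ArrayList<>();
        for (int messageNo = 1; messageNo < count; messageNo++) {
            result.add("hello kafka! :" + messageNo);
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> messages = payloads(11);
        for (String message : messages) {
            System.out.println(message);
        }
        System.out.println("total: " + messages.size()); // total: 10
    }
}
```

Because the loop starts at 1 and stops before COUNT = 11, ten messages are sent, from "hello kafka! :1" to "hello kafka! :10".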

Consumer implementation

1. Add a consumer class

Here is the source code:

    package com.csg.commonInterFace.kafka;

    import java.util.HashMap;
    import java.util.List;
    import java.util.Map;
    import java.util.Properties;

    import kafka.consumer.ConsumerConfig;
    import kafka.consumer.ConsumerIterator;
    import kafka.consumer.KafkaStream;
    import kafka.javaapi.consumer.ConsumerConnector;
    import kafka.serializer.StringDecoder;
    import kafka.utils.VerifiableProperties;

    /**
     * @program: kafkaTest
     * @description: kafka
     * @author: 小李
     * @create: 2021-04-19 15:07
     **/
    public class RunKafkaConsumer {

        private final ConsumerConnector consumer;

        private final static String TOPIC = "logstest";

        private RunKafkaConsumer() {
            Properties props = new Properties();
            // ZooKeeper address
            props.put("zookeeper.connect", "127.0.0.1:2181");
            // Consumer group id
            props.put("group.id", "logstest");
            // ZooKeeper session timeout
            props.put("zookeeper.session.timeout.ms", "50000");
            props.put("zookeeper.sync.time.ms", "200");
            props.put("auto.commit.interval.ms", "1000");
            // Start from the earliest offset when the group has no committed offset
            props.put("auto.offset.reset", "smallest");
            // Producer-side setting kept from the original; decoding here is done by the StringDecoder instances below
            props.put("serializer.class", "kafka.serializer.StringEncoder");
            ConsumerConfig config = new ConsumerConfig(props);
            consumer = kafka.consumer.Consumer.createJavaConsumerConnector(config);
        }

        void consume() {
            // Request one stream for the topic
            Map<String, Integer> topicCountMap = new HashMap<String, Integer>();
            topicCountMap.put(TOPIC, Integer.valueOf(1));
            StringDecoder keyDecoder = new StringDecoder(new VerifiableProperties());
            StringDecoder valueDecoder = new StringDecoder(new VerifiableProperties());
            Map<String, List<KafkaStream<String, String>>> consumerMap =
                    consumer.createMessageStreams(topicCountMap, keyDecoder, valueDecoder);
            KafkaStream<String, String> stream = consumerMap.get(TOPIC).get(0);
            ConsumerIterator<String, String> it = stream.iterator();
            int messageCount = 0;
            // hasNext() blocks until a message arrives, so the loop keeps running after the summary is printed
            while (it.hasNext()) {
                System.out.println(it.next().message());
                messageCount++;
                if (messageCount == 10) {
                    System.out.println("The consumer received " + messageCount + " messages in total!");
                }
            }
        }

        public static void main(String[] args) {
            new RunKafkaConsumer().consume();
        }
    }

2. Run the consumer. It prints each message it receives from the logstest topic.
