Problem description:

2024-06-24 01:10:42,141 - mmdet - INFO - Epoch [17][1550/8015] lr: 9.963e-03, eta: 7 days, 8:40:21, time: 0.285, data_time: 0.165, memory: 6272, loss_cls: 0.6743, loss_bbox: 3.3448, loss_obj: 5.4422, loss: 9.4612

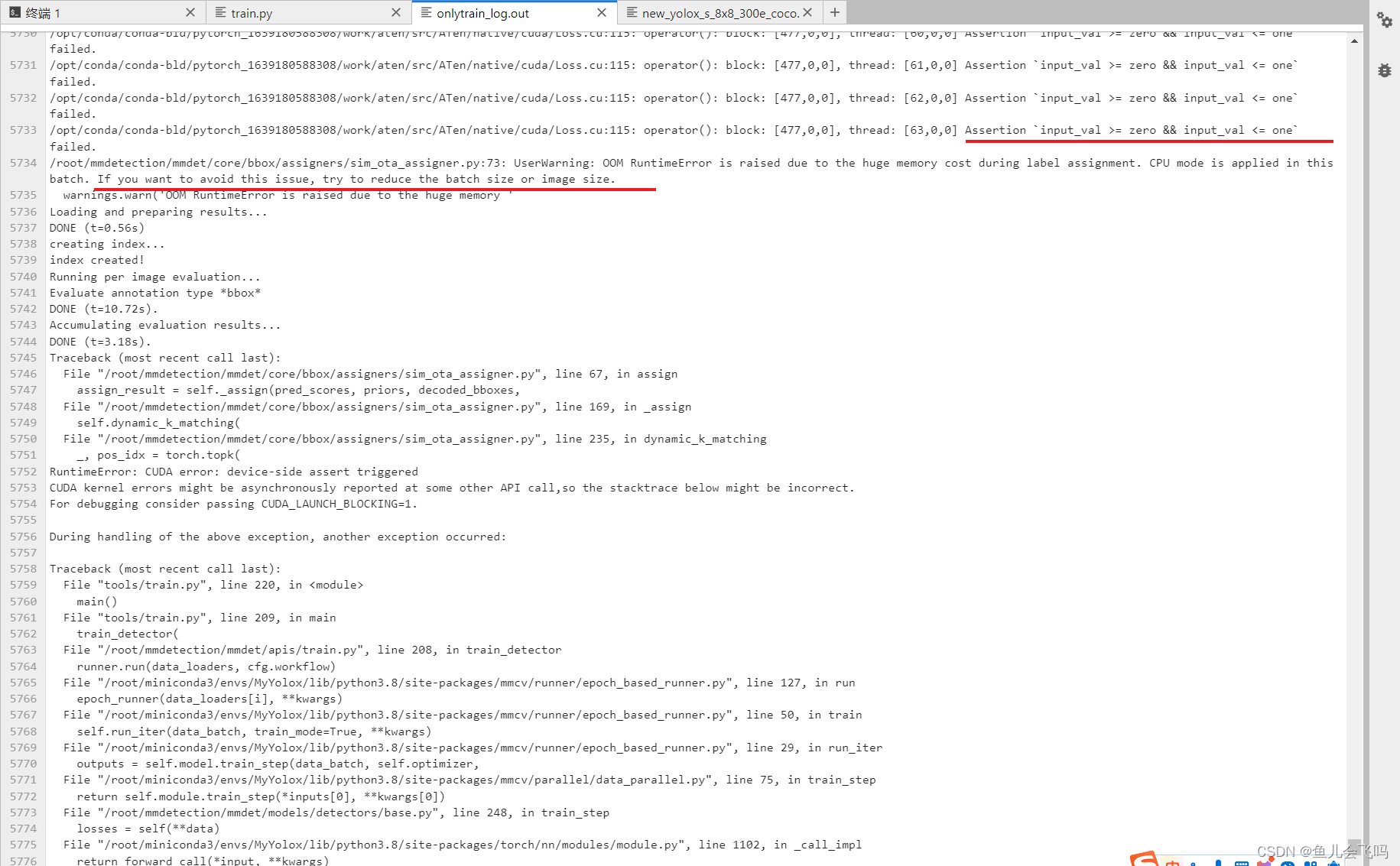

/opt/conda/conda-bld/pytorch_1639180588308/work/aten/src/ATen/native/cuda/Loss.cu:115: operator(): block: [471,0,0], thread: [0,0,0] Assertion `input_val >= zero && input_val <= one` failed.

(the same assertion is repeated for threads [1,0,0] through [31,0,0] of block [471,0,0], and for threads [32,0,0] through [62,0,0] of block [477,0,0])

/opt/conda/conda-bld/pytorch_1639180588308/work/aten/src/ATen/native/cuda/Loss.cu:115: operator(): block: [477,0,0], thread: [63,0,0] Assertion `input_val >= zero && input_val <= one` failed.
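This assertion is raised by PyTorch's CUDA binary cross-entropy kernel in Loss.cu, which requires every input probability to lie in [0, 1]. Any comparison with NaN evaluates to false, so a NaN score trips the check just like an out-of-range value. The pure-Python sketch below mirrors that condition so it can be tried without a GPU; the helper names (bce_input_ok, first_bad_index, describe_bad_value) are hypothetical and belong to neither PyTorch nor mmdetection.

```python
import math


def bce_input_ok(values):
    """Same condition as the CUDA assert `input_val >= zero && input_val <= one`.

    Any comparison with NaN is False, so NaN fails exactly like 1.5 or -0.1.
    """
    return all(0.0 <= v <= 1.0 for v in values)


def first_bad_index(values):
    """Index of the first value that would trip the assert, or None."""
    for i, v in enumerate(values):
        if not (0.0 <= v <= 1.0):
            return i
    return None


def describe_bad_value(v):
    """Rough hint about why a value failed the check."""
    if math.isnan(v):
        return "nan"           # often a sign of numerical divergence upstream
    if not (0.0 <= v <= 1.0):
        return "out of range"  # e.g. raw logits passed where probabilities belong
    return "ok"


scores = [0.1, 0.9, float("nan"), 0.5]
print(bce_input_ok(scores))                  # False
idx = first_bad_index(scores)
print(idx, describe_bad_value(scores[idx]))  # 2 nan
```

On a real tensor x, the equivalent checks are ((x >= 0) & (x <= 1)).all() and torch.isnan(x).any(); running them on the scores just before the loss call, while debugging, tells a NaN apart from a genuinely out-of-range value.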

/root/mmdetection/mmdet/core/bbox/assigners/sim_ota_assigner.py:73: UserWarning: OOM RuntimeError is raised due to the huge memory cost during label assignment. CPU mode is applied in this batch. If you want to avoid this issue, try to reduce the batch size or image size.

warnings.warn('OOM RuntimeError is raised due to the huge memory '
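This warning comes from a try/except fallback in the assigner. In the traceback further down, the GPU call at sim_ota_assigner.py line 67 fails with "device-side assert triggered", and the handler is then entered anyway ("During handling of the above exception...", line 77). So the OOM wording here may not describe the actual failure. Once a device-side assert has fired, the CUDA context is left in an error state, so every later CUDA call, including torch.cuda.empty_cache() inside the handler, raises the same error. The sketch below is a pure-Python model of that pattern, not the mmdetection source. All function names are hypothetical stand-ins. It shows one way to keep a CPU fallback for real OOMs without masking other CUDA errors: check the exception text.

```python
import warnings


def assign_with_fallback(assign_on_gpu, assign_on_cpu, *args):
    """Try the GPU path; fall back to the CPU path only for a real OOM.

    Hypothetical model of a try/except label-assignment fallback.
    A bare `except RuntimeError` would also catch a device-side assert,
    which is how an 'OOM' warning can appear for a non-memory error.
    """
    try:
        return assign_on_gpu(*args)
    except RuntimeError as err:
        # PyTorch's CUDA OOM message contains "CUDA out of memory".
        if "out of memory" not in str(err):
            raise  # e.g. "device-side assert triggered": do not mask it
        warnings.warn("OOM during label assignment, retrying on CPU")
        return assign_on_cpu(*args)


# Stand-ins for the GPU and CPU code paths (hypothetical).
def gpu_oom(n):
    raise RuntimeError("CUDA out of memory. Tried to allocate 2.00 GiB")


def gpu_assert(n):
    raise RuntimeError("CUDA error: device-side assert triggered")


def cpu_path(n):
    return f"assigned {n} boxes on CPU"


with warnings.catch_warnings():
    warnings.simplefilter("ignore")
    print(assign_with_fallback(gpu_oom, cpu_path, 3))  # assigned 3 boxes on CPU

try:
    assign_with_fallback(gpu_assert, cpu_path, 3)
except RuntimeError as err:
    print("not masked:", err)  # not masked: CUDA error: device-side assert triggered
```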

Loading and preparing results...

DONE (t=0.56s)

creating index...

index created!

Running per image evaluation...

Evaluate annotation type *bbox*

DONE (t=10.72s).

Accumulating evaluation results...

DONE (t=3.18s).

Traceback (most recent call last):

File "/root/mmdetection/mmdet/core/bbox/assigners/sim_ota_assigner.py", line 67, in assign

assign_result = self._assign(pred_scores, priors, decoded_bboxes,

File "/root/mmdetection/mmdet/core/bbox/assigners/sim_ota_assigner.py", line 169, in _assign

self.dynamic_k_matching(

File "/root/mmdetection/mmdet/core/bbox/assigners/sim_ota_assigner.py", line 235, in dynamic_k_matching

_, pos_idx = torch.topk(

RuntimeError: CUDA error: device-side assert triggered

CUDA kernel errors might be asynchronously reported at some other API call,so the stacktrace below might be incorrect.

For debugging consider passing CUDA_LAUNCH_BLOCKING=1.

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "tools/train.py", line 220, in <module>

main()

File "tools/train.py", line 209, in main

train_detector(

File "/root/mmdetection/mmdet/apis/train.py", line 208, in train_detector

runner.run(data_loaders, cfg.workflow)

File "/root/miniconda3/envs/MyYolox/lib/python3.8/site-packages/mmcv/runner/epoch_based_runner.py", line 127, in run

epoch_runner(data_loaders[i], **kwargs)

File "/root/miniconda3/envs/MyYolox/lib/python3.8/site-packages/mmcv/runner/epoch_based_runner.py", line 50, in train

self.run_iter(data_batch, train_mode=True, **kwargs)

File "/root/miniconda3/envs/MyYolox/lib/python3.8/site-packages/mmcv/runner/epoch_based_runner.py", line 29, in run_iter

outputs = self.model.train_step(data_batch, self.optimizer,

File "/root/miniconda3/envs/MyYolox/lib/python3.8/site-packages/mmcv/parallel/data_parallel.py", line 75, in train_step

return self.module.train_step(*inputs[0], **kwargs[0])

File "/root/mmdetection/mmdet/models/detectors/base.py", line 248, in train_step

losses = self(**data)

File "/root/miniconda3/envs/MyYolox/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1102, in _call_impl

return forward_call(*input, **kwargs)

File "/root/miniconda3/envs/MyYolox/lib/python3.8/site-packages/mmcv/runner/fp16_utils.py", line 110, in new_func

return old_func(*args, **kwargs)

File "/root/mmdetection/mmdet/models/detectors/base.py", line 172, in forward

return self.forward_train(img, img_metas, **kwargs)

File "/root/mmdetection/mmdet/models/detectors/yolox.py", line 95, in forward_train

losses = super(YOLOX, self).forward_train(img, img_metas, gt_bboxes,

File "/root/mmdetection/mmdet/models/detectors/single_stage.py", line 83, in forward_train

losses = self.bbox_head.forward_train(x, img_metas, gt_bboxes,

File "/root/mmdetection/mmdet/models/dense_heads/base_dense_head.py", line 335, in forward_train

losses = self.loss(*loss_inputs, gt_bboxes_ignore=gt_bboxes_ignore)

File "/root/miniconda3/envs/MyYolox/lib/python3.8/site-packages/mmcv/runner/fp16_utils.py", line 198, in new_func

return old_func(*args, **kwargs)

File "/root/mmdetection/mmdet/models/dense_heads/yolox_head.py", line 382, in loss

num_fg_imgs) = multi_apply(

File "/root/mmdetection/mmdet/core/utils/misc.py", line 30, in multi_apply

return tuple(map(list, zip(*map_results)))

File "/root/miniconda3/envs/MyYolox/lib/python3.8/site-packages/torch/autograd/grad_mode.py", line 28, in decorate_context

return func(*args, **kwargs)

File "/root/mmdetection/mmdet/models/dense_heads/yolox_head.py", line 462, in _get_target_single

assign_result = self.assigner.assign(

File "/root/mmdetection/mmdet/core/bbox/assigners/sim_ota_assigner.py", line 77, in assign

torch.cuda.empty_cache()

File "/root/miniconda3/envs/MyYolox/lib/python3.8/site-packages/torch/cuda/memory.py", line 114, in empty_cache

torch._C._cuda_emptyCache()

RuntimeError: CUDA error: device-side assert triggered

CUDA kernel errors might be asynchronously reported at some other API call,so the stacktrace below might be incorrect.

For debugging consider passing CUDA_LAUNCH_BLOCKING=1.
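As the message says, CUDA errors are reported asynchronously. The frame shown in the traceback (torch.topk in dynamic_k_matching) is therefore where the error surfaced, not necessarily the kernel that failed. Setting CUDA_LAUNCH_BLOCKING=1 makes kernel launches synchronous, so the traceback points at the failing call, at the cost of much slower training. The variable must be set before CUDA is initialised, which is why this sketch prepares the environment for a fresh process. build_debug_run is a hypothetical helper, and the config path is assumed to match the training command used below.

```python
import os
import sys


def build_debug_run(config, *extra_args):
    """Return (argv, env) for re-running tools/train.py with synchronous
    CUDA kernel launches, so the traceback points at the failing op."""
    env = dict(os.environ)             # copy; the current process is untouched
    env["CUDA_LAUNCH_BLOCKING"] = "1"  # only takes effect if set before CUDA init
    argv = [sys.executable, "tools/train.py", config, *extra_args]
    return argv, env


argv, env = build_debug_run("configs/yolox/new_yolox_s_8x8_300e_coco.py")
print(" ".join(argv[1:]))  # tools/train.py configs/yolox/new_yolox_s_8x8_300e_coco.py
```

From the mmdetection root, subprocess.run(argv, env=env) then starts the debug run. The shell equivalent is to prefix the usual command with CUDA_LAUNCH_BLOCKING=1.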

Solution:

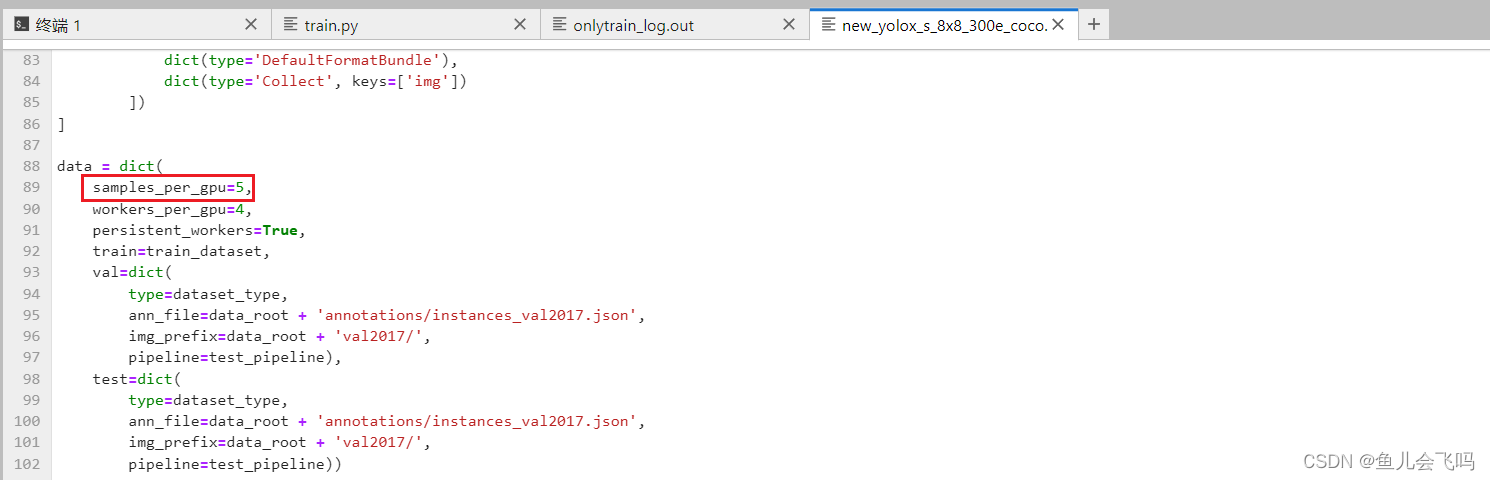

In the config file, change samples_per_gpu=8 to samples_per_gpu=5.

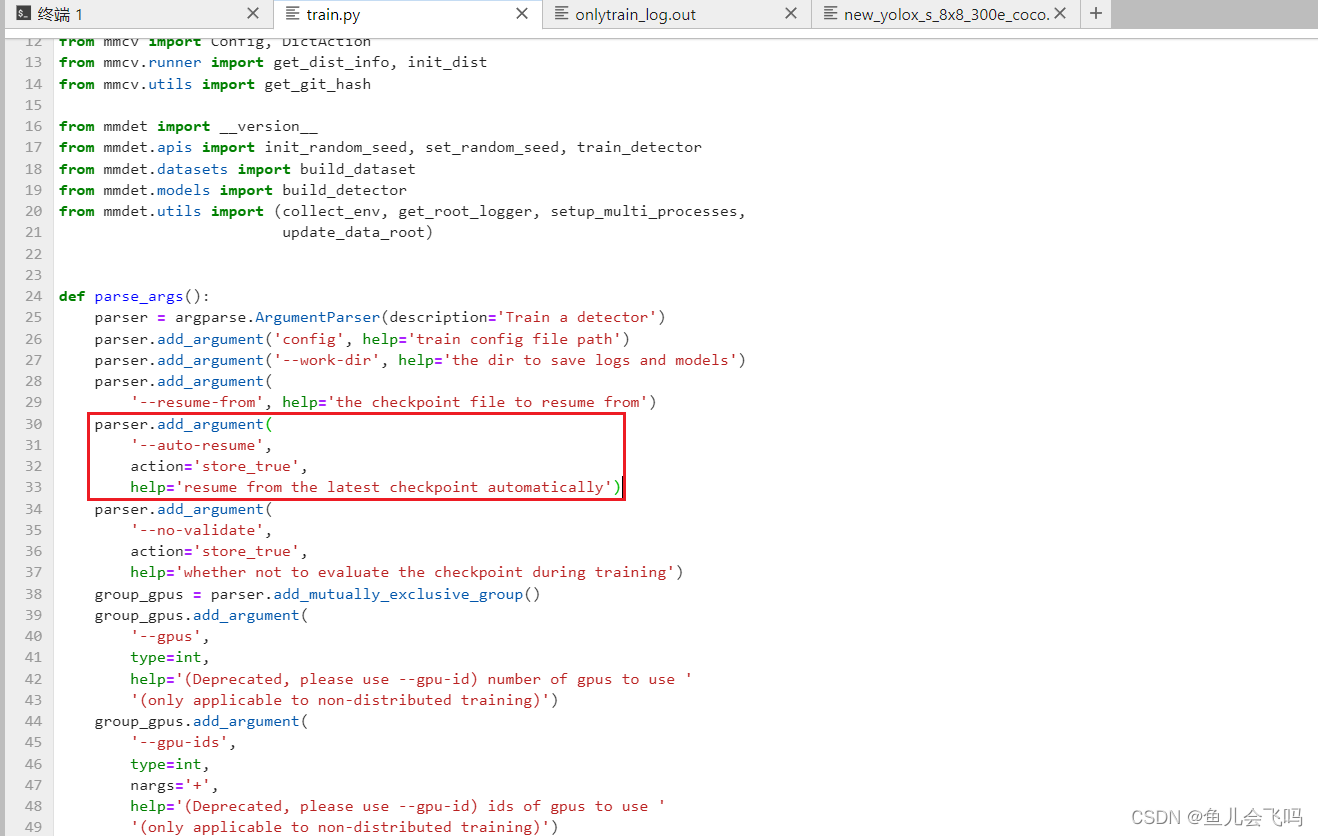

Next, add the --auto-resume argument to the training command. tools/train.py describes it as:

resume from the latest checkpoint automatically
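With --auto-resume, mmdetection 2.x looks in work_dir for the most recent checkpoint and resumes from it, restoring the model weights, optimizer state and epoch counter. The sketch below imitates that lookup in plain Python under these assumptions: prefer latest.pth, otherwise take the epoch_N.pth with the largest N. It is an illustration with a hypothetical name, not mmdetection's own helper.

```python
import re
from pathlib import Path


def find_latest_checkpoint_sketch(work_dir):
    """Return the checkpoint path to resume from, or None if there is none."""
    work_dir = Path(work_dir)
    latest = work_dir / "latest.pth"
    if latest.exists():
        # mmcv keeps latest.pth as a link to the most recently saved checkpoint.
        return latest
    best_epoch, best_path = -1, None
    for ckpt in work_dir.glob("epoch_*.pth"):
        match = re.fullmatch(r"epoch_(\d+)\.pth", ckpt.name)
        # Compare epoch numbers as integers: epoch_10 must beat epoch_9.
        if match and int(match.group(1)) > best_epoch:
            best_epoch, best_path = int(match.group(1)), ckpt
    return best_path
```

Parsing the epoch number matters because sorting the file names as strings would rank epoch_9.pth after epoch_10.pth.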

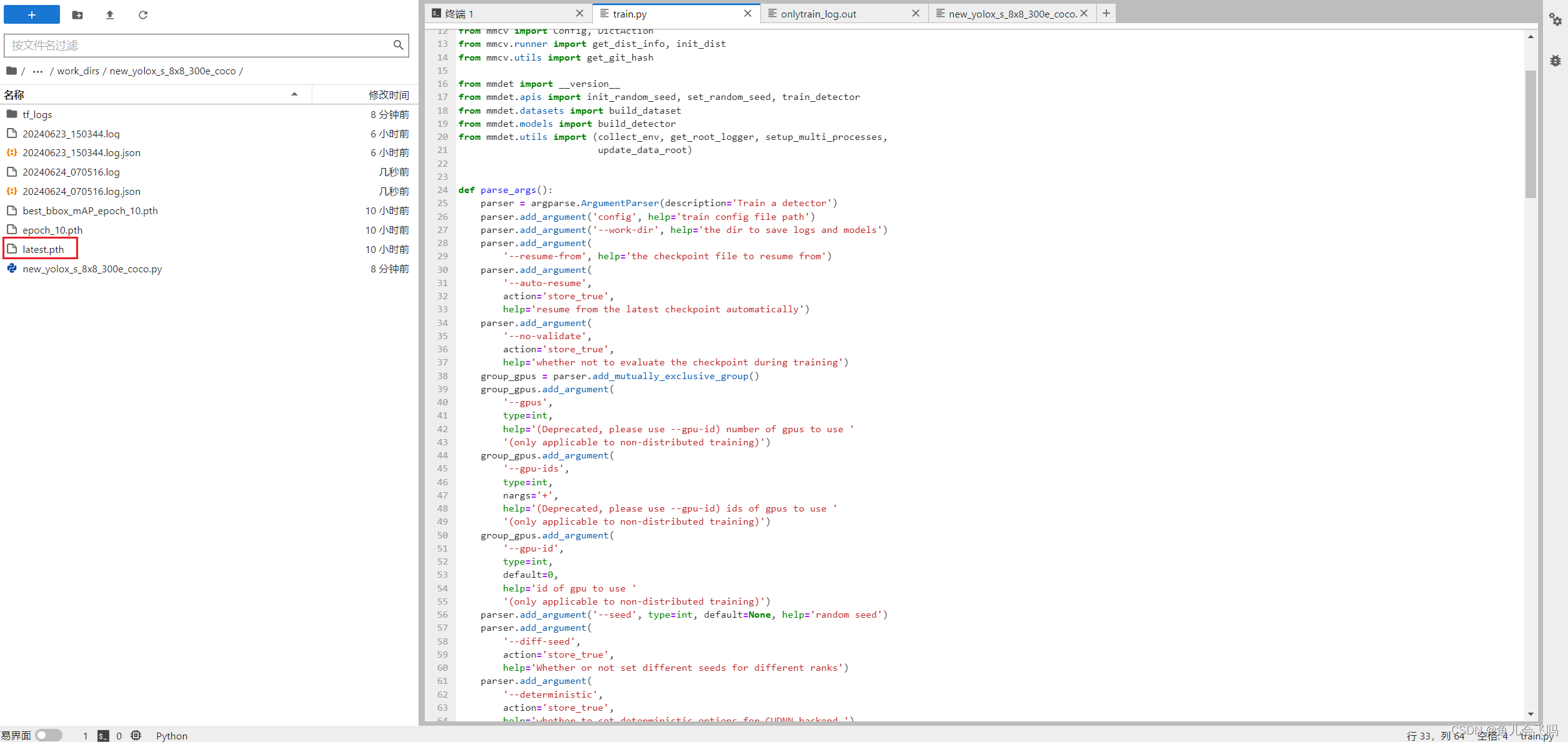

nohup python tools/train.py configs/yolox/new_yolox_s_8x8_300e_coco.py --auto-resume >newonlytrain_log.out 2>&1
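Because both stdout and stderr go to newonlytrain_log.out, the loss values can be checked without watching the console. The training lines have the form shown at the top of this post (Epoch [17][1550/8015] lr: ..., loss: 9.4612). The minimal parser below assumes exactly that "key: value, " layout, which may differ between mmdetection versions. parse_train_line, non_finite_losses and scan_log are hypothetical helpers.

```python
import math
import re

EPOCH_RE = re.compile(r"Epoch \[(\d+)\]\[(\d+)/(\d+)\]")
# A numeric field followed by a comma or end of line; this skips "eta: 7 days".
FIELD_RE = re.compile(
    r"(\w+): ([-+]?\d+(?:\.\d+)?(?:[eE][-+]?\d+)?|nan|inf)(?=,|\s*$)")


def parse_train_line(line):
    """Extract epoch, iteration and numeric fields from one training log line.

    Returns None for lines that are not training-progress lines."""
    m = EPOCH_RE.search(line)
    if m is None:
        return None
    record = {"epoch": int(m.group(1)), "iter": int(m.group(2)),
              "iters_per_epoch": int(m.group(3))}
    for key, value in FIELD_RE.findall(line[m.end():]):
        record[key] = float(value)
    return record


def non_finite_losses(record):
    """Names of loss terms that are NaN or infinite."""
    return [k for k, v in record.items()
            if k.startswith("loss") and not math.isfinite(v)]


def scan_log(path):
    """Yield parsed records whose loss terms are not finite."""
    with open(path, encoding="utf-8", errors="replace") as f:
        for line in f:
            rec = parse_train_line(line)
            if rec and non_finite_losses(rec):
                yield rec


line = ("2024-06-24 01:10:42,141 - mmdet - INFO - Epoch [17][1550/8015] "
        "lr: 9.963e-03, eta: 7 days, 8:40:21, time: 0.285, data_time: 0.165, "
        "memory: 6272, loss_cls: 0.6743, loss_bbox: 3.3448, loss_obj: 5.4422, "
        "loss: 9.4612")
rec = parse_train_line(line)
print(rec["epoch"], rec["iter"], rec["loss"])  # 17 1550 9.4612
print(non_finite_losses(rec))                  # []
```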

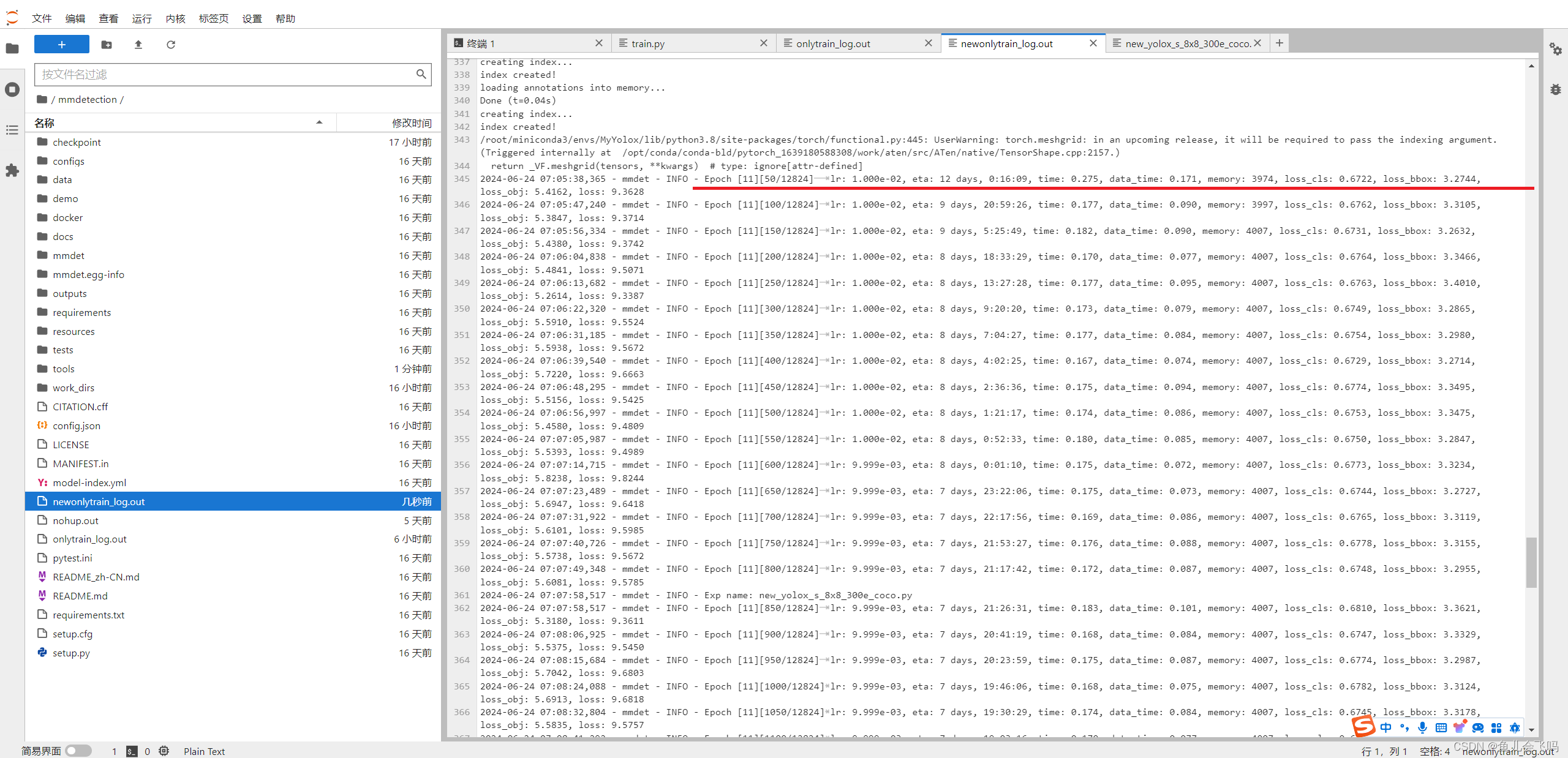

After this change, training continues from latest.pth instead of starting over from the point where it crashed.
