#coding:utf8

from audioop import add

from cmd import IDENTCHARS

from random import shuffle

from numpy import partition

from pyspark import StorageLevel

from pyspark.sql import SparkSession

from pyspark.sql.types import StructType,StringType,IntegerType,ArrayType

import pandas as pd

from pyspark.sql import functions as F

import string

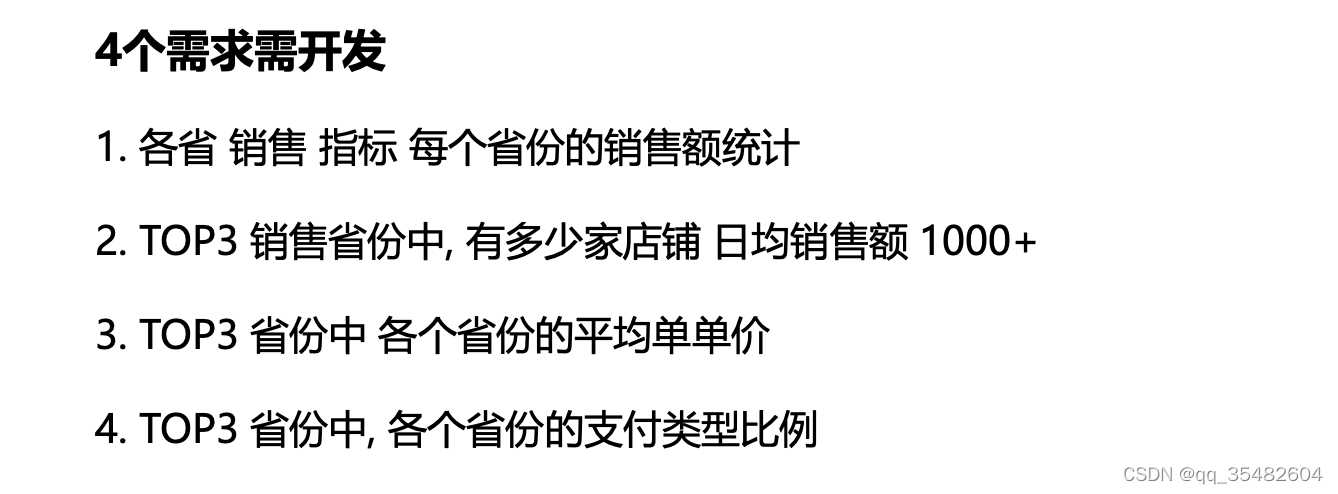

# 需求1: 统计各省销售额

# 需求2:TOP3销售省份中,有多少店铺达到过日销售额1000+

# 需求3: TOP3省份中各个省份的平均订单价格

# 需求4: TOP3省份中,各个省份的支付比例

# /opt/module/spark/bin/spark-submit /opt/Code/spark_dev_example.py

if __name__ == '__main__':

spark = SparkSession.builder.appName('SparkSQL Example').master('local[*]').\

config('spark.sql.shuffle.partition','2').\

getOrCreate()

sc = spark.sparkContext

# 1. 读取信息

# 有的订单金额超过10000的,是测试数据,故过滤掉

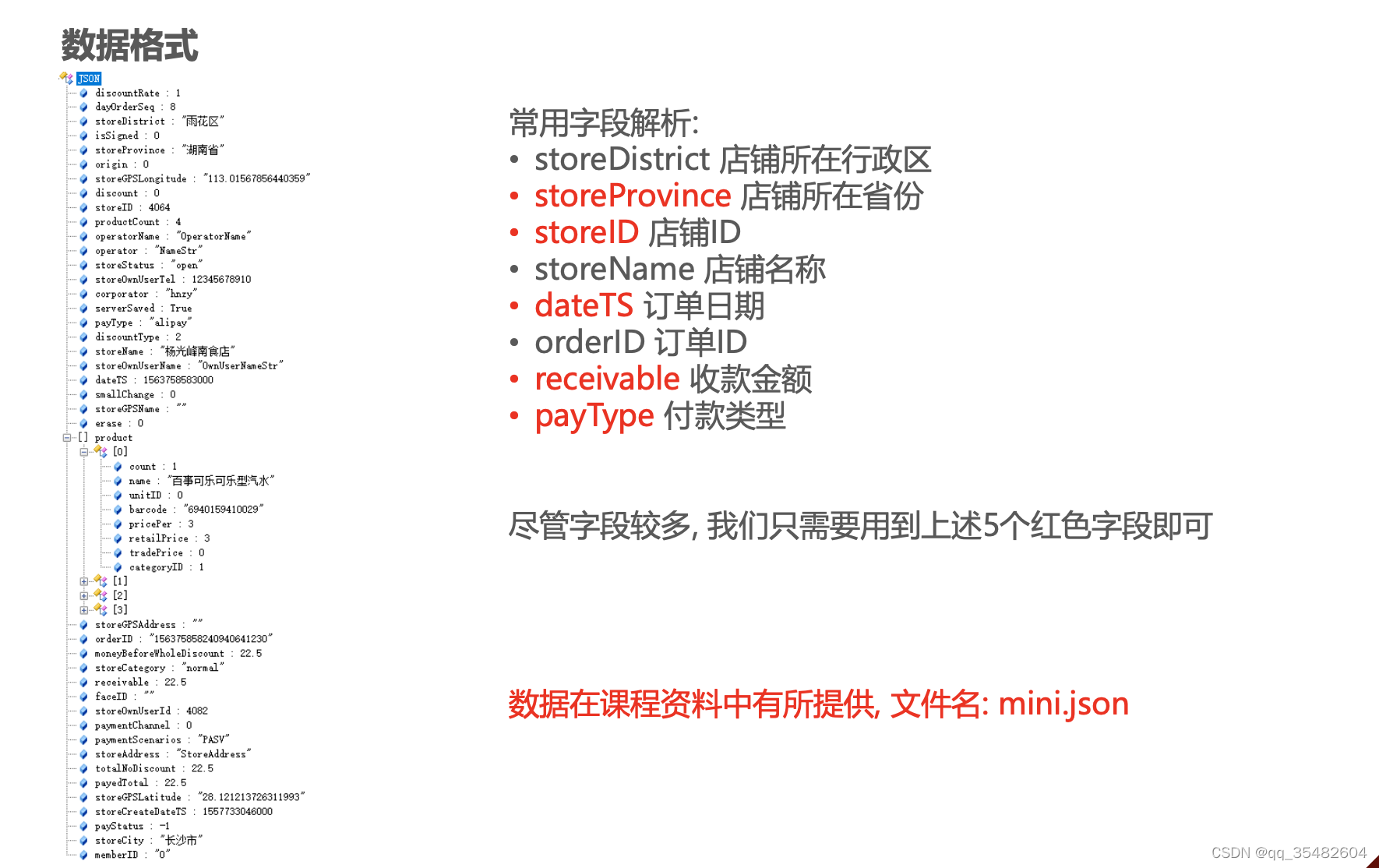

df = spark.read.format('json').load('file:///opt/Data/mini.json').\

dropna(thresh=1,subset=['storeProvince']).\

filter("storeProvince!='null'").\

filter("receivable < 10000").\

select("storeProvince","storeID","receivable","dateTS","payType")

# TODO1 : 各省销售额统计

province_sale_df = df.groupBy("storeProvince").sum("receivable").\

withColumnRenamed("sum(receivable)","money").\

withColumn("money",F.round("money",2)).\

orderBy("money",ascending=False)

# TODO2:TOP3销售省份中,有多少店铺达到过日销售额1000+

top3_province_df = province_sale_df.limit(3).select("storeProvince").withColumnRenamed("storeProvince","top3_province")

# 和原始的DF进行内关联

top3_province_df_joined = df.join(top3_province_df,on = df["storeProvince"] == top3_province_df["top3_province"])

top3_province_df_joined.persist(StorageLevel.MEMORY_AND_DISK)

province_host_store_count_df = top3_province_df_joined.groupBy("storeProvince","storeID",

F.from_unixtime(df["dateTS"].substr(0,10),"yyyy-MM-dd").alias("day")).\

sum("receivable").withColumnRenamed("sum(receivable)","money").\

filter("money > 1000").\

dropDuplicates(subset=["storeID"]).\

groupBy("storeProvince").count()

# TODO3: TOP3省份中各个省份的平均订单价格

top3_province_order_avg_df = top3_province_df_joined.groupBy("storeProvince").\

avg("receivable").\

withColumnRenamed("avg(receivable)","money").\

withColumn("money",F.round("money",2)).\

orderBy("money",ascending=False)

# TODO4: TOP3省份中,各个省份的支付比例

top3_province_df_joined.createTempView("province_pay")

spark.sql("""

select storeProvince,payType,count(payType)/total as percent

from (

select storeProvince,payType,count(1) over(partition by storeProvince) as total

from province_pay

) t1

group by storeProvince,payType,total

""").show()

top3_province_df_joined.unpersist()

631

631

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?