import os
import time

import torch
import numpy as np
import pandas as pd
import seaborn as sb
import torch.nn as nn
import torch.optim as optim
import torch.utils.data as Data
import matplotlib.pyplot as plt
import torch.nn.functional as F

def get_mnist():
    current_file = os.path.relpath(__file__)
    current_path = os.path.split(current_file)[0]
    mnist_file = os.path.join(current_path, 'mnist.npz')
    data = np.load(mnist_file)
    x, y = data['x'], data['y']
    return x, y

class BP(nn.Module):
    def __init__(self, in_feature, out_feature, cell_count):
        super(BP, self).__init__()
        self.layer1 = nn.Linear(in_feature, cell_count)
        self.layer1_activation = nn.ReLU()
        self.layer2 = nn.Linear(cell_count, out_feature)

    def forward(self, x):
        out = self.layer1(x)
        out = self.layer1_activation(out)
        out = self.layer2(out)
        return out

class SoftmaxCrossEntropyLoss(nn.Module):
    def __init__(self):
        super(SoftmaxCrossEntropyLoss, self).__init__()

    def forward(self, y_hat, y_label):
        # Normalize over the class dimension; calling log_softmax without
        # an explicit dim is deprecated and raises a warning.
        log_softmax = F.log_softmax(y_hat, dim=1)
        return F.nll_loss(log_softmax, y_label)

if __name__ == "__main__":
    X, Y = get_mnist()
    X = X / 255.0
    X = torch.from_numpy(X.reshape(X.shape[0], -1)).float()
    Y = torch.tensor(np.argmax(Y, axis=1).tolist())
    dataset = Data.TensorDataset(X, Y)
    dataloader = Data.DataLoader(dataset, batch_size=100, shuffle=True)
    pre_defined_cells = [10, 30, 100, 300, 1000]
    train_time = []
    diff_cells_acc = {}
    # A bare figure; the two panels are added later with plt.subplot
    plt.figure(figsize=(16, 9))
    epochs = 40
    for cell_count in pre_defined_cells:
        network = BP(28 * 28, 10, cell_count)
        print('current cell count is %d' % (cell_count))
        # time.clock() was removed in Python 3.8; use perf_counter() instead
        start = time.perf_counter()
        loss = SoftmaxCrossEntropyLoss()
        optimizer = optim.SGD(network.parameters(), lr=0.01)
        acc_every_epoch = []
        for epoch in range(epochs):
            network.train()
            for (x, y_label) in dataloader:
                y_hat = network(x)
                loss_value = loss(y_hat, y_label)
                optimizer.zero_grad()
                loss_value.backward()
                optimizer.step()
            network.eval()
            # Accuracy on the full training set, without tracking gradients
            # (torch.autograd.Variable is deprecated and no longer needed)
            with torch.no_grad():
                y_hat = network(X).numpy()
            y_hat = np.argmax(y_hat, axis=1)
            y_label = Y.numpy()
            acc = (y_hat == y_label)
            acc = acc.mean()
            print(f'epoch={epoch}, accuracy={acc}')
            acc_every_epoch.append(int(acc * 10000) / 100)
        elapsed = time.perf_counter() - start
        train_time.append(elapsed)
        diff_cells_acc['cell_count=%d' % (cell_count)] = acc_every_epoch

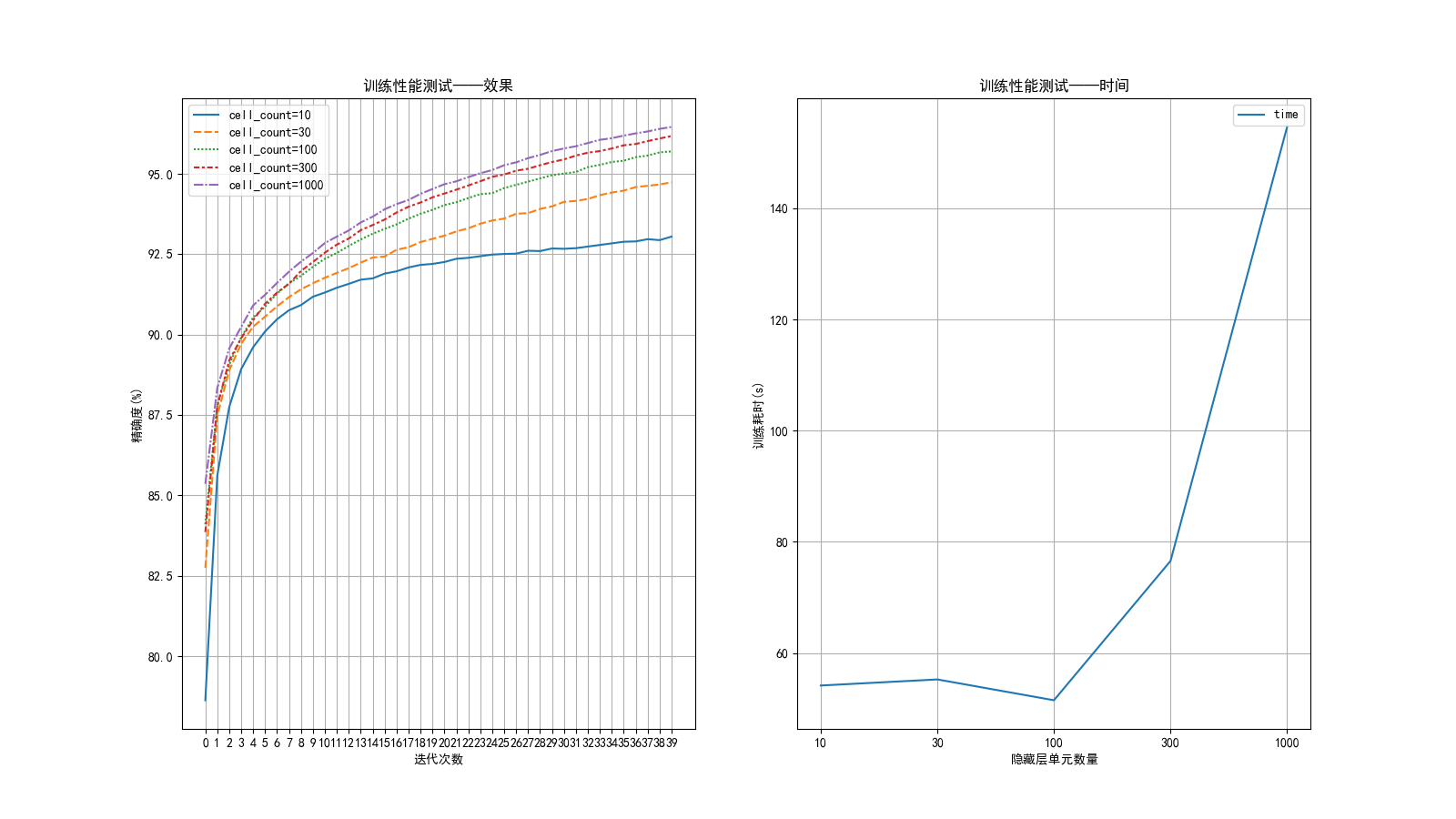

plt. subplot( 1 , 2 , 1 )

sb. lineplot(

data= diff_cells_acc

)

plt. title( r'训练性能测试——效果' )

plt. xlabel( r'数据代' )

plt. ylabel( r'精确度(%)' )

plt. xticks( range ( epochs) , rotation= 0 )

plt. grid( )

plt. subplot( 1 , 2 , 2 )

sb. lineplot(

data= dict ( {

'time' : np. array( train_time) ,

} )

)

plt. title( r'训练性能测试——时间' )

plt. xlabel( r'隐藏层单元数量' )

plt. ylabel( r'训练耗时(s)' )

plt. xticks( range ( len ( train_time) ) , [ str ( i) for i in pre_defined_cells] , rotation= 0 )

plt. grid( )

plt. show( )
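The `SoftmaxCrossEntropyLoss` module in the script chains `F.log_softmax` with `F.nll_loss`, whose default reduction is the batch mean. As a rough illustration of what that combination computes, here is a dependency-free sketch in plain Python. The helper names `log_softmax` and `softmax_cross_entropy` are hypothetical and written only for this example; they are not part of PyTorch or of the original script.

```python
import math


def log_softmax(row):
    """Numerically stable log-softmax of one row of logits."""
    m = max(row)
    log_sum = m + math.log(sum(math.exp(v - m) for v in row))
    return [v - log_sum for v in row]


def softmax_cross_entropy(logits, labels):
    """Mean negative log-probability of the true class over a batch."""
    losses = [-log_softmax(row)[label] for row, label in zip(logits, labels)]
    return sum(losses) / len(losses)


# Uniform logits over 10 classes give every class probability 1/10,
# so the loss equals log(10) whatever the labels are.
uniform_loss = softmax_cross_entropy([[0.0] * 10, [0.0] * 10], [3, 7])
print(uniform_loss, math.log(10))

# A very confident, correct prediction drives the loss towards 0.
confident_loss = softmax_cross_entropy([[100.0] + [0.0] * 9], [0])
print(confident_loss)
```

The uniform case is a handy sanity check for a freshly initialized 10-class classifier: a loss near log(10) at the start of training suggests the loss is wired up as intended.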

Console output (truncated):

epoch=0, accuracy=0.7766428571428572
epoch=1, accuracy=0.8463428571428572
epoch=2, accuracy=0.8715428571428572
epoch=3, accuracy=0.8849
epoch=4, accuracy=0.8941142857142858
epoch=5, accuracy=0.9001857142857143
epoch=6, accuracy=0.9049857142857143
......
epoch=39, accuracy=0.9306571428571428
current cell count is 30
epoch=0, accuracy=0.8235857142857143
epoch=1, accuracy=0.873
epoch=2, accuracy=0.8902857142857142
......
epoch=37, accuracy=0.9501285714285714
epoch=38, accuracy=0.9505285714285714
epoch=39, accuracy=0.9515285714285714
current cell count is 100
epoch=0, accuracy=0.8409857142857143
epoch=1, accuracy=0.8767428571428572
epoch=2, accuracy=0.8899285714285714
......
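The runs differ only in the hidden layer size, so one way to put the five settings side by side is to count trainable parameters. The sketch below assumes the `BP` architecture from the script (two `nn.Linear` layers, each with a bias term, which is the PyTorch default). The helper `bp_parameter_count` is hypothetical and introduced only for this illustration; the counts are plain arithmetic and say nothing about the measured training times.

```python
def bp_parameter_count(in_feature, out_feature, cell_count):
    """Weights plus biases of Linear(in, cells) followed by Linear(cells, out)."""
    layer1 = in_feature * cell_count + cell_count
    layer2 = cell_count * out_feature + out_feature
    return layer1 + layer2


pre_defined_cells = [10, 30, 100, 300, 1000]
param_counts = {c: bp_parameter_count(28 * 28, 10, c) for c in pre_defined_cells}

for cells, count in param_counts.items():
    print(f'cell_count={cells}: {count} parameters')
```

For this architecture the count is linear in the hidden size (795 parameters per hidden unit, plus 10 output biases), so going from 10 to 1000 units multiplies the number of parameters by roughly 100.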
