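MR Case Study: Log Cleaning

Simple Version

Before running the core business MapReduce job, the data usually has to be cleaned first to remove records that do not meet the requirements. Cleaning usually needs only the mapper; no reducer is required.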

Requirements Analysis

For the input data file, remove every log line that has 11 or fewer fields when split on spaces.
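The rule above can be sketched in plain Java, without Hadoop, to make the boundary explicit: a line is kept only when it has more than 11 space-separated fields. The class name `FieldCountFilter`, its method names, and the nginx-style sample lines in the usage note are assumptions made for illustration; they are not taken from the original data set.

```java
import java.util.List;
import java.util.stream.Collectors;

// Minimal sketch of the field-count rule, independent of Hadoop.
// FieldCountFilter and its members are hypothetical names.
final class FieldCountFilter {

    // Lines with this many fields or fewer are dropped.
    static final int MAX_REJECTED_FIELDS = 11;

    // Returns true when the line has more than 11 space-separated fields.
    static boolean hasEnoughFields(String line) {
        return line.split(" ").length > MAX_REJECTED_FIELDS;
    }

    // Keeps only the lines that pass the field-count rule.
    static List<String> clean(List<String> lines) {
        return lines.stream()
                .filter(FieldCountFilter::hasEnoughFields)
                .collect(Collectors.toList());
    }
}
```

For example, a hypothetical line such as `194.237.142.21 - - [18/Sep/2013:06:49:18 +0000] "GET /index.html HTTP/1.1" 200 1024 "-" "Mozilla/4.0 (compatible;)"` splits into 13 fields and is kept, while a line that splits into only 10 fields is dropped.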

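Input Data

The data format is as follows:

Writing the Code

Writing CleanMapper

The simple cleaning task can be completed entirely in the map phase, so no reducer needs to be written.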

package LogClean;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**
 * Cleans the data in the map phase.
 */
public class CleanMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

    // IDE shortcut: Alt+Insert generates overrides of parent-class methods
    // IDE shortcut: Ctrl+Alt+O removes unused imports
    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Read one line of input
        String logs = value.toString();
        // Split the line on spaces
        String[] log = logs.split(" ");
        // Count the fields and keep only lines with more than 11 of them,
        // as the requirement states (lines with 11 or fewer fields are dropped)
        if (log.length > 11) {
            context.write(value, NullWritable.get());
        }
    }
}

Writing CleanDriver

package LogClean;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**
 * Main class: builds and submits the job.
 */
public class CleanDriver {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        // Configure the job
        Job job = Job.getInstance(new Configuration());
        job.setJarByClass(CleanDriver.class);

        // Set the mapper class and the output key/value types
        job.setMapperClass(CleanMapper.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);

        // The number of reduce tasks defaults to 1.
        // The simple version needs no reducer because cleaning is finished in the map phase,
        // so set it to 0 to run a map-only job.
        job.setNumReduceTasks(0);

        // Input path
        FileInputFormat.setInputPaths(job, new Path("D:\\BigdataTest\\LogCleaning\\web.txt"));
        // Output path (must not exist before the job runs)
        FileOutputFormat.setOutputPath(job, new Path("D:\\BigdataTest\\LogCleaning\\result"));

        // Submit the job and exit with a status that reflects success or failure
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}

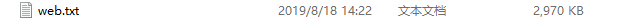

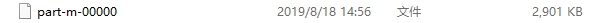

Output

Before cleaning: 2970 KB

After cleaning: 2901 KB

Records with 11 or fewer fields were removed.

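Complex Version

Requirements Analysis

- Identify and split the individual fields of the web access log.

- Remove invalid records: (1) records with 11 or fewer fields; (2) records whose status code is 400 or higher.

- Generate filtered data for each type of access request according to the statistical requirements.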
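The two validity rules in the list above can be expressed as a single predicate. The following is a minimal sketch rather than the article's mapper: the class name `LogValidity` is hypothetical, the status code is read from index 8 of the split line (the position the mapper in this article uses), and treating a non-numeric status as invalid is an added assumption.

```java
// Minimal sketch of the two validity rules, independent of Hadoop.
// LogValidity is a hypothetical name used only for illustration.
final class LogValidity {

    static boolean isValid(String line) {
        String[] fields = line.split(" ");
        // Rule 1: a record with 11 or fewer fields is invalid.
        if (fields.length <= 11) {
            return false;
        }
        try {
            // Rule 2: a status code of 400 or higher is invalid.
            return Integer.parseInt(fields[8]) < 400;
        } catch (NumberFormatException e) {
            // Assumption: a status that is not a number is also invalid.
            return false;
        }
    }
}
```

Checking the field count first matters: it guarantees that index 8 exists before the status code is read.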

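Input Data

The input data is the same as in the simple version.

Writing the Code

Defining the Bean

Define a bean to hold the individual fields of a log record.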

package LogClean;

/**
 * A bean that holds the individual fields of a log record.
 */
public class LogBean {

    private String remote_addr;      // client IP address
    private String remote_user;      // client user name; the placeholder "-" is ignored
    private String time_local;       // access time and time zone
    private String request;          // requested URL and HTTP protocol
    private String status;           // request status; 200 means success
    private String body_bytes_sent;  // size of the response body sent to the client
    private String http_referer;     // the page from which the request was linked
    private String http_user_agent;  // client browser information
    private boolean valid = true;    // whether the record is valid

    public String getRemote_addr() {
        return remote_addr;
    }

    public void setRemote_addr(String remote_addr) {
        this.remote_addr = remote_addr;
    }

    public String getRemote_user() {
        return remote_user;
    }

    public void setRemote_user(String remote_user) {
        this.remote_user = remote_user;
    }

    public String getTime_local() {
        return time_local;
    }

    public void setTime_local(String time_local) {
        this.time_local = time_local;
    }

    public String getRequest() {
        return request;
    }

    public void setRequest(String request) {
        this.request = request;
    }

    public String getStatus() {
        return status;
    }

    public void setStatus(String status) {
        this.status = status;
    }

    public String getBody_bytes_sent() {
        return body_bytes_sent;
    }

    public void setBody_bytes_sent(String body_bytes_sent) {
        this.body_bytes_sent = body_bytes_sent;
    }

    public String getHttp_referer() {
        return http_referer;
    }

    public void setHttp_referer(String http_referer) {
        this.http_referer = http_referer;
    }

    public String getHttp_user_agent() {
        return http_user_agent;
    }

    public void setHttp_user_agent(String http_user_agent) {
        this.http_user_agent = http_user_agent;
    }

    public boolean isValid() {
        return valid;
    }

    public void setValid(boolean valid) {
        this.valid = valid;
    }

    // Override toString to join the fields into one output record
    @Override
    public String toString() {
        StringBuilder sb = new StringBuilder();
        sb.append(this.valid);
        // "\001" is the control character U+0001 (Ctrl-A). It rarely occurs in log text
        // and is Hive's default field delimiter, so it is a safe separator.
        sb.append("\001").append(this.remote_addr);
        sb.append("\001").append(this.remote_user);
        sb.append("\001").append(this.time_local);
        sb.append("\001").append(this.request);
        sb.append("\001").append(this.status);
        sb.append("\001").append(this.body_bytes_sent);
        sb.append("\001").append(this.http_referer);
        sb.append("\001").append(this.http_user_agent);
        return sb.toString();
    }
}
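The bean joins its fields with "\001" instead of a space because the user-agent field can itself contain spaces. The sketch below shows why this makes the output easy to split again downstream. The class name `CtrlADelimiterDemo` and its helper methods are hypothetical and exist only for this illustration.

```java
// Minimal sketch: join fields with "\001" and split them back.
// CtrlADelimiterDemo is a hypothetical name used only for illustration.
final class CtrlADelimiterDemo {

    // Same separator as LogBean.toString(): the control character U+0001.
    static final String SEP = "\001";

    static String join(String... fields) {
        return String.join(SEP, fields);
    }

    static String[] split(String record) {
        // A limit of -1 keeps trailing empty fields instead of dropping them.
        return record.split(SEP, -1);
    }
}
```

Splitting `join("true", "194.237.142.21", "Mozilla/4.0 (compatible;)")` returns three fields, with the user agent intact even though it contains a space.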

Writing CleanMapper2

package LogClean;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class CleanMapper2 extends Mapper<LongWritable, Text, Text, NullWritable> {

    // IDE shortcut: Ctrl+Alt+Space triggers code completion
    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Read one line of input
        String logs = value.toString();
        // Parse the line and check whether it is valid
        LogBean logBean = pressLog(logs);
        if (!logBean.isValid()) {
            return;
        }
        context.write(new Text(logBean.toString()), NullWritable.get());
    }

    public LogBean pressLog(String logs) {
        LogBean logBean = new LogBean();
        // Split the line on spaces
        String[] fields = logs.split(" ");
        // Lines with 11 or fewer fields are invalid
        if (fields.length > 11) {
            // Copy the fields into the LogBean
            logBean.setRemote_addr(fields[0]);
            logBean.setRemote_user(fields[1]);
            logBean.setTime_local(fields[3] + fields[4]);
            logBean.setRequest(fields[7]);
            logBean.setStatus(fields[8]);
            logBean.setBody_bytes_sent(fields[9]);
            logBean.setHttp_referer(fields[10]);
            // User agent: it may contain a space, so join the next token when present
            if (fields.length > 12) {
                logBean.setHttp_user_agent(fields[11] + " " + fields[12]);
            } else {
                logBean.setHttp_user_agent(fields[11]);
            }
            // Records with a status code of 400 or higher are invalid.
            // A status that is not a number is also treated as invalid
            // so that one malformed line does not fail the whole task.
            try {
                if (Integer.parseInt(logBean.getStatus()) >= 400) {
                    logBean.setValid(false);
                }
            } catch (NumberFormatException e) {
                logBean.setValid(false);
            }
        } else {
            logBean.setValid(false);
        }
        return logBean;
    }
}

Writing CleanDriver2

package LogClean;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class CleanDriver2 {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        // Configure the job
        Job job = Job.getInstance(new Configuration());
        job.setJarByClass(CleanDriver2.class);

        // Set the mapper class and the output key/value types
        job.setMapperClass(CleanMapper2.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);

        // The number of reduce tasks defaults to 1.
        // This job needs no reducer because cleaning is finished in the map phase,
        // so set it to 0 to run a map-only job.
        job.setNumReduceTasks(0);

        // Input path
        FileInputFormat.setInputPaths(job, new Path("D:\\BigdataTest\\LogCleaning\\web.txt"));
        // Output path (must not exist before the job runs)
        FileOutputFormat.setOutputPath(job, new Path("D:\\BigdataTest\\LogCleaning\\result2"));

        // Submit the job and exit with a status that reflects success or failure
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}

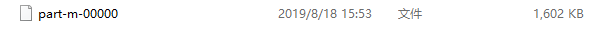

Output

Before cleaning: 2970 KB

After cleaning: 1602 KB
