1. Download Spark: http://spark.apache.org/downloads.html

2. Extract the archive into ~/app/: tar -zxvf spark-2.2.0-bin-2.6.0-cdh5.7.0.tgz -C ~/app/

3. Configure the environment variables by adding the following lines to ~/.bash_profile (for example with vi ~/.bash_profile):

export SPARK_HOME=/home/hadoop/app/spark-2.2.0-bin-2.6.0-cdh5.7.0

export PATH=$SPARK_HOME/bin:$PATH
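If you script this step instead of editing the file by hand, it helps to make it idempotent so that rerunning it does not add duplicate lines. The following is a minimal sketch that assumes bash. The function name `add_spark_env` is hypothetical. The default SPARK_HOME is the path used in this article and should be adjusted to your own install.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append the two export lines from step 3 to a profile
# file, but only if SPARK_HOME is not already exported there.
add_spark_env() {
  local profile=${1:-$HOME/.bash_profile}
  # Assumed default: the install path used in this article
  local spark_home=${2:-/home/hadoop/app/spark-2.2.0-bin-2.6.0-cdh5.7.0}

  # Do nothing if the file already exports SPARK_HOME
  if grep -q '^export SPARK_HOME=' "$profile" 2>/dev/null; then
    return 0
  fi

  {
    echo "export SPARK_HOME=$spark_home"
    # Single quotes keep $SPARK_HOME and $PATH unexpanded in the file,
    # so they are resolved each time the profile is sourced
    echo 'export PATH=$SPARK_HOME/bin:$PATH'
  } >> "$profile"
}
```

For example, `add_spark_env ~/.bash_profile /home/hadoop/app/spark-2.2.0-bin-2.6.0-cdh5.7.0` writes the same two lines shown above, and a second call leaves the file unchanged.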

4. Reload the environment variables:

source ~/.bash_profile
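After sourcing the profile, a quick sanity check confirms that the variables took effect before you launch anything. This is a minimal bash sketch. The function name `check_spark_env` is hypothetical. It only verifies that SPARK_HOME is non-empty and that `spark-shell` resolves on PATH. It does not start Spark.

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify that SPARK_HOME is set and spark-shell is on PATH.
check_spark_env() {
  if [ -z "${SPARK_HOME:-}" ]; then
    echo "SPARK_HOME is not set" >&2
    return 1
  fi
  # command -v prints the resolved path, or fails if nothing is found
  if ! command -v spark-shell >/dev/null 2>&1; then
    echo "spark-shell not found on PATH" >&2
    return 1
  fi
  echo "SPARK_HOME=$SPARK_HOME"
  echo "spark-shell -> $(command -v spark-shell)"
}
```

With the profile from step 3, the second line of output should point into $SPARK_HOME/bin.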

5. Start Spark in Local mode

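Local mode is the simplest way to run Spark. It runs on a single node using multiple threads, needs no cluster deployment, and works out of the box, which makes it a good fit for everyday development and testing.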

Start spark-shell:

spark-shell --master local[2]

- local: run with a single worker thread;

- local[k]: run with k worker threads;

- local[*]: run with as many worker threads as the machine has logical CPU cores.
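The three master values above map to thread counts in a simple way. The bash sketch below spells out that mapping. The function name `local_threads` is hypothetical and is not part of Spark. Using `nproc` for `local[*]` is an approximation: Spark itself uses the number of cores visible to the JVM, while `nproc` (GNU coreutils) reports the processing units available to the current process. It is usually the same number, but this is an assumption.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print how many worker threads a Local-mode --master
# value would use, following the rules listed above.
local_threads() {
  local master=$1
  # Matches local[k] with k a positive integer, capturing k
  local re='^local\[([1-9][0-9]*)\]$'

  if [[ $master == local ]]; then
    echo 1
  elif [[ $master == 'local[*]' ]]; then
    # Approximation of "number of logical cores"
    nproc
  elif [[ $master =~ $re ]]; then
    echo "${BASH_REMATCH[1]}"
  else
    echo "unsupported master: $master" >&2
    return 1
  fi
}
```

For instance, `local_threads 'local[2]'` prints 2, matching the `spark-shell --master local[2]` command used in this article. Quote the argument so the shell does not treat the brackets as a glob.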

```
[hadoop@hadoop000 bin]$ spark-shell --master local[2]
Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties
Setting default log level to "WARN".
To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
19/11/20 14:47:43 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
19/11/20 14:47:47 WARN ObjectStore: Failed to get database global_temp, returning NoSuchObjectException
```

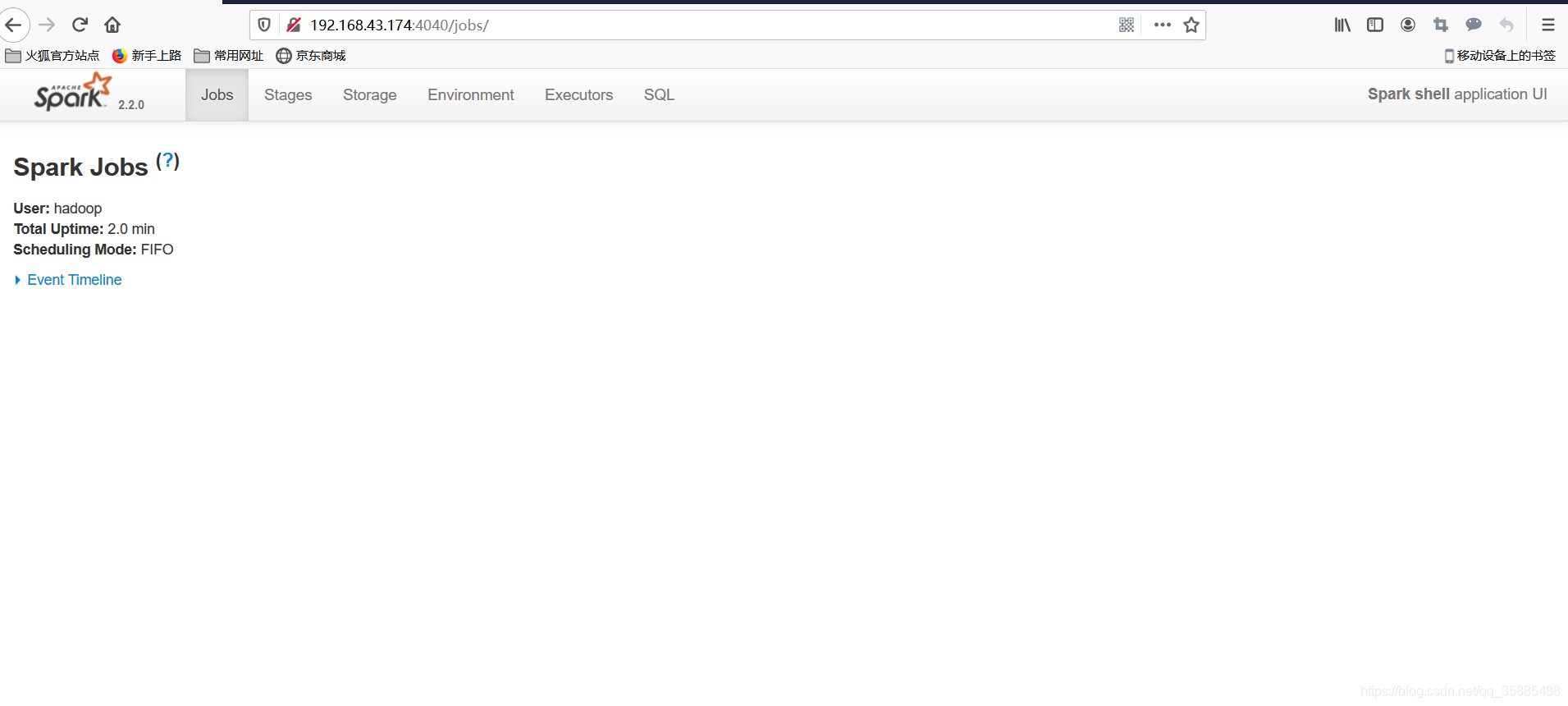

```
Spark context Web UI available at http://192.168.43.174:4040
Spark context available as 'sc' (master = local[2], app id = local-1574232464443).
Spark session available as 'spark'.
Welcome to
      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /___/ .__/\_,_/_/ /_/\_\   version 2.2.0
      /_/

Using Scala version 2.11.8 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_144)
Type in expressions to have them evaluated.
Type :help for more information.

scala>
```

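We can also open the Spark Web UI at http://192.168.43.174:4040, the address printed in the startup log. On your machine, use your own host's IP address.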
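On a headless server without a browser, you can check from the command line that the UI is responding. This is a minimal bash sketch that assumes `curl` is installed. The function name `check_spark_ui` is hypothetical, and the default URL is the one from this article's log. The `-L` flag follows any redirect from the root page. The check passes only if the final response is HTTP 200.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the Spark Web UI answers with HTTP 200.
check_spark_ui() {
  # Assumed default: the address printed in this article's startup log
  local url=${1:-http://192.168.43.174:4040}
  local code
  # -s silences progress, -o discards the body, -w prints only the status code;
  # --max-time keeps the check from hanging if the host is unreachable
  code=$(curl -s -L -o /dev/null -w '%{http_code}' --max-time 5 "$url") || return 1
  [ "$code" = "200" ]
}
```

Run it while spark-shell is still open, for example `check_spark_ui http://localhost:4040 && echo "Web UI is up"`. The UI stops when the shell exits.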

At this point, the Spark environment has been set up successfully.
