Logstash

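Logstash is an open-source, server-side data processing pipeline. It ingests data from multiple sources at the same time, transforms it, and then sends it to the storage backend of your choice.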

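Logstash dynamically ingests, transforms, and ships data regardless of its format or complexity. You can use Grok to derive structure from unstructured data, decode geographic coordinates from IP addresses, anonymize or exclude sensitive fields, and generally simplify processing.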
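Grok patterns are essentially named regular expressions. The sketch below is only an illustration built on plain java.util.regex. It is not Logstash's Grok engine and does not use its pattern library. It extracts named fields from an example access-log line in the spirit of the Grok pattern `%{IP:client} %{WORD:method} %{URIPATHPARAM:request} %{NUMBER:bytes} %{NUMBER:duration}`. The simplified regexes standing in for IP, WORD, URIPATHPARAM and NUMBER, and the class name GrokLikeParser, are assumptions made for this example.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class GrokLikeParser {

    // Simplified stand-ins for the Grok patterns IP, WORD, URIPATHPARAM and NUMBER.
    // The real Grok definitions are far more complete; these are illustrative only.
    private static final Pattern ACCESS_LINE = Pattern.compile(
            "(?<client>\\d{1,3}(?:\\.\\d{1,3}){3}) "
          + "(?<method>\\w+) "
          + "(?<request>\\S+) "
          + "(?<bytes>\\d+) "
          + "(?<duration>\\d+(?:\\.\\d+)?)");

    private static final String[] FIELDS = {"client", "method", "request", "bytes", "duration"};

    /** Returns the named fields in pattern order, or an empty map if the whole line does not match. */
    public static Map<String, String> parse(String line) {
        Map<String, String> fields = new LinkedHashMap<>();
        Matcher m = ACCESS_LINE.matcher(line);
        if (m.matches()) {
            for (String name : FIELDS) {
                fields.put(name, m.group(name));
            }
        }
        return fields;
    }

    public static void main(String[] args) {
        System.out.println(parse("55.3.244.1 GET /index.html 15824 0.043"));
    }
}
```

Running main prints `{client=55.3.244.1, method=GET, request=/index.html, bytes=15824, duration=0.043}`. In Logstash, a grok filter produces the same kind of result, with each named capture becoming a field on the event.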

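Data is often scattered or siloed across many systems and formats. Logstash supports a wide range of inputs and can capture events from many common sources at the same time. It continuously streams data in from your logs, metrics, web applications, data stores, and various AWS services.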

Download the Logstash image

Search for the image

[root@localhost elk]# docker search logstash
NAME                                                          DESCRIPTION                                     STARS   OFFICIAL   AUTOMATED
logstash                                                      Logstash is a tool for managing events and l…   2141    [OK]
opensearchproject/logstash-oss-with-opensearch-output-plugin  The Official Docker Image of Logstash with O…   17
grafana/logstash-output-loki                                  Logstash plugin to send logs to Loki            3

Pull the image

[root@localhost elk]# docker pull logstash:7.17.7
7.17.7: Pulling from library/logstash
fb0b3276a519: Already exists
4a9a59914a22: Pull complete
5b31ddf2ac4e: Pull complete
162661d00d08: Pull complete
706a1bf2d5e3: Pull complete
741874f127b9: Pull complete
d03492354dd2: Pull complete
a5245bb90f80: Pull complete
05103a3b7940: Pull complete
815ba6161ff7: Pull complete
7777f80b5df4: Pull complete
Digest: sha256:93030161613312c65d84fb2ace25654badbb935604a545df91d2e93e28511bca
Status: Downloaded newer image for logstash:7.17.7
docker.io/library/logstash:7.17.7

Create the Logstash container

First, start a temporary Logstash container so you can copy its default configuration files out of it.

docker run --name logstash -d logstash:7.17.7

Running docker ps shows the container is up:

CONTAINER ID   IMAGE             COMMAND                  CREATED         STATUS         PORTS                NAMES
511e983027e7   logstash:7.17.7   "/usr/local/bin/dock…"   4 seconds ago   Up 4 seconds   5044/tcp, 9600/tcp   logstash

Configuration files

Mount directories

Copy the config, data and pipeline directories from the container to /usr/local/software/elk/logstash on the host. Run the commands from inside that directory:

[root@localhost logstash]# docker cp logstash:/usr/share/logstash/config/ ./
Successfully copied 11.8kB to /usr/local/software/elk/logstash/./
[root@localhost logstash]# docker cp logstash:/usr/share/logstash/data/ ./
Successfully copied 4.1kB to /usr/local/software/elk/logstash/./
[root@localhost logstash]# docker cp logstash:/usr/share/logstash/pipeline/ ./
Successfully copied 2.56kB to /usr/local/software/elk/logstash/./

Create a directory for the log files:

mkdir logs

logstash.yml

path.logs: /usr/share/logstash/logs
config.test_and_exit: false
config.reload.automatic: false
http.host: "0.0.0.0"
xpack.monitoring.elasticsearch.hosts: [ "http://192.168.198.128:9200" ]

pipelines.yml

# This file is where you define your pipelines. You can define multiple.
# For more information on multiple pipelines, see the documentation:
# https://www.elastic.co/guide/en/logstash/current/multiple-pipelines.html
- pipeline.id: main
  path.config: "/usr/share/logstash/pipeline/logstash.conf"

logstash.conf

input {
  tcp {
    mode => "server"
    host => "0.0.0.0"
    port => 5044
    codec => json_lines
  }
}
filter {
}
output {
  elasticsearch {
    hosts => ["192.168.198.128:9200"]   # Elasticsearch IP address
    index => "elk"                      # index name
  }
  stdout { codec => rubydebug }
}
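With `codec => json_lines`, the tcp input treats every newline-terminated line it receives as one JSON event. The following JDK-only sketch shows that framing from the client side. The class JsonLinesSender and its methods are hypothetical, and only string fields are supported. The hand-written JSON escaping is a simplification; a real application would use a JSON library or an appender such as logstash-logback-encoder's LogstashTcpSocketAppender.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.net.Socket;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

public class JsonLinesSender {

    /** Escapes a string for use inside a JSON string literal. */
    public static String escape(String s) {
        StringBuilder sb = new StringBuilder();
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"':  sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\n': sb.append("\\n"); break;
                case '\r': sb.append("\\r"); break;
                case '\t': sb.append("\\t"); break;
                default:
                    if (c < 0x20) {
                        // Other control characters must be written as \\uXXXX escapes.
                        sb.append(String.format("\\u%04x", (int) c));
                    } else {
                        sb.append(c);
                    }
            }
        }
        return sb.toString();
    }

    /** Serializes string fields into one JSON object followed by '\n' (the json_lines framing). */
    public static String toJsonLine(Map<String, String> fields) {
        StringBuilder sb = new StringBuilder("{");
        boolean first = true;
        for (Map.Entry<String, String> e : fields.entrySet()) {
            if (!first) {
                sb.append(',');
            }
            first = false;
            sb.append('"').append(escape(e.getKey())).append("\":\"")
              .append(escape(e.getValue())).append('"');
        }
        return sb.append("}\n").toString();
    }

    /** Opens a TCP connection, writes a single event as UTF-8 and closes the connection. */
    public static void send(String host, int port, Map<String, String> fields) throws IOException {
        try (Socket socket = new Socket(host, port);
             OutputStream out = socket.getOutputStream()) {
            out.write(toJsonLine(fields).getBytes(StandardCharsets.UTF_8));
            out.flush();
        }
    }

    public static void main(String[] args) {
        Map<String, String> event = new LinkedHashMap<>();
        event.put("message", "hello from a plain socket");
        event.put("appname", "App");
        // Only prints the framed line; call send(...) to actually deliver it.
        System.out.print(toJsonLine(event));
    }
}
```

To deliver an event, call `JsonLinesSender.send("192.168.198.128", 5044, event)` against your Logstash host. Because of the `stdout { codec => rubydebug }` output, the event should also appear in the container's console output (`docker logs logstash`).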

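Create and run the container

A container named logstash already exists from the copy step, so remove it first. The --network and --ip options assume a user-defined Docker network named wn_docker_net already exists with a subnet that contains 172.18.12.54. A fixed --ip only works on user-defined networks.

docker rm -f logstash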

docker run -it \
  --name logstash \
  --privileged=true \
  -p 5044:5044 \
  -p 9600:9600 \
  --network wn_docker_net \
  --ip 172.18.12.54 \
  -v /etc/localtime:/etc/localtime \
  -v /usr/local/software/elk/logstash/config:/usr/share/logstash/config \
  -v /usr/local/software/elk/logstash/data:/usr/share/logstash/data \
  -v /usr/local/software/elk/logstash/logs:/usr/share/logstash/logs \
  -v /usr/local/software/elk/logstash/pipeline:/usr/share/logstash/pipeline \
  -d logstash:7.17.7

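Sometimes the bind mounts do not work. In that case, first remove any existing container named logstash with docker rm -f logstash. Then create the container without the mounts, copy the prepared files into its config and pipeline directories, and restart it:

docker run -it \
  --name logstash \
  --privileged=true \
  -p 5044:5044 \
  -p 9600:9600 \
  --network wn_docker_net \
  --ip 172.18.12.54 \
  -v /etc/localtime:/etc/localtime \
  -d logstash:7.17.7

docker cp ./config/. logstash:/usr/share/logstash/config/
docker cp ./pipeline/. logstash:/usr/share/logstash/pipeline/
docker restart logstash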
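After the container starts, you can confirm that Logstash is up by querying its monitoring API on port 9600. The `-p 9600:9600` mapping and `http.host: "0.0.0.0"` make that port reachable from outside the container. This is a minimal JDK-only sketch that treats an HTTP 200 from `GET /` as "up". The class name LogstashHealthCheck and the 192.168.198.128 host (this post's Docker host) are assumptions to adapt. Logstash takes a while to start, so a false result right after `docker run` is not necessarily an error.

```java
import java.io.IOException;
import java.net.HttpURLConnection;
import java.net.URL;

public class LogstashHealthCheck {

    /** Returns true if GET baseUrl answers HTTP 200 within the timeout, false otherwise. */
    public static boolean isUp(String baseUrl, int timeoutMillis) {
        HttpURLConnection conn = null;
        try {
            conn = (HttpURLConnection) new URL(baseUrl).openConnection();
            conn.setRequestMethod("GET");
            conn.setConnectTimeout(timeoutMillis);
            conn.setReadTimeout(timeoutMillis);
            return conn.getResponseCode() == 200;
        } catch (IOException e) {
            // Malformed URL, connection refused, timeout, unknown host, ...
            return false;
        } finally {
            if (conn != null) {
                conn.disconnect();
            }
        }
    }

    public static void main(String[] args) {
        // The node info API is served at the root of port 9600.
        String url = args.length > 0 ? args[0] : "http://192.168.198.128:9600/";
        System.out.println(url + " up? " + isUp(url, 2000));
    }
}
```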

Integrating Spring Boot with Logstash

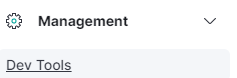

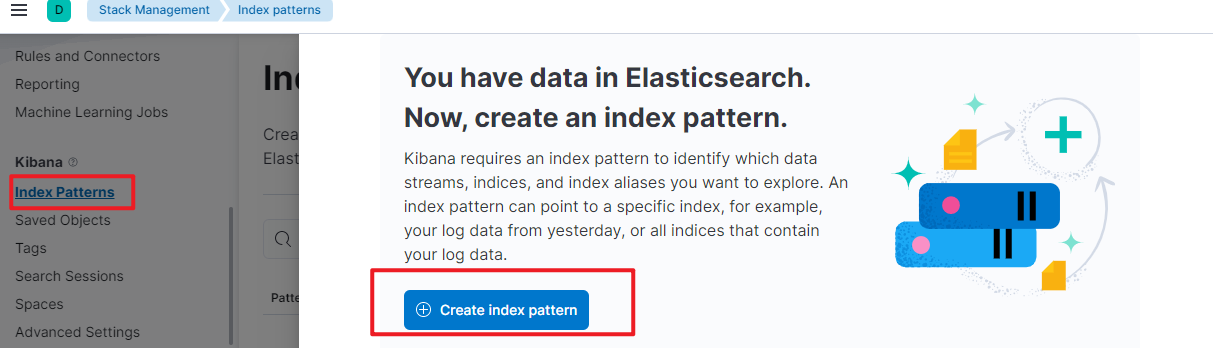

Create the index pattern in Kibana

Create the index pattern object

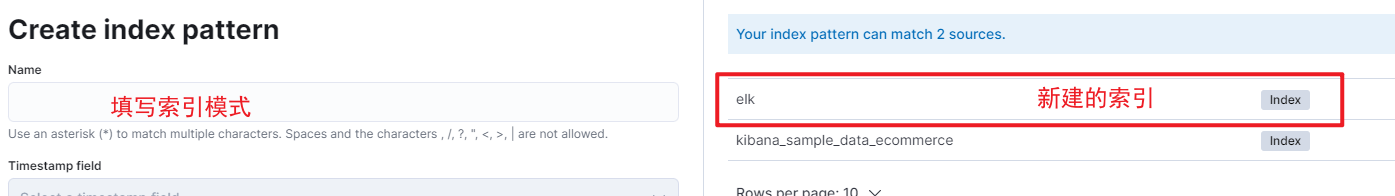

In Kibana, create an index pattern whose name matches the index name configured in logstash.conf:

# Excerpt from logstash.conf
elasticsearch {
  hosts => ["192.168.198.128:9200"]   # Elasticsearch IP address
  index => "elk"                      # index name
}
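The elk index only appears in Elasticsearch once Logstash has written the first event to it. Before creating the index pattern, you can check for the index with Elasticsearch's index-exists request (`HEAD /<index>`). It returns 200 if the index exists and 404 if it does not. A minimal sketch, assuming the unsecured http://192.168.198.128:9200 endpoint used in this post; the class and method names are hypothetical.

```java
import java.io.IOException;
import java.net.HttpURLConnection;
import java.net.URL;

public class IndexExistsCheck {

    /**
     * Sends HEAD {esBaseUrl}/{index}. Elasticsearch answers 200 if the index exists
     * and 404 if it does not. Returns -1 if the request itself fails.
     * The index name is assumed to need no URL encoding (e.g. "elk").
     */
    public static int headIndex(String esBaseUrl, String index, int timeoutMillis) {
        HttpURLConnection conn = null;
        try {
            String base = esBaseUrl.endsWith("/") ? esBaseUrl : esBaseUrl + "/";
            conn = (HttpURLConnection) new URL(base + index).openConnection();
            conn.setRequestMethod("HEAD");
            conn.setConnectTimeout(timeoutMillis);
            conn.setReadTimeout(timeoutMillis);
            return conn.getResponseCode();
        } catch (IOException e) {
            return -1;
        } finally {
            if (conn != null) {
                conn.disconnect();
            }
        }
    }

    public static void main(String[] args) {
        int status = headIndex("http://192.168.198.128:9200", "elk", 2000);
        System.out.println(status == 200 ? "index 'elk' exists" : "status: " + status);
    }
}
```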


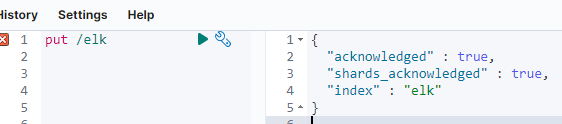

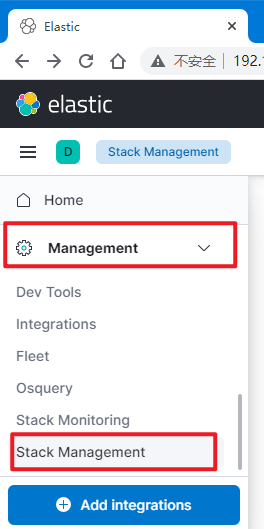

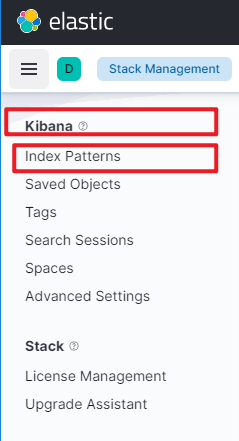

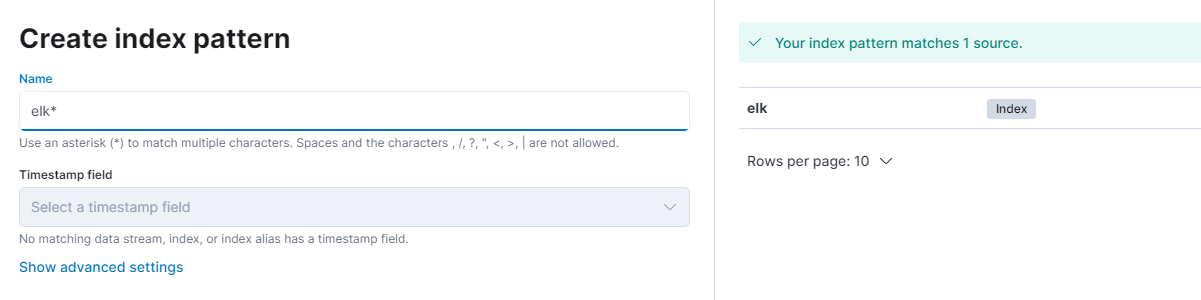

Create an index pattern

In the menu, choose Management → Stack Management and click Add integrations, then open Kibana → Index Patterns.

Spring Boot

Add the dependencies (pom.xml)

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <groupId>com.wnhz.elk</groupId>
    <artifactId>springboot-elk</artifactId>
    <version>1.0-SNAPSHOT</version>
    <properties>
        <maven.compiler.source>8</maven.compiler.source>
        <maven.compiler.target>8</maven.compiler.target>
    </properties>
    <parent>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-parent</artifactId>
        <version>2.5.14</version>
    </parent>
    <dependencies>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-web</artifactId>
        </dependency>
        <dependency>
            <groupId>org.projectlombok</groupId>
            <artifactId>lombok</artifactId>
        </dependency>
        <dependency>
            <groupId>net.logstash.logback</groupId>
            <artifactId>logstash-logback-encoder</artifactId>
            <version>7.3</version>
        </dependency>
    </dependencies>
</project>

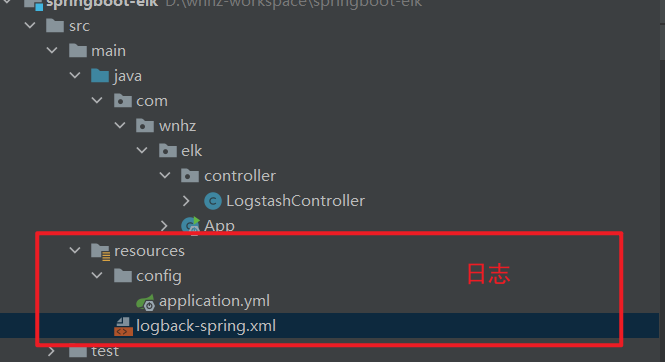

Edit the logback-spring.xml file

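<?xml version="1.0" encoding="UTF-8"?>
<!-- Log levels from low to high: TRACE < DEBUG < INFO < WARN < ERROR (logback has no FATAL level). If the level is set to WARN, messages below WARN are not output. -->
<!-- scan: when set to true, the configuration file is reloaded whenever it changes. The default is false. -->
<!-- scanPeriod: how often to check the configuration file for changes. If no time unit is given, the unit is milliseconds.
     Only takes effect when scan is true. The default interval is 1 minute. -->
<!-- debug: when set to true, logback prints its internal status messages so you can watch what it is doing. The default is false. -->
<configuration scan="true" scanPeriod="10 seconds">
    <!-- 1. Console output -->
    <appender name="CONSOLE" class="ch.qos.logback.core.ConsoleAppender">
        <!-- This appender is for development. It only sets a minimum level: the console prints events at this level or higher. -->
        <filter class="ch.qos.logback.classic.filter.ThresholdFilter">
            <level>DEBUG</level>
        </filter>
        <encoder>
            <pattern>%d{yyyy-MM-dd HH:mm:ss.SSS} -%5level ---[%15.15thread] %-40.40logger{39} : %msg%n</pattern>
            <!-- Character set -->
            <charset>UTF-8</charset>
        </encoder>
    </appender>
    <!-- 2. File output -->
    <appender name="FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <!-- A RollingFileAppender does not start without a rolling policy. The file name,
             file name pattern and retention below are example values; adjust them to your project. -->
        <file>logs/springboot-elk.log</file>
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <fileNamePattern>logs/springboot-elk.%d{yyyy-MM-dd}.log</fileNamePattern>
            <maxHistory>7</maxHistory>
        </rollingPolicy>
        <append>true</append>
        <!-- Log line format -->
        <encoder>
            <pattern>%d{yyyy-MM-dd HH:mm:ss.SSS} -%5level ---[%15.15thread] %-40.40logger{39} : %msg%n</pattern>
            <charset>UTF-8</charset> <!-- Character set -->
        </encoder>
    </appender>
    <!-- 3. Send logs to Logstash -->
    <appender name="LOGSTASH" class="net.logstash.logback.appender.LogstashTcpSocketAppender">
        <!-- Host and port of the Logstash tcp input (published with -p 5044:5044) -->
        <destination>192.168.198.128:5044</destination>
        <encoder charset="UTF-8" class="net.logstash.logback.encoder.LogstashEncoder">
            <!-- Custom timestamp format; the default is an ISO-8601 timestamp with a time-zone offset -->
            <timestampPattern>yyyy-MM-dd HH:mm:ss</timestampPattern>
            <customFields>{"appname":"App"}</customFields>
        </encoder>
    </appender>
    <root level="DEBUG">
        <appender-ref ref="CONSOLE"/>
        <appender-ref ref="FILE"/>
        <appender-ref ref="LOGSTASH"/>
    </root>
</configuration>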
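The level comment at the top of this file and the CONSOLE appender's ThresholdFilter both rely on level ordering. As a plain-Java illustration, not logback's own classes, the sketch below models the five logback levels as an enum and applies the same "at or above the threshold" rule. In real logback, ThresholdFilter returns DENY for events below the threshold and NEUTRAL for the rest. The logger's effective level (`root level="DEBUG"` here) is checked before any appender filter runs. The class name ThresholdDemo is hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

public class ThresholdDemo {

    /** Logback's levels in ascending order (there is no FATAL in logback). */
    public enum Level { TRACE, DEBUG, INFO, WARN, ERROR }

    /** The ThresholdFilter rule: an event passes if its level is at or above the threshold. */
    public static boolean passes(Level event, Level threshold) {
        return event.compareTo(threshold) >= 0;
    }

    /** Lists the levels that would get through a filter with the given threshold. */
    public static List<Level> visibleAt(Level threshold) {
        List<Level> out = new ArrayList<>();
        for (Level level : Level.values()) {
            if (passes(level, threshold)) {
                out.add(level);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println("DEBUG threshold: " + visibleAt(Level.DEBUG));
        System.out.println("WARN threshold: " + visibleAt(Level.WARN));
    }
}
```

main prints `[DEBUG, INFO, WARN, ERROR]` for the DEBUG threshold and `[WARN, ERROR]` for WARN.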

Test code

package com.wnhz.elk.controller;
// HttpResp is a project-specific response wrapper that is not shown in this post.
import com.wnhz.elk.result.HttpResp;
// Swagger 2 annotations: these need a Swagger dependency (e.g. springfox), which is not in the pom above.
import io.swagger.annotations.Api;
import io.swagger.annotations.ApiOperation;
import lombok.extern.slf4j.Slf4j;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;
import javax.servlet.http.HttpServletRequest;
import java.util.Date;
@Api(tags = "ELK test module")
@RestController
@RequestMapping("/api/elk")
@Slf4j
public class ElkController {
    @ApiOperation(value = "elkHello", notes = "Test the ELK result")
    @GetMapping("/elkHello")
    public HttpResp<String> elkHello(HttpServletRequest request) {
        // The root level in logback-spring.xml is DEBUG, so these events reach CONSOLE, FILE and LOGSTASH.
        log.debug("Test log, request: {}, current time: {}", request, new Date());
        log.debug("Test log, test line {}, current time: {}", 2, new Date());
        return HttpResp.success("ELK test-hello");
    }
}
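The log.debug calls use SLF4J's parameterized messages. Each `{}` is replaced by the next argument, and the message is only built if DEBUG is enabled. The re-implementation below is for illustration only. It is not SLF4J's MessageFormatter, and it ignores escaping (`\{}`), array arguments and SLF4J's special handling of a trailing Throwable. The class name PlaceholderDemo is hypothetical.

```java
public class PlaceholderDemo {

    /**
     * Simplified "{}" substitution: each "{}" is replaced by the next argument's
     * String.valueOf(); placeholders without an argument stay as "{}", and
     * surplus arguments are ignored.
     */
    public static String format(String pattern, Object... args) {
        StringBuilder sb = new StringBuilder();
        int argIndex = 0;
        int from = 0;
        int at;
        while ((at = pattern.indexOf("{}", from)) != -1 && argIndex < args.length) {
            sb.append(pattern, from, at).append(String.valueOf(args[argIndex++]));
            from = at + 2;
        }
        sb.append(pattern.substring(from));
        return sb.toString();
    }

    public static void main(String[] args) {
        System.out.println(format("Test log, test line {}, current time: {}", 2, "2023-01-01 12:00:00"));
    }
}
```

main prints `Test log, test line 2, current time: 2023-01-01 12:00:00`. With the LogstashEncoder, this fully formatted text is what ends up in the event's message field in Elasticsearch.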

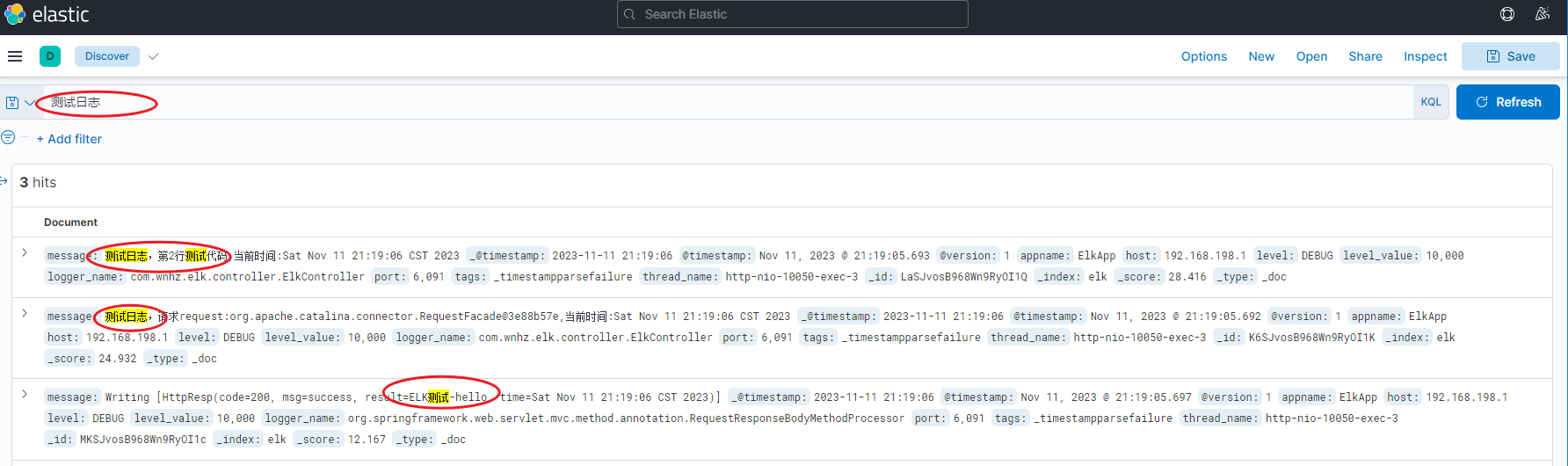

View the index in Kibana
