<properties>

<maven.compiler.source>1.7</maven.compiler.source>

<maven.compiler.target>1.7</maven.compiler.target>

<encoding>UTF-8</encoding>

<scala.version>2.10.6</scala.version>

<spark.version>1.5.2</spark.version>

<hadoop.version>2.6.4</hadoop.version>

</properties>

<dependencies>

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.10</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>${hadoop.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.10</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming_2.10</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming-kafka_2.10</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming-flume_2.10</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.38</version>

</dependency>

</dependencies>

import org.apache.spark.{HashPartitioner, SparkConf}

import org.apache.spark.storage.StorageLevel

import org.apache.spark.streaming.kafka.KafkaUtils

import org.apache.spark.streaming.{Seconds, StreamingContext}

/**

* Integrating Spark Streaming with Kafka

*/

object KafkaWordCount {

def main(args: Array[String]): Unit = {

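/**

* The four program arguments (zkQuorum, group, topics, numThreads) are supplied

* through the IDE's Edit Configurations dialog; any other argument count

* makes this pattern match fail with a MatchError

*/

val Array(zkQuorum, group, topics, numThreads) = args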

val conf = new SparkConf().setAppName("KafkaWordCount").setMaster("local[2]")

/**

* The batch interval is 5 seconds

*/

val streamContext = new StreamingContext(conf,Seconds(5))

streamContext.checkpoint("E:\\kafkaWordCount")

val topicMap = topics.split(",").map((_,numThreads.toInt)).toMap

/*def createStream(ssc : org.apache.spark.streaming.StreamingContext,

zkQuorum : scala.Predef.String,

groupId : scala.Predef.String,

topics : scala.Predef.Map[scala.Predef.String, scala.Int],

storageLevel : org.apache.spark.storage.StorageLevel = {

/* compiled code */ }) : org.apache.spark.streaming.dstream.ReceiverInputDStream[scala.Tuple2[scala.Predef.String, scala.Predef.String]] = { /* compiled code */ }*/

val data = KafkaUtils.createStream(streamContext,

zkQuorum,

group,

topicMap,

StorageLevel.MEMORY_AND_DISK)

/**

* Process the data received from Kafka

*/

val words = data.map(_._2).flatMap(_.split(" "))

/* def updateStateByKey[S](updateFunc : scala.Function1[scala.Iterator[scala.Tuple3[K, scala.Seq[V],

scala.Option[S]]],

scala.Iterator[scala.Tuple2[K, S]]],

partitioner : org.apache.spark.Partitioner,

rememberPartitioner : scala.Boolean)

(implicit evidence$5 : scala.reflect.ClassTag[S]) : org.apache.spark.streaming.dstream.DStream[scala.Tuple2[K, S]] = { /* compiled code */ }*/

val wordCounts = words.map((_,1)).updateStateByKey(updateFunc,

new HashPartitioner(streamContext.sparkContext.defaultParallelism),

true)

wordCounts.print()

streamContext.start()

streamContext.awaitTermination()

}

// For each word x, add the counts of the current batch (y) to the running total (z)

val updateFunc = (iter: Iterator[(String, Seq[Int], Option[Int])]) => {

iter.map {

case (x, y, z) => (x, y.sum + z.getOrElse(0))

}

}

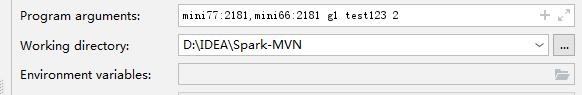

}2.在Run/Debug configurations中设置我们所需要的参数
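As a minimal sketch of what the program does with those arguments, the snippet below destructures four values the same way `main` does and builds the topic map. The values shown (`localhost:2181`, `wordcount-group`, `topicA,topicB`, `2`) and the object name `ArgsSketch` are hypothetical placeholders, not settings taken from this article; substitute the ones that match your own cluster.

```scala
object ArgsSketch {
  // Hypothetical values; replace them with the settings of your own cluster.
  val exampleArgs: Array[String] =
    Array("localhost:2181", "wordcount-group", "topicA,topicB", "2")

  // Same destructuring as in KafkaWordCount.main; it throws a MatchError
  // unless exactly four arguments are supplied.
  val Array(zkQuorum, group, topics, numThreads) = exampleArgs

  // Each comma-separated topic is mapped to the number of consumer threads.
  val topicMap: Map[String, Int] =
    topics.split(",").map((_, numThreads.toInt)).toMap

  def main(args: Array[String]): Unit = {
    println(s"zkQuorum=$zkQuorum group=$group topicMap=$topicMap")
  }
}
```

In the run configuration the four values go into the program arguments field separated by spaces, in the order zkQuorum, group, topics, numThreads.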

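3. Start the Kafka cluster

The Kafka commands you need are listed here: https://blog.csdn.net/qq_38704184/article/details/85135739

4. Start the producer (its topic must match the topic used in the code)

5. Start the consumer (its topic must match the topic used in the code)

6. Run the program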

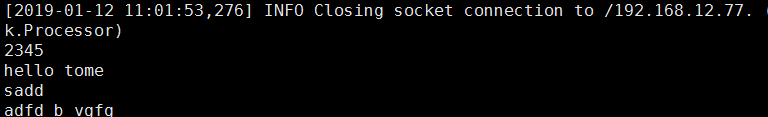

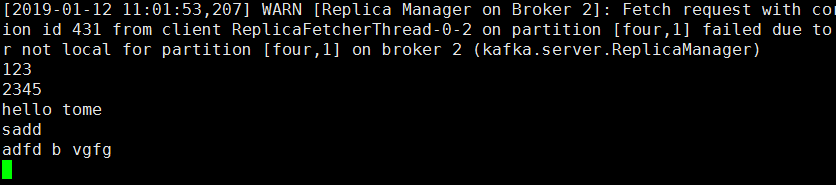

Spark Streaming processes the data successfully.
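To make the running totals easier to follow, here is a minimal sketch that applies the same update logic to plain Scala collections, without Spark or Kafka. `UpdateFuncSketch` and `runBatch` are hypothetical names introduced only for illustration. `runBatch` imitates one batch of `updateStateByKey` under the assumption that every key seen so far, with or without new values, is handed to the update function.

```scala
object UpdateFuncSketch {
  // Same shape as the function passed to updateStateByKey: for every key it
  // receives the values of the current batch and the previously stored total.
  val updateFunc = (iter: Iterator[(String, Seq[Int], Option[Int])]) =>
    iter.map { case (word, counts, previous) =>
      (word, counts.sum + previous.getOrElse(0))
    }

  // Hypothetical driver imitating one batch: split lines into words, collect
  // a 1 per occurrence of each word, then merge with the saved state.
  def runBatch(lines: Seq[String], state: Map[String, Int]): Map[String, Int] = {
    val ones: Map[String, Seq[Int]] =
      lines.flatMap(_.split(" "))
        .groupBy(identity)
        .map { case (word, occurrences) => (word, occurrences.map(_ => 1)) }
    // Keys that only exist in the old state get an empty value list.
    val keys = ones.keySet ++ state.keySet
    val input = keys.iterator.map { k =>
      (k, ones.getOrElse(k, Seq.empty[Int]), state.get(k))
    }
    updateFunc(input).toMap
  }

  def main(args: Array[String]): Unit = {
    val afterFirst = runBatch(Seq("hello spark", "hello kafka"), Map.empty)
    val afterSecond = runBatch(Seq("hello streaming"), afterFirst)
    println(afterFirst)
    println(afterSecond)
  }
}
```

The count for a word keeps growing across batches because the previous total is carried in as `Option[Int]`. This is also why the streaming job needs a checkpoint directory: Spark has to persist that state between batches.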
