Monitoring Kubernetes pod and container service status with Prometheus

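When Prometheus and Grafana are used to monitor Kubernetes, they are usually deployed inside the cluster itself. This makes monitoring convenient, because Prometheus can use the in-cluster certificates and metrics URLs directly, but it also adds to the cluster's resource overhead.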

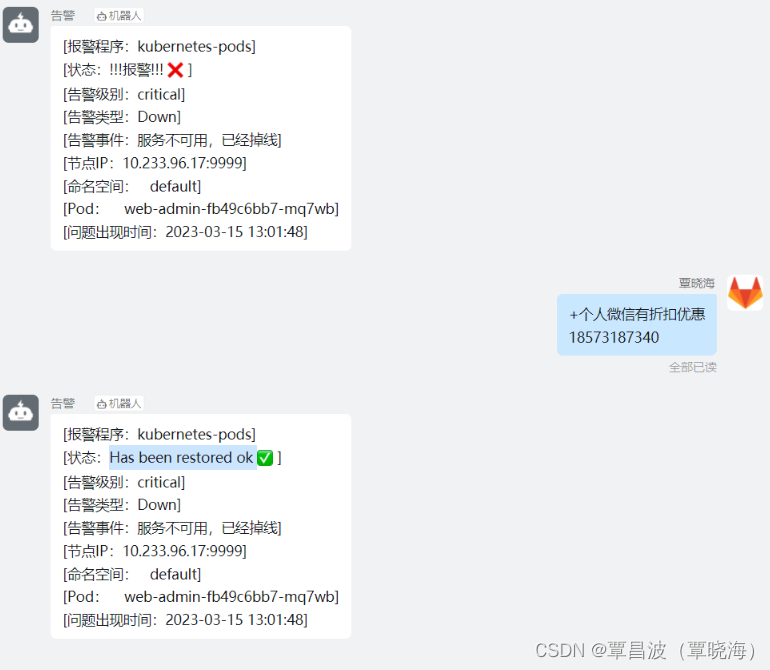

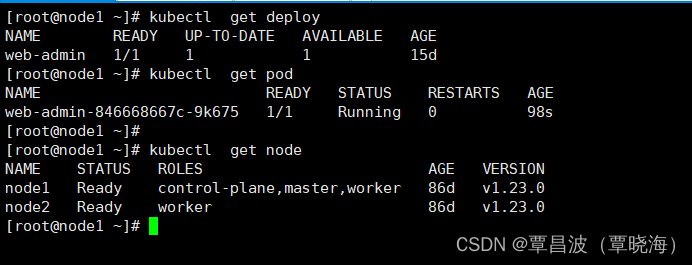

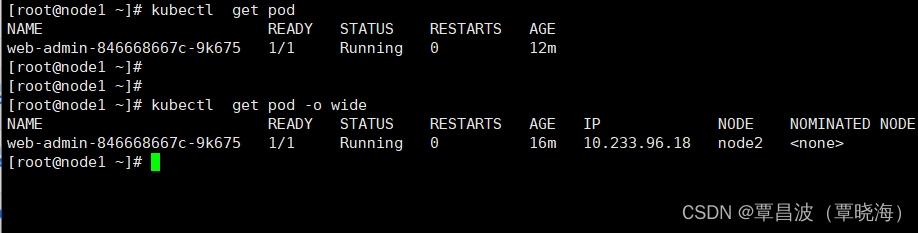

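**Requirement:** an alert must fire whenever any pod is restarted or deleted, and it must be both timely and accurate.

Earlier posts in this series also covered alerting, so feel free to revisit them.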

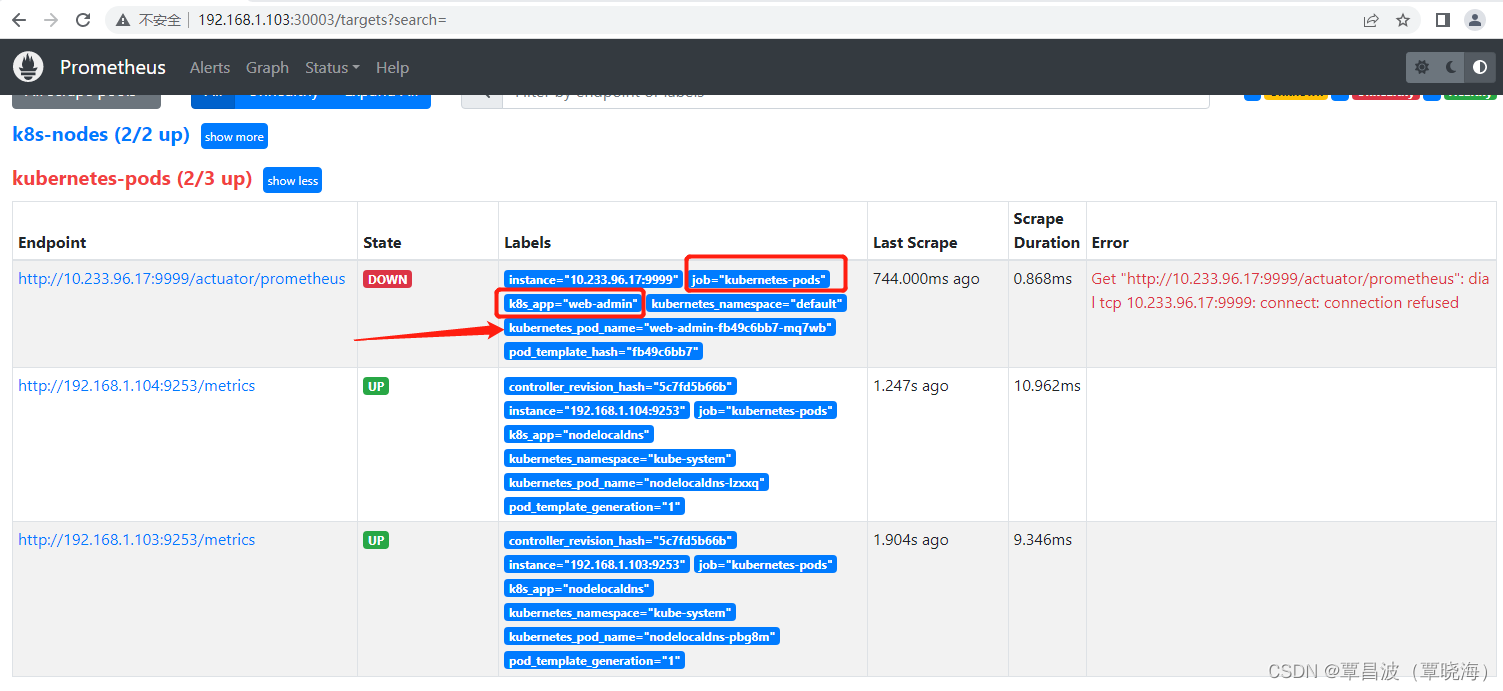

The result

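Configuring RBAC

Prometheus needs to read several Kubernetes resource objects, so RBAC has to be set up for it. The steps are:

1) Create a ServiceAccount for the Prometheus pod to run under.

2) Create a ClusterRole that defines the permission rules.

3) Create a ClusterRoleBinding that binds the ServiceAccount to the ClusterRole.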

    apiVersion: v1
    kind: Namespace
    metadata:
      name: monitor
      labels:
        name: monitor
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: prometheus
    rules:
    - apiGroups: [""]
      resources:
      - nodes
      - nodes/proxy
      - services
      - endpoints
      - pods
      verbs: ["get", "list", "watch"]
    - apiGroups:
      - extensions
      - networking.k8s.io  # Ingress lives here on current clusters; extensions is kept for older ones
      resources:
      - ingresses
      verbs: ["get", "list", "watch"]
    - nonResourceURLs: ["/metrics"]
      verbs: ["get"]
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: prometheus
      namespace: monitor
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: prometheus
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: prometheus
    subjects:
    - kind: ServiceAccount
      name: prometheus
      namespace: monitor

1. Configure prometheus-config

Alerting and scrape targets

    global:
      scrape_interval: 2s
      scrape_timeout: 2s
      evaluation_interval: 2s
    alerting:
      alertmanagers:
      - static_configs:
        - targets:
          - 192.168.1.104:9093  # Alertmanager address
    rule_files:
    - "/etc/prometheus-rules/rules"
    scrape_configs:
    - job_name: 'prometheus'
      static_configs:
      - targets: ['localhost:9090']
    - job_name: 'k8s-nodes'
      kubernetes_sd_configs:
      - role: node
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics

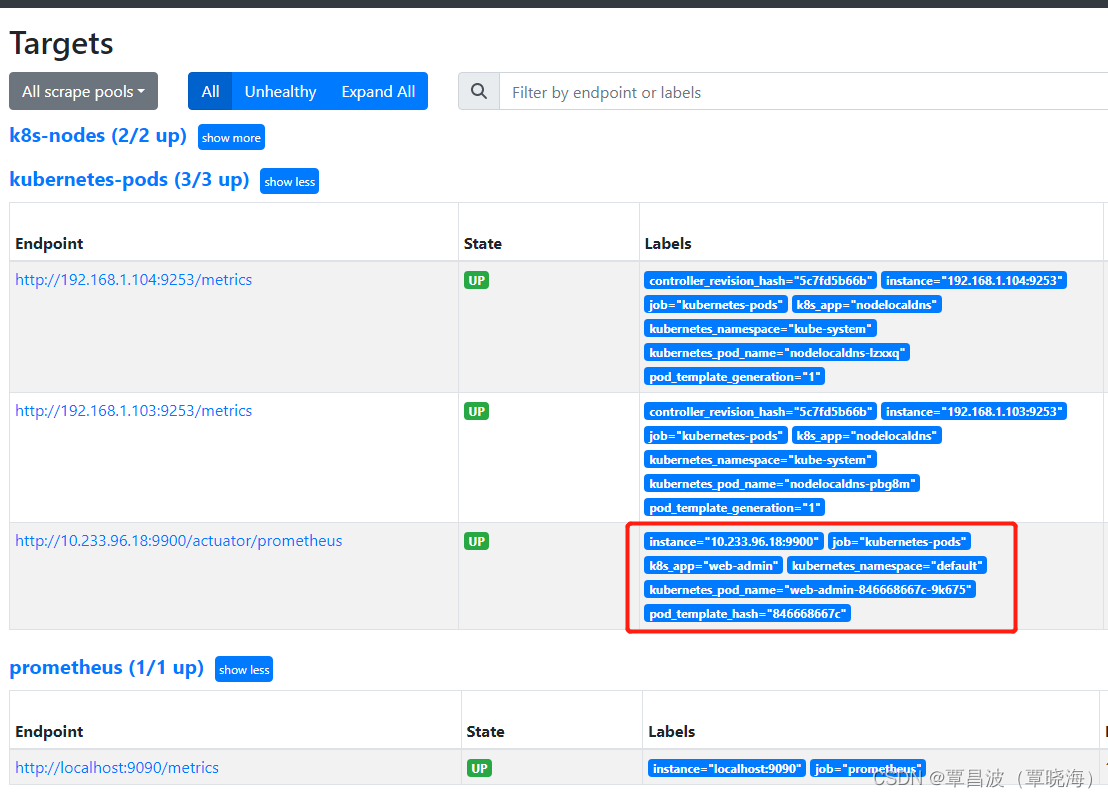

    # This job continues the scrape_configs list.
    - job_name: 'kubernetes-pods'
      kubernetes_sd_configs:
      - role: pod
      relabel_configs:
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
        action: replace
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
        target_label: __address__
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: kubernetes_pod_name

https://yunlzheng.gitbook.io/prometheus-book/part-iii-prometheus-shi-zhan/readmd/use-prometheus-monitor-kubernetes

The guide linked above explains this configuration in detail.

Alerting rules

    groups:
    - name: Down
      rules:
      - alert: Down
        expr: up == 0
        for: 30s
        labels:
          severity: critical
        annotations:
          description: "Service unavailable: the target is down"
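The post does not show how Prometheus itself is deployed. As a minimal sketch, assuming the rules are stored in a ConfigMap named `prometheus-rules` and the main configuration in one named `prometheus-config` (both names, the Deployment name, the `app: prometheus` label, and the image tag are assumptions, not taken from the original setup), the pod could run under the `prometheus` ServiceAccount and mount both files at the paths the configuration expects:

```yaml
# Hypothetical ConfigMap holding the alerting rules. The key "rules" matches
# the rule_files entry "/etc/prometheus-rules/rules".
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-rules            # assumed name
  namespace: monitor
data:
  rules: |
    groups:
    - name: Down
      rules:
      - alert: Down
        expr: up == 0
        for: 30s
        labels:
          severity: critical
        annotations:
          description: "Service unavailable: the target is down"
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus                  # assumed name
  namespace: monitor
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      serviceAccountName: prometheus      # the ServiceAccount bound by the ClusterRoleBinding
      containers:
      - name: prometheus
        image: prom/prometheus:v2.45.0    # assumed tag; pin whichever version you run
        args:
        - --config.file=/etc/prometheus/prometheus.yml
        ports:
        - containerPort: 9090
        volumeMounts:
        - name: config
          mountPath: /etc/prometheus
        - name: rules
          mountPath: /etc/prometheus-rules
      volumes:
      - name: config
        configMap:
          name: prometheus-config         # assumed to contain a prometheus.yml key
      - name: rules
        configMap:
          name: prometheus-rules
```

By default Kubernetes mounts the ServiceAccount token and CA certificate under `/var/run/secrets/kubernetes.io/serviceaccount/`, so the `ca_file` and `bearer_token_file` paths in the scrape configuration should resolve without extra volumes.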

Deploying the application with a Deployment

demo1.yaml

    kind: Deployment
    apiVersion: apps/v1
    metadata:
      name: web-admin
    spec:
      replicas: 1
      selector:
        matchLabels:
          k8s-app: web-admin
      template:
        metadata:
          annotations:
            prometheus.io/scrape: 'true'
            prometheus.io/port: '9900'
            prometheus.io/path: "/actuator/prometheus"
          labels:
            k8s-app: web-admin
        spec:
          containers:
          - image: 857676355/web-job-admin:v1
            name: web-admin
            ports:
            - containerPort: 9900

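Note the annotations in the pod template:

    template:
      metadata:
        annotations:
          prometheus.io/scrape: 'true'                # when 'true', the pod is scraped as a monitoring target
          prometheus.io/port: '9900'                  # port of the metrics endpoint
          prometheus.io/path: "/actuator/prometheus"  # metrics URL path; defaults to /metrics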
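One caveat with `up == 0`: it only matches while the target is still present in service discovery. When a pod is deleted, its `up` series disappears instead of dropping to 0, so that rule may never fire for deletions. Below is a hedged sketch of a complementary rule. It assumes the `kubernetes-pods` job and the `k8s-app: web-admin` pod label from this post (the `labelmap` step exposes it as `k8s_app`, because Prometheus replaces unsupported characters in label names with underscores). The group name, alert name, and `for` duration are illustrative.

```yaml
groups:
- name: PodMissing
  rules:
  - alert: WebAdminAbsent
    # absent() returns 1 when no series matches the selector,
    # i.e. no web-admin pod is currently being scraped.
    expr: absent(up{job="kubernetes-pods", k8s_app="web-admin"})
    for: 10s
    labels:
      severity: critical
    annotations:
      description: "No web-admin pod is being scraped; it may have been deleted or is still restarting"
```

With `replicas: 1` the Deployment controller recreates the pod quickly, so a long `for` can hide the gap entirely. A short value gives faster alerts but more noise.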

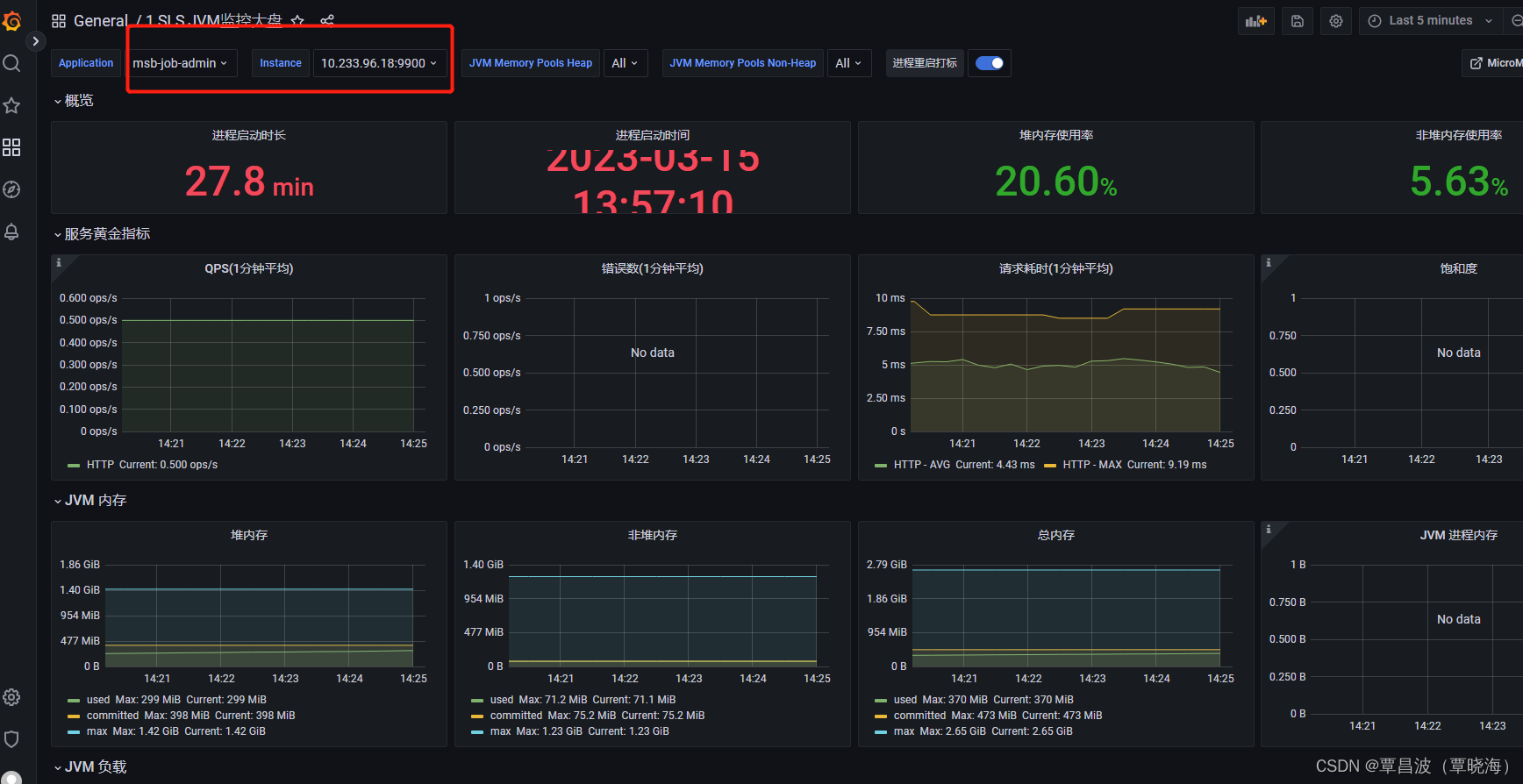

Adding the monitoring target

Done.
