1. 连接数据库

我使用了Druid数据库连接池和JdbcTemplate,其实只要能连接上数据库就行

-

在数据库建好需要的数据库表

-

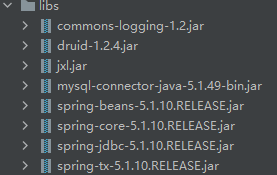

需要的jar包:

- 新建一个libs文件夹,把jar包放到里面,右击=>

Add as Library,这样便可在java类中调用

-

在src文件夹下新建一个配置文件:

druid.properyties,用于配置MySQL连接的信息driverClassName=com.mysql.jdbc.Driver url=jdbc:mysql://localhost:3306/test?characterEncoding=utf8&useSSL=false username=root password=root # 初始化连接数量 initialSize=5 # 最大连接数量 maxActive=10 # 最大等待时间 maxWait=3000 -

新建一个通用获取数据库连接的工具类:

DruidJDBCUtils.java,用于获取数据库连接package utils; import com.alibaba.druid.pool.DruidDataSourceFactory; import javax.sql.DataSource; import java.io.IOException; import java.io.InputStream; import java.sql.Connection; import java.sql.ResultSet; import java.sql.SQLException; import java.sql.Statement; import java.util.Properties; public class DruidJDBCUtils { private static DataSource ds; static { try { //1.加载配置文件 Properties pros = new Properties(); InputStream is = DruidJDBCUtils.class.getClassLoader().getResourceAsStream("druid.properties"); pros.load(is); //2.获取数据库连接池对象 ds = (DataSource) DruidDataSourceFactory.createDataSource(pros); } catch (IOException e) { e.printStackTrace(); } catch (Exception e) { e.printStackTrace(); } } /** * @Author SuGar * @Description //TODO 3.获取数据库连接 * @Date 17:33 2021/2/16 * @Param [] * @return java.sql.Connection **/ public static Connection getConnection() throws SQLException { return ds.getConnection(); } /** * @Author SuGar * @Description //TODO 释放资源 * @Date 17:36 2021/2/16 * @Param [stmt, conn] * @return void **/ public static void close(Statement stmt, Connection conn){ /*if (stmt != null) { try { stmt.close(); } catch (SQLException e) { e.printStackTrace(); } } if (conn != null) { try { conn.close(); } catch (SQLException e) { e.printStackTrace(); } }*/ close(null, stmt, conn); } /** * @Author SuGar * @Description //TODO 释放资源 * @Date 17:36 2021/2/16 * @Param [rs, stmt, conn] * @return void **/ public static void close(ResultSet rs, Statement stmt, Connection conn){ if (rs != null) { try { rs.close(); } catch (SQLException e) { e.printStackTrace(); } } if (stmt != null) { try { stmt.close(); } catch (SQLException e) { e.printStackTrace(); } } if (conn != null) { try { conn.close(); } catch (SQLException e) { e.printStackTrace(); } } } /** * @Author SuGar * @Description //TODO 获取数据库连接池对象 * @Date 17:39 2021/2/16 * @Param [] * @return javax.sql.DataSource **/ public static DataSource getDataSource(){ return ds; } }

2. 雪花id算法:

-

雪花算法生成id工具类:

SnowFlakeUtil .javapackage utils; /** * Twitter_Snowflake<br> * SnowFlake的结构如下(每部分用-分开):<br> * 0 - 0000000000 0000000000 0000000000 0000000000 0 - 00000 - 00000 - 000000000000 <br> * 1位标识,由于long基本类型在Java中是带符号的,最高位是符号位,正数是0,负数是1,所以id一般是正数,最高位是0<br> * 41位时间截(毫秒级),注意,41位时间截不是存储当前时间的时间截,而是存储时间截的差值(当前时间截 - 开始时间截) * 得到的值),这里的的开始时间截,一般是我们的id生成器开始使用的时间,由我们程序来指定的(如下下面程序IdWorker类的startTime属性)。41位的时间截,可以使用69年,年T = (1L << 41) / (1000L * 60 * 60 * 24 * 365) = 69<br> * 10位的数据机器位,可以部署在1024个节点,包括5位datacenterId和5位workerId<br> * 12位序列,毫秒内的计数,12位的计数顺序号支持每个节点每毫秒(同一机器,同一时间截)产生4096个ID序号<br> * 加起来刚好64位,为一个Long型。<br> * SnowFlake的优点是,整体上按照时间自增排序,并且整个分布式系统内不会产生ID碰撞(由数据中心ID和机器ID作区分),并且效率较高,经测试,SnowFlake每秒能够产生26万ID左右。 */ public class SnowFlakeUtil { private final long id; /** * 时间起始标记点,作为基准,一般取系统的最近时间 */ private final long epoch = 1524291141010L; /** * 机器标识位数 */ private final long workerIdBits = 10L; /** * 机器ID最大值: 1023 */ private final long maxWorkerId = -1L ^ -1L << this.workerIdBits; /** * 0,并发控制 */ private long sequence = 0L; /** * 毫秒内自增位 */ private final long sequenceBits = 12L; /** * 12 */ private final long workerIdShift = this.sequenceBits; /** * 22 */ private final long timestampLeftShift = this.sequenceBits + this.workerIdBits; /** * 4095,111111111111,12位 */ private final long sequenceMask = -1L ^ -1L << this.sequenceBits; private long lastTimestamp = -1L; private SnowFlakeUtil(long id) { if (id > this.maxWorkerId || id < 0) { throw new IllegalArgumentException(String.format("worker Id can't be greater than %d or less than 0", this.maxWorkerId)); } this.id = id; } public synchronized long nextId() { long timestamp = timeGen(); if (this.lastTimestamp == timestamp) { //如果上一个timestamp与新产生的相等,则sequence加一(0-4095循环); 对新的timestamp,sequence从0开始 this.sequence = this.sequence + 1 & this.sequenceMask; if (this.sequence == 0) { // 重新生成timestamp timestamp = this.tilNextMillis(this.lastTimestamp); } } else { this.sequence = 0; } if (timestamp < this.lastTimestamp) { //log.error(String.format("clock moved backwards.Refusing to generate id for %d milliseconds", (this.lastTimestamp - timestamp))); return -1; } this.lastTimestamp = timestamp; return timestamp - this.epoch << this.timestampLeftShift | this.id << this.workerIdShift | this.sequence; } private static SnowFlakeUtil flowIdWorker = new SnowFlakeUtil(1); public static SnowFlakeUtil getFlowIdInstance() { return flowIdWorker; } /** * 等待下一个毫秒的到来, 保证返回的毫秒数在参数lastTimestamp之后 */ private long tilNextMillis(long lastTimestamp) { long timestamp = timeGen(); while (timestamp <= lastTimestamp) { timestamp = timeGen(); } return timestamp; } /** * 获得系统当前毫秒数 */ private static long timeGen() { return System.currentTimeMillis(); } public static void main(String[] args) { for(int i=0;i<10;i++){ SnowFlakeUtil snowFlakeUtil = SnowFlakeUtil.getFlowIdInstance(); System.out.println(snowFlakeUtil.nextId()); } } }

3. 读取Excel并存到数据库

-

新建一个读取Excel的类:ExcelProcess.java,综合所有,完成任务

import jxl.NumberCell; import jxl.Sheet; import jxl.Workbook; import jxl.read.biff.BiffException; import jxl.write.WriteException; import org.springframework.jdbc.core.JdbcTemplate; import utils.DruidJDBCUtils; import utils.SnowFlakeUtil; import javax.sql.DataSource; import java.io.File; import java.io.IOException; import java.text.DecimalFormat; import java.text.ParseException; import java.text.SimpleDateFormat; import java.util.Date; import java.util.Locale; public class ExeclProcess { public static void main(String[] args) throws IOException, WriteException, BiffException, ParseException { //1:创建workbook,即excel文件 Workbook workbook = Workbook.getWorkbook(new File("./excels/2020data.xls")); //2:获取所有工作表sheets Sheet[] sheets = workbook.getSheets(); String[] sensorNames = {"应力传感器", "温度传感器", "伸缩缝传感器", "沉降传感器"}; String[] dbTbNames = {"sensor_stress_long", "sensor_temperature_long", "sensor_expansion_joint_long", "sensor_settlement_long"}; String[] labels = {"应力监测", "温度监测", "伸缩缝监测", "沉降监测"}; String[] units = {"MPa", "℃", "", ""}; /* //1:创建workbook,即excel文件 Workbook workbook = Workbook.getWorkbook(new File("./excels/202005数据.xls")); //2:获取所有工作表sheets Sheet[] sheets = workbook.getSheets(); //3.数据库字段 String[] sensorNames = {"应力传感器", "温度传感器", "索力传感器"}; String[] dbTbNames = {"sensor_stress_short", "sensor_temperature_short", "sensor_cable_force_short"}; String[] labels = {"应力监测", "温度监测", "索力监测"}; String[] units = {"MPa", "℃", "Pa"};*/ //4.获取数据库连接池对象 DataSource ds = DruidJDBCUtils.getDataSource(); //5.创建JdbcTemplate对象 JdbcTemplate template = new JdbcTemplate(ds); //6. String sensor_id = null; String name = null; String value = null; String sql = null; SimpleDateFormat sdf = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss"); Date time = null; //7:获取数据 for (int i = 0; i < sheets.length; i++) { int rows = sheets[i].getRows(); int columns = sheets[i].getColumns(); for (int r = 1; r < rows; r++) { for (int c = 1; c < columns; c++) { sensor_id = sheets[i].getCell(c, 0).getContents().toLowerCase(Locale.ROOT);//获得第2列开始,每列的第一个代表传感器id name = sensorNames[i] + "_" + sensor_id; System.out.println(((NumberCell)sheets[i].getCell(c, r)).getValue()); value = String.valueOf(((NumberCell)sheets[i].getCell(c, r)).getValue());//获得第2列开始,每列的除第一个外的值,代表传感器数据 time = sdf.parse(sheets[i].getCell(0, r).getContents());//获取第一列的时间 //8.定义SQL sql = "insert into " + dbTbNames[i] + "(" + "id, " + "sensor_id, " + "name, " + "type, " + "label, " + "value, " + "unit, " + "create_time, " + "create_by, " + "update_time, " + "update_by) values(?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?)"; //9.调用方法,执行sql int count = template.update(sql, String.valueOf(SnowFlakeUtil.getFlowIdInstance().nextId()), sensor_id, name, i, labels[i], value, units[i], time, name, time, name); System.out.println(sheets[i].getName() + ":(" + r + ", " + c + ")-(" + value + ")"); } } } //最后一步:关闭资源 workbook.close(); } } -

运行即可

4. 遇到的问题

-

在写sql时,刚开始没有使用预编译sql,会报一个错:

是sql语法错误,但我的sql是没写错的,其中字段sensor_id会赋值为a1, a2, b1, b2等。但运行时报:Unknown ‘a1’ in Field List

即:字段列表中未知的“a1”。

还有后面的name、create_by和update_by。

随便给个值,可能就会避过报错,比如直接把values里的sensor_id加引号。但有些值也会报错,如"aa1"等。

最后索性用预编译sql,把字段值写在update方法的参数里,这时就不报错了,惊了。

-

读取出来的数据(小数),总会被四舍五入,只留下3位小数

我也不知道原因,可能是jxl的不同Cell的getContents()方法的问题。

我改用((NumberCell)sheets[i].getCell(c, r)).getValue(),得到的值与Excel里的一样。

2419

2419

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?