Building a Simple Neural Network with PyTorch

Basic Concepts of Machine Learning and Neural Networks

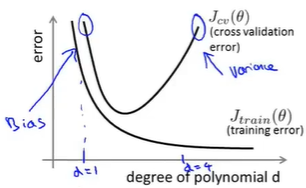

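Diagnosing bias and variance:

Judging from the learning curves:

Learning curve under high bias:

If a learning algorithm has high bias, then as we add training samples (that is, as we move to the right in the figure), the cross-validation error stops falling noticeably and essentially flattens out. So when an algorithm suffers from high bias, collecting more training data does little to improve its performance.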
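The plateau described above can be reproduced on a toy problem. The sketch below is only an illustration under stated assumptions: the data are synthetic (a noisy parabola), `np.polyfit` stands in for the learning algorithm, and the helper name `learning_curve` is hypothetical. A straight line is fitted to quadratic data, so the model is too simple by construction.

```python
import numpy as np


def learning_curve(degree, sizes, seed=0):
    """Return (train_mse, val_mse) lists for polynomial fits of the given degree."""
    rng = np.random.default_rng(seed)

    def sample(n):
        x = rng.uniform(-3.0, 3.0, n)
        y = x ** 2 + rng.normal(0.0, 1.0, n)  # quadratic target plus noise
        return x, y

    x_pool, y_pool = sample(max(sizes))  # pool that the training subsets are drawn from
    x_val, y_val = sample(200)           # fixed cross-validation set

    train_mse, val_mse = [], []
    for n in sizes:
        x_tr, y_tr = x_pool[:n], y_pool[:n]  # use the first n samples of the pool
        coeffs = np.polyfit(x_tr, y_tr, degree)
        train_mse.append(float(np.mean((np.polyval(coeffs, x_tr) - y_tr) ** 2)))
        val_mse.append(float(np.mean((np.polyval(coeffs, x_val) - y_val) ** 2)))
    return train_mse, val_mse


sizes = [20, 50, 100, 200, 400]
# Degree 1 cannot represent a parabola, so this is a deliberately high-bias model.
train_mse, val_mse = learning_curve(degree=1, sizes=sizes)
for n, tr, va in zip(sizes, train_mse, val_mse):
    print(f"n={n:4d}  train MSE={tr:6.2f}  validation MSE={va:6.2f}")
```

In this setup both errors should settle at a similar, high value, and going from 200 to 400 samples barely moves the validation error, which is the flat curve the paragraph describes.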

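Learning curve under high variance:

As we add training samples, the two learning curves (training error and cross-validation error) move toward each other and, with enough data, come close to meeting. So when a learning algorithm suffers from high variance, adding more training samples does help.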
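The narrowing gap can also be seen on a toy problem. This is a rough sketch under assumptions, not a recipe: the data are synthetic (a noisy parabola), `np.polyfit` stands in for the learning algorithm, and the helper name `learning_curve` is hypothetical. A degree-8 polynomial is far more flexible than the data require, so it overfits when samples are scarce.

```python
import numpy as np


def learning_curve(degree, sizes, seed=0):
    """Return (train_mse, val_mse) lists for polynomial fits of the given degree."""
    rng = np.random.default_rng(seed)

    def sample(n):
        x = rng.uniform(-3.0, 3.0, n)
        y = x ** 2 + rng.normal(0.0, 1.0, n)  # quadratic target plus noise
        return x, y

    x_pool, y_pool = sample(max(sizes))  # pool that the training subsets are drawn from
    x_val, y_val = sample(200)           # fixed cross-validation set

    train_mse, val_mse = [], []
    for n in sizes:
        x_tr, y_tr = x_pool[:n], y_pool[:n]  # use the first n samples of the pool
        coeffs = np.polyfit(x_tr, y_tr, degree)
        train_mse.append(float(np.mean((np.polyval(coeffs, x_tr) - y_tr) ** 2)))
        val_mse.append(float(np.mean((np.polyval(coeffs, x_val) - y_val) ** 2)))
    return train_mse, val_mse


sizes = [15, 30, 60, 120, 400]
# Degree 8 is much more flexible than needed: a deliberately high-variance model.
train_mse, val_mse = learning_curve(degree=8, sizes=sizes)
gaps = [va - tr for tr, va in zip(train_mse, val_mse)]
for n, tr, va, gap in zip(sizes, train_mse, val_mse, gaps):
    print(f"n={n:4d}  train MSE={tr:7.2f}  validation MSE={va:7.2f}  gap={gap:7.2f}")
```

With few samples the training error is low while the validation error is much higher; as the sample count grows, the gap between the two should shrink.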

Solving Classification and Regression Problems with Neural Networks

Predicting House Prices (Regression)

demo_reg.py

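import re

import numpy as np
import torch

# data
with open("housing.data") as f:
    ff = f.readlines()

data = []
for item in ff:
    # Collapse runs of two or more whitespace characters into a single space:
    # \s matches whitespace, {2,} means "at least twice", re.sub() does the
    # replacement, and strip() removes leading and trailing whitespace.
    out = re.sub(r"\s{2,}", " ", item).strip()
    # print(out)
    data.append(out.split(" "))

# Convert the list of string lists into a float64 array
data = np.array(data).astype(np.float64)
print(data.shape)  # (506, 14)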

Y = data[:, -1]    # target values: the last column
X = data[:, 0:-1]  # features: the remaining 13 columns

# Split into training and test sets: the first 496 rows train, the last 10 test
X_train = X[0:496, ...]
Y_train = Y[0:496, ...]
X_test = X[496:, ...]
Y_test = Y[496:, ...]

print("----------dataset shapes----------")
print(X_train.shape)
print(Y_train.shape)
print(X_test.shape)
print(Y_test.shape)
print("----------------------------------")

# net
class Net(torch.nn.Module):
    def __init__(self, n_feature, n_output):
        super(Net, self).__init__()
        # Add a hidden layer to reduce underfitting
        self.hidden = torch.nn.Linear(n_feature, 100)
        self.predict = torch.nn.Linear(100, n_output)

    def forward(self, x):
        out = self.hidden(x)
        out = torch.relu(out)
        out = self.predict(out)
        return out


# A network with a single hidden layer (linear layer followed by ReLU)
net = Net(13, 1)  # 13 input features, 1 output

# loss
loss_func = torch.nn.MSELoss()  # mean squared error loss

# optimizer
# Use the Adam optimizer to reduce underfitting
optimizer = torch.optim.Adam(net.parameters(), lr=0.001)

# training
for i in range(5000):  # with enough CPU time, 10000 iterations is an option
    x_data = torch.tensor(X_train, dtype=torch.float32)
    y_data = torch.tensor(Y_train, dtype=torch.float32)

    pred = net(x_data)
    # print(pred.shape)    # 2-D: (496, 1)
    # print(y_data.shape)  # 1-D: (496,), so the shapes must be made to match
    pred = torch.squeeze(pred)  # drop the size-1 dimension

    loss = loss_func(pred, y_data) * 0.001
    optimizer.zer
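The listing breaks off at `optimizer.zer`. A common PyTorch training step clears the old gradients, backpropagates the loss, and then updates the parameters; the sketch below assumes that is roughly what followed and is not the author's original code. To stay self-contained it uses random synthetic data in place of `housing.data`, a larger learning rate (0.01), and only 200 steps; those choices, along with the names `true_w` and `losses`, are assumptions made for illustration.

```python
import torch

torch.manual_seed(0)

# Synthetic stand-in for the housing data (assumption): 64 samples, 13 features.
X = torch.randn(64, 13)
true_w = torch.randn(13)
y = X @ true_w + 0.1 * torch.randn(64)


class Net(torch.nn.Module):
    def __init__(self, n_feature, n_output):
        super().__init__()
        self.hidden = torch.nn.Linear(n_feature, 100)
        self.predict = torch.nn.Linear(100, n_output)

    def forward(self, x):
        return self.predict(torch.relu(self.hidden(x)))


net = Net(13, 1)
loss_func = torch.nn.MSELoss()
optimizer = torch.optim.Adam(net.parameters(), lr=0.01)

losses = []
for step in range(200):
    pred = torch.squeeze(net(X), dim=1)  # (64, 1) -> (64,)
    loss = loss_func(pred, y)

    optimizer.zero_grad()  # clear gradients left over from the previous step
    loss.backward()        # backpropagate to compute fresh gradients
    optimizer.step()       # update the parameters with Adam

    losses.append(loss.item())

print(f"first loss: {losses[0]:.4f}, last loss: {losses[-1]:.4f}")
```

Calling `zero_grad()` before `backward()` matters because PyTorch accumulates gradients across calls by default; without it, each step would mix in gradients from earlier iterations.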
