Building a Hadoop cluster with Docker

MapReduce operations

Environment

- docker

- jdk-1.8

- hadoop 3.3.2

- Eclipse IDE for Enterprise Java and Web Developers 2022-3

Reference tutorials (I mainly followed the second one)

1. Writing the MapReduce code

In Eclipse, create a Maven project and select the quickstart archetype.

pom.xml

<dependencies>
    <!-- Pinned version instead of the deprecated RELEASE meta-version -->
    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>4.13.2</version>
        <scope>test</scope>
    </dependency>
    <!-- 2.8.2 is affected by CVE-2021-44228 (Log4Shell); use a patched release -->
    <dependency>
        <groupId>org.apache.logging.log4j</groupId>
        <artifactId>log4j-core</artifactId>
        <version>2.17.1</version>
    </dependency>
    <!-- Hadoop artifacts aligned with the 3.3.2 release listed under Environment -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
        <version>3.3.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-client</artifactId>
        <version>3.3.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-hdfs</artifactId>
        <version>3.3.2</version>
    </dependency>
</dependencies>

WcMapper.java

package com.hadpro;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class WcMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

    // Reusable output objects, so no new Writable is allocated per record
    private final Text word = new Text();
    private final IntWritable one = new IntWritable(1);

    /**
     * Core map logic: emit (word, 1) for every word in the line.
     *
     * @param key     byte offset of the line within the input file
     * @param value   content of the line
     * @param context task context used to emit output
     */
    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Content of this line
        String line = value.toString();
        // Split the line into words on runs of whitespace
        String[] tokens = line.trim().split("\\s+");
        // Emit each word in the form (word, 1)
        for (String token : tokens) {
            if (token.isEmpty()) {
                continue; // a blank line yields one empty token; skip it
            }
            word.set(token);
            context.write(word, one);
        }
    }
}
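Splitting a line on a single space treats every space as a delimiter, so two consecutive spaces produce an empty string that would be counted as a word. The following is a minimal plain-Java sketch of a whitespace-tolerant tokenizer; it needs no Hadoop, and the class and method names (`TokenizeSketch`, `tokens`) are illustrative assumptions rather than part of the project.

```java
import java.util.ArrayList;
import java.util.List;

// Plain-Java sketch of the tokenizing step inside map().
// TokenizeSketch and tokens() are hypothetical names used for illustration.
final class TokenizeSketch {

    // Split on runs of whitespace and drop empty tokens.
    static List<String> tokens(String line) {
        List<String> out = new ArrayList<>();
        for (String token : line.trim().split("\\s+")) {
            if (!token.isEmpty()) {
                out.add(token);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        String line = "hello  hadoop hello"; // note the double space
        // Splitting on a single space keeps an empty token between the two spaces
        System.out.println(line.split(" ").length); // prints 4
        System.out.println(tokens(line));           // prints [hello, hadoop, hello]
    }
}
```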

WcReducer.java

package com.hadpro;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class WcReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

    // Reusable output value
    private final IntWritable result = new IntWritable();

    /**
     * Sum all counts emitted for one word.
     *
     * @param key     the word
     * @param values  all the 1s emitted for this word
     * @param context task context used to emit output
     */
    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
        // Running total
        int sum = 0;
        // Add up the values that share the same key
        for (IntWritable value : values) {
            sum += value.get();
        }
        result.set(sum);
        context.write(key, result);
    }
}
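To make the division of labor between the mapper and the reducer concrete, here is a rough in-memory sketch of the same word count in plain Java. It only imitates what the framework does (grouping the emitted 1s by key before reduce is called); the class `WordCountSketch` and its `count` method are hypothetical and are not how Hadoop itself is implemented.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// In-memory imitation of map -> group by key -> reduce for word count.
// WordCountSketch is a hypothetical name; no Hadoop classes are involved.
final class WordCountSketch {

    static Map<String, Integer> count(List<String> lines) {
        // "map" and "shuffle": emit (word, 1) and group the 1s by word, sorted by key
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            for (String word : line.trim().split("\\s+")) {
                if (word.isEmpty()) {
                    continue;
                }
                grouped.computeIfAbsent(word, k -> new ArrayList<>()).add(1);
            }
        }
        // "reduce": sum the list of 1s for each word
        Map<String, Integer> totals = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            int sum = 0;
            for (int one : entry.getValue()) {
                sum += one;
            }
            totals.put(entry.getKey(), sum);
        }
        return totals;
    }

    public static void main(String[] args) {
        // prints {docker=1, hadoop=1, hello=2}
        System.out.println(count(Arrays.asList("hello hadoop", "hello docker")));
    }
}
```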

App.java

package com.hadpro;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class App {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        // 1. Create a Job
        Job job = Job.getInstance(new Configuration(), "MyWordCount");
        // 2. Set the Job's JAR by pointing at a class it contains
        job.setJarByClass(App.class);
        // 3. Set the Job's Mapper and Reducer
        job.setMapperClass(WcMapper.class);
        job.setReducerClass(WcReducer.class);
        // Optional combiner
        // job.setCombinerClass(WcReducer.class);
        // 4. Set the output types of the Mapper and the Reducer
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        // 5. Set the input and output paths
        // (import FileInputFormat and FileOutputFormat from the longer package names,
        // org.apache.hadoop.mapreduce.lib.input / .output, not org.apache.hadoop.mapred)
        // The input directory holds the files to process
        FileInputFormat.setInputPaths(job, new Path("E:/eclipse/hadpro/input"));
        // The output directory must not exist yet
        FileOutputFormat.setOutputPath(job, new Path("E:/eclipse/hadpro/output"));
        // 6. Submit the job and wait for it to finish
        boolean completion = job.waitForCompletion(true);
        if (completion) {
            System.out.println("Job completed successfully");
        } else {
            System.out.println("Job failed");
        }
    }
}
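Because the output directory must not exist when the job starts, a second local run fails until the previous output is removed. The following is one possible sketch, using only the Java standard library, for clearing a local output directory before rerunning; `OutputDirCleaner` and `deleteIfExists` are hypothetical names, and this applies to the local file system only.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical helper for local runs: removes a leftover output directory.
final class OutputDirCleaner {

    // Recursively deletes dir if it exists; returns true if something was deleted.
    static boolean deleteIfExists(Path dir) throws IOException {
        if (!Files.exists(dir)) {
            return false;
        }
        List<Path> paths;
        try (Stream<Path> walk = Files.walk(dir)) {
            // Reverse order so that children are deleted before their parents
            paths = walk.sorted(Comparator.reverseOrder()).collect(Collectors.toList());
        }
        for (Path path : paths) {
            Files.delete(path);
        }
        return true;
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 1) {
            System.out.println("usage: OutputDirCleaner <local output directory>");
            return;
        }
        System.out.println(deleteIfExists(Paths.get(args[0])) ? "deleted" : "nothing to delete");
    }
}
```

On the cluster, the equivalent cleanup for an HDFS path is done with `hadoop fs -rm -r` on the output directory rather than with local file APIs.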

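2. Running locally

2.1 Setting up the Hadoop environment

- Extract the Hadoop archive on Windows 10

- Download the winutils.exe and hadoop.dll that match your Hadoop version and place them in the bin folder under the Hadoop directory (see: winutils downloads for newer versions)

- Add the HADOOP_HOME environment variable

- Add %HADOOP_HOME%\bin and %HADOOP_HOME%\sbin to Path

2.2 Running in Eclipse

Right-click the project -> Run As -> Java Application

Input file

Result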
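Before running the job from Eclipse, it can help to confirm that the steps above took effect. The sketch below checks that HADOOP_HOME is set and that winutils.exe and hadoop.dll are present in its bin folder; `HadoopHomeCheck` and `missingFiles` are hypothetical names introduced for illustration, and Eclipse may need a restart before it sees a newly added environment variable.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Hypothetical sanity check for the Windows Hadoop setup described above.
final class HadoopHomeCheck {

    // Returns the expected files that are missing under <hadoopHome>/bin.
    static List<String> missingFiles(Path hadoopHome) {
        List<String> missing = new ArrayList<>();
        for (String name : new String[] {"winutils.exe", "hadoop.dll"}) {
            if (!Files.isRegularFile(hadoopHome.resolve("bin").resolve(name))) {
                missing.add("bin/" + name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        String home = System.getenv("HADOOP_HOME");
        if (home == null || home.isEmpty()) {
            System.out.println("HADOOP_HOME is not set");
            return;
        }
        List<String> missing = missingFiles(Paths.get(home));
        System.out.println(missing.isEmpty() ? "Hadoop bin folder looks complete" : "Missing: " + missing);
    }
}
```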

3. Testing on the cluster

3.1 Uploading the JAR to HDFS

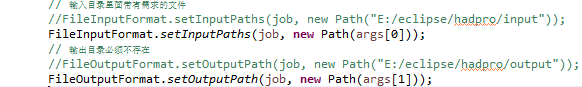

- Modify the input and output paths

- Package the program

- Start the Hadoop cluster

- Copy the JAR into the Docker container

docker cp <local file path> <full container ID>:<path in container>

docker cp E:/eclipse/hadpro/hadpro.jar fc7458b73909:/usr/local/hadoop/bin

- Upload the JAR to HDFS

./hadoop fs -put hadpro.jar /

- In the container, create a new folder pachongData to hold the txt file, then upload it to HDFS

docker cp E:/eclipse/hadpro/input/data.txt fc7458b73909:/usr/local/hadoop/bin/pachongData

./hadoop fs -put pachongData/ /

3.2 Running the JAR

com.hadpro is the package that contains the main class, and App is the main class.

./hadoop jar hadpro.jar com.hadpro.App /pachongData /output

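The first time I ran the JAR, it failed. A search on Baidu showed that the cause was a JDK version mismatch; changing the JDK version in Eclipse fixes it (see "Changing the Eclipse JDK version").

Then rebuild the JAR, upload it again, and run it once more.

While running, I found that the input and output paths were wrong, so they had to be corrected: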
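The original error message is not shown here, so the following rests on an assumption: that the failure was the usual class-file-version mismatch, where the JAR was compiled for a newer Java release than the JDK inside the container. Under that assumption, this sketch reads the major version from a compiled .class file header so the two sides can be compared; `ClassVersionCheck` is a hypothetical helper, not part of the project.

```java
import java.io.DataInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Paths;

// Hypothetical helper: reports which Java release a .class file targets.
final class ClassVersionCheck {

    // A class file starts with the magic number 0xCAFEBABE,
    // followed by a 2-byte minor version and a 2-byte major version.
    static int majorVersion(InputStream in) throws IOException {
        DataInputStream data = new DataInputStream(in);
        if (data.readInt() != 0xCAFEBABE) {
            throw new IOException("not a class file");
        }
        data.readUnsignedShort();        // minor version, not needed here
        return data.readUnsignedShort(); // major version, e.g. 52 for Java 8
    }

    // Major version 52 corresponds to Java 8, 55 to Java 11, and so on.
    static int javaRelease(int major) {
        return major - 44;
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 1) {
            System.out.println("usage: ClassVersionCheck <path to .class file>");
            return;
        }
        try (InputStream in = Files.newInputStream(Paths.get(args[0]))) {
            int major = majorVersion(in);
            System.out.println("class file major version " + major + " (Java " + javaRelease(major) + ")");
        }
    }
}
```

Running it against App.class from the build output and comparing the result with `java -version` inside the container shows whether the compiler level in Eclipse needs to be lowered.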

./hadoop jar hadpro.jar com.hadpro.App /pachongData /output
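The command passes /pachongData and /output as arguments, but a driver that hard-codes its paths never reads them. One way to avoid editing the code for every environment is to take the paths from the arguments and fall back to the local defaults otherwise. This is a sketch only: `JobPaths` and `fromArgs` are hypothetical names, and it assumes the JAR manifest declares no Main-Class, so that the two paths arrive as the first and second arguments of main.

```java
// Hypothetical helper: chooses the job's input and output paths.
final class JobPaths {

    final String input;
    final String output;

    private JobPaths(String input, String output) {
        this.input = input;
        this.output = output;
    }

    // Uses the first two arguments when present, otherwise the given defaults.
    static JobPaths fromArgs(String[] args, String defaultInput, String defaultOutput) {
        if (args.length >= 2) {
            return new JobPaths(args[0], args[1]);
        }
        return new JobPaths(defaultInput, defaultOutput);
    }

    public static void main(String[] args) {
        // The defaults are the local paths used for the Eclipse run
        JobPaths paths = fromArgs(args, "E:/eclipse/hadpro/input", "E:/eclipse/hadpro/output");
        System.out.println("input=" + paths.input + ", output=" + paths.output);
    }
}
```

In the driver, the chosen values would then be wrapped as `new Path(paths.input)` and `new Path(paths.output)` when calling `FileInputFormat.setInputPaths` and `FileOutputFormat.setOutputPath`.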

- If the job hangs at "Running job" after you run the JAR, see "Hadoop job stuck at: INFO mapreduce.Job: Running job"
