Volumes Configuration Management

I. Volumes Overview

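- Files in a container are stored on disk only temporarily. This causes problems for non-trivial applications running in containers:
  - When a container crashes, the kubelet restarts it, but the files in the container are lost because the container is rebuilt in a clean state.
  - When several containers run together in one Pod, they often need to share files.

  Kubernetes solves both problems with the Volume abstraction.

- A Kubernetes volume has an explicit lifetime, the same as the Pod that encloses it.
  - A volume therefore outlives any container running in the Pod, and its data is preserved across container restarts.
  - When a Pod ceases to exist, the volume ceases to exist as well.
  - Kubernetes supports many types of volumes, and a Pod can use any number of them at the same time.

- Volumes cannot be mounted onto other volumes and cannot have hard links to other volumes. Each container in a Pod must independently specify where each volume is mounted.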

Kubernetes supports the following volume types:

- awsElasticBlockStore, azureDisk, azureFile, cephfs, cinder, configMap, csi
- downwardAPI, emptyDir, fc (fibre channel), flexVolume, flocker
- gcePersistentDisk, gitRepo (deprecated), glusterfs, hostPath, iscsi, local
- nfs, persistentVolumeClaim, projected, portworxVolume, quobyte, rbd
- scaleIO, secret, storageos, vsphereVolume

II. emptyDir Volumes

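An emptyDir volume is created when a Pod is first assigned to a node, and it exists as long as the Pod runs on that node. As the name says, the volume is initially empty. The containers in the Pod may mount the emptyDir volume at the same path or at different paths, but they can all read and write the same files in it. When the Pod is removed from the node for any reason, the data in the emptyDir volume is deleted permanently.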

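Use cases for emptyDir:

- Scratch space, for example for a disk-based merge sort.
- Checkpoints for a long computation, so the task can easily resume from its pre-crash state.
- Holding files that a content-manager container fetches while a web-server container serves the data.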

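By default, emptyDir volumes are stored on whatever medium backs the node. Depending on your environment, that may be disk, SSD or network storage.

You can instead set the emptyDir.medium field to "Memory" to have Kubernetes mount a tmpfs (a RAM-backed filesystem). tmpfs is very fast, but it behaves differently from disk:

- it is cleared when the node reboots;
- every file you write counts toward the container's memory usage and is subject to the container's memory limit.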

Two containers in one Pod sharing a volume

Create the Pod: nginx + busyboxplus

```
[root@server1 volumes]# cat vol1.yaml
apiVersion: v1
kind: Pod
metadata:
  name: vol1
spec:
  containers:
  - image: busyboxplus
    name: vm1
    command: ["sleep", "300"]
    volumeMounts:
    - mountPath: /cache
      name: cache-volume
  - name: vm2
    image: nginx
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: cache-volume
  volumes:
  - name: cache-volume
    emptyDir:
      medium: Memory
      sizeLimit: 100Mi
[root@server1 volumes]# kubectl apply -f vol1.yaml
pod/vol1 created
```

Check the Pod and its IP:

```
[root@server1 volumes]# kubectl get pod
NAME   READY   STATUS    RESTARTS   AGE
vol1   2/2     Running   0          7s
[root@server1 volumes]# kubectl get pod -o wide
NAME   READY   STATUS    RESTARTS   AGE   IP             NODE      NOMINATED NODE   READINESS GATES
vol1   2/2     Running   0          34s   10.244.22.18   server4   <none>           <none>
```

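Open a shell in the Pod and access nginx.

1. The first request returns 403 Forbidden because the shared directory is empty.
2. Write a default index.html from the busyboxplus container.
3. Request the page again. It now succeeds, which shows that busyboxplus and nginx share the volume.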

```
[root@server1 volumes]# kubectl exec vol1 -it sh
kubectl exec [POD] [COMMAND] is DEPRECATED and will be removed in a future version. Use kubectl exec [POD] -- [COMMAND] instead.
Defaulted container "vm1" out of: vm1, vm2
/ # ls
bin cache dev etc home lib lib64 linuxrc media mnt opt proc root run sbin sys tmp usr var
/ # cd cache/
/cache # ls
/cache # curl 10.244.22.18
<html>
<head><title>403 Forbidden</title></head>
<body>
<center><h1>403 Forbidden</h1></center>
<hr><center>nginx/1.19.2</center>
</body>
</html>
/cache # echo www.westos.org > index.html
/cache # curl 10.244.22.18
www.westos.org
```

The volume is limited to 100Mi. Create a 200 MB file in it:

```
/cache # dd if=/dev/zero of=/cache/bigfile bs=1M count=200
200+0 records in
200+0 records out
/cache # du -sh bigfile
200.0M bigfile
```

The limit is exceeded and the Pod is evicted:

```
[root@server1 volumes]# kubectl get pod
NAME   READY   STATUS    RESTARTS   AGE
vol1   0/2     Evicted   0          4m38s
```

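- Once the files exceed sizeLimit, the kubelet evicts the Pod after a while (1 to 2 minutes here). The eviction is not immediate because the kubelet checks usage periodically, so there is a delay.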

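Drawbacks of a memory-backed emptyDir:

- Memory use cannot be stopped in time. The kubelet evicts the Pod after 1 to 2 minutes, but the node is still at risk during that window.
- It interferes with Kubernetes scheduling. The emptyDir is not counted against the node's resources, so the Pod can quietly consume node memory without the scheduler knowing.
- Users are not told promptly that memory has become unavailable.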
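One way to narrow the scheduling gap, sketched here as an assumption rather than part of the original lab, is to give the container that writes to the in-memory volume explicit memory requests and limits. As noted above, tmpfs files count toward the container's memory usage, so:

- the scheduler reserves the request on the chosen node;
- the limit caps how much memory the container, including its tmpfs files, may consume.

The Pod name vol-mem and the sizes are illustrative only.

```yaml
# Sketch: memory-backed emptyDir plus explicit memory requests/limits.
# The Pod name and all sizes are hypothetical.
apiVersion: v1
kind: Pod
metadata:
  name: vol-mem              # hypothetical Pod name
spec:
  containers:
  - name: vm1
    image: busyboxplus
    command: ["sleep", "300"]
    resources:
      requests:
        memory: 128Mi        # reserved by the scheduler on the chosen node
      limits:
        memory: 256Mi        # tmpfs files written by this container count against this
    volumeMounts:
    - mountPath: /cache
      name: cache-volume
  volumes:
  - name: cache-volume
    emptyDir:
      medium: Memory
      sizeLimit: 100Mi       # still enforced by kubelet eviction, as in the lab above
```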

III. hostPath Volumes

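A hostPath volume mounts a file or directory from the host node's filesystem into your Pod. Most Pods do not need this, but it provides a powerful escape hatch for some applications.

Some uses for hostPath:

- Running a container that needs access to Docker internals: mount /var/lib/docker.
- Running cAdvisor in a container: mount /sys as a hostPath.
- Letting a Pod specify whether a given hostPath must exist before the Pod runs, whether it should be created, and what kind of object it should be.
- Checking whether the relevant directory was created on the node where the Pod is scheduled.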

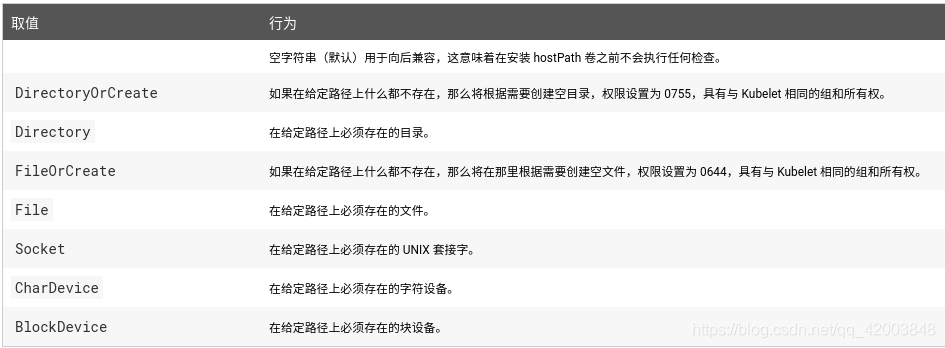

In addition to the required path property, you can optionally specify a type for a hostPath volume.
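To illustrate the optional type field, here is a minimal sketch that asks the kubelet to create the directory if it is missing. The Pod name and path are hypothetical.

- DirectoryOrCreate creates an empty directory with permission 0755 when nothing exists at the path.
- Other documented values are Directory, FileOrCreate, File, Socket, CharDevice and BlockDevice.
- The empty default performs no check before mounting.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: hostpath-type-demo       # hypothetical Pod name
spec:
  containers:
  - image: nginx
    name: test-container
    volumeMounts:
    - mountPath: /test-pd
      name: test-volume
  volumes:
  - name: test-volume
    hostPath:
      path: /data/hostpath-demo  # hypothetical directory on the node
      type: DirectoryOrCreate    # create the directory (mode 0755) if it does not exist
```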

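Be careful when using this type of volume, because:

- Pods with identical configuration (for example, created from a podTemplate) may behave differently on different nodes because the files on those nodes differ.
- When Kubernetes adds resource-aware scheduling, as planned, that scheduling will not be able to account for resources used by hostPath.
- Files or directories created on the underlying host are writable only by root. You must either run your process as root in a privileged container, or change the file permissions on the host so that the container can write to the hostPath volume.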

1. Check whether the relevant directory is created on the node where the Pod is scheduled

```
[root@server1 volumes]# cat host.yml
apiVersion: v1
kind: Pod
metadata:
  name: test-pd
spec:
  containers:
  - image: nginx
    name: test-container
    volumeMounts:
    - mountPath: /test-pd
      name: test-volume
  volumes:
  - name: test-volume
    hostPath:
      path: /data
```

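After the Pod is created, the /test-pd directory exists in the container and already contains files: the existing contents of /data on the node.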

```
[root@server1 volumes]# kubectl exec test-pd -it bash
root@test-pd:/# cd /test-pd/
root@test-pd:/test-pd# ls
ca_download database job_logs psc redis registry secret
```

2. NFS

Install NFS and export /mnt/nfs:

```
[root@server1 volumes]# yum install -y nfs-utils
Loaded plugins: product-id, search-disabled-repos, subscription-manager
This system is not registered with an entitlement server. You can use subscription-manager to register.
Package 1:nfs-utils-1.3.0-0.61.el7.x86_64 already installed and latest version
Nothing to do
[root@server1 volumes]# vim /etc/exports
[root@server1 volumes]# systemctl start nfs
[root@server1 volumes]# showmount -e
Export list for server1:
/mnt/nfs *
```

Deploy nginx on the Kubernetes cluster using NFS:

```
[root@server1 volumes]# cat nfs.yml
apiVersion: v1
kind: Pod
metadata:
  name: test-pd
spec:
  containers:
  - image: nginx
    name: test-container
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: test-volume
  volumes:
  - name: test-volume
    nfs:
      server: 172.25.3.1
      path: /mnt/nfs
[root@server1 volumes]# kubectl apply -f nfs.yml
pod/test-pd created
```

Check the Pod:

```
[root@server1 volumes]# kubectl get pod
NAME      READY   STATUS    RESTARTS   AGE
test-pd   1/1     Running   0          14s
```

Create the default index page index.html in the shared directory:

```
[root@server1 volumes]# cd /mnt/nfs/
[root@server1 nfs]# ls
config vol1
[root@server1 nfs]# rm -rf *
[root@server1 nfs]# echo www.westos.org > index.html
```

Open a shell in the Pod. index.html is present in the nginx document root, which shows that NFS works with the Kubernetes cluster:

```
[root@server1 nfs]# kubectl exec test-pd -it bash
kubectl exec [POD] [COMMAND] is DEPRECATED and will be removed in a future version. Use kubectl exec [POD] -- [COMMAND] instead.
root@test-pd:/# ls
bin boot dev docker-entrypoint.d docker-entrypoint.sh etc home lib lib64 media mnt opt proc root run sbin srv sys tmp usr var
root@test-pd:/# cd /usr/local/
bin/ etc/ games/ include/ lib/ man/ sbin/ share/ src/
root@test-pd:/# cd /usr/share/nginx/html/
root@test-pd:/usr/share/nginx/html# ls
index.html
root@test-pd:/usr/share/nginx/html# exit
exit
```


Get the IP and test access. It succeeds:

```
[root@server1 nfs]# kubectl get pod -o wide
NAME      READY   STATUS    RESTARTS   AGE    IP              NODE      NOMINATED NODE   READINESS GATES
test-pd   1/1     Running   0          6m3s   10.244.179.89   server2   <none>           <none>
[root@server1 nfs]# curl 10.244.179.89
www.westos.org
```

IV. PersistentVolume

1. Introduction to PersistentVolume

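A PersistentVolume (PV) is a piece of networked storage in the cluster that an administrator has provisioned.

- Just as a node is a cluster resource, a PV is also a resource in the cluster.
- Like a Volume, a PV is a volume plugin, but its lifecycle is independent of any Pod that uses it.
- The PV API object captures the implementation details of the storage, such as NFS, iSCSI or a cloud storage system.

A PersistentVolumeClaim (PVC) is a user's request for storage. It is similar to a Pod: Pods consume node resources, and PVCs consume PV resources. A Pod can request specific resources (such as CPU and memory). A PVC can request a specific size and access mode, for example mounted read-write once or read-only many times.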

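There are two ways to provision PVs: static and dynamic.

- Static PV: the cluster administrator creates a number of PVs that carry the details of the real storage available to cluster users. They exist in the Kubernetes API and are available for consumption.
- Dynamic PV: when none of the static PVs created by the administrator matches a user's PVC, the cluster may try to provision a volume specifically for that PVC. This provisioning is based on a StorageClass.

The binding between a PVC and a PV is a one-to-one mapping. If no matching PV is found, the PVC stays unbound indefinitely.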
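To make the one-to-one binding explicit, a claim can also name the PV it wants through spec.volumeName. The sketch below is an assumed example, not part of the original lab:

- it reuses the pv1 PV and the nfs class that this article defines later in pv.yml;
- the claim name pvc-pinned is hypothetical;
- if pv1 is already bound to another claim, or does not satisfy the request, this PVC simply stays Pending.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc-pinned          # hypothetical claim name
spec:
  storageClassName: nfs     # must match the PV's storageClassName
  volumeName: pv1           # bind only to this PV (pv1 from pv.yml)
  accessModes:
  - ReadWriteOnce           # must be offered by pv1
  resources:
    requests:
      storage: 1Gi          # must not exceed pv1's 5Gi capacity
```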

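2. How PersistentVolumes work

Using

- A Pod uses a PVC the same way it uses a volume. The cluster inspects the PVC, finds the bound PV and mounts that PV for the Pod. For PVs that support several access modes, the user specifies which mode to use. Once a user has a bound PVC, the PV belongs to that user for as long as they need it. Users schedule Pods and access their PVs by including the PVC in the Pod's volumes section.

Releasing

- When users are done with a PV, they can delete the PVC object through the API. After the PVC is deleted, the corresponding PV is considered "released", but it cannot yet be used by another PVC. The previous claimant's data is still on the volume and must be handled according to the reclaim policy.

Reclaiming

- The reclaim policy of a PV tells the cluster what to do with the PV after it is released. A PV can currently be Retained, Recycled or Deleted.
  - Retain allows the resource to be reclaimed manually.
  - For PVs that support it, Delete removes both the PV object from Kubernetes and the associated external storage (such as an AWS EBS, GCE PD, Azure Disk or Cinder volume).
  - Dynamically provisioned volumes inherit the reclaim policy of their StorageClass, which defaults to Delete.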

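Access modes

- ReadWriteOnce: the volume can be mounted read-write by a single node
- ReadOnlyMany: the volume can be mounted read-only by many nodes
- ReadWriteMany: the volume can be mounted read-write by many nodes

On the command line, the access modes are abbreviated as:

- RWO - ReadWriteOnce
- ROX - ReadOnlyMany
- RWX - ReadWriteMany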

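Reclaim policies

- Retain: keep the volume; it must be reclaimed manually
- Recycle: scrub the data on the volume automatically
- Delete: delete the associated storage asset, such as an AWS EBS, GCE PD, Azure Disk or OpenStack Cinder volume

Currently, only NFS and HostPath support recycling, while AWS EBS, GCE PD, Azure Disk and OpenStack Cinder volumes support deletion. The Recycle policy is deprecated, and dynamic provisioning is the recommended replacement.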
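A PV that keeps its data after its claim is deleted uses the Retain policy. The minimal sketch below assumes the same NFS server and a hypothetical directory /mnt/nfs/pv3.

Once such a PV is Released, an administrator can:

1. back up or clean the data;
2. remove spec.claimRef (for example with kubectl edit pv pv3), so that the PV becomes Available again.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv3                              # hypothetical PV name
spec:
  capacity:
    storage: 5Gi
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain  # data survives deletion of the bound PVC
  storageClassName: nfs
  nfs:
    path: /mnt/nfs/pv3                   # hypothetical export subdirectory; must already exist
    server: 172.25.3.1
```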

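Status

- Available: a free resource not yet bound to a PVC
- Bound: bound to a PVC
- Released: the PVC has been deleted, but the cluster has not yet reclaimed the PV
- Failed: automatic reclamation of the PV failed
- The command line shows the name of the PVC that a PV is bound to.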

3. NFS persistent storage (static PV)

Install and configure NFS:

```
[root@server1 volumes]# yum install -y nfs-utils
Loaded plugins: product-id, search-disabled-repos, subscription-manager
This system is not registered with an entitlement server. You can use subscription-manager to register.
Package 1:nfs-utils-1.3.0-0.61.el7.x86_64 already installed and latest version
Nothing to do
[root@server1 volumes]# vim /etc/exports
[root@server1 volumes]# systemctl start nfs
[root@server1 volumes]# showmount -e
Export list for server1:
/mnt/nfs *
```

Create the PV manifest pv.yml, with the paths /mnt/nfs/pv1 and /mnt/nfs/pv2:

```
[root@server1 volumes]# vim pv.yml
[root@server1 volumes]# kubectl apply -f pv.yml
persistentvolume/pv1 created
persistentvolume/pv2 created
[root@server1 volumes]# kubectl get pv
NAME   CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM   STORAGECLASS   REASON   AGE
pv1    5Gi        RWO            Recycle          Available           nfs                     3s
pv2    10Gi       RWX            Recycle          Available           nfs                     3s
[root@server1 volumes]# cat pv.yml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv1
spec:
  capacity:
    storage: 5Gi
  volumeMode: Filesystem
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: nfs
  mountOptions:
    - hard
    - nfsvers=4.1
  nfs:
    path: /mnt/nfs/pv1
    server: 172.25.3.1
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv2
spec:
  capacity:
    storage: 10Gi
  volumeMode: Filesystem
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: nfs
  mountOptions:
    - hard
    - nfsvers=4.1
  nfs:
    path: /mnt/nfs/pv2
    server: 172.25.3.1
```

Create the PVC manifest pvc.yml. The two claims end up bound to pv1 and pv2 respectively:

```
[root@server1 volumes]# vim pvc.yml
[root@server1 volumes]# kubectl apply -f pvc.yml
persistentvolumeclaim/pvc1 created
persistentvolumeclaim/pvc2 created
[root@server1 volumes]# kubectl get pvc
NAME   STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
pvc1   Bound    pv1      5Gi        RWO            nfs            6s
pvc2   Bound    pv2      10Gi       RWX            nfs            6s
```

Check the PVs again. The CLAIM column shows default/pvc1 and default/pvc2, meaning the PVs are bound:

```
[root@server1 volumes]# kubectl get pv
NAME   CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM          STORAGECLASS   REASON   AGE
pv1    5Gi        RWO            Recycle          Bound    default/pvc1   nfs                     114s
pv2    10Gi       RWX            Recycle          Bound    default/pvc2   nfs                     114s
[root@server1 volumes]# cat pvc.yml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc1
spec:
  storageClassName: nfs
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc2
spec:
  storageClassName: nfs
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 10Gi
```

Create Pods that use the persistent storage:

```
[root@server1 volumes]# vim pod.yml
[root@server1 volumes]# kubectl apply -f pod.yml
pod/test-pd-1 created
pod/test-pd-2 created
[root@server1 volumes]# cat pod.yml
apiVersion: v1
kind: Pod
metadata:
  name: test-pd-1
spec:
  containers:
  - image: nginx
    name: nginx
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: pv1
  volumes:
  - name: pv1
    persistentVolumeClaim:
      claimName: pvc1
---
apiVersion: v1
kind: Pod
metadata:
  name: test-pd-2
spec:
  containers:
  - image: nginx
    name: nginx
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: pv2
  volumes:
  - name: pv2
    persistentVolumeClaim:
      claimName: pvc2
```

Write a different index.html into each of the shared directories pv1 and pv2:

```
[root@server1 volumes]# echo pv1.westos > /mnt/nfs/pv1/index.html
[root@server1 volumes]# echo pv2.westos > /mnt/nfs/pv2/index.html
```

Get the IPs and test. Each Pod serves its own content:

```
[root@server1 volumes]# kubectl get pod -o wide
NAME        READY   STATUS    RESTARTS   AGE   IP             NODE      NOMINATED NODE   READINESS GATES
test-pd-1   1/1     Running   0          83s   10.244.22.19   server4   <none>           <none>
test-pd-2   1/1     Running   0          83s   10.244.22.20   server4   <none>           <none>
[root@server1 volumes]# curl 10.244.22.19
pv1.westos
[root@server1 volumes]# curl 10.244.22.20
pv2.westos
```

Delete and re-create the Pods. The PVCs still exist and can be used again. The Pod IPs change, so look them up again:

```
[root@server1 volumes]# kubectl delete -f pod.yml
pod "test-pd-1" deleted
pod "test-pd-2" deleted
[root@server1 volumes]# kubectl get pvc
NAME   STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
pvc1   Bound    pv1      5Gi        RWO            nfs            7m3s
pvc2   Bound    pv2      10Gi       RWX            nfs            7m3s
[root@server1 volumes]# kubectl apply -f pod.yml
pod/test-pd-1 created
pod/test-pd-2 created
[root@server1 volumes]# kubectl get pod -o wide
NAME        READY   STATUS    RESTARTS   AGE   IP             NODE      NOMINATED NODE   READINESS GATES
test-pd-1   1/1     Running   0          13s   10.244.22.22   server4   <none>           <none>
test-pd-2   1/1     Running   0          13s   10.244.22.21   server4   <none>           <none>
[root@server1 volumes]# curl 10.244.22.22
pv1.westos
[root@server1 volumes]# curl 10.244.22.21
pv2.westos
```

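Delete the PVCs. The statically provisioned PVs are not deleted; their status changes from Bound to Released. With the Recycle policy, they should return to Available once the volume has been scrubbed.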

```
[root@server1 volumes]# kubectl delete -f pvc.yml
persistentvolumeclaim "pvc1" deleted
persistentvolumeclaim "pvc2" deleted
[root@server1 volumes]# kubectl get pvc
No resources found in default namespace.
[root@server1 volumes]# kubectl get pv
NAME   CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS     CLAIM          STORAGECLASS   REASON   AGE
pv1    5Gi        RWO            Recycle          Released   default/pvc1   nfs                     11m
pv2    10Gi       RWX            Recycle          Released   default/pvc2   nfs                     11m
```

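4. NFS persistent storage (dynamic PV)

A StorageClass provides a way to describe "classes" of storage. Different classes might map to different quality-of-service levels, backup policies or other policies.

Each StorageClass contains the provisioner, parameters and reclaimPolicy fields, which are used when the StorageClass needs to provision a PersistentVolume dynamically.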

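StorageClass attributes

Provisioner: decides which volume plugin is used to provision PVs. This field is required. Either an internal or an external provisioner can be specified. The code for external provisioners is at kubernetes-incubator/external-storage, which includes NFS, Ceph and others. That repository has since been archived; its NFS client provisioner continues as nfs-subdir-external-provisioner, the image used below.

Reclaim Policy: the reclaimPolicy field sets the reclaim policy of the PersistentVolumes the class creates. It is either Delete or Retain, and defaults to Delete if unspecified.

For more attributes, see: https://kubernetes.io/zh/docs/concepts/storage/storage-classes/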
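For comparison with the StorageClass used below, here is a sketch of a class that keeps its provisioned volumes. It assumes the same westos.org/nfs provisioner, and the class name managed-nfs-retain is hypothetical.

With reclaimPolicy set to Retain:

- PVs are left in the Released state when their claims are deleted;
- the provisioner is not asked to delete or archive the directory on the NFS server.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-retain      # hypothetical class name
provisioner: westos.org/nfs     # must equal PROVISIONER_NAME of the running provisioner
reclaimPolicy: Retain           # overrides the Delete default
```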

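The NFS Client Provisioner is an automatic provisioner that uses NFS as its storage and automatically creates PVs for PVCs. It does not provide NFS storage itself, so an existing external NFS service is required.

- PVs are provisioned on the NFS server as directories named ${namespace}-${pvcName}-${pvName}.
- When a PV is reclaimed, its directory on the NFS server is renamed to archived-${namespace}-${pvcName}-${pvName}. In the lab below, with nfs-subdir-external-provisioner v4.0.0, the archived directory appeared as archived-${pvName}.
- nfs-client-provisioner source code: https://github.com/kubernetes-incubator/external-storage/tree/master/nfs-client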

Dynamic PV provisioning with NFS

Write the manifest. It sets up RBAC authorization, deploys the NFS Client Provisioner and creates the NFS StorageClass:

```
[root@server1 nfs-client]# cat nfs-client-provisioner.yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: nfs-client-provisioner
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: nfs-client-provisioner
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: nfs-client-provisioner
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: nfs-client-provisioner
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: nfs-client-provisioner
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: nfs-subdir-external-provisioner:v4.0.0
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: westos.org/nfs
            - name: NFS_SERVER
              value: 172.25.3.1
            - name: NFS_PATH
              value: /mnt/nfs
      volumes:
        - name: nfs-client-root
          nfs:
            server: 172.25.3.1
            path: /mnt/nfs
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
provisioner: westos.org/nfs
parameters:
  archiveOnDelete: "true"
[root@server1 nfs-client]# cat claim.yaml
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: nfs-pv1
  annotations:
    volume.beta.kubernetes.io/storage-class: "managed-nfs-storage"
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 100Mi
[root@server1 nfs-client]# cat pod.yml
apiVersion: v1
kind: Pod
metadata:
  name: test-pd-1
spec:
  containers:
  - image: nginx
    name: nginx
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: zy-pv
  volumes:
  - name: zy-pv
    persistentVolumeClaim:
      claimName: nfs-pv1
```

First create the nfs-client-provisioner namespace, into which the provisioner components are deployed for the rest of this lab:

```
[root@server1 nfs-client]# kubectl create namespace nfs-client-provisioner
namespace/nfs-client-provisioner created
```

Apply the manifest and check the StorageClass:

```
[root@server1 nfs-client]# kubectl apply -f nfs-client-provisioner.yaml
serviceaccount/nfs-client-provisioner created
clusterrole.rbac.authorization.k8s.io/nfs-client-provisioner-runner unchanged
clusterrolebinding.rbac.authorization.k8s.io/run-nfs-client-provisioner unchanged
role.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created
rolebinding.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created
deployment.apps/nfs-client-provisioner created
storageclass.storage.k8s.io/managed-nfs-storage unchanged
[root@server1 nfs-client]# kubectl get sc
NAME                  PROVISIONER      RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
managed-nfs-storage   westos.org/nfs   Delete          Immediate           false                  52s
```

Create the PVC. A matching PV is provisioned automatically. Check the PV and the PVC:

```
[root@server1 nfs-client]# vim claim.yaml
[root@server1 nfs-client]# kubectl apply -f claim.yaml
persistentvolumeclaim/nfs-pv1 created
[root@server1 nfs-client]# kubectl get pv
NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM             STORAGECLASS          REASON   AGE
pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1   100Mi      RWX            Delete           Bound    default/nfs-pv1   managed-nfs-storage            9s
[root@server1 nfs-client]# kubectl get pvc
NAME      STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS          AGE
nfs-pv1   Bound    pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1   100Mi      RWX            managed-nfs-storage   12s
```

The PVC manifest:

```
[root@server1 nfs-client]# cat claim.yaml
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: nfs-pv1
  annotations:
    #volume.beta.kubernetes.io/storage-class: "managed-nfs-storage"
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 100Mi
```
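The volume.beta.kubernetes.io/storage-class annotation is the legacy way to select a class. Current Kubernetes versions use the spec.storageClassName field instead. A sketch of the same claim written that way, otherwise unchanged:

```yaml
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: nfs-pv1
spec:
  storageClassName: managed-nfs-storage   # replaces the beta annotation
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 100Mi
```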

Create the Pod:

```
[root@server1 nfs-client]# kubectl apply -f pod.yml
pod/test-pd-1 created
[root@server1 nfs-client]# kubectl get pod
NAME        READY   STATUS    RESTARTS   AGE
test-pd-1   1/1     Running   0          7s
```

Manifest contents:

```
[root@server1 nfs-client]# cat pod.yml
apiVersion: v1
kind: Pod
metadata:
  name: test-pd-1
spec:
  containers:
  - image: nginx
    name: nginx
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: zy-pv
  volumes:
  - name: zy-pv
    persistentVolumeClaim:
      claimName: nfs-pv1
```

The shared directory now contains default-nfs-pv1-pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1. The dynamic provisioner created this directory automatically to store the volume's data. Create index.html in it:

```
[root@server1 nfs-client]# cd /mnt/nfs/
[root@server1 nfs]# ls
default-nfs-pv1-pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1 pv1 pv2
[root@server1 nfs]# cd default-nfs-pv1-pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1/
[root@server1 default-nfs-pv1-pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1]# ls
SUCCESS
[root@server1 default-nfs-pv1-pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1]# echo www.westos.org > index.html
[root@server1 default-nfs-pv1-pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1]# cd
```

Get the IP and test access. The default page content is returned:

```
[root@server1 ~]# kubectl get pod -o wide
NAME        READY   STATUS    RESTARTS   AGE   IP             NODE      NOMINATED NODE   READINESS GATES
test-pd-1   1/1     Running   0          64s   10.244.22.46   server4   <none>           <none>
[root@server1 ~]# curl 10.244.22.46
www.westos.org
```

Delete the Pod and the PVC. The data on the volume still exists, kept as archived-pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1:

```
[root@server1 nfs-client]# kubectl delete -f claim.yaml
persistentvolumeclaim "nfs-pv1" deleted
[root@server1 nfs-client]# kubectl get pv
No resources found
[root@server1 nfs-client]# kubectl get pvc
No resources found in default namespace.
[root@server1 nfs-client]# ls /mnt/nfs/
archived-pvc-7eb44af7-ec63-4853-95ba-9b1509d061a1 pv1 pv2
```

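To stop archiving the data, delete the StorageClass first and then re-apply nfs-client-provisioner.yaml. The parameters of an existing StorageClass cannot be updated in place, which is why the class is deleted first: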

```
[root@server1 nfs-client]# kubectl delete sc managed-nfs-storage
storageclass.storage.k8s.io "managed-nfs-storage" deleted
```

Change archiveOnDelete from "true" to "false", re-create the resources and check the directory:
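Only the StorageClass document at the end of nfs-client-provisioner.yaml changes. The edited file is not shown, so the fragment below is reconstructed from the description above:

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
provisioner: westos.org/nfs
parameters:
  archiveOnDelete: "false"   # remove the directory on the NFS server instead of archiving it
```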

```
[root@server1 nfs-client]# kubectl apply -f nfs-client-provisioner.yaml
serviceaccount/nfs-client-provisioner created
clusterrole.rbac.authorization.k8s.io/nfs-client-provisioner-runner created
clusterrolebinding.rbac.authorization.k8s.io/run-nfs-client-provisioner created
role.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created
rolebinding.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created
deployment.apps/nfs-client-provisioner created
storageclass.storage.k8s.io/managed-nfs-storage created
[root@server1 nfs-client]# ls /mnt/nfs/
pv1 pv2
[root@server1 nfs-client]# kubectl apply -f claim.yaml
persistentvolumeclaim/nfs-pv1 created
[root@server1 nfs-client]# kubectl apply -f pod.yml
pod/test-pd-1 created
[root@server1 nfs-client]# ls /mnt/nfs/
default-nfs-pv1-pvc-04552a00-0059-465e-ac35-8d9c61596b47 pv1 pv2
```

Delete the Pod and the PVC. This time the directory is removed as well:

```
[root@server1 nfs-client]# kubectl delete -f claim.yaml
[root@server1 nfs-client]# kubectl delete -f pod.yml
pod "test-pd-1" deleted
[root@server1 nfs-client]# kubectl delete -f claim.yaml
persistentvolumeclaim "nfs-pv1" deleted
[root@server1 nfs-client]# ls /mnt/nfs/
pv1 pv2
```

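V. StatefulSet

1. Setting a default StorageClass

- The default StorageClass is used to dynamically provision storage for PersistentVolumeClaims that do not ask for a particular storage class. Only one StorageClass should be marked as the default.
- If there is no default StorageClass and a PVC does not set storageClassName, the PVC can only bind to PVs whose storageClassName is also "" (empty).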

```
[root@server1 nfs-client]# kubectl patch storageclass managed-nfs-storage -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'
storageclass.storage.k8s.io/managed-nfs-storage patched
[root@server1 nfs-client]# kubectl get sc
NAME                            PROVISIONER      RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
managed-nfs-storage (default)   westos.org/nfs   Delete          Immediate           false                  15s
```
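The patch above only sets an annotation, so the same result can be declared directly in the StorageClass manifest. A claim that must not use the default class can opt out with an explicit empty storageClassName. Both fragments are sketches; the claim name static-only is hypothetical.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"   # same effect as the kubectl patch
provisioner: westos.org/nfs
parameters:
  archiveOnDelete: "false"
---
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: static-only          # hypothetical claim name
spec:
  storageClassName: ""       # explicitly no class: binds only to PVs without a class
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
```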

Apply a claim that does not specify a StorageClass:

```
[root@server1 nfs-client]# cat claim.yaml
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: nfs-pv1
  annotations:
    #volume.beta.kubernetes.io/storage-class: "managed-nfs-storage"
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 100Mi
```

The claim is created successfully. The PV shows that it automatically uses the default StorageClass managed-nfs-storage:

```
[root@server1 nfs-client]# kubectl apply -f claim.yaml
persistentvolumeclaim/nfs-pv1 created
[root@server1 nfs-client]# kubectl get pv
NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM             STORAGECLASS          REASON   AGE
pvc-22524877-c04c-498e-955a-5f121a1e40cc   100Mi      RWX            Delete           Bound    default/nfs-pv1   managed-nfs-storage            5s
```

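2. Pod topology with StatefulSet

StatefulSet abstracts application state into two cases:

- Topology state: the application instances must start in a particular order, and a re-created Pod must have the same network identity as the original Pod.
- Storage state: the instances of the application are each bound to different stored data.

StatefulSet numbers all of its Pods using the rule $(statefulset name)-$(ordinal), starting from 0.

When a Pod is deleted and re-created, its network identity does not change. The Pod's topology state is fixed by its "name + ordinal", and each Pod gets a fixed, unique access entry: the DNS record for that Pod.

Note: StatefulSet maintains the Pods' topology state through a Headless Service, so the Headless Service must be configured first.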

```
[root@server1 statefulset]# kubectl apply -f nginx-svc.yml
service/nginx created
[root@server1 statefulset]# kubectl get svc
NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   6d18h
nginx        ClusterIP   None         <none>        80/TCP    7s
[root@server1 statefulset]# cat nginx-svc.yml
apiVersion: v1
kind: Service
metadata:
  name: nginx
  labels:
    app: nginx
spec:
  ports:
  - port: 80
    name: web
  clusterIP: None
  selector:
    app: nginx
```

Contents of statefulset.yml:

```
[root@server1 statefulset]# cat statefulset.yml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: web
spec:
  serviceName: "nginx"
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx
        ports:
        - containerPort: 80
          name: web
```

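StatefulSet numbers each Pod and creates the Pods in order starting from 0. This ordering makes it easy to perform advanced operations based on the ordinal.

Set replicas: 6 so that six Pods are created one after another, and watch the process: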

```
[root@server1 statefulset]# kubectl apply -f statefulset.yml
statefulset.apps/web configured
[root@server1 statefulset]# kubectl get pod -w
NAME    READY   STATUS              RESTARTS   AGE
web-0   1/1     Running             0          40s
web-1   1/1     Running             0          39s
web-2   1/1     Running             0          3s
web-3   0/1     ContainerCreating   0          2s
web-3   1/1     Running             0          2s
web-4   0/1     Pending             0          0s
web-4   0/1     Pending             0          0s
web-4   0/1     ContainerCreating   0          0s
web-4   0/1     ContainerCreating   0          1s
web-4   1/1     Running             0          1s
web-5   0/1     Pending             0          0s
web-5   0/1     Pending             0          0s
web-5   0/1     ContainerCreating   0          0s
web-5   0/1     ContainerCreating   0          1s
web-5   1/1     Running             0          2s
^C
```

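Do not delete the Pods of a StatefulSet directly, because the controller would re-create them. Instead, set replicas to 0. The Pods are then terminated one by one in reverse ordinal order. The same method is used to scale the StatefulSet.

Pod creation also follows the ordinals strictly. For example, web-1 is not launched until web-0 is Running and its Ready condition is true.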

```
[root@server1 statefulset]# vim statefulset.yml
[root@server1 statefulset]# kubectl apply -f statefulset.yml
statefulset.apps/web configured
[root@server1 statefulset]# kubectl get pod -w
NAME    READY   STATUS        RESTARTS   AGE
web-0   1/1     Running       0          69s
web-1   1/1     Running       0          68s
web-2   1/1     Running       0          32s
web-3   1/1     Running       0          31s
web-4   1/1     Running       0          29s
web-5   0/1     Terminating   0          28s
web-5   0/1     Terminating   0          37s
web-5   0/1     Terminating   0          37s
web-4   1/1     Terminating   0          38s
web-4   1/1     Terminating   0          38s
web-4   0/1     Terminating   0          38s
web-4   0/1     Terminating   0          46s
web-4   0/1     Terminating   0          46s
web-3   1/1     Terminating   0          48s
web-3   1/1     Terminating   0          48s
web-3   0/1     Terminating   0          49s
web-3   0/1     Terminating   0          50s
web-3   0/1     Terminating   0          50s
web-2   1/1     Terminating   0          51s
web-2   1/1     Terminating   0          51s
web-2   0/1     Terminating   0          53s
web-2   0/1     Terminating   0          54s
web-2   0/1     Terminating   0          54s
web-1   1/1     Terminating   0          90s
web-1   1/1     Terminating   0          90s
web-1   0/1     Terminating   0          90s
web-1   0/1     Terminating   0          91s
web-1   0/1     Terminating   0          91s
web-0   1/1     Terminating   0          92s
web-0   1/1     Terminating   0          92s
web-0   0/1     Terminating   0          94s
web-0   0/1     Terminating   0          95s
web-0   0/1     Terminating   0          95s
```
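The one-by-one behavior shown above is the default OrderedReady pod management policy. If an application does not need ordering, a StatefulSet can launch and terminate its Pods in parallel instead.

The fragment below is a sketch of that variant of statefulset.yml:

- only podManagementPolicy is new;
- it affects scaling, not rolling updates;
- it cannot be changed on an existing StatefulSet, so it has to be set when the StatefulSet is created.

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: web
spec:
  serviceName: "nginx"
  podManagementPolicy: Parallel   # default is OrderedReady
  replicas: 6
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx
        ports:
        - containerPort: 80
          name: web
```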

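Start an interactive Pod and check the Service's DNS records.

1. The lookup of nginx-svc fails, because the headless Service created above is named nginx.
2. Looking up nginx returns one record per Pod.
3. Each Pod can then be reached through its own DNS name: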

```
[root@server1 statefulset]# kubectl run demo --image=busyboxplus -it
If you don't see a command prompt, try pressing enter.
/ # nslookup nginx-svc
Server:    10.96.0.10
Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local
nslookup: can't resolve 'nginx-svc'
/ # exit
Session ended, resume using 'kubectl attach demo -c demo -i -t' command when the pod is running
[root@server1 statefulset]# vim statefulset.yml
[root@server1 statefulset]# kubectl get svc
NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   6d18h
nginx        ClusterIP   None         <none>        80/TCP    14m
[root@server1 statefulset]# kubectl attach demo -it
If you don't see a command prompt, try pressing enter.
/ # nslookup nginx
Server:    10.96.0.10
Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local
Name:      nginx
Address 1: 10.244.179.111 web-0.nginx.default.svc.cluster.local
Address 2: 10.244.22.56 web-1.nginx.default.svc.cluster.local
/ # curl web-0.nginx.default.svc.cluster.local
Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
/ # curl web-1.nginx.default.svc.cluster.local
Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
```

3. StatefulSet + PVC

The PV/PVC design is what makes it possible for a StatefulSet to manage storage state:

```
[root@server1 statefulset]# kubectl get svc
NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   6d18h
nginx-svc    ClusterIP   None         <none>        80/TCP    3s
```

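A StatefulSet also allocates and creates a PVC with the same ordinal for each Pod. Kubernetes can then bind a matching PV to that PVC through the PersistentVolume mechanism, which guarantees that every Pod has its own independent volume.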

Manifest contents:

```
[root@server1 statefulset]# cat statefulset.yml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: web
spec:
  serviceName: "nginx-svc"
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: myapp:v1
        ports:
        - containerPort: 80
          name: web
        volumeMounts:
        - name: www
          mountPath: /usr/share/nginx/html
  volumeClaimTemplates:
  - metadata:
      name: www
    spec:
      #storageClassName: nfs
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 1Gi
```

Apply the manifest and check the Pods:

```
[root@server1 statefulset]# kubectl apply -f statefulset.yml
statefulset.apps/web created
[root@server1 statefulset]# kubectl get pod
NAME    READY   STATUS      RESTARTS   AGE
demo    0/1     Completed   4          23m
web-0   1/1     Running     0          10m
web-1   1/1     Running     0          10m
web-2   1/1     Running     0          10m
```

Check the PVs and PVCs:

```
[root@server1 statefulset]# kubectl get svc
NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   6d18h
nginx-svc    ClusterIP   None         <none>        80/TCP    3m29s
[root@server1 statefulset]# kubectl get pv
NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM               STORAGECLASS          REASON   AGE
pvc-037cf46b-d7f5-41f6-b156-17799899daaa   1Gi        RWO            Delete           Bound    default/www-web-2   managed-nfs-storage            12m
pvc-22524877-c04c-498e-955a-5f121a1e40cc   100Mi      RWX            Delete           Bound    default/nfs-pv1     managed-nfs-storage            51m
pvc-5a835670-8962-4714-8311-c8a441bf98eb   1Gi        RWO            Delete           Bound    default/www-web-0   managed-nfs-storage            12m
pvc-b227ba79-3f52-4d34-a1fd-655ff1516592   1Gi        RWO            Delete           Bound    default/www-web-1   managed-nfs-storage            12m
[root@server1 statefulset]# kubectl get pvc
NAME        STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS          AGE
nfs-pv1     Bound    pvc-22524877-c04c-498e-955a-5f121a1e40cc   100Mi      RWX            managed-nfs-storage   51m
www-web-0   Bound    pvc-5a835670-8962-4714-8311-c8a441bf98eb   1Gi        RWO            managed-nfs-storage   12m
www-web-1   Bound    pvc-b227ba79-3f52-4d34-a1fd-655ff1516592   1Gi        RWO            managed-nfs-storage   12m
www-web-2   Bound    pvc-037cf46b-d7f5-41f6-b156-17799899daaa   1Gi        RWO            managed-nfs-storage   12m
```

Attach to the interactive Pod, check DNS resolution and test load balancing.

- Per-Pod access format: web-1.nginx-svc
- Load-balancing test: nginx-svc

```
[root@server1 statefulset]# kubectl attach demo -it
If you don't see a command prompt, try pressing enter.
/ # curl nginx-svc
web-1
/ # nslookup nginx-svc
Server:    10.96.0.10
Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local
Name:      nginx-svc
Address 1: 10.244.22.58 web-2.nginx-svc.default.svc.cluster.local
Address 2: 10.244.179.113 web-1.nginx-svc.default.svc.cluster.local
Address 3: 10.244.22.57 web-0.nginx-svc.default.svc.cluster.local
/ # curl web-1.nginx-svc
web-1
/ # curl web-2.nginx-svc
web-2
/ # curl web-0.nginx-svc
web-0
/ # curl nginx-svc
web-0
/ # curl nginx-svc
web-2
/ # curl nginx-svc
web-2
/ # curl nginx-svc
web-1
```

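The data is stored under real paths on the NFS server. Scaling the Pods down and back up does not affect it, and re-created Pods still see their content: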

```
[root@server1 statefulset]# ls /mnt/nfs/
default-nfs-pv1-pvc-22524877-c04c-498e-955a-5f121a1e40cc
default-www-web-0-pvc-5a835670-8962-4714-8311-c8a441bf98eb
default-www-web-1-pvc-b227ba79-3f52-4d34-a1fd-655ff1516592
default-www-web-2-pvc-037cf46b-d7f5-41f6-b156-17799899daaa
```
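By default, the PVCs created from volumeClaimTemplates are kept when the StatefulSet is scaled down or deleted, which is why the directories above survive. Newer Kubernetes releases let you declare this explicitly with persistentVolumeClaimRetentionPolicy; the feature has been beta and enabled by default since about v1.27.

The fragment below is a sketch that spells out the default behavior. It would be merged into the spec of statefulset.yml; check that your cluster version supports the field.

```yaml
# Hypothetical addition to statefulset.yml; requires a Kubernetes version
# with the StatefulSet PVC retention policy feature enabled.
spec:
  persistentVolumeClaimRetentionPolicy:
    whenDeleted: Retain   # keep the www-web-N PVCs when the StatefulSet is deleted
    whenScaled: Retain    # keep PVCs of Pods removed by a scale-down (Delete would remove them)
```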
