【目录】

损失函数概念

交叉熵损失函数

NLL/BCE/BCEWithLogits Loss

1、损失函数概念

损失函数Loss Function 计算模型输出与真实标签之间的差异

代价函数Cost Function 计算训练集中所有样本的模型输出与真实标签差异的平均值

目标函数Objective Function 是最终要达到的,其中的正则项Regularization是为了避免模型过拟合,对模型进行的一些约束

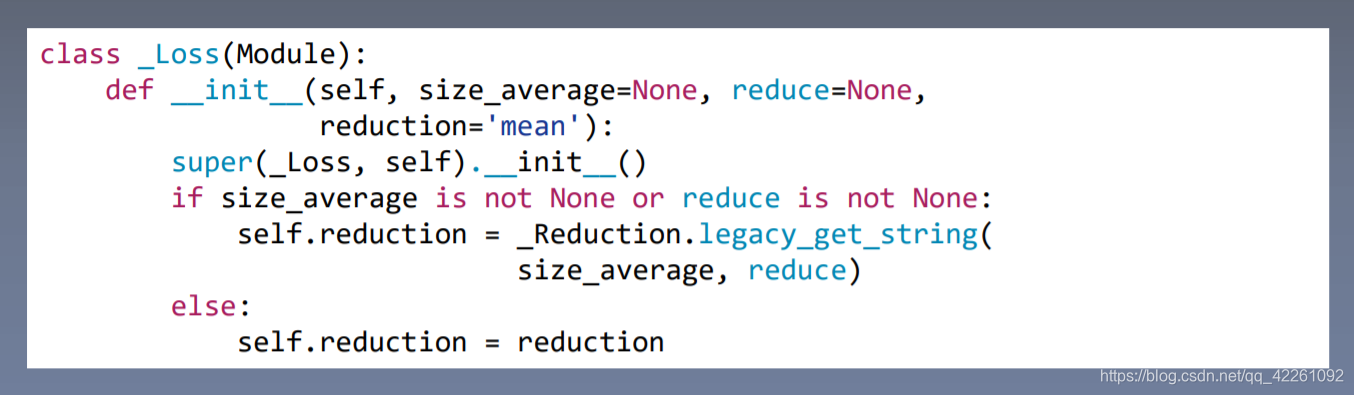

Loss继承自Module,可以看做一个网络层

2、交叉熵损失函数

熵即为信息熵,表示样本概率×样本自信息的一个均值:xi事件发生的概率×这个事件的取值

为了更好理解熵的大小与时间不确定性大小的关系,如右图所示两点分布的信息熵曲线

横轴为事件发生概率,纵轴为信息熵。当事件发生的概率为0.5的时候,信息熵最大,不确定性最大,信息熵为0.69。在二分类模型中loss值若为0.69,则模型不具备判别能力,对任意输入都认为是50%的可能性。

相对熵又称为KL散度,分析两个参数之间的差异,表示P到Q的距离,训练样本熵-模型输出熵

P是真实的一个概率分布,也就是训练集中样本的一个分布;

Q是模型输出的一个分布

交叉熵越小,表示两个两个分布越近

在深度学习中,去优化(最小化)交叉熵等价于去优化相对熵,因为训练集是固定的,所以信息熵H(P)是一个常数

-

nn.CrossEntropyLoss

先用Softmax将取值进行归一化处理到概率取值的一个范围,即0到1这个区间,然后利用Log和NLLLoss中的负号来计算交叉熵

x为输出的概率值,class为类别值。x[class]表示:类别为class这一神经元的输出,然后对每一个神经元的输出值进行指数运算然后求和

Pxi是等于1的因为这个样本是已经取出来的;因为只是计算一个样本,因此sigma求和也是没有的

weight需要对每个loss进行权值的缩放,weight张量里的元素代表缩放系数,例如有[0,1]两个类别,则weight需要设置为[1,2]两个元素

# fake data

inputs = torch.tensor([[1, 2], [1, 3], [1, 3]], dtype=torch.float)#三组输入,网络层有两个神经元

target = torch.tensor([0, 1, 1], dtype=torch.long)#第一个样本设置为第0类,第2,3个样本设置为第1类

#三个标签,类型设置为long长整型,有多少个样本,向量就有多长

#---------------------------- CrossEntropy loss: reduction -------------------------------#

#对神经元向量计算loss

# def loss function

loss_f_none = nn.CrossEntropyLoss(weight=None, reduction='none')

loss_f_sum = nn.CrossEntropyLoss(weight=None, reduction='sum')

loss_f_mean = nn.CrossEntropyLoss(weight=None, reduction='mean')

# forward

loss_none = loss_f_none(inputs, target)#输出值为tensor([1.3133,0.1269,0.1269])

loss_sum = loss_f_sum(inputs, target)#输出值为tensor(1.5271)

loss_mean = loss_f_mean(inputs, target)#输出值为tensor(0.5224)

# view

print("Cross Entropy Loss:\n ", loss_none, loss_sum, loss_mean)加了权重weight的交叉熵损失

# def loss function

weights = torch.tensor([1, 2], dtype=torch.float)#设置为1,则loss尺度不会变;将类别为0的权值设置为1,类别为1的权值设置为2

# weights = torch.tensor([0.7, 0.3], dtype=torch.float)

loss_f_none_w = nn.CrossEntropyLoss(weight=weights, reduction='none')#乘以对应的权重,类别为0不变化,类别为1乘以2

loss_f_sum = nn.CrossEntropyLoss(weight=weights, reduction='sum')

loss_f_mean = nn.CrossEntropyLoss(weight=weights, reduction='mean')

# forward

loss_none_w = loss_f_none_w(inputs, target)

loss_sum = loss_f_sum(inputs, target)

loss_mean = loss_f_mean(inputs, target)#mean是通过权值求平均,之前除以3,现在除以5

# view

print("\nweights: ", weights)

print(loss_none_w, loss_sum, loss_mean)

3、NLL/BCE/BCEWithLogits Loss

-

nn.NLLLoss

nn.NLLLoss只是执行了一个负号功能,并没有进行复杂的计算

# ----------------------------------- 2 NLLLoss -----------------------------------

weights = torch.tensor([1, 1], dtype=torch.float)

loss_f_none_w = nn.NLLLoss(weight=weights, reduction='none')

loss_f_sum = nn.NLLLoss(weight=weights, reduction='sum')

loss_f_mean = nn.NLLLoss(weight=weights, reduction='mean')

# forward

#tensor([[1, 2], [1, 3], [1, 3]])#三组输入,网络层有两个神经元

#target = torch.tensor([0, 1, 1])#标签值,类别0取前者,类别1取后者

loss_none_w = loss_f_none_w(inputs, target)#输出为tensor([-1,-3,-3])

loss_sum = loss_f_sum(inputs, target)

loss_mean = loss_f_mean(inputs, target)

# view

print("\nweights: ", weights)

print("NLL Loss", loss_none_w, loss_sum, loss_mean)-

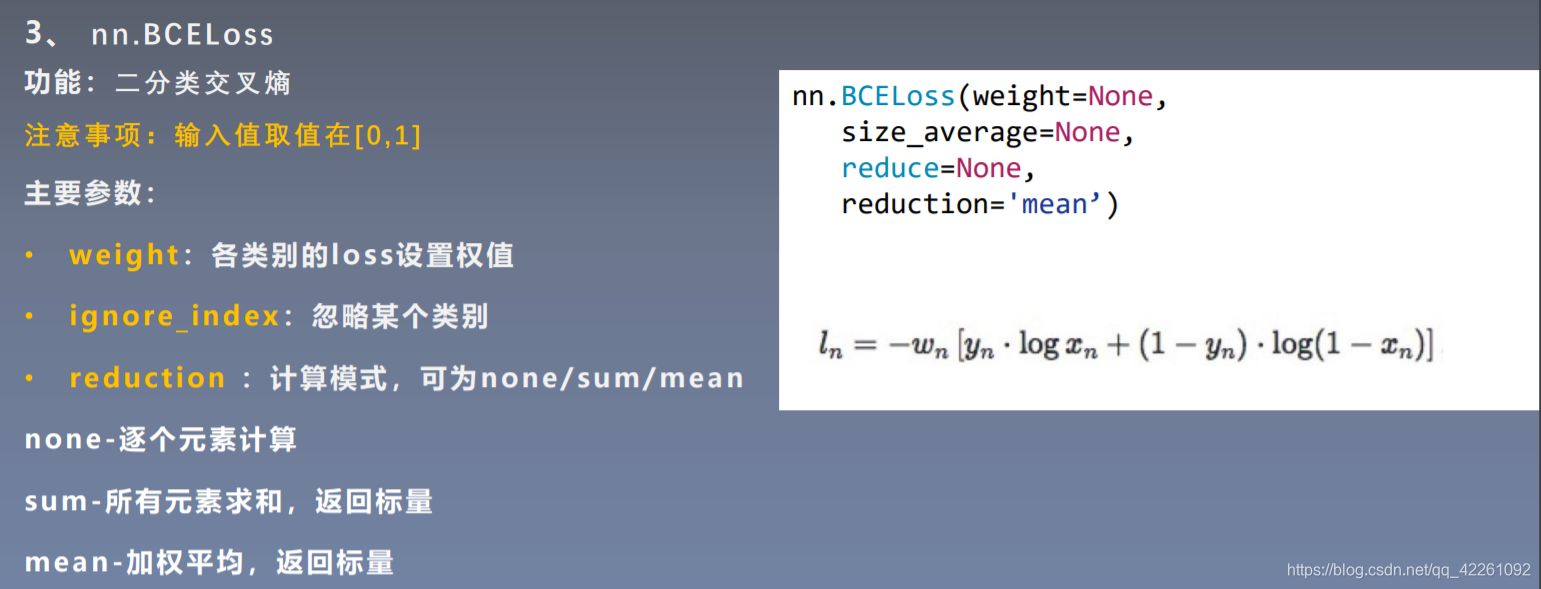

nn.BCELoss 二分类交叉熵损失函数

每一个神经元逐一对应的去计算loss,四个(一对神经元数据)输入,会有八个输出,而不是对每个神经元向量计算Loss

要将输出值压缩到0-1之间变为概率,torch.sigmoid()

# ----------------------------------- 3 BCE Loss -----------------------------------

#对每个神经元一一计算loss,而不是对神经元向量计算loss

inputs = torch.tensor([[1, 2], [2, 2], [3, 4], [4, 5]], dtype=torch.float)

target = torch.tensor([[1, 0], [1, 0], [0, 1], [0, 1]], dtype=torch.float)

target_bce = target

# itarget

inputs = torch.sigmoid(inputs)

weights = torch.tensor([1, 1], dtype=torch.float)

loss_f_none_w = nn.BCELoss(weight=weights, reduction='none')

loss_f_sum = nn.BCELoss(weight=weights, reduction='sum')

loss_f_mean = nn.BCELoss(weight=weights, reduction='mean')

# forward

loss_none_w = loss_f_none_w(inputs, target_bce)

#输出BEC Loss tensor([[0.3133,2.1269],

#[0.1269,2.1269],

#[3.0486,0.0181],

#[4.0181,0.0067]])

loss_sum = loss_f_sum(inputs, target_bce)

loss_mean = loss_f_mean(inputs, target_bce)

# view

print("\nweights: ", weights)

print("BCE Loss", loss_none_w, loss_sum, loss_mean)-

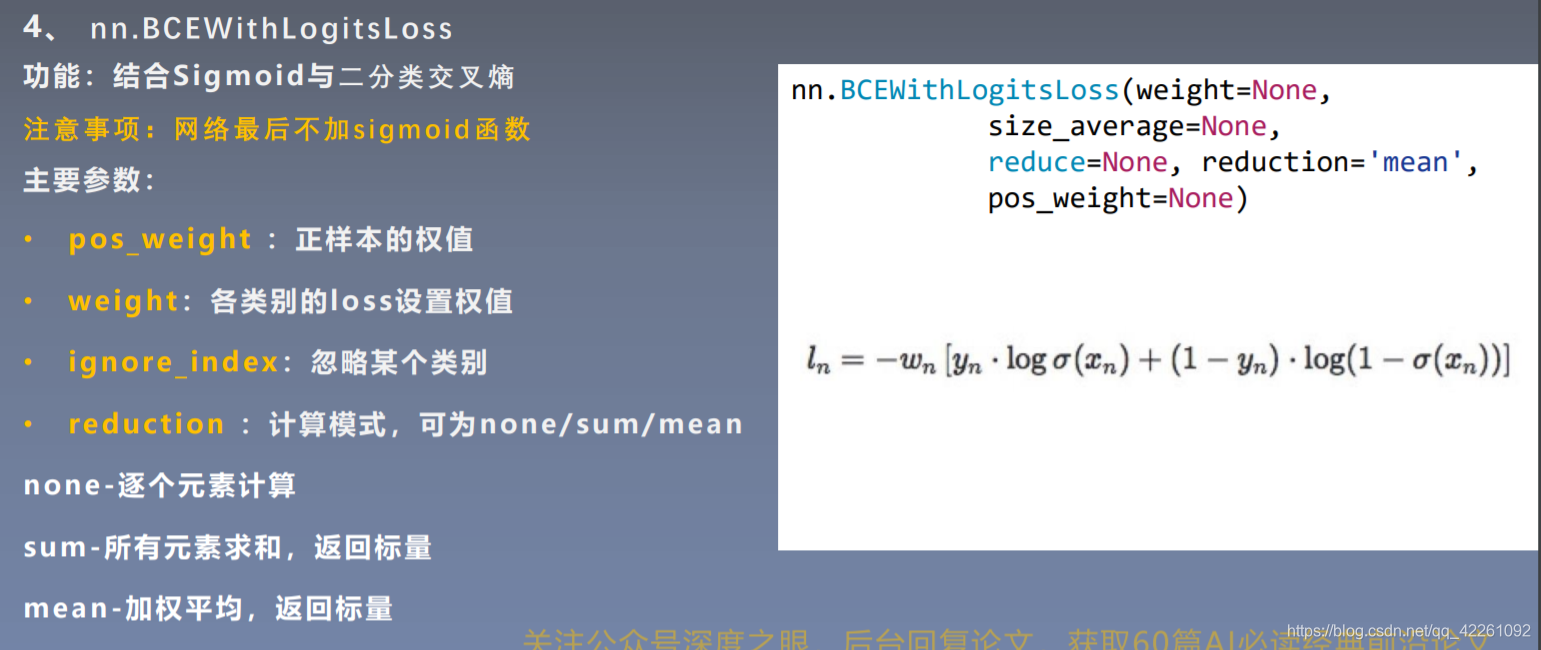

nn.BCEWithLogitsLoss

因为有时候模型最后并不希望添加Sigmoid函数,但计算Loss的时候又希望有一个[0,1]的概率分布,有了这个函数就不需要在模型中加sigmoid

pos_weight用于实现正负样本的均衡,比如说正样本有100个,负样本有300个,这时pos_weight设置为3,即可均衡正负样本

# -------------------------------- 4 BCE with Logis Loss ----------------------------------

inputs = torch.tensor([[1, 2], [2, 2], [3, 4], [4, 5]], dtype=torch.float)

target = torch.tensor([[1, 0], [1, 0], [0, 1], [0, 1]], dtype=torch.float)

target_bce = target

# inputs = torch.sigmoid(inputs)

weights = torch.tensor([1, 1], dtype=torch.float)

loss_f_none_w = nn.BCEWithLogitsLoss(weight=weights, reduction='none')

loss_f_sum = nn.BCEWithLogitsLoss(weight=weights, reduction='sum')

loss_f_mean = nn.BCEWithLogitsLoss(weight=weights, reduction='mean')

# forward

loss_none_w = loss_f_none_w(inputs, target_bce)

loss_sum = loss_f_sum(inputs, target_bce)

loss_mean = loss_f_mean(inputs, target_bce)

# view

print("\nweights: ", weights)

print(loss_none_w, loss_sum, loss_mean)

773

773

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?