from sklearn.ensemble import RandomForestClassifier

from sklearn.ensemble import VotingClassifier

from sklearn.linear_model import LogisticRegression

from sklearn.svm import SVC

log_clf = LogisticRegression()

rnd_clf = RandomForestClassifier()

svm_clf = SVC()

voting_clf = VotingClassifier(estimators=[('lr', log_clf), ('rf', rnd_clf), ('svc', svm_clf)],voting='hard')

voting_clf.fit(X_train, y_train)

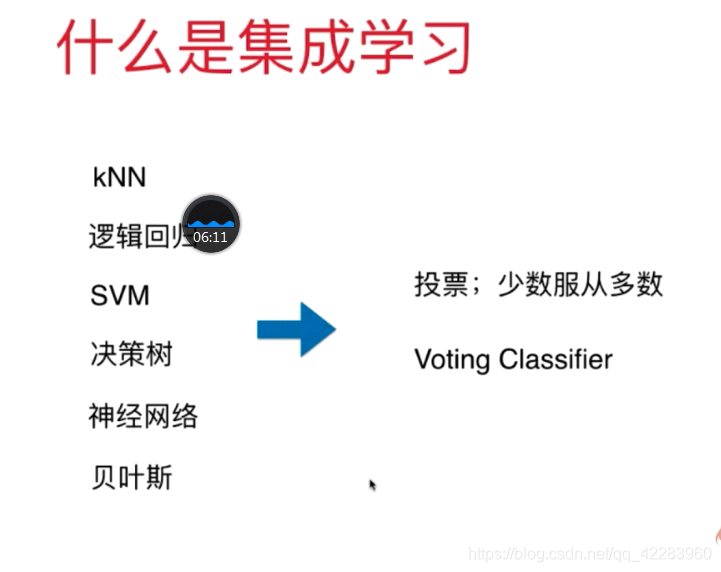

逻辑回归、kNN、决策树、SVM

log_clf = LogisticRegression()

svm_clf = SVC(probability=True)

voting_clf = VotingClassifier(estimators=[('lr', log_clf),('svc',svm_clf)],voting='soft')

voting_clf.fit(X_train, y_train)

from sklearn.ensemble import BaggingClassifier

from sklearn.tree import DecisionTreeClassifier

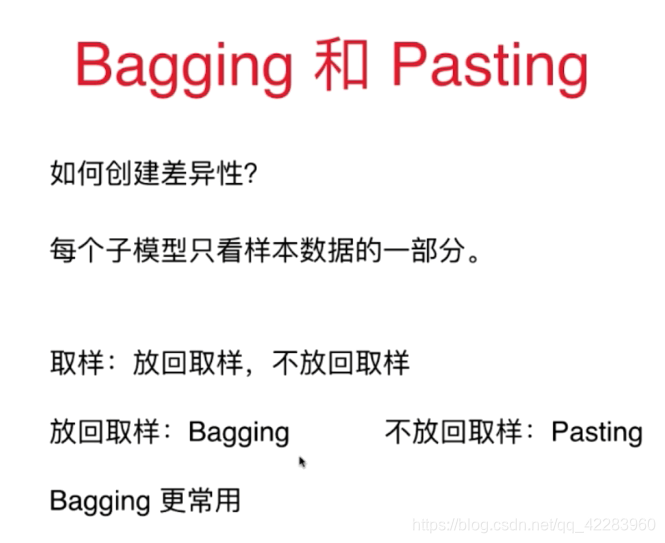

bag_clf = BaggingClassifier(DecisionTreeClassifier(), n_estimators=500, max_samples=100, bootstrap=True, n_jobs=-1)

#子模型数量、每次取的样本数、有放回

bag_clf.fit(X_train, y_train)

y_pred = bag_clf.predict(X_test)

bag_clf = BaggingClassifier(DecisionTreeClassifier(), n_estimators=500,bootstrap=True, n_jobs=-1, oob_score=True)

bag_clf.fit(X_train, y_train)

bag_clf.oob_score_

当你在处理高维度输入下(例如图片) 此方法尤其有效。对训练实例和特征的采样被叫做随机贴片。保留了所有的训练实例(如 bootstrap=False 和 max_samples=1.0 ) ,但是对特征采样(bootstrap_features=True 并且/或者 max_features 小于 1.0) 叫做随机子空间。

from sklearn.ensemble import RandomForestClassifier

rnd_clf = RandomForestClassifier(n_estimators=500, max_leaf_nodes=16,n_jobs=-1)

rnd_clf.fit(X_train, y_train)

y_pred_rf = rnd_clf.predict(X_test)

ExtraTreesClassifier

from sklearn.ensemble import AdaBoostClassifier

ada_clf = AdaBoostClassifier(DecisionTreeClassifier(max_depth=1), n_estimators=200,algorithm="SAMME.R", learning_rate=0.5)

ada_clf.fit(X_train, y_train)

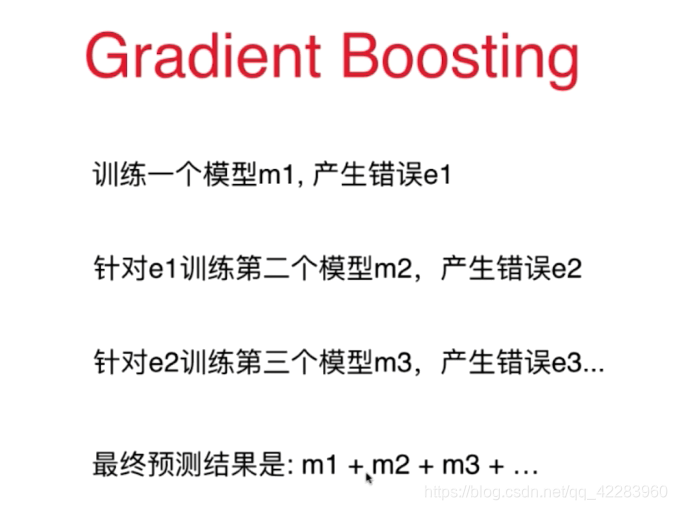

from sklearn.ensemble import GradientBoostingRegressor

gbrt = GradientBoostingRegressor(max_depth=2, n_estimators=3, learning_rate=1.0)

gbrt.fit(X, y)

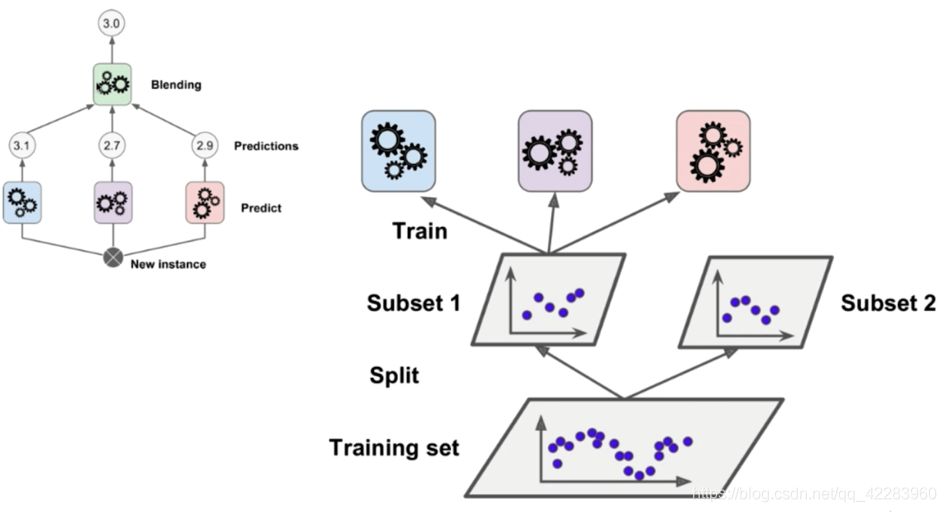

Stacking

573

573

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?