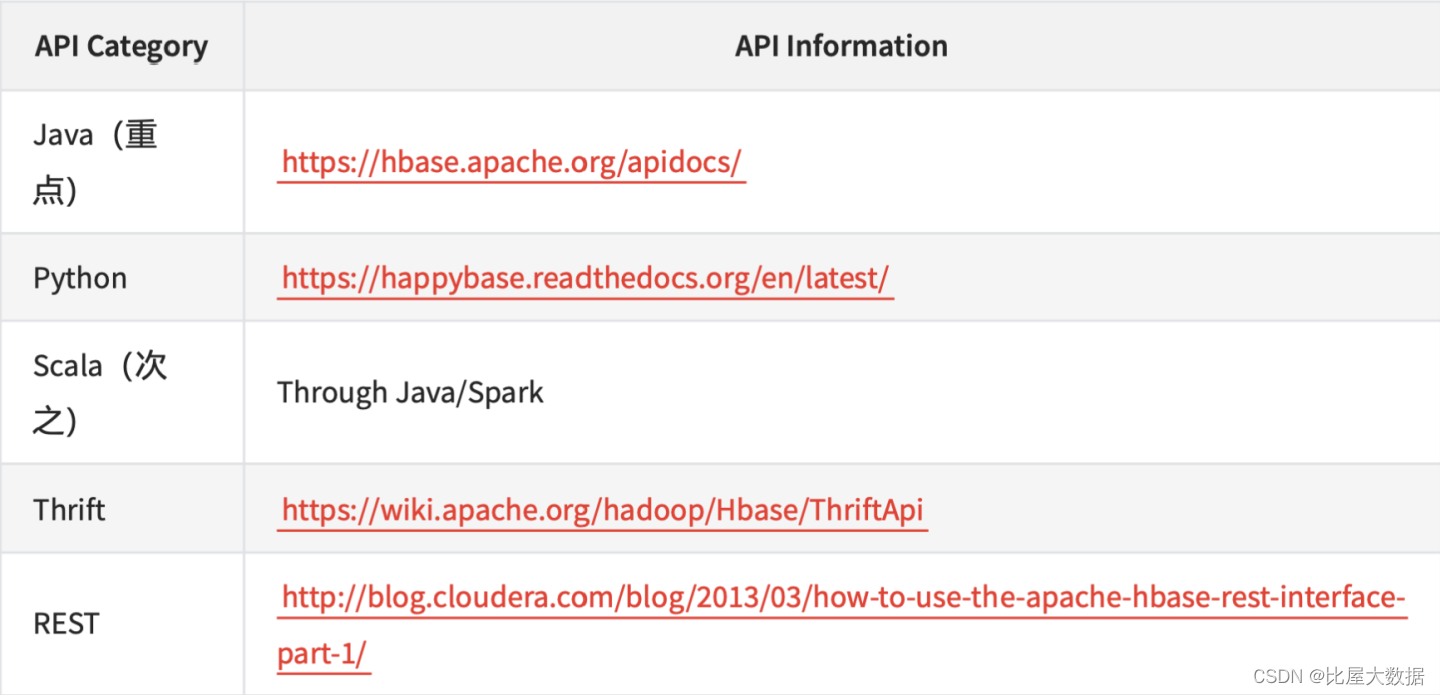

How to Use HBase: the API

The official API is Java.

It supports the full set of HBase commands.

External APIs: Apache HBase ™ Reference Guide (official documentation)

HBase Java API

HBase REST API Demo (the HBase REST interface; for reference)

Start/stop the REST service

hbase-daemon.sh start rest -p 9081

hbase-daemon.sh stop rest -p 9081

Examples

http://localhost:9081/version

http://localhost:9081/<table_name>/schema

http://localhost:9081/<table_name>/<row_key>

# Create a table

curl -v -X PUT 'http://hadoop5:9081/contact/schema' \
  -H "Accept: application/json" \
  -H "Content-Type: application/json" \
  -d '{"@name":"contact","ColumnSchema":[{"name":"data"}]}'

# Insert data (row key, column, and value must be base64-encoded in the JSON body)

TABLE='contact'

FAMILY='data'

KEY=$(echo 'rowkey1' | tr -d "\n" | base64)

COLUMN=$(echo 'data:test' | tr -d "\n" | base64)

DATA=$(echo 'some data' | tr -d "\n" | base64)

curl -v -X PUT 'http://hadoop5:9081/contact/rowkey1/data:test' \
  -H "Accept: application/json" \
  -H "Content-Type: application/json" \
  -d '{"Row":[{"key":"'$KEY'","Cell":[{"column":"'$COLUMN'","$":"'$DATA'"}]}]}'
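The base64 encoding performed by the shell variables above can also be done programmatically. The following is a minimal sketch using only the Java standard library; the class and method names are illustrative and not part of any HBase library, and the JSON layout simply mirrors the request body used in the curl command.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Illustrative helper (hypothetical name): builds the JSON body for a
// single-cell PUT against the HBase REST server.
final class RestCellPayload {

    // Base64-encode a UTF-8 string; the REST JSON format expects the row key,
    // the column name, and the cell value to be encoded this way.
    static String b64(String s) {
        return Base64.getEncoder().encodeToString(s.getBytes(StandardCharsets.UTF_8));
    }

    // Assemble {"Row":[{"key":...,"Cell":[{"column":...,"$":...}]}]}.
    static String singleCellJson(String rowKey, String column, String value) {
        return "{\"Row\":[{\"key\":\"" + b64(rowKey)
                + "\",\"Cell\":[{\"column\":\"" + b64(column)
                + "\",\"$\":\"" + b64(value) + "\"}]}]}";
    }

    public static void main(String[] args) {
        // Same row key, column, and value as the curl example.
        System.out.println(singleCellJson("rowkey1", "data:test", "some data"));
    }
}
```

The printed string can be passed as the `-d` argument of the PUT request in place of the hand-assembled body.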

# Get the data for a given row key

curl -v -X GET 'http://hadoop5:9081/contact/rowkey1/' \
  -H "Accept: application/json"
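The JSON returned by the GET request carries the row key, column names, and cell values in base64, so they have to be decoded before they are readable. A minimal sketch, assuming a response shaped like the request body used for the insert; the sample string in `main` is illustrative rather than captured server output, and the regex extraction stands in for a proper JSON parser.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Base64;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Illustrative helper (hypothetical name): decodes cell values from an
// HBase REST JSON response.
final class RestCellDecoder {

    // Matches "$":"<base64>" pairs, which hold the cell values.
    private static final Pattern VALUE = Pattern.compile("\"\\$\":\"([^\"]*)\"");

    // Decode one base64 field back into a UTF-8 string.
    static String decode(String b64) {
        return new String(Base64.getDecoder().decode(b64), StandardCharsets.UTF_8);
    }

    // Collect every decoded cell value found in the response text.
    static List<String> cellValues(String json) {
        List<String> values = new ArrayList<>();
        Matcher m = VALUE.matcher(json);
        while (m.find()) {
            values.add(decode(m.group(1)));
        }
        return values;
    }

    public static void main(String[] args) {
        // Illustrative response body, not real server output.
        String sample = "{\"Row\":[{\"key\":\"cm93a2V5MQ==\",\"Cell\":"
                + "[{\"column\":\"ZGF0YTp0ZXN0\",\"$\":\"c29tZSBkYXRh\"}]}]}";
        System.out.println(cellValues(sample));
    }
}
```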

# Delete the table

curl -v -X DELETE 'http://hadoop5:9081/contact/schema' \
  -H "Accept: application/json"

Accessing HBase Through Phoenix

Phoenix Is:

- A SQL skin for HBase.

- Provides a SQL interface for managing data in HBase.

- Lets you create tables, insert and update data, and perform low-latency lookups through JDBC.

- The Phoenix JDBC driver is easily embedded in any application that supports JDBC.
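The JDBC points above might look like this in practice. This is a minimal sketch, assuming the Phoenix client JAR is on the classpath, ZooKeeper is reachable at localhost:2181, and the HBase znode is `/hbase-unsecure` (common on an unsecured HDP cluster, but an assumption here). The class name and the `jdbc_demo` table are hypothetical.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

// Illustrative sketch (hypothetical names): basic Phoenix access over JDBC.
final class PhoenixJdbcSketch {

    // Phoenix thick-driver URL: jdbc:phoenix:<zookeeper quorum>:<port>:<znode>
    static String jdbcUrl(String zkQuorum, int zkPort, String znode) {
        return "jdbc:phoenix:" + zkQuorum + ":" + zkPort + ":" + znode;
    }

    public static void main(String[] args) {
        // Host, port, and znode are assumptions; adjust them for your cluster.
        String url = jdbcUrl("localhost", 2181, "/hbase-unsecure");
        try (Connection conn = DriverManager.getConnection(url);
             Statement stmt = conn.createStatement()) {
            stmt.executeUpdate(
                "CREATE TABLE IF NOT EXISTS jdbc_demo (id INTEGER PRIMARY KEY, name VARCHAR)");
            stmt.executeUpdate("UPSERT INTO jdbc_demo VALUES (1, 'hello')");
            // Phoenix connections do not auto-commit by default.
            conn.commit();
            try (ResultSet rs = stmt.executeQuery("SELECT id, name FROM jdbc_demo")) {
                while (rs.next()) {
                    System.out.println(rs.getInt(1) + " " + rs.getString(2));
                }
            }
        } catch (SQLException e) {
            // Reached when the driver is missing or the cluster is unreachable.
            System.err.println("Phoenix connection or query failed: " + e.getMessage());
        }
    }
}
```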

Phoenix Is NOT:

- A replacement for an RDBMS. (Relational databases are more rigorously designed and more mature.)

- Why? No transactions, a lack of integrity constraints, and many other areas that are still maturing.

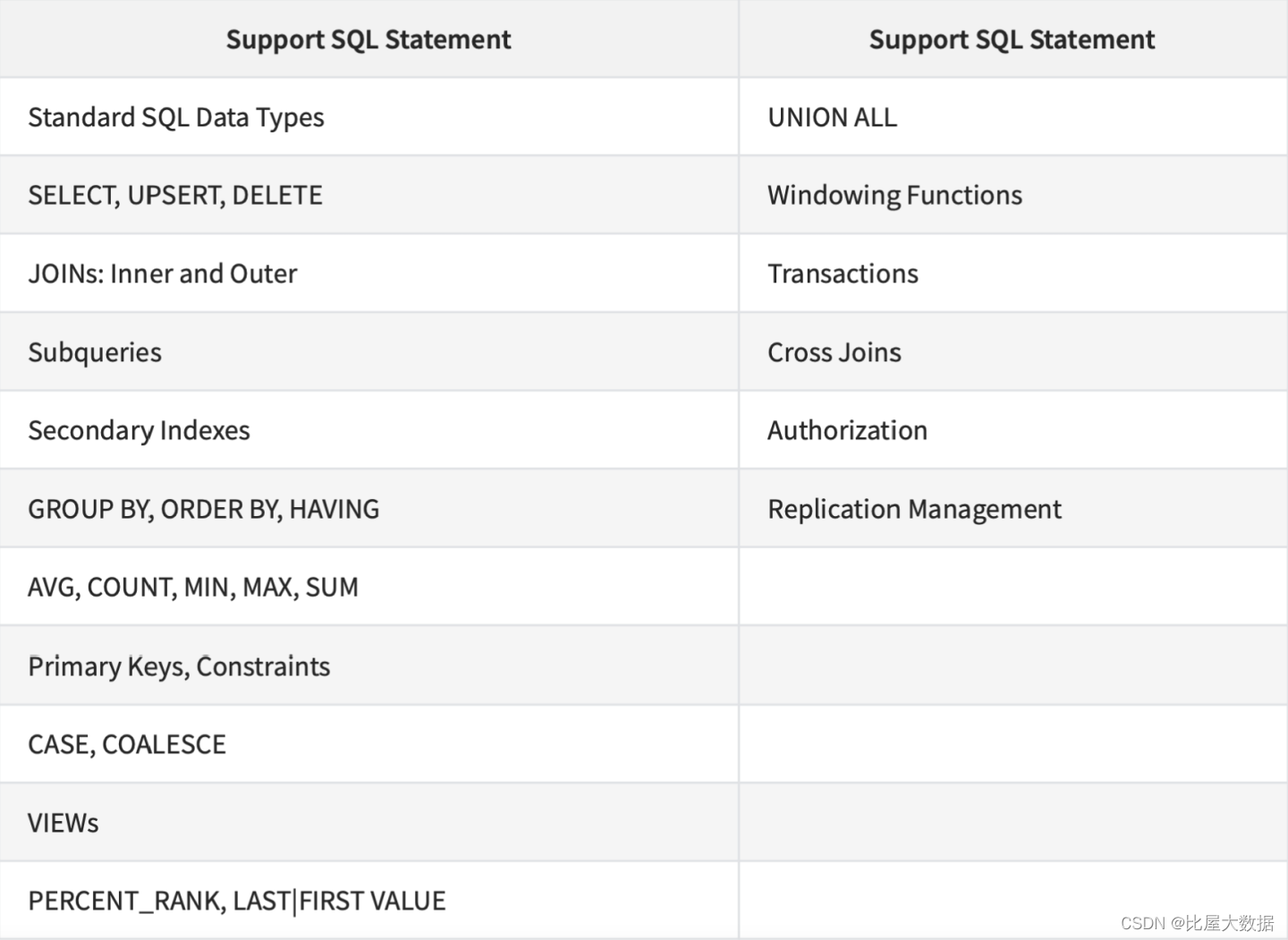

Phoenix Makes HBase Better:

- Killer features such as secondary indexes, joins, and aggregation pushdowns.

- Phoenix is a good fit when query requirements are not especially demanding.

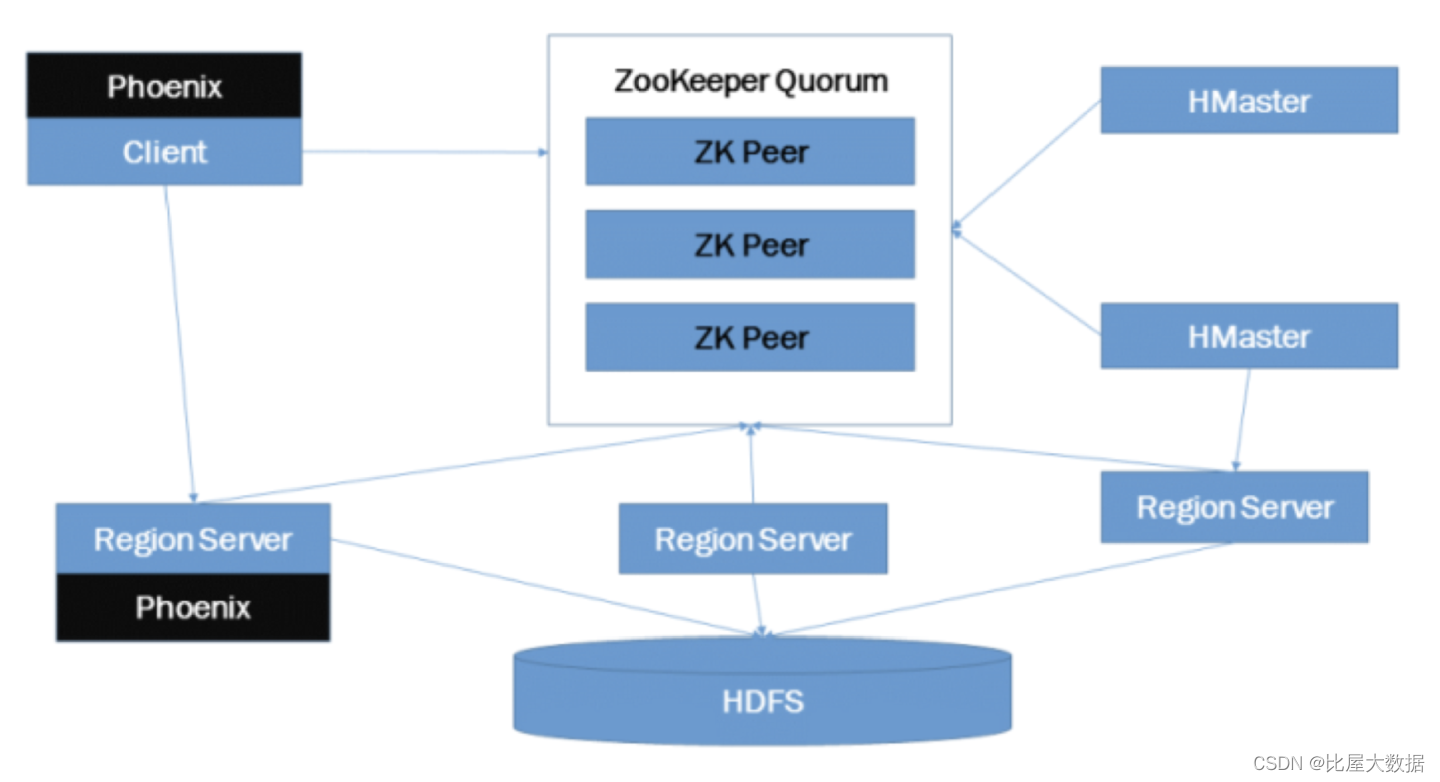

Phoenix Architecture

Phoenix provides SQL-like syntax

// HBase client API code (creating a table without Phoenix)

HBaseAdmin hbase = new HBaseAdmin(conf);

HTableDescriptor empdesc = new HTableDescriptor("employees");

HColumnDescriptor name = new HColumnDescriptor("name".getBytes());

HColumnDescriptor personalinfo = new HColumnDescriptor("personalinfo".getBytes());

HColumnDescriptor contactinfo = new HColumnDescriptor("contactinfo".getBytes());

empdesc.addFamily(name);

empdesc.addFamily(personalinfo);

empdesc.addFamily(contactinfo);

hbase.createTable(empdesc);

// Phoenix DDL syntax

create table employees (

id varchar primary key,

name varchar NOT NULL,

age BIGINT NOT NULL

);

Keywords Supported by Phoenix

Phoenix Use Cases

Phoenix Is A Great Fit For:

- Rapidly and easily building an application backed by HBase.

- Re-using existing SQL skills while making the transition to Hadoop.

- BI tools.

Consider Other Tools For:

- Sophisticated SQL queries involving large joins or advanced SQL features.

- ETL jobs

-- Start the Phoenix client

/usr/hdp/2.6.4.0-91/phoenix/bin/sqlline.py localhost

-- List the existing tables, then create a table

!table

create table company (COMPANY_ID INTEGER PRIMARY KEY, NAME VARCHAR(255));

!columns company

upsert into company values(1,'alibaba');

upsert into company values(2,'baidu');

upsert into company values(3,'xiaomi');

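-- The next two statements reuse key 3; UPSERT overwrites the existing row instead of adding a duplicate

upsert into company values(3,'jinshan');

upsert into company values(3,'jd');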

upsert into company values(4,'tengxun');

upsert into company values(5,'zijietiaodong');

select * from company;

delete from company where company_id = 5;

create table stock (company_id integer primary key, price decimal(10,2));

upsert into stock values(1,124.9);

upsert into stock values(2,99);

upsert into stock values(3,130);

upsert into stock values(4,33.5);

upsert into stock values(5,111.9);

select * from stock;

select s.company_id, c.name, s.price from stock s left join company c on s.company_id = c.company_id;

-- Inspect the tables from the HBase shell

desc 'STOCK'

scan 'STOCK'

scan 'COMPANY'

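-- Note: tables created by Phoenix are visible in HBase, but tables created directly in HBase are not visible in Phoenix unless they are mapped with a Phoenix CREATE VIEW or CREATE TABLE statement.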

Integrating HBase with Hive

- Only SELECT and INSERT are supported; HBase cell versioning is not supported.

- Performance is acceptable.

-- Create the HBase table (HBase shell)

create 'customer_hive',{NAME=>'addr'},{NAME=>'order'}

-- Map the HBase table in Hive and insert data into HBase through Hive

create external table customer (

name string,

order_numb string,

order_date string,

addr_city string,

addr_state string)

stored by 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

with serdeproperties ("hbase.columns.mapping" = ":key,order:numb,order:date,addr:city,addr:state")

tblproperties ("hbase.table.name" = "customer_hive");

select * from customer;

insert into table customer values ('James','1121','2019-05-31','toronto','ON');

insert into table customer values ('Tom','1155','2019-12-31','beijing','ON');
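The `hbase.columns.mapping` property in the table definition above is positional: the first Hive column pairs with the first mapping entry, the second with the second, and so on, with `:key` standing for the HBase row key. The following is a minimal sketch of that pairing in plain Java; the class and method names are hypothetical and nothing here calls Hive or HBase.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Illustrative helper (hypothetical name): pairs Hive columns with the
// entries of an hbase.columns.mapping string by position.
final class HiveHBaseMapping {

    static Map<String, String> pair(List<String> hiveColumns, String columnsMapping) {
        String[] targets = columnsMapping.split(",");
        // The number of mapping entries must match the number of Hive columns.
        if (targets.length != hiveColumns.size()) {
            throw new IllegalArgumentException(
                "expected " + hiveColumns.size() + " mapping entries, got " + targets.length);
        }
        Map<String, String> result = new LinkedHashMap<>();
        for (int i = 0; i < targets.length; i++) {
            result.put(hiveColumns.get(i), targets[i].trim());
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> columns = Arrays.asList(
            "name", "order_numb", "order_date", "addr_city", "addr_state");
        String mapping = ":key,order:numb,order:date,addr:city,addr:state";
        // Print each Hive column next to the HBase row key or family:qualifier it maps to.
        pair(columns, mapping).forEach((hive, hbase) -> System.out.println(hive + " -> " + hbase));
    }
}
```

Read this way, the Hive column `name` is stored as the HBase row key, and `addr_state` is stored in the `addr:state` cell.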

-- Run DML from the HBase shell

scan 'customer_hive'

put 'customer_hive','lisi','order:numb','5555'

put 'customer_hive','wangwu','addr:city','dallas'

put 'customer_hive','wangwu','order:state','aa'

put 'customer_hive','zhaoliu','addr:city','denver'

put 'customer_hive','zhaoliu','order:state','CO1'

put 'customer_hive','zhaoliu','order:numb','1111'

put 'customer_hive','zhugeliang','order:state','TX'

put 'customer_hive','sunquan','addr:state','TX'

scan 'customer_hive'

-- Query again from Hive

select * from customer;

select count(*) from customer;
