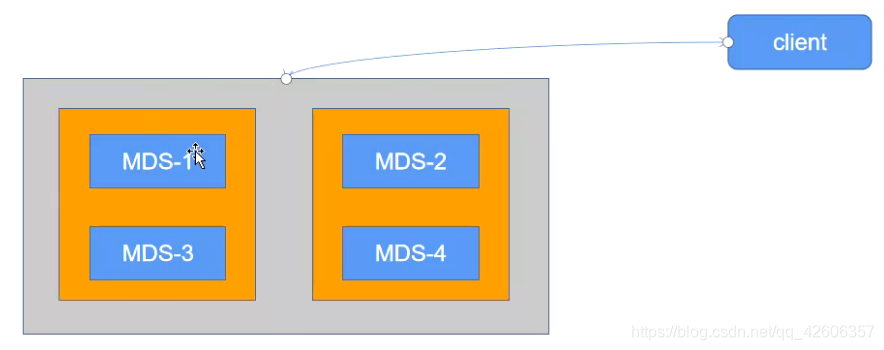

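The Ceph MDS (Metadata Server) is the entry point for CephFS access, so it needs both high performance and redundancy. Suppose four MDS daemons are started and two ranks are configured: two of the daemons are assigned to the two ranks, and the remaining two each act as a standby for one of them.

Each rank can be given a standby MDS, so that if the rank's current MDS fails, another MDS takes over immediately. Standbys can be configured in several ways; the commonly used options are: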

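mds_standby_replay: true or false. true enables standby-replay mode, in which the standby MDS continuously replays the active MDS's data, so it can take over quickly if the active MDS goes down. With false, the data is synchronized only after the failure, which causes a period of interruption.

mds_standby_for_name: makes this MDS daemon a standby only for the MDS with the specified name.

mds_standby_for_rank: makes this MDS daemon a standby only for the specified rank, normally given as the rank number. When multiple CephFS file systems exist, mds_standby_for_fscid can additionally be used to select the file system.

mds_standby_for_fscid: specifies the CephFS file system ID and works together with mds_standby_for_rank. If mds_standby_for_rank is set, the daemon stands by for that rank of the specified file system; if it is not set, the daemon stands by for all ranks of the specified file system.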
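
To make the combination of the rank-based options concrete, here is a minimal sketch that prints a ceph.conf section tying one daemon to one rank of one file system. The helper name `standby_rank_section` and the example values (daemon `ceph-mon1`, rank 0, file system ID 1) are assumptions for illustration; only the three option names come from the text above.

```shell
# Hypothetical helper (not part of Ceph): print a ceph.conf section that makes
# one MDS daemon a standby-replay follower of a specific rank in a specific
# file system.
#   $1 = name of the standby MDS daemon
#   $2 = rank number to follow
#   $3 = numeric CephFS file system ID (fscid)
standby_rank_section() {
  printf '[mds.%s]\n' "$1"
  printf 'mds_standby_for_rank = %s\n' "$2"
  printf 'mds_standby_for_fscid = %s\n' "$3"
  printf 'mds_standby_replay = true\n'
}

# Example values are assumptions: daemon ceph-mon1, rank 0, file system ID 1.
standby_rank_section ceph-mon1 0 1
```

The output can be reviewed and then appended to ceph.conf by hand. Note that these option names belong to the Mimic release used in this post; Nautilus and later releases dropped them in favour of a per-file-system `allow_standby_replay` setting.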

1. Check the current MDS status

[ceph@ceph-deploy ceph-cluster]$ ceph mds stat

mycephfs-1/1/1 up {0=ceph-mgr1=up:active}

2. Add MDS servers

Existing MDS server: mgr1 10.0.0.17

Newly added MDS servers: mgr2 10.0.0.18, mon1 10.0.0.14, mon2 10.0.0.15

# Install the ceph-mds package

[root@ceph-mgr2 ~]# yum install ceph-mds -y

[root@ceph-mon1 ~]# yum install ceph-mds -y

[root@ceph-mon2 ~]# yum install ceph-mds -y

# Add the MDS servers

[ceph@ceph-deploy ceph-cluster]$ ceph-deploy mds create ceph-mgr2

[ceph@ceph-deploy ceph-cluster]$ ceph-deploy mds create ceph-mon1

[ceph@ceph-deploy ceph-cluster]$ ceph-deploy mds create ceph-mon2
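
The three `ceph-deploy mds create` calls differ only in the host name, so they can be generated from a list. The sketch below only prints the commands so they can be reviewed first; the function name `mds_create_cmds` is an assumption, not part of ceph-deploy.

```shell
# Hypothetical helper: print one "ceph-deploy mds create" command per host
# passed as an argument. Nothing is executed here.
mds_create_cmds() {
  for host in "$@"; do
    printf 'ceph-deploy mds create %s\n' "$host"
  done
}

# Hosts taken from the list of newly added MDS servers above.
mds_create_cmds ceph-mgr2 ceph-mon1 ceph-mon2

# To run the commands after review, from the ceph-deploy working directory:
#   mds_create_cmds ceph-mgr2 ceph-mon1 ceph-mon2 | sh
```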

# Verify the current MDS status

[ceph@ceph-deploy ceph-cluster]$ ceph mds stat

mycephfs-1/1/1 up {0=ceph-mgr1=up:active}, 3 up:standby
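
For scripted checks, the standby count can be pulled out of the `ceph mds stat` line. The following is a rough sketch that assumes the single-line Mimic output format shown above; `standby_count` is a hypothetical helper name.

```shell
# Hypothetical helper: read `ceph mds stat` output on stdin and print N from
# the "N up:standby" part, or 0 when no standby is reported.
standby_count() {
  awk '{
    n = 0
    for (i = 2; i <= NF; i++)
      if ($i ~ /^up:standby,?$/) n = $(i - 1)
    print n + 0
  }'
}

# Sample line copied from the output above; on a live cluster use:
#   ceph mds stat | standby_count
echo 'mycephfs-1/1/1 up {0=ceph-mgr1=up:active}, 3 up:standby' | standby_count
```

With the sample line this prints 3. Daemons in the `up:standby-replay` state are deliberately not counted.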

[ceph@ceph-deploy ceph-cluster]$ ceph fs status

mycephfs - 0 clients
========
+------+--------+-----------+---------------+-------+-------+
| Rank | State  | MDS       | Activity      | dns   | inos  |
+------+--------+-----------+---------------+-------+-------+
| 0    | active | ceph-mgr1 | Reqs: 0 /s    | 10    | 13    |
+------+--------+-----------+---------------+-------+-------+
+-----------------+----------+-------+-------+
| Pool            | type     | used  | avail |
+-----------------+----------+-------+-------+
| cephfs-metadata | metadata | 2654  | 281G  |
| cephfs-data     | data     | 0     | 281G  |
+-----------------+----------+-------+-------+
+-------------+
| Standby MDS |
+-------------+
| ceph-mgr2   |
| ceph-mon1   |
| ceph-mon2   |
+-------------+

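3. Set the number of active MDS daemons to 2

With four MDS servers, the file system can run two active and two standby daemons.

# Set max_mds for mycephfs to 2; max_mds is the maximum number of active MDS daemons (ranks)

[ceph@ceph-deploy ceph-cluster]$ ceph fs set mycephfs max_mds 2

# Check that max_mds is now 2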

[ceph@ceph-deploy ceph-cluster]$ ceph fs get mycephfs

Filesystem 'mycephfs' (1)

fs_name mycephfs

epoch 18

flags 12

created 2021-08-09 07:58:49.786248

modified 2021-08-16 01:46:30.913265

tableserver 0

root 0

session_timeout 60

session_autoclose 300

max_file_size 1099511627776

min_compat_client -1 (unspecified)

last_failure 0

last_failure_osd_epoch 66

compat compat={},rocompat={},incompat={1=base v0.20,2=client writeable ranges,3=default file layouts on dirs,4=dir inode in separate object,5=mds uses versioned encoding,6=dirfrag is stored in omap,8=no anchor table,9=file layout v2,10=snaprealm v2}

max_mds 2

in 0,1

up {0=14133,1=14622}

failed

damaged

stopped

data_pools [8]

metadata_pool 7

inline_data disabled

balancer

standby_count_wanted 1

14133: 10.0.0.17:6800/1866575599 'ceph-mgr1' mds.0.6 up:active seq 18

14622: 10.0.0.15:6800/555073892 'ceph-mon2' mds.1.17 up:active seq 206
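
Instead of scanning the whole dump for one value, a single field can be filtered out. This is a small sketch assuming the "name value" layout shown above; `fs_field` is a hypothetical helper name.

```shell
# Hypothetical helper: read `ceph fs get <fs>` output on stdin and print the
# value of one field.
#   $1 = field name as it appears in the first column, e.g. max_mds
fs_field() {
  awk -v key="$1" '$1 == key { print $2; exit }'
}

# Two sample fields copied from the output above; on a live cluster use:
#   ceph fs get mycephfs | fs_field max_mds
printf 'max_mds\t2\nin\t0,1\n' | fs_field max_mds
```

The same helper can read other single-value fields such as `in` or `standby_count_wanted`.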

# Check the current file system status

[ceph@ceph-deploy ceph-cluster]$ ceph fs status

mycephfs - 0 clients
========
+------+--------+-----------+---------------+-------+-------+
| Rank | State  | MDS       | Activity      | dns   | inos  |
+------+--------+-----------+---------------+-------+-------+
| 0    | active | ceph-mgr1 | Reqs: 0 /s    | 10    | 13    |
| 1    | active | ceph-mon2 | Reqs: 0 /s    | 10    | 13    |
+------+--------+-----------+---------------+-------+-------+
+-----------------+----------+-------+-------+
| Pool            | type     | used  | avail |
+-----------------+----------+-------+-------+
| cephfs-metadata | metadata | 3974  | 281G  |
| cephfs-data     | data     | 0     | 281G  |
+-----------------+----------+-------+-------+
+-------------+
| Standby MDS |
+-------------+
| ceph-mgr2   |
| ceph-mon1   |
+-------------+
MDS version: ceph version 13.2.10 (564bdc4ae87418a232fc901524470e1a0f76d641) mimic (stable)

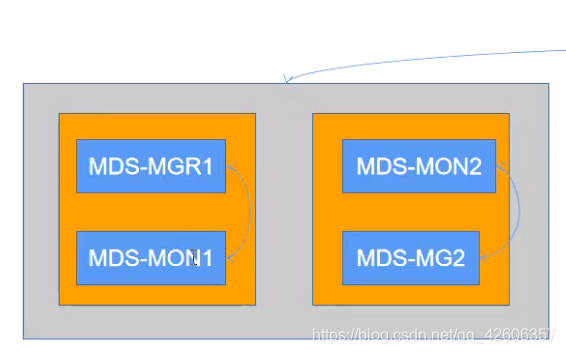

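4. MDS high-availability configuration

Current MDS state:

ceph-mgr1 and ceph-mon2 are both active.

ceph-mon1 and ceph-mgr2 are both standby.

Now make ceph-mon1 the standby for ceph-mgr1, and ceph-mgr2 the standby for ceph-mon2.

The configuration file then looks like this: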

[ceph@ceph-deploy ceph-cluster]$ vim ceph.conf

public_network = 10.0.0.0/24

cluster_network = 192.168.0.0/24

mon_initial_members = ceph-mon1

mon_host = 10.0.0.14

auth_cluster_required = cephx

auth_service_required = cephx

auth_client_required = cephx

mon clock drift allowed = 2

mon clock drift warn backoff = 30 # setting this option avoids warnings caused by unsynchronized clocks

[mds.ceph-mon1]

mds_standby_for_name = ceph-mgr1

mds_standby_replay = true

[mds.ceph-mgr2]

#mds_standby_for_fscid = mycephfs

mds_standby_for_name = ceph-mon2

mds_standby_replay = true
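
Before the file is pushed, it can help to list which daemon is configured to follow which. The sketch below reads ceph.conf-style text and prints each pairing; the function name `standby_pairs` is an assumption, and the parser only handles the simple `key = value` layout used above.

```shell
# Hypothetical helper: read ceph.conf text on stdin and print one
# "<standby> -> <target>" line per mds_standby_for_name entry.
standby_pairs() {
  awk -F' *= *' '
    /^\[mds\./ { name = $0; sub(/^\[mds\./, "", name); sub(/\].*$/, "", name) }
    $1 == "mds_standby_for_name" { print name " -> " $2 }
  '
}

# Sample input copied from the [mds.*] sections above; for the real file use:
#   standby_pairs < ceph.conf
standby_pairs <<'EOF'
[mds.ceph-mon1]
mds_standby_for_name = ceph-mgr1
mds_standby_replay = true
[mds.ceph-mgr2]
#mds_standby_for_fscid = mycephfs
mds_standby_for_name = ceph-mon2
mds_standby_replay = true
EOF
```

Commented-out lines such as `#mds_standby_for_fscid` are skipped because their first field does not match the option name exactly.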

5. Push the configuration file and restart the MDS services

# Push the configuration file to every MDS node

[ceph@ceph-deploy ceph-cluster]$ ceph-deploy --overwrite-conf config push ceph-mon1

[ceph@ceph-deploy ceph-cluster]$ ceph-deploy --overwrite-conf config push ceph-mon2

[ceph@ceph-deploy ceph-cluster]$ ceph-deploy --overwrite-conf config push ceph-mgr1

[ceph@ceph-deploy ceph-cluster]$ ceph-deploy --overwrite-conf config push ceph-mgr2

# Restart the MDS service on each node

[root@ceph-mgr1 ~]# systemctl restart ceph-mds@ceph-mgr1.service

[root@ceph-mgr2 ~]# systemctl restart ceph-mds@ceph-mgr2.service

[root@ceph-mon1 ~]# systemctl restart ceph-mds@ceph-mon1.service

[root@ceph-mon2 ~]# systemctl restart ceph-mds@ceph-mon2.service
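
The push and restart steps repeat the same two commands per host, so they can be generated from one host list. This sketch only prints the commands. It assumes the deploy host can reach each node as root over SSH, which the original steps do not state, and `push_and_restart_cmds` is a hypothetical name.

```shell
# Hypothetical helper: print the config push command and the MDS restart
# command for every host given as an argument. Nothing is executed here.
# Assumption: root SSH access from the deploy host to each node.
push_and_restart_cmds() {
  for host in "$@"; do
    printf 'ceph-deploy --overwrite-conf config push %s\n' "$host"
  done
  for host in "$@"; do
    printf 'ssh root@%s systemctl restart ceph-mds@%s.service\n' "$host" "$host"
  done
}

push_and_restart_cmds ceph-mon1 ceph-mon2 ceph-mgr1 ceph-mgr2
```

All pushes are printed before any restart, so every node has the new file before its daemon comes back up.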

6. MDS high-availability status of the Ceph cluster

[ceph@ceph-deploy ceph-cluster]$ ceph fs get mycephfs

Filesystem 'mycephfs' (1)

fs_name mycephfs

epoch 62

flags 12

created 2021-08-09 07:58:49.786248

modified 2021-08-16 02:13:01.891828

tableserver 0

root 0

session_timeout 60

session_autoclose 300

max_file_size 1099511627776

min_compat_client -1 (unspecified)

last_failure 0

last_failure_osd_epoch 91

compat compat={},rocompat={},incompat={1=base v0.20,2=client writeable ranges,3=default file layouts on dirs,4=dir inode in separate object,5=mds uses versioned encoding,6=dirfrag is stored in omap,8=no anchor table,9=file layout v2,10=snaprealm v2}

max_mds 2

in 0,1

up {0=14742,1=14838}

failed

damaged

stopped

data_pools [8]

metadata_pool 7

inline_data disabled

balancer

standby_count_wanted 1

14742: 10.0.0.15:6800/1127316889 'ceph-mon2' mds.0.55 up:active seq 31

15000: 10.0.0.18:6800/3993964800 'ceph-mgr2' mds.0.0 up:standby-replay seq 2 (standby for rank 0 'ceph-mon2')

14838: 10.0.0.17:6800/3216206710 'ceph-mgr1' mds.1.49 up:active seq 14

15006: 10.0.0.14:6800/1730652745 'ceph-mon1' mds.1.0 up:standby-replay seq 2 (standby for rank 1 'ceph-mgr1')
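
To confirm the pairing without reading the whole dump, the standby-replay lines can be reduced to "who follows whom". This is a minimal sketch assuming the Mimic line format shown above; `replay_pairs` is a hypothetical helper name.

```shell
# Hypothetical helper: read `ceph fs get <fs>` output on stdin and print
# "<standby> follows <active>" for each daemon in up:standby-replay state.
replay_pairs() {
  awk -v q="'" '
    $5 == "up:standby-replay" {
      standby = $3; target = $NF
      gsub(q, "", standby)          # strip the quotes around the daemon name
      gsub("[" q ")]", "", target)  # strip quotes and the closing parenthesis
      print standby " follows " target
    }
  '
}

# Sample lines copied from the output above; on a live cluster use:
#   ceph fs get mycephfs | replay_pairs
replay_pairs <<'EOF'
14742: 10.0.0.15:6800/1127316889 'ceph-mon2' mds.0.55 up:active seq 31
15000: 10.0.0.18:6800/3993964800 'ceph-mgr2' mds.0.0 up:standby-replay seq 2 (standby for rank 0 'ceph-mon2')
14838: 10.0.0.17:6800/3216206710 'ceph-mgr1' mds.1.49 up:active seq 14
15006: 10.0.0.14:6800/1730652745 'ceph-mon1' mds.1.0 up:standby-replay seq 2 (standby for rank 1 'ceph-mgr1')
EOF
```

For the sample lines this reports that ceph-mgr2 follows ceph-mon2 and ceph-mon1 follows ceph-mgr1, which matches the intended configuration.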
