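Preface

Knative is a serverless solution built on top of containers, Kubernetes, and Istio. It has two components, Serving and Eventing, which can each be installed on their own or deployed together to work in concert.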

Basic Environment Setup

Knative 0.16 requires Kubernetes 1.15 or later.

| Software | Version |
|---|---|
| Kubernetes | 1.23.15 |
| Istio | 1.6.8 |
| Knative Serving | 0.16.0 |
| Knative Eventing | 0.16.0 |
| Tekton Pipeline | 0.16.3 |
| Tekton Trigger | 0.8.1 |
| Tekton Dashboard | 0.10.0 |

Kubernetes Installation

| Hostname | IP | OS | Hardware |
|---|---|---|---|
| master | 192.168.1.91 | CentOS 7 | 4 CPUs, 6 GB RAM |
| node1 | 192.168.1.92 | CentOS 7 | 4 CPUs, 6 GB RAM |
| node2 | 192.168.1.93 | CentOS 7 | 4 CPUs, 6 GB RAM |

General configuration (required on all three virtual machines)

Disable the firewall

# systemctl disable firewalld

# systemctl stop firewalld

Disable SELinux

# sed -i 's/enforcing/disabled/' /etc/selinux/config

Disable swap

# sed -ri 's/.*swap.*/#&/' /etc/fstab
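The sed command above only edits /etc/fstab, so swap stays active until the next reboot, and running it a second time comments the same lines again. A minimal idempotent sketch follows; the function name comment_swap_entries is hypothetical, and the file path is a parameter so the edit can be tried on a copy first.

```shell
# Comment out every fstab line that mentions swap, skipping lines that are
# already comments so repeated runs do not stack '#' characters.
# comment_swap_entries is a hypothetical helper name, not part of any tool.
comment_swap_entries() (
  file=$1
  tmp=$(mktemp) || exit 1
  sed -E '/^[[:space:]]*#/!s/.*swap.*/#&/' "$file" > "$tmp" && cat "$tmp" > "$file"
  status=$?
  rm -f "$tmp"
  exit "$status"
)

# Usage on a real node (as root); swapoff -a turns swap off immediately:
#   comment_swap_entries /etc/fstab && swapoff -a
```

Writing back with cat rather than mv keeps the ownership and permissions of the original file.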

Configure hostname resolution

# cat >> /etc/hosts <<EOF
192.168.1.91 master
192.168.1.92 node1
192.168.1.93 node2
EOF
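Because cat >> appends unconditionally, re-running the step leaves duplicate entries in /etc/hosts. The sketch below adds an entry only when the hostname is not already present; add_host_entry is a hypothetical helper name, and the third argument exists only so the function can be exercised against a scratch file.

```shell
# Append "IP NAME" to a hosts file unless NAME already appears as a whole word.
# add_host_entry is a hypothetical helper; the file defaults to /etc/hosts.
add_host_entry() (
  ip=$1
  name=$2
  file=${3:-/etc/hosts}
  # -w matches the hostname as a whole word, -F treats it as a literal string.
  if ! grep -qwF "$name" "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
)

# Usage (as root):
#   add_host_entry 192.168.1.91 master
#   add_host_entry 192.168.1.92 node1
#   add_host_entry 192.168.1.93 node2
```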

Pass bridged IPv4 traffic to the iptables chains

# cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
vm.swappiness = 0
EOF

# modprobe br_netfilter

# lsmod | grep br_netfilter

# sysctl --system

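Configure IPVS

A Kubernetes Service can be proxied in two modes: one based on iptables and one based on IPVS. IPVS performs better than iptables, but its kernel modules must be loaded manually before it can be used.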

# yum -y install ipset ipvsadm

# cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF

# chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4
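The lsmod | grep check above prints whatever happens to be loaded, which makes a missing module easy to overlook. As a rough sketch, the filter below lists the modules from the script that are absent from lsmod-style input; missing_ipvs_modules is a hypothetical helper name, and the module list is simply copied from the script above.

```shell
# Print each required IPVS module that is absent from lsmod-style input on stdin.
# missing_ipvs_modules is a hypothetical helper name.
missing_ipvs_modules() {
  awk '
    NR > 1 { loaded[$1] = 1 }   # skip the lsmod header line
    END {
      n = split("ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4", want, " ")
      for (i = 1; i <= n; i++)
        if (!(want[i] in loaded)) print want[i]
    }'
}

# Usage on a node; empty output means every module is loaded:
#   lsmod | missing_ipvs_modules
```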

Configure time synchronization

# yum install ntpdate -y

# ntpdate time.windows.com

Docker Installation

# curl https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -o /etc/yum.repos.d/docker-ce.repo

# yum install -y docker-ce

# systemctl enable docker && systemctl start docker

Configure a Docker registry mirror

# mkdir -p /etc/docker

# vim /etc/docker/daemon.json

{
  "registry-mirrors": ["https://qdzgikwk.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}

# systemctl daemon-reload

# systemctl restart docker

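Adjust the firewall rules

Starting with version 1.13, Docker changed its default firewall rules and set the default policy of the FORWARD chain in the iptables filter table to DROP, which prevents Pods on different nodes of a Kubernetes cluster from communicating.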

# iptables -P FORWARD ACCEPT

# iptables-save

Configure the Kubernetes yum repository

# cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Kubernetes Master Configuration

Install kubelet, kubeadm, and kubectl

# yum install -y kubelet-1.23.15 kubeadm-1.23.15 kubectl-1.23.15

# systemctl enable kubelet

部署Kubernetes Master

# kubeadm init \

--apiserver-advertise-address=192.168.1.91 \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.23.15 \

--service-cidr=11.62.0.0/16 \

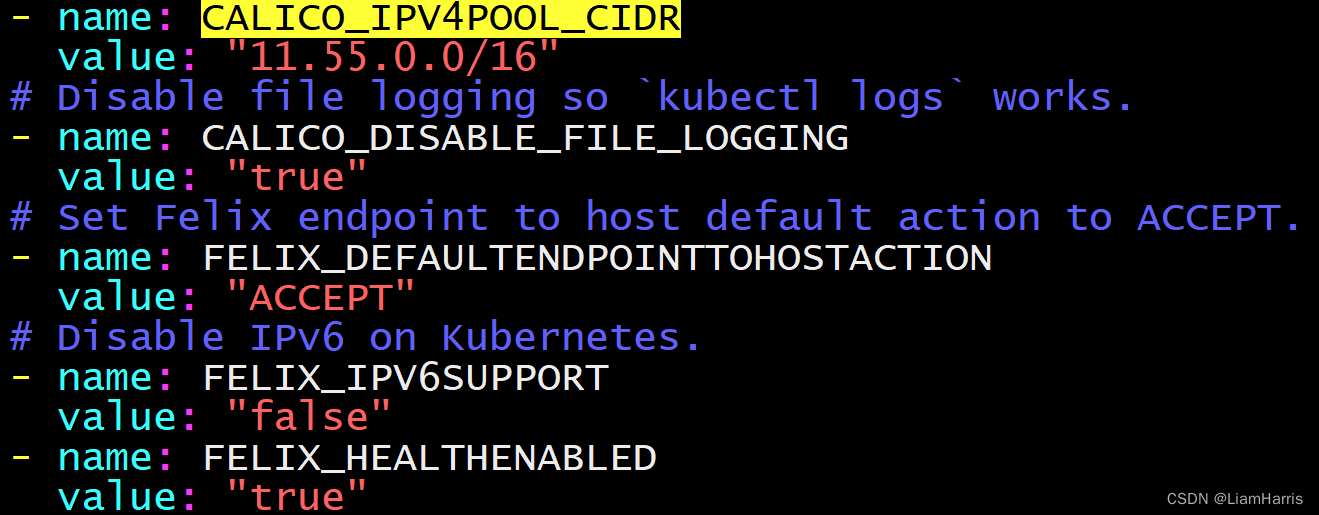

--pod-network-cidr=11.55.0.0/16

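--apiserver-advertise-address: the address the API server advertises to the cluster

--image-repository: the default image registry k8s.gcr.io is not reachable from mainland China, so the Alibaba Cloud mirror registry is specified here

--kubernetes-version: the Kubernetes version, matching the packages installed above

--service-cidr: the cluster-internal virtual network used for Service IPs, the unified entry point for reaching Pods

--pod-network-cidr: the Pod network, which must match the value in the YAML of the CNI network plugin deployed later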
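The service CIDR and the Pod CIDR should not overlap each other or the node network (192.168.1.0/24 in this setup). The following is a small sketch for checking that before running kubeadm init; the function names are hypothetical, and the code assumes IPv4 addresses whose octets carry no leading zeros.

```shell
# Convert a dotted-quad IPv4 address to an integer.
# Assumes octets are written without leading zeros.
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Print the first and last address of a CIDR block as integers.
cidr_range() (
  base=$(ip_to_int "${1%/*}")
  bits=${1#*/}
  size=$(( 1 << (32 - bits) ))
  start=$(( base / size * size ))
  echo "$start $(( start + size - 1 ))"
)

# Succeed (exit status 0) when the two CIDR blocks share at least one address.
cidrs_overlap() (
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
)

# Usage:
#   cidrs_overlap 11.62.0.0/16 11.55.0.0/16 && echo "CIDRs overlap, pick other ranges"
```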

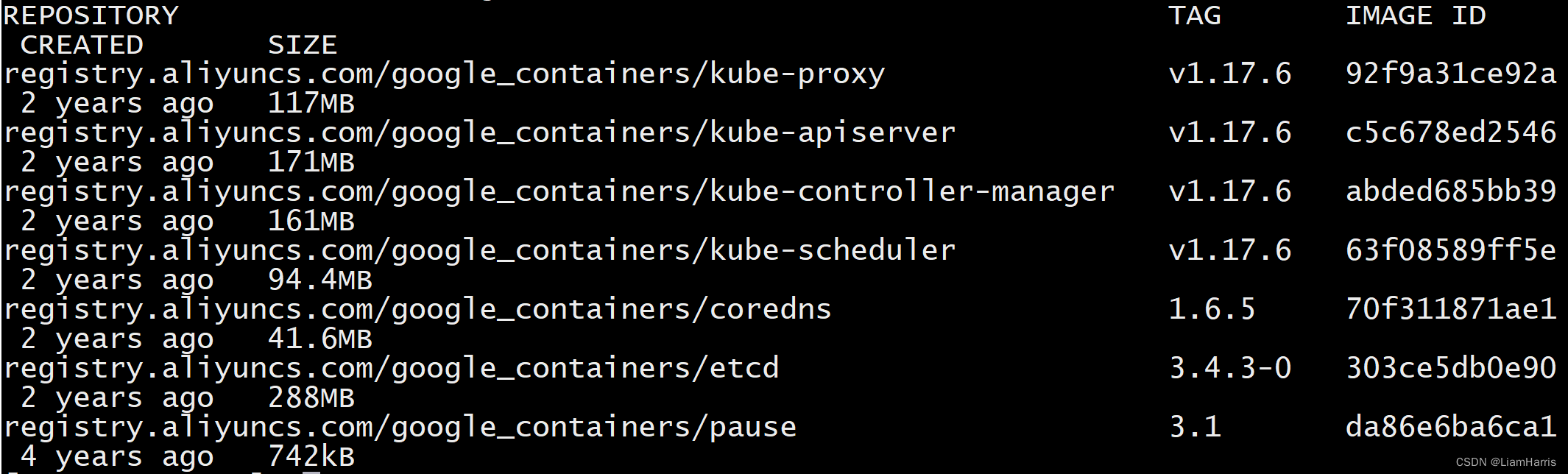

After the installation finishes, run docker images to see the following output

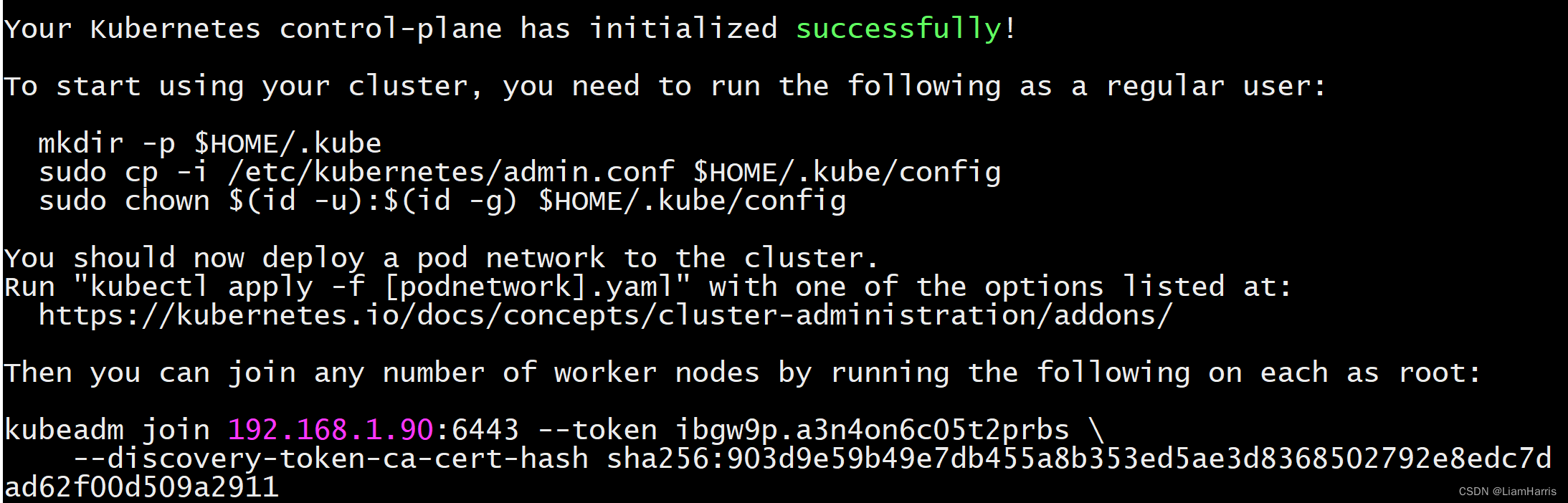

If the installation succeeds, you will see the following output

Note the kubeadm join command at the end of the output; it will be needed shortly.

Create a Kubernetes admin user

# useradd kadmin

Configure cluster access for the user

# mkdir -p /home/kadmin/.kube

# cp /etc/kubernetes/admin.conf /home/kadmin/.kube/config

# chown kadmin:kadmin -R /home/kadmin/.kube

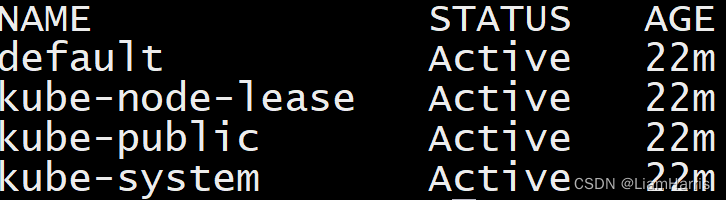

Verify the configuration

# su - kadmin

$ kubectl get ns

To log out, type exit.

Kubernetes Node Configuration

Install kubelet and kubeadm

# yum install -y kubelet-1.23.15 kubeadm-1.23.15

# systemctl enable kubelet

Join the Kubernetes cluster

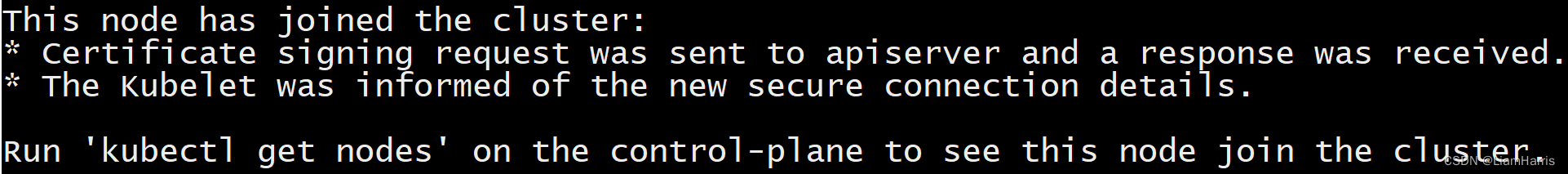

# kubeadm join 192.168.1.91:6443 --token ibgw9p.a3n4on6c05t2prbs \
--discovery-token-ca-cert-hash sha256:903d9e59b49e7db455a8b353ed5ae3d8368502792e8edc7dad62f00d509a2911

After the node has joined, you will see the following output

Configure the cluster network

# curl https://docs.projectcalico.org/v3.20/manifests/calico.yaml -o /tmp/calico.yaml

Uncomment CALICO_IPV4POOL_CIDR and set its value to the pod-network-cidr passed to kubeadm init

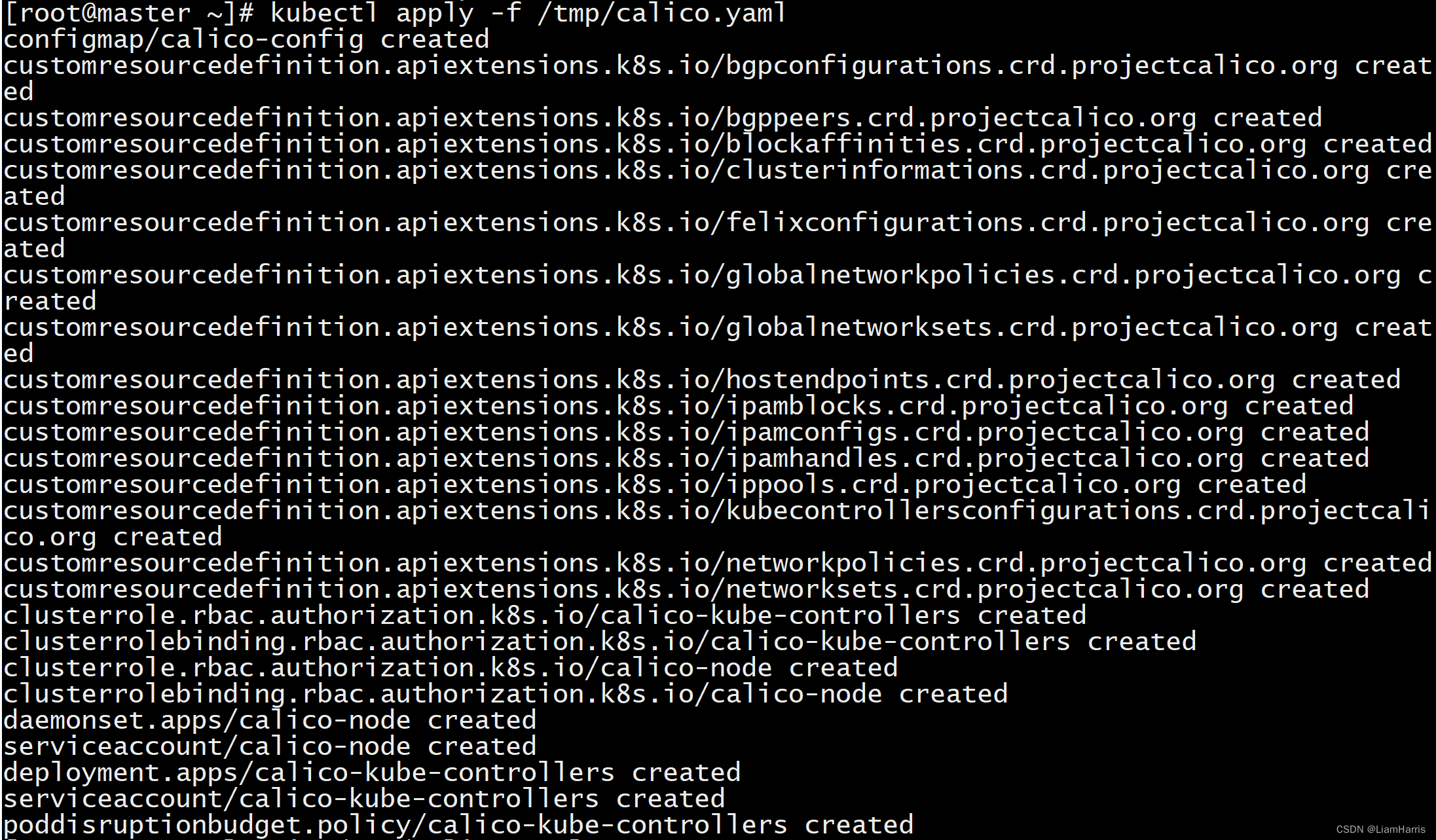
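The manual edit can also be scripted. The sketch below assumes the downloaded manifest contains the two commented lines "# - name: CALICO_IPV4POOL_CIDR" and "#   value: "192.168.0.0/16"" in exactly that form, so check the file before relying on it; set_calico_pool_cidr is a hypothetical helper name.

```shell
# Uncomment CALICO_IPV4POOL_CIDR in a Calico manifest and set its value.
# Assumes the commented default is exactly: #   value: "192.168.0.0/16"
# set_calico_pool_cidr is a hypothetical helper name.
set_calico_pool_cidr() (
  file=$1
  cidr=$2
  tmp=$(mktemp) || exit 1
  sed -E \
    -e 's@^([[:space:]]*)# (- name: CALICO_IPV4POOL_CIDR)@\1\2@' \
    -e 's@^([[:space:]]*)#   value: "192\.168\.0\.0/16"@\1  value: "'"$cidr"'"@' \
    "$file" > "$tmp" && cat "$tmp" > "$file"
  status=$?
  rm -f "$tmp"
  exit "$status"
)

# Usage:
#   set_calico_pool_cidr /tmp/calico.yaml 11.55.0.0/16
```

Removing only the "# " prefix keeps the YAML indentation of both lines aligned with the surrounding env entries.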

# kubectl apply -f /tmp/calico.yaml

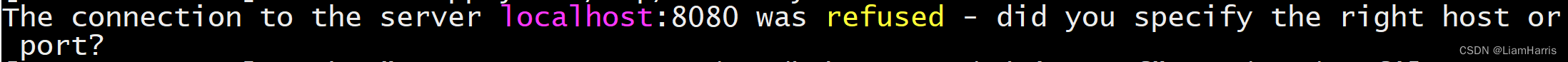

If the following problem occurs

Cause: the Kubernetes master was not bound to the local machine when the cluster was initialized. Setting the environment variable below on the local machine resolves the problem.

# echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> /etc/profile

# source /etc/profile

When kubectl apply succeeds, you will see the following output

Deploy the Kubernetes Dashboard

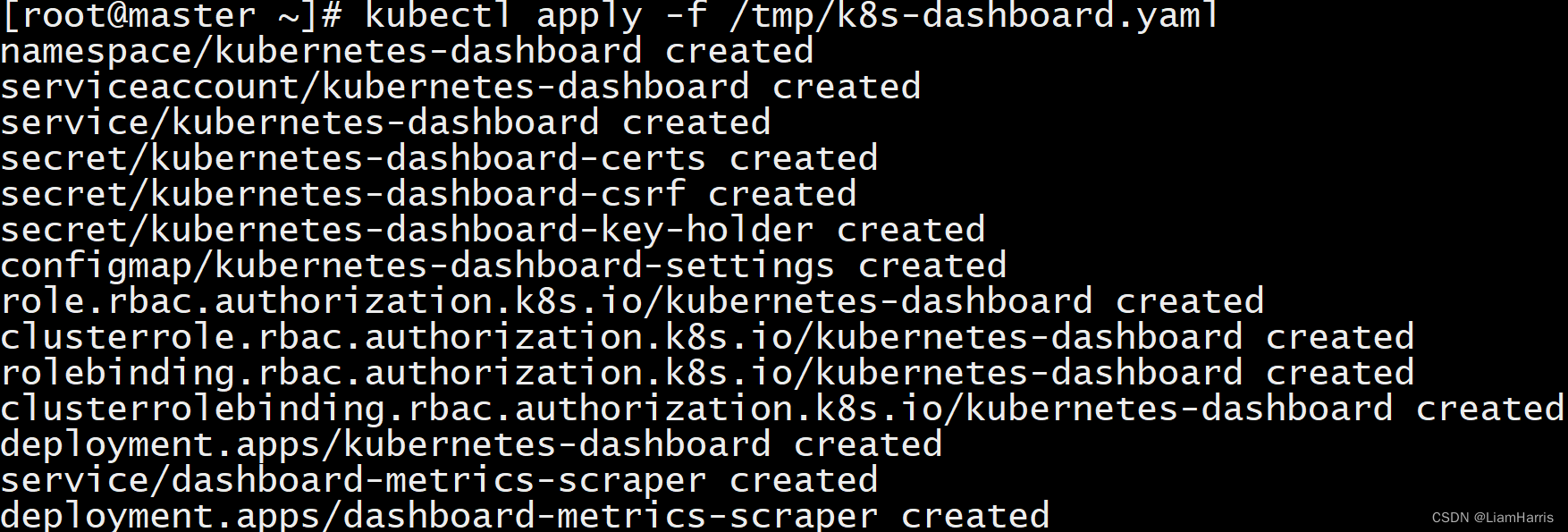

# curl -Ls https://gitee.com/xiaojinran/k8s/raw/master/k8s-dashboard/dashboard.yaml -o /tmp/k8s-dashboard.yaml

# kubectl apply -f /tmp/k8s-dashboard.yaml

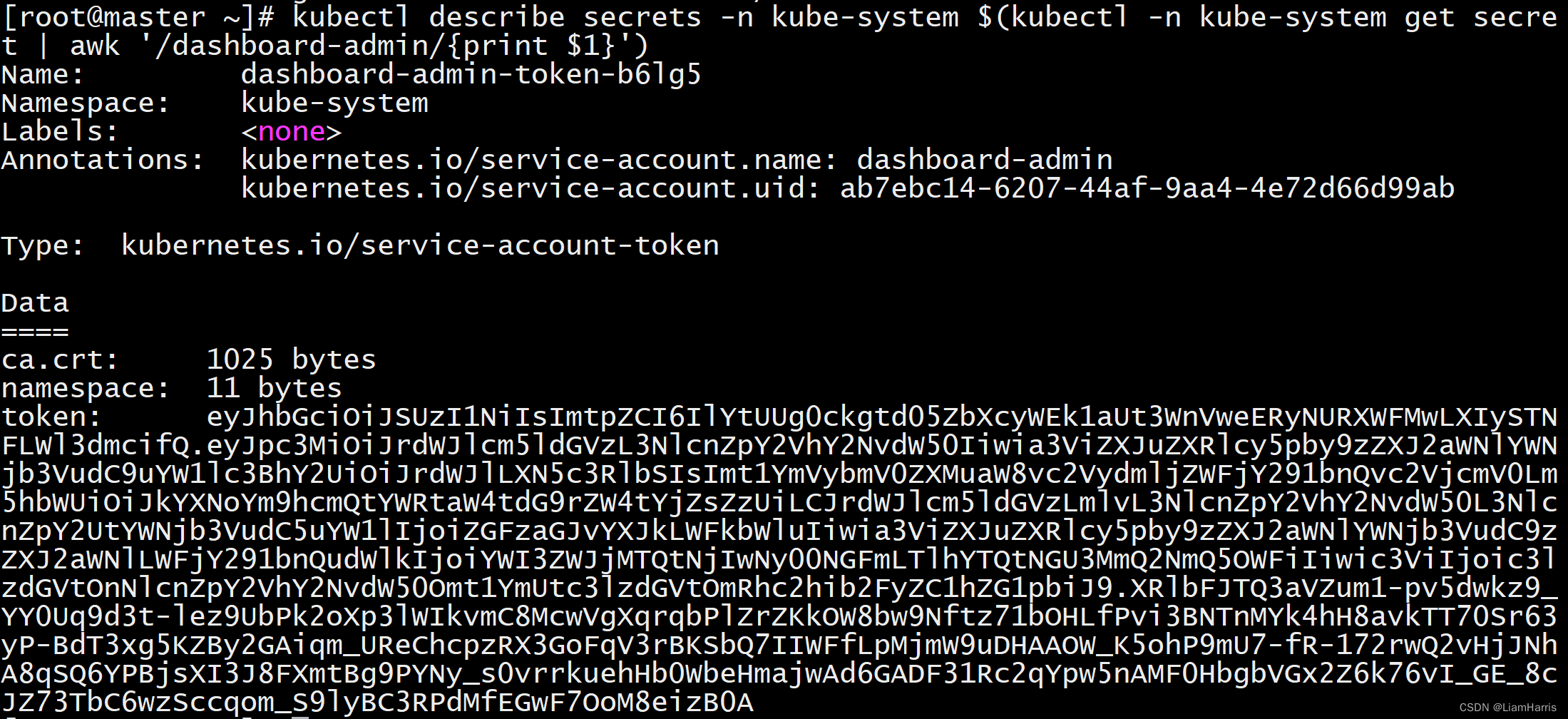

Configure the login token

# kubectl create serviceaccount dashboard-admin -n kube-system

# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

# kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

Istio Platform Deployment

Knative currently supports the following networking layers: Ambassador, Contour, Gloo, Istio, Kong, and Kourier.

Install the istioctl command-line tool

# curl -L https://istio.io/downloadIstio | ISTIO_VERSION=1.6.8 sh -

# cd istio-1.6.8

# export PATH=$PWD/bin:$PATH

Write a custom IstioOperator configuration file

# cat << EOF > ./istio-minimal-operator.yaml

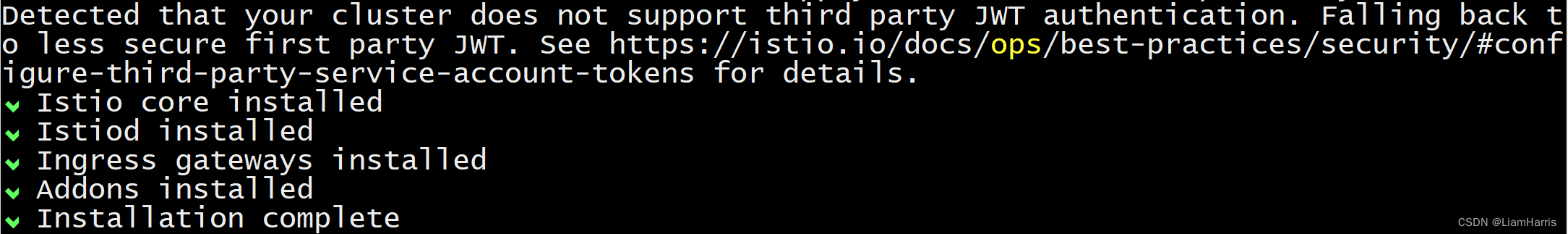

Apply the manifest

# istioctl manifest apply -f istio-minimal-operator.yaml

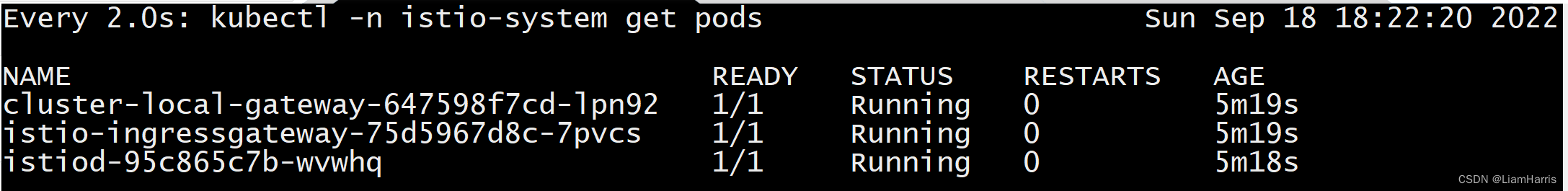

Verify that the deployed Istio services are running

# watch kubectl -n istio-system get pods
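Watching the Pod list works, but it relies on spotting the one Pod that is not ready. As a rough sketch, the filter below reads kubectl get pods --no-headers output and prints only the Pods that are neither fully ready nor completed; not_ready_pods is a hypothetical helper name, and it assumes the default column order (NAME, READY, STATUS).

```shell
# Print the names of Pods that are not Completed and not fully Running/Ready.
# Expects "kubectl get pods --no-headers" style input on stdin.
# not_ready_pods is a hypothetical helper name.
not_ready_pods() {
  awk '{
    split($2, ready, "/")
    if ($3 != "Completed" && ($3 != "Running" || ready[1] != ready[2])) print $1
  }'
}

# Usage; empty output means everything is up:
#   kubectl -n istio-system get pods --no-headers | not_ready_pods
```

The same filter applies to the knative-serving, knative-eventing, and tekton-pipelines namespaces checked later in this article.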

Knative Serving Installation

Install the Knative Serving CRDs

# kubectl apply -f \
https://github.com/knative/serving/releases/download/v0.16.0/serving-crds.yaml

Install the Knative Serving core components

# kubectl apply -f \
https://github.com/knative/serving/releases/download/v0.16.0/serving-core.yaml

Install the Knative Istio controller for the networking layer, which integrates Istio with Knative

# kubectl apply -f \
https://github.com/knative/net-istio/releases/download/v0.16.0/release.yaml

Install the HPA autoscaling extension

# kubectl apply -f \
https://github.com/knative/serving/releases/download/v0.16.0/serving-hpa.yaml

Check that the Knative Serving services are running

# watch kubectl get pods -n knative-serving
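The four Serving manifests above differ only in repository and file name, so the version can be kept in one place. A minimal sketch, where knative_serving_manifests is a hypothetical helper that just prints the URLs used above in installation order:

```shell
# Print the Knative Serving manifest URLs for a given release tag,
# in the order used above (CRDs, core, net-istio, HPA).
# knative_serving_manifests is a hypothetical helper name.
knative_serving_manifests() (
  version=$1
  base="https://github.com/knative"
  for path in \
    "serving/releases/download/$version/serving-crds.yaml" \
    "serving/releases/download/$version/serving-core.yaml" \
    "net-istio/releases/download/$version/release.yaml" \
    "serving/releases/download/$version/serving-hpa.yaml"
  do
    echo "$base/$path"
  done
)

# Usage:
#   knative_serving_manifests v0.16.0 | while read -r url; do
#     kubectl apply -f "$url"
#   done
```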

Knative Eventing Installation

Install the Knative Eventing CRDs

# kubectl apply -f \
https://github.com/knative/eventing/releases/download/v0.16.0/eventing-crds.yaml

Install the Knative Eventing core components

# kubectl apply -f \
https://github.com/knative/eventing/releases/download/v0.16.0/eventing-core.yaml

Install the default Channel layer

# kubectl apply -f \
https://github.com/knative/eventing/releases/download/v0.16.0/in-memory-channel.yaml

Install the Broker layer

# kubectl apply -f \
https://github.com/knative/eventing/releases/download/v0.16.0/mt-channel-broker.yaml

Check that the Knative Eventing services are running

# watch kubectl get pods -n knative-eventing

Install the Observability Components

Create the namespace for the observability components

# kubectl apply -f \
https://github.com/knative/serving/releases/download/v0.16.0/monitoring-core.yaml

Install Prometheus and Grafana

# kubectl apply -f \
https://github.com/knative/serving/releases/download/v0.16.0/monitoring-metrics-prometheus.yaml

Install the EFK log collection and processing stack

# kubectl apply -f \
https://github.com/knative/serving/releases/download/v0.16.0/monitoring-logs-elasticsearch.yaml

Install Jaeger for distributed tracing

# kubectl apply -f \
https://github.com/knative/serving/releases/download/v0.16.0/monitoring-tracing-jaeger-in-mem.yaml

Tekton Installation

Tekton Pipeline Installation

Install Pipeline, the core Tekton component

# kubectl apply -f \
https://storage.googleapis.com/tekton-releases/pipeline/previous/v0.16.3/release.yaml

Verify that the Pipeline components are running

# kubectl get pods -n tekton-pipelines

Configure storage for PipelineResources

# cat <<EOF | kubectl apply -f -

Tekton Dashboard Installation

Install the Dashboard UI for Tekton

# kubectl apply -f \
https://storage.googleapis.com/tekton-releases/dashboard/previous/v0.10.0/tekton-dashboard-release.yaml

Verify that the Dashboard components are running

# kubectl get pods -n tekton-pipelines

Access the Tekton Dashboard

# kubectl --namespace tekton-pipelines \
port-forward svc/tekton-dashboard 9097:9097 --address=<Kubernetes node IP>

Tekton Trigger Installation

Install Triggers for Tekton

# kubectl apply -f \
https://github.com/tektoncd/triggers/releases/download/v0.8.1/release.yaml

Verify that the Trigger components are running

# kubectl get pods -n tekton-pipelines

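I am about to start project work, so future posts will probably cover other platforms such as Fn Project or OpenWhisk. If I gain further insights into Knative, I will still post updates.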

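This article walks through the deployment of Knative and Tekton in detail, covering Kubernetes environment setup, Istio installation, Knative Serving and Eventing configuration, and the installation and verification of Tekton Pipeline and Trigger.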
