pom:

<!--引入kafak和spring整合的jar-->

<dependency>

<groupId>org.springframework.kafka</groupId>

<artifactId>spring-kafka</artifactId>

</dependency>

yml:

#kafka节点

kafka:

bootstrap-servers: 127.0.0.1:9092

#=============== provider =======================

#kafka发送消息失败后的重试次数

producer:

retries: 0

#当消息达到该值后再批量发送消息.16kb(每次批量发送消息的数量)

batch-size: 16984

#设置kafka producer内存缓冲区大小.32MB

buffer-memory: 33554432

#kafka消息的序列化配置(指定消息key和消息体的编解码方式)

key-serializer: org.apache.kafka.common.serialization.StringSerializer

value-serializer: org.apache.kafka.common.serialization.StringSerializer

#acks=0 : 生产者在成功写入消息之前不会等待任何来自服务器的响应。??

#acks=1 : 只要集群的leader节点收到消息,生产者就会收到一个来自服务器成功响应。

#acks=-1: 表示分区leader必须等待消息被成功写入到所有的ISR副本(同步副本)中才认为producer请求成功。

# 这种方案提供最高的消息持久性保证,但是理论上吞吐率也是最差的。

acks: 1

#=============== consumer =======================

consumer:

# 指定默认消费者group id

group-id: user-log-group

auto-offset-reset: earliest

enable-auto-commit: true

auto-commit-interval: 100

# 指定消息key和消息体的编解码方式

key-deserializer: org.apache.kafka.common.serialization.StringDeserializer

value-deserializer: org.apache.kafka.common.serialization.StringDeserializer

实体类:

package com.example.dtest.kafka;

import lombok.Data;

import lombok.experimental.Accessors;

@Data

@Accessors(chain = true)

public class UserLog {

private String username;

private String userid;

private String state;

}

生产者端:

package com.example.dtest.kafka;

import com.gexin.fastjson.JSON;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.kafka.core.KafkaTemplate;

import org.springframework.stereotype.Component;

@Component

public class UserLogProducer {

@Autowired

private KafkaTemplate kafkaTemplate;

/**

* 发送数据

* @param userid

*/

public void sendLog(String userid){

UserLog userLog = new UserLog();

userLog.setUsername("jhp").setUserid(userid).setState("0");

System.err.println("发送用户日志数据:"+userLog);

kafkaTemplate.send("user-log", JSON.toJSONString(userLog));

}

}

消费者端:

package com.example.dtest.kafka;

import lombok.extern.slf4j.Slf4j;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.springframework.kafka.annotation.KafkaListener;

import org.springframework.stereotype.Component;

import java.util.Optional;

@Component

@Slf4j

public class UserLogConsumer {

@KafkaListener(topics = {"user-log"})

public void consumer(ConsumerRecord<?,?> consumerRecord){

log.info("消费!");

//判断是否为null

Optional<?> kafkaMessage = Optional.ofNullable(consumerRecord.value());

log.info(">>>>>>>>>> record =" + kafkaMessage);

if(kafkaMessage.isPresent()){

//得到Optional实例中的值

Object message = kafkaMessage.get();

System.err.println("消费消息:"+message);

}

}

}

controller测试:

package com.example.dtest.controller;

import com.example.dtest.kafka.UserLogProducer;

import com.example.vo.Res;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.web.bind.annotation.*;

import javax.servlet.http.HttpServletRequest;

@RestController

@RequestMapping("/KAFKA")

@CrossOrigin

public class KafkaTestContoller {

@Autowired

UserLogProducer userLogProducer;

@GetMapping("setMsg/{userid}")

public Res setMsg(@PathVariable("userid") String userid, HttpServletRequest httpServletRequest){

userLogProducer.sendLog(userid);

return Res.success();

}

}

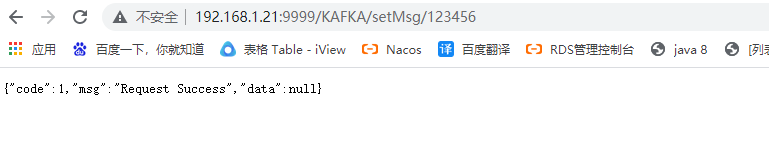

测试结果:

697

697

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?