
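Prerequisites

Docker is required for the single-node setup; the cluster setup additionally requires Docker Compose.

Notes

The commands below use docker compose, as run on a Mac. On a Linux machine that only has the standalone docker-compose binary, use docker-compose instead.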

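Single-node setup

This procedure uses Docker.

1 Create a directory

/Users/jiajie/docker/clickhouse/clickhouse_test_db is a directory created on the author's machine. Create a directory of your own first, then replace this path in the commands below with the actual path on your machine.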

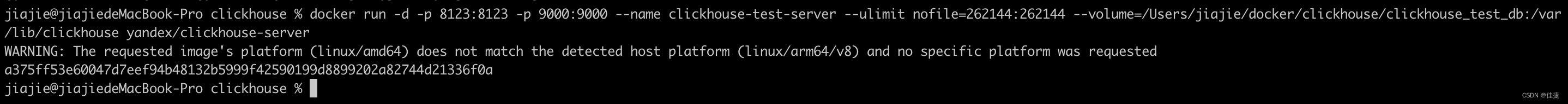

2 Start the Docker container

docker run -d -p 8123:8123 -p 9000:9000 --name clickhouse-test-server --ulimit nofile=262144:262144 --volume=/Users/jiajie/docker/clickhouse/clickhouse_test_db:/var/lib/clickhouse yandex/clickhouse-server

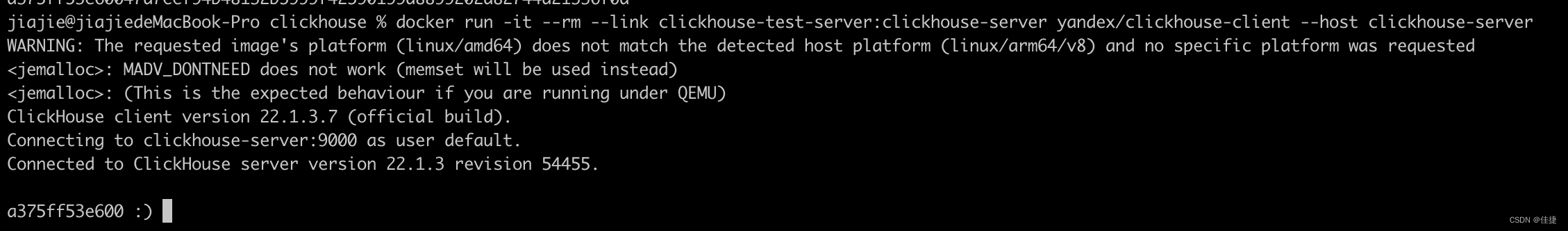

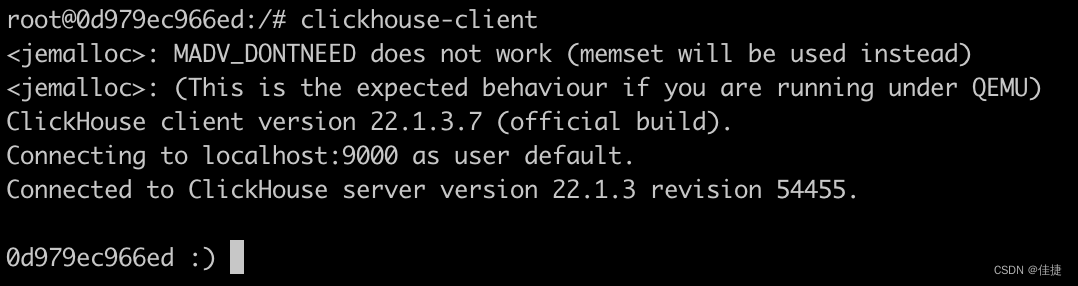

3 Connect to the ClickHouse server with the client

docker run -it --rm --link clickhouse-test-server:clickhouse-server yandex/clickhouse-client --host clickhouse-server

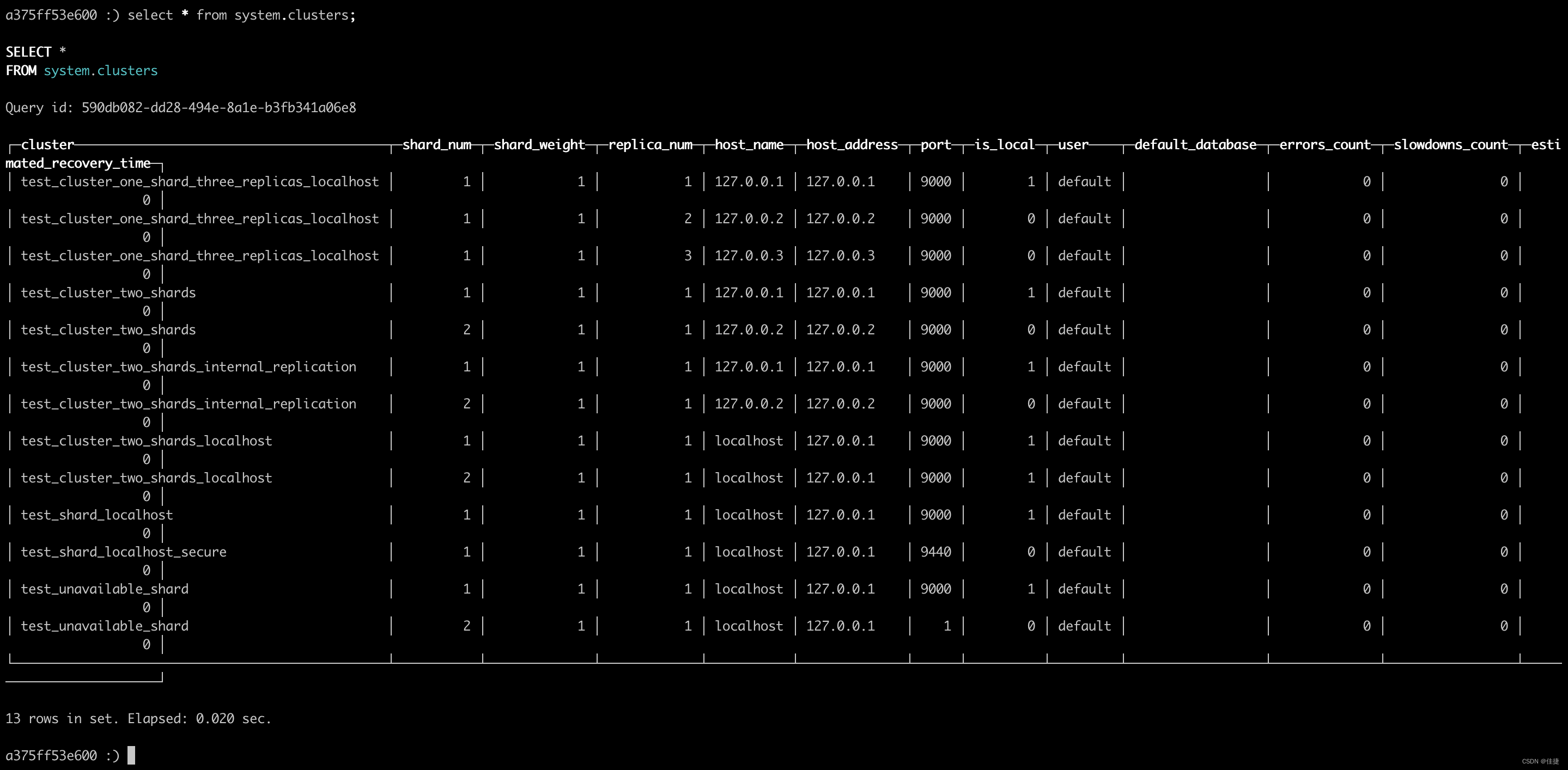

4 Query the ClickHouse status

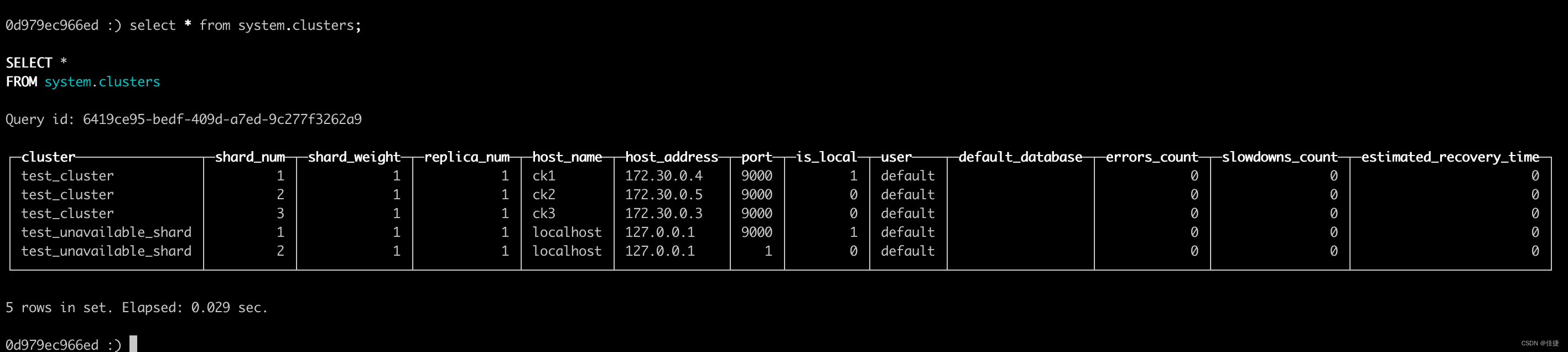

select * from system.clusters;
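
The same status query can also be sent over the HTTP port that the docker run command above publishes (8123). The following is a minimal sketch, assuming the container is running on localhost and the default user has no password; the helper names build_query_url and run_query are hypothetical and not part of any ClickHouse library.

```python
import urllib.parse
import urllib.request


def build_query_url(sql, host="localhost", port=8123):
    """Build a ClickHouse HTTP-interface URL that carries the SQL in ?query=."""
    return f"http://{host}:{port}/?" + urllib.parse.urlencode({"query": sql})


def run_query(sql, host="localhost", port=8123, timeout=5.0):
    """Send a read-only query and return the rows as lists of strings."""
    url = build_query_url(sql, host, port)
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        body = resp.read().decode("utf-8")
    # The HTTP interface answers in TabSeparated format unless told otherwise.
    return [line.split("\t") for line in body.splitlines()]
```

With the container from step 2 running, calling run_query("SELECT cluster, host_name, port FROM system.clusters") should return one list per row of system.clusters.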

Cluster setup

This procedure uses Docker Compose.

1 Add the configuration files

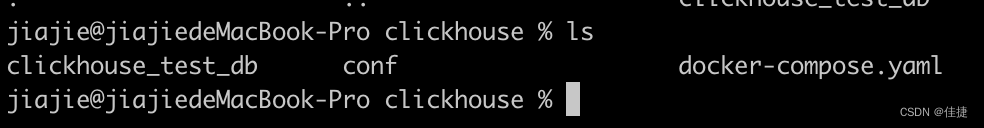

配置文件目录结构

根目录下情况主要的是conf目录和docker-compose.yaml文件

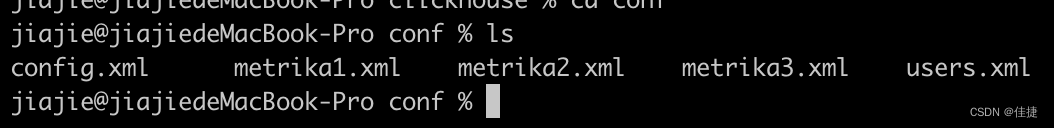

conf目录下文件

文件内容

docker-compose.yaml

version: "3.7"

services:
  ck1:
    image: yandex/clickhouse-server
    ulimits:
      nofile:
        soft: 300001
        hard: 300002
    ports:
      - 9001:9000
    volumes:
      - ./conf/config.xml:/etc/clickhouse-server/config.xml
      - ./conf/users.xml:/etc/clickhouse-server/users.xml
      - ./conf/metrika1.xml:/etc/metrika.xml
    links:
      - "zk1"
    depends_on:
      - zk1
  ck2:
    image: yandex/clickhouse-server
    ulimits:
      nofile:
        soft: 300001
        hard: 300002
    volumes:
      - ./conf/metrika2.xml:/etc/metrika.xml
      - ./conf/config.xml:/etc/clickhouse-server/config.xml
      - ./conf/users.xml:/etc/clickhouse-server/users.xml
    ports:
      - 9002:9000
    depends_on:
      - zk1
  ck3:
    image: yandex/clickhouse-server
    ulimits:
      nofile:
        soft: 300001
        hard: 300002
    volumes:
      - ./conf/metrika3.xml:/etc/metrika.xml
      - ./conf/config.xml:/etc/clickhouse-server/config.xml
      - ./conf/users.xml:/etc/clickhouse-server/users.xml
    ports:
      - 9003:9000
    depends_on:
      - zk1
  zk1:
    image: zookeeper
    restart: always
    hostname: zk1
    expose:
      - "2181"
    ports:
      - 2181:2181

config.xml (ClickHouse configuration file)

<?xml version="1.0"?>

<!--

NOTE: User and query level settings are set up in "users.xml" file.

If you have accidentally specified user-level settings here, server won't start.

You can either move the settings to the right place inside "users.xml" file

or add <skip_check_for_incorrect_settings>1</skip_check_for_incorrect_settings> here.

-->

<clickhouse>

<logger>

<level>trace</level>

<log>/var/log/clickhouse-server/clickhouse-server.log</log>

<errorlog>/var/log/clickhouse-server/clickhouse-server.err.log</errorlog>

<size>1000M</size>

<count>10</count>

</logger>

<http_port>8123</http_port>

<tcp_port>9000</tcp_port>

<mysql_port>9004</mysql_port>

<postgresql_port>9005</postgresql_port>

<interserver_http_port>9009</interserver_http_port>

<max_connections>4096</max_connections>

<keep_alive_timeout>3</keep_alive_timeout>

<grpc>

<enable_ssl>false</enable_ssl>

<ssl_cert_file>/path/to/ssl_cert_file</ssl_cert_file>

<ssl_key_file>/path/to/ssl_key_file</ssl_key_file>

<ssl_require_client_auth>false</ssl_require_client_auth>

<ssl_ca_cert_file>/path/to/ssl_ca_cert_file</ssl_ca_cert_file>

<compression>deflate</compression>

<compression_level>medium</compression_level>

<max_send_message_size>-1</max_send_message_size>

<max_receive_message_size>-1</max_receive_message_size>

<verbose_logs>false</verbose_logs>

</grpc>

<openSSL>

<server>

<certificateFile>/etc/clickhouse-server/server.crt</certificateFile>

<privateKeyFile>/etc/clickhouse-server/server.key</privateKeyFile>

<dhParamsFile>/etc/clickhouse-server/dhparam.pem</dhParamsFile>

<verificationMode>none</verificationMode>

<loadDefaultCAFile>true</loadDefaultCAFile>

<cacheSessions>true</cacheSessions>

<disableProtocols>sslv2,sslv3</disableProtocols>

<preferServerCiphers>true</preferServerCiphers>

</server>

<client>

<loadDefaultCAFile>true</loadDefaultCAFile>

<cacheSessions>true</cacheSessions>

<disableProtocols>sslv2,sslv3</disableProtocols>

<preferServerCiphers>true</preferServerCiphers>

<invalidCertificateHandler>

<name>RejectCertificateHandler</name>

</invalidCertificateHandler>

</client>

</openSSL>

<max_concurrent_queries>100</max_concurrent_queries>

<max_server_memory_usage>0</max_server_memory_usage>

<max_thread_pool_size>10000</max_thread_pool_size>

<max_server_memory_usage_to_ram_ratio>0.9</max_server_memory_usage_to_ram_ratio>

<total_memory_profiler_step>4194304</total_memory_profiler_step>

<total_memory_tracker_sample_probability>0</total_memory_tracker_sample_probability>

<uncompressed_cache_size>8589934592</uncompressed_cache_size>

<mark_cache_size>5368709120</mark_cache_size>

<mmap_cache_size>1000</mmap_cache_size>

<compiled_expression_cache_size>134217728</compiled_expression_cache_size>

<compiled_expression_cache_elements_size>10000</compiled_expression_cache_elements_size>

<path>/var/lib/clickhouse/</path>

<tmp_path>/var/lib/clickhouse/tmp/</tmp_path>

<user_files_path>/var/lib/clickhouse/user_files/</user_files_path>

<ldap_servers>

</ldap_servers>

<user_directories>

<users_xml>

<path>users.xml</path>

</users_xml>

<local_directory>

<path>/var/lib/clickhouse/access/</path>

</local_directory>

</user_directories>

<default_profile>default</default_profile>

<custom_settings_prefixes></custom_settings_prefixes>

<default_database>default</default_database>

<mlock_executable>true</mlock_executable>

<remap_executable>false</remap_executable>

<![CDATA[

Uncomment below in order to use JDBC table engine and function.

To install and run JDBC bridge in background:

* [Debian/Ubuntu]

export MVN_URL=https://repo1.maven.org/maven2/ru/yandex/clickhouse/clickhouse-jdbc-bridge

export PKG_VER=$(curl -sL $MVN_URL/maven-metadata.xml | grep '<release>' | sed -e 's|.*>\(.*\)<.*|\1|')

wget https://github.com/ClickHouse/clickhouse-jdbc-bridge/releases/download/v$PKG_VER/clickhouse-jdbc-bridge_$PKG_VER-1_all.deb

apt install --no-install-recommends -f ./clickhouse-jdbc-bridge_$PKG_VER-1_all.deb

clickhouse-jdbc-bridge &

* [CentOS/RHEL]

export MVN_URL=https://repo1.maven.org/maven2/ru/yandex/clickhouse/clickhouse-jdbc-bridge

export PKG_VER=$(curl -sL $MVN_URL/maven-metadata.xml | grep '<release>' | sed -e 's|.*>\(.*\)<.*|\1|')

wget https://github.com/ClickHouse/clickhouse-jdbc-bridge/releases/download/v$PKG_VER/clickhouse-jdbc-bridge-$PKG_VER-1.noarch.rpm

yum localinstall -y clickhouse-jdbc-bridge-$PKG_VER-1.noarch.rpm

clickhouse-jdbc-bridge &

Please refer to https://github.com/ClickHouse/clickhouse-jdbc-bridge#usage for more information.

]]>

<remote_servers incl="clickhouse_remote_servers">

<test_unavailable_shard>

<shard>

<replica>

<host>localhost</host>

<port>9000</port>

</replica>

</shard>

<shard>

<replica>

<host>localhost</host>

<port>1</port>

</replica>

</shard>

</test_unavailable_shard>

</remote_servers>

<zookeeper incl="zookeeper-servers">

</zookeeper>

<builtin_dictionaries_reload_interval>3600</builtin_dictionaries_reload_interval>

<max_session_timeout>3600</max_session_timeout>

<default_session_timeout>60</default_session_timeout>

<query_log>

<database>system</database>

<table>query_log</table>

<partition_by>toYYYYMM(event_date)</partition_by>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

</query_log>

<trace_log>

<database>system</database>

<table>trace_log</table>

<partition_by>toYYYYMM(event_date)</partition_by>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

</trace_log>

<query_thread_log>

<database>system</database>

<table>query_thread_log</table>

<partition_by>toYYYYMM(event_date)</partition_by>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

</query_thread_log>

<query_views_log>

<database>system</database>

<table>query_views_log</table>

<partition_by>toYYYYMM(event_date)</partition_by>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

</query_views_log>

<part_log>

<database>system</database>

<table>part_log</table>

<partition_by>toYYYYMM(event_date)</partition_by>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

</part_log>

<metric_log>

<database>system</database>

<table>metric_log</table>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

<collect_interval_milliseconds>1000</collect_interval_milliseconds>

</metric_log>

<asynchronous_metric_log>

<database>system</database>

<table>asynchronous_metric_log</table>

<flush_interval_milliseconds>7000</flush_interval_milliseconds>

</asynchronous_metric_log>

<opentelemetry_span_log>

<engine>

engine MergeTree

partition by toYYYYMM(finish_date)

order by (finish_date, finish_time_us, trace_id)

</engine>

<database>system</database>

<table>opentelemetry_span_log</table>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

</opentelemetry_span_log>

<crash_log>

<database>system</database>

<table>crash_log</table>

<partition_by />

<flush_interval_milliseconds>1000</flush_interval_milliseconds>

</crash_log>

<session_log>

<database>system</database>

<table>session_log</table>

<partition_by>toYYYYMM(event_date)</partition_by>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

</session_log>

<top_level_domains_lists>

</top_level_domains_lists>

<dictionaries_config>*_dictionary.xml</dictionaries_config>

<user_defined_executable_functions_config>*_function.xml</user_defined_executable_functions_config>

<encryption_codecs>

</encryption_codecs>

<distributed_ddl>

<path>/clickhouse/task_queue/ddl</path>

</distributed_ddl>

<graphite_rollup_example>

<pattern>

<regexp>click_cost</regexp>

<function>any</function>

<retention>

<age>0</age>

<precision>3600</precision>

</retention>

<retention>

<age>86400</age>

<precision>60</precision>

</retention>

</pattern>

<default>

<function>max</function>

<retention>

<age>0</age>

<precision>60</precision>

</retention>

<retention>

<age>3600</age>

<precision>300</precision>

</retention>

<retention>

<age>86400</age>

<precision>3600</precision>

</retention>

</default>

</graphite_rollup_example>

<format_schema_path>/var/lib/clickhouse/format_schemas/</format_schema_path>

<query_masking_rules>

<rule>

<name>hide encrypt/decrypt arguments</name>

<regexp>((?:aes_)?(?:encrypt|decrypt)(?:_mysql)?)\s*\(\s*(?:'(?:\\'|.)+'|.*?)\s*\)</regexp>

<replace>\1(???)</replace>

</rule>

</query_masking_rules>

<send_crash_reports>

<enabled>false</enabled>

<anonymize>false</anonymize>

<endpoint>https://6f33034cfe684dd7a3ab9875e57b1c8d@o388870.ingest.sentry.io/5226277</endpoint>

</send_crash_reports>

<include_from>/etc/metrika.xml</include_from>

</clickhouse>

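metrika1.xml, metrika2.xml, metrika3.xml (cluster node configuration)

The weight tag sets each shard's write weight: the larger the weight, the larger the share of data written to that shard.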

<yandex>
  <clickhouse_remote_servers>
    <test_cluster>
      <shard>
        <weight>1</weight>
        <internal_replication>true</internal_replication>
        <replica>
          <host>ck1</host>
          <port>9000</port>
        </replica>
      </shard>
      <shard>
        <weight>1</weight>
        <internal_replication>true</internal_replication>
        <replica>
          <host>ck2</host>
          <port>9000</port>
        </replica>
      </shard>
      <shard>
        <weight>1</weight>
        <internal_replication>true</internal_replication>
        <replica>
          <host>ck3</host>
          <port>9000</port>
        </replica>
      </shard>
    </test_cluster>
  </clickhouse_remote_servers>
  <zookeeper-servers>
    <node index="1">
      <host>zk1</host>
      <port>2181</port>
    </node>
  </zookeeper-servers>
</yandex>
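
To make the effect of the weight tag concrete, the sketch below models how rows would be spread across shards, assuming the data is written through a Distributed table on test_cluster (this article does not create one). It follows the rule described in the ClickHouse documentation: the sharding value is taken modulo the total weight, and each shard owns a half-open range of remainders as wide as its weight. The function names are hypothetical.

```python
from fractions import Fraction


def write_shares(weights):
    """Expected fraction of rows each shard receives: weight / total weight."""
    total = sum(weights)
    if total <= 0 or any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative with a positive sum")
    return [Fraction(w, total) for w in weights]


def shard_for(sharding_value, weights):
    """0-based index of the shard that owns this sharding value."""
    total = sum(weights)
    if total <= 0 or any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative with a positive sum")
    remainder = sharding_value % total
    upper = 0
    for index, weight in enumerate(weights):
        upper += weight  # this shard owns remainders in [upper - weight, upper)
        if remainder < upper:
            return index
```

With the three weights of 1 used above, write_shares([1, 1, 1]) gives each shard one third of the rows; changing one weight to 2 would give that shard half.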

users.xml (user information)

<?xml version="1.0"?>

<clickhouse>

<!-- Profiles of settings. -->

<profiles>

<!-- Default settings. -->

<default>

<!-- Maximum memory usage for processing single query, in bytes. -->

<max_memory_usage>10000000000</max_memory_usage>

<!-- How to choose between replicas during distributed query processing.

random - choose random replica from set of replicas with minimum number of errors

nearest_hostname - from set of replicas with minimum number of errors, choose replica

with minimum number of different symbols between replica's hostname and local hostname

(Hamming distance).

in_order - first live replica is chosen in specified order.

first_or_random - if first replica one has higher number of errors, pick a random one from replicas with minimum number of errors.

-->

<load_balancing>random</load_balancing>

<allow_ddl>1</allow_ddl>

<readonly>0</readonly>

</default>

<!-- Profile that allows only read queries. -->

<readonly>

<readonly>1</readonly>

</readonly>

</profiles>

<!-- Users and ACL. -->

<users>

<!-- If user name was not specified, 'default' user is used. -->

<default>

<access_management>1</access_management>

<password></password>

<networks>

<ip>::/0</ip>

</networks>

<!-- Settings profile for user. -->

<profile>default</profile>

<!-- Quota for user. -->

<quota>default</quota>

<!-- User can create other users and grant rights to them. -->

<!-- <access_management>1</access_management> -->

</default>

<test>

<password></password>

<quota>default</quota>

<profile>default</profile>

<allow_databases>

<database>default</database>

<database>test_dictionaries</database></allow_databases>

<allow_dictionaries>

<dictionary>replicaTest_all</dictionary>

</allow_dictionaries>

</test>

</users>

<!-- Quotas. -->

<quotas>

<!-- Name of quota. -->

<default>

<!-- Limits for time interval. You could specify many intervals with different limits. -->

<interval>

<!-- Length of interval. -->

<duration>3600</duration>

<!-- No limits. Just calculate resource usage for time interval. -->

<queries>0</queries>

<errors>0</errors>

<result_rows>0</result_rows>

<read_rows>0</read_rows>

<execution_time>0</execution_time>

</interval>

</default>

</quotas>

</clickhouse>

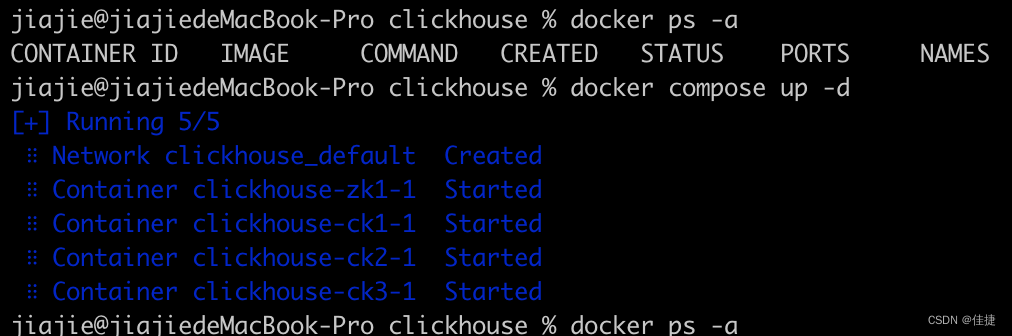
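
Before starting the containers, it can help to confirm that every file mounted by docker-compose.yaml is actually in place, since Docker typically creates an empty directory for a bind-mount source that does not exist. This is a small sketch; the file list is taken from the volume entries above, and missing_files is a hypothetical helper name.

```python
from pathlib import Path

# File names taken from the volume mounts in docker-compose.yaml.
EXPECTED_FILES = [
    "docker-compose.yaml",
    "conf/config.xml",
    "conf/users.xml",
    "conf/metrika1.xml",
    "conf/metrika2.xml",
    "conf/metrika3.xml",
]


def missing_files(project_root):
    """Return the expected files that are absent under project_root."""
    root = Path(project_root)
    return [name for name in EXPECTED_FILES if not (root / name).is_file()]
```

Calling missing_files(".") from the project root should return an empty list when the layout matches the one described in this section.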

2 Start the project

Run the command in the directory that contains docker-compose.yaml:

docker compose up -d

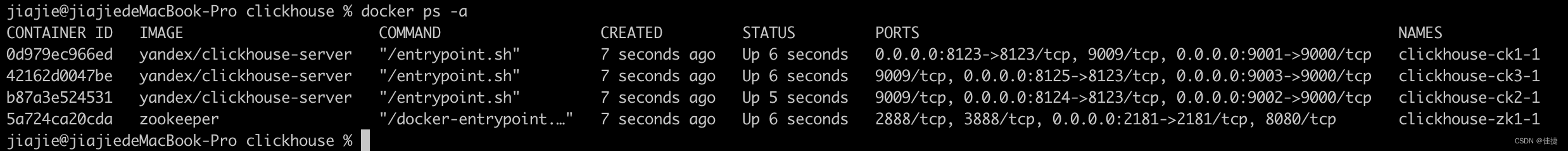
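
After the containers are up, a rough reachability check of the three published native ports (9001, 9002, 9003 in docker-compose.yaml) can be scripted as below. This is only a sketch: an accepted TCP connection shows that the published port is reachable from the host, not that ClickHouse has finished starting, and the names wait_for_port and cluster_ready are hypothetical.

```python
import socket
import time

# Host ports published by docker-compose.yaml for ck1, ck2 and ck3.
CLICKHOUSE_PORTS = {"ck1": 9001, "ck2": 9002, "ck3": 9003}


def wait_for_port(host, port, timeout=30.0, interval=0.5):
    """Retry a TCP connection until it succeeds or the timeout expires."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)


def cluster_ready(host="localhost", timeout=30.0):
    """Map each service name to whether its published port accepted a connection."""
    return {
        name: wait_for_port(host, port, timeout)
        for name, port in CLICKHOUSE_PORTS.items()
    }
```

A result such as {"ck1": True, "ck2": True, "ck3": False} from cluster_ready() would point at ck3 as the container to inspect first.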

3 Find the container ID

docker ps -a

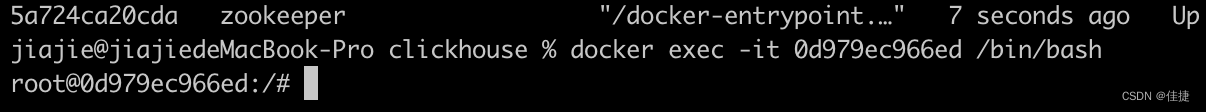

4 Enter the container

docker exec -it <container-id> /bin/bash

5 Connect to ClickHouse

clickhouse-client

6 Query the cluster status

select * from system.clusters;
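
Steps 3 to 6 can also be run non-interactively from the host. The sketch below assumes docker compose is available on the PATH (replace it with docker-compose if only the standalone binary is installed) and that it is run from the directory containing docker-compose.yaml; it addresses the container by its service name instead of its ID. The helper names are hypothetical.

```python
import subprocess

CLUSTER_SQL = (
    "SELECT cluster, shard_num, replica_num, host_name, port "
    "FROM system.clusters FORMAT TabSeparatedWithNames"
)


def client_command(service="ck1", sql=CLUSTER_SQL):
    """Command line that runs clickhouse-client inside a compose service."""
    # -T disables pseudo-TTY allocation so the output can be captured.
    return ["docker", "compose", "exec", "-T", service,
            "clickhouse-client", "--query", sql]


def parse_with_names(tsv_text):
    """Turn TabSeparatedWithNames output into a list of dicts keyed by column."""
    lines = tsv_text.splitlines()
    if not lines:
        return []
    header = lines[0].split("\t")
    return [dict(zip(header, line.split("\t"))) for line in lines[1:]]


def cluster_rows(service="ck1"):
    """Run the cluster query in the given service and return the parsed rows."""
    result = subprocess.run(client_command(service), capture_output=True,
                            text=True, check=True)
    return parse_with_names(result.stdout)
```

If the include from /etc/metrika.xml was picked up, the rows returned by cluster_rows() for test_cluster would be expected to name ck1, ck2 and ck3 as hosts.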
