Recently I wanted to write my own Python scraper for Baidu search; I prefer writing these things myself.

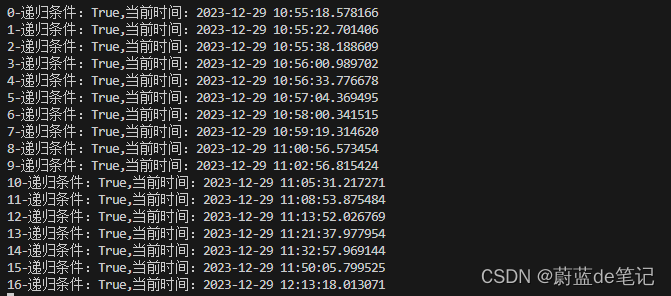

Speed is still far too low: after running for two hours, it produced 8,000 records that met my criteria.

The full scraping code is in the zip archive.

Below are some of the methods and the package imports.

```python
# Imports
from json import loads
import requests
from bs4 import BeautifulSoup
import sqlite3
import os
import jieba
from functools import lru_cache
import time
from datetime import datetime
```

Scraping method

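```python
def get_baidu_keywords(keyword):
    """Fetch the Baidu mobile results page for `keyword` and return its related-search terms."""
    list_keywords = []
    try:
        # Set browser-like request headers (example mobile User-Agent; any realistic UA string works)
        headers = {
            'User-Agent': 'Mozilla/5.0 (iPhone; CPU iPhone OS 16_0 like Mac OS X) '
                          'AppleWebKit/605.1.15 (KHTML, like Gecko) Mobile/15E148'
        }
        # Send the HTTP request for the Baidu results page;
        # passing `params` lets requests URL-encode the keyword safely
        res = requests.get(
            'https://m.baidu.com/s',
            params={'word': keyword, 'ts': 0, 't_kt': 0, 'ie': 'utf-8'},
            headers=headers,
            timeout=10,
        )
        res.raise_for_status()  # raises HTTPError on a 4xx/5xx response
        soup = BeautifulSoup(res.text, 'html.parser')

        # Extract the related terms from the middle of the page
        section = soup.find('section', class_='_content_17i39_210')
        if section:  # the section is not always present
            for item in section.find_all('div', class_='cos-col'):
                a_tag = item.find('a', class_='result-item_5xDvF')
                if a_tag and a_tag.text:
                    list_keywords.append(a_tag.text)

        # Extract the related terms from the bottom of the page
        for item in soup.find_all('div', class_='rw-list-new'):
            a_tag = item.find('a', class_='c-fwb')
            if a_tag and a_tag.text:
                list_keywords.append(a_tag.text)
    except requests.HTTPError as http_err:
        print(f'HTTP error occurred: {http_err}')
    except Exception as err:
        print(f'An error occurred: {err}')
    # Always return a list (empty on failure) so callers can pass it to set.update()
    return list_keywords


@lru_cache(maxsize=100000)  # adjust the cache size limit as needed
def get_baidu_keywords_cached(keyword):
    # Wraps the existing keyword-fetching function with an in-memory cache
    return get_baidu_keywords(keyword)
```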
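The `lru_cache` wrapper means a keyword that turns up again during the crawl is not fetched a second time. A minimal sketch of that behaviour, using a hypothetical `fake_fetch` in place of the real network call so it runs offline:

```python
from functools import lru_cache

calls = []  # records every real (uncached) fetch

def fake_fetch(keyword):
    # Hypothetical stand-in for get_baidu_keywords: no network access
    calls.append(keyword)
    return [f'{keyword} method', f'{keyword} online']

@lru_cache(maxsize=100000)
def fake_fetch_cached(keyword):
    return fake_fetch(keyword)

fake_fetch_cached('赚钱')
fake_fetch_cached('赚钱')      # second call is served from the cache
fake_fetch_cached('副业赚钱')

print(calls)                          # ['赚钱', '副业赚钱']
print(fake_fetch_cached.cache_info())  # CacheInfo(hits=1, misses=2, maxsize=100000, currsize=2)

fake_fetch_cached.cache_clear()  # drop cached results, e.g. before a fresh crawl
```

Because the cache hands back the same list object on every hit, callers should treat the result as read-only. Note also that a failed request (an empty list) is cached too, so that keyword will not be retried until `cache_clear()` is called.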

Recursive scraping method

```python
# Recursive, database-backed search
def searchData_DB(containDataList, searched_keywords_set, keyWordList, depth=0):
    new_keywords = []  # keywords that qualified in this round

    for item in keyWordList:
        # Keep the keyword only if it contains a required term and has not been seen yet
        if any(keyword in item for keyword in containDataList) and item not in searched_keywords_set:
            searched_keywords_set.add(item)  # add to the seen set
            new_keywords.append(item)
            # Insert into the database only if it is not stored yet
            if not get_suggestion('赚钱', item):
                insert_suggestion('赚钱', item)

    new_search_required = bool(new_keywords)
    print(f'{depth} - recursion needed: {new_search_required}, time: {datetime.now()}')

    if new_search_required:
        # Collect search results only for the keywords added in this round,
        # instead of re-checking the whole seen set on every level
        all_new_keywords = set()  # a set avoids duplicates
        for new_keyword in new_keywords:
            # Skip keywords the database already marks as searched
            suggestion_record = get_suggestion('赚钱', new_keyword)
            if suggestion_record is None:
                continue
            if suggestion_record[3] == 1:  # assumes `searched` is the 4th column
                continue
            listData = get_baidu_keywords_cached(new_keyword)
            mark_suggestion_searched(suggestion_record[0])  # assumes `id` is the 1st column
            all_new_keywords.update(listData)  # update() adds several elements at once

        # Recurse on the newly found keywords
        searchData_DB(containDataList, searched_keywords_set, all_new_keywords, depth + 1)
```
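Each call to `searchData_DB` processes the whole set of keywords found on the previous level, so the crawl is effectively a breadth-first search. The sketch below swaps the recursion for an iterative breadth-first loop and leaves out the database; `crawl_keywords`, its `fetch` parameter, the `max_depth` cap, and the `related` dict (a hypothetical stand-in for Baidu's related-search results) are all assumptions, not part of the original script:

```python
def crawl_keywords(seed_keywords, required_terms, fetch, max_depth=10):
    """Breadth-first keyword expansion: an iterative, database-free take on searchData_DB."""
    seen = set()
    frontier = set(seed_keywords)
    for depth in range(max_depth):
        # Keep keywords that contain a required term and have not been seen before
        new_keywords = {k for k in frontier
                        if k not in seen and any(t in k for t in required_terms)}
        print(f'{depth}: {len(new_keywords)} new keywords')
        if not new_keywords:
            break
        seen |= new_keywords
        # The related terms of every new keyword form the next level
        next_frontier = set()
        for keyword in new_keywords:
            next_frontier.update(fetch(keyword))
        frontier = next_frontier
    return seen


# Hypothetical related-search data used instead of live requests
related = {
    '赚钱': ['副业赚钱', '赚钱游戏', '理财'],
    '副业赚钱': ['在家副业赚钱', '赚钱'],
    '赚钱游戏': [],
    '在家副业赚钱': [],
}
result = crawl_keywords(['赚钱'], {'赚钱'}, lambda k: related.get(k, []))
print(sorted(result))
```

In the real script `fetch` could be `get_baidu_keywords_cached`. A loop also sidesteps Python's recursion limit (about 1000 frames by default), although the crawl depth here is normally far smaller than that.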

Main script

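```python
db_connection = None

if __name__ == '__main__':
    initialize_database()
    try:
        # Crawl step (uncomment to run the crawl)
        # get_baidu_keywords_cached.cache_clear()
        # containDataList = {'赚钱'}  # your inclusion condition
        # searched_keywords_set = set()
        # keywordList = get_baidu_keywords_cached('赚钱')
        # print(f'keywordList: {keywordList}')
        # searchData_DB(containDataList, searched_keywords_set, keywordList)

        # Export step; renamed from `list` to avoid shadowing the built-in
        suggestions = find_suggestion_all('赚钱')
        print(f'suggestions: {suggestions}')

        os.makedirs('file', exist_ok=True)  # make sure the output directory exists
        with open('file/sl_new.txt', 'w', encoding='utf-8') as file:
            for word in suggestions:
                file.write(f'{word[1]}\t{word[2]}\n')
    finally:
        close_database()  # close the connection even if an error occurs
```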
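The database helpers (`initialize_database`, `get_suggestion`, `insert_suggestion`, `mark_suggestion_searched`, `find_suggestion_all`, `close_database`) are in the zip and not shown here. Judging only from how they are called above (`id` in the first column, `searched` in the fourth, the second and third columns exported), a minimal hypothetical sketch with `sqlite3` might look like this; the table name `suggestions`, its column names, and the default database path are assumptions:

```python
import sqlite3

db_connection = None  # module-level connection, as in the original script

def initialize_database(path='suggestions.db'):
    # Hypothetical schema: (id, category, keyword, searched)
    global db_connection
    db_connection = sqlite3.connect(path)
    db_connection.execute(
        '''CREATE TABLE IF NOT EXISTS suggestions (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               category TEXT NOT NULL,
               keyword TEXT NOT NULL,
               searched INTEGER NOT NULL DEFAULT 0,
               UNIQUE (category, keyword)
           )'''
    )
    db_connection.commit()

def get_suggestion(category, keyword):
    # Returns the row tuple, or None if the keyword is not stored yet
    cur = db_connection.execute(
        'SELECT id, category, keyword, searched FROM suggestions '
        'WHERE category = ? AND keyword = ?',
        (category, keyword),
    )
    return cur.fetchone()

def insert_suggestion(category, keyword):
    # INSERT OR IGNORE relies on the UNIQUE constraint to skip duplicates
    cur = db_connection.execute(
        'INSERT OR IGNORE INTO suggestions (category, keyword) VALUES (?, ?)',
        (category, keyword),
    )
    db_connection.commit()
    return cur.lastrowid

def mark_suggestion_searched(suggestion_id):
    db_connection.execute('UPDATE suggestions SET searched = 1 WHERE id = ?', (suggestion_id,))
    db_connection.commit()

def find_suggestion_all(category):
    cur = db_connection.execute(
        'SELECT id, category, keyword, searched FROM suggestions WHERE category = ? ORDER BY id',
        (category,),
    )
    return cur.fetchall()

def close_database():
    global db_connection
    if db_connection is not None:
        db_connection.close()
        db_connection = None


# Usage with an in-memory database
initialize_database(':memory:')
insert_suggestion('赚钱', '副业赚钱')
row = get_suggestion('赚钱', '副业赚钱')
mark_suggestion_searched(row[0])
print(find_suggestion_all('赚钱'))  # [(1, '赚钱', '副业赚钱', 1)]
close_database()
```

Committing after every write keeps the sketch simple but is slow; batching several writes per commit is one possible speed-up for a long crawl.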
