1 前言

基于JAVA8 环境 + Centos7 服务器 + Flink1.14.6.

Flink安装部署测试可见1

Flink1.14.6安装部署参考

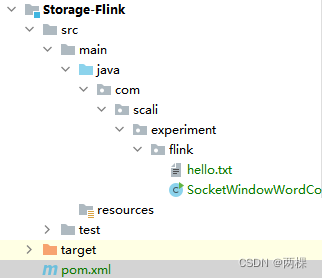

2 构建Java项目

2.1 Maven建立项目

导入项目依赖

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>1.14.6</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.12</artifactId>

<version>1.14.6</version>

</dependency>

</dependencies>

代码参考网址:

2.2 模拟WindowWordCount

package com.scali.experiment.flink;

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.common.functions.ReduceFunction;

import org.apache.flink.api.java.utils.ParameterTool;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.flink.util.Collector;

public class SocketWindowWordCount {

public static void main(String[] args) throws Exception {

// 该部分代码从官网copy出来的,前面有一部分是hostname和port直接修改为默认。

// ip随便命名

final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// get input data by connecting to the socket

DataStream<String> text = env.socketTextStream("202.127.205.60", 9999, "\n");

// parse the data, group it, window it, and aggregate the counts

DataStream<WordWithCount> windowCounts =

text.flatMap(

new FlatMapFunction<String, WordWithCount>() {

@Override

public void flatMap(

String value, Collector<WordWithCount> out) {

for (String word : value.split("\\s")) {

out.collect(new WordWithCount(word, 1L));

}

}

})

.keyBy(value -> value.word)

.window(TumblingProcessingTimeWindows.of(Time.seconds(5)))

.reduce(

new ReduceFunction<WordWithCount>() {

@Override

public WordWithCount reduce(WordWithCount a, WordWithCount b) {

return new WordWithCount(a.word, a.count + b.count);

}

});

// print the results with a single thread, rather than in parallel

windowCounts.print().setParallelism(1);

env.execute("Socket Window WordCount");

}

// ------------------------------------------------------------------------

/** Data type for words with count. */

public static class WordWithCount {

public String word;

public long count;

public WordWithCount() {}

public WordWithCount(String word, long count) {

this.word = word;

this.count = count;

}

@Override

public String toString() {

return word + " : " + count;

}

}

}

保存代码。使用了IDEA,所以直接进行打包

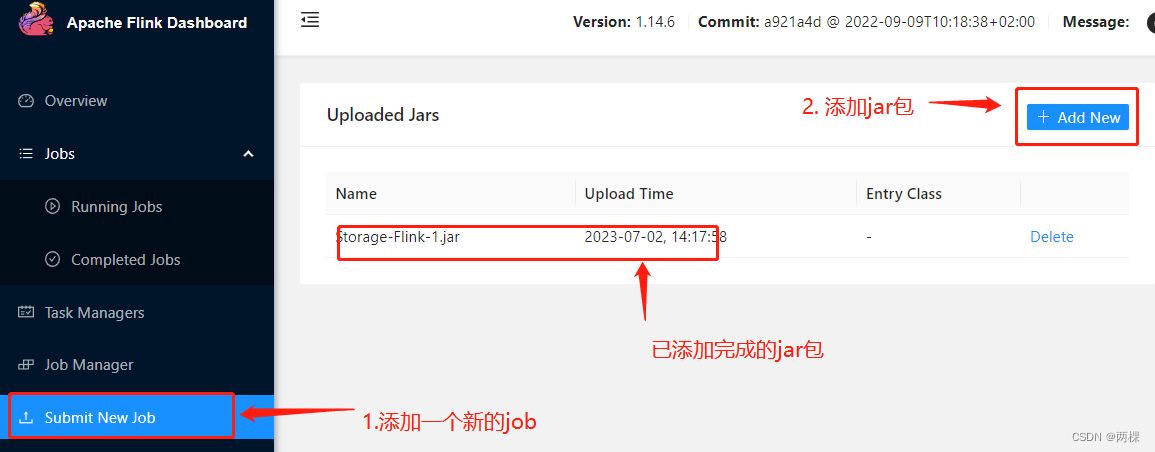

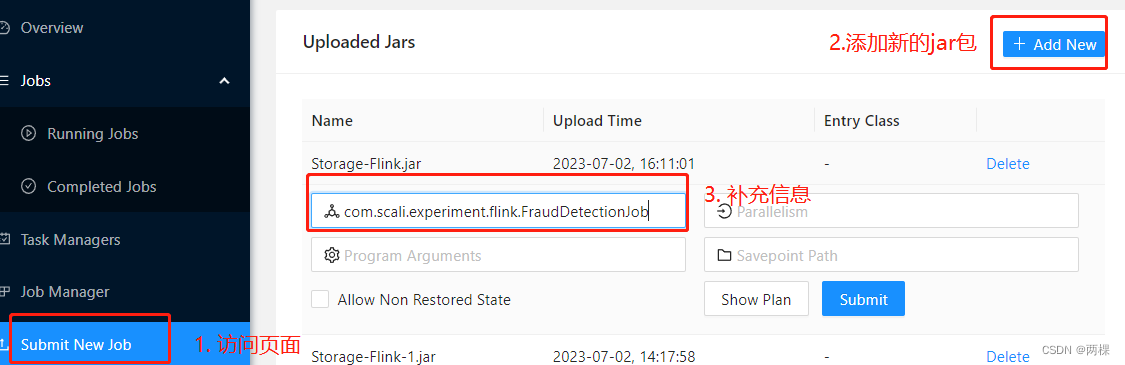

- 访问ip:8081【未修改端口默认使用8081端口访问web页面。所以其他微服务尽量将8081端口空余,如果有修改方案也可以采用,此处为了方便直接用默认端口】

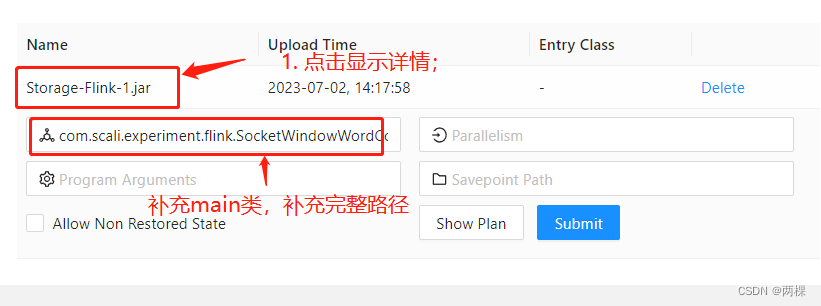

- 在上述图片第二步中,点击ADD NEW直接跳出系统文件窗口,选择打包后target目录下的jar包即可完成上传。

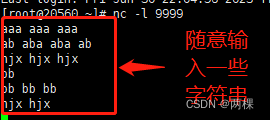

- 在服务器中输入

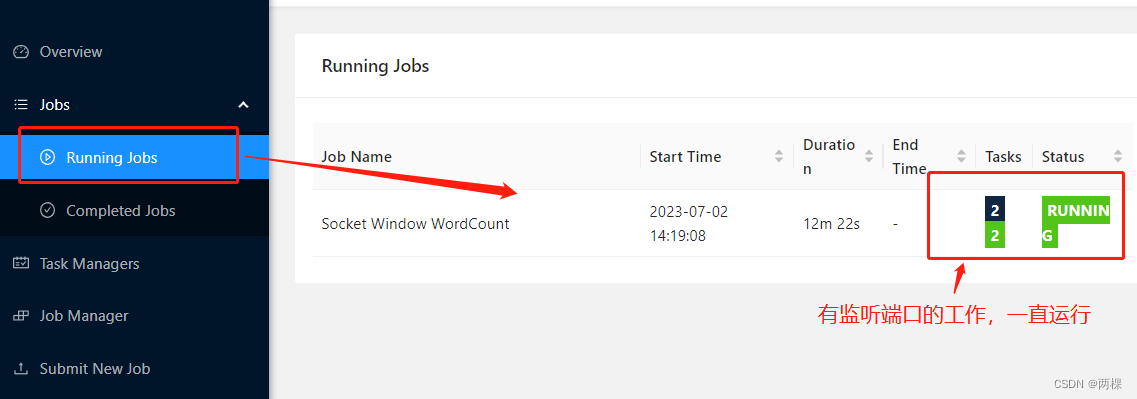

nc -l 9999, - 完成步骤3添加后对类进行说明,点击submit提交后开始运行。

- 查看代码运行效果

- 服务器中随意输入一些字符串文本进行测试

- 查看输出

3. 基于 DataStream API 实现欺诈检测

3.1 环境准备

参考网址:flink1.14.4说明文档

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-core</artifactId>

<version>1.14.6</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>1.14.6</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.12</artifactId>

<version>1.14.6</version>

</dependency>

<!-- 欺诈检测的common依赖包,包括一些实体等内容-->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-walkthrough-common_2.12</artifactId>

<version>1.14.6</version>

</dependency>

</dependencies>

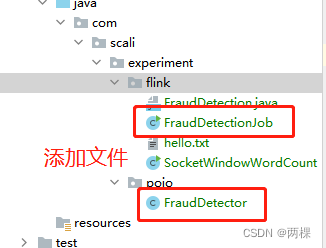

3.2 项目构建

- FraudDetectionJob代码

package com.scali.experiment.flink;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.walkthrough.common.entity.Alert;

import org.apache.flink.walkthrough.common.entity.Transaction;

import org.apache.flink.walkthrough.common.sink.AlertSink;

import org.apache.flink.walkthrough.common.source.TransactionSource;

/**

* @Author:HE

* @Date:Created in 15:42 2023/7/2

* @Description:

*/

public class FraudDetectionJob {

public static void main(String[] args) throws Exception {

// 设置任务环境

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

//创建数据源:Transaction是个实体类,可以后期修改,自己建实体类等信息

DataStream<Transaction> transactions = env

// TransactionSource是准备好的数据源

.addSource(new TransactionSource())

.name("transactions");

//一个task处理同一个key的所有数据。 DataStream#keyBy 对流进行分区,

DataStream<Alert> alerts = transactions

.keyBy(Transaction::getAccountId)

// 绑定数据流,执行FraudDetector中的操作

.process(new FraudDetector())

.name("fraud-detector");

alerts

// AlertSink 使用 INFO 的日志级别打印每一个 Alert 的数据记录了,可以将结果输入到kafka,

.addSink(new AlertSink())

.name("send-alerts");

env.execute("Fraud Detection");

}

}

- FraudDetector代码

package com.scali.experiment.flink;

import org.apache.flink.api.common.state.ValueState;

import org.apache.flink.api.common.state.ValueStateDescriptor;

import org.apache.flink.api.common.typeinfo.Types;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.functions.KeyedProcessFunction;

import org.apache.flink.util.Collector;

import org.apache.flink.walkthrough.common.entity.Alert;

import org.apache.flink.walkthrough.common.entity.Transaction;

// KeyedProcessFunction的接口实现

//KeyedProcessFunction:同时提供对状态和时间的细粒度操作

public class FraudDetector extends KeyedProcessFunction<Long, Transaction, Alert> {

private static final long serialVersionUID = 1L;

private static final double SMALL_AMOUNT = 1.00;

private static final double LARGE_AMOUNT = 500.00;

private static final long ONE_MINUTE = 60 * 1000;

private transient ValueState<Boolean> flagState;

private transient ValueState<Long> timerState;

//通过定时器判断两次取钱间隔是否超过一分钟;通过flag判断上一次取钱行为是否被标记为小数目取钱

// 综合判断本次取钱是否有问题

@Override

public void open(Configuration parameters) {

ValueStateDescriptor<Boolean> flagDescriptor = new ValueStateDescriptor<>(

"flag",

Types.BOOLEAN);

flagState = getRuntimeContext().getState(flagDescriptor);

ValueStateDescriptor<Long> timerDescriptor = new ValueStateDescriptor<>(

"timer-state",

Types.LONG);

timerState = getRuntimeContext().getState(timerDescriptor);

}

@Override

public void processElement(

Transaction transaction,

Context context,

Collector<Alert> collector) throws Exception {

// 获取上一次取钱行为判断是否设置了值

Boolean lastTransactionWasSmall = flagState.value();

// Check if the flag is set

if (lastTransactionWasSmall != null) {

if (transaction.getAmount() > LARGE_AMOUNT) {

//Output an alert downstream

Alert alert = new Alert();

alert.setId(transaction.getAccountId());

collector.collect(alert);

}

// Clean up our state

cleanUp(context);

}

if (transaction.getAmount() < SMALL_AMOUNT) {

// set the flag to true

flagState.update(true);

long timer = context.timerService().currentProcessingTime() + ONE_MINUTE;

context.timerService().registerProcessingTimeTimer(timer);

timerState.update(timer);

}

}

//定时器服务可以用于查询当前时间、注册定时器和删除定时器。

@Override

public void onTimer(long timestamp, OnTimerContext ctx, Collector<Alert> out) {

// remove flag after 1 minute

timerState.clear();

flagState.clear();

}

private void cleanUp(Context ctx) throws Exception {

// delete timer

Long timer = timerState.value();

ctx.timerService().deleteProcessingTimeTimer(timer);

// clean up all state

timerState.clear();

flagState.clear();

}

}

-

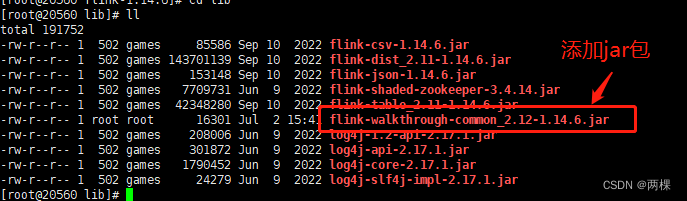

需要在flink的lib目录下添加相关jar包,否则后面会有NoclassError错误

访问bin目录重启flink。 -

打包后上传到flink进行执行

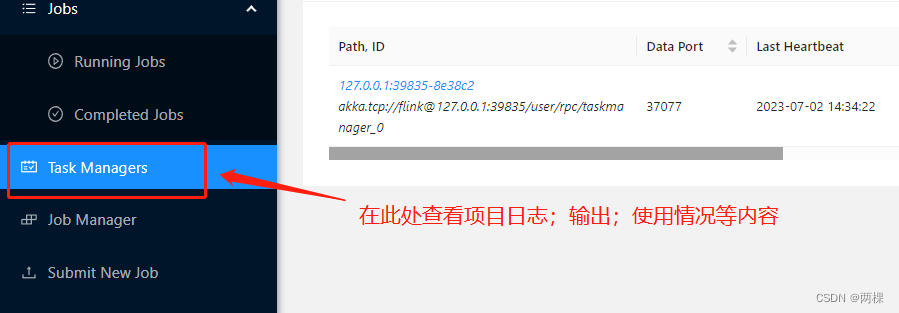

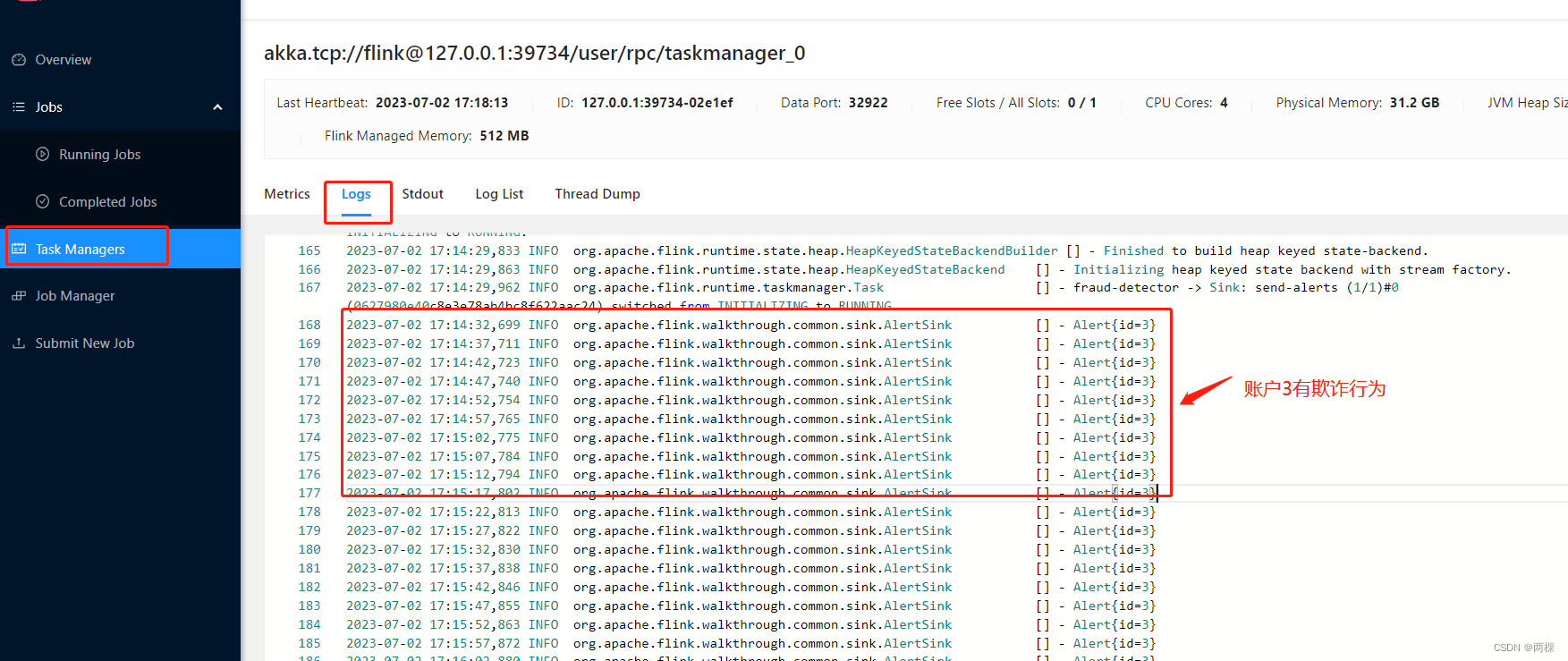

3.3 结果查看

查看TaskManager中的结果

926

926

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?