1. Deploy node-exporter

Write pv-pvc-prometheus.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-proc-prometheus
spec:
  capacity:
    storage: 3Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: nfs-p
  nfs:
    path: /nfs/data/prometheus/proc
    server: 192.168.3.64
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-dev-prometheus
spec:
  capacity:
    storage: 3Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: nfs-p
  nfs:
    path: /nfs/data/prometheus/dev
    server: 192.168.3.64
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-sys-prometheus
spec:
  capacity:
    storage: 3Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: nfs-p
  nfs:
    path: /nfs/data/prometheus/sys
    server: 192.168.3.64
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-rootfs-prometheus
spec:
  capacity:
    storage: 3Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: nfs-p
  nfs:
    path: /nfs/data/prometheus/rootfs
    server: 192.168.3.64

---

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  namespace: monitor-sa
  name: pvc-proc-prometheus
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 2Gi
  storageClassName: nfs-p
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  namespace: monitor-sa
  name: pvc-dev-prometheus
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 2Gi
  storageClassName: nfs-p
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  namespace: monitor-sa
  name: pvc-sys-prometheus
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 2Gi
  storageClassName: nfs-p
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  namespace: monitor-sa
  name: pvc-rootfs-prometheus
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 2Gi
  storageClassName: nfs-p
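
The four PV/PVC pairs in pv-pvc-prometheus.yaml differ only in a short name (proc, dev, sys, rootfs). As a minimal sketch, not part of the original procedure, the Python script below renders equivalent manifests from that list using only the standard library. The function name `render_manifests` and the template layout are illustrative assumptions; the values are copied from the manifests.

```python
from string import Template

# Values taken from the manifests in pv-pvc-prometheus.yaml
NAMES = ["proc", "dev", "sys", "rootfs"]
NFS_SERVER = "192.168.3.64"

PV_TEMPLATE = Template("""\
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-$name-prometheus
spec:
  capacity:
    storage: 3Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: nfs-p
  nfs:
    path: /nfs/data/prometheus/$name
    server: $server
""")

PVC_TEMPLATE = Template("""\
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  namespace: monitor-sa
  name: pvc-$name-prometheus
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 2Gi
  storageClassName: nfs-p
""")


def render_manifests(names=NAMES, server=NFS_SERVER):
    """Return one multi-document YAML string: all PVs first, then all PVCs."""
    docs = [PV_TEMPLATE.substitute(name=n, server=server) for n in names]
    docs += [PVC_TEMPLATE.substitute(name=n) for n in names]
    # Separate the documents with the YAML document marker
    return "---\n".join(docs)


if __name__ == "__main__":
    print(render_manifests())
```

Redirecting the script's output to pv-pvc-prometheus.yaml should give a file equivalent to the hand-written one.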

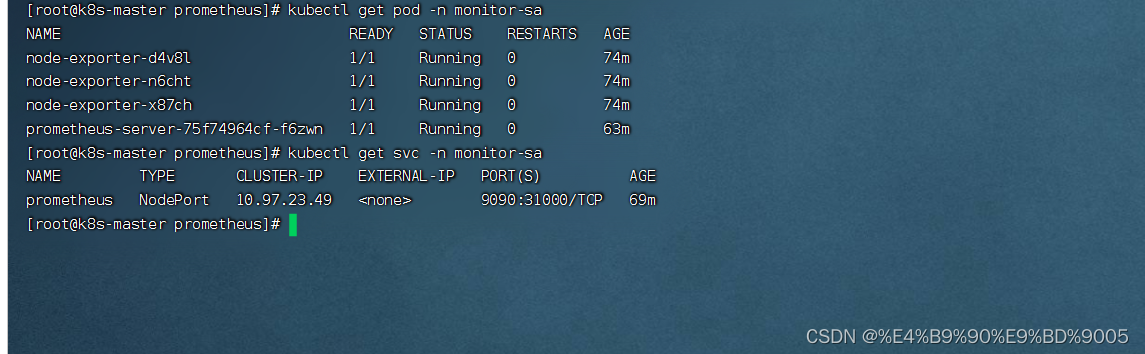

#Create the monitoring namespace

kubectl create ns monitor-sa

#Deploy node-exporter

mkdir /opt/prometheus

cd /opt/prometheus/

vim node-export.yaml

---

apiVersion: apps/v1
kind: DaemonSet # ensures every node in the k8s cluster runs an identical pod
metadata:
  name: node-exporter
  namespace: monitor-sa
  labels:
    name: node-exporter
spec:
  selector:
    matchLabels:
      name: node-exporter
  template:
    metadata:
      labels:
        name: node-exporter
    spec:
      hostPID: true
      hostIPC: true
      hostNetwork: true
      containers:
      - name: node-exporter
        image: prom/node-exporter:v0.16.0
        ports:
        - containerPort: 9100
        resources:
          requests:
            cpu: 0.15 # the container requests at least 0.15 CPU cores
        securityContext:
          privileged: true # run in privileged mode
        args:
        - --path.procfs
        - /host/proc
        - --path.sysfs
        - /host/sys
        - --collector.filesystem.ignored-mount-points
        - '^/(sys|proc|dev|host|etc)($|/)'
        volumeMounts:
        - name: dev
          mountPath: /host/dev
        - name: proc
          mountPath: /host/proc
        - name: sys
          mountPath: /host/sys
        - name: rootfs
          mountPath: /rootfs
      tolerations:
      - key: "node-role.kubernetes.io/master"
        operator: "Exists"
        effect: "NoSchedule"
      volumes:
      - name: dev
        persistentVolumeClaim:
          claimName: pvc-dev-prometheus
      - name: proc
        persistentVolumeClaim:
          claimName: pvc-proc-prometheus
      - name: sys
        persistentVolumeClaim:
          claimName: pvc-sys-prometheus
      - name: rootfs
        persistentVolumeClaim:
          claimName: pvc-rootfs-prometheus

2. Create a ServiceAccount and grant it RBAC permissions

#Create a ServiceAccount named monitor

kubectl create serviceaccount monitor -n monitor-sa

#Bind the monitor ServiceAccount to a ClusterRole through a ClusterRoleBinding

kubectl create clusterrolebinding monitor-clusterrolebinding -n monitor-sa --clusterrole=cluster-admin --serviceaccount=monitor-sa:monitor

3. Create a ConfigMap volume to hold the Prometheus configuration

vim prometheus-cfg.yaml

---

kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    app: prometheus
  name: prometheus-config
  namespace: monitor-sa
data:
  prometheus.yml: |
    global: # global Prometheus settings such as the scrape interval and scrape timeout
      scrape_interval: 15s # how often targets are scraped; the default is 1m
      scrape_timeout: 10s # scrape timeout; the default is 10s
      evaluation_interval: 1m # how often rules are evaluated to generate alerts; the default is 1m

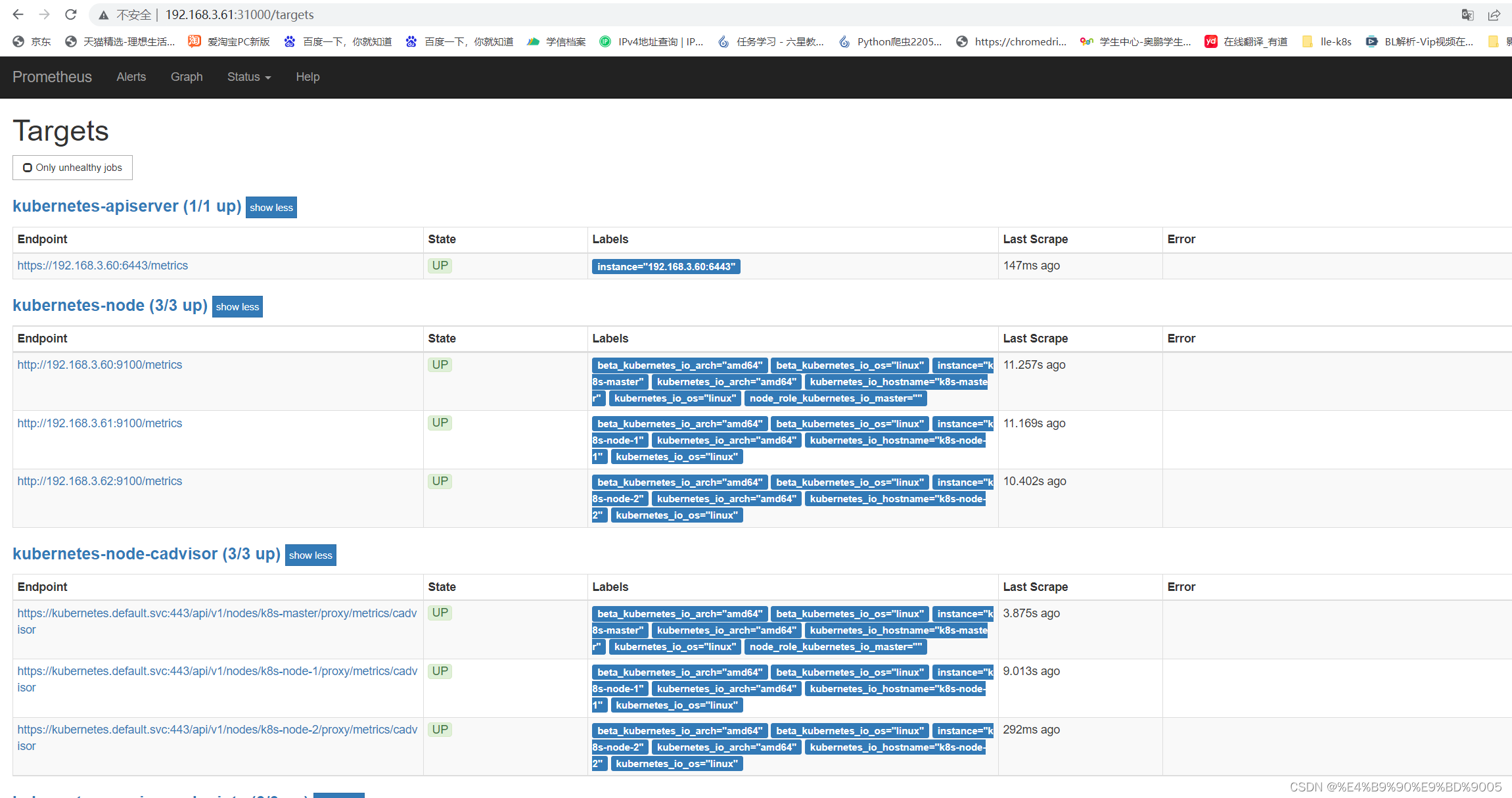

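    scrape_configs: # data sources, called targets, grouped under a job_name; they come from static configuration or service discovery
    - job_name: 'kubernetes-node'
      kubernetes_sd_configs: # *_sd_configs selects a service discovery mechanism, here Kubernetes
      - role: node # the node role discovers every node in the cluster through the kubelet's default HTTP port
      relabel_configs: # relabeling
      - source_labels: [__address__] # source label to match against: the target address
        regex: '(.*):10250' # match addresses that end in port 10250
        replacement: '${1}:9100' # keep the host captured from host:10250 and append port 9100
        target_label: __address__ # write the new host:9100 address back to __address__
        action: replace # replace the label value
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+) # labels matching this regex are kept, renamed to the captured suffix; without this rule only the instance label is shown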

    - job_name: 'kubernetes-node-cadvisor' # scrape cAdvisor data from the kubelet's /metrics/cadvisor endpoint to get container resource usage
      kubernetes_sd_configs:
      - role: node
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap # keep the matching labels
        regex: __meta_kubernetes_node_label_(.+) # keep labels prefixed with __meta_kubernetes_node_label_
      - target_label: __address__ # the discovered address, for example __address__="192.168.80.20:10250"
        replacement: kubernetes.default.svc:443 # is replaced with the new address kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+) # capture the value of the source label __meta_kubernetes_node_name
        target_label: __metrics_path__ # the label that holds the metrics path
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
        # the metrics path becomes, for example, /api/v1/nodes/k8s-master1/proxy/metrics/cadvisor
        # ${1} is the value captured from __meta_kubernetes_node_name
        # the resulting URL is https://kubernetes.default.svc:443/api/v1/nodes/k8s-master1/proxy/metrics/cadvisor

    - job_name: 'kubernetes-apiserver'
      kubernetes_sd_configs:
      - role: endpoints # use Kubernetes endpoints service discovery to scrape the apiserver on port 6443
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name] # [namespace of the endpoints object, its service name, its port name]
        action: keep # scrape only the instances that match; all others are dropped
        regex: default;kubernetes;https # keep the endpoint in the default namespace whose service is named kubernetes and whose port is named https

    - job_name: 'kubernetes-service-endpoints'
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
        action: keep
        regex: true
        # Keep only endpoints whose service carries the annotation "prometheus.io/scrape: true". Annotations are key/value pairs, so the source label is the key and regex is the value true; a target whose value matches regex is kept and all others are dropped.
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
        # Set the scheme from the prometheus.io/scheme annotation: if the annotation value matches regex (http or https), that value is written to __scheme__.
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
        # An application may expose its metrics on a path other than /metrics. In that case declare "prometheus.io/path = /mymetrics" on the pod's service; this rule assigns the declared path to __metrics_path__ so that Prometheus scrapes the path the application really exposes. The label name must agree with the annotation used on the service: if the service declares prometheus.io/app-metrics-path: '/metrics', the source label here must be __meta_kubernetes_service_annotation_prometheus_io_app_metrics_path.
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
        # Expose a custom application port: the host part of the address is joined with the "prometheus.io/port = <port>" annotation declared on the service and written back to __address__. Prometheus then scrapes that port together with __metrics_path__; if the path is not the default /metrics, the rule above supplies the real one.
      - action: labelmap # keep the labels matched below
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace # copy the value of __meta_kubernetes_namespace into kubernetes_namespace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name
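
The `replace` rules in this configuration all follow the same pattern: join the source label values with `;`, match the whole string against `regex`, and on a match write the expanded `replacement` into `target_label`. The Python sketch below models that behaviour so the address and path rewrites can be checked by hand. It is a simplified illustration, not Prometheus code: the function name `relabel_replace` is made up here, Python's `re` stands in for Prometheus' RE2 engine, and the pod address `10.244.1.7:80` is a hypothetical value.

```python
import re


def relabel_replace(labels, target_label, source_labels=(), regex="(.*)",
                    replacement="$1", separator=";"):
    """Simplified model of a Prometheus `replace` relabel rule.

    Returns a new label dict. If the regex does not match the joined
    source values, the labels are returned unchanged.
    """
    joined = separator.join(labels.get(name, "") for name in source_labels)
    match = re.fullmatch(regex, joined)  # Prometheus anchors the regex at both ends
    if match is None:
        return dict(labels)
    # Translate Prometheus-style $1 / ${1} references to Python's \g<1>
    template = re.sub(r"\$\{?(\d+)\}?", r"\\g<\1>", replacement)
    result = dict(labels)
    result[target_label] = match.expand(template)
    return result


# kubernetes-node job: kubelet port 10250 becomes node-exporter port 9100
node = relabel_replace(
    {"__address__": "192.168.80.20:10250"},
    target_label="__address__",
    source_labels=["__address__"],
    regex="(.*):10250",
    replacement="${1}:9100",
)
print(node["__address__"])  # 192.168.80.20:9100

# kubernetes-node-cadvisor job: node name becomes the apiserver proxy path
cadvisor = relabel_replace(
    {"__meta_kubernetes_node_name": "k8s-master1"},
    target_label="__metrics_path__",
    source_labels=["__meta_kubernetes_node_name"],
    regex="(.+)",
    replacement="/api/v1/nodes/${1}/proxy/metrics/cadvisor",
)
print(cadvisor["__metrics_path__"])  # /api/v1/nodes/k8s-master1/proxy/metrics/cadvisor

# kubernetes-service-endpoints job: host + annotated port (hypothetical pod address)
PORT_LABEL = "__meta_kubernetes_service_annotation_prometheus_io_port"
service = relabel_replace(
    {"__address__": "10.244.1.7:80", PORT_LABEL: "8080"},
    target_label="__address__",
    source_labels=["__address__", PORT_LABEL],
    regex=r"([^:]+)(?::\d+)?;(\d+)",
    replacement="$1:$2",
)
print(service["__address__"])  # 10.244.1.7:8080
```

When the port annotation is absent, the joined string ends in `;` with no digits after it, the regex does not match, and `__address__` stays as discovered.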

4. Deploy Prometheus with a Deployment

#Prometheus is scheduled onto node1, so create the Prometheus data directory on node1

mkdir /data && chmod 777 /data

#Deploy Prometheus with a Deployment

vim prometheus-deploy.yaml


---

apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus-server
  namespace: monitor-sa
  labels:
    app: prometheus
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
      component: server
    #matchExpressions:
    #- {key: app, operator: In, values: [prometheus]}
    #- {key: component, operator: In, values: [server]}
  template:
    metadata:
      labels:
        app: prometheus
        component: server
      annotations:
        prometheus.io/scrape: 'false'
    spec:
      nodeName: k8s-node-1 # the node the pod is scheduled onto
      serviceAccountName: monitor
      containers:
      - name: prometheus
        image: prom/prometheus:v2.2.1
        imagePullPolicy: IfNotPresent
        command:
        - prometheus
        - --config.file=/etc/prometheus/prometheus.yml
        - --storage.tsdb.path=/prometheus # data directory
        - --storage.tsdb.retention=720h # how long data is retained
        - --web.enable-lifecycle # enable hot reload
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - mountPath: /etc/prometheus/prometheus.yml
          name: prometheus-config
          subPath: prometheus.yml
        - mountPath: /prometheus/
          name: prometheus-storage-volume
      volumes:
      - name: prometheus-config
        configMap:
          name: prometheus-config
          items:
          - key: prometheus.yml
            path: prometheus.yml
            mode: 0644
      - name: prometheus-storage-volume
        hostPath:
          path: /data
          type: Directory

---

apiVersion: v1
kind: Service
metadata:
  name: prometheus
  namespace: monitor-sa
  labels:
    app: prometheus
spec:
  type: NodePort
  ports:
  - port: 9090
    targetPort: 9090
    protocol: TCP
    nodePort: 31000
  selector:
    app: prometheus
    component: server
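
Once the Service is up, Prometheus is reachable on NodePort 31000 of any node, and its HTTP API endpoint `/api/v1/targets` reports the state of each scrape target. The Python sketch below shows one way to read that endpoint and list each target's job, scrape URL and health. The helper names `summarize_targets` and `fetch_targets` are illustrative, and the `sample` payload is a hypothetical fragment shaped like the API response, not output captured from this cluster.

```python
import json
import urllib.request


def summarize_targets(payload):
    """Return (job, scrape URL, health) tuples from a /api/v1/targets response."""
    rows = []
    for target in payload["data"]["activeTargets"]:
        rows.append((
            target["labels"].get("job", ""),
            target["scrapeUrl"],
            target["health"],
        ))
    return rows


def fetch_targets(node_ip, node_port=31000, timeout=10):
    """Query Prometheus through the NodePort Service and parse the JSON body."""
    url = f"http://{node_ip}:{node_port}/api/v1/targets"
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


# Hypothetical response fragment used only to demonstrate summarize_targets
sample = {
    "status": "success",
    "data": {
        "activeTargets": [
            {
                "labels": {"job": "kubernetes-node", "instance": "192.168.80.20:9100"},
                "scrapeUrl": "http://192.168.80.20:9100/metrics",
                "health": "up",
            }
        ]
    },
}

for row in summarize_targets(sample):
    print(row)
```

Against a live cluster, `summarize_targets(fetch_targets("<node IP>"))` would be called with the address of any node in place of the placeholder.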

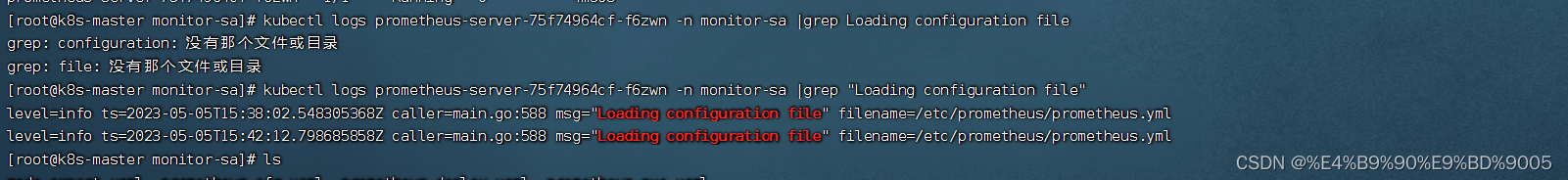

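5. Hot-reload the Prometheus configuration

###Hot reloading applies a modified configuration file without stopping Prometheus. Look up the pod IP first:

kubectl get pods -n monitor-sa -o wide -l app=prometheus

#Then hot-reload with the following command so the configuration takes effect

curl -X POST -Ls http://10.244.2.5:9090/-/reload

#Check the log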

kubectl logs -n monitor-sa prometheus-server-75f74964cf-f6zwn | grep "Loading configuration file"
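
The reload call is a plain HTTP POST to `/-/reload`, which is available because the Deployment passes `--web.enable-lifecycle`. As a minimal sketch, assuming the script runs somewhere that can reach the pod IP, the same request can be sent from Python with the standard library. The helper names `build_reload_request` and `reload_prometheus` are illustrative.

```python
import urllib.request


def build_reload_request(pod_ip, port=9090):
    """Build (but do not send) the POST request for the reload endpoint."""
    url = f"http://{pod_ip}:{port}/-/reload"
    # An empty body with method="POST" mirrors `curl -X POST`
    return urllib.request.Request(url, data=b"", method="POST")


def reload_prometheus(pod_ip, port=9090, timeout=10):
    """Send the reload request and return the HTTP status code.

    urllib raises urllib.error.HTTPError for non-2xx responses, for
    example when the lifecycle API is disabled or the config is invalid.
    """
    request = build_reload_request(pod_ip, port)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return resp.status


# Usage with the pod IP from the curl example:
#   reload_prometheus("10.244.2.5")
```

A successful reload should then show up in the pod log as a "Loading configuration file" line, as in the kubectl logs command.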

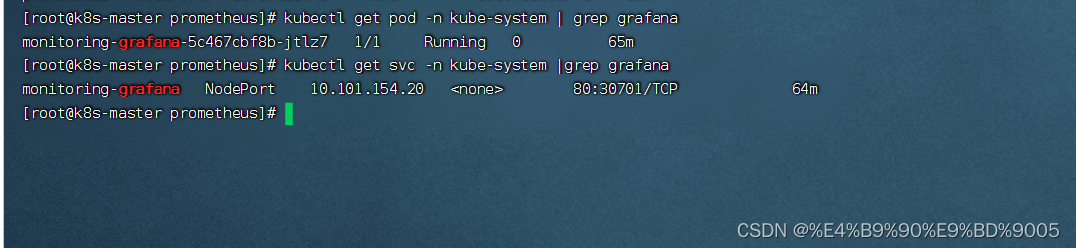

6. Install Grafana

vim grafana.yaml

---

apiVersion: apps/v1
kind: Deployment
metadata:
  name: monitoring-grafana
  namespace: kube-system
spec:
  replicas: 1
  selector:
    matchLabels:
      task: monitoring
      k8s-app: grafana
  template:
    metadata:
      labels:
        task: monitoring
        k8s-app: grafana
    spec:
      containers:
      - name: grafana
        image: grafana/grafana:5.0.4
        ports:
        - containerPort: 3000
          protocol: TCP
        volumeMounts:
        - mountPath: /etc/ssl/certs
          name: ca-certificates
          readOnly: true
        - mountPath: /var
          name: grafana-storage
        env:
        - name: INFLUXDB_HOST
          value: monitoring-influxdb
        - name: GF_SERVER_HTTP_PORT
          value: "3000"
        - name: GF_AUTH_BASIC_ENABLED
          value: "false"
        - name: GF_AUTH_ANONYMOUS_ENABLED
          value: "true"
        - name: GF_AUTH_ANONYMOUS_ORG_ROLE
          value: Admin
        - name: GF_SERVER_ROOT_URL
          value: /
      volumes:
      - name: ca-certificates
        hostPath:
          path: /etc/ssl/certs
      - name: grafana-storage
        emptyDir: {}

---

apiVersion: v1
kind: Service
metadata:
  labels:
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: monitoring-grafana
  name: monitoring-grafana
  namespace: kube-system
spec:
  ports:
  - port: 80
    targetPort: 3000
  selector:
    k8s-app: grafana
  type: NodePort

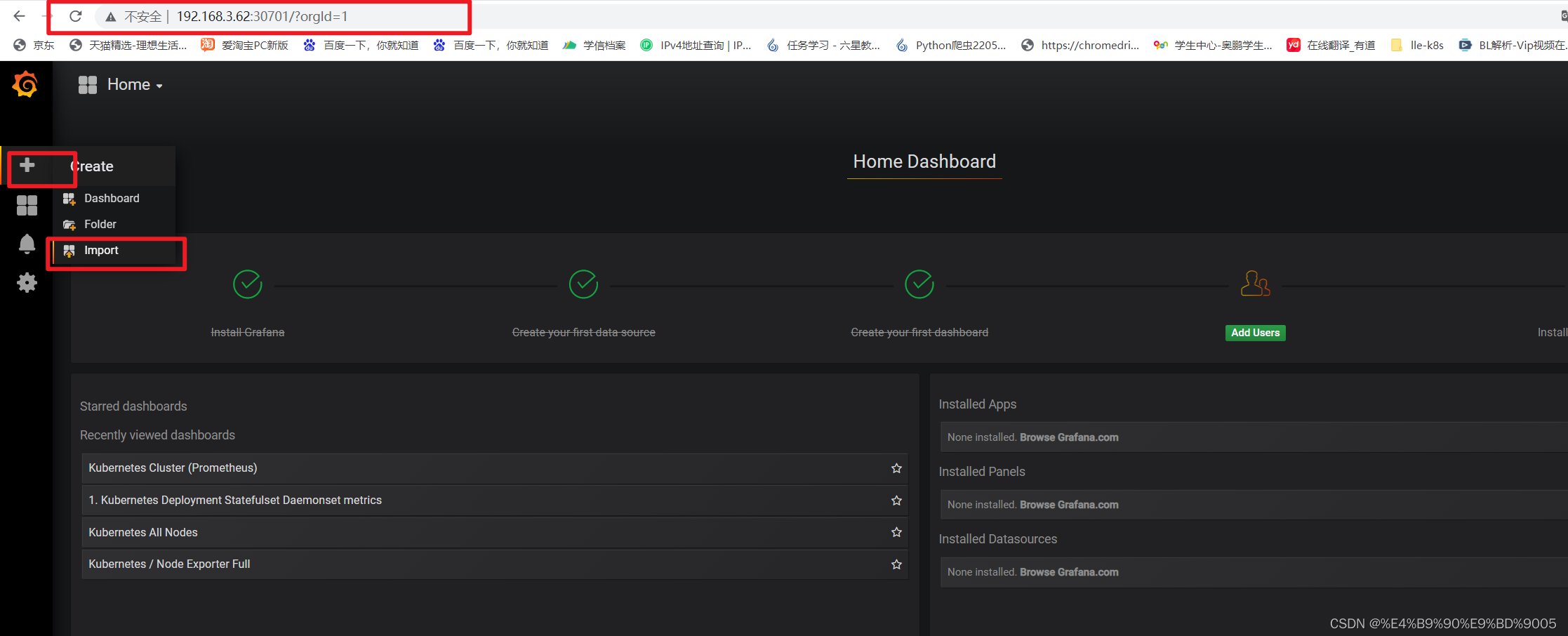

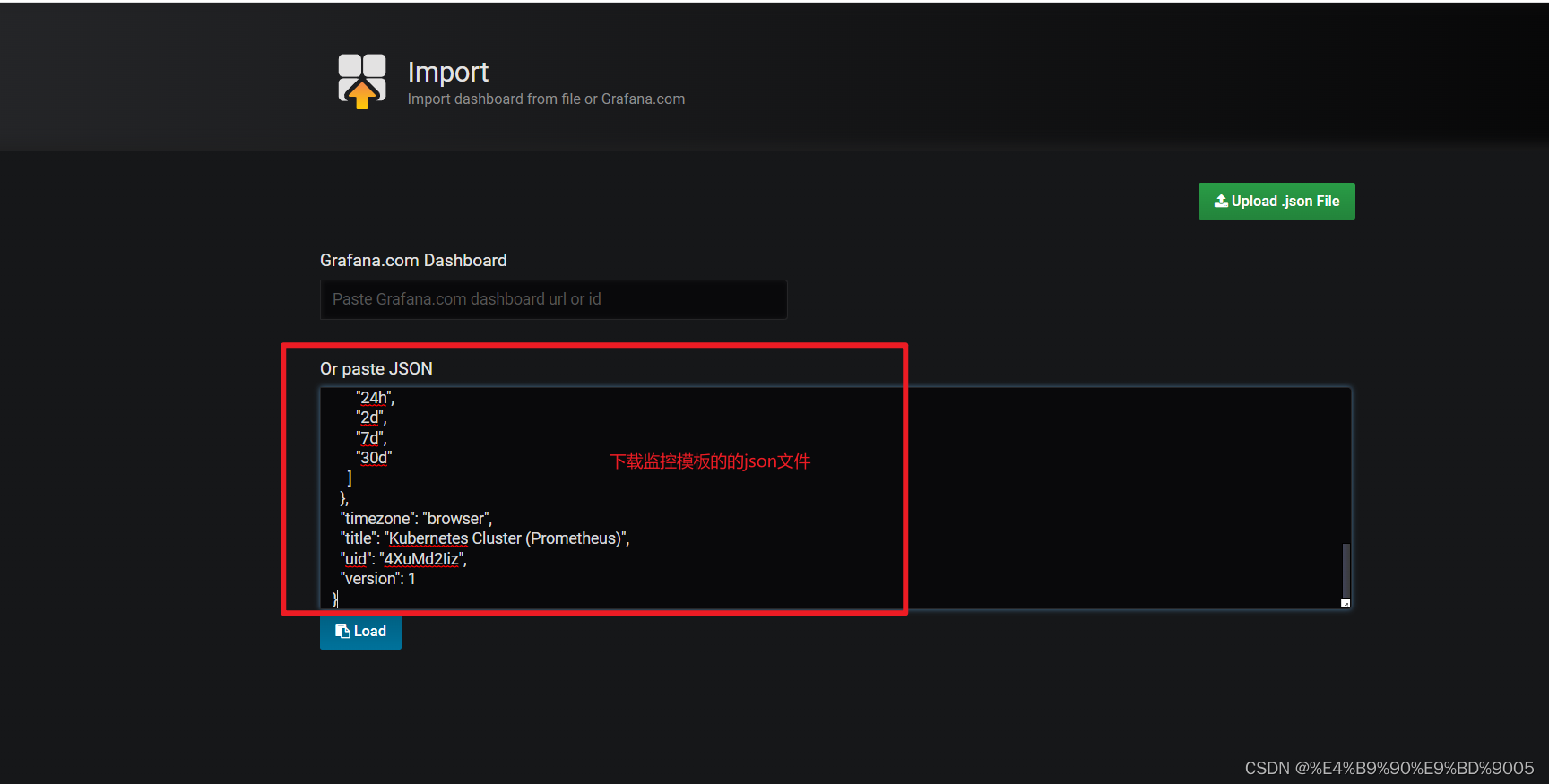

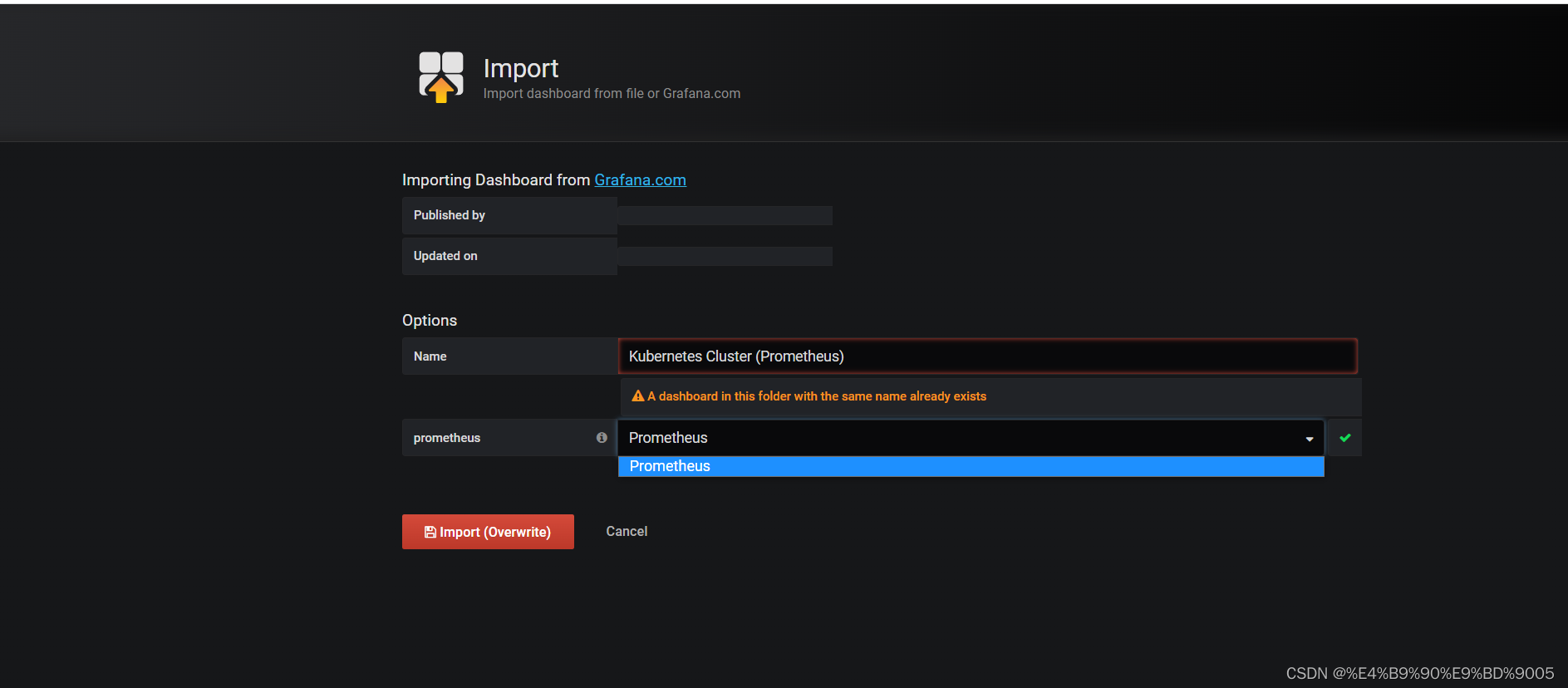

#Link for importing monitoring dashboard templates:

Search the official site: https://grafana.com/dashboards?dataSource=prometheus&search=kubernetes

7. kube-state-metrics: create a ServiceAccount and grant it permissions

vim kube-state-metrics-rbac.yaml

---

apiVersion: v1
kind: ServiceAccount
metadata:
  name: kube-state-metrics
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: kube-state-metrics
rules:
- apiGroups: [""]
  resources: ["nodes", "pods", "services", "resourcequotas", "replicationcontrollers", "limitranges", "persistentvolumeclaims", "persistentvolumes", "namespaces", "endpoints"]
  verbs: ["list", "watch"]
- apiGroups: ["extensions"]
  resources: ["daemonsets", "deployments", "replicasets"]
  verbs: ["list", "watch"]
- apiGroups: ["apps"]
  resources: ["statefulsets"]
  verbs: ["list", "watch"]
- apiGroups: ["batch"]
  resources: ["cronjobs", "jobs"]
  verbs: ["list", "watch"]
- apiGroups: ["autoscaling"]
  resources: ["horizontalpodautoscalers"]
  verbs: ["list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kube-state-metrics
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kube-state-metrics
subjects:
- kind: ServiceAccount
  name: kube-state-metrics
  namespace: kube-system

8. Install the kube-state-metrics component and Service

vim kube-state-metrics-deploy.yaml

---

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kube-state-metrics
  namespace: kube-system
spec:
  replicas: 1
  selector:
    matchLabels:
      app: kube-state-metrics
  template:
    metadata:
      labels:
        app: kube-state-metrics
    spec:
      serviceAccountName: kube-state-metrics
      containers:
      - name: kube-state-metrics
        image: quay.io/coreos/kube-state-metrics:v1.9.0
        ports:
        - containerPort: 8080
---
apiVersion: v1
kind: Service
metadata:
  annotations:
    prometheus.io/scrape: 'true'
  name: kube-state-metrics
  namespace: kube-system
  labels:
    app: kube-state-metrics
spec:
  ports:
  - name: kube-state-metrics
    port: 8080
    protocol: TCP
  selector:
    app: kube-state-metrics
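
The `prometheus.io/scrape: 'true'` annotation on this Service is what lets the kubernetes-service-endpoints job from step 3 pick it up: Prometheus exposes each Service annotation as a `__meta_kubernetes_service_annotation_<name>` label, with characters that are not valid in label names replaced by `_`, and the job's `keep` rule checks that label against `true`. The Python sketch below models that mapping and the keep decision. The function names `annotation_to_meta_label` and `is_kept` are illustrative and not part of any Prometheus API.

```python
import re

META_PREFIX = "__meta_kubernetes_service_annotation_"


def annotation_to_meta_label(annotation_key):
    """Map a Service annotation key to the meta label name used in relabeling.

    Characters outside [a-zA-Z0-9_] are replaced with an underscore.
    """
    return META_PREFIX + re.sub(r"[^a-zA-Z0-9_]", "_", annotation_key)


def is_kept(service_annotations, regex="true"):
    """Model the keep rule: the scrape annotation must fully match the regex."""
    labels = {
        annotation_to_meta_label(key): value
        for key, value in service_annotations.items()
    }
    value = labels.get(annotation_to_meta_label("prometheus.io/scrape"), "")
    return re.fullmatch(regex, value) is not None


print(annotation_to_meta_label("prometheus.io/scrape"))
# __meta_kubernetes_service_annotation_prometheus_io_scrape
print(annotation_to_meta_label("prometheus.io/app-metrics-path"))
# __meta_kubernetes_service_annotation_prometheus_io_app_metrics_path

print(is_kept({"prometheus.io/scrape": "true"}))   # True: the kube-state-metrics Service
print(is_kept({"prometheus.io/scrape": "false"}))  # False
print(is_kept({}))                                 # False: no annotation, target dropped
```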
