Intelligent Recommendation Platform for Agricultural Product Information (2)

Crawling images of agricultural products

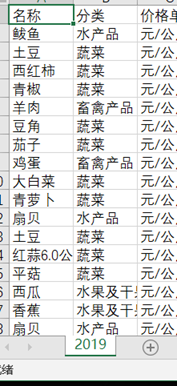

Based on our group's requirements, we first extracted the list of agricultural product names.

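Code:

    import pandas

    # Read the 2019 data file; it is GBK-encoded
    jiage = pandas.read_csv('2019.csv', encoding='gbk')
    name = jiage['名称']  # the product-name column

    # Collect each product name once, in order of first appearance
    namelist = []
    for i in range(len(name)):
        if name[i] in namelist:
            continue
        else:
            namelist.append(name[i])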
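The same order-preserving de-duplication can be written more compactly. The sketch below is one possible alternative, not the code used in the project: the helper name `unique_in_order` and the sample values are made up for illustration. With pandas, `Series.drop_duplicates()` offers a comparable result directly on the column.

```python
def unique_in_order(names):
    """Return the distinct items of names, keeping first-seen order."""
    # dict keys are unique and keep insertion order (Python 3.7+)
    return list(dict.fromkeys(names))


# Made-up sample values standing in for the product-name column
sample = ['apple', 'pear', 'apple', 'cabbage', 'pear']
namelist = unique_in_order(sample)
print(namelist)
```

Compared with testing `in namelist` on every iteration, this avoids rescanning the list for each name, which matters only when the column is long.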

Next, we looked at several image websites and finally decided to crawl 摄图网 (699pic.com).

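Code:

    from time import sleep
    from selenium import webdriver

    # Assumption: the post does not show how the driver was created; Chrome is used here.
    # find_element_by_xpath is the Selenium 3 style API (removed in Selenium 4.3+).
    driver = webdriver.Chrome()

    url = 'https://699pic.com/'  # home page URL
    srclist = []
    driver.get(url)  # request the home page
    driver.maximize_window()
    driver.implicitly_wait(3)
    # Close the pop-up on the home page (element id act95Close)
    driver.find_element_by_xpath('//*[@id="act95Close"]').click()
    sleep(1)
    # Submit a dummy query ('1') through the search form on the home page
    driver.find_element_by_xpath('/html/body/div[1]/div[2]/div/div[2]/div/div[1]/div[2]/form[1]/input').send_keys('1')
    driver.find_element_by_xpath('/html/body/div[1]/div[2]/div/div[2]/div/div[2]').click()
    sleep(2)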

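After that, we only need to watch for the situations that can come up during a search and handle each of them with try/except. For example, a pop-up or a login box may appear, and sometimes a search returns no image at all. All of these cases need to be covered.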
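The try-based handling described above can be factored into a small helper. This is a minimal sketch under stated assumptions, not the project's code: `click_if_present` and `dismiss_overlays` are hypothetical names, and `find` stands for any callable that returns an element for an XPath string (for example `driver.find_element_by_xpath`).

```python
def click_if_present(find, xpath):
    """Click the element located by xpath if it can be found.

    find is any callable that returns an element for an XPath string.
    Returns True when the click happened and False when locating or
    clicking raised an exception.
    """
    try:
        find(xpath).click()
    except Exception:
        return False
    return True


def dismiss_overlays(find, xpaths):
    """Try each close-button XPath in turn; return the ones that were clicked."""
    return [xp for xp in xpaths if click_if_present(find, xp)]
```

With a Selenium driver this could be called as `dismiss_overlays(driver.find_element_by_xpath, ['//*[@id="act95Close"]', '//*[@id="login-box_home"]/div[2]/div[1]'])`. Note that this tries every XPath in the list, whereas nested try blocks only reach the second one when the first fails.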

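Code:

    # Note: a skipped product adds nothing to srclist, so afterwards
    # srclist[i] and namelist[i] may refer to different products.
    for i in range(len(namelist)):
        # Search for the current product name
        driver.find_element_by_xpath('//*[@id="search-input"]').clear()
        driver.find_element_by_xpath('//*[@id="search-input"]').send_keys(namelist[i])
        driver.find_element_by_xpath('//*[@id="sp-header"]/div/div[2]/div[3]').click()
        sleep(2)
        try:
            try:
                # Close the pop-up if it appeared
                driver.find_element_by_xpath('//*[@id="act95Close"]').click()
                sleep(1)
            except Exception:
                # Otherwise try to close the login box
                driver.find_element_by_xpath('//*[@id="login-box_home"]/div[2]/div[1]').click()
                sleep(1)
        except Exception:
            # Nothing to close: record the src of the result image
            try:
                shuju = driver.find_element_by_xpath('//*[@id="wrapper"]/div[4]/div/div[1]/a/img')
                ip = shuju.get_attribute("src")
                srclist.append(ip)
            except Exception:
                continue  # no image found for this product
        try:
            shuju = driver.find_element_by_xpath('//*[@id="wrapper"]/div[4]/div/div[1]/a/img')
            ip = shuju.get_attribute("src")
            if ip in srclist:
                continue  # this src is already recorded
            else:
                srclist.append(ip)
        except Exception:
            continue  # no image found for this product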
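Because products without an image are skipped, a list of src values alone can drift out of step with the list of names. One way to avoid that is to store each name together with its src. The sketch below illustrates the idea with a stand-in for the browser: `collect_pairs` and `lookup` are hypothetical names, and `lookup` is assumed to return the image src for a name or `None` when the search finds nothing.

```python
def collect_pairs(names, lookup):
    """Return (name, src) tuples, skipping missing and repeated src values."""
    pairs = []
    seen = set()  # src values already recorded
    for name in names:
        src = lookup(name)
        if src is None or src in seen:
            continue
        seen.add(src)
        pairs.append((name, src))
    return pairs


# Made-up search results standing in for the crawled pages
fake_results = {'apple': 'a.jpg', 'pear': 'p.jpg', 'plum': 'a.jpg'}
pairs = collect_pairs(['apple', 'kale', 'pear', 'plum'], fake_results.get)
print(pairs)
```

Here `kale` has no result and `plum` repeats an src that was already seen, so both are left out, and every remaining src stays attached to the name it was found for.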

Then the crawled src values are saved to the database.

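    import pymysql

    print('Importing into the database')
    connect = pymysql.connect(host='localhost', user='root', password='xxxxxxx', db='nongchanpin', port=3306)
    cursor = connect.cursor()
    print("Connected to the database")
    for i in range(len(srclist)):
        # Bind the values as parameters so quotes in a name or URL cannot break the SQL
        cursor.execute(
            'insert into pic(name,src) VALUES (%s,%s)', (namelist[i], srclist[i]))
    connect.commit()  # commit once after all rows are inserted
    cursor.close()
    connect.close()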
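Binding values as parameters, rather than formatting them into the SQL string, keeps quotes inside a name or URL from breaking the statement. The sketch below shows the pattern with Python's built-in `sqlite3` as a stand-in so that it runs without a MySQL server; this is an assumption for illustration only. The table layout is also assumed, and `save_pairs` is a hypothetical helper. With pymysql the placeholder is `%s` instead of `?`.

```python
import sqlite3


def save_pairs(conn, pairs):
    """Insert (name, src) rows into the pic table using bound parameters."""
    with conn:  # commits on success, rolls back if an exception is raised
        conn.executemany('INSERT INTO pic (name, src) VALUES (?, ?)', pairs)


conn = sqlite3.connect(':memory:')  # throwaway in-memory database
conn.execute('CREATE TABLE pic (name TEXT, src TEXT)')  # assumed schema
save_pairs(conn, [('apple', 'a.jpg'), ('say "hi"', 'q.jpg')])
rows = conn.execute('SELECT name, src FROM pic ORDER BY src').fetchall()
print(rows)
conn.close()
```

The second row contains double quotes in the name, which would have produced invalid SQL if the value had been pasted into a string wrapped in double quotes.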

The result is shown in the figure:
