[Microservices Full Stack] Advanced Part 2: Distributed Transactions with Seata

1 Transactions

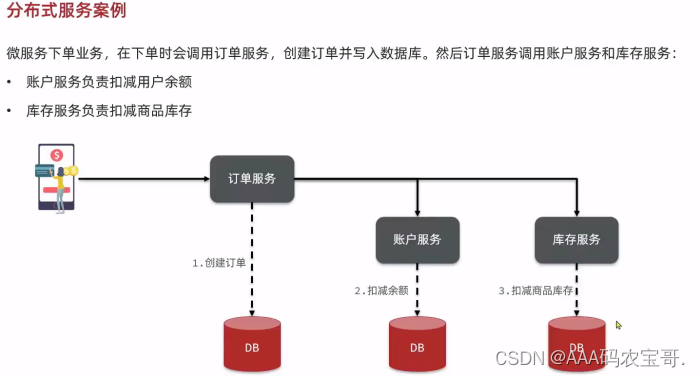

1.1 Demonstrating the distributed transaction problem

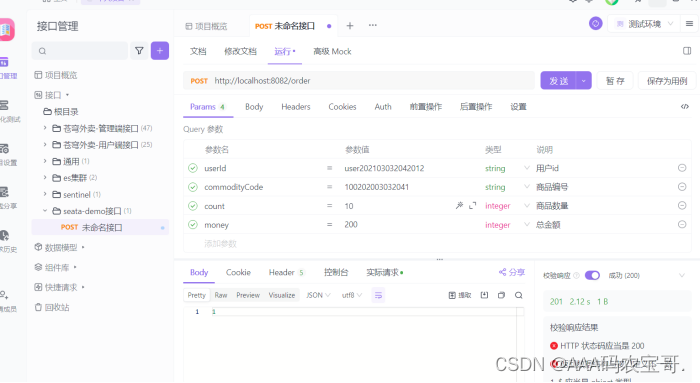

Test the order-placement feature.

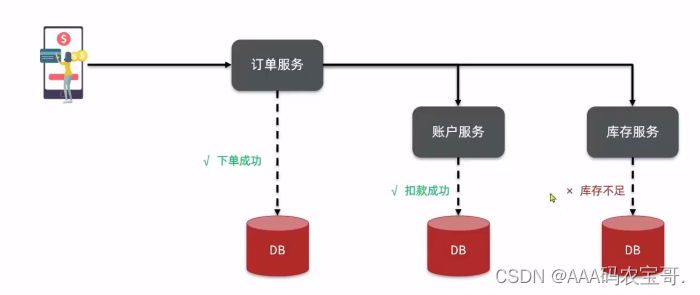

If we keep placing orders, however, the payment is still deducted even when stock is insufficient.

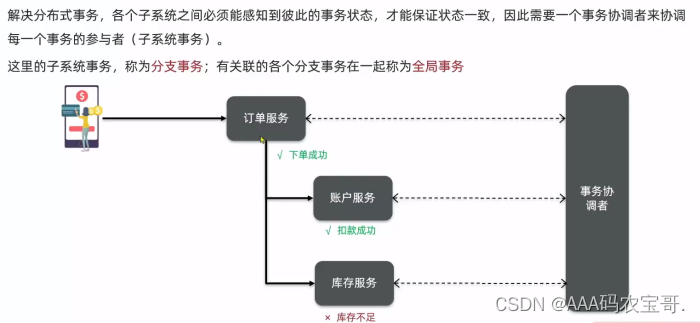

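All three local transactions now need to roll back, but each microservice knows nothing about the others, so they cannot roll back together. This is where the Seata framework comes in.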
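The failure can be reproduced in miniature. The following is a minimal in-memory sketch, not the demo project's code: the class `LocalTxDemo` and its fields are hypothetical stand-ins for the three services' databases. Each step commits on its own, so when the stock check fails, the order and the balance deduction have already taken effect.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical in-memory model: each field stands in for one service's own database.
class LocalTxDemo {
    int balance = 1000;                              // account-service data
    int stock = 1;                                   // storage-service data
    final List<String> orders = new ArrayList<>();   // order-service data

    // Each step "commits" independently; nothing ties the three together.
    void placeOrder(String orderId, int money, int count) {
        orders.add(orderId);                         // order-service commits
        balance -= money;                            // account-service commits
        if (stock < count) {                         // storage-service fails...
            throw new IllegalStateException("insufficient stock");
        }
        stock -= count;
    }

    public static void main(String[] args) {
        LocalTxDemo demo = new LocalTxDemo();
        try {
            demo.placeOrder("o-1", 200, 2);
        } catch (IllegalStateException e) {
            // ...but the order and the deduction above were not undone.
            System.out.println("orders=" + demo.orders
                    + ", balance=" + demo.balance + ", stock=" + demo.stock);
        }
    }
}
```

After the exception, the order still exists and the balance is still reduced, which is exactly the inconsistency a global transaction has to prevent.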

2 Theoretical foundations

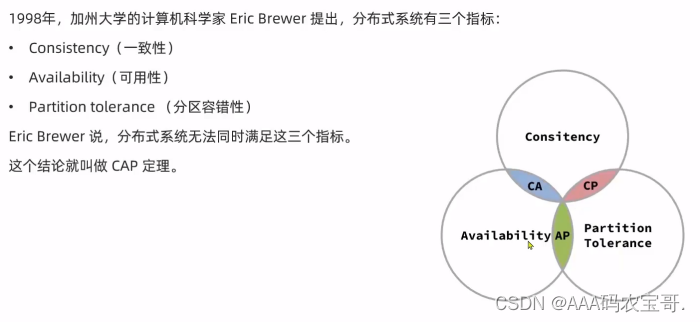

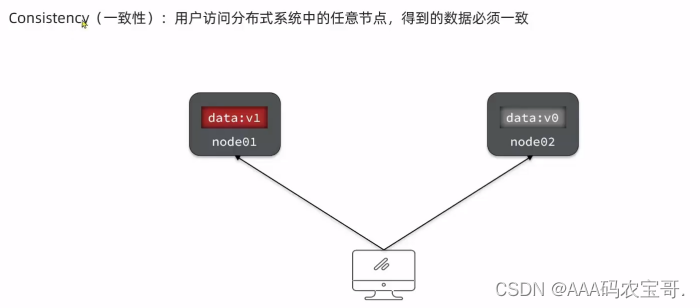

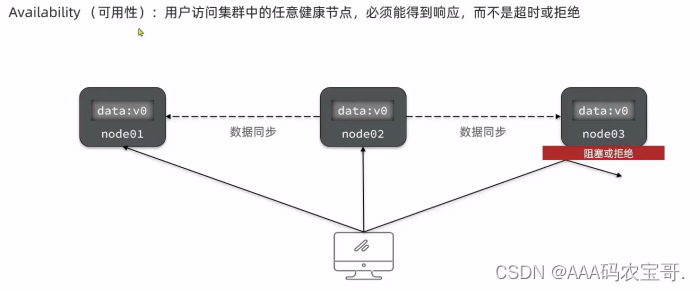

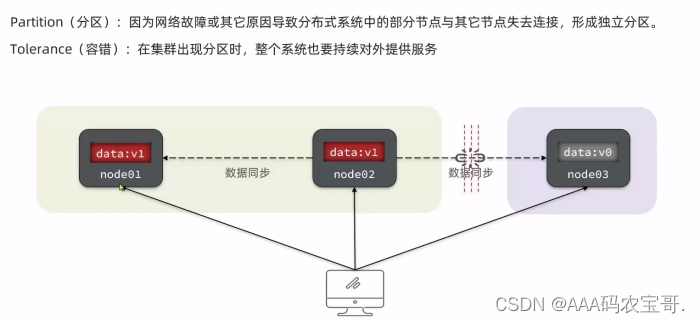

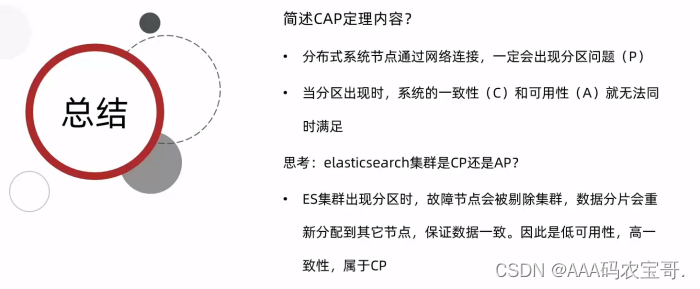

2.1 CAP theorem

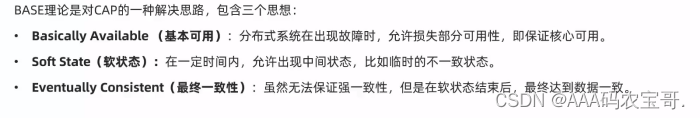

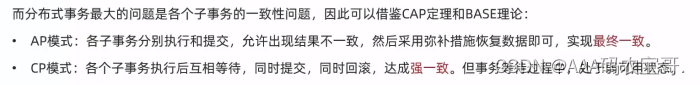

2.2 BASE theory

3 Seata

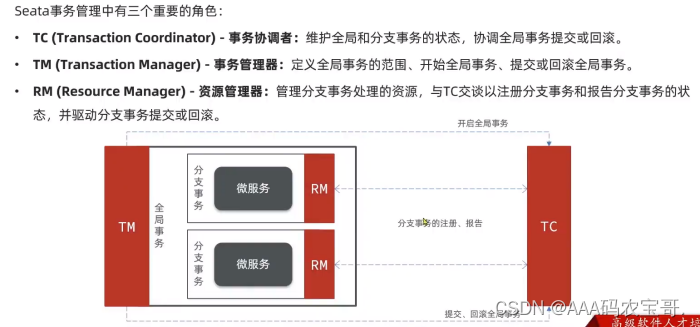

3.1 The Seata framework

3.2 Deploying the Seata server

3.2.1 Download

First, download the seata-server package from http://seata.io/zh-cn/blog/download.html

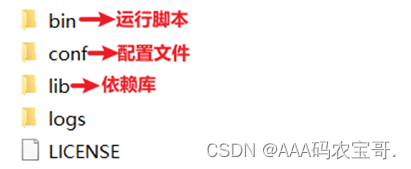

3.2.2 Unzip

Unzip the package into a directory whose path contains no Chinese characters. The directory structure looks like this:

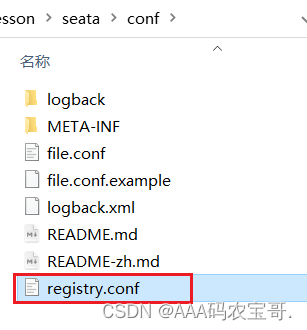

3.2.3 Edit the configuration

Edit the registry.conf file in the conf directory.

Its content should be as follows:

registry {

# file, nacos, eureka, redis, zk, consul, etcd3, sofa

type = "nacos"

nacos {

application = "seata-tc-server"

serverAddr = "127.0.0.1:8848"

group = "DEFAULT_GROUP"

namespace = ""

cluster = "SH"

username = "nacos"

password = "nacos"

}

}

config {

# file, nacos, apollo, zk, consul, etcd3

type = "nacos"

nacos {

serverAddr = "127.0.0.1:8848"

namespace = ""

group = "SEATA_GROUP"

username = "nacos"

password = "nacos"

dataId = "seataServer.properties"

}

}

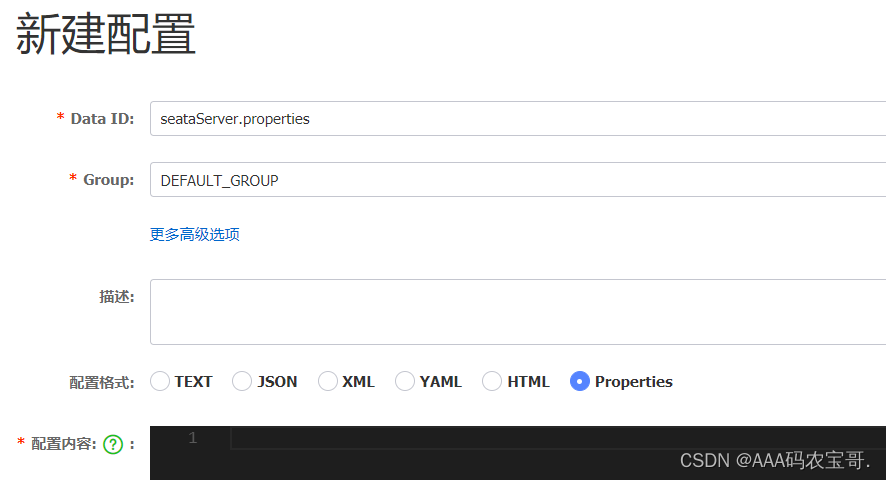

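3.2.4 Add the configuration in nacos

Important: so that a cluster of TC services can share configuration, we use nacos as the unified configuration center. The server-side configuration file seataServer.properties therefore has to be set up in nacos.

The format is as follows:

The configuration content is as follows:

# Storage mode; db means database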

store.mode=db

store.db.datasource=druid

store.db.dbType=mysql

store.db.driverClassName=com.mysql.cj.jdbc.Driver

store.db.url=jdbc:mysql://127.0.0.1:3306/seata?useUnicode=true&rewriteBatchedStatements=true&serverTimezone=UTC

store.db.user=root

store.db.password=123sjbsjb

store.db.minConn=5

store.db.maxConn=30

store.db.globalTable=global_table

store.db.branchTable=branch_table

store.db.queryLimit=100

store.db.lockTable=lock_table

store.db.maxWait=5000

# Transaction and log settings

server.recovery.committingRetryPeriod=1000

server.recovery.asynCommittingRetryPeriod=1000

server.recovery.rollbackingRetryPeriod=1000

server.recovery.timeoutRetryPeriod=1000

server.maxCommitRetryTimeout=-1

server.maxRollbackRetryTimeout=-1

server.rollbackRetryTimeoutUnlockEnable=false

server.undo.logSaveDays=7

server.undo.logDeletePeriod=86400000

# Transport settings between client and server

transport.serialization=seata

transport.compressor=none

# Disable metrics to improve performance

metrics.enabled=false

metrics.registryType=compact

metrics.exporterList=prometheus

metrics.exporterPrometheusPort=9898

Change the database address, username, and password to match your own database.

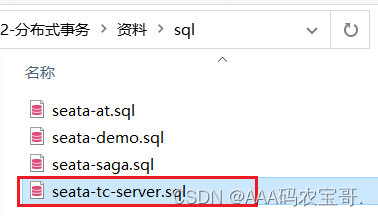

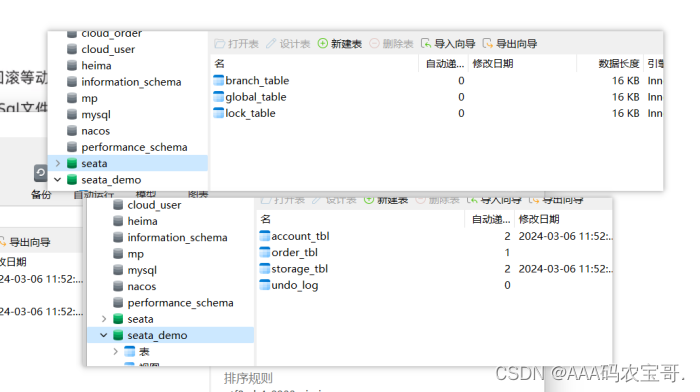

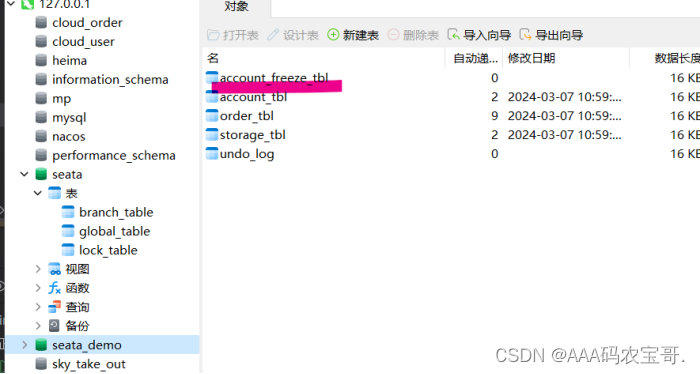

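3.2.5 Create the database tables

Important: when managing distributed transactions, the TC service records transaction data in a database, so you need to create these tables in advance.

Create a database named seata and run the prepared SQL file.

These tables mainly record global transactions, branch transactions, and global lock information: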

SET NAMES utf8mb4;

SET FOREIGN_KEY_CHECKS = 0;

-- ----------------------------

-- Branch transaction table

-- ----------------------------

DROP TABLE IF EXISTS `branch_table`;

CREATE TABLE `branch_table` (

`branch_id` bigint(20) NOT NULL,

`xid` varchar(128) CHARACTER SET utf8 COLLATE utf8_general_ci NOT NULL,

`transaction_id` bigint(20) NULL DEFAULT NULL,

`resource_group_id` varchar(32) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`resource_id` varchar(256) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`branch_type` varchar(8) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`status` tinyint(4) NULL DEFAULT NULL,

`client_id` varchar(64) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`application_data` varchar(2000) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`gmt_create` datetime(6) NULL DEFAULT NULL,

`gmt_modified` datetime(6) NULL DEFAULT NULL,

PRIMARY KEY (`branch_id`) USING BTREE,

INDEX `idx_xid`(`xid`) USING BTREE

) ENGINE = InnoDB CHARACTER SET = utf8 COLLATE = utf8_general_ci ROW_FORMAT = Compact;

-- ----------------------------

-- Global transaction table

-- ----------------------------

DROP TABLE IF EXISTS `global_table`;

CREATE TABLE `global_table` (

`xid` varchar(128) CHARACTER SET utf8 COLLATE utf8_general_ci NOT NULL,

`transaction_id` bigint(20) NULL DEFAULT NULL,

`status` tinyint(4) NOT NULL,

`application_id` varchar(32) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`transaction_service_group` varchar(32) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`transaction_name` varchar(128) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`timeout` int(11) NULL DEFAULT NULL,

`begin_time` bigint(20) NULL DEFAULT NULL,

`application_data` varchar(2000) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`gmt_create` datetime NULL DEFAULT NULL,

`gmt_modified` datetime NULL DEFAULT NULL,

PRIMARY KEY (`xid`) USING BTREE,

INDEX `idx_gmt_modified_status`(`gmt_modified`, `status`) USING BTREE,

INDEX `idx_transaction_id`(`transaction_id`) USING BTREE

) ENGINE = InnoDB CHARACTER SET = utf8 COLLATE = utf8_general_ci ROW_FORMAT = Compact;

SET FOREIGN_KEY_CHECKS = 1;

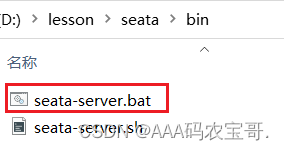

3.2.6 Start the TC service

Go to the bin directory and run seata-server.bat:

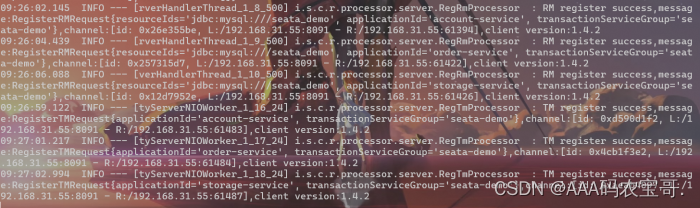

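Once it has started successfully, seata-server should be registered with the nacos registry.

Open a browser, go to the nacos address http://localhost:8848, and open the service list page, where the seata-tc-server entry should appear.

4 Hands-on with Seata

1. Add the dependencies

First, add the seata dependencies to each microservice: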

<dependency>

<groupId>com.alibaba.cloud</groupId>

<artifactId>spring-cloud-starter-alibaba-seata</artifactId>

<exclusions>

<!-- The bundled version (1.3.0) is old, so exclude it -->

<exclusion>

<artifactId>seata-spring-boot-starter</artifactId>

<groupId>io.seata</groupId>

</exclusion>

</exclusions>

</dependency>

<!-- Use seata starter version 1.4.2 -->

<dependency>

<groupId>io.seata</groupId>

<artifactId>seata-spring-boot-starter</artifactId>

<version>${seata.version}</version>

</dependency>

2. Edit the configuration file

Add the following to application.yml:

seata:

  registry: # Registry settings for the TC service; microservices use them to look up the TC address

    # Mirror the settings in the TC service's own registry.conf

    type: nacos

    nacos: # tc

      server-addr: 127.0.0.1:8848

      namespace: ""

      group: DEFAULT_GROUP

      application: seata-tc-server # Service name of the TC in nacos

      username: nacos

      password: nacos

  tx-service-group: seata-demo # Transaction group; used to look up the TC cluster name

  service:

    vgroup-mapping:

      seata-demo: SH
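How `tx-service-group` and `vgroup-mapping` fit together can be pictured as a two-step lookup: group name to cluster name, then cluster name to the TC nodes registered under that cluster. The sketch below only illustrates that idea; `TcResolver` and both maps are hypothetical, and the real Seata client performs this lookup internally against nacos.

```java
import java.util.Collections;
import java.util.List;
import java.util.Map;

// Hypothetical illustration of the lookup, not Seata's actual client code.
class TcResolver {
    // vgroupMapping: tx-service-group -> cluster name (e.g. seata-demo -> SH)
    // registry:      cluster name -> TC addresses registered in that cluster
    static List<String> resolve(String txServiceGroup,
                                Map<String, String> vgroupMapping,
                                Map<String, List<String>> registry) {
        String cluster = vgroupMapping.get(txServiceGroup);
        if (cluster == null) {
            throw new IllegalArgumentException("no cluster mapped for group " + txServiceGroup);
        }
        List<String> nodes = registry.get(cluster);
        return nodes == null ? Collections.<String>emptyList() : nodes;
    }
}
```

Changing only the mapping value (for example from SH to HZ) is enough to point the same group at a different set of TC nodes.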

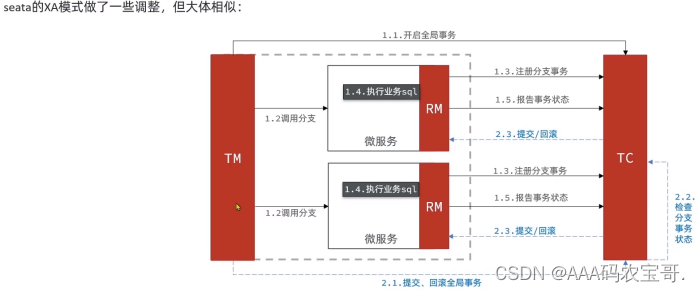

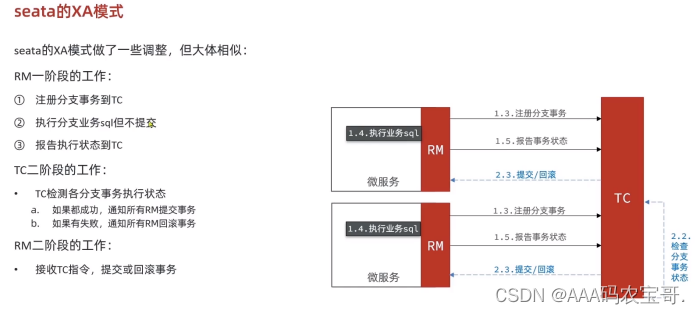

4.1 XA mode

4.1.1 Implementing XA mode

In the application.yml of account, order, and storage, set data-source-proxy-mode: XA:

seata:

  registry: # Registry settings for the TC service; microservices use them to look up the TC address

    # Mirror the settings in the TC service's own registry.conf

    type: nacos

    nacos: # tc

      server-addr: 127.0.0.1:8848

      namespace: ""

      group: DEFAULT_GROUP

      application: seata-tc-server # Service name of the TC in nacos

      username: nacos

      password: nacos

  tx-service-group: seata-demo # Transaction group; used to look up the TC cluster name

  service:

    vgroup-mapping:

      seata-demo: SH

  data-source-proxy-mode: XA

At the entry point of the global transaction, the create method of OrderServiceImpl in order-service, replace @Transactional with @GlobalTransactional:

@Override

@GlobalTransactional

public Long create(Order order) {

// Create the order

orderMapper.insert(order);

try {

// Deduct the user's balance

accountClient.deduct(order.getUserId(), order.getMoney());

// Deduct stock

storageClient.deduct(order.getCommodityCode(), order.getCount());

} catch (FeignException e) {

log.error("下单失败,原因:{}", e.contentUTF8(), e);

throw new RuntimeException(e.contentUTF8(), e);

}

return order.getId();

}

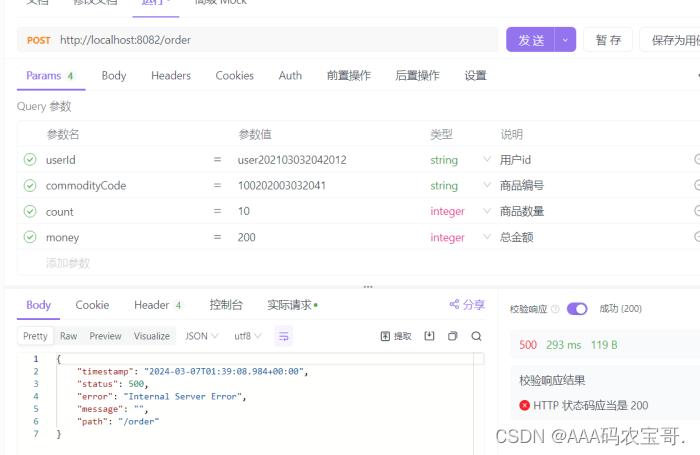

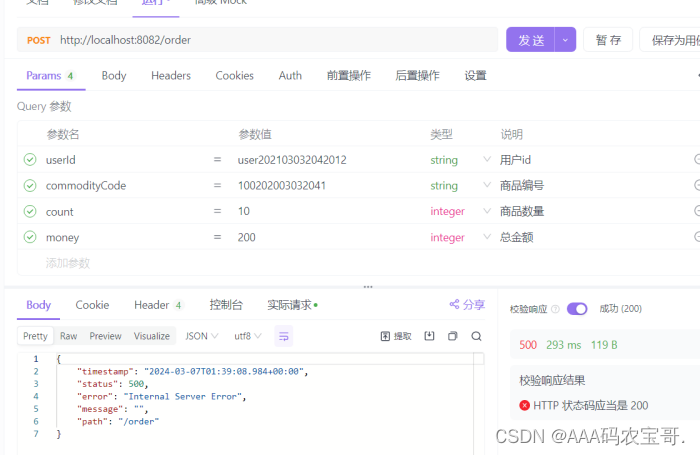

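Sending the request shows that it is intercepted.

The account service log shows that its branch was rolled back by the RM: rm handle branch rollback process:xid=192.168.31.55:8091:6134399696503754763,branchId=6134399696503754767,branchType=XA,resourceId=jdbc:mysql:///seata_demo,applicationData=null. The other services log similar entries.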

account

03-07 09:39:08:753 INFO 3160 --- [nio-8083-exec-2] c.i.a.service.impl.AccountServiceImpl : 开始扣款

03-07 09:39:08:753 DEBUG 3160 --- [nio-8083-exec-2] c.i.account.mapper.AccountMapper.deduct : ==> Preparing: update account_tbl set money = money - 200 where user_id = ?

03-07 09:39:08:753 DEBUG 3160 --- [nio-8083-exec-2] c.i.account.mapper.AccountMapper.deduct : ==> Parameters: user202103032042012(String)

03-07 09:39:08:755 DEBUG 3160 --- [nio-8083-exec-2] c.i.account.mapper.AccountMapper.deduct : <== Updates: 1

03-07 09:39:08:755 INFO 3160 --- [nio-8083-exec-2] c.i.a.service.impl.AccountServiceImpl : 扣款成功

03-07 09:39:08:928 INFO 3160 --- [h_RMROLE_1_2_24] i.s.c.r.p.c.RmBranchRollbackProcessor : rm handle branch rollback process:xid=192.168.31.55:8091:6134399696503754763,branchId=6134399696503754767,branchType=XA,resourceId=jdbc:mysql:///seata_demo,applicationData=null

03-07 09:39:08:929 INFO 3160 --- [h_RMROLE_1_2_24] io.seata.rm.AbstractRMHandler : Branch Rollbacking: 192.168.31.55:8091:6134399696503754763 6134399696503754767 jdbc:mysql:///seata_demo

03-07 09:39:08:934 INFO 3160 --- [h_RMROLE_1_2_24] i.s.rm.datasource.xa.ResourceManagerXA : 192.168.31.55:8091:6134399696503754763-6134399696503754767 was rollbacked

03-07 09:39:08:934 INFO 3160 --- [h_RMROLE_1_2_24] io.seata.rm.AbstractRMHandler : Branch Rollbacked result: PhaseTwo_Rollbacked

order

rm client handle branch commit process:xid=192.168.31.55:8091:6134399696503754793,branchId=6134399696503754795,branchType=XA,resourceId=jdbc:mysql:///seata_demo,applicationData=null

03-07 09:42:11:040 INFO 11548 --- [h_RMROLE_1_5_24] io.seata.rm.AbstractRMHandler : Branch committing: 192.168.31.55:8091:6134399696503754793 6134399696503754795 jdbc:mysql:///seata_demo null

03-07 09:42:11:042 INFO 11548 --- [h_RMROLE_1_5_24] i.s.rm.datasource.xa.ResourceManagerXA : 192.168.31.55:8091:6134399696503754793-6134399696503754795 was committed.

03-07 09:42:11:042 INFO 11548 --- [h_RMROLE_1_5_24] io.seata.rm.AbstractRMHandler : Branch commit result: PhaseTwo_Committed

03-07 09:42:11:068 INFO 11548 --- [nio-8082-exec-9] i.seata.tm.api.DefaultGlobalTransaction : Suspending current transaction, xid = 192.168.31.55:8091:6134399696503754793

03-07 09:42:11:068 INFO 11548 --- [nio-8082-exec-9] i.seata.tm.api.DefaultGlobalTransaction : [192.168.31.55:8091:6134399696503754793] commit status: Committed

storage

03-07 09:42:11:027 DEBUG 7148 --- [nio-8081-exec-5] c.i.storage.mapper.StorageMapper.deduct : <== Updates: 1

03-07 09:42:11:027 INFO 7148 --- [nio-8081-exec-5] c.i.s.service.impl.StorageServiceImpl : 扣减库存成功

03-07 09:42:11:055 INFO 7148 --- [h_RMROLE_1_2_24] i.s.c.r.p.c.RmBranchCommitProcessor : rm client handle branch commit process:xid=192.168.31.55:8091:6134399696503754793,branchId=6134399696503754799,branchType=XA,resourceId=jdbc:mysql:///seata_demo,applicationData=null

03-07 09:42:11:055 INFO 7148 --- [h_RMROLE_1_2_24] io.seata.rm.AbstractRMHandler : Branch committing: 192.168.31.55:8091:6134399696503754793 6134399696503754799 jdbc:mysql:///seata_demo null

03-07 09:42:11:058 INFO 7148 --- [h_RMROLE_1_2_24] i.s.rm.datasource.xa.ResourceManagerXA : 192.168.31.55:8091:6134399696503754793-6134399696503754799 was committed.

03-07 09:42:11:058 INFO 7148 --- [h_RMROLE_1_2_24] io.seata.rm.AbstractRMHandler : Branch commit result: PhaseTwo_Committed
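The logs show the second phase of XA: each branch receives either a commit or a rollback from the TC. The following is a minimal sketch of that two-phase flow, assuming a simplified in-memory model. `XaBranch` and `XaCoordinator` are hypothetical names and are not Seata classes; a real XA branch holds database locks between the two phases.

```java
import java.util.List;

// Hypothetical stand-in for one branch transaction (one service's database).
class XaBranch {
    final String name;
    final boolean prepareOk;   // whether this branch's SQL succeeds in phase one
    String state = "ACTIVE";

    XaBranch(String name, boolean prepareOk) {
        this.name = name;
        this.prepareOk = prepareOk;
    }

    // Phase one: run the SQL and report the outcome, without committing.
    boolean prepare() {
        state = prepareOk ? "PREPARED" : "FAILED";
        return prepareOk;
    }

    void commit()   { state = "COMMITTED"; }
    void rollback() { state = "ROLLED_BACK"; }
}

// Hypothetical coordinator playing the role of the TC.
class XaCoordinator {
    // Returns true if the global transaction committed, false if it rolled back.
    static boolean run(List<XaBranch> branches) {
        boolean allPrepared = true;
        for (XaBranch b : branches) {          // phase one
            if (!b.prepare()) {
                allPrepared = false;
                break;
            }
        }
        for (XaBranch b : branches) {          // phase two: one decision for everyone
            if (allPrepared) {
                b.commit();
            } else {
                b.rollback();
            }
        }
        return allPrepared;
    }
}
```

The point of the sketch is that no branch commits on its own: the outcome is decided once, after every branch has reported.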

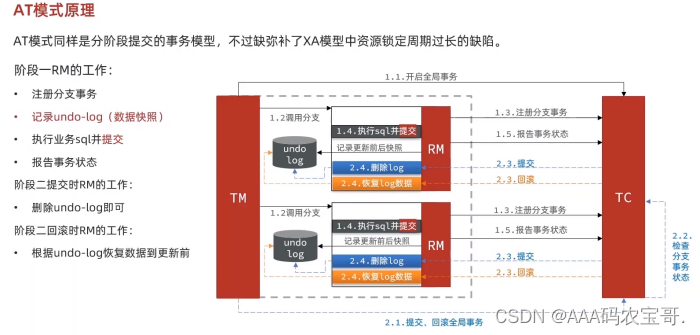

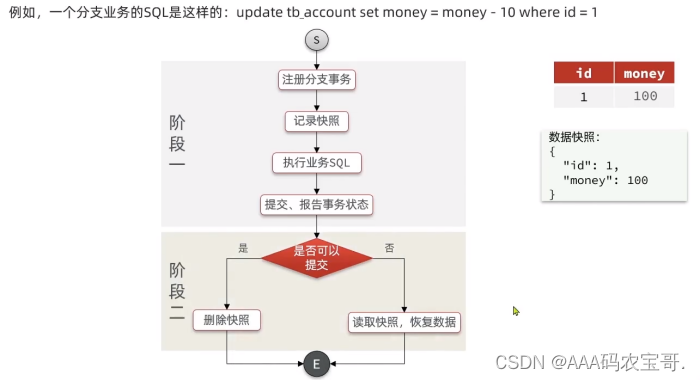

4.2 AT mode

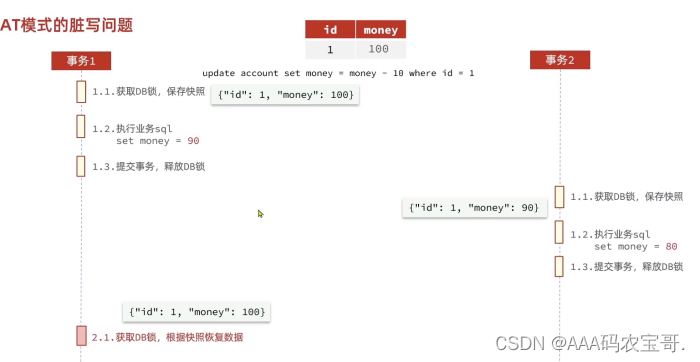

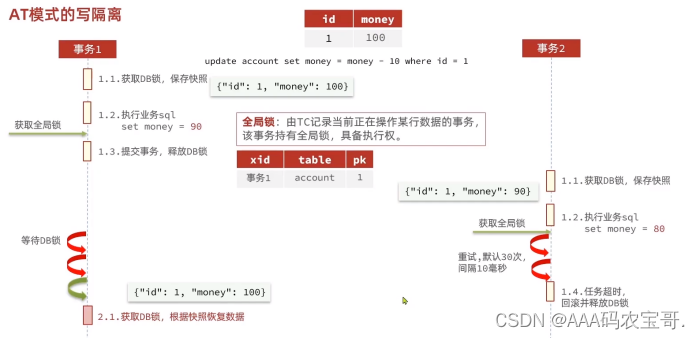

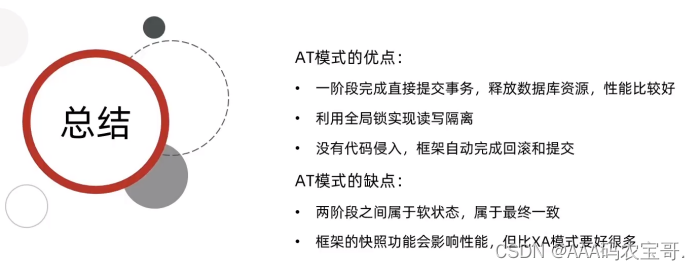

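4.2.1 Concurrency problems in AT mode

Without protection, a rollback would overwrite 80 with 100 and transaction 2's update would be wasted, so AT mode introduces a global lock.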

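AT locking is finer-grained than XA locking. XA holds its database locks until phase two finishes, whereas the AT global lock is kept by the TC, is confined to the modified row wherever possible, and blocks only other Seata-managed transactions.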
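The row-level global lock can be pictured as a map from a row identifier to the global transaction that owns it. This is a minimal sketch under that assumption; `GlobalLockTable` is a hypothetical in-memory stand-in for the TC's lock_table, not Seata's implementation.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical in-memory stand-in for the TC's lock_table.
class GlobalLockTable {
    // key: "table:primaryKey", value: xid of the global transaction holding the lock
    private final Map<String, String> locks = new HashMap<>();

    // Try to take the global lock on one row for the given global transaction.
    // Succeeds if the row is free or already locked by the same xid.
    synchronized boolean tryLock(String xid, String table, String pk) {
        String owner = locks.putIfAbsent(table + ":" + pk, xid);
        return owner == null || owner.equals(xid);
    }

    // Release every row lock held by the global transaction (end of phase two).
    synchronized void releaseAll(String xid) {
        locks.values().removeIf(xid::equals);
    }
}
```

A second global transaction touching the same row has to wait or retry until the first one releases its locks, while rows it does not share are unaffected.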

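If the other transaction is not managed by Seata, a concurrency problem can still occur, because the global lock does not apply to it.

Seata therefore saves both a before-image and an after-image. On rollback it compares the after-image with the current data; if they differ, it raises a warning and manual intervention is required.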
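The rollback check can be sketched as follows, assuming the comparison is between the saved after-image and the row's current value. `UndoRecord` and `AtRollback` are hypothetical names for illustration; Seata's real undo log stores full row images rather than a single integer.

```java
import java.util.Map;

// Hypothetical undo record for one row: its value before and after the branch's update.
class UndoRecord {
    final String rowKey;
    final int beforeImage;
    final int afterImage;

    UndoRecord(String rowKey, int beforeImage, int afterImage) {
        this.rowKey = rowKey;
        this.beforeImage = beforeImage;
        this.afterImage = afterImage;
    }
}

class AtRollback {
    // Restores the before-image only if the row still equals the after-image.
    // Returns false when someone else has changed the row in the meantime,
    // which is the case that needs a warning and manual intervention.
    static boolean rollback(Map<String, Integer> table, UndoRecord undo) {
        Integer current = table.get(undo.rowKey);
        if (current == null || current != undo.afterImage) {
            return false;
        }
        table.put(undo.rowKey, undo.beforeImage);
        return true;
    }
}
```

When the method returns false the data is left untouched, so a change made by a transaction outside Seata is not silently overwritten.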

4.2.2 Characteristics of AT mode

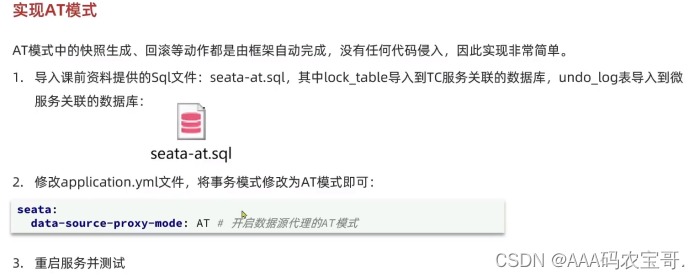

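4.2.3 Implementing AT mode

1) Put the lock_table table in the seata database and the undo_log table in the seata_demo database.

2) In the application.yml of all three microservices, change the mode to AT:

data-source-proxy-mode: AT

The account service logs: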

03-07 10:59:17:832 INFO 22020 --- [nio-8083-exec-2] c.i.a.service.impl.AccountServiceImpl : 扣款成功

03-07 10:59:18:074 INFO 22020 --- [h_RMROLE_1_2_24] i.s.c.r.p.c.RmBranchRollbackProcessor : rm handle branch rollback process:xid=192.168.31.55:8091:6134399696503754815,branchId=6134399696503754820,branchType=AT,resourceId=jdbc:mysql:///seata_demo,applicationData=null

03-07 10:59:18:075 INFO 22020 --- [h_RMROLE_1_2_24] io.seata.rm.AbstractRMHandler : Branch Rollbacking: 192.168.31.55:8091:6134399696503754815 6134399696503754820 jdbc:mysql:///seata_demo

03-07 10:59:18:142 INFO 22020 --- [h_RMROLE_1_2_24] i.s.r.d.undo.AbstractUndoLogManager : xid 192.168.31.55:8091:6134399696503754815 branch 6134399696503754820, undo_log deleted with GlobalFinished

03-07 10:59:18:144 INFO 22020 --- [h_RMROLE_1_2_24] io.seata.rm.AbstractRMHandler : Branch Rollbacked result: PhaseTwo_Rollbacked

storage

03-07 10:59:17:989 ERROR 17384 --- [nio-8081-exec-4] o.a.c.c.C.[.[.[/].[dispatcherServlet] : Servlet.service() for servlet [dispatcherServlet] in context with path [] threw exception [Request processing failed; nested exception is java.lang.RuntimeException: 扣减库存失败,可能是库存不足!] with root cause

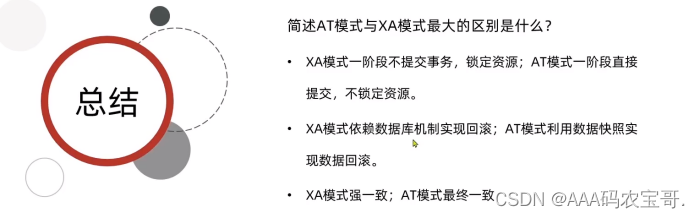

4.3 Differences between XA and AT mode

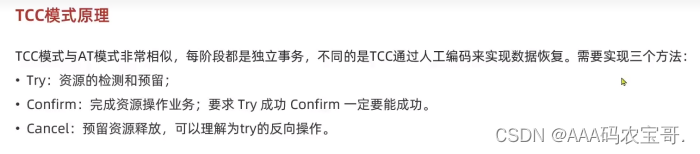

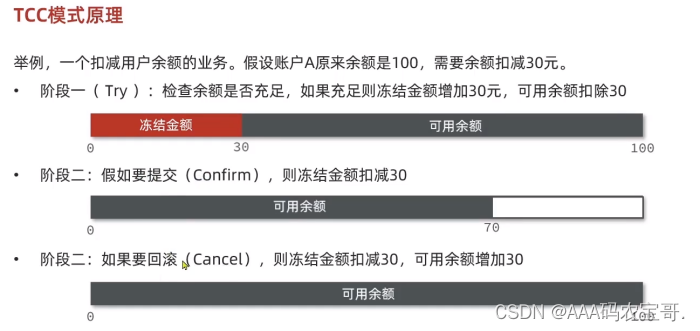

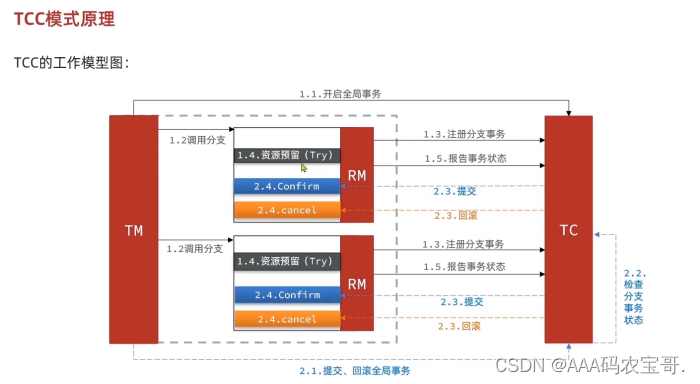

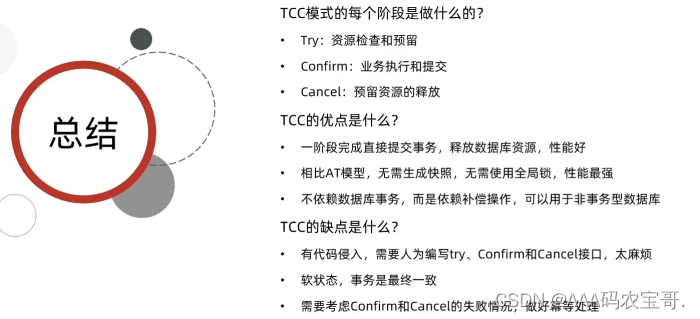

4.4 TCC mode

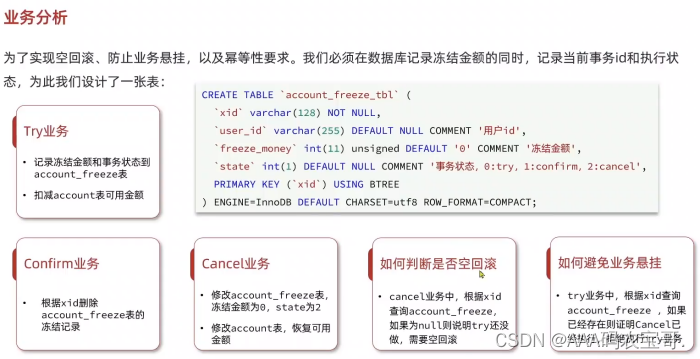

Different transactions each operate only on their own frozen amount, so they do not interfere with one another.
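A minimal sketch of this reservation idea, assuming one frozen amount per global transaction ID. `TccAccount` is a hypothetical in-memory model and not part of the account-service code.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical in-memory account with per-transaction frozen amounts.
class TccAccount {
    int available;
    final Map<String, Integer> frozen = new HashMap<>();   // xid -> frozen amount

    TccAccount(int available) {
        this.available = available;
    }

    // Try: move money from the available balance into this transaction's reservation.
    void tryDeduct(String xid, int money) {
        if (available < money) {
            throw new IllegalStateException("insufficient balance");
        }
        available -= money;
        frozen.put(xid, money);
    }

    // Confirm: the reserved money is spent, so just drop the reservation.
    void confirm(String xid) {
        frozen.remove(xid);
    }

    // Cancel: return this transaction's reservation to the available balance.
    void cancel(String xid) {
        Integer money = frozen.remove(xid);
        if (money != null) {
            available += money;
        }
    }
}
```

Because confirm and cancel touch only the entry for their own xid, two transactions on the same account never read or change each other's reservation.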

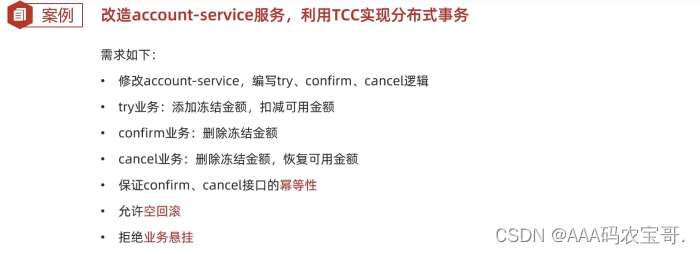

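4.4.1 TCC example

Not every module suits TCC. Creating an order, for instance, needs no reservation: if it is no longer wanted, it can simply be deleted. Operations such as deducting balance or stock are the ones that need a reservation.

So we start by converting the account service.

4.4.2 Implementation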

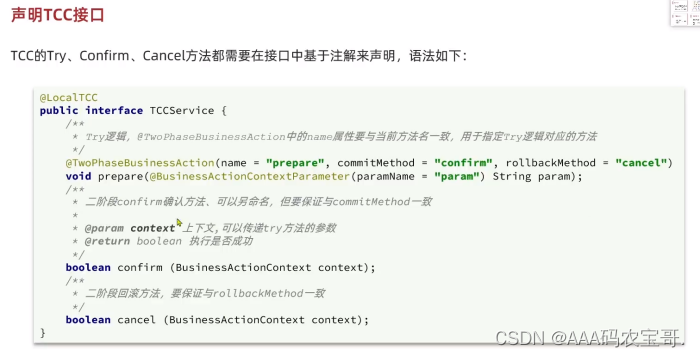

1) Declare the interface

In the service package of account-service, declare an interface named AccountTCCService:

@LocalTCC

public interface AccountTCCService {

@TwoPhaseBusinessAction(name = "deduct", commitMethod = "confirm", rollbackMethod = "cancel")

void deduct(@BusinessActionContextParameter(paramName = "userId")String userId,

@BusinessActionContextParameter(paramName = "money")int money);

boolean confirm(BusinessActionContext ctx);

boolean cancel(BusinessActionContext ctx);

}

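2) Import the freeze table

Import the freeze table into the seata_demo database.

3) Create the freeze entity class

Create the entity class for the freeze table, together with its Mapper interface and so on: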

@Data

@TableName("account_freeze_tbl")

public class AccountFreeze {

@TableId(type = IdType.INPUT)

private String xid;

private String userId;

private Integer freezeMoney;

private Integer state;

public static abstract class State {

public final static int TRY = 0;

public final static int CONFIRM = 1;

public final static int CANCEL = 2;

}

}

4) Implement the service interface

Create the implementation class AccountTCCServiceImpl:

@Slf4j

@Service

public class AccountTCCServiceImpl implements AccountTCCService {

@Override

public void deduct(String userId, int money) {

}

@Override

public boolean confirm(BusinessActionContext ctx) {

return false;

}

@Override

public boolean cancel(BusinessActionContext ctx) {

return false;

}

}

5) Implement the basic business logic

@Slf4j

@Service

public class AccountTCCServiceImpl implements AccountTCCService {

@Autowired

private AccountMapper accountMapper;

@Autowired

private AccountFreezeMapper freezeMapper;

@Override

@Transactional

public void deduct(String userId, int money) {

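// 0. Get the global transaction ID

String xid = RootContext.getXID();

// 1. The money column is unsigned in the database, so no explicit balance check is needed here

// 1. Deduct the amount directly

accountMapper.deduct(userId, money);

// 2. Record the frozen amount and the transaction state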

AccountFreeze freeze = new AccountFreeze();

freeze.setUserId(userId);

freeze.setFreezeMoney(money);

freeze.setState(AccountFreeze.State.TRY);

freeze.setXid(xid);

freezeMapper.insert(freeze);

}

@Override

public boolean confirm(BusinessActionContext ctx) {

// 1. Get the global transaction ID

String xid = ctx.getXid();

// 2. Delete the freeze record by global transaction ID

int count = freezeMapper.deleteById(xid);

return count ==1;

}

@Override

public boolean cancel(BusinessActionContext ctx) {

// 0. Look up the freeze record

String xid = ctx.getXid();

AccountFreeze freeze = freezeMapper.selectById(xid);

// 1. Restore the available balance

accountMapper.refund(freeze.getUserId(), freeze.getFreezeMoney());

// 2. Set the frozen amount to 0 and the state to CANCEL

freeze.setFreezeMoney(0);

freeze.setState(AccountFreeze.State.CANCEL);

int count = freezeMapper.updateById(freeze);

return count == 1;

}

}

6)空回滚、幂等

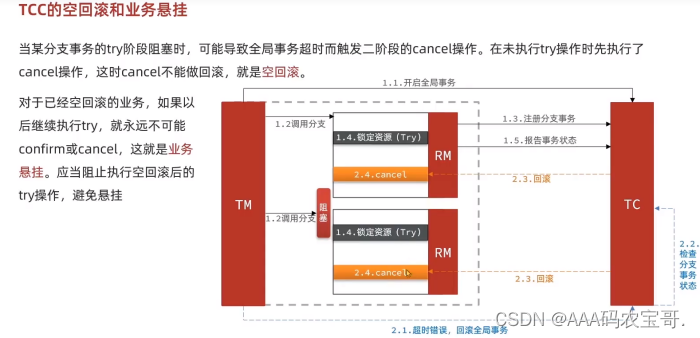

如果还没try就要执行,那就要进行空回滚,如果进行try,freeze表里会有一条记录,如果cancel时候发现没记录,说明try没执行,需要空回滚。

//0.1 空回滚

if (freeze == null) {

//证明try阶段没有执行,需要空回滚

freeze = new AccountFreeze();

freeze.setUserId(userId);

freeze.setFreezeMoney(0);

freeze.setState(AccountFreeze.State.CANCEL);

freeze.setXid(xid);

freezeMapper.insert(freeze);

return true;

}

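Cancel may be invoked more than once, so it must be idempotent. If cancel has already run, the state in the freeze table is CANCEL, and we can return true immediately.

// 0.2 Idempotency check

if (freeze.getState() == AccountFreeze.State.CANCEL) {

// cancel has already run; do not repeat it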

return true;

}

The complete cancel code:

@Override

public boolean cancel(BusinessActionContext ctx) {

// 0. Look up the freeze record

String xid = ctx.getXid();

String userId = ctx.getActionContext("userId").toString();

AccountFreeze freeze = freezeMapper.selectById(xid);

// 0.1 Empty rollback

if (freeze == null) {

// try never ran, so perform an empty rollback

freeze = new AccountFreeze();

freeze.setUserId(userId);

freeze.setFreezeMoney(0);

freeze.setState(AccountFreeze.State.CANCEL);

freeze.setXid(xid);

freezeMapper.insert(freeze);

return true;

}

// 0.2 Idempotency check

if (freeze.getState() == AccountFreeze.State.CANCEL) {

// cancel has already run; do not repeat it

return true;

}

// 1. Restore the available balance

accountMapper.refund(freeze.getUserId(), freeze.getFreezeMoney());

// 2. Set the frozen amount to 0 and the state to CANCEL

freeze.setFreezeMoney(0);

freeze.setState(AccountFreeze.State.CANCEL);

int count = freezeMapper.updateById(freeze);

return count == 1;

}

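7) Business suspension

A transaction that has already been empty-rolled-back must never execute try afterward. In the try phase, check whether the freeze table already holds a record for this xid; if it does, reject the call to avoid business suspension.

// 0.1 If a freeze record already exists, this is a suspended call: reject it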

AccountFreeze oldfreeze = freezeMapper.selectById(xid);

if (oldfreeze != null) {

return;

}

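The complete try code:

@Override

@Transactional

public void deduct(String userId, int money) {

// 0. Get the global transaction ID

String xid = RootContext.getXID();

// 0.1 If a freeze record already exists, this is a suspended call: reject it

AccountFreeze oldfreeze = freezeMapper.selectById(xid);

if (oldfreeze != null) {

return;

}

// 1. The money column is unsigned in the database, so no explicit balance check is needed here

// 1. Deduct the amount directly

accountMapper.deduct(userId, money);

// 2. Record the frozen amount and the transaction state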

AccountFreeze freeze = new AccountFreeze();

freeze.setUserId(userId);

freeze.setFreezeMoney(money);

freeze.setState(AccountFreeze.State.TRY);

freeze.setXid(xid);

freezeMapper.insert(freeze);

}
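The three guards (empty rollback, idempotent cancel, suspension check) can be exercised without a database. The sketch below is a simplified in-memory model written for illustration: `FreezeStore` and `FreezeRecord` are hypothetical, and a plain map replaces the freeze table and its mapper.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical in-memory replacement for account_tbl plus account_freeze_tbl.
class FreezeStore {
    static final int TRY = 0;
    static final int CANCEL = 2;

    static class FreezeRecord {
        int money;
        int state;

        FreezeRecord(int money, int state) {
            this.money = money;
            this.state = state;
        }
    }

    int balance;
    final Map<String, FreezeRecord> freeze = new HashMap<>();   // xid -> record

    FreezeStore(int balance) {
        this.balance = balance;
    }

    // Try: refuse to run if any record exists for this xid (suspension check).
    boolean tryDeduct(String xid, int money) {
        if (freeze.containsKey(xid)) {
            return false;
        }
        balance -= money;
        freeze.put(xid, new FreezeRecord(money, TRY));
        return true;
    }

    boolean cancel(String xid) {
        FreezeRecord rec = freeze.get(xid);
        if (rec == null) {                 // empty rollback: leave a CANCEL marker
            freeze.put(xid, new FreezeRecord(0, CANCEL));
            return true;
        }
        if (rec.state == CANCEL) {         // idempotency: already cancelled
            return true;
        }
        balance += rec.money;              // normal cancel: give the money back
        rec.money = 0;
        rec.state = CANCEL;
        return true;
    }
}
```

The CANCEL marker written by the empty rollback is what lets a late try recognise that its transaction is already over.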

8) Update the controller

The controller must call the TCC service rather than the original one:

@RestController

@RequestMapping("account")

public class AccountController {

@Autowired

private AccountTCCService accountService;

@PutMapping("/{userId}/{money}")

public ResponseEntity<Void> deduct(@PathVariable("userId") String userId, @PathVariable("money") Integer money){

accountService.deduct(userId, money);

return ResponseEntity.noContent().build();

}

}

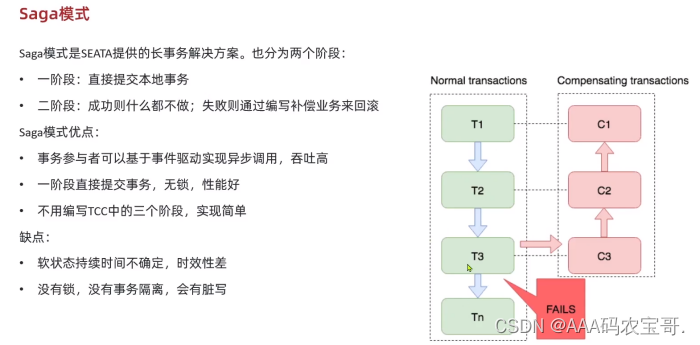

4.5 Saga mode

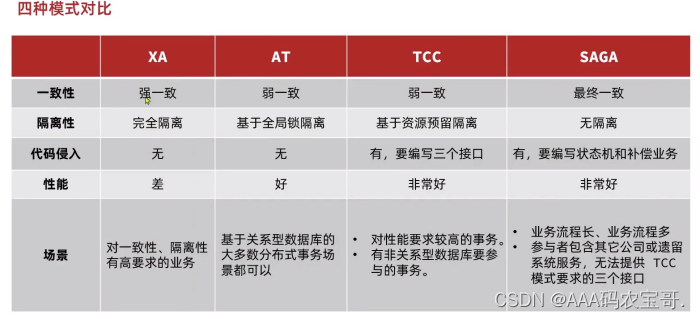

4.6 Comparing the four modes

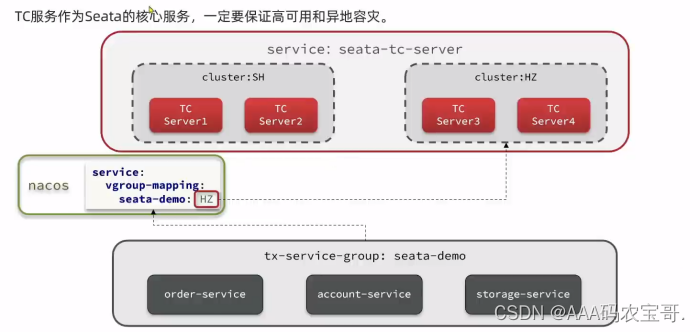

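4.7 Geo-redundant disaster recovery

4.7.1 Simulating a geo-redundant TC cluster

We plan to start two seata TC service nodes:

| Node name | IP address | Port | Cluster name |
|---|---|---|---|
| seata | 127.0.0.1 | 8091 | SH |
| seata2 | 127.0.0.1 | 8092 | HZ |

Earlier we started one seata service on port 8091 with cluster name SH.

Now copy the seata directory and name the copy seata2.

Edit seata2/conf/registry.conf as follows: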

registry {

# Registry type for the TC service; nacos here, but eureka, zookeeper, etc. also work

type = "nacos"

nacos {

# Service name under which the seata TC registers in nacos; can be customized

application = "seata-tc-server"

serverAddr = "127.0.0.1:8848"

group = "DEFAULT_GROUP"

namespace = ""

cluster = "HZ"

username = "nacos"

password = "nacos"

}

}

config {

# How the TC server reads its configuration; here from the nacos config center, so a TC cluster can share it

type = "nacos"

# nacos address and related settings

nacos {

serverAddr = "127.0.0.1:8848"

namespace = ""

group = "SEATA_GROUP"

username = "nacos"

password = "nacos"

dataId = "seataServer.properties"

}

}

Go to the seata2/bin directory and run:

seata-server.bat -p 8092

打开nacos控制台,查看服务列表:

点进详情查看:

4.7.2 2.将事务组映射配置到nacos

接下来,我们需要将tx-service-group与cluster的映射关系都配置到nacos配置中心。

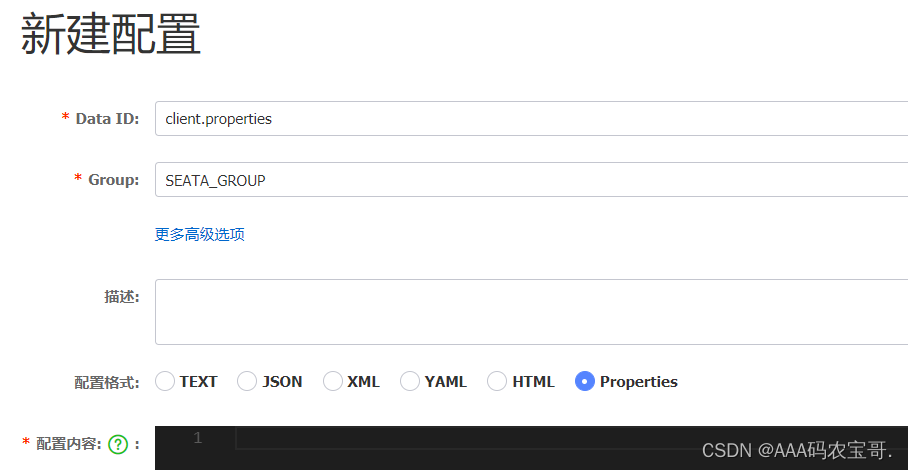

新建一个配置:

配置的内容如下:

# 事务组映射关系

service.vgroupMapping.seata-demo=SH

service.enableDegrade=false

service.disableGlobalTransaction=false

# Communication settings for the TC service

transport.type=TCP

transport.server=NIO

transport.heartbeat=true

transport.enableClientBatchSendRequest=false

transport.threadFactory.bossThreadPrefix=NettyBoss

transport.threadFactory.workerThreadPrefix=NettyServerNIOWorker

transport.threadFactory.serverExecutorThreadPrefix=NettyServerBizHandler

transport.threadFactory.shareBossWorker=false

transport.threadFactory.clientSelectorThreadPrefix=NettyClientSelector

transport.threadFactory.clientSelectorThreadSize=1

transport.threadFactory.clientWorkerThreadPrefix=NettyClientWorkerThread

transport.threadFactory.bossThreadSize=1

transport.threadFactory.workerThreadSize=default

transport.shutdown.wait=3

# RM settings

client.rm.asyncCommitBufferLimit=10000

client.rm.lock.retryInterval=10

client.rm.lock.retryTimes=30

client.rm.lock.retryPolicyBranchRollbackOnConflict=true

client.rm.reportRetryCount=5

client.rm.tableMetaCheckEnable=false

client.rm.tableMetaCheckerInterval=60000

client.rm.sqlParserType=druid

client.rm.reportSuccessEnable=false

client.rm.sagaBranchRegisterEnable=false

# TM settings

client.tm.commitRetryCount=5

client.tm.rollbackRetryCount=5

client.tm.defaultGlobalTransactionTimeout=60000

client.tm.degradeCheck=false

client.tm.degradeCheckAllowTimes=10

client.tm.degradeCheckPeriod=2000

# Undo log settings

client.undo.dataValidation=true

client.undo.logSerialization=jackson

client.undo.onlyCareUpdateColumns=true

client.undo.logTable=undo_log

client.undo.compress.enable=true

client.undo.compress.type=zip

client.undo.compress.threshold=64k

client.log.exceptionRate=100

4.7.3 Having the microservices read the nacos configuration

Next, edit the application.yml of every microservice so that it reads the client.properties file from nacos:

seata:

  config:

    type: nacos

    nacos:

      server-addr: 127.0.0.1:8848

      username: nacos

      password: nacos

      group: SEATA_GROUP

      data-id: client.properties

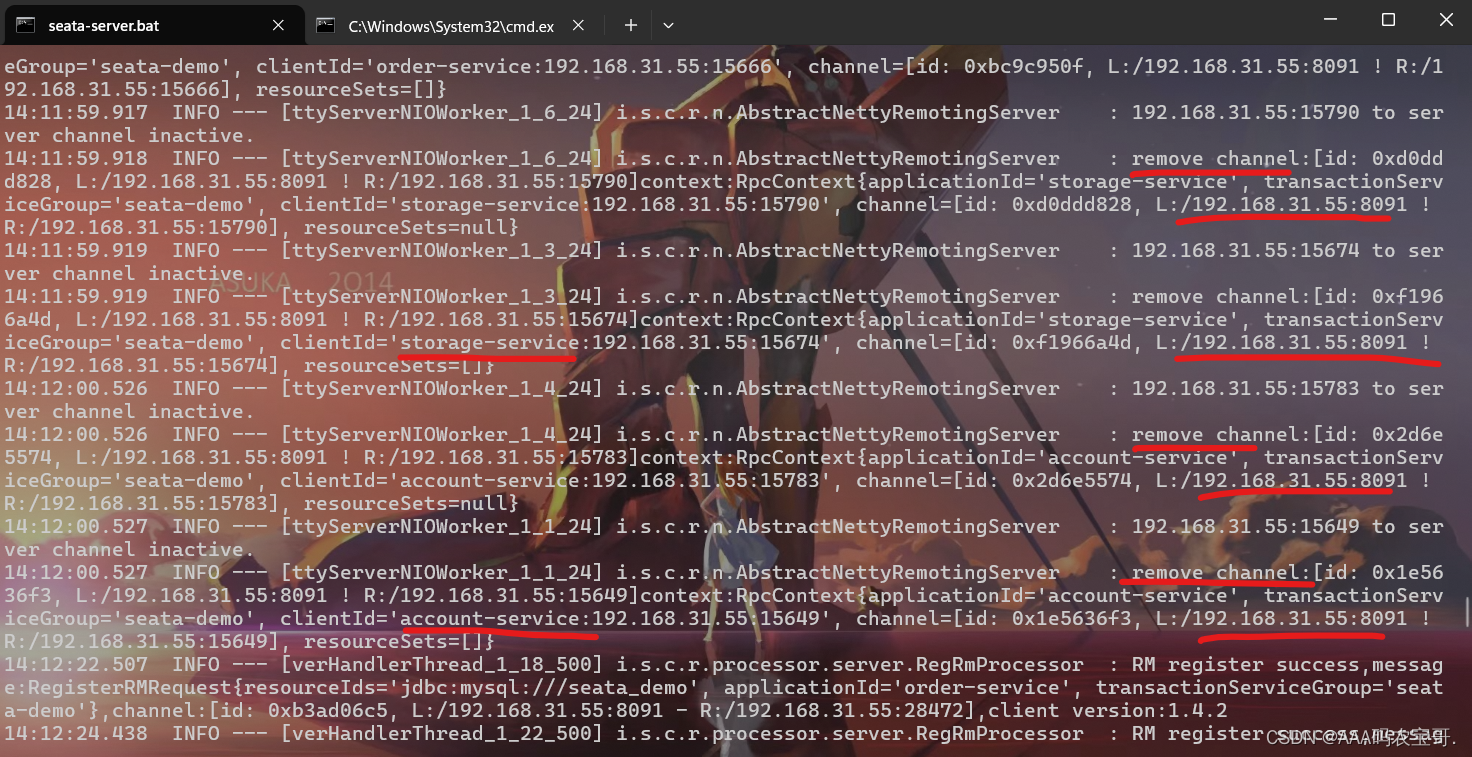

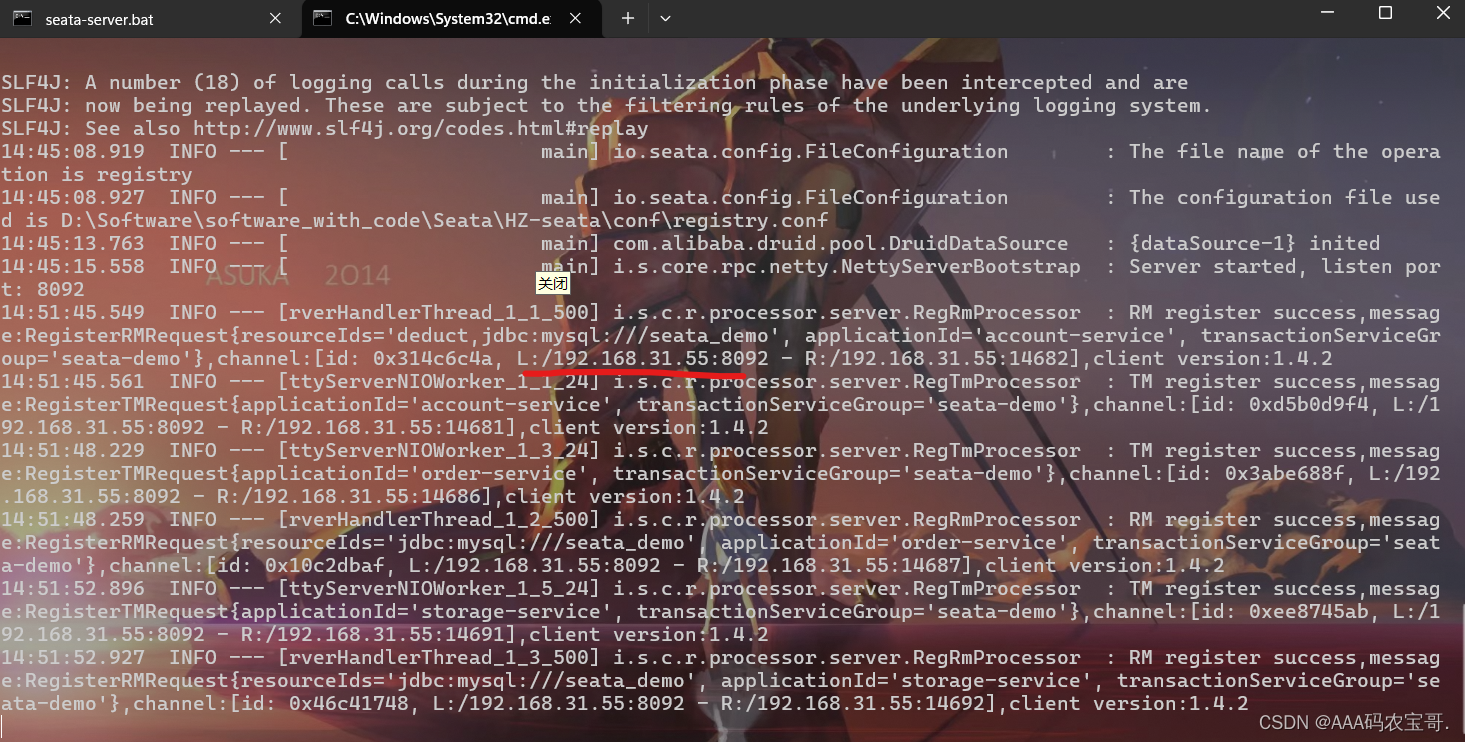

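Restart the microservices. Whether they connect to the TC's SH cluster or its HZ cluster is now decided centrally by client.properties in nacos.

You can edit client.properties to switch dynamically from SH to HZ.

In the logs, the SH connection shows remove

and the HZ connection shows register.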
