Use the Java API to operate on HDFS and implement the following functions:

package lab1.task4;

import java.io.IOException;

import java.util.Iterator;

import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.GenericOptionsParser;

public class STjoin {

public static int time = 0; // guards the one-time header row written in reduce()

public static class Map extends Mapper<Object, Text, Text, Text>{

public void map(Object key,Text value,Context context)throws IOException,InterruptedException{

String relationtype = new String();

String line = value.toString();

System.out.println("mapper...............");

int i = 0;

// Traversal method 2: use a tokenizer to extract child and parent

String[] values = new String[10];

StringTokenizer itr = new StringTokenizer(line);

while(itr.hasMoreTokens() && i < values.length){ // stop before overflowing the fixed-size array

values[i] = itr.nextToken();

i = i+1;

}

System.out.println("child:"+values[0]+" parent:"+values[1]);

if(i >= 2 && !values[0].equals("child")){ // skip blank or malformed lines and the "child parent" header row

relationtype="1";

context.write(new Text(values[1]),new Text(relationtype+"+"+values[0]));

System.out.println("key:"+values[1]+" value: "+relationtype+"+"+values[0]);

relationtype = "2";

context.write(new Text(values[0]), new Text(relationtype+"+"+values[1]));

System.out.println("key:"+values[0]+" value: "+relationtype+"+"+values[1]);

}

}

}

public static class Reduce extends Reducer<Text, Text, Text, Text>{

public void reduce(Text key,Iterable<Text> values,Context context) throws IOException, InterruptedException{

System.out.println("reduce.....................");

System.out.println("key:"+key+" values:"+values);

if(time==0){

context.write(new Text("grandchild"), new Text("grandparent"));

time++;

}

// Names collected for this key: its children (tag "1") and its parents (tag "2").

// Lists are used instead of fixed-size arrays so that a key with more than 10 relatives does not overflow.

java.util.List<String> grandchild = new java.util.ArrayList<String>();

java.util.List<String> grandparent = new java.util.ArrayList<String>();

// Traversal method 2: iterate over the values with a for-each loop

for(Text val : values){

String record = val.toString();

System.out.println("record: "+record);

// Each record has the form "<relationtype>+<name>", so the name starts at index 2

char relationtype = record.charAt(0);

String name = record.substring(2);

System.out.println("name: "+name);

if (relationtype=='1') {

grandchild.add(name);

}

else{

grandparent.add(name);

}

}

// Join step: every child of this key is a grandchild of every parent of this key

for(String gc : grandchild){

for(String gp : grandparent){

context.write(new Text(gc), new Text(gp));

System.out.println("grandchild: "+gc+" grandparent: "+gp);

}

}

}

}

public static void main(String [] args)throws Exception{

Configuration conf = new Configuration();

Job job = Job.getInstance(conf, "single table join"); // the Job(Configuration, String) constructor is deprecated

job.setJarByClass(STjoin.class);

job.setMapperClass(Map.class);

job.setReducerClass(Reduce.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

FileInputFormat.addInputPath(job, new Path("hdfs://master:9000/input4/"));

FileOutputFormat.setOutputPath(job,new Path("hdfs://master:9000/output4/"));

System.exit(job.waitForCompletion(true)? 0 : 1);

}

}
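The join logic in the job above can be hard to follow from the Mapper and Reducer alone, so here is a minimal sketch that simulates the map, shuffle, and reduce phases in memory with plain Java collections. The class name `GrandparentJoinSketch`, its method names, and the sample names are hypothetical and not part of the original post; the tagging scheme (`1+child` keyed by parent, `2+parent` keyed by child) follows the job above.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical stand-alone sketch: simulates the STjoin map, shuffle, and
// reduce phases in memory, without any Hadoop dependency.
class GrandparentJoinSketch {

    // Map + shuffle: emit each "child parent" pair twice and group by key.
    // Keyed by parent the value is "1+child"; keyed by child it is "2+parent".
    static Map<String, List<String>> mapAndShuffle(List<String> lines) {
        Map<String, List<String>> grouped = new TreeMap<>();
        for (String line : lines) {
            String[] parts = line.trim().split("\\s+");
            if (parts.length < 2 || parts[0].equals("child")) {
                continue; // skip blank or malformed lines and the header row
            }
            String child = parts[0];
            String parent = parts[1];
            grouped.computeIfAbsent(parent, k -> new ArrayList<>()).add("1+" + child);
            grouped.computeIfAbsent(child, k -> new ArrayList<>()).add("2+" + parent);
        }
        return grouped;
    }

    // Reduce: for each key, pair every tagged child with every tagged parent.
    static List<String> join(List<String> lines) {
        List<String> result = new ArrayList<>();
        for (Map.Entry<String, List<String>> entry : mapAndShuffle(lines).entrySet()) {
            List<String> grandchildren = new ArrayList<>();
            List<String> grandparents = new ArrayList<>();
            for (String record : entry.getValue()) {
                String name = record.substring(2); // skip the "1+" or "2+" tag
                if (record.charAt(0) == '1') {
                    grandchildren.add(name);
                } else {
                    grandparents.add(name);
                }
            }
            for (String gc : grandchildren) {
                for (String gp : grandparents) {
                    result.add(gc + "\t" + gp);
                }
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> input = Arrays.asList(
                "child parent", "Tom Lucy", "Tom Jack", "Lucy Mary", "Lucy Ben");
        for (String row : join(input)) {
            System.out.println(row);
        }
    }
}
```

With this sample input, only the key `Lucy` receives both a child (`Tom`) and parents (`Mary`, `Ben`), so it is the only key that produces output rows. The `TreeMap` merely stands in for the sorted grouping that the shuffle phase performs in a real job.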

1. Create a directory on HDFS

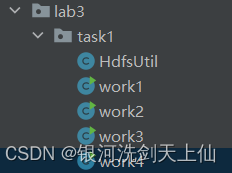

package lab3.task1;

public class work1 {

public static void main(String[] args) {

HdfsUtil hdfs1 = new HdfsUtil("hadoop"); // HdfsUtil is a helper class whose source is not shown in this post

hdfs1.mkdir("hdfs://master:9000/lab3/task2/");

System.out.println("success!");

}

}

2. Delete a file or directory on HDFS

package lab3.task1;

public class work2 {

public static void main(String[] args) {

HdfsUtil hdfs1 = new HdfsUtil("hadoop");

hdfs1.delete("hdfs://master:9000/lab3/task2/",true);

System.out.println("success!");

}

}

3. Upload a file to HDFS

package lab3.task1;

public class work3 {

public static void main(String[] args) {

HdfsUtil hdfs1 = new HdfsUtil("hadoop");

hdfs1.upload("C:\\Users\\dell\\Desktop\\3.txt","hdfs://master:9000/lab3/task1/3.txt");

System.out.println("success!");

}

}

4. Read a file's contents

package lab3.task1;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.*;

import java.io.*;

public class work4 {

/**

* Read the contents of a file

*/

public static void cat(Configuration conf, String remoteFilePath) throws IOException {

FileSystem fs = FileSystem.get(conf);

Path remotePath = new Path(remoteFilePath);

FSDataInputStream in = fs.open(remotePath);

BufferedReader d = new BufferedReader(new InputStreamReader(in));

String line = null;

while ((line = d.readLine()) != null) {

String[] strarray = line.split(" ");

for (int i = 0; i < strarray.length; i++) {

System.out.print(strarray[i]);

System.out.print(" ");

}

System.out.println(" ");

// System.out.println(line);

// System.out.print(strarray[0]);

}

d.close();

in.close();

fs.close();

}

/**

* Main entry point

*/

public static void main(String[] args) {

Configuration conf = new Configuration();

conf.set("fs.defaultFS", "hdfs://master:9000"); // fs.default.name is the deprecated name of this key

String remoteFilePath = "hdfs://master:9000/lab3/task1/3.txt"; // HDFS path

try {

System.out.println("Reading file: " + remoteFilePath);

work4.cat(conf, remoteFilePath);

System.out.println("\nRead complete");

} catch (Exception e) {

e.printStackTrace();

}

}

}
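The `cat` method above closes its reader and stream by hand, so they are left open if `readLine` throws. A minimal sketch of the same read loop using try-with-resources follows. It takes any `java.io.InputStream`, so the stream returned by `fs.open(...)` (an `InputStream` subclass) could be passed in as well. The class name `StreamCatSketch` and the choice of UTF-8 are assumptions for illustration; the original relies on the platform default charset.

```java
import java.io.BufferedReader;
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: read all lines from a stream and close it reliably.
class StreamCatSketch {

    // Reads the stream line by line; UTF-8 is an assumption, not taken from the post.
    static List<String> readLines(InputStream in) {
        List<String> lines = new ArrayList<>();
        // try-with-resources closes the reader (and the wrapped stream)
        // even if readLine() throws part-way through.
        try (BufferedReader reader = new BufferedReader(
                new InputStreamReader(in, StandardCharsets.UTF_8))) {
            String line;
            while ((line = reader.readLine()) != null) {
                lines.add(line);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return lines;
    }

    public static void main(String[] args) {
        // An in-memory stream stands in for the HDFS input stream here.
        InputStream in = new ByteArrayInputStream(
                "Tom Lucy\nLucy Mary\n".getBytes(StandardCharsets.UTF_8));
        for (String line : readLines(in)) {
            System.out.println(line);
        }
    }
}
```

Returning the lines rather than printing them inside the loop keeps the I/O handling separate from the output formatting, which also makes the method easier to test.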
