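Contents

- Target site: https://quotes.toscrape.com/

- Data to collect:

  - Quotes

  - Authors

  - Tags

- Site analysis: the data is loaded statically (it is already present in the HTML the server returns)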
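One rough way to check the "loaded statically" claim is to look for the quote markup in the raw HTML, before any JavaScript runs. The sketch below is only an illustration: the helper names `fetch` and `appears_in_raw_html` and the marker string are assumptions, not part of the original project.

```python
from urllib.request import urlopen


def fetch(url: str) -> str:
    """Download a page and return its body as text (no JavaScript is executed)."""
    with urlopen(url, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")


def appears_in_raw_html(html: str, marker: str = 'class="quote"') -> bool:
    """Return True if the marker occurs in the HTML exactly as the server sent it."""
    return marker in html


# Offline illustration with two hand-written pages (hypothetical content).
static_page = '<div class="quote"><span class="text">Sample</span></div>'
dynamic_page = '<div id="app"></div><script src="app.js"></script>'

static_result = appears_in_raw_html(static_page)    # marker is in the markup
dynamic_result = appears_in_raw_html(dynamic_page)  # marker would be added by JS later
```

Calling `appears_in_raw_html(fetch("https://quotes.toscrape.com/"))` applies the same check to the live site. If the marker is found, plain Scrapy requests should be enough and no browser rendering is needed.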

1. Create the project

scrapy startproject my_scrapy  # my_scrapy is the project name

2. Create the Spider

cd my_scrapy

scrapy genspider spider baidu.com  # arguments: spider file name, then domain

3. Create the Item

An Item is a container for scraped data. It defines the structure (the fields) of the data to be scraped.

4. Spider

- Define the initial requests

- Define how to parse the response data

5. Save the data

1. Save from the command line (file formats: csv, json, ...)

scrapy crawl spider -o demo.csv
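The same export can also be declared in the project settings rather than on the command line. The fragment below is a sketch that assumes a Scrapy version with the `FEEDS` setting. The second output file, `demo.json`, is an added illustration and is not part of the original project.

```python
# settings.py (fragment): declare export targets instead of passing -o each time.
FEEDS = {
    # Output path mapped to its export options.
    "demo.csv": {"format": "csv", "encoding": "utf8"},
    # Hypothetical second target, showing that several feeds can be written at once.
    "demo.json": {"format": "json", "encoding": "utf8"},
}
```

With this in place, a plain `scrapy crawl spider` writes both files without needing the `-o` option.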

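2. Save with an item pipeline

class MyScrapyPipeline:

    def process_item(self, item, spider):
        # Simple save: append the author and the quote to a text file
        with open('demo2.txt', 'a', encoding='utf8') as f:
            f.write(item['author'] + '\n' + item['text'] + '\n\n\n')
        # Return the item so that any later pipeline still receives it
        return item

Full code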

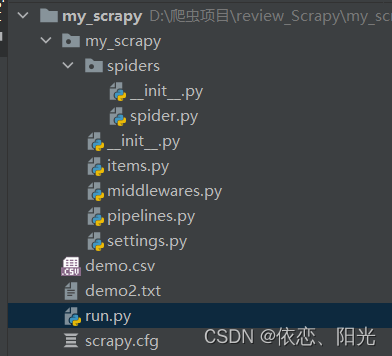

Directory structure

spider.py

import scrapy

from my_scrapy.items import MyScrapyItem


class SpiderSpider(scrapy.Spider):
    # Spider name, used by "scrapy crawl"
    name = 'spider'

    # Domain restriction: the range the spider is allowed to crawl.
    # Note: entries must be bare domain names, not full URLs.
    # allowed_domains = ['quotes.toscrape.com']

    # Pages requested first
    start_urls = ['https://quotes.toscrape.com/']

    def parse(self, response):
        # text = response.text
        quotes = response.xpath('//div[@class="quote"]')

        for quote in quotes:
            # extract_first() is the older method; get() is the newer equivalent
            # text = quote.xpath('./span[@class="text"]/text()').extract_first()

            # Instantiate the item
            item = MyScrapyItem()

            # Extract the fields with XPath
            text = quote.xpath('./span[@class="text"]/text()').get()
            author = quote.xpath('.//small[@class="author"]/text()').get()
            tags = quote.xpath('.//a[@class="tag"]/text()').getall()

            item['text'] = text
            item['author'] = author
            item['Tag'] = tags

            # Hand the item over to the engine (and on to the pipelines)
            yield item

items.py

import scrapy


class MyScrapyItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()

    # Quote text
    text = scrapy.Field()

    # Author
    author = scrapy.Field()

    # Tags
    Tag = scrapy.Field()

pipelines.py

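class MyScrapyPipeline:

    def process_item(self, item, spider):
        # Simple save: append the author and the quote to a text file
        with open('demo2.txt', 'a', encoding='utf8') as f:
            f.write(item['author'] + '\n' + item['text'] + '\n\n\n')
        # Return the item so that any later pipeline still receives it
        return item

settings.py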

ITEM_PIPELINES = {
    'my_scrapy.pipelines.MyScrapyPipeline': 300,
}

run.py

from scrapy import cmdline

# Equivalent to running "scrapy crawl spider" in a terminal from the project directory
cmdline.execute('scrapy crawl spider'.split())
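Returning to the pipeline in pipelines.py: it reopens demo2.txt for every item. A common alternative is to open the file once when the spider starts and close it when the spider finishes, using the `open_spider` and `close_spider` hooks that Scrapy calls on pipelines. The sketch below is not the author's code. The class name, the JSON Lines format, and the `path` parameter are assumptions added for illustration.

```python
import json


class JsonLinesSketchPipeline:  # hypothetical name, for illustration only
    def __init__(self, path="demo2.jsonl"):
        # The default path is an assumption; Scrapy builds the pipeline with no arguments.
        self.path = path
        self.file = None

    def open_spider(self, spider):
        # Called once when the spider starts: open the output file a single time.
        self.file = open(self.path, "a", encoding="utf8")

    def close_spider(self, spider):
        # Called once when the spider finishes: flush and release the file.
        self.file.close()

    def process_item(self, item, spider):
        # One JSON object per line; ensure_ascii=False keeps non-ASCII text readable.
        self.file.write(json.dumps(dict(item), ensure_ascii=False) + "\n")
        return item
```

Like the original pipeline, it only runs if it is registered in `ITEM_PIPELINES`, for example under the key `'my_scrapy.pipelines.JsonLinesSketchPipeline'` if the class were added to pipelines.py.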
