
Deploying the microservices with Docker-Compose

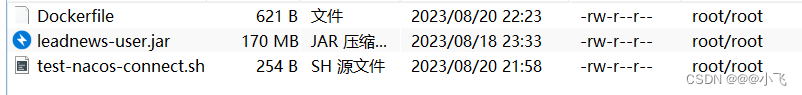

Working directory used to build the image:

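test-nacos-connect.sh (the microservice starts only once Nacos is fully up):

```sh
#!/bin/sh
# Test the Nacos connection: poll the console until it responds
while ! curl -sf -o /dev/null http://192.168.200.100:8848/nacos/; do
  echo "Waiting for Nacos to become available..."
  sleep 5
done
echo "Nacos is available, starting Spring Boot application..."
# exec replaces the shell with the JVM so it receives docker stop signals directly;
# $JAVA_OPTS applies the memory settings defined in the Dockerfile
exec java $JAVA_OPTS -jar leadnews-user.jar
```

Dockerfile: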

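```dockerfile
# Use a Java 8 JRE image as the base
FROM openjdk:8-jre-alpine
# Copy the JAR file and the startup script into the container
COPY leadnews-user.jar /app/leadnews-user.jar
COPY test-nacos-connect.sh /app/test-nacos-connect.sh
# Make the startup script executable
RUN chmod +x /app/test-nacos-connect.sh
# Install curl, which the startup script uses to probe Nacos
RUN apk add --no-cache curl
# Set the working directory
WORKDIR /app
# JVM options: cap memory to reduce overhead (the startup script must pass $JAVA_OPTS to java)
ENV JAVA_OPTS="\
 -server \
 -Xms256m \
 -Xmx512m \
 -XX:MetaspaceSize=256m \
 -XX:MaxMetaspaceSize=512m"
# Startup command
CMD ["sh", "/app/test-nacos-connect.sh"]
```

Run docker build in the working directory to build the microservice image: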

```bash
docker build -t leadnews-user-image:v1.0 .
```

docker-compose.yml:

```yaml
version: '3'
services:
  mysql:                      # service name
    image: mysql              # based on the mysql image
    container_name: mysql     # name of the container to create
    ports:
      - "3306:3306"           # port mapping (host port before the colon, container port after it)
    environment:              # environment variables
      MYSQL_ROOT_PASSWORD: "123456"   # MySQL password 123456; the user name defaults to root
    volumes:
      - /usr/dockerApp/mysql:/var/lib/mysql   # persist the MySQL container's data in this host directory
  nacos:
    image: nacos/nacos-server
    container_name: nacos
    ports:
      - "8848:8848"
    environment:
      - MODE=standalone
    volumes:
      - /usr/dockerApp/nacos/data:/home/nacos/data
  xxl-job-admin:
    image: xuxueli/xxl-job-admin:2.3.0
    container_name: xxl-job-admin
    environment:              # connection settings for xxl-job's MySQL database
      PARAMS: "--spring.datasource.url=jdbc:mysql://192.168.200.100:3306/xxl_job?useUnicode=true&characterEncoding=UTF-8 \
        --spring.datasource.username=root \
        --spring.datasource.password=123456 \
        --spring.datasource.hikari.maxLifetime=100000"
    ports:
      - "8888:8080"
    volumes:
      - /usr/dockerApp/xxl-job/tmp:/data/applogs
    depends_on:
      - mysql
  zookeeper:
    image: zookeeper
    container_name: zookeeper
    ports:
      - "2181:2181"
  kafka:
    image: wurstmeister/kafka
    container_name: kafka
    environment:
      KAFKA_ADVERTISED_HOST_NAME: 192.168.200.100
      KAFKA_ZOOKEEPER_CONNECT: 192.168.200.100:2181
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.200.100:9092
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9092
      KAFKA_HEAP_OPTS: "-Xmx256M -Xms256M"
    network_mode: host
    depends_on:
      - zookeeper
  elasticsearch:
    image: elasticsearch:7.4.0
    container_name: elasticsearch
    environment:
      - discovery.type=single-node   # single-node mode
    ports:
      - "9200:9200"
    volumes:
      - /usr/dockerApp/elasticsearch/data:/usr/share/elasticsearch/data
      - /usr/dockerApp/elasticsearch/plugins:/usr/share/elasticsearch/plugins
  redis:
    image: redis
    container_name: redis
    ports:
      - "6379:6379"
    volumes:
      - /usr/dockerApp/redis/data:/data
  mongodb:
    image: mongo
    container_name: mongo
    ports:
      - "27017:27017"
    volumes:
      - /usr/dockerApp/mongo/data:/data/db
  minio:
    image: minio/minio
    container_name: minio
    ports:
      - "9000:9000"
      - "9090:9090"
    environment:
      MINIO_ROOT_USER: minio
      MINIO_ROOT_PASSWORD: minio123
    volumes:
      - /usr/dockerApp/minio/data:/data
      - /usr/dockerApp/minio/config:/root/.minio
    command: server /data --console-address ":9090"
  leadnews-app-gateway:
    image: leadnews-app-gateway-image:v1.0
    container_name: leadnews-app-gateway
    ports:
      - "51601:51601"
    depends_on: [nacos, mysql, minio, mongodb, kafka, redis, elasticsearch, xxl-job-admin]
  leadnews-wemedia-gateway:
    image: leadnews-wemedia-gateway-image:v1.0
    container_name: leadnews-wemedia-gateway
    ports:
      - "51602:51602"
    depends_on: [nacos, mysql, minio, mongodb, kafka, redis, elasticsearch, xxl-job-admin]
  leadnews-article:
    image: leadnews-article-image:v1.0
    container_name: leadnews-article
    ports:
      - "51802:51802"
    depends_on: [nacos, mysql, minio, mongodb, kafka, redis, elasticsearch, xxl-job-admin]
  leadnews-behavior:
    image: leadnews-behavior-image:v1.0
    container_name: leadnews-behavior
    ports:
      - "51805:51805"
    depends_on: [nacos, mysql, minio, mongodb, kafka, redis, elasticsearch, xxl-job-admin]
  leadnews-schedule:
    image: leadnews-schedule-image:v1.0
    container_name: leadnews-schedule
    ports:
      - "51701:51701"
    depends_on: [nacos, mysql, minio, mongodb, kafka, redis, elasticsearch, xxl-job-admin]
  leadnews-search:
    image: leadnews-search-image:v1.0
    container_name: leadnews-search
    ports:
      - "51804:51804"
    depends_on: [nacos, mysql, minio, mongodb, kafka, redis, elasticsearch, xxl-job-admin]
  leadnews-user:
    image: leadnews-user-image:v1.0
    container_name: leadnews-user
    ports:
      - "51801:51801"
    depends_on: [nacos, mysql, minio, mongodb, kafka, redis, elasticsearch, xxl-job-admin]
  leadnews-wemedia:
    image: leadnews-wemedia-image:v1.0
    container_name: leadnews-wemedia
    ports:
      - "51803:51803"
    depends_on: [nacos, mysql, minio, mongodb, kafka, redis, elasticsearch, xxl-job-admin]
```

To start the stack, run this in the directory that contains docker-compose.yml:

```bash
docker-compose up -d
```

To shut it down, run this in the same directory:

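```bash
docker-compose down
```

This stops and removes the containers created by docker-compose (along with the network it created), so any data that must survive has to be mounted to host volumes in the yml file.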
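docker-compose.yml expects the eight leadnews-*-image:v1.0 images to exist locally before `docker-compose up -d`. The sketch below builds them all and then starts the stack. It assumes a hypothetical layout in which each service has its own build directory named after its container (./leadnews-user, ./leadnews-article, ...), each containing its JAR, startup script and a Dockerfile like the one above. The `DRY_RUN` switch is only a convention of this script, not a Docker feature.

```bash
#!/bin/bash
# Hypothetical layout: one build directory per service, named like the container
SERVICES="app-gateway wemedia-gateway article behavior schedule search user wemedia"
TAG="v1.0"

# Print the command when DRY_RUN=1, otherwise execute it
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Build leadnews-<svc>-image:<tag> from ./leadnews-<svc> for every service, stopping at the first failure
build_all_images() {
  for svc in $SERVICES; do
    run docker build -t "leadnews-${svc}-image:${TAG}" "leadnews-${svc}" || return 1
  done
}

# Build every image, then start the whole stack in the background
deploy() {
  build_all_images && run docker-compose up -d
}
```

Running `DRY_RUN=1 deploy` from the directory holding docker-compose.yml prints the eight build commands followed by `docker-compose up -d`. Without `DRY_RUN`, it executes them.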

Deploying MySQL

```bash
docker run -p 3306:3306 \
  --name mysql \
  -e MYSQL_ROOT_PASSWORD=123456 \
  -d \
  --restart=always \
  -v /usr/dockerApp/mysql:/var/lib/mysql \
  mysql
```

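- docker run: creates and starts a new Docker container
- -p 3306:3306: maps host port 3306 to container port 3306 (the MySQL connection port)
- --name mysql: names the container mysql
- -e MYSQL_ROOT_PASSWORD=123456: sets the MySQL root password to "123456"
- -d: runs the container in the background
- --restart=always: restarts the container automatically, including when the Docker daemon starts (similar to starting on boot)
- -v /usr/dockerApp/mysql:/var/lib/mysql: mounts the host directory /usr/dockerApp/mysql onto the container's /var/lib/mysql to persist the data, so the data stays on the host even if the container is deleted
- mysql: the image used to create the container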
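Because the data lives in the host directories passed to -v, it can help to create them up front rather than let the Docker daemon create missing ones on first start. A small sketch, assuming the /usr/dockerApp layout used throughout this post. The base directory is a parameter, so the function can be tried somewhere harmless first.

```bash
#!/bin/bash
# Host-side bind-mount directories used in this post, relative to the base directory
DATA_DIRS="mysql nacos/data redis/data mongo/data elasticsearch/data elasticsearch/plugins
kibana/config xxl-job/tmp minio/data minio/config jenkins/jenkins_home"

# Create every data directory under $1 (default: /usr/dockerApp)
prepare_dirs() {
  local base="${1:-/usr/dockerApp}"
  local d
  for d in $DATA_DIRS; do
    mkdir -p "$base/$d" || return 1
  done
}
```

`prepare_dirs` (run as root) creates everything under /usr/dockerApp. `prepare_dirs /tmp/dockerApp` creates the same tree somewhere harmless.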

Deploying Nacos

```bash
docker run --env MODE=standalone \
  --name nacos \
  --restart=always \
  -d \
  -p 8848:8848 \
  nacos/nacos-server
```

- --env MODE=standalone: runs Nacos in standalone (single-node) mode

Nacos registry console: http://192.168.200.100:8848/nacos
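test-nacos-connect.sh polls this console URL in an endless loop, so a microservice container whose Nacos never comes up simply hangs. Below is a variant with a retry cap, written as a sketch. The function name and default values are illustrative and not part of the original script. It uses only curl and plain sh features, which the Alpine-based image provides.

```bash
#!/bin/sh
# Poll URL until curl succeeds (HTTP status below 400); give up after MAX_TRIES attempts.
# Usage: wait_for_url URL [MAX_TRIES] [INTERVAL_SECONDS]
wait_for_url() {
  local url="$1"
  local max_tries="${2:-60}"
  local interval="${3:-5}"
  local i=1
  while :; do
    # -s: quiet, -f: fail on HTTP errors, -o /dev/null: discard the page body
    if curl -sf -o /dev/null --max-time 5 "$url"; then
      return 0
    fi
    if [ "$i" -ge "$max_tries" ]; then
      echo "Gave up waiting for $url after $i attempts" >&2
      return 1
    fi
    echo "Waiting for $url ($i/$max_tries)..."
    i=$((i + 1))
    sleep "$interval"
  done
}
```

In the startup script, the while loop would become `wait_for_url http://192.168.200.100:8848/nacos/ 60 5 || exit 1` followed by the java command. If Nacos is still down after about five minutes, the container exits with an error instead of waiting forever.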

Deploying Redis

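```bash
docker run -d --name redis \
  -p 6379:6379 \
  --restart=always \
  redis \
  redis-server --requirepass 123456
```

- redis-server --requirepass 123456: starts Redis with the connection password 123456. The official redis image does not read a REDIS_PASSWORD environment variable, so the password has to be passed to redis-server as a startup option.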
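To check whether a password is actually enforced, you can run redis-cli inside the container. With requirepass active, `PING` without a password is rejected with a NOAUTH error, and `PING` with `-a 123456` returns PONG. A sketch, assuming the container is named redis as above. As elsewhere, `DRY_RUN` is only a convention of this script that prints the commands instead of running them.

```bash
#!/bin/bash
REDIS_CONTAINER="redis"
REDIS_PASSWORD="123456"

# Print the command when DRY_RUN=1, otherwise execute it
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi
}

# PING with the password; expected reply: PONG
# (--no-auth-warning suppresses redis-cli's warning about passwords on the command line)
redis_ping() {
  run docker exec "$REDIS_CONTAINER" redis-cli --no-auth-warning -a "$REDIS_PASSWORD" ping
}

# PING without a password; with requirepass set, Redis answers with a NOAUTH error
redis_ping_no_auth() {
  run docker exec "$REDIS_CONTAINER" redis-cli ping
}
```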

Deploying ZooKeeper

```bash
docker run -d --name zookeeper -p 2181:2181 --restart always zookeeper
```

Deploying Kafka

```bash
docker run -d \
  --name kafka \
  --restart=always \
  -e KAFKA_ADVERTISED_HOST_NAME=192.168.200.100 \
  -e KAFKA_ZOOKEEPER_CONNECT=192.168.200.100:2181 \
  -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://192.168.200.100:9092 \
  -e KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092 \
  -e KAFKA_HEAP_OPTS="-Xmx256M -Xms256M" \
  --net=host \
  wurstmeister/kafka
```

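- -e KAFKA_ADVERTISED_HOST_NAME=192.168.200.100: the host name external clients use to reach Kafka. This is a legacy setting; because KAFKA_ADVERTISED_LISTENERS is also set, that variable determines the advertised address.
- -e KAFKA_ZOOKEEPER_CONNECT=192.168.200.100:2181: the ZooKeeper address Kafka connects to
- -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://192.168.200.100:9092: the address Kafka hands out to clients. Producers and consumers connect to this address after their first metadata request, so it must be reachable from them.
- -e KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092: the address Kafka actually binds to; 0.0.0.0 accepts connections on every interface
- -e KAFKA_HEAP_OPTS="-Xmx256M -Xms256M": sets the Kafka JVM heap size to 256 MB
- --net=host: shares the host's network namespace, so Kafka uses the host's network interfaces directly and no -p port mapping is needed
- wurstmeister/kafka: the image used to create and start the container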
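Clients connect to whatever address is advertised, so a quick way to check the listener settings is to create and list a topic through 192.168.200.100:9092. A sketch, assuming the Kafka CLI script kafka-topics.sh is on the container's PATH (as it is in the wurstmeister/kafka images I have seen). The topic name smoke-test is made up for the check, and `DRY_RUN` is only a convention of this script.

```bash
#!/bin/bash
BOOTSTRAP="192.168.200.100:9092"   # the advertised listener from the command above
TOPIC="smoke-test"                  # hypothetical topic name used only for this check

# Print the command when DRY_RUN=1, otherwise execute it
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi
}

# Create a single-partition topic through the advertised address
create_topic() {
  run docker exec kafka kafka-topics.sh --bootstrap-server "$BOOTSTRAP" \
    --create --topic "$TOPIC" --partitions 1 --replication-factor 1
}

# List topics; smoke-test should appear if the listeners are configured correctly
list_topics() {
  run docker exec kafka kafka-topics.sh --bootstrap-server "$BOOTSTRAP" --list
}
```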

Deploying KafkaUI-lite (a lightweight web UI for Kafka)

```bash
docker run -d --name kafka-ui -p 8889:8889 freakchicken/kafka-ui-lite
```

KafkaUI-lite web UI: http://192.168.200.100:8889

Deploying Elasticsearch

```bash
docker run -d --name elasticsearch \
  --restart unless-stopped \
  -p 9200:9200 \
  -p 9300:9300 \
  -v /usr/dockerApp/elasticsearch/plugins:/usr/share/elasticsearch/plugins \
  -e "discovery.type=single-node" \
  elasticsearch:7.4.0
```

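- -e "discovery.type=single-node": runs Elasticsearch as a single node
- -p 9200:9200: maps host port 9200 to container port 9200, used to access the Elasticsearch REST API
- -p 9300:9300: maps host port 9300 to container port 9300, used for transport (node-to-node) communication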
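Once the container is up, you can check the REST API on port 9200 with the cluster health endpoint (`GET /_cluster/health`). Its JSON includes a `status` of green, yellow or red. On a single node, indices with replicas typically report yellow, because replicas cannot be allocated. The sketch extracts that field with sed and assumes the compact (non-pretty) JSON response; jq would be more robust if it is installed.

```bash
#!/bin/bash
ES_URL="http://192.168.200.100:9200"

# Extract the "status" value (green/yellow/red) from a _cluster/health JSON body; prints nothing if absent
es_status() {
  printf '%s' "$1" | sed -n 's/.*"status" *: *"\([a-z]*\)".*/\1/p'
}

# Query the cluster health endpoint and print only the status
es_health() {
  local body
  body="$(curl -s "$ES_URL/_cluster/health")" || return 1
  es_status "$body"
}
```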

Deploying Kibana (for visual analysis and operation of Elasticsearch)

```bash
docker run -d \
  --name=kibana \
  --restart=unless-stopped \
  -v /usr/dockerApp/kibana/config:/usr/share/kibana/config \
  -p 5601:5601 \
  docker.elastic.co/kibana/kibana:7.4.0
```

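- -v /usr/dockerApp/kibana/config:/usr/share/kibana/config: mounts the host config directory over Kibana's config directory. kibana.yml must therefore exist on the host: create it before starting the container, or restart Kibana after creating it.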

Create the config file:

```bash
vi /usr/dockerApp/kibana/config/kibana.yml
```

```yaml
# kibana.yml
server.port: 5601                                      # port to serve on
server.host: "0.0.0.0"                                 # allow access from outside the container
elasticsearch.hosts: ["http://192.168.200.100:9200"]   # Elasticsearch address and port
i18n.locale: "zh-CN"                                   # set the UI language to Chinese
```

Kibana web UI: http://192.168.200.100:5601
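Instead of editing kibana.yml in vi, you can write it non-interactively with a heredoc. This is handy when scripting the setup. A sketch in which the target directory and Elasticsearch URL are parameters, defaulting to the values used in this section.

```bash
#!/bin/bash
# Write kibana.yml into $1 (default /usr/dockerApp/kibana/config), pointing at Elasticsearch URL $2
write_kibana_config() {
  local dir="${1:-/usr/dockerApp/kibana/config}"
  local es_url="${2:-http://192.168.200.100:9200}"
  mkdir -p "$dir" || return 1
  cat > "$dir/kibana.yml" <<EOF
server.port: 5601
server.host: "0.0.0.0"
elasticsearch.hosts: ["$es_url"]
i18n.locale: "zh-CN"
EOF
}
```

For example, `write_kibana_config && docker restart kibana` writes the file and makes the running container pick it up.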

Deploying MongoDB

```bash
docker run -id --name mongo \
  -v /usr/dockerApp/mongo/data:/data/db \
  -p 27017:27017 \
  --restart always \
  mongo
```

Deploying xxl-job

```bash
docker run -e PARAMS="--spring.datasource.url=jdbc:mysql://192.168.200.100:3306/xxl_job?useUnicode=true&characterEncoding=UTF-8 \
  --spring.datasource.username=root \
  --spring.datasource.password=123456 \
  --spring.datasource.hikari.maxLifetime=100000" \
  -p 8888:8080 \
  -v /usr/dockerApp/xxl-job/tmp:/data/applogs \
  --name xxl-job-admin \
  --restart=always \
  -d xuxueli/xxl-job-admin:2.3.0
```

xxl-job admin console (account: admin, password: 123456): http://192.168.200.100:8888/xxl-job-admin/

Deploying MinIO

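```bash
docker run -p 9000:9000 -p 9090:9090 \
  --name minio \
  -d \
  --restart=always \
  -e "MINIO_ROOT_USER=minio" \
  -e "MINIO_ROOT_PASSWORD=minio123" \
  -v /usr/dockerApp/minio/data:/data \
  -v /usr/dockerApp/minio/config:/root/.minio \
  minio/minio server /data --console-address ":9090"
```

- -e MINIO_ROOT_USER / MINIO_ROOT_PASSWORD: the root credentials, also used to log in to the console (the older MINIO_ACCESS_KEY / MINIO_SECRET_KEY names are deprecated)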

MinIO web console: http://192.168.200.100:9090 (port 9000 serves the S3 API that applications connect to)

Deploying Jenkins

```bash
docker run -d -p 9999:8080 --name jenkins \
  -v /usr/dockerApp/jenkins/jenkins_home:/var/jenkins_home \
  -v /usr/app/maven/apache-maven-3.6.1:/usr/share/maven \
  -v /usr/app/maven/repository-3.6.1:/root/.m2/repository \
  -v /usr/jdk/jdk-17.0.8:/usr/java/latest \
  -v /usr/app/git/git-2.41.0/bin/git:/usr/local/bin/git \
  -v /var/run/docker.sock:/var/run/docker.sock \
  jenkins/jenkins
```

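- -v /usr/dockerApp/jenkins/jenkins_home:/var/jenkins_home: persists the Jenkins container's data on the host
- -v /usr/app/maven/apache-maven-3.6.1:/usr/share/maven: makes the host's Maven installation available in the container
- -v /usr/app/maven/repository-3.6.1:/root/.m2/repository: makes the host's Maven repository available in the container
- -v /usr/jdk/jdk-17.0.8:/usr/java/latest: makes the host's JDK available in the container
- -v /usr/app/git/git-2.41.0/bin/git:/usr/local/bin/git: makes the host's Git available in the container
- -v /var/run/docker.sock:/var/run/docker.sock: mounts the host Docker daemon's socket into the container. Jenkins can then talk to the host daemon through it and run Docker commands (such as building images) from inside the container, with the host daemon doing the actual work. The docker CLI itself is not part of the jenkins/jenkins image and has to be provided separately.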
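One way to see the socket mount at work is to query the host's Docker Engine API through it from inside the Jenkins container; `GET /version` returns the host daemon's version as JSON. A sketch, assuming curl is available in the jenkins/jenkins image. The check runs as root (`-u root`) because the container's default jenkins user may lack permission on the host socket. `DRY_RUN` is only a convention of this script.

```bash
#!/bin/bash
# Print the command when DRY_RUN=1, otherwise execute it
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi
}

# Ask the host Docker daemon for its version through the socket mounted into the jenkins container
check_docker_socket() {
  run docker exec -u root jenkins \
    curl -s --unix-socket /var/run/docker.sock http://localhost/version
}
```

If the mount works, the JSON shows the same version as `docker version` on the host.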
