PyTorch model training and saving the model file: a worked example

# Import the packages needed.

import matplotlib.pyplot as plt

import torchvision

from torchvision.transforms import ToTensor

data_train = torchvision.datasets.MNIST('./data',
    download=True, train=True, transform=ToTensor())

data_test = torchvision.datasets.MNIST('./data',
    download=True, train=False, transform=ToTensor())

fig, ax = plt.subplots(1, 7)

for i in range(7):
    ax[i].imshow(data_train[i][0].view(28, 28))
    ax[i].set_title(data_train[i][1])
    ax[i].axis('off')

print('Training samples:', len(data_train))

print('Test samples:', len(data_test))

print('Tensor size:', data_train[0][0].size())

print('First 10 digits are:', [data_train[i][1] for i in range(10)])

print('Min intensity value: ', data_train[0][0].min().item())

print('Max intensity value: ', data_train[0][0].max().item())

import torch

import torch.nn as nn

import matplotlib.pyplot as plt

from torchinfo import summary

from pytorchcv import load_mnist, plot_results

load_mnist()

net = nn.Sequential(
    nn.Flatten(),
    nn.Linear(784, 10),    # 784 inputs, 10 outputs
    nn.LogSoftmax(dim=1))  # normalize over the class dimension

print('Digit to be predicted: ', data_train[0][1])

torch.exp(net(data_train[0][0]))
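The network ends in `LogSoftmax`, so its outputs are log-probabilities, and applying `torch.exp` turns them back into probabilities that sum to 1. A minimal plain-Python sketch of that relationship follows; the `log_softmax` helper and the sample scores are illustrative assumptions, not part of the original code.

```python
import math

def log_softmax(scores):
    """Numerically stable log-softmax over a list of raw scores."""
    m = max(scores)
    log_sum = m + math.log(sum(math.exp(s - m) for s in scores))
    return [s - log_sum for s in scores]

# Hypothetical raw scores for three classes.
scores = [2.0, 1.0, 0.1]
log_probs = log_softmax(scores)

# Exponentiating log-probabilities recovers ordinary probabilities.
probs = [math.exp(lp) for lp in log_probs]

print(probs)
print(sum(probs))  # very close to 1.0
```

For an untrained network the ten probabilities are typically close to uniform, which is why the first prediction is essentially a guess.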

train_loader = torch.utils.data.DataLoader(data_train, batch_size=64)

test_loader = torch.utils.data.DataLoader(data_test, batch_size=64) # we can use larger batch size for testing

def train_epoch(net, dataloader, lr=0.01, optimizer=None, loss_fn=nn.NLLLoss()):
    """Train for one epoch; return (summed batch loss / sample count, accuracy)."""
    optimizer = optimizer or torch.optim.Adam(net.parameters(), lr=lr)
    net.train()
    total_loss, acc, count = 0, 0, 0
    for features, labels in dataloader:
        optimizer.zero_grad()
        out = net(features)
        loss = loss_fn(out, labels)  # cross_entropy(out,labels)
        loss.backward()
        optimizer.step()
        total_loss += loss.item()    # .item() avoids accumulating autograd history
        _, predicted = torch.max(out, 1)
        acc += (predicted == labels).sum().item()
        count += len(labels)
    return total_loss / count, acc / count

train_epoch(net, train_loader)

def validate(net, dataloader, loss_fn=nn.NLLLoss()):
    """Evaluate without gradients; return (summed batch loss / sample count, accuracy)."""
    net.eval()
    count, acc, loss = 0, 0, 0
    with torch.no_grad():
        for features, labels in dataloader:
            out = net(features)
            loss += loss_fn(out, labels).item()
            pred = torch.max(out, 1)[1]  # index of the highest score per sample
            acc += (pred == labels).sum().item()
            count += len(labels)
    return loss / count, acc / count

validate(net, test_loader)
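Both `train_epoch` and `validate` sum the per-batch losses and divide by the number of samples. Since `nn.NLLLoss()` uses `reduction='mean'` by default, each batch loss is already an average, so the reported value is scaled down by roughly the batch size. That is fine for comparing epochs against each other, but it is not a per-sample loss. The sketch below uses made-up batch losses and sizes (all numbers and names are hypothetical) to show the difference from a size-weighted average.

```python
# Hypothetical per-batch mean losses and batch sizes (the last batch is smaller).
batch_mean_losses = [2.0, 1.0, 0.5]
batch_sizes = [64, 64, 32]

count = sum(batch_sizes)

# What the functions above report: sum of batch means / number of samples.
reported = sum(batch_mean_losses) / count

# Per-sample average: weight each batch mean by its batch size.
per_sample = sum(l * n for l, n in zip(batch_mean_losses, batch_sizes)) / count

print(reported)
print(per_sample)
```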

def train(net, train_loader, test_loader, optimizer=None, lr=0.01, epochs=10, loss_fn=nn.NLLLoss()):
    optimizer = optimizer or torch.optim.Adam(net.parameters(), lr=lr)
    res = {'train_loss': [], 'train_acc': [], 'val_loss': [], 'val_acc': []}
    for ep in range(epochs):
        tl, ta = train_epoch(net, train_loader, optimizer=optimizer, lr=lr, loss_fn=loss_fn)
        vl, va = validate(net, test_loader, loss_fn=loss_fn)
        print(f"Epoch {ep:2}, Train acc={ta:.3f}, Val acc={va:.3f}, Train loss={tl:.3f}, Val loss={vl:.3f}")
        res['train_loss'].append(tl)
        res['train_acc'].append(ta)
        res['val_loss'].append(vl)
        res['val_acc'].append(va)
    return res

# Re-initialize the network to start from scratch

net = nn.Sequential(
    nn.Flatten(),
    nn.Linear(784, 10),    # 784 inputs, 10 outputs
    nn.LogSoftmax(dim=1))  # normalize over the class dimension

hist = train(net, train_loader, test_loader, epochs=5)

plt.figure(figsize=(15, 5))

plt.subplot(121)

plt.plot(hist['train_acc'], label='Training acc')

plt.plot(hist['val_acc'], label='Validation acc')

plt.legend()

plt.subplot(122)

plt.plot(hist['train_loss'], label='Training loss')

plt.plot(hist['val_loss'], label='Validation loss')

plt.legend()

net = nn.Sequential(
    nn.Flatten(),
    nn.Linear(784, 100),   # 784 inputs, 100 outputs
    nn.ReLU(),             # Activation Function
    nn.Linear(100, 10),    # 100 inputs, 10 outputs
    nn.LogSoftmax(dim=1))  # dim=1 is the class axis; dim=0 would normalize across the batch

summary(net, input_size=(1, 28, 28))

from torch.nn.functional import relu, log_softmax

class MyNet(nn.Module):
    def __init__(self):
        super(MyNet, self).__init__()
        self.flatten = nn.Flatten()
        self.hidden = nn.Linear(784, 100)
        self.out = nn.Linear(100, 10)

    def forward(self, x):
        x = self.flatten(x)
        x = self.hidden(x)
        x = relu(x)
        x = self.out(x)
        # dim=1 is the class axis; dim=0 would normalize across the batch
        x = log_softmax(x, dim=1)
        return x

net = MyNet()

summary(net, input_size=(1, 28, 28), device='cpu')

hist = train(net, train_loader, test_loader, epochs=5)

plot_results(hist)

import torch

import torch.nn as nn

import matplotlib.pyplot as plt

from torchinfo import summary

from pytorchcv import load_mnist, train, plot_results, plot_convolution

load_mnist(batch_size=128)

plot_convolution(torch.tensor([[-1., 0., 1.], [-1., 0., 1.], [-1., 0., 1.]]), 'Vertical edge filter')

plot_convolution(torch.tensor([[-1., -1., -1.], [0., 0., 0.], [1., 1., 1.]]), 'Horizontal edge filter')

class OneConv(nn.Module):
    def __init__(self):
        super(OneConv, self).__init__()
        self.conv = nn.Conv2d(in_channels=1, out_channels=9, kernel_size=(5, 5))
        self.flatten = nn.Flatten()
        self.fc = nn.Linear(5184, 10)

    def forward(self, x):
        x = nn.functional.relu(self.conv(x))
        x = self.flatten(x)
        x = nn.functional.log_softmax(self.fc(x), dim=1)
        return x

net = OneConv()

summary(net, input_size=(1, 1, 28, 28))
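The `5184` in `nn.Linear(5184, 10)` follows from the convolution arithmetic: a 5x5 kernel with no padding and stride 1 turns a 28x28 image into a 24x24 map, and there are 9 output channels. A small sketch of that calculation, where the helper name `conv_output_size` is an illustrative assumption rather than a PyTorch function:

```python
def conv_output_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1

side = conv_output_size(28, 5)    # 28x28 input, 5x5 kernel
flat_features = 9 * side * side   # 9 output channels, flattened

print(side, flat_features)
```

If the kernel size or channel count changes, the input size of the linear layer has to change with it.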

hist = train(net, train_loader, test_loader, epochs=5)

plot_results(hist)

fig, ax = plt.subplots(1, 9)

with torch.no_grad():
    p = next(net.conv.parameters())  # first parameter of the conv layer: its weights
    for i, x in enumerate(p):
        ax[i].imshow(x.detach().cpu()[0, ...])
        ax[i].axis('off')

import torch

import torch.nn as nn

import torchvision

from torchinfo import summary

from pytorchcv import load_mnist, train, display_dataset

load_mnist(batch_size=128)

class MultiLayerCNN(nn.Module):
    def __init__(self):
        super(MultiLayerCNN, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, 5)
        self.pool = nn.MaxPool2d(2, 2)
        self.conv2 = nn.Conv2d(10, 20, 5)
        self.fc = nn.Linear(320, 10)

    def forward(self, x):
        x = self.pool(nn.functional.relu(self.conv1(x)))
        x = self.pool(nn.functional.relu(self.conv2(x)))
        x = x.view(-1, 320)  # 20 channels * 4 * 4 after two conv + pool stages
        x = nn.functional.log_softmax(self.fc(x), dim=1)
        return x

net = MultiLayerCNN()

summary(net, input_size=(1, 1, 28, 28))

hist = train(net, train_loader, test_loader, epochs=5)

transform = torchvision.transforms.Compose(
    [torchvision.transforms.ToTensor(),
     torchvision.transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])
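`Normalize(mean, std)` computes `(x - mean) / std` for each channel. `ToTensor` produces values in [0, 1], so a mean and standard deviation of 0.5 map pixel values to [-1, 1]. A quick arithmetic check, using a hypothetical scalar helper rather than the torchvision transform itself:

```python
def normalize(x, mean=0.5, std=0.5):
    """Scalar version of the per-channel normalization formula."""
    return (x - mean) / std

for value in (0.0, 0.5, 1.0):
    print(value, "->", normalize(value))
```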

trainset = torchvision.datasets.CIFAR10(root='./data', train=True, download=True, transform=transform)

trainloader = torch.utils.data.DataLoader(trainset, batch_size=14, shuffle=True)

testset = torchvision.datasets.CIFAR10(root='./data', train=False, download=True, transform=transform)

testloader = torch.utils.data.DataLoader(testset, batch_size=14, shuffle=False)

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

display_dataset(trainset, classes=classes)

class LeNet(nn.Module):
    def __init__(self):
        super(LeNet, self).__init__()
        self.conv1 = nn.Conv2d(3, 6, 5)
        self.pool = nn.MaxPool2d(2)
        self.conv2 = nn.Conv2d(6, 16, 5)
        self.conv3 = nn.Conv2d(16, 120, 5)
        self.flat = nn.Flatten()
        self.fc1 = nn.Linear(120, 64)
        self.fc2 = nn.Linear(64, 10)

    def forward(self, x):
        x = self.pool(nn.functional.relu(self.conv1(x)))
        x = self.pool(nn.functional.relu(self.conv2(x)))
        x = nn.functional.relu(self.conv3(x))
        x = self.flat(x)
        x = nn.functional.relu(self.fc1(x))
        x = self.fc2(x)  # raw scores; CrossEntropyLoss applies log-softmax itself
        return x

net = LeNet()

summary(net, input_size=(1, 3, 32, 32))

opt = torch.optim.SGD(net.parameters(), lr=0.001, momentum=0.9)

hist = train(net, trainloader, testloader, epochs=3, optimizer=opt, loss_fn=nn.CrossEntropyLoss())

import torch

import torchvision

import torchvision.transforms as transforms

import matplotlib.pyplot as plt

from torchinfo import summary

import os

from pytorchcv import train, display_dataset, train_long, check_image_dir

import zipfile

if not os.path.exists('data/PetImages'):
    with zipfile.ZipFile('data/kagglecatsanddogs_5340.zip', 'r') as zip_ref:
        zip_ref.extractall('data')

check_image_dir('data/PetImages/Cat/*.jpg')

check_image_dir('data/PetImages/Dog/*.jpg')

std_normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406],
                                     std=[0.229, 0.224, 0.225])

trans = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    std_normalize])

dataset = torchvision.datasets.ImageFolder('data/PetImages', transform=trans)

trainset, testset = torch.utils.data.random_split(dataset, [20000, len(dataset) - 20000])

display_dataset(dataset)

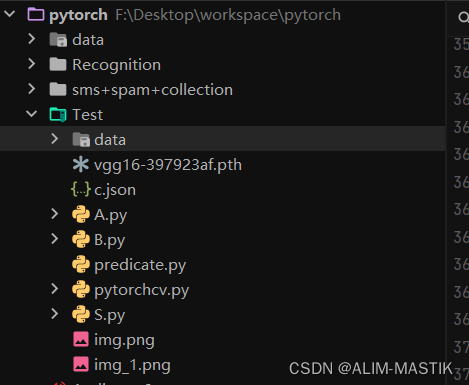

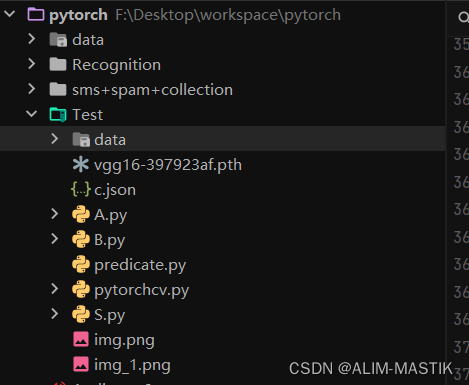

file_path = 'vgg16-397923af.pth'

vgg = torchvision.models.vgg16()

vgg.load_state_dict(torch.load(file_path))

vgg.eval()

sample_image = dataset[0][0].unsqueeze(0)

res = vgg(sample_image)

print(res[0].argmax())

import json

# Load the class-index mapping from a local JSON file
with open("c.json", "r") as f:
    class_map = json.load(f)

# Convert the JSON string keys to integer class indices
class_map = {int(k): v for k, v in class_map.items()}

summary(vgg, input_size=(1, 3, 224, 224))

device = 'cuda' if torch.cuda.is_available() else 'cpu'

print('Doing computations on device = {}'.format(device))

vgg.to(device)

sample_image = sample_image.to(device)

vgg(sample_image).argmax()

res = vgg.features(sample_image).cpu()

plt.figure(figsize=(15, 3))

plt.imshow(res.detach().view(-1, 512))

print(res.size())
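The size printed here can be predicted by hand. In VGG-16, `vgg.features` contains five 2x2 max-pooling layers with stride 2, while its 3x3 convolutions use padding 1 and keep the spatial size unchanged. A 224x224 input therefore shrinks to 7x7 with 512 channels, which is where `512 * 7 * 7 = 25088` comes from. A quick arithmetic check, with an illustrative function name:

```python
def vgg16_feature_side(side, num_pools=5):
    """Halve the side length once per 2x2, stride-2 max-pool (floor division)."""
    for _ in range(num_pools):
        side //= 2
    return side

side = vgg16_feature_side(224)
print(side, 512 * side * side)
```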

bs = 8

dl = torch.utils.data.DataLoader(dataset, batch_size=bs, shuffle=True)

num = bs * 100

feature_tensor = torch.zeros(num, 512 * 7 * 7).to(device)

label_tensor = torch.zeros(num).to(device)

i = 0

for x, l in dl:
    with torch.no_grad():
        f = vgg.features(x.to(device))
        feature_tensor[i:i + bs] = f.view(bs, -1)
        label_tensor[i:i + bs] = l
        i += bs
        print('.', end='')
        if i >= num:
            break

vgg_dataset = torch.utils.data.TensorDataset(feature_tensor, label_tensor.to(torch.long))

train_ds, test_ds = torch.utils.data.random_split(vgg_dataset, [700, 100])

train_loader = torch.utils.data.DataLoader(train_ds, batch_size=32)

test_loader = torch.utils.data.DataLoader(test_ds, batch_size=32)

net = torch.nn.Sequential(torch.nn.Linear(512 * 7 * 7, 2), torch.nn.LogSoftmax(dim=1)).to(device)

history = train(net, train_loader, test_loader)

print(vgg)

vgg.classifier = torch.nn.Linear(25088, 2).to(device)

for x in vgg.features.parameters():
    x.requires_grad = False
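Setting `requires_grad = False` excludes those parameters from gradient computation, so only the new classifier is trained. The sketch below shows the effect on a toy model by counting trainable and frozen parameters; the two-layer `model` is a stand-in chosen for illustration, not VGG.

```python
import torch.nn as nn

# Toy stand-in: the first layer plays the "features" role, the last the "classifier".
model = nn.Sequential(
    nn.Linear(8, 4),   # pretend feature extractor (to be frozen)
    nn.ReLU(),
    nn.Linear(4, 2),   # pretend classifier head (stays trainable)
)

# Freeze the feature-extractor parameters.
for p in model[0].parameters():
    p.requires_grad = False

trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
frozen = sum(p.numel() for p in model.parameters() if not p.requires_grad)
print(trainable, frozen)
```

The same count on the real model is a quick way to confirm that freezing did what was intended before starting a long training run.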

summary(vgg, (1, 3, 224, 224))

trainset, testset = torch.utils.data.random_split(dataset, [20000, len(dataset) - 20000])

train_loader = torch.utils.data.DataLoader(trainset, batch_size=16)

test_loader = torch.utils.data.DataLoader(testset, batch_size=16)

train_long(vgg, train_loader, test_loader, loss_fn=torch.nn.CrossEntropyLoss(), epochs=1, print_freq=90)

torch.save(vgg, 'data/cats_dogs.pth')

vgg = torch.load('data/cats_dogs.pth')
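`torch.save(vgg, ...)` pickles the whole model object, which ties the file to the exact class definitions. In recent PyTorch releases, where `torch.load` defaults to weights-only loading, reading such a file back may need extra arguments. A commonly recommended alternative is to save only the `state_dict`. The sketch below uses a toy model and a temporary file, both assumptions for illustration.

```python
import os
import tempfile

import torch
import torch.nn as nn

def make_model():
    # Toy architecture; the same definition is needed again at load time.
    return nn.Sequential(nn.Linear(4, 3), nn.ReLU(), nn.Linear(3, 2))

model = make_model()
path = os.path.join(tempfile.mkdtemp(), "toy_model.pth")

# Save only the learned tensors, not the pickled model object.
torch.save(model.state_dict(), path)

# Rebuild the architecture, then load the saved weights into it.
restored = make_model()
restored.load_state_dict(torch.load(path))
restored.eval()

# Both models should now produce identical outputs for the same input.
x = torch.randn(1, 4)
with torch.no_grad():
    same = torch.equal(model(x), restored(x))
print(same)
```

The trade-off is that the code defining the architecture must be available when loading, whereas the file itself stays small and independent of how the class was pickled.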

for x in vgg.features.parameters():
    x.requires_grad = True

train_long(vgg, train_loader, test_loader, loss_fn=torch.nn.CrossEntropyLoss(), epochs=1, print_freq=90, lr=0.0001)

resnet = torchvision.models.resnet18()

print(resnet)

import torch

import torch.nn as nn

from torchinfo import summary

from pytorchcv import train_long, load_cats_dogs_dataset, validate

dataset, train_loader, test_loader = load_cats_dogs_dataset()

model = torch.hub.load('pytorch/vision:v0.13.0', 'mobilenet_v2', weights='MobileNet_V2_Weights.DEFAULT')

model.eval()

print(model)

sample_image = dataset[0][0].unsqueeze(0)

res = model(sample_image)

print(res[0].argmax())

for x in model.parameters():
    x.requires_grad = False

device = 'cuda' if torch.cuda.is_available() else 'cpu'

model.classifier = nn.Linear(1280, 2)

model = model.to(device)

summary(model, input_size=(1, 3, 224, 224))

train_long(model, train_loader, test_loader, loss_fn=torch.nn.CrossEntropyLoss(), epochs=1, print_freq=90)
