1. Flink Development Environment

IntelliJ IDEA is the recommended IDE. Create a Maven project; the Maven configuration (`pom.xml`) for Java is as follows:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>lhd</groupId>
    <artifactId>flink</artifactId>
    <version>1.0-SNAPSHOT</version>

    <dependencies>
        <!-- "provided": the Flink cluster supplies these jars at runtime. When running the
             jobs directly from IntelliJ IDEA, add provided-scope dependencies to the run
             configuration's classpath, otherwise startup fails with NoClassDefFoundError. -->
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-java</artifactId>
            <version>1.10.0</version>
            <scope>provided</scope>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-java_2.11</artifactId>
            <version>1.10.0</version>
            <scope>provided</scope>
        </dependency>
    </dependencies>
</project>
```

2. Flink Stream Processing Example

Goal: every 1 s, aggregate the data received during the most recent 2 s (a sliding window of size 2 s that slides by 1 s).
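Because the window size (2 s) is twice the slide (1 s), every element belongs to two overlapping windows. The plain-Java sketch below (not Flink API) mirrors the idea behind Flink's sliding window assignment, assuming a window offset of 0 and non-negative millisecond timestamps; `SlidingWindowSketch` and `assignWindows` are hypothetical names used only for illustration. In Flink 1.10, `timeWindow(...)` uses processing time by default, so the timestamp here stands for the wall-clock time at which a line arrives.

```java
import java.util.ArrayList;
import java.util.List;

public class SlidingWindowSketch {
    // Window size and slide in milliseconds, as in timeWindow(Time.seconds(2), Time.seconds(1))
    static final long SIZE_MS = 2000;
    static final long SLIDE_MS = 1000;

    /**
     * Returns every [start, end) window that contains the timestamp.
     * Assumes offset 0 and a non-negative timestamp (hypothetical helper, not Flink API).
     */
    public static List<long[]> assignWindows(long timestampMs) {
        List<long[]> windows = new ArrayList<>();
        // Most recent window start at or before the timestamp, aligned to the slide
        long lastStart = timestampMs - (timestampMs % SLIDE_MS);
        // Walk back one slide at a time while the window still covers the timestamp
        for (long start = lastStart; start > timestampMs - SIZE_MS; start -= SLIDE_MS) {
            windows.add(new long[] {start, start + SIZE_MS});
        }
        return windows;
    }

    public static void main(String[] args) {
        // An element that arrives at t = 3.5 s
        for (long[] w : assignWindows(3500)) {
            System.out.println("[" + w[0] + ", " + w[1] + ")");
        }
    }
}
```

For an element at 3.5 s this prints `[3000, 5000)` and `[2000, 4000)`: the element is counted once in the result emitted when `[2000, 4000)` closes at 4 s and again when `[3000, 5000)` closes at 5 s.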

```java
package streaming;

import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.java.utils.ParameterTool;
import org.apache.flink.streaming.api.datastream.DataStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.util.Collector;

/**
 * Word count with a sliding window.
 * Requirement: every 1 s, aggregate the data received in the last 2 s.
 */
public class SocketWindowWordCountJava {

    /** POJO, so that keyBy("word") and sum("count") can address its fields by name. */
    public static class WordWithCount {
        public String word;
        public long count;

        public WordWithCount() {}

        public WordWithCount(String word, long count) {
            this.word = word;
            this.count = count;
        }

        @Override
        public String toString() {
            return "WordWithCount{" +
                    "word = '" + word + '\'' +
                    ", count = " + count +
                    '}';
        }
    }

    public static void main(String[] args) throws Exception {
        // Read the port from the command line (--port <n>); fall back to 10000 if it is missing
        int port;
        try {
            ParameterTool parameterTool = ParameterTool.fromArgs(args);
            port = parameterTool.getInt("port");
        } catch (Exception e) {
            System.out.println("No port set, using default port 10000 (Java)");
            port = 10000;
        }

        // Get the Flink execution environment
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        String hostname = "master001";
        String delimiter = "\n";

        // Connect to the socket and read the incoming lines
        DataStreamSource<String> text = env.socketTextStream(hostname, port, delimiter);

        // Example: the line "a a c" is split into (a, 1), (a, 1), (c, 1)
        DataStream<WordWithCount> windowCounts = text
                .flatMap(new FlatMapFunction<String, WordWithCount>() {
                    @Override
                    public void flatMap(String value, Collector<WordWithCount> out) throws Exception {
                        // "\\s+" treats a run of whitespace as one separator;
                        // skip the empty token produced by leading whitespace
                        for (String word : value.split("\\s+")) {
                            if (!word.isEmpty()) {
                                out.collect(new WordWithCount(word, 1L));
                            }
                        }
                    }
                })
                .keyBy("word")
                // Sliding window: size 2 s, slide 1 s
                .timeWindow(Time.seconds(2), Time.seconds(1))
                .sum("count");

        // Print from a single sink task, so the results are not split across subtasks
        windowCounts.print().setParallelism(1);

        // execute() is required: the calls above only build the job graph,
        // and nothing runs until the job is submitted here
        env.execute("Socket window count");
    }
}
```
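Within one window, the `flatMap`, `keyBy("word")` and `sum("count")` chain amounts to a per-word count over the lines that arrived while that window was open. The plain-Java sketch below reproduces that computation for a single window, assuming whitespace-separated words; `WindowCountSketch` and `countWindow` are hypothetical names, not Flink classes.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class WindowCountSketch {
    /** Word counts over the lines of one window: emit (word, 1), group by word, sum. */
    public static Map<String, Long> countWindow(List<String> linesInWindow) {
        Map<String, Long> counts = new LinkedHashMap<>(); // keeps first-seen order
        for (String line : linesInWindow) {
            for (String word : line.split("\\s+")) {
                if (word.isEmpty()) {
                    continue; // leading whitespace yields an empty first token
                }
                counts.merge(word, 1L, Long::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // "a a c" and "a b" both arrived while the window [0 s, 2 s) was open
        System.out.println(countWindow(Arrays.asList("a a c", "a b")));
    }
}
```

Here the two lines produce `{a=3, c=1, b=1}`; for the same input, the Flink job would emit these totals as three separate `WordWithCount` records when that window closes.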

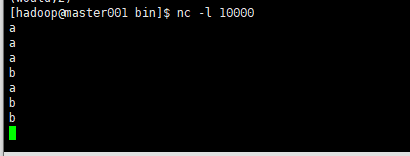

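**Run the program:** The hostname is hard-coded as master001 and the port defaults to 10000 (override it with `--port <n>`). The job connects as a client to port 10000 on master001 and reads lines from it, so a server must already be listening on port 10000 on the master001 node, for example one started with `nc -lk 10000`: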

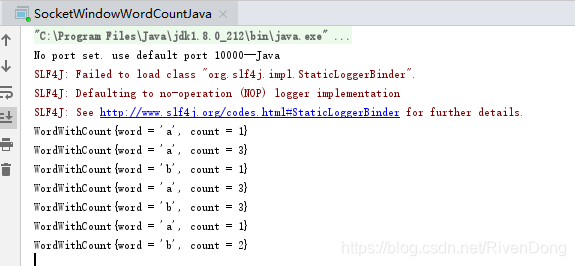

Output:

3. Flink Batch Processing Example

Goal: count how many times each word occurs in a file and write the result to a file.
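The tokenize, `groupBy(0)`, `sum(1)` and `writeAsCsv(outPath, "\n", " ")` steps of this job can be checked without a cluster. The plain-Java sketch below applies the same tokenization as `Tokenizer` and formats each pair as one `word count` line; `BatchWordCountSketch`, `count` and `toCsvLines` are hypothetical names, and the rows are sorted only to make the output deterministic (Flink does not guarantee any particular row order in its output file).

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class BatchWordCountSketch {
    /** Same tokenization as Tokenizer: lower-case, split on runs of non-word characters, drop empty tokens. */
    public static Map<String, Integer> count(List<String> lines) {
        Map<String, Integer> counts = new TreeMap<>(); // sorted only for deterministic output
        for (String line : lines) {
            for (String token : line.toLowerCase().split("\\W+")) {
                if (token.length() > 0) {
                    counts.merge(token, 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    /** Formats each (word, count) pair as "word count", like writeAsCsv(outPath, "\n", " "). */
    public static List<String> toCsvLines(Map<String, Integer> counts) {
        List<String> rows = new ArrayList<>();
        for (Map.Entry<String, Integer> e : counts.entrySet()) {
            rows.add(e.getKey() + " " + e.getValue());
        }
        return rows;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("Hello Flink", "hello, world!");
        toCsvLines(count(lines)).forEach(System.out::println);
    }
}
```

For the lines `Hello Flink` and `hello, world!` this prints `flink 1`, `hello 2` and `world 1`. Because `\\W+` splits on every character outside `[A-Za-z0-9_]`, a line that begins with punctuation yields an empty first token, which is why `Tokenizer` checks `token.length() > 0`; the same split also discards non-ASCII words such as Chinese text.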

package batch;

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.java.DataSet;

import org.apache.flink.api.java.ExecutionEnvironment;

import org.apache.flink.api.java.operators.DataSource;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.util.Collector;

public class BatchWordCountJava {

public static class Tokenizer implements FlatMapFunction<String, Tuple2<String, Integer>>{

public void flatMap(String value, Collector<Tuple2<String, Integer>> out) throws Exception {

String[] tokens = value.toLowerCase().split("\\W+");

for(String token : tokens){

if(token.length()>0){

out.collect(new Tuple2<String, Integer>(token, 1));

}

}

}

}

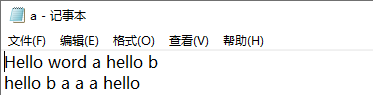

public static void main(String[] args) throws Exception {

String inputPath = "E:\\test\\a.txt";

String outPath = "E:\\test\\result";

//获取运行环境

ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();

//获取文件内容

DataSource<String> text = env.readTextFile(inputPath);

DataSet<Tuple2<String, Integer>> counts = text.flatMap(new Tokenizer()).groupBy(0).sum(1);

counts.writeAsCsv(outPath, "\n", " ").setParallelism(1);

env.execute("batch word count");

}

}

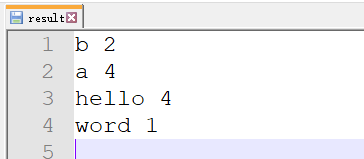

Output:
