Introduction to AWS Certification

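The AWS Certified Solutions Architect certifications are Amazon's official credentials for cloud architects, offered since 2013. The exam tests more than familiarity with AWS cloud services: many questions come from real-world problems and demand broad architecture design experience, so the certification carries real weight. On the US job site Glassdoor, the average salary for an AWS solutions architect is above USD 100,000, which shows how highly the industry values this certification.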

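How well is it recognized in China? From chatting with staff at the test center, I learned that candidates in China fall into two groups. The first group works for employers that partner with Amazon; the employer pays for the exam and requires them to pass. The second group is the early birds of the cloud computing industry, who want to strengthen their professional skills and secure a place in the cloud computing wave. So, relatively speaking, only a small number of people know about the exam and register for it.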

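Passing this exam is not easy, though. For one thing, the exam is entirely in English and involves a lot of reading. For another, study materials are scarce: you can only learn from the official FAQs and whitepapers, and self-study is very inefficient. Someone new to AWS certification will find it hard to pass without 2 to 3 months of preparation. Even among the architects I know who have 2 to 3 years of hands-on AWS experience, the chance of passing without any preparation is close to zero. So if you plan to take this exam, take it seriously and schedule enough time to prepare thoroughly.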

For more about the AWS certification exams, you can also read the article by Liu Tao (刘涛) at http://www.csdn.net/article/2015-10-12/2825891

The Exam Process

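You can book an exam slot through the AWS certification website. Because the exam is computer-based and can be taken at almost any time, there is no need to book far in advance. Once you feel your review is about done, go online and book a nearby date and test center. Amazon outsources test delivery to professional testing companies, whose centers are usually in office buildings with convenient transport links. In Beijing, the test centers are at Wanhao Building (万豪大厦) in Jianguomen, Chaoyang District, and Huantai Building (寰太大厦) on Zhongguancun South Street, Haidian District. The associate-level exam lasts 80 minutes and has 55 questions. The exam room setup is quite economical: a small glass cubicle that could hold a 3- or 4-person meeting, one desk and chair, a networked laptop, and a camera on the ceiling are all the testing company needs to open for business. When the 80 minutes are up, the system submits automatically and scores the exam immediately, telling you your overall score, your score in each of the 4 domains, and whether the result is PASS or FAIL. You don't need to memorize this information, because Amazon automatically emails a score report to the address you registered with. For example, my score report read as follows: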

Overall Score: 76%

Topic Level Scoring:

1.0 Designing highly available, cost efficient, fault tolerant, scalable systems : 72%

2.0 Implementation/Deployment: 100%

3.0 Security: 72%

4.0 Troubleshooting: 80%

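Of these 4 domains, I found Security the hardest; I recommend reading all of the AWS security whitepapers carefully. Next hardest is Designing highly available systems: the question stems are usually long blocks of English that test both reading comprehension and the ability to pick out keywords, so practice your reading speed while preparing. In practice sessions, keep your pace under 1 minute 30 seconds per question; otherwise you may run out of time in the actual computer-based exam.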
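The pacing advice above can be checked with a little arithmetic. The sketch below assumes the format described earlier (80 minutes, 55 questions); the function name is only illustrative.

```python
def seconds_per_question(total_minutes, questions):
    """Average time budget per question, in seconds."""
    return total_minutes * 60 / questions


budget = seconds_per_question(80, 55)      # average budget for the real exam
minutes_at_90s = 55 * 90 / 60              # total time if every question takes 90 s

print(f"average budget: {budget:.1f} s per question")
print(f"55 questions at 90 s each: {minutes_at_90s} minutes")
```

Under these assumptions the average budget is a little under 90 seconds, so 1 minute 30 seconds is better treated as a ceiling for a single question than as a comfortable average.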

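If you pass, your registered email address will receive a notification with the certificate and the logo you are authorized to use attached, as shown in the figure: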

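Exam Scope

The entry-level Associate exam assesses candidates in 4 areas: cloud architecture design, AWS security, the functional details of individual services, and troubleshooting. Study the exam guide on the official site carefully to get a rough idea of the scope. The scoring breakdown is worth a close look, as shown in the figure:

Of these, Designing highly available systems and Data security carry the most weight and directly determine whether you pass. Focus on these two areas when preparing.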

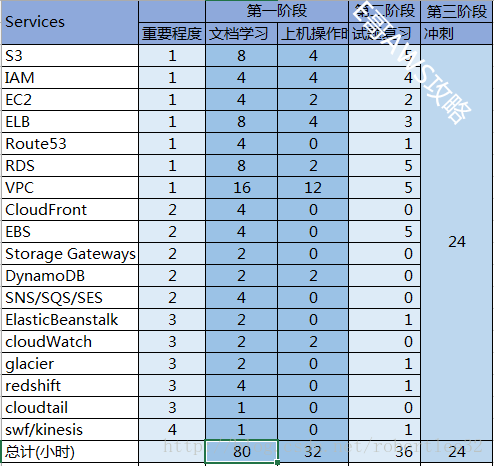

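The AWS exam requires you to know Amazon's many cloud services. Although they all have distinctive names, they are functionally much the same as the products of other cloud vendors; only the names differ. (Credit to Amazon here: as the leader in cloud computing it has deep technical expertise, and the features and details of its products are well ahead of Azure and other vendors. So once you are AWS certified, your skills carry over to most of the cloud industry.) I have listed the scope you need to review and master in the table below:

The whole study process takes roughly 80 hours of reading plus 32 hours of hands-on practice; the detailed review plan is described later. Use my estimates as a reference when drawing up your own study plan. If you already know some services well, you can shorten the time accordingly. Most people pursuing the certification are key staff at their companies and presumably very busy, so a good study plan is essential. Work out how many hours a week you can set aside for study. For example, at an average of 8 hours a week, you need roughly 3 months to prepare for the exam.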

Study Materials

Most of the official study materials are available at https://aws.amazon.com/cn/certification/certification-prep/. The most commonly used ones are:

- Exam guide: http://awstrainingandcertification.s3.amazonaws.com/production/AWS_certified_solutions_architect_associate_blueprint.pdf

- Hands-on labs: https://qwiklabs.com/learning_paths/10/lab_catalogue

- AWS whitepapers: https://aws.amazon.com/whitepapers/

- FAQs for each service: https://aws.amazon.com/faqs/

- Documentation for each AWS service: http://aws.amazon.com/cn/documentation/

- Practice Exam: USD 20 per attempt. I strongly recommend spending the USD 20 on one practice exam, analyzing your score in each area to find your weak spots, and then concentrating on them. https://webassessor.com/aws/

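Third-party blog resources (most of them, I noticed, are written by Indian engineers, who really do seem to be everywhere in Silicon Valley):

- markosrendell's Sample Questions Answered and Discussed goes through the 7 sample questions in detail and explains the related exam topics along the way. It is a very practical starting point.

- Abhishek Shaha's Pass AWS Certification Exam in 10 days recommends some decent video material, though most of it is paid. Don't believe that watching the videos will get you through the exam in 10 days.

- Jayendra's blog, http://jayendrapatil.com/, has very good summaries of some exam topics along with answer analysis for some questions, which is very practical. However, since the author was preparing for the professional level, many professional-level topics are mixed in and you need to filter them out yourself.

- A mobile question bank app published by Faeez Shaikh's company has more than 300 questions, installs on iPhone, and costs USD 6. I haven't used it, so I am listing it without recommending it. http://apple.co/1Mv6Bua

Some question dumps and recalled-question banks found online are listed below; their answers are not always correct. I have collated and corrected them, so I suggest using the version on my blog, covered in a later section.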

- https://quizlet.com/144362328/6_aws-solution-architect-asoc-flash-cards/

- https://quizlet.com/35935418/detailed-questions-flash-cards/

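Some friends have asked whether Amazon's official study material, priced at around USD 30 with a purchase link on the official site, is worth buying. I haven't read it, so I can't really say. Judging from my experience with TOEFL and IELTS, official materials are useful for their list of exam topics but otherwise less valuable than recalled real questions, so the value for money is not great. Still, with so little material online, something is better than nothing. Whether to spend that USD 30 is up to you.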

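Study Plan

Based on the exam topic table in the previous section, I suggest splitting the study plan into 3 phases.

- Early phase: get familiar with what each service is and does. Allow about 80 hours for reading documentation and 32 hours for hands-on labs, 112 hours in total. At 8 hours a week that is about 10-14 weeks. Complete the following:

- Read the exam guide

- Finish the relevant whitepapers and FAQs

- Learn and practice the features of each service; for the exam topics, see the AWS Services exam topic list in a later section

- If you have time and energy to spare, study the content on Jayendra's blog, http://jayendrapatil.com/

- Take the sample exam and read Sample Questions Answered and Discussed in full

- Middle phase: drill the online question dumps and question banks, testing yourself and filling gaps as you go. This takes about 36 hours, or about 4.5 weeks at 8 hours a week. Complete the following:

- The question dumps and question bank compiled by E哥 (me); see the later section

- The question bank on Jayendra's blog, http://jayendrapatil.com/

- Late phase: spend USD 20 on the practice exam to get familiar with the process and learn your weak areas from the score. Afterwards, drill those weak areas and review the unfamiliar topics and questions once more. Allow 24 hours, about 3 weeks.

- Finally, when you feel your review is about done, go to the Amazon site and book the exam.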

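The total review time comes to about 172 hours (112 + 36 + 24); plan it sensibly around your own weekly study time. The exam has no registration deadline, so wait until you are well prepared before taking it. If you have gone through all the material and still feel unsure, take another practice exam to build confidence. Don't begrudge the extra USD 20: it beats losing the USD 150 exam fee by failing for lack of preparation. And for those whose employer is paying, failing the real exam would be all the more costly.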
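To adapt the plan to your own schedule, the phase estimates can be summed and converted to weeks. This is a minimal sketch: the hour figures are the ones quoted for each phase above, while the 8 hours per week and the helper name are just the example values used in this post.

```python
import math

HOURS_PER_WEEK = 8  # example weekly budget; change to match your own schedule

# (phase, estimated hours) taken from the three phases described above
phases = [
    ("early: reading + hands-on labs", 80 + 32),
    ("middle: question banks", 36),
    ("late: practice exam + weak spots", 24),
]


def weeks_needed(hours, hours_per_week=HOURS_PER_WEEK):
    """Whole weeks needed to cover the given number of study hours."""
    return math.ceil(hours / hours_per_week)


total_hours = sum(hours for _, hours in phases)
for name, hours in phases:
    print(f"{name}: {hours} h, about {weeks_needed(hours)} weeks")
print(f"total: {total_hours} h, about {weeks_needed(total_hours)} weeks")
```

With these inputs the three phases add up to 172 hours, or about 22 weeks at 8 hours a week; raising the weekly budget shortens the plan proportionally.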

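Closing Remarks

I plan to gradually post some of the study notes I have compiled, but since this exam is still so niche, I'm not sure which topics people want to read about. If you have read this far, you must find what E哥 writes at least somewhat useful, so I hope you will give me feedback and leave your suggestions. Even a simple like encourages me to keep improving this blog and to help more curious learners. Good luck with your preparation!

Appendix: AWS Services Exam Topic List

I have organized the key points I noted during my review; you can search for the relevant AWS documentation by keyword. For example, to study S3 Server-Side Encryption, search for "aws Server-Side Encryption" at www.bing.com (I don't recommend a certain Chinese search engine, whose results for technical documentation are poor), then follow the results to the official AWS site.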

S3

- Security

- encryption

- Server-Side Encryption

- Client-Side Encryption

- Permissions

- User policies

- Resource based policies

- Bucket Policies

- ACL

- bucket ACL

- Object ACL

- cross-origin requests

- not support https custom domain

- Request Authorization

- Permission Delegation

- Operation Authorization

- price

- request + storage + data transfer

- Standard Storage vs RRS (Reduced Redundancy Storage)

- Pre-Signed URLs

- Torrent support

- Storage Classes

- standard, standard IA, RRS, glacier

- Glacier

- version

- Versioning can be suspended

- MFA Delete

- versioning not enabled: version ID is null

- Lifecycle Management

- Transition

- expiration

EBS

- general

- attached in same AZ

- create snapshot cross AZ or region

- Root EBS volume is deleted, by default

- persists independently

- encrypted

- Public or shared snapshots of encrypted volumes are not supported

- Existing unencrypted volumes cannot be encrypted directly; migrate by copying to an encrypted snapshot

- Supported on all Amazon EBS volume types, not instance type

- performance

- use raid0 , raid1 improve iops

- EBS optimized with PIOPS EBS

- price

- charge with storage, I/O requests and snapshot storage

- EBS-backed EC2: every stop/start is charged as a separate hour

- snapshot

cloudfront

- delivery

- request-ROUTE53-edge location-Origin server

- supports both static and dynamic content

- RTMP

- S3 bucket as the origin

- users view media files using the media player that is provided by cloudfront; not the locally installed

- Web distribution for the media player and RTMP distribution for media files

- private content

- OAI Origin Access Identity

- add header in http server, Origin to verify the request has come from CloudFront

- feature

- signed URLs and signed cookies

- for RTMP distribution

- restrict access to individual files

- access to multiple restricted files

- Caching Based on Request Headers

- Geo Restriction

- Compressed Files

- Content-Encoding header on the file must not be gzip

- viewer uncompresses the file

- multi-upload to S3

- SNI

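- Server Name Indication: multiple hostnames can share one IP address; choose this when using your own SSL certificate; support generally depends on the client browser

- Dedicated IP

- a dedicated IP address not shared with other hostnames; used by traditional SSL, whereas most setups now use SNI

- HTTPS with S3: S3 cannot serve HTTPS on its own, but combined with CloudFront it can, using a certificate issued by ACM (AWS Certificate Manager)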

- price

- charge with: data out, request, Invalidation request, SSL certificates

VPC

- VPN

- Virtual Private Gateway , TWO endpoint

- Customer Gateway, hardware or software, keep ping to alive

- Direct Connect

- CloudHub

- software VPN

- Endpoints

- vpc connect S3

- VPC and endpoints must in same region

- IP

- private, public (dynamic), Elastic IP

- VPC wizard

- auto create : private sub, custom public sub, NAT, IGW

- NAT

- NAT Gateway

- NAT Instance

- Auto Scaling for HA

- source/destination checks on the NAT instance should be disabled

- Peering

- security group vs NACL

IAM

- root account, user, group

- MultiFactor Authentication

- Security token-based: a device that generates 6-digit codes

- SMS text

- policy

- An explicit allow overrides default deny

- syntax: Principal, Action, Effect, Resource, Condition

- Capability policies, Resource policies, IAM policies

- Role delegation

- Identity Providers

- Amazon Cognito

- SAML

- Custom Identity broker Federation

- Cross account access

- EC2 has role, app inside can take role

- User-based and Resource-based
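The policy points above (default deny, with an explicit allow overriding it) can be illustrated with a toy evaluator. This is a simplified sketch rather than how IAM is implemented: it ignores Principal and Condition, uses `fnmatchcase` as a stand-in for IAM wildcard matching, and the bucket name and statements are made-up examples. It also encodes one rule not listed above but part of IAM's documented evaluation logic: an explicit deny wins over any allow.

```python
from fnmatch import fnmatchcase


def evaluate(statements, action, resource):
    """Return "Allow" or "Deny" for a request against a list of statements."""
    decision = "Deny"  # default deny: nothing is allowed unless a statement says so
    for stmt in statements:
        matches = (fnmatchcase(action, stmt["Action"])
                   and fnmatchcase(resource, stmt["Resource"]))
        if not matches:
            continue
        if stmt["Effect"] == "Deny":
            return "Deny"  # explicit deny always wins
        decision = "Allow"  # explicit allow overrides the default deny
    return decision


# Hypothetical policy: read access to a bucket, except under secret/
policy = [
    {"Effect": "Allow", "Action": "s3:Get*",
     "Resource": "arn:aws:s3:::example-bucket/*"},
    {"Effect": "Deny", "Action": "s3:*",
     "Resource": "arn:aws:s3:::example-bucket/secret/*"},
]

print(evaluate(policy, "s3:GetObject", "arn:aws:s3:::example-bucket/a.txt"))
print(evaluate(policy, "s3:GetObject", "arn:aws:s3:::example-bucket/secret/x"))
print(evaluate(policy, "s3:PutObject", "arn:aws:s3:::example-bucket/a.txt"))
```

The three requests show the three outcomes worth remembering for the exam: allowed by an explicit allow, blocked by an explicit deny, and blocked simply because nothing allows it.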

ELB

- Pre-Warming

- Connection Draining

- Client-Side SSL certificates

- Server Order Preference

- Cross-Zone

- SSL termination

- ELB HTTPS listener does not support Client-Side SSL certificates

- autoscaling

- Scheduled scaling actions cannot overlap

- choose greatest impact when Multiple Policies

- cooldown period

- Termination Policy

RDS

- backup

- preferred backup window

- backup retention period

- I/O suspension for single

- Point-In-Time Recovery

- snapshot

- DB Snapshots make entire DB instance

- from one region to another region,a copy retain in that region

- Because KMS encryption keys are specific to the region that they are created in, encrypted snapshot cannot be copied to another region

- DB Snapshot Sharing

- DB snapshot that uses an option group with permanent or persistent options cannot be shared

- KMS key policy must first be updated by adding any accounts to share the snapshot with, before sharing an encrypted DB snapshot

- replication

- routing read queries from applications to the Read Replica

- Failover mechanism automatically changes the DNS record of the DB instance to point to the standby DB instance

- Multi-AZ deployment

- read-only traffic, use a Read Replica.

- synchronous standby replica in a different Availability Zone

- must be in same region

- Read Replica

- RDS sets up a secure communications channel between the source DB instance and the Read Replica, if that Read Replica is in a different AWS region from the DB instance

- replication link is broken, A Read Replica can be promoted to a new independent source DB

- use a tool such as HAProxy with two URLs, one for writes and one for reads

- security

- Encryption enabled at creating, can not change key later

- Once encryption is enabled, logs, snapshots, automated backups, and replicas are encrypted

- Cross region replicas and snapshots copy does not work since the key is only available in a single region

- Database security groups default to a “deny all” access mode

- monitor

- 16 monitored metrics, e.g. ReplicaLag

- Backup not notify for snapshot

- maintenance

- Multi-AZ deployment: perform maintenance on the standby, promote the standby, then perform maintenance on the old primary

- RDS takes two DB snapshots , before upgrade, after upgrade

DynamoDB

- synchronously replicates data across three AZ’s in a region

- durability with shared data

- security & permission

- IAM Role that allows write access to the DynamoDB; launch an EC2 instance with the IAM Role included

- Secondary Indexes

- global: an index with a hash and range key that can be different from those on the table

ElastiCache

- ElastiCache currently allows access only from the EC2 network

- Memcached does not support Multi-AZ

- REDIS replica read can not across regions

- Redis Replication Groups, max 5 replica

- Redis Multi-AZ with Automatic Failover: promotes one replica to primary; with failover disabled, creates a new instance and syncs it with an existing replica

redshift

- Single vs Multi-Node Cluster

- from 1-128 compute nodes

- cluster can be restored from snapshot in same region

route53

- Simple Routing

- Weighted Routing

- Latency-based Routing

- Failover Routing

- Geolocation Routing

storage gateway

- gateway-cached volume

- Gateway Stored volumes

EC2

- placement group

- Amazon Instance Store/EBS-backed instance

- security

- EC2 Key Pairs

- Security Groups

- Connection Tracking

- IAM Role

- tags

- billing Allocation report

- Restriction

- Maximum tags 10

- Maximum key length – 128 Unicode characters in UTF-8

- Maximum value length – 256 Unicode characters in UTF-8

- show

- keyName = value1|value2|value3 or keyName = key1|value1;key2|value2
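The tag restrictions listed above lend themselves to a small validation helper. This sketch only encodes the three limits quoted in the list (10 tags, 128-character keys, 256-character values); the function name and messages are made up for illustration, and current AWS limits may differ from the ones noted here.

```python
MAX_TAGS = 10        # maximum number of tags per resource, as listed above
MAX_KEY_LEN = 128    # Unicode characters
MAX_VALUE_LEN = 256  # Unicode characters


def validate_tags(tags):
    """Return a list of problems; an empty list means the tags fit the limits."""
    problems = []
    if len(tags) > MAX_TAGS:
        problems.append(f"too many tags: {len(tags)} > {MAX_TAGS}")
    for key, value in tags.items():
        # len() counts Unicode code points, which matches "Unicode characters"
        if len(key) > MAX_KEY_LEN:
            problems.append(f"key too long: {key[:20]}...")
        if len(value) > MAX_VALUE_LEN:
            problems.append(f"value too long for key {key!r}")
    return problems


print(validate_tags({"env": "prod", "owner": "team-a"}))
print(validate_tags({"notes": "x" * 300}))
```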

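Question Bank

This is the section with Chinese characteristics and everyone's favorite: recalled exam questions and the question bank.

Disclaimer:

These questions were collected from various public websites. To make review easier, I have collated, proofread, and reformatted them. If there are any copyright concerns, please contact me and I will remove them.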

You have a video transcoding application running on Amazon EC2. Each instance polls a queue to find out which video should be transcoded, and then runs a transcoding process. If this process is interrupted, the video will be transcoded by another instance based on the queuing system. You have a large backlog of videos which need to be transcoded and would like to reduce this backlog by adding more instances. You will need these instances only until the backlog is reduced. Which type of Amazon EC2 instances should you use to reduce the backlog in the most cost efficient way?

- A. Reserved instances

- B. Spot instances

- C. Dedicated instances

- D. On-demand instances

Answer: B

A customer has established an AWS Direct Connect connection to AWS. The link is up and routes are being advertised from the customer’s end, however the customer is unable to connect from EC2 instances inside its VPC to servers residing in its datacenter.

Which of the following options provide a viable solution to remedy this situation? (Choose 2 answers)

- A. Add a route to the route table with an IPsec VPN connection as the target.

- B. Enable route propagation to the virtual private gateway (VGW).

- C. Enable route propagation to the customer gateway (CGW).

- D. Modify the route table of all instances using the 'route' command.

- E. Modify the instances' VPC subnet route table by adding a route back to the customer's on-premises environment.

Answer: B,E

An international company has deployed a multi-tier web application that relies on DynamoDB in a single region. For regulatory reasons they need disaster recovery capability in a separate region with a Recovery Time Objective of 2 hours and a Recovery Point Objective of 24 hours. They should synchronize their data on a regular basis and be able to provision the web application rapidly using CloudFormation.

The objective is to minimize changes to the existing web application, control the throughput of DynamoDB used for the synchronization of data, and synchronize only the modified elements.

Which design would you choose to meet these requirements?

- A. Use AWS Data Pipeline to schedule a DynamoDB cross-region copy once a day. Create a 'LastUpdated' attribute in your DynamoDB table that would represent the timestamp of the last update and use it as a filter.

- B. Use EMR and write a custom script to retrieve data from DynamoDB in the current region using a SCAN operation and push it to DynamoDB in the second region.

- C. Use AWS Data Pipeline to schedule an export of the DynamoDB table to S3 in the current region once a day, then schedule another task immediately after it that will import data from S3 to DynamoDB in the other region.

- D. Also send each write into an SQS queue in the second region; use an Auto Scaling group behind the SQS queue to replay the write in the second region.

Answer: C

You deployed your company website using Elastic Beanstalk and you enabled log file rotation to S3. An Elastic MapReduce job is periodically analyzing the logs on S3 to build a usage dashboard that you share with your CIO. You recently improved overall performance of the website using CloudFront for dynamic content delivery and your website as the origin.

After this architectural change, the usage dashboard shows that the traffic on your website dropped by an order of magnitude. How do you fix your usage dashboard?

- A. Enable CloudFront to deliver access logs to S3 and use them as input of the Elastic MapReduce job.

- B. Turn on CloudTrail and use trail log files on S3 as input of the Elastic MapReduce job.

- C. Change your log collection process to use CloudWatch ELB metrics as input of the Elastic MapReduce job.

- D. Use the Elastic Beanstalk "Rebuild Environment" option to update log delivery to the Elastic MapReduce job.

- E. Use the Elastic Beanstalk "Restart App server(s)" option to update log delivery to the Elastic MapReduce job.

Answer: A

If you’re unable to connect via SSH to your EC2 instance, which of the following should you check and possibly correct to restore connectivity?

- A. Adjust Security Group to permit egress traffic over TCP port 443 from your IP.

- B. Configure the IAM role to permit changes to security group settings.

- C. Modify the instance security group to allow ingress of ICMP packets from your IP.

- D. Adjust the instance’s Security Group to permit ingress traffic over port 22 from your IP.

- E. Apply the most recently released Operating System security patches.

Answer: D

Your company produces customer-commissioned, one-of-a-kind skiing helmets combining high fashion with custom technical enhancements. Customers can show off their individuality on the ski slopes and have access to head-up displays, GPS, rear-view cams, and any other technical innovation they wish to embed in the helmet.

The current manufacturing process is data rich and complex, including assessments to ensure that the custom electronics and materials used to assemble the helmets are to the highest standards. Assessments are a mixture of human and automated assessments. You need to add a new set of assessments to model the failure modes of the custom electronics using GPUs with CUDA across a cluster of servers with low-latency networking.

What architecture would allow you to automate the existing process using a hybrid approach and ensure that the architecture can support the evolution of processes over time?

- A. Use AWS Data Pipeline to manage movement of data & metadata and assessments. Use an Auto Scaling group of G2 instances in a placement group.

- B. Use Amazon Simple Workflow (SWF) to manage assessments and movement of data & metadata. Use an Auto Scaling group of G2 instances in a placement group.

- C. Use Amazon Simple Workflow (SWF) to manage assessments and movement of data & metadata. Use an Auto Scaling group of C3 instances with SR-IOV (Single Root I/O Virtualization).

- D. Use AWS Data Pipeline to manage movement of data & metadata and assessments. Use an Auto Scaling group of C3 instances with SR-IOV (Single Root I/O Virtualization).

Answer: B (SR-IOV is a method of device virtualization that provides higher I/O performance and lower CPU utilization when compared to traditional virtualized network interfaces)

Your startup wants to implement an order fulfillment process for selling a personalized gadget that needs an average of 3-4 days to produce, with some orders taking up to 6 months. You expect 10 orders per day on your first day, 1,000 orders per day after 6 months, and 10,000 orders after 12 months.

Orders coming in are checked for consistency, then dispatched to your manufacturing plant for production, quality control, packaging, shipment, and payment processing. If the product does not meet the quality standards at any stage of the process, employees may force the process to repeat a step. Customers are notified via email about order status and any critical issues with their orders, such as payment failure.

Your base architecture includes AWS Elastic Beanstalk for your website with an RDS MySQL instance for customer data and orders.

How can you implement the order fulfillment process while making sure that the emails are delivered reliably?

- A. Add a business process management application to your Elastic Beanstalk app servers and re-use the RDS database for tracking order status. Use one of the Elastic Beanstalk instances to send emails to customers.

- B. Use SWF with an Auto Scaling group of activity workers and a decider instance in another Auto Scaling group with min/max=1. Use the decider instance to send emails to customers.

- C. Use SWF with an Auto Scaling group of activity workers and a decider instance in another Auto Scaling group with min/max=1. Use SES to send emails to customers.

- D. Use an SQS queue to manage all process tasks. Use an Auto Scaling group of EC2 instances that poll the tasks and execute them. Use SES to send emails to customers.

Answer: C

Will my standby RDS instance be in the same Region as my primary?

- A Only for Oracle RDS types

- B Yes

- C Only if configured at launch

- D No

Answer: B

Out of the striping options available for the EBS volumes, which one has the following disadvantage: 'Doubles the amount of I/O required from the instance to EBS compared to RAID 0, because you're mirroring all writes to a pair of volumes, limiting how much you can stripe.'?

- A Raid 0

- B RAID 1+0 (RAID 10)

- C Raid 1

- D Raid 2

Answer: B

Can Amazon S3 uploads resume on failure or do they need to restart?

- A Restart from beginning

- B You can resume them, if you flag the “resume on failure” option before uploading.

- C Resume on failure

- D Depends on the file size

Answer: A

What is the maximum write throughput I can provision for a single DynamoDB table?

- A 1,000 write capacity units

- B 100,000 write capacity units

- C DynamoDB is designed to scale without limits, but if you go beyond 10,000 you have to contact AWS first.

- D 10,000 write capacity units

Answer: D

Is Federated Storage Engine currently supported by Amazon RDS for MySQL?

- A Only for Oracle RDS instances

- B No

- C Yes

- D Only in VPC

Answer: B

You must increase storage size in increments of at least _ %

- A 40

- B 30

- C 10

- D 20

Answer: C

HTTP Query-based requests are HTTP requests that use the HTTP verb GET or POST and a Query parameter named_____.

- A Action

- B Value

- C Reset

- D Retrieve

Answer: A
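To make the `Action` parameter concrete, here is a minimal sketch of how a Query-style request URL is assembled. The endpoint, the `DescribeInstances` action, and the `Version` value are example inputs, and the helper name is hypothetical; a real request would also have to be signed (for instance with Signature Version 4), which is deliberately left out here.

```python
from urllib.parse import urlencode


def build_query_url(endpoint, action, **params):
    """Build an unsigned Query-style URL; Action names the API operation."""
    query = {"Action": action, **params}
    return f"{endpoint}/?{urlencode(query)}"


# Example values only; this unsigned URL would be rejected by the real service.
url = build_query_url(
    "https://ec2.amazonaws.com",
    "DescribeInstances",
    Version="2016-11-15",
)
print(url)
```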

You have an application running in us-west-2 that requires six Amazon Elastic Compute Cloud (EC2) instances running at all times. With three Availability Zones available in that region (us-west-2a, us-west-2b, and us-west-2c), which of the following deployments provides 100 percent fault tolerance if any single Availability Zone in us-west-2 becomes unavailable? (Choose 2 answers)

- A. Us-west-2a with two EC2 instances, us-west-2b with two EC2 instances, and us-west-2c with two EC2 instances

- B. Us-west-2a with three EC2 instances, us-west-2b with three EC2 instances, and us-west-2c with no EC2 instances

- C. Us-west-2a with four EC2 instances, us-west-2b with two EC2 instances, and us-west-2c with two EC2 instances

- D. Us-west-2a with six EC2 instances, us-west-2b with six EC2 instances, and us-west-2c with no EC2 instances

- E. Us-west-2a with three EC2 instances, us-west-2b with three EC2 instances, and us-west-2c with three EC2 instances

Answer: D, E
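The reasoning behind this answer can be checked mechanically: a deployment is fault tolerant only if six instances remain after removing whichever single Availability Zone holds the most. A minimal sketch, with the instance counts copied from options A to E (the function name is illustrative):

```python
REQUIRED = 6  # instances that must keep running at all times

# Instance counts per AZ (us-west-2a, us-west-2b, us-west-2c) for each option
deployments = {
    "A": (2, 2, 2),
    "B": (3, 3, 0),
    "C": (4, 2, 2),
    "D": (6, 6, 0),
    "E": (3, 3, 3),
}


def survives_single_az_loss(counts, required=REQUIRED):
    """True if losing any one AZ still leaves at least `required` instances."""
    total = sum(counts)
    return all(total - lost >= required for lost in counts)


tolerant = [name for name, counts in deployments.items()
            if survives_single_az_loss(counts)]
print(tolerant)
```

Option C is the usual trap: it has eight instances in total, but losing us-west-2a leaves only four.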

You have a business-critical two-tier web app currently deployed in two Availability Zones in a single region, using Elastic Load Balancing and Auto Scaling. The app depends on synchronous replication (very low latency connectivity) at the database layer. The application needs to remain fully available even if one application Availability Zone goes off-line, and Auto Scaling cannot launch new instances in the remaining Availability Zones. How can the current architecture be enhanced to ensure this?

- A. Deploy in three Availability Zones, with Auto Scaling minimum set to handle 33 percent peak load per zone.

- B. Deploy in three Availability Zones, with Auto Scaling minimum set to handle 50 percent peak load per zone.

- C. Deploy in two regions using Weighted Round Robin (WRR), with Auto Scaling minimums set for 50 percent peak load per Region.

- D. Deploy in two regions using Weighted Round Robin (WRR), with Auto Scaling minimums set for 100 percent peak load per region.

Answer: B

Amazon Glacier is designed for: (Choose 2 answers)

- A. Frequently accessed data

- B. Active database storage

- C. Infrequently accessed data

- D. Cached session data

- E. Data archives

Answer: C, E

You receive a Spot Instance at a bid of $0.05/hr. After 30 minutes, the Spot Price increases to $0.06/hr and your Spot Instance is terminated by AWS. What was the total EC2 compute cost of running your Spot Instance?

You receive a Spot Instance at a bid of $0.03/hr. After 30 minutes, the Spot Price increases to $0.05/hr and your Spot Instance is terminated by AWS. What was the total EC2 compute cost of running your Spot Instance?

- A. $0.00

- B. $0.02

- C. $0.03

- D. $0.05

- E. $0.06

Answer: A

You have been tasked with creating a VPC network topology for your company. The VPC network must support both Internet-facing applications and internally-facing applications accessed only over VPN. Both Internet-facing and internally-facing applications must be able to leverage at least three AZs for high availability. At a minimum, how many subnets must you create within your VPC to accommodate these requirements?

- A. 2

- B. 3

- C. 4

- D. 6

Answer: D

Your customer wishes to deploy an enterprise application to AWS which will consist of several web servers, several application servers, and a small (50GB) Oracle database. Information is stored both in the database and in the file systems of the various servers. The backup system must support database recovery, whole server and whole disk restores, and individual file restores, with a recovery time of no more than two hours. They have chosen to use RDS Oracle as the database. Which backup architecture will meet these requirements?

- A. Backup RDS using automated daily DB backups. Backup the EC2 instances using AMIs and supplement with file-level backup to S3 using traditional enterprise backup software to provide file-level restore.

- B. Backup RDS using a Multi-AZ Deployment. Backup the EC2 instances using AMIs, and supplement by copying file system data to S3 to provide file-level restore.

- C. Backup RDS using automated daily DB backups. Backup the EC2 instances using EBS snapshots and supplement with file-level backups to Amazon Glacier using traditional enterprise backup software to provide file-level restore.

- D. Backup RDS database to S3 using Oracle RMAN. Backup the EC2 instances using AMIs, and supplement with EBS snapshots for individual volume restore.

Answer: B

You have a content management system running on an Amazon EC2 instance that is approaching 100% CPU utilization. Which option will reduce load on the Amazon EC2 instance?

- A. Create a load balancer, and register the Amazon EC2 instance with it

- B. Create a CloudFront distribution, and configure the Amazon EC2 instance as the origin

- C. Create an Auto Scaling group from the instance using the CreateAutoScalingGroup action

- D. Create a launch configuration from the instance using the CreateLaunchConfiguration action

Answer: A

With which AWS service can HSM be used?

- A. S3

- B. EBS

- C. Redshift

- D. DynamoDB

Answer: C

Company B is launching a new game app for mobile devices. Users will log into the game using their existing social media account to streamline data capture. Company B would like to directly save player data and scoring information from the mobile app to a DynamoDB table named Score Data. When a user saves their game, the progress data will be stored to the Game State S3 bucket. What is the best approach for storing data to DynamoDB and S3?

- A. Use an EC2 instance that is launched with an EC2 role providing access to the Score Data DynamoDB table and the Game State S3 bucket that communicates with the mobile app via web services.

- B. Use temporary security credentials that assume a role providing access to the Score Data DynamoDB table and the Game State S3 bucket using web identity federation.

- C. Use Login with Amazon allowing users to sign in with an Amazon account providing the mobile app with access to the Score Data DynamoDB table and the Game State S3 bucket.

- D. Use an IAM user with access credentials assigned a role providing access to the Score Data DynamoDB table and the Game State S3 bucket for distribution with the mobile app.

Answer: B

An instance running a webserver is launched in a VPC subnet. A security group and a NACL are configured to allow inbound port 80. What should be done to make web server accessible by everyone?

- A. Outbound Port 80 rule should be enabled on security group

- B. Outbound Ports 49152-65535 should be enabled on NACL

- C. Outbound Port 80 rule should be enabled on both security group and NACL

- D. All ports both inbound and outbound should be enabled on security group and NACL

Answer: B
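The answer hinges on security groups being stateful while network ACLs are stateless: the web server's reply leaves from port 80 but is addressed to the client's ephemeral port, so the NACL needs an outbound rule covering that range. The toy model below illustrates this; the rule representation and function names are invented for the sketch, and the 49152-65535 range is the one given in the question (real ephemeral ranges vary by client operating system).

```python
def nacl_allows(rules, port):
    """Stateless check: a port passes only if some (low, high) rule covers it."""
    return any(low <= port <= high for low, high in rules)


def reply_reaches_client(nacl_outbound_rules, client_port):
    """Can the web server's reply get back to the client?

    The security group is stateful, so return traffic for an allowed inbound
    request passes automatically; only the NACL outbound rules are checked.
    """
    return nacl_allows(nacl_outbound_rules, client_port)


client_port = 50000  # example ephemeral port chosen by the client's OS

print(reply_reaches_client([(80, 80)], client_port))        # outbound 80 only
print(reply_reaches_client([(49152, 65535)], client_port))  # ephemeral range
```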

What happens to data on ephemeral volume of an EBS-backed instance if instance is stopped and started?

- A. Data persists

- B. Data is deleted

- C. Volume snapshot is saved in S3

- D. Data is automatically copied to another volume

Answer: B

You’re creating a forum DynamoDB database for hosting forums. Your “thread” table contains the forum name and each “forum name” can have one or more “subjects”. What primary key type would you give the thread table in order to allow more than one subject to be tied to the forum primary key name?

- A. Hash

- B. Primary and range

- C. Range and Hash

- D. Hash and Range

Answer: D

ref:https://forums.aws.amazon.com/thread.jspa?messageID=331668
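To see why a hash-and-range key fits this case, here is a tiny in-memory stand-in for the thread table. It is not DynamoDB code: a plain dictionary keyed by the pair (forum name, subject) plays the role of the composite primary key, and the helper names and sample rows are made up for illustration.

```python
# In-memory stand-in for the "thread" table: (hash key, range key) -> attributes
thread_table = {}


def put_thread(forum_name, subject, **attributes):
    """Insert or overwrite the item identified by (forum_name, subject)."""
    thread_table[(forum_name, subject)] = attributes


def query_forum(forum_name):
    """Return all subjects stored under one hash key, in sorted order."""
    return sorted(subject for (forum, subject) in thread_table
                  if forum == forum_name)


put_thread("AWS", "S3 lifecycle rules", replies=3)
put_thread("AWS", "VPC peering", replies=1)
put_thread("Python", "asyncio basics", replies=7)

print(query_forum("AWS"))
```

With a hash-only key on the forum name, the second `put_thread("AWS", ...)` would have overwritten the first; the range key is what lets one forum hold many subjects.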

In the basic monitoring package for EC2, Amazon CloudWatch provides the following metrics:

- A. web server visible metrics such as number failed transaction requests

- B. operating system visible metrics such as memory utilization

- C. database visible metrics such as number of connections

- D. hypervisor visible metrics such as CPU utilization

Answer: D.

Which is an operational process performed by AWS for data security?

- A. AES-256 encryption of data stored on any shared storage device

- B. Decommissioning of storage devices using industry-standard practices

- C. Background virus scans of EBS volumes and EBS snapshots

- D. Replication of data across multiple AWS Regions

- E. Secure wiping of EBS data when an EBS volume is un-mounted

Answer: B.

Select the correct set of options. These are the initial settings for the default security group:

- A. Allow no inbound traffic, Allow all outbound traffic and Allow instances associated with this security group to talk to each other.

- B. Allow all inbound traffic, Allow no outbound traffic and Allow instances associated with this security group to talk to each other.

- C. Allow no inbound traffic, Allow all outbound traffic and Does NOT allow instances associated with this security group to talk to each other.

- D. Allow all inbound traffic, Allow all outbound traffic and Does NOT allow instances associated with this security group to talk to each other.

Answer: A

An IAM user is trying to perform an action on an object belonging to some other root account’s bucket. Which of the below mentioned options will AWS S3 not verify?

- A. Permission provided by the parent of the IAM user on the bucket

- B. The object owner has provided access to the IAM user

- C. Permission provided by the parent of the IAM user

- D. Permission provided by the bucket owner to the IAM user

Answer: C. If the IAM user is trying to perform some action on an object belonging to another AWS user's bucket, S3 will verify whether the owner of the IAM user has given sufficient permission to him. It also verifies the policy for the bucket as well as the policy defined by the object owner.

Placement groups enable applications to participate in a low-latency, 10 Gbps network. Which of the statements below is false?

- A. Not all instance types can be launched into a placement group.

- B. A placement group can't span multiple Availability Zones.

- C. You can move an existing instance into a placement group by specifying the placement group parameter.

- D. A placement group can span peered VPCs.

Answer: D

Which of the following is false about the AWS SLA?

- A. S3 availability is guaranteed at 99.95%.

- B. EBS availability is guaranteed at 99.95%.

- C. EC2 availability is guaranteed at 99.95%.

- D. RDS Multi-AZ is guaranteed at 99.95%.

Answer: A. S3 availability is 99.9%.

You have assigned one Elastic IP to your EC2 instance. Now we need to restart the VM without EIP changed. Which of below you should not do?

- A. Reboot and stop/start both works.

- B. Reboot the instance.

- C. When the instance is in VPC public subnets, stop/start works.

- D. When the instance is in VPC private subnet, stop/start works.

Answer: A

About the charge of Elastic IP Address, which of the following is true?

- A. You can have one Elastic IP (EIP) address associated with a running instance at no charge.

- B. You are charged for each Elastic IP address.

- C. You can have 5 Elastic IP addresses per region with no charge.

- D. Elastic IP addresses can always be used with no charge.

Answer: B [ref](http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/elastic-ip-addresses-eip.html)

EC2 role

- A. Launch an instance with an AWS Identity and Access Management (IAM) role to restrict AWS API access for the instance.

- B. Pass AWS access credentials in the User Data field when the instance is launched.

- C. Set up an IAM group with restricted AWS API access and put the instance in the group at launch.

- D. Set up an IAM user for the instance to restrict access to the AWS API and assign it at launch.

Answer: A ref

Which CLI tools does AWS provide?

- A. AWS CLI.

- B. Amazon EC2 CLI.

- C. All three.

- D. AWS Tools for Windows PowerShell.

Answer: C

All three are provided

Which of the steps below will not be performed while creating an instance store-backed AMI?

- A. Define the AMI launch permissions.

- B. Upload the bundled volume.

- C. Register the AMI.

- D. Bundle the volume.

Answer: A ref

The user just started an instance at 3 PM. Between 3 PM to 5 PM, he stopped and started the instance twice. During the same period, he has run the linux reboot command by ssh once and triggered reboot from AWS console once. For how many instance hours will AWS charge this user?

- A. 4

- B. 3

- C. 2

- D. 5

Answer: B ref

Each time you start a stopped instance we charge a full instance hour, even if you make this transition multiple times within a single hour.

Rebooting an instance doesn’t start a new instance billing hour, unlike stopping and restarting your instance.
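
The count can be checked with a small sketch of the hourly billing rule quoted above. The function name and event labels are illustrative assumptions, not an AWS API, and the sketch assumes each run between a start and the next stop lasts less than one hour.

```python
def billed_instance_hours(events):
    """Count billed hours under the rule quoted above.

    Every launch or start opens a new billed instance hour;
    reboots and stops never do. Assumes each run is under one hour.
    """
    return sum(1 for event in events if event in ("launch", "start"))


# 3 PM launch, two stop/start cycles, one ssh reboot and one console reboot
events = ["launch", "stop", "start", "reboot", "stop", "start", "reboot"]
print(billed_instance_hours(events))  # 3
```

The initial launch plus two restarts give three billed hours; the two reboots add nothing.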

Amazon Redshift is what type of data warehouse service?

- A. Gigabyte-scale

- B. Exabyte-scale

- C. Petabyte-scale

- D. Terabyte-scale

Answer: C Amazon Redshift is a fully-managed, petabyte-scale data warehouse service.

What does MPP stand for when referring to the type of architecture Redshift has?

- A. massively parallel processing

- B. massive protection policy

- C. massively parallel policy

- D. massive protection processing

Answer: A Redshift has a massively parallel processing architecture that parallelizes and distributes SQL operations to take advantage of available resources.

Redshift can provide fast query performance by leveraging _ storage approaches and technology.

- A. key-value

- B. database

- C. row

- D. columnar

Answer: D Redshift can provide fast query performance by leveraging columnar storage approaches and technology, much of which is taken from enterprise database technology.

Amazon’s Redshift data warehouse allows enterprise IT pros to execute _ against _ data sets.

- A. simple SQL queries / small

- B. complex SQL queries / large

- C. simple SQL queries / large

- D. complex SQL queries / small

Answer: B Amazon’s Redshift data warehouse allows enterprise IT pros to execute complex SQL queries against large data sets.

Redshift was designed to alleviate the frustrating, time-consuming challenges database clusters have imposed on _ administrators?

- A. system

- B. database

- C. certified

- D. privilege

Answer: B Redshift was designed to alleviate the frustrating, time-consuming challenges database clusters have imposed on database administrators.

True or False: Amazon Redshift is adept at handling data analysis workflows.

- A. True

- B. False

Answer: A There currently are two Amazon data warehouse services adept at handling data analysis workflows: Amazon Redshift and Amazon Relational Database Service.

Adding nodes to a Redshift cluster provides _ performance improvements.

- A. linear

- B. non-linear

- C. both

- D. neither

Answer: C Adding nodes to a Redshift cluster provides linear or near-linear performance improvements.

The preferred way to load data into Redshift is through __ using the COPY command.

- A. Remote hosts

- B. Simple Storage Service

- C. Elastic MapReduce

- D. All of the above

Answer: D The preferred way to load data into Redshift is through remote hosts, Simple Storage Service or Elastic MapReduce using the COPY command. The COPY command executes loads in parallel and has the option to compress data during the load process.
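
As a rough illustration of what a COPY load from S3 looks like, the sketch below only assembles the SQL text; it does not connect to a cluster. The table name, S3 prefix, and role ARN are hypothetical placeholders, and the helper function is an assumption made for this example.

```python
def build_copy_statement(table, s3_prefix, iam_role_arn, gzip=False):
    """Assemble a Redshift COPY statement that loads from an S3 prefix.

    All arguments are placeholders supplied by the caller; nothing is
    executed here, the function only returns the SQL string.
    """
    parts = [
        f"COPY {table}",
        f"FROM '{s3_prefix}'",
        f"IAM_ROLE '{iam_role_arn}'",
    ]
    if gzip:
        # Tell COPY that the input files are gzip-compressed
        parts.append("GZIP")
    return " ".join(parts) + ";"


sql = build_copy_statement(
    "sales",                                        # hypothetical table
    "s3://example-bucket/sales/",                   # hypothetical prefix
    "arn:aws:iam::111122223333:role/ExampleRole",   # hypothetical role
    gzip=True,
)
print(sql)
```

Splitting the input into several files under one prefix lets COPY load them in parallel, which is the behavior the answer above refers to.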

Amazon Redshift has how many pricing components?

- A. 4

- B. 3

- C. 2

- D. 5

Answer: B (Amazon Redshift has three pricing components: data warehouse node hours, backup storage and data transfer.)

What type of API provides a management interface to manage data warehouse clusters programmatically?

- A. Query

- B. REST

- C. Management

- D. SOAP

Answer: A The Amazon Redshift Query API provides a management interface to manage data warehouse clusters programmatically.

Amazon Web Services falls into which cloud-computing category?

- A. Software as a Service (SaaS)

- B. Platform as a Service (PaaS)

- C. Infrastructure as a Service (IaaS)

- D. Back-end as a Service (BaaS)

Answer: C

Amazon Elastic Compute Cloud (Amazon EC2) does which of the following?

- A. Provides customers with an isolated section of the AWS cloud where they can launch AWS resources in a virtual network that they define.

- B. Provides resizable computing capacity in the cloud.

- C. Provide a simple web services interface that customers can use to store and retrieve any amount of data from anywhere on the Web.

- D. Provides a web service allowing customers to easily set up, operate and scale relational databases in the cloud.

Answer: B (AWS describes Amazon EC2 as a web service that provides resizable computing capacity in the cloud, allowing customers “to quickly scale capacity, both up and down, as your computing requirements change.”)

Amazon Glacier is a storage service allowing customers to store data for as little as:

- A. 1 cent per gigabyte (GB) per month

- B. 10 cents per GB per month

- C. 20 cents per GB per month

- D. 50 cents per GB per month

Answer: A

Amazon Elastic Beanstalk automates the details of which of the following functions?

- A. Capacity provisioning

- B. Load balancing

- C. Auto-scaling

- D. Application deployment

- E. All of the above

Answer: E (According to AWS, Amazon Elastic Beanstalk offers capacity provisioning, load balancing, auto-scaling and application deployment. )

All AWS IaaS services are pay-as-you-go.

- A. True

- B. False

Answer: A

Amazon S3 is which type of storage service?

- A. Object

- B. Block

- C. Simple

- D. Secure

Answer: A ( Object storage is more scalable than traditional file system storage, which is typically what users think about when comparing storage to databases for data persistence.)

Which AWS storage service assists S3 with transferring data?

- A. CloudFront

- B. AWS Import/Export

- C. DynamoDB

- D. ElastiCache

Answer: B (AWS Import/Export accelerates moving large amounts of data into and out of AWS using portable storage devices. AWS transfers your data directly onto and off of storage devices by using Amazon’s internal network and avoiding the Internet.)

Object storage systems store files in a flat organization of containers called what?

- A. Baskets

- B. Brackets

- C. Clusters

- D. Buckets

Answer: D ( Instead of organizing files in a directory hierarchy, object storage systems store files in a flat organization of containers known as buckets in Amazon S3.)

Amazon S3 offers encryption services for which types of data?

- A. data in flight

- B. data at relax

- C. data at rest

- D. data in motion

- E. a and c

- F. b and d

Answer: E Amazon offers encryption services for data in flight and data at rest.

Amazon S3 has how many pricing components?

- A. 4

- B. 5

- C. 3

- D. 2

Answer: C Amazon S3 offers three pricing options. Storage (per GB per month), data transfer in or out (per GB per month), and requests (per x thousand requests per month).

What does RRS stand for when referring to the storage option in Amazon S3 that offers a lower level of durability at a lower storage cost?

- A. Reduced Reaction Storage

- B. Redundant Research Storage

- C. Regulatory Resources Storage

- D. Reduced Redundancy Storage

Answer: D (Non-critical data, such as transcoded media or image thumbnails, can be easily reproduced using the Reduced Redundancy Storage option. Objects stored using the RRS option have less redundancy than objects stored using standard Amazon S3 storage.)

Object storage systems require less _ than file systems to store and access files.

- A. Big data

- B. Metadata

- C. Master data

- D. Exif data

Answer: B (Object storage systems are typically more efficient because they reduce the overhead of managing file metadata by storing the metadata with the object. This means object storage can be scaled out almost endlessly by adding nodes.)

True or False. S3 objects are only accessible from the region they were created in.

- A. True

- B. False

Answer: B While S3 objects are created in a specific region, they can be accessed from anywhere.

Amazon S3 offers developers which combination?

- A. High scalability and low latency data storage infrastructure at low costs.

- B. Low scalability and high latency data storage infrastructure at high costs.

- C. High scalability and low latency data storage infrastructure at high costs.

- D. Low scalability and high latency data storage infrastructure at low costs.

Answer: A ( Amazon S3 offers software developers a reliable, highly scalable and low-latency data storage infrastructure at very low costs. S3 provides an interface that can be used to store and retrieve any amount of data from anywhere on the Web.)

Why is a bucket policy necessary?

- A. To allow bucket access to multiple users.

- B. To grant or deny accounts to read and upload files in your bucket.

- C. To approve or deny users the option to add or remove buckets.

- D. All of the above

Answer: B Users need a bucket policy to grant or deny accounts to read and upload files in your bucket.
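
To make the answer concrete, here is a minimal sketch of a bucket policy that lets another account read and upload objects. The bucket name and the 12-digit account ID are hypothetical placeholders, and the helper function exists only for this example.

```python
import json


def cross_account_bucket_policy(bucket, account_id):
    """Build a bucket policy document granting another AWS account
    permission to read (GetObject) and upload (PutObject) objects."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowReadAndUpload",
                "Effect": "Allow",
                # The grantee account; its own admins still decide
                # which of their users may actually use the grant.
                "Principal": {"AWS": f"arn:aws:iam::{account_id}:root"},
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/*",
            }
        ],
    }


policy = cross_account_bucket_policy("example-bucket", "111122223333")
print(json.dumps(policy, indent=2))
```

Changing `Effect` to `"Deny"` turns the same statement into an explicit denial for that account.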

An ERP application is deployed across multiple AZs in a single region. In the event of failure, the Recovery Time Objective (RTO) must be less than 3 hours, and the Recovery Point Objective (RPO) must be 15 minutes. The customer realizes that data corruption occurred roughly 1.5 hours ago. What DR strategy could be used to achieve this RTO and RPO in the event of this kind of failure?

- A. Take hourly DB backups to S3, with transaction logs stored in S3 every 5 minutes.

- B. Use synchronous database master-slave replication between two availability zones.

- C. Take hourly DB backups to EC2 instance store volumes with transaction logs stored in S3 every 5 minutes.

- D. Take 15 minute DB backups stored in Glacier with transaction logs stored in S3 every 5 minutes.

Answer: A
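
The reasoning behind backup-plus-log strategies can be sketched as a small calculation: restore the last full backup taken no later than the corruption, then replay transaction logs up to the last log boundary before it. The sketch assumes backups and logs land on fixed interval boundaries counted in minutes from zero; the function and field names are illustrative.

```python
def restore_plan(corruption_min, backup_interval=60, log_interval=5):
    """Pick the restore point for a point-in-time recovery.

    corruption_min: minute at which the data was corrupted.
    Assumes backups and log shipments happen exactly on interval boundaries.
    """
    # Last full backup taken no later than the corruption
    backup_at = (corruption_min // backup_interval) * backup_interval
    # Last shipped transaction log boundary no later than the corruption
    replay_to = (corruption_min // log_interval) * log_interval
    return {
        "backup_at": backup_at,
        "replay_to": replay_to,
        "lost_minutes": corruption_min - replay_to,
    }


plan = restore_plan(corruption_min=513)
print(plan)
```

With 5-minute logs the data lost before the corruption is always under 5 minutes, which fits inside a 15-minute RPO; synchronous replication, by contrast, would copy the corruption to the replica.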

You are designing a social media site and are considering how to mitigate distributed denial-of-service (DDoS) attacks. Which of the below are viable mitigation techniques? (Choose 3 answers)

- A. Add multiple elastic network interfaces (ENIs) to each EC2 instance to increase the network bandwidth.

- B. Use dedicated instances to ensure that each instance has the maximum performance possible.

- C. Use an Amazon CloudFront distribution for both static and dynamic content.

- D. Use an Elastic Load Balancer with auto scaling groups at the web, app and Amazon Relational Database Service (RDS) tiers.

- E. Add alerts to Amazon CloudWatch to look for high Network In and CPU utilization.

- F. Create processes and capabilities to quickly add and remove rules to the instance OS firewall.

Answer : B,D,F

You would like to create a mirror image of your production environment in another region for disaster recovery purposes. Which of the following AWS resources do not need to be recreated in the second region? (Choose 2 answers)

- A. Route 53 Record Sets

- B. IAM Roles

- C. Elastic IP Addresses (EIP)

- D. EC2 Key Pairs

- E. Launch configurations

- F. Security Groups

Answer : A,C

You are responsible for a legacy web application whose server environment is approaching end of life. You would like to migrate this application to AWS as quickly as possible, since the application environment currently has the following limitations:

- The VM’s single 10GB VMDK is almost full.

- The virtual network interface still uses the 10Mbps driver, which leaves your 100Mbps WAN connection completely underutilized.

- It is currently running on a highly customized Windows VM within a VMware environment.

- You do not have the installation media.

This is a mission critical application with an RTO (Recovery Time Objective) of 8 hours and an RPO (Recovery Point Objective) of 1 hour. How could you best migrate this application to AWS while meeting your business continuity requirements?

- A. Use the EC2 VM Import Connector for vCenter to import the VM into EC2.

- B. Use Import/Export to import the VM as an EBS snapshot and attach to EC2.

- C. Use S3 to create a backup of the VM and restore the data into EC2.

- D. Use the ec2-bundle-instance API to import an image of the VM into EC2.

Answer : A

You are designing Internet connectivity for your VPC. The Web servers must be available on the Internet. The application must have a highly available architecture. Which alternatives should you consider? (Choose 2 answers)

- A. Configure a NAT instance in your VPC. Create a default route via the NAT instance and associate it with all subnets. Configure a DNS A record that points to the NAT instance public IP address.

- B. Configure a CloudFront distribution and configure the origin to point to the private IP addresses of your Web servers. Configure a Route53 CNAME record to your CloudFront distribution.

- C. Place all your web servers behind ELB. Configure a Route53 CNAME to point to the ELB DNS name.

- D. Assign EIPs to all web servers. Configure a Route53 record set with all EIPs, with health checks and DNS failover.

- E. Configure ELB with an EIP. Place all your Web servers behind ELB. Configure a Route53 A record that points to the EIP.

Answer : B,C

Your company has recently extended its datacenter into a VPC on AWS to add burst computing capacity as needed. Members of your Network Operations Center need to be able to go to the AWS Management Console and administer Amazon EC2 instances as necessary. You don’t want to create new IAM users for each NOC member and make those users sign in again to the AWS Management Console. Which option below will meet the needs for your NOC members?

- A. Use OAuth 2.0 to retrieve temporary AWS security credentials to enable your NOC members to sign in to the AWS Management Console.

- B. Use web Identity Federation to retrieve AWS temporary security credentials to enable your NOC members to sign in to the AWS Management Console.

- C. Use your on-premises SAML 2.0-compliant identity provider (IDP) to grant the NOC members federated access to the AWS Management Console via the AWS single sign-on (SSO) endpoint.

- D. Use your on-premises SAML 2.0-compliant identity provider (IDP) to retrieve temporary security credentials to enable NOC members to sign in to the AWS Management Console.

Answer : D

You are implementing AWS Direct Connect. You intend to use AWS public service endpoints, such as Amazon S3, across the AWS Direct Connect link. You want other Internet traffic to use your existing link to an Internet Service Provider. What is the correct way to configure AWS Direct Connect for access to services such as Amazon S3?

- A. Configure a public interface on your AWS Direct Connect link. Configure a static route via your AWS Direct Connect link that points to Amazon S3. Advertise a default route to AWS using BGP.

- B. Create a private interface on your AWS Direct Connect link. Configure a static route via your AWS Direct Connect link that points to Amazon S3. Configure specific routes to your network in your VPC.

- C. Create a public interface on your AWS Direct Connect link. Redistribute BGP routes into your existing routing infrastructure and advertise specific routes for your network to AWS.

- D. Create a private interface on your AWS Direct Connect link. Redistribute BGP routes into your existing routing infrastructure and advertise a default route to AWS.

Answer : C

You have deployed a web application targeting a global audience across multiple AWS Regions under the domain name example.com. You decide to use Route53 Latency-Based Routing to serve web requests to users from the region closest to the user. To provide business continuity in the event of server downtime, you configure weighted record sets associated with two web servers in separate Availability Zones per region. During a DR test you notice that when you disable all web servers in one of the regions, Route53 does not automatically direct all users to the other region. What could be happening? (Choose 2 answers)

- A. Latency resource record sets cannot be used in combination with weighted resource record sets.

- B. You did not set up an HTTP health check for one or more of the weighted resource record sets associated with the disabled web servers.

- C. The value of the weight associated with the latency alias resource record set in the region with the disabled servers is higher than the weight for the other region.

- D. One of the two working web servers in the other region did not pass its HTTP health check.

- E. You did not set “Evaluate Target Health” to “Yes” on the latency alias resource record set associated with example.com in the region where you disabled the servers.

Answer : B,D

Your company produces customer commissioned one-of-a-kind skiing helmets combining high fashion with custom technical enhancements. Customers can show off their individuality on the ski slopes and have access to head-up displays, GPS, rear-view cams and any other technical innovation they wish to embed in the helmet. The current manufacturing process is data rich and complex, including assessments to ensure that the custom electronics and materials used to assemble the helmets are to the highest standards. Assessments are a mixture of human and automated assessments. You need to add a new set of assessments to model the failure modes of the custom electronics using GPUs with CUDA across a cluster of servers with low latency networking. What architecture would allow you to automate the existing process using a hybrid approach and ensure that the architecture can support the evolution of processes over time?

- A. Use AWS Data Pipeline to manage movement of data & meta-data and assessments. Use an auto-scaling group of G2 instances in a placement group.

- B. Use Amazon Simple Workflow (SWF) to manage assessments and movement of data & meta-data. Use an auto-scaling group of G2 instances in a placement group.

- C. Use Amazon Simple Workflow (SWF) to manage assessments and movement of data & meta-data. Use an auto-scaling group of C3 instances with SR-IOV (Single Root I/O Virtualization).

- D. Use AWS Data Pipeline to manage movement of data & meta-data and assessments. Use an auto-scaling group of C3 instances with SR-IOV (Single Root I/O Virtualization).

Answer : A

You require the ability to analyze a large amount of data, which is stored on Amazon S3, using Amazon Elastic MapReduce. You are using the cc2.8xlarge instance type, whose CPUs are mostly idle during processing. Which of the below would be the most cost efficient way to reduce the runtime of the job?

- A. Create more, smaller files on Amazon S3.

- B. Add additional cc2.8xlarge instances by introducing a task group.

- C. Use smaller instances that have higher aggregate I/O performance.

- D. Create fewer, larger files on Amazon S3.

Answer : C

You are designing a photo sharing mobile app. The application will store all pictures in a single Amazon S3 bucket. Users will upload pictures from their mobile device directly to Amazon S3 and will be able to view and download their own pictures directly from Amazon S3. You want to configure security to handle potentially millions of users in the most secure manner possible. What should your server-side application do when a new user registers on the photo-sharing mobile application?

- A. Create a set of long-term credentials using AWS Security Token Service with appropriate permissions. Store these credentials in the mobile app and use them to access Amazon S3.

- B. Record the user’s information in Amazon RDS and create a role in IAM with appropriate permissions. When the user uses their mobile app, create temporary credentials using the AWS Security Token Service ‘AssumeRole’ function. Store these credentials in the mobile app’s memory and use them to access Amazon S3. Generate new credentials the next time the user runs the mobile app.

- C. Record the user’s information in Amazon DynamoDB. When the user uses their mobile app, create temporary credentials using AWS Security Token Service with appropriate permissions. Store these credentials in the mobile app’s memory and use them to access Amazon S3. Generate new credentials the next time the user runs the mobile app.

- D. Create an IAM user. Assign appropriate permissions to the IAM user. Generate an access key and secret key for the IAM user, store them in the mobile app and use these credentials to access Amazon S3.

- E. Create an IAM user. Update the bucket policy with appropriate permissions for the IAM user. Generate an access key and secret key for the IAM user, store them in the mobile app and use these credentials to access Amazon S3.

Answer : B
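
One way to picture the "appropriate permissions" for temporary credentials is a policy scoped to a single user's objects in the shared bucket. The sketch below builds such a document; it could, for instance, be supplied as the session `Policy` when calling STS AssumeRole. The `users/<user_id>/` key layout, the bucket name, and the helper function are assumptions made for illustration.

```python
import json


def user_session_policy(bucket, user_id):
    """Build a policy limiting access to one user's key prefix.

    Assumes each user's pictures live under users/<user_id>/ in the
    single shared bucket (a hypothetical layout for this example).
    """
    prefix = f"users/{user_id}/"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}*",
            }
        ],
    }


session_policy = user_session_policy("example-photo-bucket", "user-42")
print(json.dumps(session_policy, indent=2))
```

Because the credentials are short-lived and scoped per user, nothing long-term has to be stored in the mobile app.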

A customer has a 10 GB AWS Direct Connect connection to an AWS region where they have a web application hosted on Amazon Elastic Compute Cloud (EC2). The application has dependencies on an on-premises mainframe database that uses a BASE (Basically Available, Soft state, Eventual consistency) rather than an ACID (Atomicity, Consistency, Isolation, Durability) consistency model. The application is exhibiting undesirable behavior because the database is not able to handle the volume of writes. How can you reduce the load on your on-premises database resources in the most cost-effective way?

- A. Use an Amazon Elastic Map Reduce (EMR) S3DistCp as a synchronization mechanism between the on-premises database and a Hadoop cluster on AWS.

- B. Modify the application to write to an Amazon SQS queue and develop a worker process to flush the queue to the on-premises database.

- C. Modify the application to use DynamoDB to feed an EMR cluster which uses a map function to write to the on-premises database.

- D. Provision an RDS read-replica database on AWS to handle the writes and synchronize the two databases using Data Pipeline.

Answer : A

You are the new IT architect in a company that operates a mobile sleep tracking application. When activated at night, the mobile app sends collected data points of 1 kilobyte every 5 minutes to your backend. The backend takes care of authenticating the user and writing the data points into an Amazon DynamoDB table. Every morning, you scan the table to extract and aggregate last night’s data on a per user basis, and store the results in Amazon S3. Users are notified via Amazon SNS mobile push notifications that new data is available, which is parsed and visualized by the mobile app. Currently you have around 100k users who are mostly based out of North America. You have been tasked to optimize the architecture of the backend system to lower cost. What would you recommend? (Choose 2 answers)

- A. Create a new Amazon DynamoDB table each day and drop the one for the previous day after its data is on Amazon S3.

- B. Have the mobile app access Amazon DynamoDB directly instead of JSON files stored on Amazon S3.

- C. Introduce an Amazon SQS queue to buffer writes to the Amazon DynamoDB table and reduce provisioned write throughput.

- D. Introduce Amazon ElastiCache to cache reads from the Amazon DynamoDB table and reduce provisioned read throughput.

- E. Write data directly into an Amazon Redshift cluster replacing both Amazon DynamoDB and Amazon S3.

Answer : B,D

Your company is getting ready to do a major public announcement of a social media site on AWS. The website is running on EC2 instances deployed across multiple Availability Zones with a Multi-AZ RDS MySQL Extra Large DB Instance. The site performs a high number of small reads and writes per second and relies on an eventual consistency model. After comprehensive tests you discover that there is read contention on RDS MySQL. Which are the best approaches to meet these requirements? (Choose 2 answers)

- A. Deploy ElastiCache in-memory cache running in each availability zone

- B. Implement sharding to distribute load to multiple RDS MySQL instances

- C. Increase the RDS MySQL instance size and implement provisioned IOPS

- D. Add an RDS MySQL read replica in each availability zone

Answer : A,C

You are tasked with moving a legacy application from a virtual machine running inside your datacenter to an Amazon VPC. Unfortunately this app requires access to a number of on-premises services and no one who configured the app still works for your company. Even worse, there’s no documentation for it. What will allow the application running inside the VPC to reach back and access its internal dependencies without being reconfigured? (Choose 3 answers)

- A. An AWS Direct Connect link between the VPC and the network housing the internal services.

- B. An Internet Gateway to allow a VPN connection.

- C. An Elastic IP address on the VPC instance

- D. An IP address space that does not conflict with the one on-premises

- E. Entries in Amazon Route 53 that allow the instance to resolve its dependencies’ IP addresses

- F. A VM Import of the current virtual machine

Answer : A,C,F

Your company currently has a 2-tier web application running in an on-premises data center. You have experienced several infrastructure failures in the past two months resulting in significant financial losses. Your CIO is strongly agreeing to move the application to AWS. While working on achieving buy-in from the other company executives, he asks you to develop a disaster recovery plan to help improve business continuity in the short term. He specifies a target Recovery Time Objective (RTO) of 4 hours and a Recovery Point Objective (RPO) of 1 hour or less. He also asks you to implement the solution within 2 weeks. Your database is 200GB in size and you have a 20Mbps Internet connection. How would you do this while minimizing costs?

- A. Create an EBS backed private AMI which includes a fresh install of your application. Set up a script in your data center to back up the local database every 1 hour and to encrypt and copy the resulting file to an S3 bucket using multi-part upload.

- B. Install your application on a compute-optimized EC2 instance capable of supporting the application’s average load. Synchronously replicate transactions from your on-premises database to a database instance in AWS across a secure Direct Connect connection.

- C. Deploy your application on EC2 instances within an Auto Scaling group across multiple availability zones. Asynchronously replicate transactions from your on-premises database to a database instance in AWS across a secure VPN connection.

- D. Create an EBS backed private AMI that includes a fresh install of your application. Develop a CloudFormation template which includes your AMI and the required EC2, Auto Scaling and ELB resources to support deploying the application across multiple Availability Zones. Asynchronously replicate transactions from your on-premises database to a database instance in AWS across a secure VPN connection.

Answer : A
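
When weighing the options, it helps to turn the figures in the question into a transfer time. The sketch below assumes decimal units (1 GB = 1000 MB), a fully utilized link, and no protocol overhead, so real transfers would take longer.

```python
def transfer_hours(size_gb, link_mbps):
    """Hours needed to push size_gb gigabytes over a link_mbps link.

    Assumes decimal units and 100% link utilization with no overhead.
    """
    megabits = size_gb * 1000 * 8   # GB -> MB -> megabits
    seconds = megabits / link_mbps
    return seconds / 3600


print(round(transfer_hours(200, 20), 1))  # 22.2
```

Copying the full 200GB database once takes roughly 22 hours over the 20Mbps link, which is a useful yardstick for how much data each option has to move within the RPO and RTO windows.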

Refer to the architecture diagram above of a batch processing solution using Simple Queue Service (SQS) to set up a message queue between EC2 instances which are used as batch processors. CloudWatch monitors the number of job requests (queued messages) and an Auto Scaling group adds or deletes batch servers automatically based on parameters set in CloudWatch alarms. You can use this architecture to implement which of the following features in a cost effective and efficient manner?

- A. Reduce the overall time for executing jobs through parallel processing by allowing a busy EC2 instance that receives a message to pass it to the next instance in a daisy-chain setup.

- B. Implement fault tolerance against EC2 instance failure since messages would remain in SQS and work can continue with recovery of EC2 instances. Implement fault tolerance against SQS failure by backing up messages to S3.

- C. Implement message passing between EC2 instances within a batch by exchanging messages through SQS.

- D. Coordinate number of EC2 instances with number of job requests automatically, thus improving cost effectiveness.

- E. Handle high priority jobs before lower priority jobs by assigning a priority metadata field to SQS messages.

Answer : B
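
The scaling behavior described in the question stem (more queued messages, more batch servers) can be sketched as a simple sizing rule. In a real deployment this decision is made by CloudWatch alarms and Auto Scaling policies rather than by a formula like this; the function, its parameters, and the default bounds are assumptions for illustration.

```python
import math


def desired_workers(queue_depth, msgs_per_worker, min_workers=1, max_workers=10):
    """Size the batch fleet from the number of queued job requests.

    queue_depth: messages currently waiting in the queue.
    msgs_per_worker: backlog one worker is expected to handle.
    The result is clamped to the group's min and max size.
    """
    wanted = math.ceil(queue_depth / msgs_per_worker)
    return max(min_workers, min(max_workers, wanted))


for depth in (0, 250, 5000):
    print(depth, desired_workers(depth, msgs_per_worker=100))
```

An empty queue keeps the fleet at its minimum, while a large backlog is capped by the maximum group size.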

A company is building a voting system for a popular TV show. Viewers will watch the performances, then visit the show’s website to vote for their favorite performer. It is expected that in a short period of time after the show has finished the site will receive millions of visitors. The visitors will first log in to the site using their Amazon.com credentials and then submit their vote. After the voting is completed the page will display the vote totals. The company needs to build the site such that it can handle the rapid influx of traffic while maintaining good performance, but also wants to keep costs to a minimum. Which of the design patterns below should they use?

- A. Use CloudFront and an Elastic Load Balancer in front of an auto-scaled set of web servers. The web servers will first call the Login With Amazon service to authenticate the user, then process the user’s vote and store the result into a multi-AZ Relational Database Service instance.

- B. Use CloudFront and the static website hosting feature of S3 with the Javascript SDK to call the Login With Amazon service to authenticate the user. Use IAM Roles to gain permissions to a DynamoDB table to store the user’s vote.

- C. Use CloudFront and an Elastic Load Balancer in front of an auto-scaled set of web servers. The web servers will first call the Login With Amazon service to authenticate the user. The web servers will process the user’s vote and store the result into a DynamoDB table using IAM Roles for EC2 instances to gain permissions to the DynamoDB table.

- D. Use CloudFront and an Elastic Load Balancer in front of an auto-scaled set of web servers. The web servers will first call the Login With Amazon service to authenticate the user. The web servers will process the user’s vote and store the result into an SQS queue using IAM Roles for EC2 instances to gain permissions to the SQS queue. A set of application servers will then retrieve the items from the queue and store the result into a DynamoDB table.

Answer : D

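- Compared with C, option D adds a queue that buffers the peak of user requests, while back-end servers consume the requests and write them to the database afterwards. Writing directly to DynamoDB during the peak drives its I/O up, which requires provisioning high throughput and costs more.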
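
The effect of the queue can be illustrated with a toy simulation: votes arrive in a burst, but the database writer drains the queue at a fixed, lower rate. The arrival numbers and the write capacity are made-up values, and the function is only a sketch of the buffering idea, not a model of SQS or DynamoDB.

```python
def simulate_buffer(arrivals, write_capacity):
    """Drain a burst of requests through a fixed-rate writer.

    arrivals: requests arriving in each second.
    write_capacity: requests the database writer handles per second.
    Returns (peak queue backlog, seconds until the queue is empty).
    """
    if write_capacity <= 0:
        raise ValueError("write_capacity must be positive")
    pending = list(arrivals)
    backlog = peak_backlog = seconds = 0
    while pending or backlog:
        if pending:
            backlog += pending.pop(0)              # new requests enter the queue
        backlog -= min(backlog, write_capacity)    # writer drains at a fixed rate
        peak_backlog = max(peak_backlog, backlog)
        seconds += 1
    return peak_backlog, seconds


# A 2-second burst of 1000 votes/s handled by a 400 writes/s back end
print(simulate_buffer([1000, 1000, 0, 0], write_capacity=400))  # (1200, 5)
```

Without the queue the table would need capacity for 1000 writes per second; with it, 400 writes per second is enough, at the price of votes being stored a few seconds later.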

You have a periodic Image analysis application that gets some files In Input analyzes them and tor each file writes some data in output to a ten file the number of files in input per day is high and concentrated in a few hours of the day. Currently you have a server on EC2 with a large EBS volume that hosts the input data and the results it takes almost 20 hours per day to complete the process What services could be used to reduce the elaboration time and improve the availability of the solution?

- A. S3 to store I/O files. SQS to distribute elaboration commands to a group of hosts working in parallel. Auto scaling to dynamically size the group of hosts depending on the length of the SQS queue

- B. EBS with Provisioned IOPS (PIOPS) to store I/O files. SNS to distribute elaboration commands to a group of hosts working in parallel. Auto Scaling to dynamically size the group of hosts depending on the number of SNS notifications

- C. S3 to store I/O files. SNS to distribute elaboration commands to a group of hosts working in parallel. Auto Scaling to dynamically size the group of hosts depending on the number of SNS notifications

- D. EBS with Provisioned IOPS (PIOPS) to store I/O files. SQS to distribute elaboration commands to a group of hosts working in parallel. Auto Scaling to dynamically size the group of hosts depending on the length of the SQS queue.

Answer : A
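Sizing the group of hosts from the length of the SQS queue, as options A and D describe, can be sketched as a simple calculation. This is a minimal illustration, not an Auto Scaling API call: the function name `desired_hosts`, the `messages_per_host` target, and the min/max bounds are all assumptions chosen for the example.

```python
import math


def desired_hosts(queue_length, messages_per_host, min_hosts=1, max_hosts=20):
    """Return how many worker hosts to run for a given queue backlog.

    messages_per_host is a hypothetical target backlog that one host is
    expected to handle; the result is clamped to [min_hosts, max_hosts],
    mirroring the minimum and maximum size of a scaling group.
    """
    if messages_per_host <= 0:
        raise ValueError("messages_per_host must be positive")
    needed = math.ceil(queue_length / messages_per_host)
    return max(min_hosts, min(max_hosts, needed))


print(desired_hosts(0, 50))      # 1  (never below the minimum)
print(desired_hosts(420, 50))    # 9
print(desired_hosts(5000, 50))   # 20 (capped at the maximum)
```

Because the input files are concentrated in a few hours of the day, the group grows while the backlog is long and shrinks back toward the minimum once the queue is empty.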

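Exam Recollections

These are questions that candidates recalled after taking the exam. The question stems and options are incomplete, and only the answers are given. They can serve as warm-up reading before the exam: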

If we are to host an application on a single EC2 instance, what can be done to ensure the highest IOPS?

- a. A single EBS-backed EC2 instance with provisioned IOPS

- b. An array of EBS volumes with provisioned IOPS.

- -

If an instance hosts websites on multiple virtual hosts, each with its own SSL certificate, what should be done?

- a. Upload the SSL certificates to IAM

b. Create an SSL termination at the ELB

- -

Name four things that Trusted Advisor checks …

- performance

- cost optimization

- security

- fault tolerance

- -

One of your users is trying to upload a 7.5GB file to S3 however they keep getting the following error message - “Your proposed upload exceeds the maximum allowed object size.”. What is a possible solution for this?

- The answer seems a bit odd “design your app to use…”.

Ans : multipart upload
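Why multipart upload solves this: a single PUT to S3 is limited to 5 GB, while a multipart upload splits the object into parts of 5 MiB to 5 GiB each (the last part may be smaller), with at most 10,000 parts. The sketch below only plans the part boundaries for a 7.5 GiB file. The function name `plan_parts` and the 100 MiB default part size are assumptions for illustration, and no S3 API is called.

```python
MIB = 1024 ** 2
GIB = 1024 ** 3

MIN_PART_SIZE = 5 * MIB      # minimum size of every part except the last
MAX_PART_SIZE = 5 * GIB      # maximum size of a single part
MAX_PARTS = 10_000           # maximum number of parts per upload


def plan_parts(object_size, part_size=100 * MIB):
    """Return a list of (part_number, offset, length) tuples.

    part_number starts at 1; the final part may be shorter than part_size.
    """
    if not MIN_PART_SIZE <= part_size <= MAX_PART_SIZE:
        raise ValueError("part_size must be between 5 MiB and 5 GiB")
    parts = []
    offset = 0
    while offset < object_size:
        length = min(part_size, object_size - offset)
        parts.append((len(parts) + 1, offset, length))
        offset += length
    if len(parts) > MAX_PARTS:
        raise ValueError("too many parts; choose a larger part_size")
    return parts


file_size = 15 * GIB // 2            # 7.5 GiB
parts = plan_parts(file_size)
print(len(parts))                    # 77
print(parts[-1][2] // MIB)           # 80 (the last part is 80 MiB)
```

Each part can then be uploaded independently and retried on failure, which is why multipart upload is also the usual choice for large files on unreliable connections.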

- -

_____ is a service where you buy devices more akin to a hard disk that can be attached to one (and only one, at the time of writing) EC2 instance

EBS (Elastic Block Store)

- -

This storage option is best for storing your EC2 server images (Amazon Machine Images aka AMIs), static content e.g. for a web site, input or output data files (as you would with an SFTP site), or anything that you’d treat like a file.

S3

- -

These two items handle replacement of instances when they are configured. Then when an instance fails the health checks, presumably because it is down, it is these two items that will decide whether we now need to add another server to compensate.

autoscaling group & launch configuration

- -

A ___ tells AWS how to stand up a bootstrapped server that once up is ready to do work without any human intervention

launch configuration

- -

This tells AWS where it can create servers : which launch configuration to use, the minimum and maximum allowed servers in the group, and how to scale up and down.

Auto Scaling Group

- -

An AWS account can have up to ____ CloudFront origin access identities.

100

- -

True or false: Elastic IPs are sticky until re-assigned

True. Elastic IPs are sticky until the instance or volume they are associated with is deleted

- -

EBS devices are ______ of EC2 instances and by default _____ them (unless configured otherwise). All data on Instance storage however will be lost and also on the root (/dev/sda1) partition of S3 backed servers

EBS devices are independent of EC2 instances and by default outlive them (unless configured otherwise). All data on Instance storage however will be lost and also on the root (/dev/sda1) partition of S3 backed servers

- -

S3 Versioning means

S3 versioning means that all versions of a file are kept and retrievable at a later date (by making a request to the bucket, using the object ID and also the version number). The only charge for having this enabled is from the fact that you will incur more storage. When an object is deleted, it will still be accessible just not visible.
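The behavior described above can be modeled with a toy in-memory bucket. This is a sketch for intuition only, not the S3 API: the class `VersionedBucket`, its method names, and the `v1`, `v2` style IDs are hypothetical (real S3 version IDs are opaque strings).

```python
import itertools


class VersionedBucket:
    """Toy model of a versioning-enabled bucket."""

    _DELETE_MARKER = object()   # stands in for a delete marker

    def __init__(self):
        self._versions = {}     # key -> list of (version_id, body), oldest first
        self._ids = itertools.count(1)

    def put(self, key, body):
        """Store a new version and return its version ID."""
        version_id = f"v{next(self._ids)}"
        self._versions.setdefault(key, []).append((version_id, body))
        return version_id

    def delete(self, key):
        """Hide the object by adding a delete marker; old versions remain."""
        return self.put(key, self._DELETE_MARKER)

    def get(self, key, version_id=None):
        """Return the latest version, or a specific one if version_id is given."""
        versions = self._versions.get(key, [])
        if version_id is None:
            if not versions or versions[-1][1] is self._DELETE_MARKER:
                raise KeyError(key)
            return versions[-1][1]
        for vid, body in versions:
            if vid == version_id and body is not self._DELETE_MARKER:
                return body
        raise KeyError((key, version_id))


bucket = VersionedBucket()
first = bucket.put("report.txt", "draft")
bucket.put("report.txt", "final")
bucket.delete("report.txt")
print(bucket.get("report.txt", version_id=first))   # draft
```

After the delete, a plain `get("report.txt")` fails, yet every earlier version is still stored and retrievable by its version ID, which is also why versioning increases storage cost.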

- -

Define a Placement Group

A placement group is a logical grouping of instances within a single Availability Zone

- -

Using these types of groups enables applications to get the full-bisection bandwidth and low-latency network performance required for tightly coupled, node-to-node communication typical of High Performance Computing (HPC) on AWS.

Placement Groups

- -

For Relational Database, the setting of provisioned IOPS storage does what

provides fast, consistent performance

- -

For a DB instance, the default setting for minor upgrades is set to

yes, allow auto minor version upgrades

- -

What 3 things must you provide the DB instance during setup

The DB instance identifier, the master username, the master password

- -

In the DB management options, what options exist and what are they set to by default?

- Enable Automatic Backups: set to Yes by default

- Backup retention period: set to 1 day by default

- Backup window (the daily time range in which automated backups are created, if automated backups are enabled): set to No Preference; the weekly time range …

- -

____ is a web service that gives you access to a ____ that can be used to store messages while waiting for a computer to process them. This allows you to quickly build message queuing applications that can be run on any computer on the internet.

Amazon SQS is a web service that gives you access to a message queue that can be used to store messages while waiting for a computer to process them. This allows you to quickly build message queuing applications that can be run on any computer on the internet.

- -

If you choose to delete a DB instance on the management console what question might you be asked in regards to backups

You will be asked if you wish to create a final snapshot

- -

If you choose not to create a final snapshot for a DB instance what will happen to the automated snapshot associated with the instance?

The automated snapshot will be deleted

- -

Fill in the 3 blanks:

____ Instances allow us to optimize processing costs, ____ allows us to orchestrate the process in a distributed and asynchronous manner, and ____ facilitates the storage of intermediate and final processing results.

Spot Instances + SQS + S3 = Magic

- Spot Instances allow us to optimize processing costs

- Amazon SQS allows us to orchestrate the process in a distributed and asynchronous manner

- Amazon Simple Storage Service (S3) facilitates the storage of intermediate and final processing results

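Note: Some websites sell question banks, typically a little over 300 questions for around 300 yuan per set. Many of these questions can be found online for free, most of the answers come without the necessary explanation, and the material is fairly dated, so I do not recommend paying for them.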
