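MapReduce is inefficient at processing small files, yet large numbers of small files are often unavoidable, so a workaround is needed. The default input format is TextInputFormat, which splits input using the mechanism of its parent class FileInputFormat. Splits are computed per file, so however small a file is, it gets a split (and therefore a MapTask) of its own.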
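The per-file behaviour can be sketched in plain Java. This is a simplified model, not Hadoop's code. It assumes a fixed split size of 128 MB (the common HDFS block size), ignores block locations, and reuses the 1.1 slop factor that FileInputFormat applies so that a small tail does not become a split of its own. The `splittable` flag stands in for the `isSplitable()` hook that subclasses can override.

```java
import java.util.Arrays;
import java.util.List;

/**
 * Simplified model of how FileInputFormat.getSplits() divides input files.
 * Assumptions: split size fixed at 128 MB, block locations/hosts ignored.
 */
class SplitModel {
    static final long SPLIT_SIZE = 128L * 1024 * 1024; // assumed split size (128 MB)
    static final double SPLIT_SLOP = 1.1;              // same slop factor as FileInputFormat

    /** Number of splits produced for one file. */
    static int splitsForFile(long length, boolean splittable) {
        if (length == 0 || !splittable) {
            return 1; // empty or non-splittable file: exactly one split
        }
        int splits = 0;
        long remaining = length;
        // Cut full-size splits while the rest is more than 1.1 x split size
        while ((double) remaining / SPLIT_SIZE > SPLIT_SLOP) {
            splits++;
            remaining -= SPLIT_SIZE;
        }
        if (remaining != 0) {
            splits++; // the tail becomes the last split
        }
        return splits;
    }

    /** Total splits, i.e. number of MapTasks, for a list of file sizes. */
    static int totalSplits(List<Long> fileSizes, boolean splittable) {
        int total = 0;
        for (long size : fileSizes) {
            total += splitsForFile(size, splittable);
        }
        return total;
    }

    public static void main(String[] args) {
        // Three tiny text files, like the wordcount input directory
        System.out.println(totalSplits(Arrays.asList(30L, 45L, 52L), true)); // 3
        long big = 300L * 1024 * 1024;
        System.out.println(splitsForFile(big, true));                      // 3
        System.out.println(splitsForFile(big, false));                     // 1
        System.out.println(splitsForFile(140L * 1024 * 1024, true));       // 1
    }
}
```

Three tiny files still produce three splits. A 140 MB file stays in one split because 140/128 is below the 1.1 slop, and a non-splittable file always yields exactly one split.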

Case 1

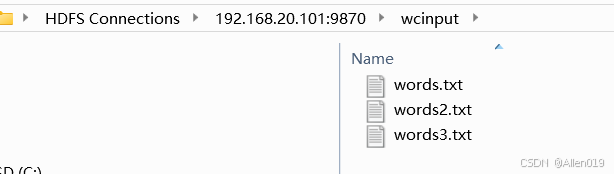

In the earlier wordcount example, the input directory contains 3 files, so 3 splits are created and 3 MapTasks are launched.

Requirement

Merge the three text files into a single sequence file: three text files in, one file out.

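Custom InputFormat class

Two methods need to be overridden:

isSplitable(): whether a file may be split. We return false so that files are never split.

createRecordReader(): returns our custom RecordReader object.

The custom InputFormat: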

```java
package selfinputformat;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.BytesWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.InputSplit;
import org.apache.hadoop.mapreduce.JobContext;
import org.apache.hadoop.mapreduce.RecordReader;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import java.io.IOException;

public class MyInputFormat extends FileInputFormat<Text, BytesWritable> {

    // Files are not splittable: each file becomes at most one split
    @Override
    protected boolean isSplitable(JobContext context, Path filename) {
        return false;
    }

    // Read each split with our own RecordReader
    @Override
    public RecordReader<Text, BytesWritable> createRecordReader(InputSplit inputSplit, TaskAttemptContext taskAttemptContext) throws IOException, InterruptedException {
        return new MyRecordReader();
    }
}
```

Custom RecordReader class

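Two things change here: the key and the value. The Mapper class has four type parameters (KEYIN, VALUEIN, KEYOUT, VALUEOUT). The input types KEYIN and VALUEIN are produced by the RecordReader, so this is where they are set.

The default RecordReader has a LongWritable key, the byte offset of the line within the file. Here the key is the file name, so it is Text.

The default value is Text, one line of text. Here the value is the binary content of the file named by the key, so it is BytesWritable.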
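The contract described above produces one record per file, with the file name as key and all of its bytes as value. It can be modelled in plain Java without a cluster. In this sketch local files stand in for HDFS, and `WholeFileModel` and `writeTempFile` are hypothetical helper names used only for illustration.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;

/**
 * Plain-Java model of the whole-file record contract:
 * key = file name (Text in Hadoop), value = the file's bytes (BytesWritable).
 * No Hadoop classes are used; local files stand in for HDFS.
 */
class WholeFileModel {
    static List<Map.Entry<String, byte[]>> readAll(List<Path> files) {
        List<Map.Entry<String, byte[]>> records = new ArrayList<>();
        for (Path file : files) {
            try {
                // Exactly one record per file, like nextKeyValue() returning true once
                records.add(new AbstractMap.SimpleEntry<>(
                        file.getFileName().toString(), Files.readAllBytes(file)));
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
        }
        return records;
    }

    /** Hypothetical demo helper: writes content to a new file in a fresh temp directory. */
    static Path writeTempFile(String name, String content) {
        try {
            Path dir = Files.createTempDirectory("wholefile");
            return Files.write(dir.resolve(name), content.getBytes(StandardCharsets.UTF_8));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        List<Path> files = Arrays.asList(
                writeTempFile("a.txt", "hello world\n"),
                writeTempFile("b.txt", "foo\nbar\n"));
        for (Map.Entry<String, byte[]> record : readAll(files)) {
            System.out.println(record.getKey() + " -> " + record.getValue().length + " bytes");
        }
    }
}
```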

```java
package selfinputformat;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.BytesWritable;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.InputSplit;
import org.apache.hadoop.mapreduce.RecordReader;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

public class MyRecordReader extends RecordReader<Text, BytesWritable> {

    private Text key;
    private BytesWritable value;
    private String filename;
    private int length;
    private FileSystem fs;
    private Path path;
    private FSDataInputStream is;
    private boolean flag = true; // true until the single record has been emitted

    @Override
    public void initialize(InputSplit inputSplit, TaskAttemptContext taskAttemptContext) throws IOException, InterruptedException {
        FileSplit fileSplit = (FileSplit) inputSplit;
        filename = fileSplit.getPath().getName();
        // The file is not splittable, so the split length is the whole file length
        length = (int) fileSplit.getLength();
        path = fileSplit.getPath();
        // Configuration of the current job
        Configuration conf = taskAttemptContext.getConfiguration();
        // File system that the input path belongs to
        fs = path.getFileSystem(conf);
        is = fs.open(path);
    }

    // Key = file name, value = whole file content as BytesWritable; returns true exactly once
    @Override
    public boolean nextKeyValue() throws IOException, InterruptedException {
        // First call: build the single record
        if (flag) {
            if (key == null) {
                key = new Text();
            }
            if (value == null) {
                value = new BytesWritable();
            }
            // Put the file name into the key
            key.set(filename);
            // Read the entire file content into the value
            byte[] content = new byte[length];
            IOUtils.readFully(is, content, 0, length);
            value.set(content, 0, length);
            flag = false;
            return true;
        }
        // Any later call: this split has no more records
        return false;
    }

    // Current key
    @Override
    public Text getCurrentKey() throws IOException, InterruptedException {
        return key;
    }

    // Current value
    @Override
    public BytesWritable getCurrentValue() throws IOException, InterruptedException {
        return value;
    }

    // Progress: 0 before the single record is read, 1 afterwards
    @Override
    public float getProgress() throws IOException, InterruptedException {
        return flag ? 0.0f : 1.0f;
    }

    // Release resources. Only the stream is closed: FileSystem instances are cached
    // and shared within the task JVM, so closing fs here can break other readers.
    @Override
    public void close() throws IOException {
        if (is != null) {
            IOUtils.closeStream(is);
        }
    }
}
```

Mapper class

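The custom RecordReader already sets the input key to the file name and the input value to the file's binary content, so the mapper simply writes the key and value out unchanged. The key type is Text and the value type is BytesWritable.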

package selfinputformat;

import org.apache.hadoop.io.BytesWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class SelfFileMapper extends Mapper<Text, BytesWritable,Text,BytesWritable> {

@Override

protected void map(Text key, BytesWritable value, Context context) throws IOException, InterruptedException {

context.write(key,value);

}

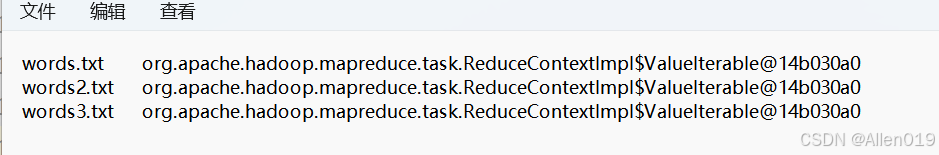

}Reducer类

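```java
package selfinputformat;

import org.apache.hadoop.io.BytesWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class SequenceFileReducer extends Reducer<Text, BytesWritable, Text, BytesWritable> {

    @Override
    protected void reduce(Text key, Iterable<BytesWritable> values, Context context) throws IOException, InterruptedException {
        // Each key is a file name, so there is normally exactly one value: that file's bytes.
        // Write every (name, bytes) pair out unchanged; the output format configured
        // in the driver decides how it is stored on disk.
        for (BytesWritable value : values) {
            context.write(key, value);
        }
    }
}
```

Driver class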

import com.lyh.mapreduce.MaxTemp.MaxTempMapper;

import com.lyh.mapreduce.MaxTemp.MaxTempReducer;

import com.lyh.mapreduce.MaxTemp.MaxTempRunner;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.BytesWritable;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class MySequenceFileRunner extends Configured implements Tool {

public static void main(String[] args) throws Exception {

ToolRunner.run(new Configuration(),new MySequenceFileRunner(),args);

}

@Override

public int run(String[] args) throws Exception {

//1.获取job

Configuration conf = new Configuration();

Job job = Job.getInstance(conf, "my sequence file demo");

//2.配置jar包路径

job.setJarByClass(MySequenceFileRunner.class);

//3.关联mapper和reducer

job.setMapperClass(SequenceFileMapper.class);

job.setReducerClass(SequenceFileReducer.class);

//4.设置map、reduce输出的k、v类型

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(BytesWritable.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(BytesWritable.class);

//设置切片机制为我们自定义的切片机制

job.setInputFormatClass(MyInputFormat.class);

//5.设置统计文件输入的路径,将命令行的第一个参数作为输入文件的路径

FileInputFormat.setInputPaths(job,new Path("D:\\MapReduce_Data_Test\\myinputformat\\input"));

//6.设置结果数据存放路径,将命令行的第二个参数作为数据的输出路径

FileOutputFormat.setOutputPath(job,new Path("D:\\MapReduce_Data_Test\\myinputformat\\output1"));

return job.waitForCompletion(true) ? 0 : 1;//verbose:是否监控并打印job的信息

}

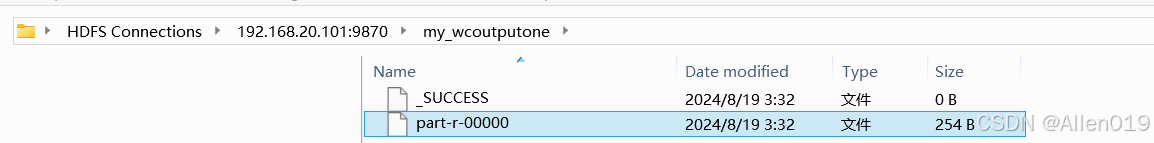

}打成jar包,运行

```shell
hadoop jar /hadoopmapreduce-1.0-SNAPSHOT.jar selfinputformat.MySequenceFileRunner /wcinput/ /my_wcoutputone
```

The three input files are merged into a single output file.
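Why only one file? The job never sets the number of reduce tasks, so it runs with the default of one, and every key lands in partition 0 (written to part-r-00000). Below is a plain-Java sketch of the formula used by Hadoop's default HashPartitioner, `(key.hashCode() & Integer.MAX_VALUE) % numReduceTasks`. It relies on one assumption: `String.hashCode()` stands in for `Text.hashCode()`. The two hash differently, so with more than one reducer the real partition numbers will differ. The file names are hypothetical.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

/**
 * Sketch of the HashPartitioner formula.
 * Assumption: String.hashCode() stands in for Text.hashCode().
 */
class PartitionModel {
    static int partition(String key, int numReduceTasks) {
        // Mask off the sign bit so the result is never negative
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    /** Distinct partitions that receive at least one of the keys. */
    static Set<Integer> partitionsUsed(List<String> keys, int numReduceTasks) {
        Set<Integer> used = new TreeSet<>();
        for (String key : keys) {
            used.add(partition(key, numReduceTasks));
        }
        return used;
    }

    public static void main(String[] args) {
        List<String> fileNames = Arrays.asList("a.txt", "b.txt", "c.txt"); // hypothetical names
        // One reduce task (the default): every key goes to partition 0
        System.out.println(partitionsUsed(fileNames, 1)); // [0]
        // Three reduce tasks: keys may be spread over several output files
        System.out.println(partitionsUsed(fileNames, 3));
    }
}
```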

Case 2

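In the data file studentscore.txt, every three lines form one record. The common InputFormats therefore don't work (TextInputFormat, for example, would hand each line to map() separately), and a custom one is needed.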

```
wjm
shuxue 91
yinyu 88
lilie
shuxue 73
yinyu 27
fuhu
shuxue 37
yinyu 90
```

The code is as follows:

Reader

```java
package selfinputformat1;

import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.InputSplit;
import org.apache.hadoop.mapreduce.RecordReader;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;
import org.apache.hadoop.util.LineReader;

import java.io.IOException;
import java.net.URI;
import java.nio.charset.StandardCharsets;

public class MyRecordReader extends RecordReader<Text, Text> {

    private LineReader reader;
    private Text key;
    private Text value;
    private long length;
    private long pos = 0; // bytes consumed so far, newlines included
    private static final byte[] BLANK = " ".getBytes(StandardCharsets.UTF_8);

    // Called once before any records are read.
    // Typically used to open the actual stream the data is read from.
    @Override
    public void initialize(InputSplit split, TaskAttemptContext context) throws IOException {
        // Cast to FileSplit to get file information
        FileSplit fileSplit = (FileSplit) split;
        // Path of the file the split belongs to
        Path path = fileSplit.getPath();
        // Size of the split
        length = fileSplit.getLength();
        // Connect to HDFS (or whichever file system the path is on)
        FileSystem fs = FileSystem.get(URI.create(path.toString()), context.getConfiguration());
        // Input stream actually used to read the data
        FSDataInputStream in = fs.open(path);
        // The stream is a byte stream but the file is text,
        // so wrap it in a LineReader that reads line by line
        reader = new LineReader(in);
    }

    // Is there another key/value pair for map()?
    // Try to read from the file: if a full group was read, return true;
    // otherwise all data has been processed, so return false.
    @Override
    public boolean nextKeyValue() throws IOException {
        // Objects that hold the record
        key = new Text();
        value = new Text();
        Text tmp = new Text();

        // Line 1: the name. readLine() puts the line (without newline) into tmp
        // and returns the number of bytes consumed, 0 at end of input
        int bytes = reader.readLine(tmp);
        if (bytes <= 0) return false;
        key.set(tmp.toString());
        pos += bytes;

        // Line 2: first subject and score
        bytes = reader.readLine(tmp);
        if (bytes <= 0) return false;
        value.set(tmp.toString());
        pos += bytes;

        // Line 3: second subject and score, appended after a space
        bytes = reader.readLine(tmp);
        if (bytes <= 0) return false;
        value.append(BLANK, 0, BLANK.length);
        value.append(tmp.getBytes(), 0, tmp.getLength());
        pos += bytes;

        // e.g. key = wjm, value = shuxue 91 yinyu 88
        return true;
    }

    // Current key
    @Override
    public Text getCurrentKey() {
        return key;
    }

    // Current value
    @Override
    public Text getCurrentValue() {
        return value;
    }

    // Progress as the fraction of bytes consumed (guarded against empty files)
    @Override
    public float getProgress() {
        return length == 0 ? 1.0f : Math.min(1.0f, pos / (float) length);
    }

    @Override
    public void close() throws IOException {
        if (reader != null) {
            reader.close();
        }
    }
}
```

MyInputFormat

```java
package selfinputformat1;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.InputSplit;
import org.apache.hadoop.mapreduce.JobContext;
import org.apache.hadoop.mapreduce.RecordReader;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

// The type parameters are the key/value types handed to map()
public class MyInputFormat extends FileInputFormat<Text, Text> {

    // MyRecordReader always reads the file from the beginning to the end, so a split
    // must cover the whole file. Otherwise a large file cut into several splits would
    // be read once per split, and three-line groups could be torn apart.
    @Override
    protected boolean isSplitable(JobContext context, Path filename) {
        return false;
    }

    @Override
    public RecordReader<Text, Text> createRecordReader(InputSplit split, TaskAttemptContext context) {
        return new MyRecordReader();
    }
}
```
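The three-lines-per-record logic of `MyRecordReader.nextKeyValue()` can be checked without a cluster. The sketch below is a plain-Java model, not the Hadoop class: `ThreeLineGrouper` is a hypothetical name, and a `BufferedReader` over a string stands in for `LineReader` over HDFS. As in the reader above, an incomplete trailing group is dropped.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

/**
 * Plain-Java model of MyRecordReader.nextKeyValue():
 * every three lines form one record, key = line 1 (the name),
 * value = line 2 + " " + line 3. An incomplete last group is dropped.
 */
class ThreeLineGrouper {
    static List<String[]> group(String text) {
        List<String[]> records = new ArrayList<>();
        try (BufferedReader in = new BufferedReader(new StringReader(text))) {
            while (true) {
                String name = in.readLine();
                String first = in.readLine();
                String second = in.readLine();
                if (name == null || first == null || second == null) {
                    break; // end of input (or a partial group)
                }
                records.add(new String[] {name, first + " " + second});
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return records;
    }

    public static void main(String[] args) {
        String data = "wjm\nshuxue 91\nyinyu 88\n"
                + "lilie\nshuxue 73\nyinyu 27\n"
                + "fuhu\nshuxue 37\nyinyu 90\n";
        for (String[] record : group(data)) {
            System.out.println(record[0] + " -> " + record[1]);
        }
    }
}
```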

MyselfReducer

```java
package selfinputformat1;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class MyselfReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
        // Add up every score emitted for this student
        int sum = 0;
        for (IntWritable value : values) {
            sum += value.get();
        }
        context.write(key, new IntWritable(sum));
    }
}
```

SelfFileMapper

```java
package selfinputformat1;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class SelfFileMapper extends Mapper<Text, Text, Text, IntWritable> {

    @Override
    protected void map(Text key, Text value, Context context) throws IOException, InterruptedException {
        // key   = wjm
        // value = shuxue 91 yinyu 88
        // Split on spaces: [shuxue, 91, yinyu, 88]
        String[] arr = value.toString().split(" ");
        // Emit (name, score) once per subject
        context.write(key, new IntWritable(Integer.parseInt(arr[1])));
        context.write(key, new IntWritable(Integer.parseInt(arr[3])));
    }
}
```
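Taken together, the mapper and reducer compute one total per student. The flow can be simulated in plain Java. In this sketch `ScoreSumModel` is a hypothetical name and a `TreeMap` imitates the shuffle, which delivers keys to the reducer in sorted order. For ASCII names such as these, String order matches Text's byte order.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Plain-Java simulation of SelfFileMapper + shuffle + MyselfReducer. */
class ScoreSumModel {
    /** records: {name, "subject1 score1 subject2 score2"} as produced by the reader. */
    static Map<String, Integer> run(List<String[]> records) {
        // TreeMap stands in for the shuffle: keys end up sorted
        Map<String, Integer> sums = new TreeMap<>();
        for (String[] record : records) {
            // map(): split the value and take tokens 1 and 3 as scores
            String[] arr = record[1].split(" ");
            int first = Integer.parseInt(arr[1]);
            int second = Integer.parseInt(arr[3]);
            // reduce(): add up all scores emitted for the same name
            sums.merge(record[0], first, Integer::sum);
            sums.merge(record[0], second, Integer::sum);
        }
        return sums;
    }

    public static void main(String[] args) {
        List<String[]> records = Arrays.asList(
                new String[] {"wjm", "shuxue 91 yinyu 88"},
                new String[] {"lilie", "shuxue 73 yinyu 27"},
                new String[] {"fuhu", "shuxue 37 yinyu 90"});
        for (Map.Entry<String, Integer> e : run(records).entrySet()) {
            System.out.println(e.getKey() + "\t" + e.getValue());
        }
    }
}
```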

JobMain

package selfinputformat1;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.BytesWritable;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class JobMain extends Configured implements Tool {

public static void main(String[] args) throws Exception {

ToolRunner.run(new Configuration(),new JobMain(),args);

}

@Override

public int run(String[] args) throws Exception {

//1.获取job

Configuration conf = new Configuration();

Job job = Job.getInstance(conf, "my sequence file demo");

//2.配置jar包路径

job.setJarByClass(JobMain.class);

//3.关联mapper和reducer

job.setMapperClass(SelfFileMapper.class);

job.setReducerClass(MyselfReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

// 指定输入格式类

job.setInputFormatClass(MyInputFormat.class);

FileInputFormat.addInputPath(job,new Path(args[0]));

FileOutputFormat.setOutputPath(job,new Path(args[1]));

return job.waitForCompletion(true) ? 0 : 1;//verbose:是否监控并打印job的信息

}

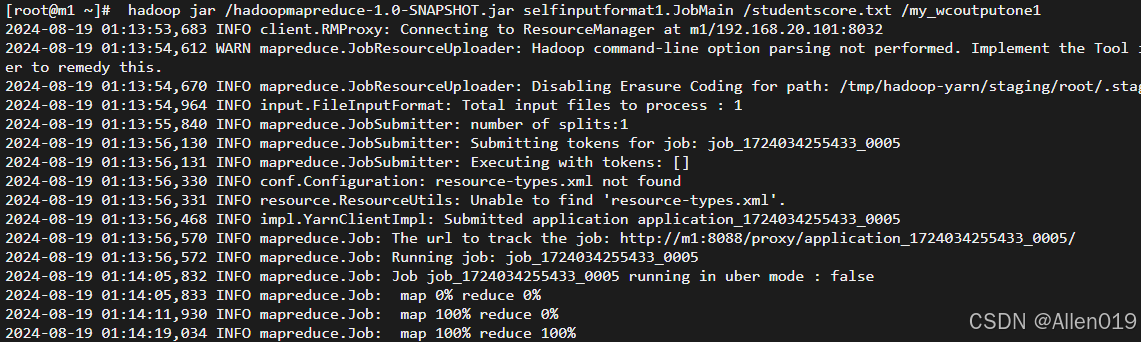

}运行代码

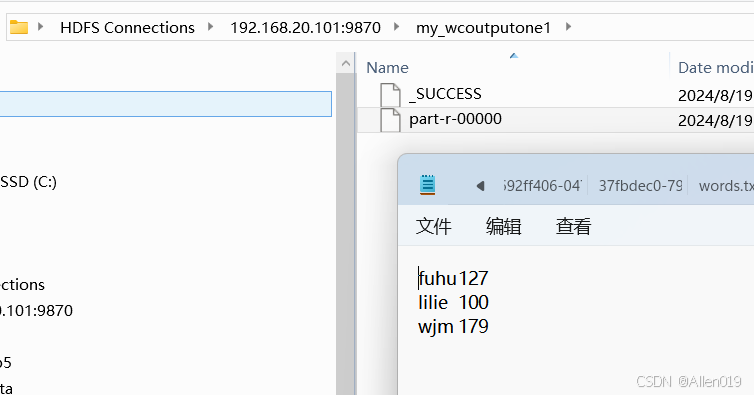

```shell
hadoop jar /hadoopmapreduce-1.0-SNAPSHOT.jar selfinputformat1.JobMain /studentscore.txt /my_wcoutputone1
```

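Output

The job sums each student's two scores. Worked out by hand from the sample studentscore.txt above, the output file should list fuhu 127 (37 + 90), lilie 100 (73 + 27) and wjm 179 (91 + 88), one name and total per line separated by a tab, in sorted key order.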
