1.使用hive统计出每个用户的累积访问次数

数据准备:

| userId | visitDate | visitCount |

|---|---|---|

| u01 | 2017/1/21 | 5 |

| u02 | 2017/1/23 | 6 |

| u03 | 2017/1/22 | 8 |

| u04 | 2017/1/20 | 3 |

| u01 | 2017/1/23 | 6 |

| u01 | 2017/1/21 | 8 |

| u02 | 2017/1/23 | 6 |

| u01 | 2017/1/22 | 4 |

建表语句:

create table action(userid string,visitDate string,visitCount string) row format delimited fields terminated by '\t';

导入数据:

load data local inpath '/home/lzx/file/visite.txt' into table action;

数据预览:

查询 语句:

select *,sum(c1) over(partition by userid order by time) from ( select userid,time,sum(visitcount) as c1 from ( select userid,from_unixtime(unix_timestamp(visitdate,'yyyy/MM/dd'),'yyyy-MM') as time,visitcount from action) tt group by userid,time ) dd;

2. 京东问题

数据准备:

| userid (用户名) | shop(商店名) |

|---|---|

| u1 | a |

| u2 | b |

| u1 | b |

| u1 | a |

| u3 | c |

| u4 | b |

| u1 | a |

| u2 | c |

| u5 | b |

| u4 | b |

| u6 | c |

| u2 | c |

| u1 | b |

| u2 | a |

| u2 | a |

| u3 | a |

| u5 | a |

| u5 | a |

| u5 | a |

建表语句:

create table jd(userid string,shop string) row format delimited fields terminated by '\t';

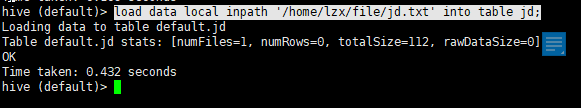

导入数据:

load data local inpath '/home/lzx/file/jd.txt' into table jd;

预览数据:

1.求每个店铺的访客人数

第一种解法:

select shop, count(distinct userid) from jd group by shop;

但是这种解法如果碰到大量数据,就会出现数据倾斜,大量相同userid的人都去了一个reduce。

第二种解法:

先去重 后求和

select t.shop,count(*) from (select userid,shop from jd group by userid,shop) t group by t.shop;

结果是一样的。

2.求每个店铺访问次数的top3的访客信息,输出店铺名称,访客id,访客次数。

先根据userid和shop分组,得到每个店铺,每个游客的访问量

通过row_number() over() 根据shop分组,降序排序得到访问前三名。

select * from ( select shop,userid,num,row_number() over(partition by shop order by shop,num desc) rn from ( select shop,userid,count(*) as num from jd group by userid,shop ) tt ) ttt where rn <4;

蚂蚁森林问题

背景说明:

以下表记录了用户每天的蚂蚁森林低碳生活领取的记录流水。

table_name:user_low_carbon

user_id data_dt low_carbon

用户 日期 减少碳排放(g)

蚂蚁森林植物换购表,用于记录申领环保植物所需要减少的碳排放量

table_name: plant_carbon

plant_id plant_name low_carbon

植物编号 植物名 换购植物所需要的碳

----题目

1.蚂蚁森林植物申领统计

问题:假设2017年1月1日开始记录低碳数据(user_low_carbon),假设2017年10月1日之前满足申领条件的用户都申领了一颗p004-胡杨,

剩余的能量全部用来领取“p002-沙柳” 。

统计在10月1日累计申领“p002-沙柳” 排名前10的用户信息;以及他比后一名多领了几颗沙柳。

得到的统计结果如下表样式:

user_id plant_count less_count(比后一名多领了几颗沙柳)

u_101 1000 100

u_088 900 400

u_103 500 …

2、蚂蚁森林低碳用户排名分析

问题:查询user_low_carbon表中每日流水记录,条件为:

用户在2017年,连续三天(或以上)的天数里,

每天减少碳排放(low_carbon)都超过100g的用户低碳流水。

需要查询返回满足以上条件的user_low_carbon表中的记录流水。

例如用户u_002符合条件的记录如下,因为2017/1/2~2017/1/5连续四天的碳排放量之和都大于等于100g:

seq(key) user_id data_dt low_carbon

xxxxx10 u_002 2017/1/2 150

xxxxx11 u_002 2017/1/2 70

xxxxx12 u_002 2017/1/3 30

xxxxx13 u_002 2017/1/3 80

xxxxx14 u_002 2017/1/4 150

xxxxx14 u_002 2017/1/5 101

备注:统计方法不限于sql、procedure、python,java等

创建表:

create table user_low_carbon(user_id String,data_dt String,low_carbon int) row format delimited fields terminated by '\t';

create table plant_carbon(plant_id string,plant_name String,low_carbon int) row format delimited fields terminated by '\t';

数据样例:

plant_carbon.txt

p001 梭梭树 17

p002 沙柳 19

p003 樟子树 146

p004 胡杨 215

user_low_carbon.txt

u_001 2017/1/1 10

u_001 2017/1/2 150

u_001 2017/1/2 110

u_001 2017/1/2 10

u_001 2017/1/4 50

u_001 2017/1/4 10

u_001 2017/1/6 45

u_001 2017/1/6 90

u_002 2017/1/1 10

u_002 2017/1/2 150

u_002 2017/1/2 70

u_002 2017/1/3 30

u_002 2017/1/3 80

u_002 2017/1/4 150

u_002 2017/1/5 101

u_002 2017/1/6 68

u_003 2017/1/1 20

u_003 2017/1/2 10

u_003 2017/1/2 150

u_003 2017/1/3 160

u_003 2017/1/4 20

u_003 2017/1/5 120

u_003 2017/1/6 20

u_003 2017/1/7 10

u_003 2017/1/7 110

u_004 2017/1/1 110

u_004 2017/1/2 20

u_004 2017/1/2 50

u_004 2017/1/3 120

u_004 2017/1/4 30

u_004 2017/1/5 60

u_004 2017/1/6 120

u_004 2017/1/7 10

u_004 2017/1/7 120

u_005 2017/1/1 80

u_005 2017/1/2 50

u_005 2017/1/2 80

u_005 2017/1/3 180

u_005 2017/1/4 180

u_005 2017/1/4 10

u_005 2017/1/5 80

u_005 2017/1/6 280

u_005 2017/1/7 80

u_005 2017/1/7 80

u_006 2017/1/1 40

u_006 2017/1/2 40

u_006 2017/1/2 140

u_006 2017/1/3 210

u_006 2017/1/3 10

u_006 2017/1/4 40

u_006 2017/1/5 40

u_006 2017/1/6 20

u_006 2017/1/7 50

u_006 2017/1/7 240

u_007 2017/1/1 130

u_007 2017/1/2 30

u_007 2017/1/2 330

u_007 2017/1/3 30

u_007 2017/1/4 530

u_007 2017/1/5 30

u_007 2017/1/6 230

u_007 2017/1/7 130

u_007 2017/1/7 30

u_008 2017/1/1 160

u_008 2017/1/2 60

u_008 2017/1/2 60

u_008 2017/1/3 60

u_008 2017/1/4 260

u_008 2017/1/5 360

u_008 2017/1/6 160

u_008 2017/1/7 60

u_008 2017/1/7 60

u_009 2017/1/1 70

u_009 2017/1/2 70

u_009 2017/1/2 70

u_009 2017/1/3 170

u_009 2017/1/4 270

u_009 2017/1/5 70

u_009 2017/1/6 70

u_009 2017/1/7 70

u_009 2017/1/7 70

u_010 2017/1/1 90

u_010 2017/1/2 90

u_010 2017/1/2 90

u_010 2017/1/3 90

u_010 2017/1/4 90

u_010 2017/1/4 80

u_010 2017/1/5 90

u_010 2017/1/5 90

u_010 2017/1/6 190

u_010 2017/1/7 90

u_010 2017/1/7 90

u_011 2017/1/1 110

u_011 2017/1/2 100

u_011 2017/1/2 100

u_011 2017/1/3 120

u_011 2017/1/4 100

u_011 2017/1/5 100

u_011 2017/1/6 100

u_011 2017/1/7 130

u_011 2017/1/7 100

u_012 2017/1/1 10

u_012 2017/1/2 120

u_012 2017/1/2 10

u_012 2017/1/3 10

u_012 2017/1/4 50

u_012 2017/1/5 10

u_012 2017/1/6 20

u_012 2017/1/7 10

u_012 2017/1/7 10

u_013 2017/1/1 50

u_013 2017/1/2 150

u_013 2017/1/2 50

u_013 2017/1/3 150

u_013 2017/1/4 550

u_013 2017/1/5 350

u_013 2017/1/6 50

u_013 2017/1/7 20

u_013 2017/1/7 60

u_014 2017/1/1 220

u_014 2017/1/2 120

u_014 2017/1/2 20

u_014 2017/1/3 20

u_014 2017/1/4 20

u_014 2017/1/5 250

u_014 2017/1/6 120

u_014 2017/1/7 270

u_014 2017/1/7 20

u_015 2017/1/1 10

u_015 2017/1/2 20

u_015 2017/1/2 10

u_015 2017/1/3 10

u_015 2017/1/4 20

u_015 2017/1/5 70

u_015 2017/1/6 10

u_015 2017/1/7 80

u_015 2017/1/7 60

导入数据:

load data local inpath "/home/lzx/file/low_carbon.txt" into table user_low_carbon;

load data local inpath "/home/lzx/file/plant_carbon.txt" into table plant_carbon;

预览:

解题过程:

1.蚂蚁森林植物申领统计

第一步:获取时间在10.1号之前的数据并求得降低碳总数

select user_id,sum(low_carbon) sum_carbon from user_low_carbon t where datediff(regexp_replace(data_dt,'/','-'),'2017-10-1')<0 group by user_id order by sum_carbon desc

第二步:求得减去胡杨能得到的沙柳的数量

select user_id,sum_carbon,floor(if(sum_carbon>215,sum_carbon-215,0)/19) as carbon from ( select user_id,sum(low_carbon) sum_carbon from user_low_carbon t where datediff(regexp_replace(data_dt,'/','-'),'2017-10-1')<0 group by user_id order by sum_carbon desc ) t

这里面省掉了查询种树所需要的碳量

第三步:通过窗口函数查询前十名比后一名多多少棵树

select user_id,sum_carbon,carbon, carbon - (lead(carbon,1,'0') over(order by carbon desc)) as num from ( select user_id,sum_carbon,floor(if(sum_carbon>215,sum_carbon-215,0)/19) as carbon from ( select user_id,sum(low_carbon) sum_carbon from user_low_carbon t where datediff(regexp_replace(data_dt,'/','-'),'2017-10-1')<0 group by user_id order by sum_carbon desc ) t ) tt limit 10;

2、蚂蚁森林低碳用户排名分析

看到题目里说的,条件有三个:

第一个条件:用户在2017年

第二个条件:连续三天(或以上)的天数里

第三个条件:每天减少碳排放都超过100g的用户

所以第一步sql,获取每天减少排放量超过100g的记录

select user_id, regexp_replace(data_dt,'/','-') as data_dt from user_low_carbon

where substring(data_dt,1,4) = '2017'

group by user_id,data_dt having sum(low_carbon) >=100

order by user_id ,data_dt

第二步sql获取每条记录的前两天和后两天的日期以及本条记录日期相减的数据

select

user_id,

data_dt,

datediff(data_dt,lag(data_dt,2,'1990-1-1') over(partition by user_id order by data_dt)) as t1,

datediff(data_dt,lag(data_dt,1,'1990-1-1') over(partition by user_id order by data_dt)) as t2,

datediff(data_dt,lead(data_dt,1,'1990-1-1') over(partition by user_id order by data_dt)) as t3,

datediff(data_dt,lead(data_dt,2,'1990-1-1') over(partition by user_id order by data_dt)) as t4

from (

select user_id, regexp_replace(data_dt,'/','-') as data_dt from user_low_carbon

where substring(data_dt,1,4) = '2017'

group by user_id,data_dt having sum(low_carbon) >=100

order by user_id ,data_dt) tt

第三步sql获取符合日期连续三天的

select user_id,data_dt from (

select

user_id,

data_dt,

datediff(data_dt,lag(data_dt,2,'1990-1-1') over(partition by user_id order by data_dt)) as t1,

datediff(data_dt,lag(data_dt,1,'1990-1-1') over(partition by user_id order by data_dt)) as t2,

datediff(data_dt,lead(data_dt,1,'1990-1-1') over(partition by user_id order by data_dt)) as t3,

datediff(data_dt,lead(data_dt,2,'1990-1-1') over(partition by user_id order by data_dt)) as t4

from (

select user_id, regexp_replace(data_dt,'/','-') as data_dt from user_low_carbon

where substring(data_dt,1,4) = '2017'

group by user_id,data_dt having sum(low_carbon) >=100

order by user_id ,data_dt) tt ) dd where ( t1 = 2 and t2 =1) or (t2=1 and t3=-1) or (t3=-1 and t4=-2);

因为需要查询返回满足以上条件的user_low_carbon表中的记录流水所以还需要一步 join 原表。

select t5.*from (

select user_id, data_dt from (

select

user_id,

regexp_replace(data_dt,'-','/') as data_dt,

datediff(data_dt,lag(data_dt,2,'1990-1-1') over(partition by user_id order by data_dt)) as t1,

datediff(data_dt,lag(data_dt,1,'1990-1-1') over(partition by user_id order by data_dt)) as t2,

datediff(data_dt,lead(data_dt,1,'1990-1-1') over(partition by user_id order by data_dt)) as t3,

datediff(data_dt,lead(data_dt,2,'1990-1-1') over(partition by user_id order by data_dt)) as t4

from (

select user_id, regexp_replace(data_dt,'/','-') as data_dt from user_low_carbon

where substring(data_dt,1,4) = '2017'

group by user_id,data_dt having sum(low_carbon) >=100

order by user_id ,data_dt) tt ) dd where ( t1 = 2 and t2 =1) or (t2=1 and t3=-1) or (t3=-1 and t4=-2)) t4

join user_low_carbon t5 on t4.user_id = t5.user_id and t4.data_dt = t5.data_dt;

第二题还有第二种解法:

通过日期对rank的序列值相减得到结果日期 通过判断是否是相等的值并且大于三个来判断是否是连续的三天

第一步sql:获取一天的减少碳排放大于100的

select user_id,data_dt,sum(low_carbon) sum_carbon,

rank() over(partition by user_id order by data_dt) rank

from user_low_carbon

where substring(data_dt,1,4)="2017"

group by user_id,data_dt

having sum_carbon>100

第二步sql:获取日期减去序列值的日期

select user_id,data_dt,

date_sub(regexp_replace(data_dt,"/","-"),rank) sub

from (select user_id,data_dt,sum(low_carbon) sum_carbon,

rank() over(partition by user_id order by data_dt) rank

from user_low_carbon

where substring(data_dt,1,4)="2017"

group by user_id,data_dt

having sum_carbon>100)t1

第三步sql:获取减去的日期值连续三个相等的就是我们需要的

select user_id,data_dt

from (

select user_id,data_dt,

count(*) over(partition by user_id,sub) threeDays

from (select user_id,data_dt,

date_sub(regexp_replace(data_dt,"/","-"),rank) sub

from (select user_id,data_dt,sum(low_carbon) sum_carbon,

rank() over(partition by user_id order by data_dt) rank

from user_low_carbon

where substring(data_dt,1,4)="2017"

group by user_id,data_dt

having sum_carbon>100)t1)t2

)t3

where threeDays>=3

order by user_id,data_dt;

265

265

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?