
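Docker default network modes

Docker provides four network modes out of the box:

- bridge: the default mode, used when a container is started without a network option; user-defined networks also use the bridge driver by default. Docker assigns each container an IP address, attaches the container to the docker0 virtual bridge, and lets it communicate with the host through docker0 and rules in the iptables nat table.

- none: no networking is configured. The container has its own network namespace, but Docker sets nothing up inside it: no veth pair, no bridge connection, no IP address.

- host: the container shares the host's network namespace. It does not get a virtual NIC or an IP address of its own and uses the host's IP address and ports instead.

- container: the new container does not create its own NIC or configure its own IP address. Instead it shares the network namespace (IP address, port range) of another, already existing container.

Containers use bridge mode by default. The docker run --network= option (--net is an alias) selects the network a container uses: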

bridge mode: specified with --net=bridge; this is the default.

none mode: specified with --net=none.

host mode: specified with --net=host.

container mode: specified with --net=container:NAME_or_ID.
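As a minimal sketch of the four options, the helper below wraps docker run so that the network mode is an explicit, validated argument. The function name run_with_network and the DOCKER override variable are illustrative assumptions and not part of the original walkthrough; setting DOCKER=echo prints the command instead of executing it.

```shell
# Assumed helper, not from the original article.
# Override DOCKER (for example DOCKER=echo) to print commands instead of
# executing them.
DOCKER="${DOCKER:-docker}"

# run_with_network MODE NAME IMAGE
#   MODE is one of the four built-in modes:
#   bridge, none, host, container:<name-or-id>
run_with_network() {
  mode=$1
  name=$2
  image=$3
  case "$mode" in
    bridge|none|host|container:?*) ;;
    *) echo "unsupported network mode: $mode" >&2; return 1 ;;
  esac
  "$DOCKER" run -d --name "$name" --network "$mode" "$image"
}
```

For example, run_with_network none isolated tomcat would start a Tomcat container with no network configured. The check deliberately accepts only the four built-in modes; a user-defined network name would need to be allowed separately.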

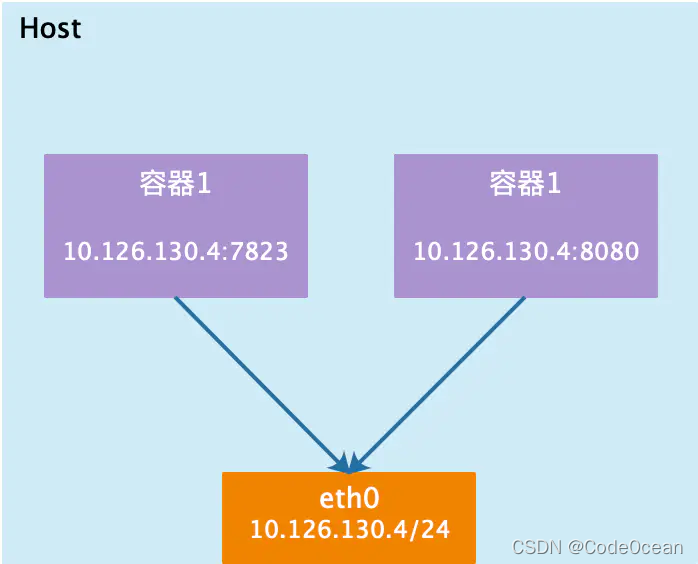

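host mode

A container started in host mode does not get its own network namespace; it shares the host's. It does not create a virtual NIC or configure an IP address of its own and uses the host's IP address and ports instead. Everything else, such as the file system and the process list, remains isolated from the host.

A host-mode container communicates with the outside world directly through the host's IP address, and services inside it bind to the host's ports with no NAT in between. The main advantage of host mode is good network performance. The drawbacks are that ports already in use on the Docker host cannot be used again and that network isolation is weak. The figure illustrates the host-mode model:

container mode

This mode makes the new container share a network namespace with an existing container rather than with the host. The new container does not create its own NIC or configure its own IP address; it shares the IP address, port range, and so on of the specified container. Apart from networking, the two containers remain isolated in other respects such as the file system and the process list. Processes in the two containers can talk to each other over the lo interface. The container-mode model is shown below: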

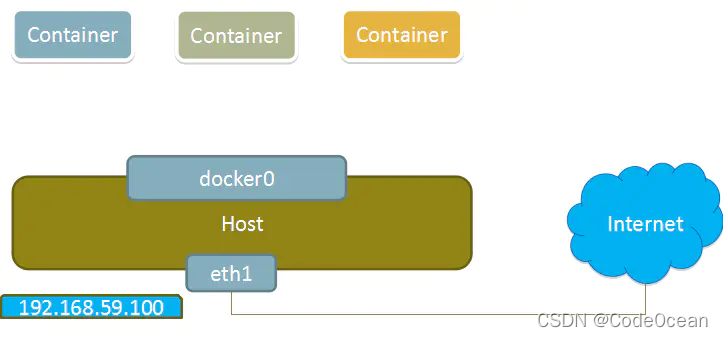

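none mode

In none mode the container has its own network namespace, but Docker performs no network configuration for it. The container has no NIC, IP address, or routes, and you have to add a NIC and configure an IP address yourself.

In this mode the container has only the lo loopback interface and no other NIC. none mode is selected at container creation with --network=none. A container on this kind of network cannot reach any other network, and that isolation helps keep the container secure.

Diagram of none mode: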

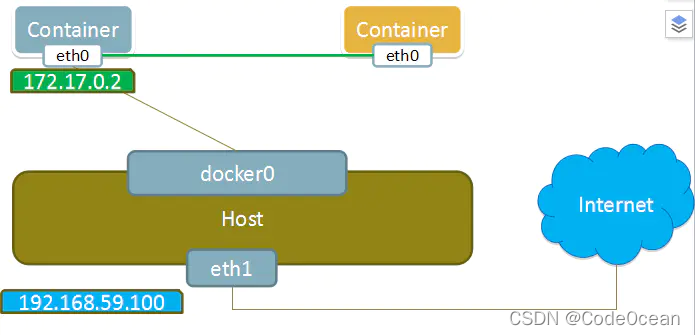

bridge mode

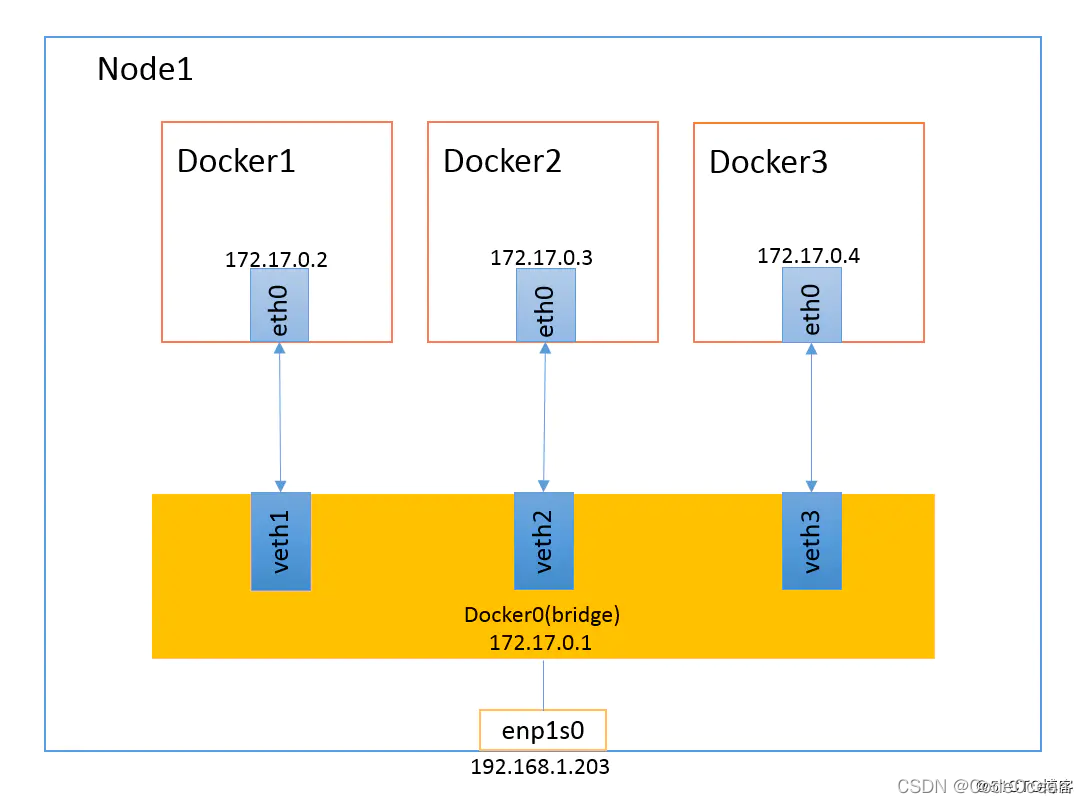

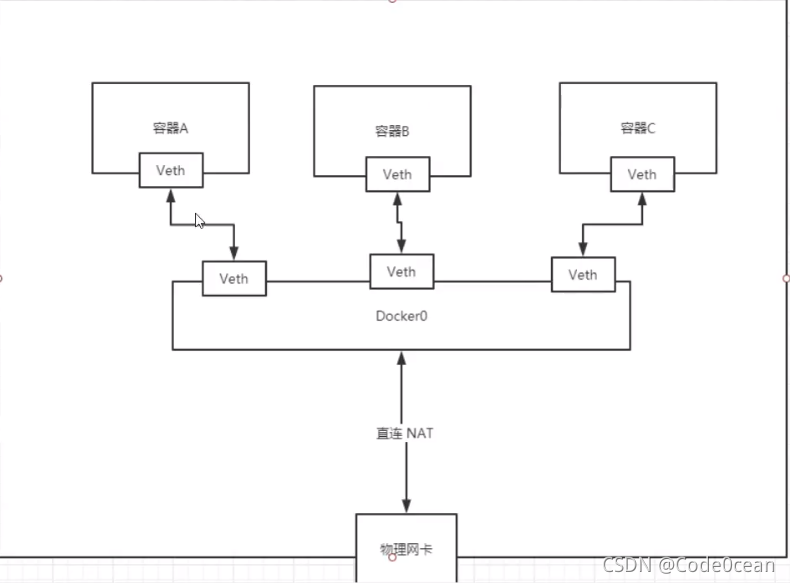

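When the Docker daemon starts, it creates a virtual bridge named docker0 on the host, and containers started on that host are attached to it. A virtual bridge works much like a physical switch, so all containers on the host end up on the same layer-2 network.

Docker assigns the container an IP address from the docker0 subnet and sets docker0's IP address as the container's default gateway. It also creates a veth pair on the host: one end is placed in the new container and named eth0 (the container's NIC), while the other end stays on the host with a name like vethxxx and is added to the docker0 bridge. The brctl show command lists these attachments.

bridge is Docker's default network mode; omitting the --net option selects it. With docker run -p, Docker adds DNAT rules to iptables to implement port forwarding, and iptables -t nat -vnL shows them. The figure illustrates bridge mode:

Docker sets up bridge networking for a container as follows:

- Create a veth pair on the host. veth devices always come in pairs and form a data channel: whatever enters one device comes out of the other. This makes them a common way to connect two network devices.

- Place one end of the veth pair in the new container and name it eth0. Keep the other end on the host under a name like veth65f9 and add it to the docker0 bridge.

- Assign the container an IP address from the docker0 subnet and set docker0's IP address as the container's default gateway.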
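The result of these steps can be checked from the host. The sketch below asks docker inspect for the IP address and gateway of each network a container is attached to; for a container on the default bridge, the gateway should be docker0's address. The function name container_addresses and the DOCKER override variable are assumptions made for illustration.

```shell
# Assumed helper, not from the original article.
# Override DOCKER (for example DOCKER=echo) for a dry run.
DOCKER="${DOCKER:-docker}"

# container_addresses NAME
# Prints one "<ip> <gateway>" line per network the container is attached to.
container_addresses() {
  "$DOCKER" inspect \
    -f '{{range .NetworkSettings.Networks}}{{.IPAddress}} {{.Gateway}}{{println}}{{end}}' \
    "$1"
}
```

Comparing the gateway column with the address reported by ip addr show docker0 on the host is one way to confirm that the container's default gateway really is the bridge.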

For an example, see the custom network section below.

Custom networks

- Create your own network

[root@iZ70eyv5ttqkcsZ ~]# docker network create --driver bridge --subnet 192.168.0.0/16 --gateway 192.168.0.1 mynet

ee8ffcd2dc2705d5979ee85b4fead8774f240b056e04d9572f7cbd2d63a67b21

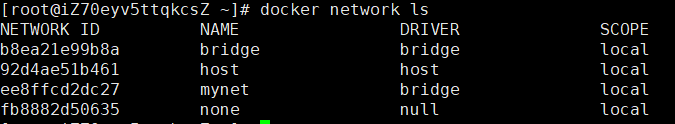

[root@iZ70eyv5ttqkcsZ ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

b8ea21e99b8a bridge bridge local

92d4ae51b461 host host local

ee8ffcd2dc27 mynet bridge local

fb8882d50635 none null local

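# Network driver

--driver bridge

# Subnet in CIDR notation (network 192.168.0.0 with a 16-bit mask)

--subnet 192.168.0.0/16

# Gateway address

--gateway 192.168.0.1

# Inspect the network configuration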

[root@iZ70eyv5ttqkcsZ ~]# docker network inspect ee8ffcd2dc27

[

{

"Name": "mynet",

"Id": "ee8ffcd2dc2705d5979ee85b4fead8774f240b056e04d9572f7cbd2d63a67b21",

"Created": "2021-12-30T18:22:07.420759386+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "192.168.0.0/16",

"Gateway": "192.168.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {},

"Options": {},

"Labels": {}

}

]
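The Subnet and Gateway values are related: the gateway, and every container address Docker hands out later, must fall inside the subnet. The sketch below checks that membership with plain shell arithmetic. The function names ip_to_int and in_subnet are hypothetical helpers, and the code assumes dotted-decimal IPv4 input without leading zeros.

```shell
# Assumed helpers, not from the original article.

# ip_to_int A.B.C.D -> the address as a 32-bit integer
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1          # split the address into its four octets
  IFS=$old_ifs
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# in_subnet IP CIDR -> exit status 0 if IP lies inside CIDR
in_subnet() {
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")      # part before the slash
  bits=${2#*/}                    # prefix length after the slash
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
}
```

With the values used here, in_subnet 192.168.0.1 192.168.0.0/16 succeeds, while an address from another network such as 172.38.0.11 fails the check.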

- Start two containers, then inspect mynet again to see how its properties change

[root@iZ70eyv5ttqkcsZ ~]# docker run -d -P --name tomcat-net-01 --net mynet tomcat

f3b437f89894a651fc44be699eebea62ef30de68e6ea1119dd4bb55f438e1118

[root@iZ70eyv5ttqkcsZ ~]# docker run -d -P --name tomcat-net-02 --net mynet tomcat

e1be42d908939a183d2032cb03d96b05bdcc1d7ec2c5ff527ad8e3cc27911c07

[root@iZ70eyv5ttqkcsZ ~]# docker network inspect mynet

[

{

"Name": "mynet",

"Id": "ee8ffcd2dc2705d5979ee85b4fead8774f240b056e04d9572f7cbd2d63a67b21",

"Created": "2021-12-30T18:22:07.420759386+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "192.168.0.0/16",

"Gateway": "192.168.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {

"e1be42d908939a183d2032cb03d96b05bdcc1d7ec2c5ff527ad8e3cc27911c07": {

"Name": "tomcat-net-02",

"EndpointID": "42f3b8a0435805129aeb3af77a92a61878d688338433feb319f52b97fa90ce33",

"MacAddress": "02:42:c0:a8:00:03",

"IPv4Address": "192.168.0.3/16",

"IPv6Address": ""

},

"f3b437f89894a651fc44be699eebea62ef30de68e6ea1119dd4bb55f438e1118": {

"Name": "tomcat-net-01",

"EndpointID": "c5e0099c3c1006c413bcc94567bebfd792445ce24c17c3ffb43e0f892327eb37",

"MacAddress": "02:42:c0:a8:00:02",

"IPv4Address": "192.168.0.2/16",

"IPv6Address": ""

}

},

"Options": {},

"Labels": {}

}

]

Notes:

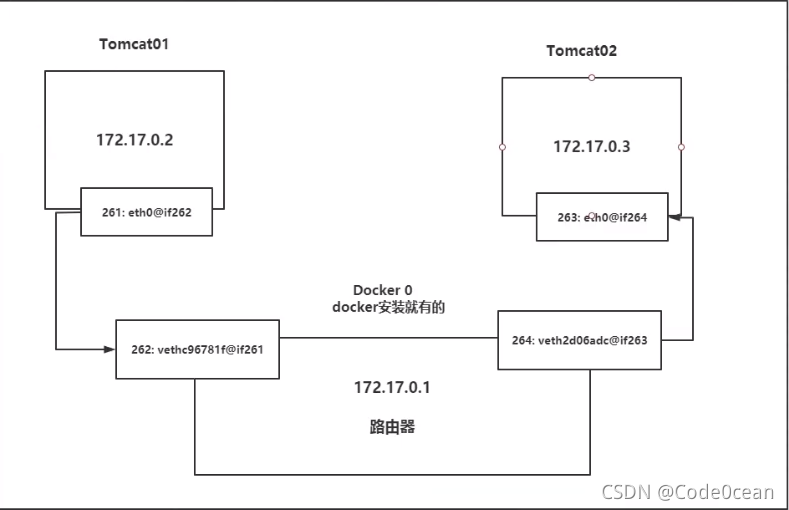

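Tomcat01 and Tomcat02 share docker0 as their common router: every container started without an explicit network is routed through docker0, and Docker assigns it an available IP address from the docker0 subnet. Containers on mynet, such as tomcat-net-01 and tomcat-net-02 above, are wired the same way to the mynet bridge (gateway 192.168.0.1).

Ping by container name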

[root@iZ70eyv5ttqkcsZ ~]# docker exec -it tomcat-net-01 ping tomcat-net-02

PING tomcat-net-02 (192.168.0.3) 56(84) bytes of data.

64 bytes from tomcat-net-02.mynet (192.168.0.3): icmp_seq=1 ttl=64 time=0.064 ms

64 bytes from tomcat-net-02.mynet (192.168.0.3): icmp_seq=2 ttl=64 time=0.062 ms

64 bytes from tomcat-net-02.mynet (192.168.0.3): icmp_seq=3 ttl=64 time=0.061 ms

64 bytes from tomcat-net-02.mynet (192.168.0.3): icmp_seq=4 ttl=64 time=0.079 ms

64 bytes from tomcat-net-02.mynet (192.168.0.3): icmp_seq=5 ttl=64 time=0.077 ms

64 bytes from tomcat-net-02.mynet (192.168.0.3): icmp_seq=6 ttl=64 time=0.067 ms
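Name resolution works here because both containers sit on a user-defined network, where Docker's embedded DNS resolves container names; on the default bridge, names are not resolved unless legacy links are used. A small sketch for repeating the check between any two containers follows. The function name ping_by_name and the DOCKER override variable are illustrative assumptions, and the image must ship a ping binary.

```shell
# Assumed helper, not from the original article.
# Override DOCKER (for example DOCKER=echo) for a dry run.
DOCKER="${DOCKER:-docker}"

# ping_by_name FROM TO [COUNT]
# Pings container TO by name from inside container FROM (default: 3 packets).
ping_by_name() {
  "$DOCKER" exec "$1" ping -c "${3:-3}" "$2"
}
```

Unlike the interactive call above, ping -c stops after a fixed number of packets, which makes the check usable in scripts.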

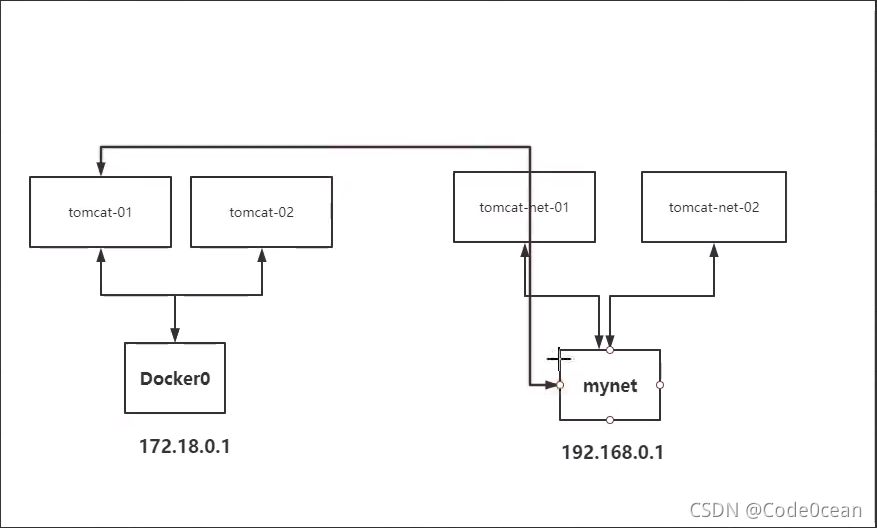

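The figure shows the general model of container network interconnection:

When a container is removed, its veth pair is removed as well.

Connecting Docker networks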

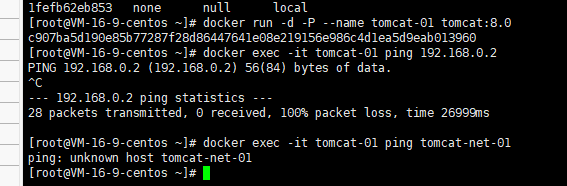

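Without extra configuration, containers on different networks cannot reach each other. The figure shows the model for two separate networks, docker0 and the custom network mynet:

Communication between containers on different subnets

Below we create two Tomcat containers, tomcat-01 and tomcat-02, on the default docker0 bridge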

docker run -d -P --name tomcat-01 tomcat

docker run -d -P --name tomcat-02 tomcat

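These containers cannot reach the containers on mynet. To connect containers across Docker networks, the calling container must be given an IP address on the callee's network, which is done with the docker network connect command.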

[root@iZ70eyv5ttqkcsZ ~]# docker network connect mynet tomcat-01

# Attach tomcat-01 to mynet

[root@iZ70eyv5ttqkcsZ ~]# docker network inspect mynet

[

{

"Name": "mynet",

"Id": "ee8ffcd2dc2705d5979ee85b4fead8774f240b056e04d9572f7cbd2d63a67b21",

"Created": "2021-12-30T18:22:07.420759386+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "192.168.0.0/16",

"Gateway": "192.168.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {

"3138d21c6e47d7727eb6c3982b19ca0573d7f8d8fc960f3592d160003cbb177e": {

"Name": "tomcat-01",

"EndpointID": "b45309e7f4da448a8d188bb014d6362445ca5cddcef1698b646e9a39740a8b40",

"MacAddress": "02:42:c0:a8:00:04",

"IPv4Address": "192.168.0.4/16",

"IPv6Address": ""

},  # tomcat-01 has been assigned an IP address on mynet

"819770bc3e10810985d1e858053d9a2101a4afd42664216789649c3c41f9dd6b": {

"Name": "tomcat01",

"EndpointID": "36d468dd2c0eb4eb780471f98307a6d176d10304835c511751f32b316d03a3f5",

"MacAddress": "02:42:c0:a8:00:02",

"IPv4Address": "192.168.0.2/16",

"IPv6Address": ""

},

"921599eef5da0b4eeb61d095f8e4b163c68b02af7ae51050071bc3cef1c2ad77": {

"Name": "tomcat02",

"EndpointID": "c664d5b38824527d1def4dde262bb3180ad2ad2283af09a836a22a3a77f9f359",

"MacAddress": "02:42:c0:a8:00:03",

"IPv4Address": "192.168.0.3/16",

"IPv6Address": ""

}

},

"Options": {},

"Labels": {}

}

]
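After the connect step the container belongs to two networks at once. The sketch below performs the attachment and then lists every network of the container with its address on each; the function name attach_and_list and the DOCKER override variable are assumptions for illustration.

```shell
# Assumed helper, not from the original article.
# Override DOCKER (for example DOCKER=echo) for a dry run.
DOCKER="${DOCKER:-docker}"

# attach_and_list NETWORK CONTAINER
# Attaches CONTAINER to NETWORK, then prints "<network>=<ip>" for every
# network the container is now attached to.
attach_and_list() {
  "$DOCKER" network connect "$1" "$2" || return 1
  "$DOCKER" inspect \
    -f '{{range $name, $ep := .NetworkSettings.Networks}}{{$name}}={{$ep.IPAddress}} {{end}}' \
    "$2"
}
```

For tomcat-01 the listing should contain both bridge and mynet, which is why the container can now reach peers on either network.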

Deploying a Redis cluster

- Create a network for Redis

docker network create redis_net --subnet 172.38.0.0/16

[root@iZ70eyv5ttqkcsZ ~]# docker network inspect 779f547ec0e9

[

{

"Name": "redis_net",

"Id": "779f547ec0e9b34a319e8519b9c22ec6e2f8c71638976adeddfd47f3890a882e",

"Created": "2021-12-30T21:26:02.452272681+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "172.38.0.0/16"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {},

"Options": {},

"Labels": {}

}

]

- Create the configuration files for six Redis nodes with the following script:

for port in $(seq 1 6); \

do \

mkdir -p /mydata/redis/node-${port}/conf

touch /mydata/redis/node-${port}/conf/redis.conf

cat << EOF >/mydata/redis/node-${port}/conf/redis.conf

port 6379

bind 0.0.0.0

cluster-enabled yes

cluster-config-file nodes.conf

cluster-node-timeout 5000

cluster-announce-ip 172.38.0.1${port}

cluster-announce-port 6379

cluster-announce-bus-port 16379

appendonly yes

EOF

done

- Create six Redis containers

# Redis container 1

docker run -p 6371:6379 -p 16371:16379 --name redis-1 \
-v /mydata/redis/node-1/data:/data \
-v /mydata/redis/node-1/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis_net --ip 172.38.0.11 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Redis container 2

docker run -p 6372:6379 -p 16372:16379 --name redis-2 \
-v /mydata/redis/node-2/data:/data \
-v /mydata/redis/node-2/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis_net --ip 172.38.0.12 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Redis container 3

docker run -p 6373:6379 -p 16373:16379 --name redis-3 \
-v /mydata/redis/node-3/data:/data \
-v /mydata/redis/node-3/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis_net --ip 172.38.0.13 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Redis container 4

docker run -p 6374:6379 -p 16374:16379 --name redis-4 \
-v /mydata/redis/node-4/data:/data \
-v /mydata/redis/node-4/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis_net --ip 172.38.0.14 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Redis container 5

docker run -p 6375:6379 -p 16375:16379 --name redis-5 \
-v /mydata/redis/node-5/data:/data \
-v /mydata/redis/node-5/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis_net --ip 172.38.0.15 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

# Redis container 6

docker run -p 6376:6379 -p 16376:16379 --name redis-6 \
-v /mydata/redis/node-6/data:/data \
-v /mydata/redis/node-6/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis_net --ip 172.38.0.16 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf
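The six commands differ only in the node number, so they can be collapsed into a loop. The sketch below is one way to do that, with the network name passed as an argument so it matches whatever network was created earlier. The function name start_redis_nodes and the DOCKER override variable are assumptions for illustration, and the port and IP scheme only works for node numbers 1 to 9.

```shell
# Assumed helper, not from the original article.
# Override DOCKER (for example DOCKER=echo) for a dry run.
DOCKER="${DOCKER:-docker}"

# start_redis_nodes NETWORK [COUNT]
# Starts COUNT (default 6, at most 9) Redis containers named redis-1..N.
start_redis_nodes() {
  net=$1
  count=${2:-6}
  i=1
  while [ "$i" -le "$count" ]; do
    "$DOCKER" run -p "637${i}:6379" -p "1637${i}:16379" --name "redis-${i}" \
      -v "/mydata/redis/node-${i}/data:/data" \
      -v "/mydata/redis/node-${i}/conf/redis.conf:/etc/redis/redis.conf" \
      -d --net "$net" --ip "172.38.0.1${i}" \
      redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf || return 1
    i=$(( i + 1 ))
  done
}
```

Running it with DOCKER=echo first prints the six commands, which makes it easy to compare them with the hand-written ones before starting anything.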

- Enter the redis-1 container and create the cluster

# Enter redis-1 with /bin/sh; the Alpine image has no /bin/bash

docker exec -it redis-1 /bin/sh

/data # redis-cli --cluster create 172.38.0.11:6379 172.38.0.12:6379 172.38.0.13:6379 172.38.0.14:6379 172.38.0.15:6379 172.38.0.16:6379 --cluster-replicas 1

>>> Performing hash slots allocation on 6 nodes...

Master[0] -> Slots 0 - 5460

Master[1] -> Slots 5461 - 10922

Master[2] -> Slots 10923 - 16383

Adding replica 172.38.0.15:6379 to 172.38.0.11:6379

Adding replica 172.38.0.16:6379 to 172.38.0.12:6379

Adding replica 172.38.0.14:6379 to 172.38.0.13:6379

M: e4222a3c94850bd1bd059d3c3d723d7b506889c4 172.38.0.11:6379

slots:[0-5460] (5461 slots) master

M: 4e73d13b08b002656b98c5469708499af4b063ec 172.38.0.12:6379

slots:[5461-10922] (5462 slots) master

M: 44bd724d0004ffd7224279a6cd6c79711cb11616 172.38.0.13:6379

slots:[10923-16383] (5461 slots) master

S: c7f852962a48a82287f84b0f3b7a5e0c1388e598 172.38.0.14:6379

replicates 44bd724d0004ffd7224279a6cd6c79711cb11616

S: f047a2ac74d6edbdec4cdddf1cc021474fdc24ae 172.38.0.15:6379

replicates e4222a3c94850bd1bd059d3c3d723d7b506889c4

S: 10e6b9572c964c5e4d52e1a77f5fa89bcfaae4c8 172.38.0.16:6379

replicates 4e73d13b08b002656b98c5469708499af4b063ec

Can I set the above configuration? (type 'yes' to accept): yes

>>> Nodes configuration updated

>>> Assign a different config epoch to each node

>>> Sending CLUSTER MEET messages to join the cluster

Waiting for the cluster to join

...

>>> Performing Cluster Check (using node 172.38.0.11:6379)

M: e4222a3c94850bd1bd059d3c3d723d7b506889c4 172.38.0.11:6379

slots:[0-5460] (5461 slots) master

1 additional replica(s)

M: 4e73d13b08b002656b98c5469708499af4b063ec 172.38.0.12:6379

slots:[5461-10922] (5462 slots) master

1 additional replica(s)

S: 10e6b9572c964c5e4d52e1a77f5fa89bcfaae4c8 172.38.0.16:6379

slots: (0 slots) slave

replicates 4e73d13b08b002656b98c5469708499af4b063ec

S: c7f852962a48a82287f84b0f3b7a5e0c1388e598 172.38.0.14:6379

slots: (0 slots) slave

replicates 44bd724d0004ffd7224279a6cd6c79711cb11616

M: 44bd724d0004ffd7224279a6cd6c79711cb11616 172.38.0.13:6379

slots:[10923-16383] (5461 slots) master

1 additional replica(s)

S: f047a2ac74d6edbdec4cdddf1cc021474fdc24ae 172.38.0.15:6379

slots: (0 slots) slave

replicates e4222a3c94850bd1bd059d3c3d723d7b506889c4

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

- Open redis-cli and view the cluster information

/data # redis-cli -c

127.0.0.1:6379> cluster info

cluster_state:ok

cluster_slots_assigned:16384

cluster_slots_ok:16384

cluster_slots_pfail:0

cluster_slots_fail:0

cluster_known_nodes:6 // six nodes

cluster_size:3

cluster_current_epoch:6

cluster_my_epoch:1

cluster_stats_messages_ping_sent:141

cluster_stats_messages_pong_sent:145

cluster_stats_messages_sent:286

cluster_stats_messages_ping_received:140

cluster_stats_messages_pong_received:141

cluster_stats_messages_meet_received:5

cluster_stats_messages_received:286

- View the master/replica relationships among the cluster nodes

127.0.0.1:6379> cluster nodes

4e73d13b08b002656b98c5469708499af4b063ec 172.38.0.12:6379@16379 master - 0 1640871895601 2 connected 5461-10922

10e6b9572c964c5e4d52e1a77f5fa89bcfaae4c8 172.38.0.16:6379@16379 slave 4e73d13b08b002656b98c5469708499af4b063ec 0 1640871896635 6 connected

c7f852962a48a82287f84b0f3b7a5e0c1388e598 172.38.0.14:6379@16379 slave 44bd724d0004ffd7224279a6cd6c79711cb11616 0 1640871895000 4 connected

44bd724d0004ffd7224279a6cd6c79711cb11616 172.38.0.13:6379@16379 master - 0 1640871896000 3 connected 10923-16383

f047a2ac74d6edbdec4cdddf1cc021474fdc24ae 172.38.0.15:6379@16379 slave e4222a3c94850bd1bd059d3c3d723d7b506889c4 0 1640871897151 5 connected

e4222a3c94850bd1bd059d3c3d723d7b506889c4 172.38.0.11:6379@16379 myself,master - 0 1640871896000 1 connected 0-5460

- Set key a to value b

127.0.0.1:6379> set a b

-> Redirected to slot [15495] located at 172.38.0.13:6379

OK
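The redirect to slot 15495 is not arbitrary: Redis Cluster maps a key to CRC16(key) mod 16384 and sends the command to the master that owns that slot. The sketch below recomputes the slot in plain shell. The function names are hypothetical, the code handles ASCII keys only, it ignores hash tags in braces, and slot_owner simply hard-codes the three ranges from the cluster creation output above.

```shell
# Assumed helpers, not from the original article.

# crc16_xmodem STRING -> CRC16 with polynomial 0x1021 and initial value 0
crc16_xmodem() {
  s=$1
  crc=0
  while [ -n "$s" ]; do
    c=${s%"${s#?}"}                 # first character of s
    s=${s#?}                        # rest of s
    byte=$(printf '%d' "'$c")       # character code
    crc=$(( crc ^ (byte << 8) ))
    j=0
    while [ "$j" -lt 8 ]; do
      if [ $(( crc & 0x8000 )) -ne 0 ]; then
        crc=$(( ((crc << 1) ^ 0x1021) & 0xFFFF ))
      else
        crc=$(( (crc << 1) & 0xFFFF ))
      fi
      j=$(( j + 1 ))
    done
  done
  echo "$crc"
}

# key_slot KEY -> hash slot in 0..16383
key_slot() {
  echo $(( $(crc16_xmodem "$1") % 16384 ))
}

# slot_owner SLOT -> master address, using the ranges assigned above
slot_owner() {
  if [ "$1" -le 5460 ]; then
    echo 172.38.0.11:6379
  elif [ "$1" -le 10922 ]; then
    echo 172.38.0.12:6379
  else
    echo 172.38.0.13:6379
  fi
}
```

key_slot a yields 15495, and slot_owner 15495 yields 172.38.0.13:6379, matching the redirect shown above.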

- Stop the Redis container of the master that holds the key to verify failover

[root@iZ70eyv5ttqkcsZ conf]# docker stop redis-3

redis-3

# The value is now served by 172.38.0.14:6379, the replica of the stopped master

127.0.0.1:6379> get a

-> Redirected to slot [15495] located at 172.38.0.14:6379

"b"

# The master 172.38.0.13:6379 has been marked as fail; after an election its replica 172.38.0.14:6379 was promoted to master, and the value was read from it

172.38.0.14:6379> cluster nodes

c7f852962a48a82287f84b0f3b7a5e0c1388e598 172.38.0.14:6379@16379 myself,master - 0 1640872271000 8 connected 10923-16383

44bd724d0004ffd7224279a6cd6c79711cb11616 172.38.0.13:6379@16379 master,fail - 1640872163186 1640872162568 3 connected

e4222a3c94850bd1bd059d3c3d723d7b506889c4 172.38.0.11:6379@16379 master - 0 1640872271000 1 connected 0-5460

4e73d13b08b002656b98c5469708499af4b063ec 172.38.0.12:6379@16379 master - 0 1640872272539 2 connected 5461-10922

f047a2ac74d6edbdec4cdddf1cc021474fdc24ae 172.38.0.15:6379@16379 slave e4222a3c94850bd1bd059d3c3d723d7b506889c4 0 1640872272000 5 connected

10e6b9572c964c5e4d52e1a77f5fa89bcfaae4c8 172.38.0.16:6379@16379 slave 4e73d13b08b002656b98c5469708499af4b063ec 0 1640872272130 2 connected
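Reading the flags column by eye gets tedious on larger clusters. As a sketch, the filter below reads cluster nodes output on standard input and prints only the masters that are not flagged as failed. The function name healthy_masters is an assumption, and the field positions follow the output shown above (address in column 2, flags in column 3).

```shell
# Assumed helper, not from the original article.

# healthy_masters: reads `cluster nodes` output on stdin and prints the
# address (without the @bus-port suffix) of each master that carries
# neither the "fail" nor the "fail?" flag.
healthy_masters() {
  awk '{
    n = split($3, flags, ",")
    is_master = 0
    failed = 0
    for (i = 1; i <= n; i++) {
      if (flags[i] == "master") is_master = 1
      if (flags[i] == "fail" || flags[i] == "fail?") failed = 1
    }
    if (is_master && !failed) {
      split($2, addr, "@")
      print addr[1]
    }
  }'
}
```

A typical call would be docker exec redis-1 redis-cli cluster nodes | healthy_masters. For the listing above it prints 172.38.0.14:6379, 172.38.0.11:6379 and 172.38.0.12:6379, leaving out the failed 172.38.0.13:6379.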

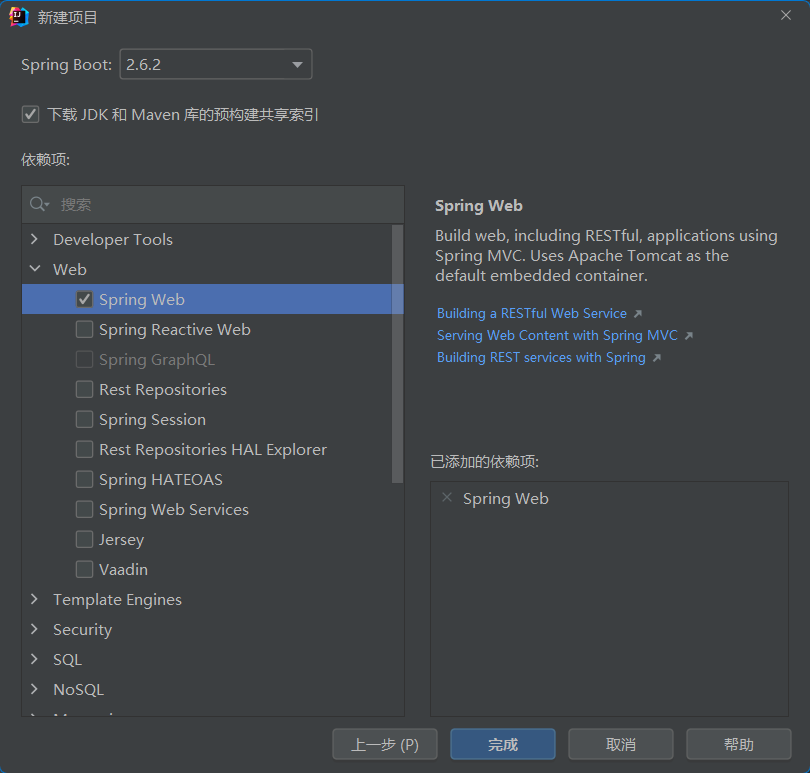

Packaging a Spring Boot microservice as a Docker image

- Create a Spring Boot project

- Write the controller class

package com.example.demo.controller;

import org.springframework.web.bind.annotation.RequestMapping;

import org.springframework.web.bind.annotation.RestController;

@RestController

public class HelloController {

@RequestMapping("/hello")

public String hello(){

return "hello,world";

}

}

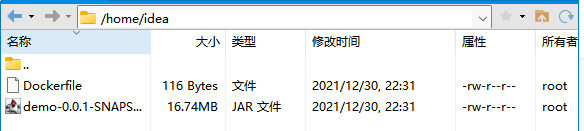

- Write the Dockerfile

FROM java:8

COPY *.jar /app.jar

CMD ["--server.port=8080"]

EXPOSE 8080

ENTRYPOINT ["java","-jar","/app.jar"]

-

打包为jar包并将jar包和Dockerfile文件一起放在linux某一个文件夹中,我放在了/home/idea中

-

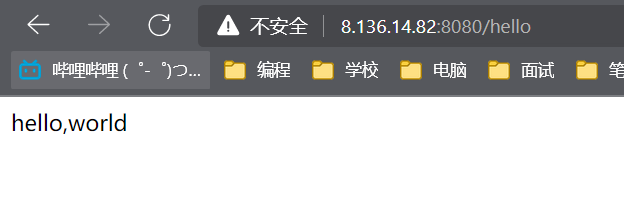

build一个镜像并运行

docker build -t demo .

docker run -d -p 8080:8080 --name demo-springboot-web demo

[root@iZ70eyv5ttqkcsZ idea]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

86dab60c2b20 demo "java -jar /app.ja..." 3 seconds ago Up 2 seconds 0.0.0.0:8080->8080/tcp demo-springboot-web
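Once the container is up, the /hello endpoint from HelloController can be checked from the host. A minimal sketch follows; the function name check_hello and the CURL override variable are assumptions, and the defaults match the -p 8080:8080 mapping used above.

```shell
# Assumed helper, not from the original article.
# Override CURL (for example CURL=echo) for a dry run.
CURL="${CURL:-curl}"

# check_hello [HOST] [PORT]
# Requests /hello; -f makes curl exit non-zero on an HTTP error status.
check_hello() {
  "$CURL" -fsS "http://${1:-localhost}:${2:-8080}/hello"
}
```

If the service is running, the call should return the string produced by the controller, hello,world.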

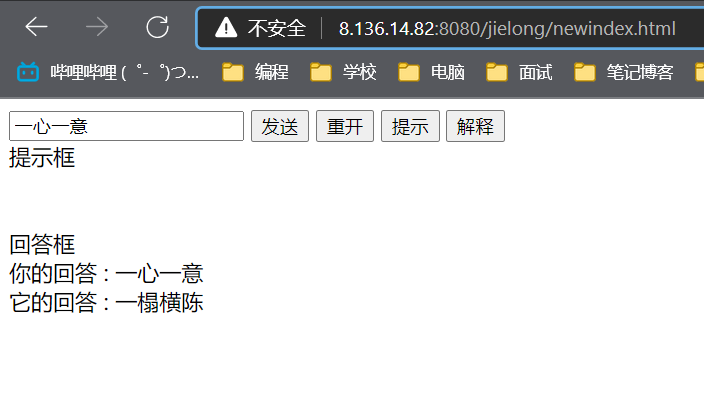

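Result

Test: deploying an idiom-chain (成语接龙) project with Tomcat, MySQL, and JDK 1.8

Create a Docker network for container communication

# Already created earlier, with subnet 192.168.0.0/16

# and gateway 192.168.0.1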

[root@iZ70eyv5ttqkcsZ ~]# docker network create --driver bridge --subnet 192.168.0.0/16 --gateway 192.168.0.1 mynet

# The mynet details below show that the MySQL container's IP is 192.168.0.2 and Tomcat's is 192.168.0.3

[root@iZ70eyv5ttqkcsZ classes]# docker network ls

NETWORK ID NAME DRIVER SCOPE

b8ea21e99b8a bridge bridge local

92d4ae51b461 host host local

ee8ffcd2dc27 mynet bridge local

fb8882d50635 none null local

c619a62c8c7b redis bridge local

[root@iZ70eyv5ttqkcsZ classes]# docker network inspect mynet

[

{

"Name": "mynet",

"Id": "ee8ffcd2dc2705d5979ee85b4fead8774f240b056e04d9572f7cbd2d63a67b21",

"Created": "2021-12-30T18:22:07.420759386+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "192.168.0.0/16",

"Gateway": "192.168.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {

"2ca9d202e2bd62144d5d8761469bde5dad3c7fa9c39f80123006e793a649085b": {

"Name": "yjwtomcat",

"EndpointID": "8f8e4efb39041394e3813c0588babee32adfec236ba55280a73f34554ab9928d",

"MacAddress": "02:42:c0:a8:00:03",

"IPv4Address": "192.168.0.3/16",

"IPv6Address": ""

},

"60c29708128ae9633b6af4a3d052cf40a5fb683da52a5c20ba10882d7f11f00d": {

"Name": "mysqltest",

"EndpointID": "73cd7e30a7638375fb9f30bcebdf1904267eee2bbacc38217991ba3ded736c4b",

"MacAddress": "02:42:c0:a8:00:02",

"IPv4Address": "192.168.0.2/16",

"IPv6Address": ""

}

},

"Options": {},

"Labels": {}

}

]

Writing the MySQL Dockerfile

FROM mysql:5.7

MAINTAINER yjw_mysql:5.7

VOLUME /etc/mysql/conf

VOLUME /var/lib/mysql

ENV MYSQL_ROOT_PASSWORD 1234

EXPOSE 3306

Writing the Tomcat Dockerfile

FROM centos

MAINTAINER yjw

COPY readme.txt /usr/local/readme.txt

ADD jdk-8u181-linux-x64.tar.gz /usr/local

ADD apache-tomcat-8.5.73.tar.gz /usr/local

RUN yum -y install vim

ENV MYPATH /usr/local

WORKDIR $MYPATH

ENV JAVA_HOME /usr/local/jdk1.8.0_181

ENV CLASSPATH $JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

ENV CATALINA_HOME /usr/local/apache-tomcat-8.5.73

ENV CATALINA_BASE /usr/local/apache-tomcat-8.5.73

ENV PATH $PATH:$JAVA_HOME/bin:$CATALINA_HOME/lib:$CATALINA_HOME/bin

EXPOSE 8080

CMD /usr/local/apache-tomcat-8.5.73/bin/startup.sh && tail -F /usr/local/apache-tomcat-8.5.73/logs/catalina.out

#docker build

docker build -t yjwtomcat:1.0 .

docker run -d -p3306:3306 -v /home/mysql/conf:/etc/mysql/conf -v /home/mysql/data:/var/lib/mysql -e MYSQL_ROOT_PASSWORD=1234 --name mysqltest --net mynet mysql:5.7

docker run -d -p 8080:8080 --name yjwtomcat --net mynet -v /home/newtomcat/webapps:/usr/local/apache-tomcat-8.5.73/webapps/ -v /home/newtomcat/logs/:/usr/local/apache-tomcat-8.5.73/logs yjwtomcat:1.0

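Importing the database

MySQL version of the SQL dump (sql.db): 5.1.47

Import the database with mysql -uUSERNAME -pPASSWORD < DUMP_FILE.sql; there must be no space between -p and the password.

Because the database's default encoding is latin1, Chinese text comes out garbled, so the default encoding needs to be changed to UTF-8.

- First log in to the database

- Run show variables like 'character%'; to query the encoding settings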

+--------------------------+----------------------------+

| Variable_name | Value |

+--------------------------+----------------------------+

| character_set_client | latin1 |

| character_set_connection | latin1 |

| character_set_database | latin1 |

| character_set_filesystem | binary |

| character_set_results | latin1 |

| character_set_server | latin1 |

| character_set_system | utf8 |

| character_sets_dir | /usr/share/mysql/charsets/ |

+--------------------------+----------------------------+

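- character_set_client is the encoding used by the client;

- character_set_connection is the encoding used for the connection;

- character_set_database is the encoding of the database;

- character_set_results is the encoding of result sets;

- character_set_server is the encoding of the database server

As long as these settings all use the same encoding, garbled text does not occur.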

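On Linux, the steps to change MySQL's default encoding are:

- Stop MySQL. /etc/init.d/mysql start and /etc/init.d/mysql stop start and stop the server.

- The main MySQL configuration file is my.cnf, usually found under /etc/mysql.

- /var/lib/mysql/ holds the database table directories; it corresponds to MySQL's data folder on Windows.

To change MySQL's default encoding, edit my.cnf; on Linux the file is located at /etc/my.cnf by default.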

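- Find the [client] section and add below it

default-character-set=utf8    # default character set for clients

- Then find the [mysqld] section and add

character-set-server=utf8    # server default character set; MySQL 5.5 and later reject default-character-set under [mysqld]

init_connect='SET NAMES utf8'    # makes connecting clients use utf8

- After the change, restart MySQL; querying the encoding again shows the new settings: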

show variables like 'character%';

+--------------------------+----------------------------+

| Variable_name | Value |

+--------------------------+----------------------------+

| character_set_client | utf8 |

| character_set_connection | utf8 |

| character_set_database | utf8 |

| character_set_filesystem | binary |

| character_set_results | utf8 |

| character_set_server | utf8 |

| character_set_system | utf8 |

| character_sets_dir | /usr/share/mysql/charsets/ |
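The configuration change can also be scripted. The sketch below writes the settings to a file of your choice. The function name write_utf8_cnf is hypothetical, and the fragment assumes MySQL 5.5 or later, where the server-side option is character-set-server rather than default-character-set.

```shell
# Assumed helper, not from the original article.

# write_utf8_cnf FILE
# Writes a my.cnf fragment that sets utf8 for clients and for the server.
write_utf8_cnf() {
  cat > "$1" <<'EOF'
[client]
default-character-set=utf8

[mysqld]
character-set-server=utf8
init_connect='SET NAMES utf8'
EOF
}
```

For the containerized MySQL used here, the file has to end up in a directory the server actually reads. Which directory that is depends on the image, so treat the target path as something to verify against the image documentation before restarting the container.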

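Packaging the project

Package the project as a war file with Maven.

Place it in the mounted directory /home/newtomcat/webapps; Tomcat in the container then unpacks it automatically.

Update the project's database connection pool configuration

In c3p0-config.xml under /home/newtomcat/webapps/jielong/WEB-INF/classes, change the IP address in the jdbcUrl property to the MySQL container's address (192.168.0.2 on mynet).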

<?xml version="1.0" encoding="UTF-8"?>

<c3p0-config>

<!-- Default configuration -->

<default-config>

<property name="initialPoolSize">10</property>

<property name="maxIdleTime">30</property>

<property name="maxPoolSize">100</property>

<property name="minPoolSize">10</property>

<property name="maxStatements">200</property>

</default-config>

<!-- Connection pool configuration: mysql -->

<named-config name="mysql">

<property name="driverClass">com.mysql.jdbc.Driver</property>

<!-- <property name="jdbcUrl">jdbc:mysql://localhost/jielong?useUnicode=true&characterEncoding=UTF-8</property>-->

<property name="jdbcUrl">jdbc:mysql://192.168.0.2:3306/jielong?useUnicode=true&amp;characterEncoding=UTF-8</property>

<property name="useUnicode">true</property>

<property name="characterEncoding">UTF-8</property>

<property name="user">root</property>

<property name="password">1234</property>

<property name="initialPoolSize">10</property>

<property name="maxIdleTime">30</property>

<property name="maxPoolSize">100</property>

<property name="minPoolSize">10</property>

<property name="maxStatements">200</property>

<property name="testConnectionOnCheckin">true</property>

</named-config>

<!-- Connection pool configuration 2 -->

......

</c3p0-config>
