Lab environment:

| Hostname (IP) | Services |
|---|---|
| server4 (172.25.13.4) | hdfs, nfs (namenode) |
| server5 (172.25.13.5) | nfs (datanode) |
| server6 (172.25.13.6) | nfs (datanode) |

1. Deployment and testing

Installation packages:

hadoop-3.0.3.tar.gz

jdk-8u181-linux-x64.tar.gz

### Create the hadoop user and set its password

[root@server4 mnt]# useradd -u 1000 hadoop

[root@server4 ~]# passwd hadoop

##### Move the archives to the hadoop user's home directory, switch to that user, and set up the Java runtime

[root@server4 mnt]# mv *.gz /home/hadoop/

[root@server4 mnt]# su - hadoop

Last login: Sun Aug 18 02:41:26 EDT 2019 on pts/0

[hadoop@server4 ~]$ ls

hadoop-3.0.3.tar.gz jdk-8u181-linux-x64.tar.gz

[hadoop@server4 ~]$ tar zxf jdk-8u181-linux-x64.tar.gz

[hadoop@server4 ~]$ tar zxf hadoop-3.0.3.tar.gz

[hadoop@server4 ~]$ ls

hadoop-3.0.3 jdk1.8.0_181

hadoop-3.0.3.tar.gz jdk-8u181-linux-x64.tar.gz

[hadoop@server4 ~]$ ln -s jdk1.8.0_181/ java

[hadoop@server4 ~]$ ln -s hadoop-3.0.3 hadoop

[hadoop@server4 ~]$ ls

hadoop hadoop-3.0.3.tar.gz jdk1.8.0_181

hadoop-3.0.3 java jdk-8u181-linux-x64.tar.gz

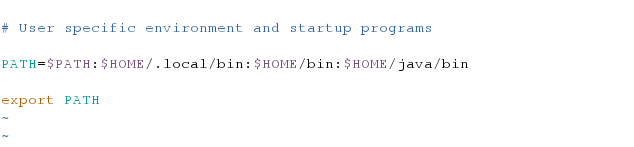

###### Configure the Java environment variables

[hadoop@server4 ~]$ vim .bash_profile

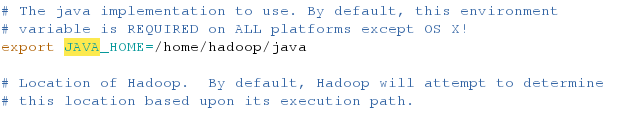
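The edit itself is only visible in the screenshot. A minimal sketch of lines that would fit this setup, assuming the `java` symlink created earlier sits in `/home/hadoop`; the exact lines in the author's profile may differ:

```shell
# Assumed additions to ~/.bash_profile; the path follows the symlink created earlier.
export JAVA_HOME=/home/hadoop/java      # symlink pointing at jdk1.8.0_181
export PATH="$PATH:$JAVA_HOME/bin"      # puts java and jps on the search path
```

After saving, `source ~/.bash_profile` reloads the profile in the current shell.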

###### Add the Java path to the Hadoop configuration file

[hadoop@server4 ~]$ cd hadoop

[hadoop@server4 hadoop]$ ls

bin include libexec NOTICE.txt sbin

etc lib LICENSE.txt README.txt share

[hadoop@server4 hadoop]$ cd etc

[hadoop@server4 etc]$ ls

hadoop

[hadoop@server4 etc]$ cd hadoop/

[hadoop@server4 hadoop]$ ls

[hadoop@server4 hadoop]$ vim hadoop-env.sh
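The line added to hadoop-env.sh is not shown here. Hadoop's launch scripts read `JAVA_HOME` from this file, so a sketch along these lines should be close, assuming the `java` symlink under `/home/hadoop`. `ENV_FILE` is a hypothetical variable that defaults to a scratch file so the snippet is safe to try:

```shell
# Hypothetical helper variable: defaults to a scratch file rather than the real hadoop-env.sh.
ENV_FILE="${ENV_FILE:-$(mktemp)}"

# Append JAVA_HOME only if no active (uncommented) export is already present.
if ! grep -q '^export JAVA_HOME=' "$ENV_FILE"; then
    echo 'export JAVA_HOME=/home/hadoop/java' >> "$ENV_FILE"
fi
```

To apply it for real, run it with `ENV_FILE=etc/hadoop/hadoop-env.sh` from the Hadoop directory.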

Test:

[hadoop@server4 hadoop]$ mkdir input

###### Copy the XML files from etc/hadoop into the input directory to serve as sample input

[hadoop@server4 hadoop]$ cp etc/hadoop/*.xml input

###### Run the example job and write its results to the output directory:

[hadoop@server4 hadoop]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.0.3.jar grep input output 'dfs[a-z.]+'

[hadoop@server4 hadoop]$ ls

bin include lib LICENSE.txt NOTICE.txt README.txt share

etc input libexec logs output sbin

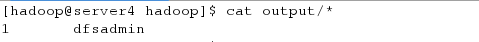

###### The output directory shows that the job succeeded:

[hadoop@server4 hadoop]$ cat output/*

1 dfsadmin

[hadoop@server4 hadoop]$ cd output/

[hadoop@server4 output]$ ls

part-r-00000 _SUCCESS
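For intuition, the grep example counts how often each distinct string matching the regular expression occurs in the input files. A rough local equivalent with standard tools might look like the sketch below; it runs on a made-up sample file (its contents are an assumption for illustration), not on the real configuration files:

```shell
# Build a throwaway sample input; the contents are illustrative only.
sample_dir="$(mktemp -d)"
printf '%s\n' 'run dfsadmin to refresh' 'see dfs.replication' 'no match here' \
    > "$sample_dir/sample.xml"

# Extract every match of the pattern, then count occurrences of each distinct match.
result="$(grep -ohE 'dfs[a-z.]+' "$sample_dir"/*.xml | sort | uniq -c | sort -rn)"
echo "$result"
```

Each output line pairs a count with a matched string, which is the same shape as the `1 dfsadmin` line in part-r-00000.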

2. Pseudo-distributed mode

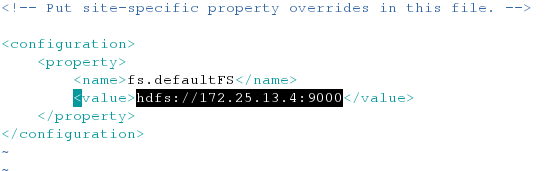

#### Edit the configuration files:

[hadoop@server4 hadoop]$ vim etc/hadoop/core-site.xml

Set the property fs.defaultFS to hdfs://localhost:9000, so that clients reach the NameNode on the local host.

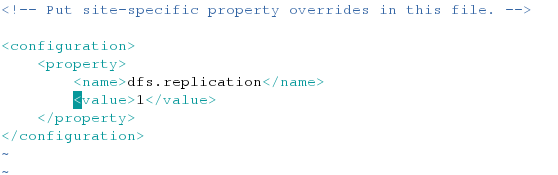

[hadoop@server4 hadoop]$ vim etc/hadoop/hdfs-site.xml

Set the property dfs.replication to 1, since a single DataNode can hold only one replica of each block.
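Both settings go inside a `<configuration>` element as `<property>` entries. A sketch of the two files, written here through shell here-documents; `CONF_DIR` is a hypothetical variable that defaults to a scratch directory, so point it at etc/hadoop only when you mean to overwrite the real files:

```shell
# Hypothetical helper variable: scratch directory by default.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# core-site.xml: clients reach the NameNode at localhost:9000.
cat > "$CONF_DIR/core-site.xml" <<'EOF'
<configuration>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://localhost:9000</value>
    </property>
</configuration>
EOF

# hdfs-site.xml: one replica per block, since there is only one DataNode.
cat > "$CONF_DIR/hdfs-site.xml" <<'EOF'
<configuration>
    <property>
        <name>dfs.replication</name>
        <value>1</value>
    </property>
</configuration>
EOF
```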

##### Generate an SSH key pair and set up passwordless login to the local host

[hadoop@server4 hadoop]$ ssh-keygen

[hadoop@server4 hadoop]$ ssh-copy-id localhost

#### Format the NameNode and start the HDFS service

[hadoop@server4 hadoop]$ bin/hdfs namenode -format

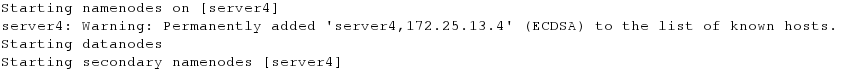

[hadoop@server4 hadoop]$ sbin/start-dfs.sh

##### Once the service has started, the corresponding daemons are running

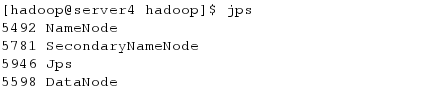

[hadoop@server4 hadoop]$ jps

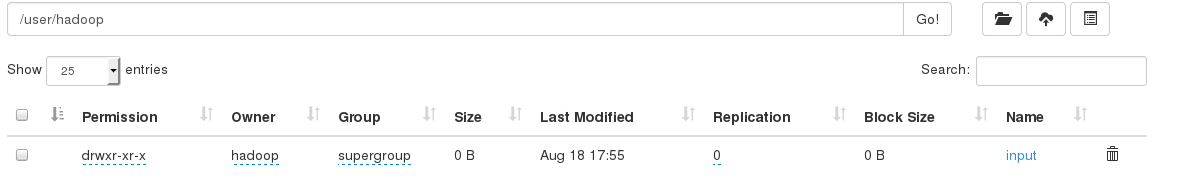
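On a single node, `jps` would normally list NameNode, DataNode and SecondaryNameNode after `sbin/start-dfs.sh`. A small sketch of a check follows; `missing_daemons` is a hypothetical helper, and the process IDs in the example are canned values, not output from this host:

```shell
# Hypothetical helper: reads `jps` output on stdin and prints expected daemons not found.
missing_daemons() {
    listing="$(cat)"
    for name in NameNode DataNode SecondaryNameNode; do
        # Match "<pid> <name>" exactly, so NameNode does not match SecondaryNameNode.
        echo "$listing" | grep -qE "^[0-9]+ ${name}\$" || echo "$name"
    done
}

# Example with canned output; on the real host use: jps | missing_daemons
printf '%s\n' '2001 NameNode' '2102 DataNode' '2310 Jps' | missing_daemons
```

An empty result means all three daemons are up. Anything printed is worth looking up under the logs directory.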

##### View in a browser

http://172.25.13.4:9870

#### Test: create directories and upload files

[hadoop@server4 hadoop]$ bin/hdfs dfs -mkdir /user

[hadoop@server4 hadoop]$ bin/hdfs dfs -mkdir /user/hadoop

[hadoop@server4 hadoop]$ bin/hdfs dfs -mkdir input

[hadoop@server4 hadoop]$ bin/hdfs dfs -put etc/hadoop/*.xml input

View the result in the web UI:
