接触爬虫也有一段时间了,起初都是使用request库爬取数据,并没有使用过什么爬虫框架。之前仅仅是好奇,这两天看了一下scrapy文档,也试着去爬了一些数据,发现还真是好用。

以下以爬 易车网的销售指数为例。具体过程就不多说了;

需要的字段:

- 时间(年月);

- 销售量;

- 类别(包括小型、微型、中型、紧凑型、中大型、SUV、MPV、品牌、厂商);

- 车型。

#分析网站

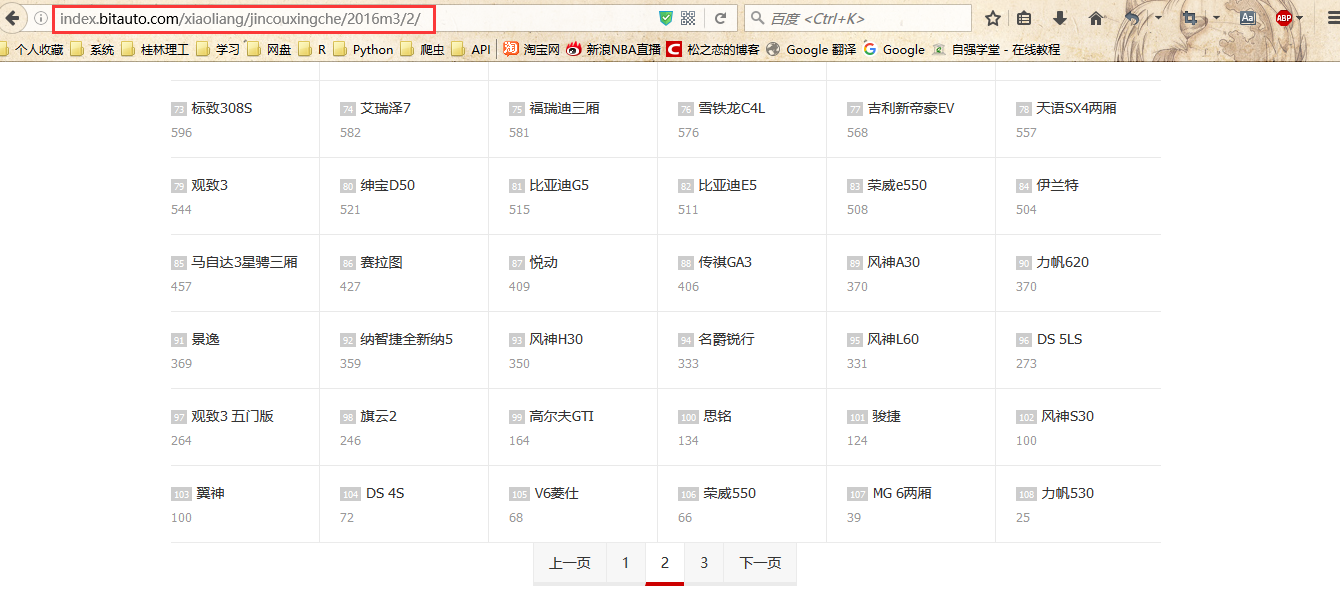

- 分析URL http://index.bitauto.com/xiaoliang/jincouxingche/2016m3/2/,我们发现url中的2016是年份,3是月份,2是页码,jincouxingche是类别;

- 只需要改变URL中的年份、月份、页码、类别,就可以请求到不同的数据。

--------------------------------------------------------------分割线部分---------------------------------------------------------------

注意:再深入我们发现数据也是动态加载的,返回的是aspx页面。而上面请求的是静态页面,返回html。

--------------------------------------------------------------分割线---------------------------------------------------------------

编写spiders

# -*- coding:utf-8 -*-

import scrapy

from scrapy.http import Request

import re

# create UrlList

url_list = []

Type = ['changshang','pinpai','jincouxingche','xiaoxingche','weixingche','zhongxingche','zhongdaxingche','suv','mpv']

for t in Type:

for year in range(2009,2016):

for m in range(1,13):

url = 'http://index.bitauto.com/xiaoliang/'+t+'/'+str(year)+'m'+str(m)+'/'

url_list.append(url)

class YicheItem(scrapy.Item):

# define the fields for your item here like:

Date = scrapy.Field()

CarName = scrapy.Field()

Type = scrapy.Field()

SalesNum = scrapy.Field()

class YicheSpider(scrapy.spiders.Spider):

name = "yiche"

allowed_domains = ["index.bitauto.com"]

start_urls = url_list

def parse(self, response):

# 获取第一个页面的数据

s = response.url

t,year,m = re.findall('xiaoliang/(.*?)/(\d+)m(\d+)',s,re.S)[0]

for sel in response.xpath('//ol/li'):

Name = sel.xpath('a/text()').extract()[0]

SalesNum = sel.xpath('span/text()').extract()[0]

#print Name,SalesNum

items = YicheItem()

items['Date'] = str(year)+'/'+str(m)

items['CarName'] = Name

items['Type'] = t

items['SalesNum'] = SalesNum

yield items

# 判断是否还有下一页,如果没有跳过,有则爬取下一个页面

if len(response.xpath('//div[@class="the_pages"]/@class').extract())==0:

pass

else:

next_pageclass = response.xpath('//div[@class="the_pages"]/div/span[@class="next_off"]/@class').extract()

next_page = response.xpath('//div[@class="the_pages"]/div/span[@class="next_off"]/text()').extract()

if len(next_page)!=0 and len(next_pageclass)!=0:

pass

else:

next_url = 'http://index.bitauto.com'+response.xpath('//div[@class="the_pages"]/div/a/@href')[-1].extract()

yield Request(next_url, callback=self.parse)

保存到数据库

-

在mysql数据库中新建用于保存数据的表格;

-

修改settings.py文件:

ITEM_PIPELINES = {

'yiche.pipelines.YichePipeline': 300,

}

- 修改文件pipeline.py,代码如下:

# -*- coding: utf-8 -*-

# Define your item pipelines here

#

# Don't forget to add your pipeline to the ITEM_PIPELINES setting

# See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html

import MySQLdb

import MySQLdb.cursors

import logging

from twisted.enterprise import adbapi

class YichePipeline(object):

def __init__(self):

self.dbpool = adbapi.ConnectionPool(

dbapiName ='MySQLdb',#数据库类型,我这里是mysql

host ='127.0.0.1',#IP地址,这里是本地

db = 'scrapy',#数据库名称

user = 'root',#用户名

passwd = 'root',#密码

cursorclass = MySQLdb.cursors.DictCursor,

charset = 'utf8',#使用编码类型

use_unicode = False

)

# pipeline dafault function

def process_item(self, item, spider):

query = self.dbpool.runInteraction(self._conditional_insert, item)

logging.debug(query)

return item

# 插入数据到数据库

def _conditional_insert(self, tx, item):

parms = (item['Date'],item['CarName'],item['Type'],item['SalesNum'])

sql = "insert into yiche (Date,CarName,Type,SalesNum) values('%s','%s','%s','%s') " % parms

#logging.debug(sql)

tx.execute(sql)

运行程序

命令行执行:

scrapy crawl yiche

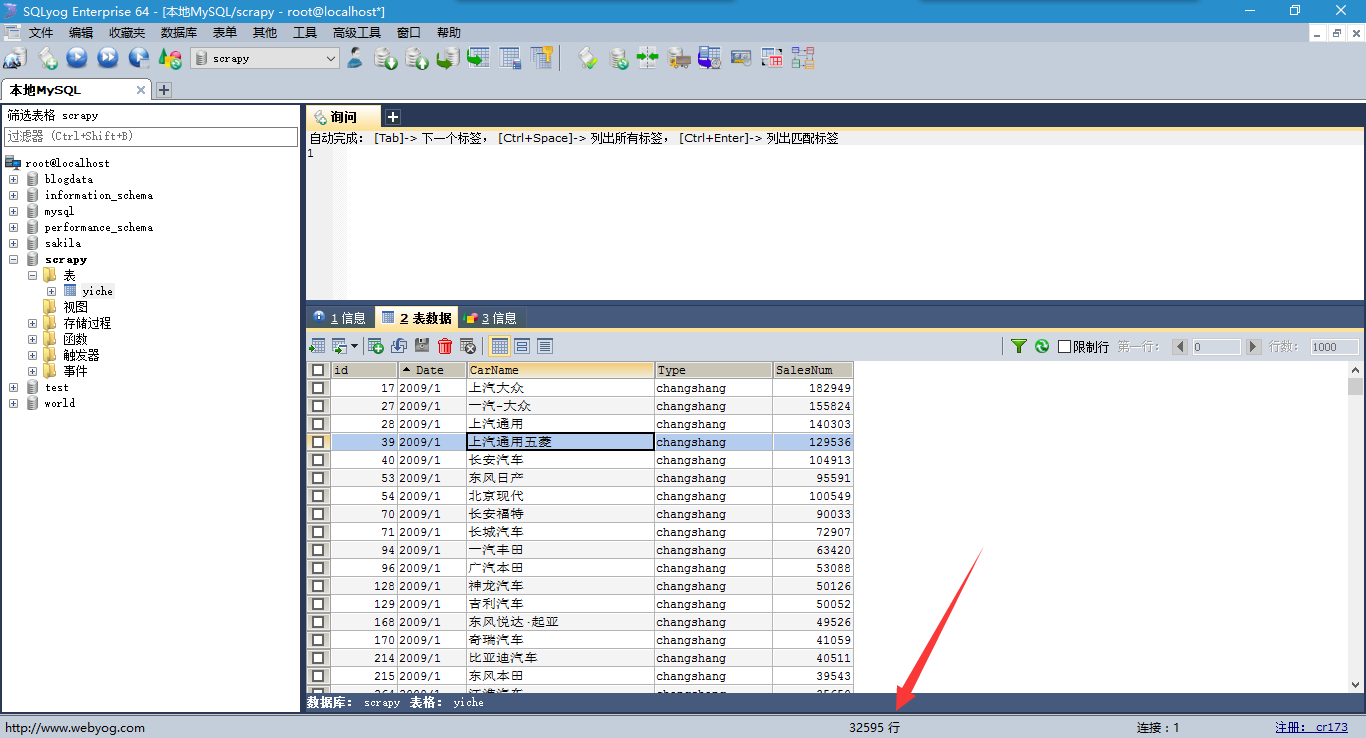

查看数据:

ok,数据有大概3W+条记录。

欢迎访问我的个人站点:http://bgods.cn/

1111

1111

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?