1. Xception Overview

Xception, proposed in 2017, is a lightweight network that balances accuracy against parameter count. The name is short for "Extreme Inception".

2. Key Innovation

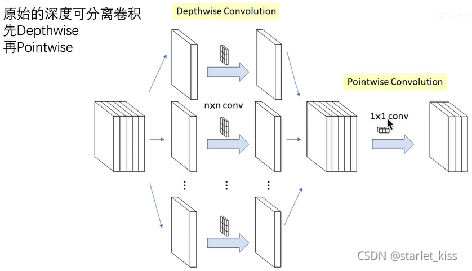

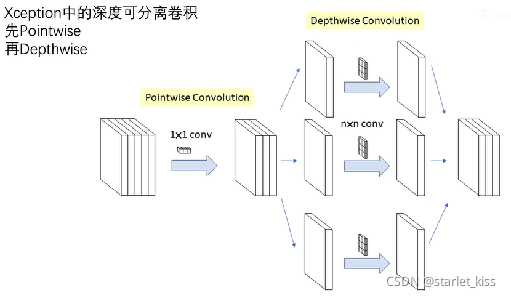

Xception introduces a variant of the depthwise separable convolution.
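To show why separable convolutions save parameters, here is a minimal pure-Python sketch that counts the weights of a regular convolution and of a depthwise separable one. The helper names are made up for illustration, and biases are ignored (as with `use_bias=False` in the implementation section). With a depth multiplier of 1, the separable count is depthwise `k*k*c_in` plus pointwise `c_in*c_out`.

```python
# Weight counts for one conv layer, biases omitted.

def conv2d_params(k, c_in, c_out):
    """Regular k x k convolution: every filter spans all input channels."""
    return k * k * c_in * c_out


def separable_conv2d_params(k, c_in, c_out):
    """Depthwise separable convolution (depth multiplier 1):
    depthwise step = one k x k filter per input channel,
    pointwise step = a 1 x 1 convolution mixing c_in channels into c_out."""
    depthwise = k * k * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


# 3x3 kernel, 728 -> 728 channels (the width of Xception's middle flow)
regular = conv2d_params(3, 728, 728)
separable = separable_conv2d_params(3, 728, 728)
print(regular, separable, round(regular / separable, 2))  # 4769856 536536 8.89
```

For this layer shape, the separable version needs roughly 9x fewer weights than a regular 3x3 convolution.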

Why call it a *variant* of depthwise separable convolution?

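1) Standard depthwise separable convolution: a depthwise convolution first applies one k×k spatial filter to each input channel separately, and then a 1×1 pointwise convolution mixes the channels.

2) Xception's version: a 1×1 pointwise convolution first maps cross-channel correlations, and then a separate spatial filter is applied to each resulting output channel. This is the "extreme" form of an Inception module.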

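Xception's version performs the two steps in the opposite order to the standard depthwise separable convolution. However, the paper's author argues that this difference has little effect on performance or final results, largely because these operations are stacked one after another. The implementation therefore uses the standard depthwise separable convolution.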

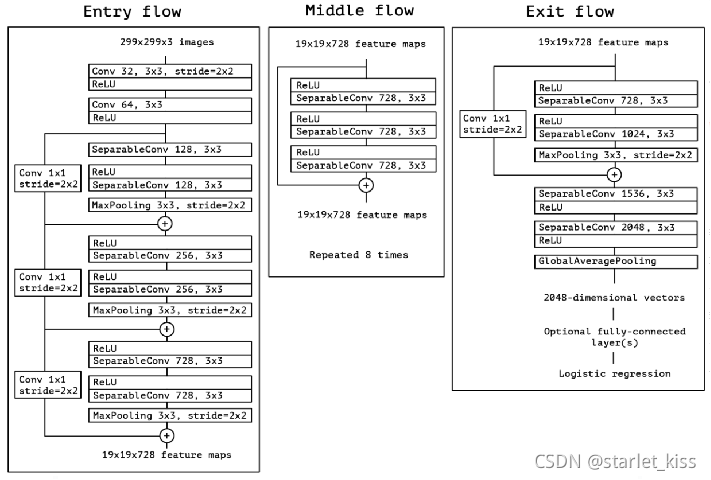
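The two orders can be compared with a small framework-free sketch. The helper functions below are hypothetical illustrations, not Keras APIs, and the convolutions use no padding. Note that the depthwise step needs one kernel per input channel in the standard order but one per output channel in Xception's order. Because both steps are linear, the two orders give exactly the same result when every channel uses the same spatial kernel (as in this example). With distinct per-channel kernels, or a nonlinearity between the steps, they generally differ.

```python
# A feature map is a list of channels; each channel is an H x W list of lists.

def depthwise_conv(x, kernels):
    """Valid (no padding) depthwise conv: channel c is filtered only by kernels[c]."""
    out = []
    for channel, kernel in zip(x, kernels):
        k = len(kernel)
        h, w = len(channel), len(channel[0])
        out.append([[sum(channel[i + a][j + b] * kernel[a][b]
                         for a in range(k) for b in range(k))
                     for j in range(w - k + 1)]
                    for i in range(h - k + 1)])
    return out


def pointwise_conv(x, weights):
    """1x1 conv: output channel o at each pixel is sum_c weights[o][c] * x[c]."""
    h, w = len(x[0]), len(x[0][0])
    return [[[sum(weights[o][c] * x[c][i][j] for c in range(len(x)))
              for j in range(w)]
             for i in range(h)]
            for o in range(len(weights))]


def shape(x):
    return (len(x), len(x[0]), len(x[0][0]))


def standard_separable(x, dw_kernels, pw_weights):
    # depthwise first (one kernel per INPUT channel), then 1x1 channel mixing
    return pointwise_conv(depthwise_conv(x, dw_kernels), pw_weights)


def xception_style(x, pw_weights, dw_kernels):
    # 1x1 channel mixing first, then depthwise (one kernel per OUTPUT channel)
    return depthwise_conv(pointwise_conv(x, pw_weights), dw_kernels)


# 2 input channels of size 4x4, mapped to 3 output channels
x = [[[r * 4 + c for c in range(4)] for r in range(4)],
     [[(r + c) % 3 for c in range(4)] for r in range(4)]]
k = [[1, 0, -1], [2, 0, -2], [1, 0, -1]]   # one shared 3x3 spatial filter
pw = [[1, 2], [0, 1], [-1, 1]]             # 3 x 2 channel-mixing weights

a = standard_separable(x, [k, k], pw)      # 2 depthwise kernels (C_in)
b = xception_style(x, pw, [k, k, k])       # 3 depthwise kernels (C_out)
print(shape(a), shape(b), a == b)          # (3, 2, 2) (3, 2, 2) True
```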

3. Network Architecture
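The paper organizes Xception into an entry flow, a middle flow repeated eight times, and an exit flow. In the implementation, these correspond to blocks 1-4, blocks 5-12, and blocks 13-14. The sketch below traces the spatial size and channel count after each block for a 299×299 input, using Keras's padding rules ('valid': `(n - k) // s + 1`; 'same': `ceil(n / s)`). The function names are hypothetical helpers, not part of any library.

```python
import math


def conv_out(n, k, s, padding):
    """Spatial output size of a conv/pool layer under Keras padding conventions."""
    if padding == "valid":
        return (n - k) // s + 1
    if padding == "same":
        return math.ceil(n / s)
    raise ValueError(f"unknown padding: {padding}")


def trace_xception(n=299):
    sizes = {}
    # block1: 3x3 stride-2 conv, then 3x3 stride-1 conv, both 'valid'
    n = conv_out(n, 3, 2, "valid")
    n = conv_out(n, 3, 1, "valid")
    sizes["block1"] = (n, 64)
    # blocks 2-4: 3x3 stride-2 'same' max-pool; the 1x1 stride-2 shortcut gives the same size
    for name, channels in (("block2", 128), ("block3", 256), ("block4", 728)):
        n = conv_out(n, 3, 2, "same")
        sizes[name] = (n, channels)
    # middle flow: 'same' separable convs, no pooling
    sizes["middle (x8)"] = (n, 728)
    # block13 downsamples once more; block14 keeps the size
    n = conv_out(n, 3, 2, "same")
    sizes["block13"] = (n, 1024)
    sizes["block14"] = (n, 2048)
    return sizes


for block, (size, channels) in trace_xception().items():
    print(f"{block}: {size}x{size}x{channels}")
```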

4. Implementation

```python
from tensorflow.keras import layers
from tensorflow.keras.layers import (Activation, BatchNormalization, Conv2D, Dense,
                                     GlobalAveragePooling2D, Input, MaxPooling2D,
                                     SeparableConv2D)
from tensorflow.keras.models import Model


def Xception(nb_class, input_shape):
    input_ten = Input(shape=input_shape)

    # block1: two regular 3x3 convs ('valid' padding)
    # 299,299,3 -> 149,149,32 -> 147,147,64
    x = Conv2D(32, (3, 3), strides=(2, 2), use_bias=False)(input_ten)
    x = BatchNormalization()(x)
    x = Activation('relu')(x)
    x = Conv2D(64, (3, 3), use_bias=False)(x)
    x = BatchNormalization()(x)
    x = Activation('relu')(x)

    # block2: 147,147,64 -> 74,74,128
    # the shortcut uses a strided 1x1 conv so its shape matches the pooled main path
    residual = Conv2D(128, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)
    x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = Activation('relu')(x)
    x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same')(x)
    x = layers.add([x, residual])

    # block3: 74,74,128 -> 37,37,256
    residual = Conv2D(256, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)
    x = Activation('relu')(x)
    x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = Activation('relu')(x)
    x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same')(x)
    x = layers.add([x, residual])

    # block4: 37,37,256 -> 19,19,728
    residual = Conv2D(728, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)
    x = Activation('relu')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = Activation('relu')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same')(x)
    x = layers.add([x, residual])

    # block5 - block12 (middle flow): 8 identical residual blocks
    # 19,19,728 -> 19,19,728
    for _ in range(8):
        residual = x  # identity shortcut: the shapes already match
        x = Activation('relu')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False)(x)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False)(x)
        x = BatchNormalization()(x)
        x = Activation('relu')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False)(x)
        x = BatchNormalization()(x)
        x = layers.add([x, residual])

    # block13: 19,19,728 -> 10,10,1024
    residual = Conv2D(1024, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)
    x = Activation('relu')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = Activation('relu')(x)
    x = SeparableConv2D(1024, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same')(x)
    x = layers.add([x, residual])

    # block14: 10,10,1024 -> 10,10,2048 (no shortcut)
    x = SeparableConv2D(1536, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = Activation('relu')(x)
    x = SeparableConv2D(2048, (3, 3), padding='same', use_bias=False)(x)
    x = BatchNormalization()(x)
    x = Activation('relu')(x)

    # classifier head: global average pooling + softmax
    x = GlobalAveragePooling2D()(x)
    output_ten = Dense(nb_class, activation='softmax')(x)

    model = Model(input_ten, output_ten)
    return model


img_height, img_width = 299, 299  # assumed input size; set these to match your dataset
model_xception = Xception(24, (img_height, img_width, 3))
model_xception.summary()
```

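The parameter count is modest for a network of this depth (roughly 20.9 million for 24 classes), which makes Xception a classic lightweight network.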
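The parameter count can be checked by hand. The sketch below tallies the layers of the implementation above in plain Python, assuming Keras's counting conventions: no biases in the conv layers, 4 values per channel for `BatchNormalization` (gamma and beta are trainable, the moving mean and variance are not), and weights plus biases for the final `Dense` layer. The function names are hypothetical helpers. With a 1000-class head, the total should match what Keras reports for its built-in Xception.

```python
def sep(k, c_in, c_out):
    """SeparableConv2D without bias: depthwise + pointwise weights."""
    return k * k * c_in + c_in * c_out


def bn(c):
    """BatchNormalization: gamma, beta, moving mean, moving variance per channel."""
    return 4 * c


def xception_params(nb_class):
    total = 0
    # block1: two regular 3x3 convs
    total += 3 * 3 * 3 * 32 + bn(32) + 3 * 3 * 32 * 64 + bn(64)
    # blocks 2, 3, 4, 13: strided 1x1 shortcut + two separable convs
    for c_in, mid, c_out in ((64, 128, 128), (128, 256, 256),
                             (256, 728, 728), (728, 728, 1024)):
        total += c_in * c_out + bn(c_out)                     # shortcut conv + BN
        total += sep(3, c_in, mid) + bn(mid) + sep(3, mid, c_out) + bn(c_out)
    # middle flow: 8 blocks x 3 separable convs (728 -> 728)
    total += 8 * 3 * (sep(3, 728, 728) + bn(728))
    # block14: two separable convs, no shortcut
    total += sep(3, 1024, 1536) + bn(1536) + sep(3, 1536, 2048) + bn(2048)
    # classifier: Dense weights + biases
    total += 2048 * nb_class + nb_class
    return total


print(xception_params(24))    # 20910656
print(xception_params(1000))  # 22910480
```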

努力加油a啊
