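Caffe's built-in tool for converting images to LMDB only supports a single label per image. For multi-label tasks you can either use the HDF5 format or modify the Caffe source. My article *Multi-label Classification with Caffe* showed how to modify ImageDataLayer for multi-label tasks. This article shows how to modify DataLayer so that a classification network can read multi-label data from LMDB.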

1. Modify the code

Modify the following files:

- `$CAFFE_ROOT/src/caffe/proto/caffe.proto`
- `$CAFFE_ROOT/src/caffe/layers/data_layer.cpp`
- `$CAFFE_ROOT/src/caffe/util/io.cpp`
- `$CAFFE_ROOT/include/caffe/util/io.hpp`
- `$CAFFE_ROOT/tools/convert_imageset.cpp`
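`convert_imageset.cpp` is on the list because the stock tool reads a list file with one integer label per image. A minimal sketch of parsing a multi-label line, assuming a hypothetical list-file format of `<image path> <label_1> <label_2> ...` separated by whitespace. `ParseMultiLabelLine()` is an illustrative helper, not Caffe code:

```cpp
#include <cassert>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical list-file line format: "<image path> <label_1> <label_2> ...".
// Splits one line into the image path and its float labels.
bool ParseMultiLabelLine(const std::string& line, std::string* filename,
                         std::vector<float>* labels) {
  std::istringstream stream(line);
  if (!(stream >> *filename)) {
    return false;  // empty or whitespace-only line
  }
  labels->clear();
  float value;
  while (stream >> value) {
    labels->push_back(value);
  }
  // Accept only if at least one label was read and the whole line was
  // consumed (a non-numeric token stops the loop before end of input).
  return !labels->empty() && stream.eof();
}
```

The resulting vector is the kind of argument the modified `ReadImageToDatum()` overloads in io.hpp take as their label. Unlike this sketch, a production parser would also need a rule for image paths that contain spaces.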

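(1) Modify caffe.proto

Inside `message Datum { }`, add a field to hold the labels (field numbers 1 to 7 are already used by the existing Datum fields):

```protobuf
repeated float labels = 8;
```

If your labels are always integers, you can use `repeated int32 labels = 8;` instead.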
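For a `repeated` scalar field, protoc generates C++ accessors such as `labels_size()`, `labels(i)`, `add_labels(v)` and `clear_labels()`, which the code in the following steps relies on. The sketch below uses a hypothetical stand-in class, `FakeDatum`, that mimics those accessors with `std::vector<float>` so it compiles without protobuf. `FakeDatum` and the helper `SetLabels()` are illustrations, not part of Caffe:

```cpp
#include <cassert>
#include <vector>

// Hypothetical stand-in for the protoc-generated Datum class. It mirrors only
// the accessors generated for `repeated float labels = 8;`.
class FakeDatum {
 public:
  int labels_size() const { return static_cast<int>(labels_.size()); }
  float labels(int index) const { return labels_.at(index); }
  void add_labels(float value) { labels_.push_back(value); }
  void clear_labels() { labels_.clear(); }

 private:
  std::vector<float> labels_;
};

// Hypothetical helper: replace whatever labels the datum holds with `labels`.
void SetLabels(const std::vector<float>& labels, FakeDatum* datum) {
  datum->clear_labels();
  for (float value : labels) {
    datum->add_labels(value);
  }
}
```

With a real `caffe::Datum` the same method names apply once caffe.proto has been recompiled.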

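(2) Modify data_layer.cpp

In `DataLayerSetUp()`, make the label blob two-dimensional, with one row per image and one column per label. New code:

```cpp
// Label blob shape: batch_size x number of labels per image
vector<int> label_shape(2);
label_shape[0] = batch_size;
label_shape[1] = datum.labels_size();
```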

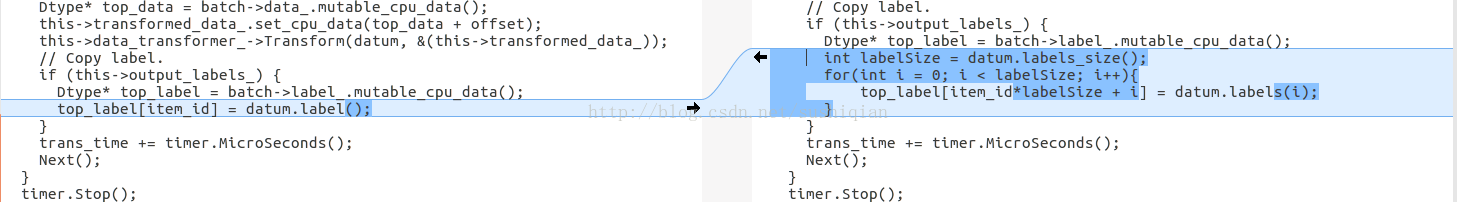
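A minimal sketch of the shape logic, assuming the hypothetical helper names `MultiLabelShape()` and `CheckLabelCount()`. Because the shape is computed once from the datum read during setup, every record in the LMDB needs the same number of labels; the guard shows one way to enforce that:

```cpp
#include <cassert>
#include <stdexcept>
#include <vector>

// Shape of the label blob built in DataLayerSetUp():
// one row per image in the batch, one column per label.
std::vector<int> MultiLabelShape(int batch_size, int num_labels) {
  std::vector<int> label_shape(2);
  label_shape[0] = batch_size;  // N: images per batch
  label_shape[1] = num_labels;  // L: datum.labels_size() of the setup datum
  return label_shape;
}

// Hypothetical guard: throws if a record's label count differs from the
// count the blob was shaped for.
void CheckLabelCount(int expected, int actual) {
  if (expected != actual) {
    throw std::runtime_error("label count mismatch");
  }
}
```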

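In `load_batch()`, copy all labels of each datum instead of the single `datum.label()`. New code:

```cpp
// Copy every label of this datum into row item_id of the label blob
int labelSize = datum.labels_size();
for (int i = 0; i < labelSize; i++) {
  top_label[item_id * labelSize + i] = datum.labels(i);
}
```

[Figure: the code before and after the change, with the modified version on the right]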
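The loop writes the labels in row-major order: the labels of image `item_id` fill the slice `[item_id * labelSize, (item_id + 1) * labelSize)` of the flat label buffer. A standalone sketch of that indexing, where the hypothetical `CopyLabels()` takes a `std::vector<float>` in place of the datum and a `float*` in place of Caffe's `Dtype*`:

```cpp
#include <cassert>
#include <vector>

// Copies the labels of one image into its row of a flat, row-major
// batch_size x label_size buffer.
void CopyLabels(const std::vector<float>& datum_labels, int item_id,
                float* top_label) {
  const int label_size = static_cast<int>(datum_labels.size());
  for (int i = 0; i < label_size; ++i) {
    top_label[item_id * label_size + i] = datum_labels[i];
  }
}
```

For a batch of two images with three labels each, image 1's labels land at indices 3, 4 and 5.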

(3) Modify io.hpp

Replace the single `int` label parameter of `ReadFileToDatum()` and the `ReadImageToDatum()` overloads with `vector<float>`. New code:

```cpp
bool ReadFileToDatum(const string& filename, const vector<float> label, Datum* datum);

inline bool ReadFileToDatum(const string& filename, Datum* datum) {
  return ReadFileToDatum(filename, vector<float>(), datum);
}

bool ReadImageToDatum(const string& filename, const vector<float> label,
    const int height, const int width, const bool is_color,
    const std::string & encoding, Datum* datum);

inline bool ReadImageToDatum(const string& filename, const vector<float> label,
    const int height, const int width, const bool is_color, Datum* datum) {
  return ReadImageToDatum(filename, label, height, width, is_color,
                          "", datum);
}

inline bool ReadImageToDatum(const string& filename, const vector<float> label,
    const int height, const int width, Datum* datum) {
  return ReadImageToDatum(filename, label, height, width, true, datum);
}

inline bool ReadImageToDatum(const string& filename, const vector<float> label,
    const bool is_color, Datum* datum) {
  return ReadImageToDatum(filename, label, 0, 0, is_color, datum);
}

inline bool ReadImageToDatum(const string& filename, const vector<float> label,
    Datum* datum) {
  return ReadImageToDatum(filename, label, 0, 0, true, datum);
}

inline bool ReadImageToDatum(const string& filename, const vector<float> label,
    const std::string & encoding, Datum* datum) {
  return ReadImageToDatum(filename, label, 0, 0, true, encoding, datum);
}
```
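Every convenience overload fills in defaults and forwards to the most general `ReadImageToDatum()`. The sketch below reproduces that delegation chain with a hypothetical `ResolveRead()` that only records the resolved arguments instead of decoding an image, which makes the defaults easy to check. It passes the labels by `const` reference; the declarations above pass `const vector<float>` by value, which also works but copies the vector on every call:

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical record of the arguments that reach the most general overload.
struct ReadRequest {
  std::string filename;
  std::vector<float> labels;
  int height;
  int width;
  bool is_color;
  std::string encoding;
};

// Stand-in for the full ReadImageToDatum(): records its arguments instead of
// reading the image.
ReadRequest ResolveRead(const std::string& filename,
                        const std::vector<float>& labels, int height,
                        int width, bool is_color, const std::string& encoding) {
  return ReadRequest{filename, labels, height, width, is_color, encoding};
}

// Convenience overload: no encoding requested.
ReadRequest ResolveRead(const std::string& filename,
                        const std::vector<float>& labels, int height,
                        int width, bool is_color) {
  return ResolveRead(filename, labels, height, width, is_color, "");
}

// Convenience overload: height = width = 0 (no resize), color image.
ReadRequest ResolveRead(const std::string& filename,
                        const std::vector<float>& labels) {
  return ResolveRead(filename, labels, 0, 0, true);
}
```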

