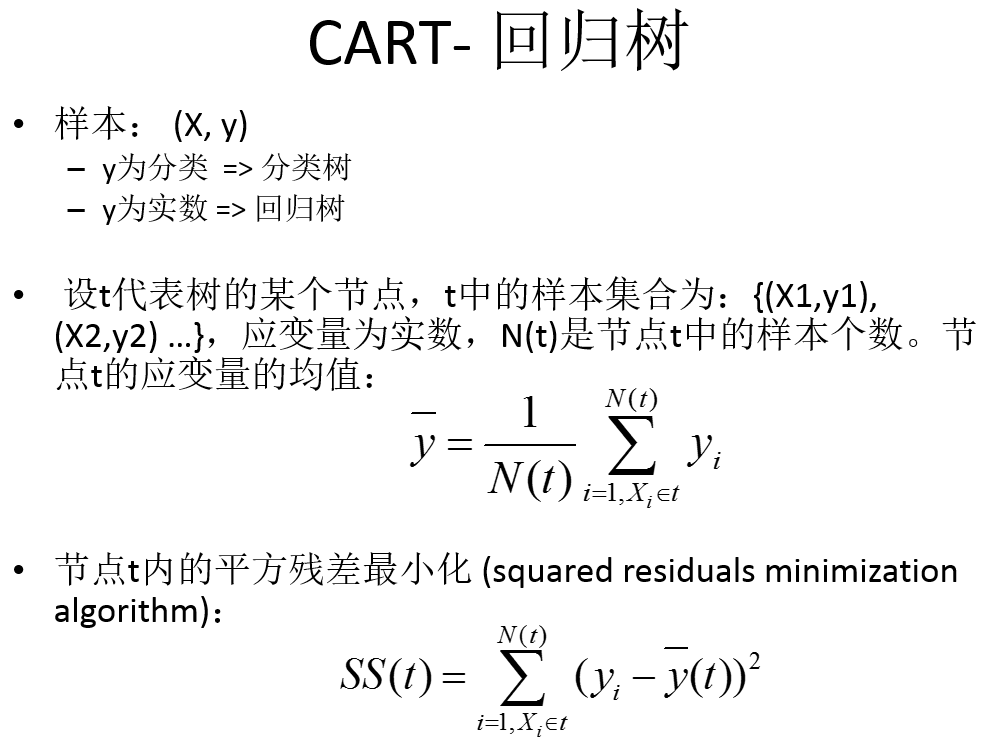

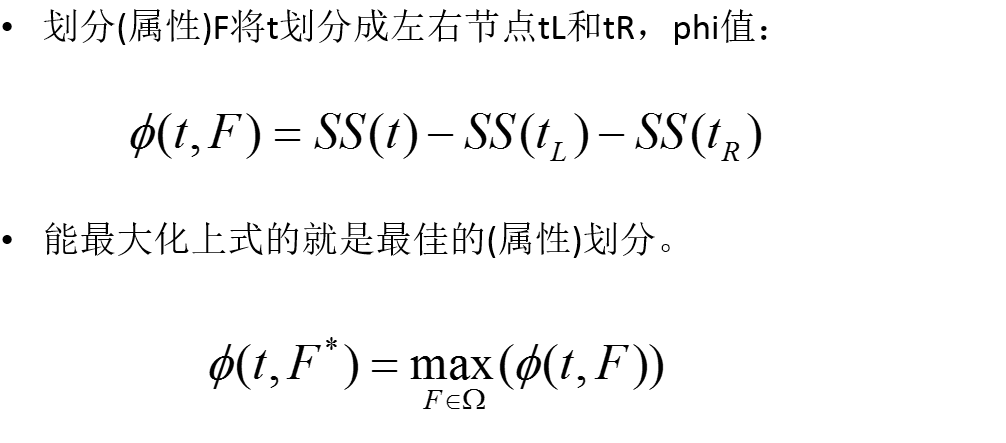

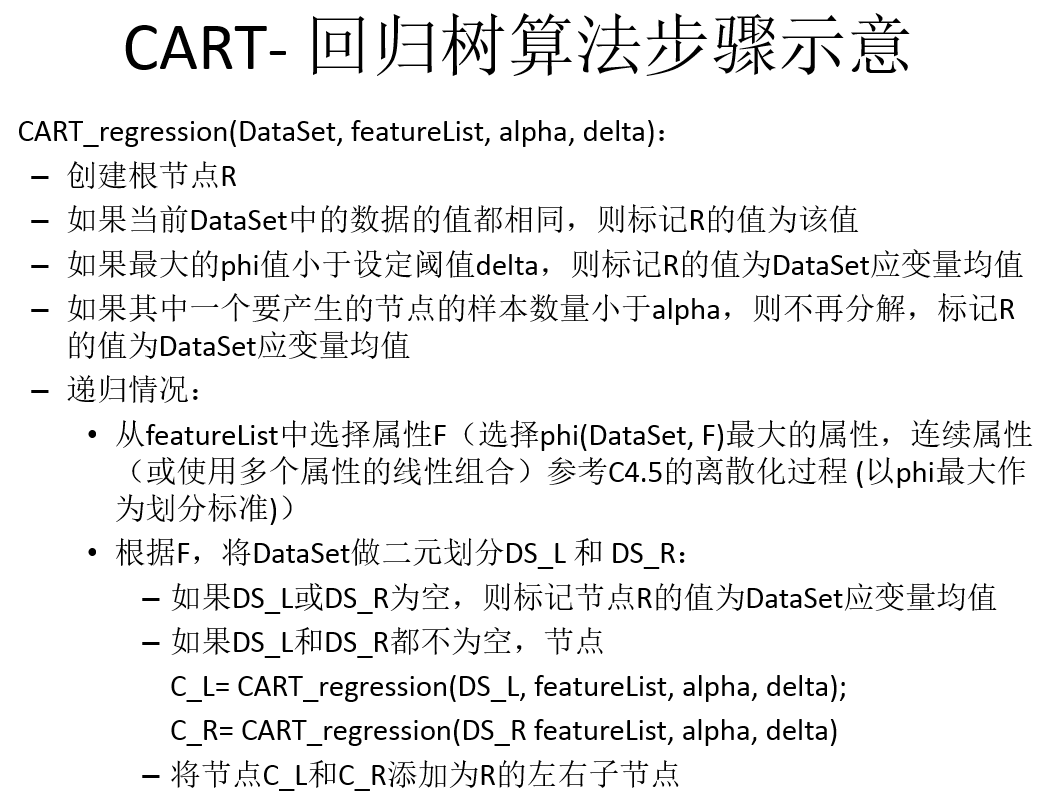

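A regression tree is built in much the same way as a classification tree, with two differences:

1. Each node of a regression tree yields a predicted value, rather than the per-class sample counts that a classification tree keeps.

2. When choosing where to split a node, a regression tree picks the split that minimizes the mean squared error.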
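The two points can be written in symbols as follows. This is only a sketch: the notation ($R$, $c_R$, $j$, $s$) is introduced here for illustration and does not come from the original text. The criterion is written as a total squared error summed over both subsets, which is what the `regErr` function in the code computes, and the direction of the comparison follows `binSplitDataSet`.

$$
c_R = \frac{1}{|R|} \sum_{i \in R} y_i ,
\qquad
(j^{*}, s^{*}) = \arg\min_{j,\,s} \left[ \sum_{i \in R_0(j,s)} \left(y_i - c_{R_0}\right)^2 + \sum_{i \in R_1(j,s)} \left(y_i - c_{R_1}\right)^2 \right]
$$

Here $R_0(j,s) = \{\, i : x_{ij} > s \,\}$ and $R_1(j,s) = \{\, i : x_{ij} \le s \,\}$ are the two subsets produced by splitting on feature $j$ at value $s$, and $c_R$ is the value predicted for every sample that falls in $R$.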

```python
from numpy import *


def loadDataSet(fileName):
    """Parse a tab-delimited file of floats into a list of rows.

    The last column of each row is assumed to be the target value.
    """
    dataMat = []
    with open(fileName) as fr:
        for line in fr:
            curLine = line.strip().split('\t')
            # Convert every field to float. In Python 3, map() returns an
            # iterator, so it is wrapped in list().
            fltLine = list(map(float, curLine))
            dataMat.append(fltLine)
    return dataMat
```
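The loader returns a plain list of lists, while the remaining functions index their input like a NumPy matrix (for example `dataSet[:, -1].T.tolist()[0]`). A minimal sketch of the missing conversion step is shown here. The file contents are made up, the snake_case name `load_data_set` is a stand-in for the loader above, and `np.asmatrix` is my choice for the conversion, not something the original specifies.

```python
import os
import tempfile

import numpy as np


def load_data_set(file_name):
    """Stand-in for the loader above: parse tab-delimited floats."""
    rows = []
    with open(file_name) as fr:
        for line in fr:
            line = line.strip()
            if not line:
                continue  # skip blank lines
            rows.append([float(tok) for tok in line.split('\t')])
    return rows


# Write a tiny, made-up dataset: one feature column, one target column.
with tempfile.NamedTemporaryFile('w', suffix='.txt', delete=False) as tmp:
    tmp.write("0.1\t1.0\n0.9\t3.0\n")
    path = tmp.name

rows = load_data_set(path)
os.remove(path)

# Convert to a matrix so that dataSet[:, -1] stays two-dimensional.
data_mat = np.asmatrix(rows)
targets = data_mat[:, -1].T.tolist()[0]
```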

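```python
# Example: mat0, mat1 = binSplitDataSet(testMat, 1, 0.5)
def binSplitDataSet(dataSet, feature, value):
    """Split dataSet (a NumPy matrix) into two subsets on one feature.

    mat0 holds the rows whose feature is greater than value;
    mat1 holds the rows whose feature is less than or equal to value.
    """
    # nonzero(...)[0] gives the row indices where the condition holds.
    # Keep all of those rows: a trailing [0] would return only the first one.
    mat0 = dataSet[nonzero(dataSet[:, feature] > value)[0], :]
    mat1 = dataSet[nonzero(dataSet[:, feature] <= value)[0], :]
    return mat0, mat1
```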
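To see what the binary split returns, here is a small sketch that follows the commented example call (feature 1, threshold 0.5). It assumes `testMat` is a 4x4 identity matrix, which the original does not state. The function is restated in snake_case with `np.`-prefixed calls so the block runs on its own.

```python
import numpy as np


def bin_split_data_set(data_set, feature, value):
    """Return (rows with feature > value, rows with feature <= value)."""
    rows_gt = np.nonzero(data_set[:, feature] > value)[0]
    rows_le = np.nonzero(data_set[:, feature] <= value)[0]
    return data_set[rows_gt, :], data_set[rows_le, :]


# Assumed test input: a 4x4 identity matrix.
test_mat = np.asmatrix(np.eye(4))
mat0, mat1 = bin_split_data_set(test_mat, 1, 0.5)

# Only row 1 has a value above 0.5 in column 1, so it alone lands in mat0.
print(mat0)
# The other three rows land in mat1.
print(mat1)
```

Every matching row has to be kept. Indexing either result with a trailing `[0]` would return just its first row (one of the three rows of `mat1` here), and the size checks in the split search would then see the wrong subset sizes.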

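```python
def regLeaf(dataSet):
    """Build a leaf node: in a regression tree, the mean of the target values."""
    return mean(dataSet[:, -1])


def regErr(dataSet):
    """Error estimate: the total squared error of the target values.

    var() is the mean squared deviation, so it is multiplied by the
    number of rows to give the total error rather than the mean.
    """
    return var(dataSet[:, -1]) * shape(dataSet)[0]
```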
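A quick numeric check of why the variance is multiplied by the row count: `var` is the mean squared deviation from the mean, so the product equals the sum of squared deviations from the leaf value. The four target values here are made up for illustration.

```python
import numpy as np

# Made-up target values for one node.
y = np.array([1.0, 2.0, 4.0, 5.0])

leaf_value = float(np.mean(y))                    # what regLeaf would return
total_err = float(np.var(y) * len(y))             # what regErr would return
sum_sq = float(np.sum((y - leaf_value) ** 2))     # sum of squared deviations

print(leaf_value, total_err, sum_sq)
```

Using the total rather than the mean lets the errors of two subsets of different sizes be added directly and compared with the error of the unsplit node.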

```python
def chooseBestSplit(dataSet, leafType=regLeaf, errType=regErr, ops=(1, 4)):
    """Return (feature index, split value), or (None, leaf value) when no split should be made."""
    tolS = ops[0]  # minimum error reduction needed to accept a split
    tolN = ops[1]  # minimum number of rows allowed in each subset
    # Exit condition 1: all target values are the same, so return a leaf.
    if len(set(dataSet[:, -1].T.tolist()[0])) == 1:
        return None, leafType(dataSet)
    m, n = shape(dataSet)
    # The best feature is the one giving the largest reduction in
    # squared error relative to predicting the mean.
    S = errType(dataSet)
    bestS = inf
    bestIndex = 0
    bestValue = 0
    for featIndex in range(n - 1):
        # Convert the column to a plain list first: matrix slices are not hashable.
        for splitVal in set(dataSet[:, featIndex].T.tolist()[0]):
            mat0, mat1 = binSplitDataSet(dataSet, featIndex, splitVal)
            if (shape(mat0)[0] < tolN) or (shape(mat1)[0] < tolN):
                continue
            newS = errType(mat0) + errType(mat1)
            if newS < bestS:
                bestIndex = featIndex
                bestValue = splitVal
                bestS = newS
    # Exit condition 2: the decrease (S - bestS) is below the threshold,
    # so do not split.
    if (S - bestS) < tolS:
        return None, leafType(dataSet)
    mat0, mat1 = binSplitDataSet(dataSet, bestIndex, bestValue)
    # Exit condition 3: do not split if either subset has fewer than tolN rows.
    if (shape(mat0)[0] < tolN) or (shape(mat1)[0] < tolN):
        return None, leafType(dataSet)
    # Return the best feature to split on and the value used for that split.
    return bestIndex, bestValue
```
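The search inside `chooseBestSplit` can be illustrated on a single feature. This sketch uses plain 1-D arrays instead of the matrix input the function expects, and made-up data with a step near x = 0.5. The helper names `total_sq_err` and `scan_splits` are hypothetical. It lists the summed error of each admissible threshold and picks the smallest.

```python
import numpy as np


def total_sq_err(y):
    """Total squared error around the mean (variance times count)."""
    return float(np.var(y) * len(y))


def scan_splits(x, y, tol_n):
    """Return (threshold, summed error) for every admissible threshold."""
    results = []
    for s in np.unique(x):
        above, below = y[x > s], y[x <= s]
        # Skip thresholds that leave either side with fewer than tol_n samples.
        if len(above) < tol_n or len(below) < tol_n:
            continue
        results.append((float(s), total_sq_err(above) + total_sq_err(below)))
    return results


# Made-up data: targets near 1 for small x, near 3 for large x.
x = np.array([0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9])
y = np.array([1.0, 1.1, 0.9, 1.0, 3.0, 3.1, 2.9, 3.0])

candidates = scan_splits(x, y, tol_n=2)
best_value, best_err = min(candidates, key=lambda t: t[1])
reduction = total_sq_err(y) - best_err

print(candidates)
print(best_value, best_err, reduction)
```

The threshold that separates the two plateaus gives by far the smallest summed error. The reduction relative to the unsplit node is the quantity that gets compared against `tolS`.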

```python
def createTree(dataSet, leafType=regLeaf, errType=regErr, ops=(1, 4)):
    """Recursively build the tree as nested dicts; leaves are plain values."""
    # Choose the best split.
    feat, val = chooseBestSplit(dataSet, leafType, errType, ops)
    # If the split search hit a stop condition, val is the leaf value.
    if feat is None:
        return val
    retTree = {}
    retTree['spInd'] = feat
    retTree['spVal'] = val
    lSet, rSet = binSplitDataSet(dataSet, feat, val)
    retTree['left'] = createTree(lSet, leafType, errType, ops)
    retTree['right'] = createTree(rSet, leafType, errType, ops)
    return retTree
```
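To show the whole pipeline end to end, here is a compact, self-contained sketch. It is a restatement under assumptions, not the original code: it uses plain `ndarray` inputs with boolean masks instead of NumPy matrices, snake_case names, made-up data, and `ops=(1, 2)` so that the tiny dataset can be split. The `predict_one` helper is hypothetical and does not appear in the original. It only illustrates how the nested dictionary could be walked to get a prediction.

```python
import numpy as np


def bin_split(data, feature, value):
    mask = data[:, feature] > value
    return data[mask], data[~mask]


def reg_leaf(data):
    return float(np.mean(data[:, -1]))


def reg_err(data):
    return float(np.var(data[:, -1]) * data.shape[0])


def choose_best_split(data, ops=(1, 4)):
    tol_s, tol_n = ops
    if len(set(data[:, -1].tolist())) == 1:       # all targets identical
        return None, reg_leaf(data)
    base_err = reg_err(data)
    best_err, best_feat, best_val = np.inf, 0, 0.0
    for feat in range(data.shape[1] - 1):
        for val in set(data[:, feat].tolist()):
            left, right = bin_split(data, feat, val)
            if left.shape[0] < tol_n or right.shape[0] < tol_n:
                continue
            err = reg_err(left) + reg_err(right)
            if err < best_err:
                best_err, best_feat, best_val = err, feat, val
    if base_err - best_err < tol_s:               # not enough improvement
        return None, reg_leaf(data)
    return best_feat, best_val


def create_tree(data, ops=(1, 4)):
    feat, val = choose_best_split(data, ops)
    if feat is None:
        return val                                # leaf: a plain number
    left, right = bin_split(data, feat, val)
    return {'spInd': feat, 'spVal': val,
            'left': create_tree(left, ops),
            'right': create_tree(right, ops)}


def predict_one(tree, x):
    """Hypothetical helper: follow the splits until a leaf value is reached."""
    while isinstance(tree, dict):
        branch = 'left' if x[tree['spInd']] > tree['spVal'] else 'right'
        tree = tree[branch]
    return tree


# Made-up data: one feature column followed by the target column.
data = np.array([[0.1, 1.0], [0.2, 1.1], [0.3, 0.9], [0.4, 1.0],
                 [0.6, 3.0], [0.7, 3.1], [0.8, 2.9], [0.9, 3.0]])
tree = create_tree(data, ops=(1, 2))
print(tree)
print(predict_one(tree, [0.75]), predict_one(tree, [0.25]))
```

With this data the tree has a single split on feature 0, and each side becomes a leaf because a further split would reduce the error by less than `tolS`. Note that `'left'` holds the rows greater than the split value, matching the order in which `binSplitDataSet` returns its subsets.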

1. http://www.lai18.com/content/1406280.html

2. *Machine Learning in Action* (机器学习实战)
