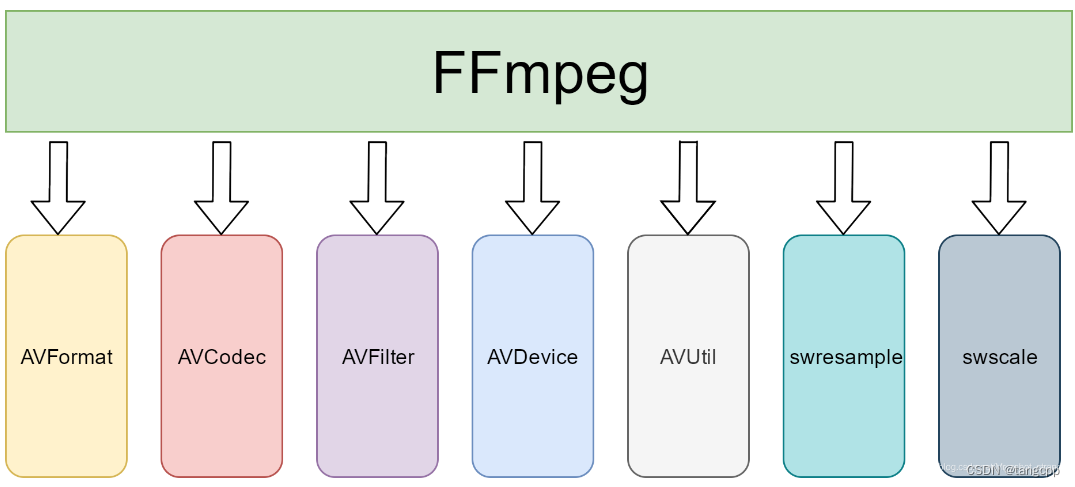

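I. FFmpeg Framework

1. Modules

- libavformat: muxes and demuxes (writes and parses) audio/video container formats.

- libavcodec: encodes and decodes audio and video. It is the core codec library and implements most of the codecs in common use.

- libavfilter: audio/video filter framework (FileIO, FPS, DrawText), used for operations such as changing the aspect ratio or cropping.

- libavutil: shared utility functions, including arithmetic and string helpers.

- libavresample: an older audio resampling library, deprecated in favor of libswresample.

- libswscale: video scaling and pixel format or color space conversion.

- libswresample: a high-level audio resampling API, covering sample rate conversion, sample format conversion, and channel layout conversion.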

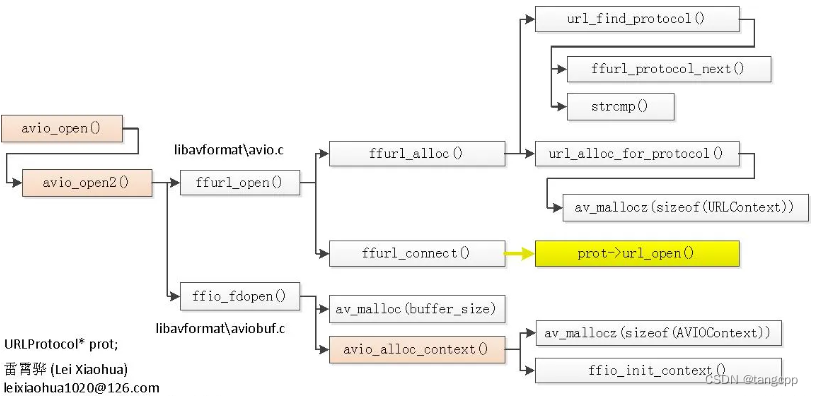

2. avio_open

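avio_open opens a file: it finds the protocol that matches the file name, opens the protocol object URLProtocol, and stores it in the AVIOContext. From then on, every operation on the file goes through the URLProtocol held by the AVIOContext.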

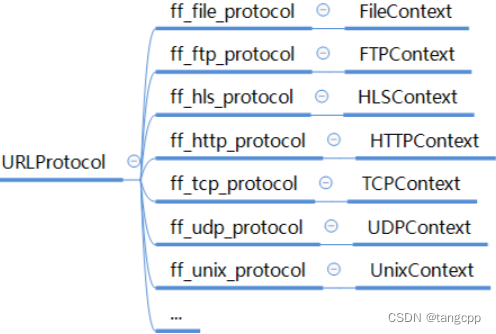

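FFmpeg supports many different protocol types.

Each protocol implements the operations of its own protocol object, and external code calls them uniformly through the interface that the protocol structure defines. All that is required is that, before the first call, the URL is used to identify the protocol and the AVIOContext of the AVFormatContext is initialized with the right protocol object.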
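The lookup step can be illustrated with a small standalone sketch. It does not use FFmpeg's real types: `DemoProtocol`, `demo_protocols`, and `demo_find_protocol` are hypothetical names, and the fallback to a `file` protocol for URLs without a scheme is an assumption made for the example.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical, simplified stand-in for URLProtocol: a name plus one callback. */
typedef struct DemoProtocol {
    const char *name;
    int (*read)(char *buf, int size);
} DemoProtocol;

/* Each "protocol" supplies its own implementation of the callback. */
static int file_read(char *buf, int size) { (void)buf; (void)size; return 1; }
static int rtmp_read(char *buf, int size) { (void)buf; (void)size; return 2; }

static const DemoProtocol demo_file_protocol = { "file", file_read };
static const DemoProtocol demo_rtmp_protocol = { "rtmp", rtmp_read };

/* NULL-terminated table of the protocols that are available. */
static const DemoProtocol *const demo_protocols[] = {
    &demo_file_protocol,
    &demo_rtmp_protocol,
    NULL
};

/* Return the protocol whose name equals the scheme before ':'.
 * A URL without a scheme is treated as "file" (an assumption of this sketch). */
const DemoProtocol *demo_find_protocol(const char *url)
{
    const char *colon = strchr(url, ':');
    size_t len = colon ? (size_t)(colon - url) : 0;
    const char *scheme = len ? url : "file";
    if (!len)
        len = strlen("file");

    for (size_t i = 0; demo_protocols[i]; i++) {
        const char *name = demo_protocols[i]->name;
        if (strlen(name) == len && strncmp(name, scheme, len) == 0)
            return demo_protocols[i];
    }
    return NULL; /* protocol unknown or not compiled in */
}
```

The caller only ever holds a `const DemoProtocol *` and calls through its function pointers, which is why new protocols can be added without touching the calling code.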

// Each protocol defines its own implementation functions

const URLProtocol ff_librtmp_protocol = {

.name = "rtmp",

.url_open = rtmp_open,

.url_read = rtmp_read,

.url_write = rtmp_write,

.url_close = rtmp_close,

.url_read_pause = rtmp_read_pause,

.url_read_seek = rtmp_read_seek,

.url_get_file_handle = rtmp_get_file_handle,

.priv_data_size = sizeof(LibRTMPContext),

.priv_data_class = &librtmp_class,

.flags = URL_PROTOCOL_FLAG_NETWORK,

};

// On the way in, the protocol object URLProtocol is assigned to URLContext's prot

static int url_alloc_for_protocol(URLContext **puc, const URLProtocol *up,

const char *filename, int flags,

const AVIOInterruptCB *int_cb)

{

uc->av_class = &ffurl_context_class;

uc->filename = (char *)&uc[1];

strcpy(uc->filename, filename);

uc->prot = up;

uc->flags = flags;

uc->is_streamed = 0; /* default = not streamed */

uc->max_packet_size = 0; /* default: stream file */

}

// External code calls avio_open2 and passes in filename

int avio_open2(AVIOContext **s, const char *filename, int flags,

const AVIOInterruptCB *int_cb, AVDictionary **options)

{

return ffio_open_whitelist(s, filename, flags, int_cb, options, NULL, NULL);

}

int ffio_open_whitelist(AVIOContext **s, const char *filename, int flags,

const AVIOInterruptCB *int_cb, AVDictionary **options,

const char *whitelist, const char *blacklist

)

{

URLContext *h;

ffurl_open_whitelist(&h, filename, flags, int_cb, options, whitelist,blacklist, NULL);

ffio_fdopen(s, h);

}

// avio_open2 ends up calling ffurl_open_whitelist

int ffurl_open_whitelist(URLContext **puc, const char *filename, int flags,

const AVIOInterruptCB *int_cb, AVDictionary **options,

const char *whitelist, const char* blacklist,

URLContext *parent)

{

int ret = ffurl_alloc(puc, filename, flags, int_cb);

}

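// ffurl_open_whitelist calls ffurl_alloc

// ffurl_alloc looks up the matching protocol from filename.

// Each protocol's URLProtocol is a global object defined in advance by the protocol implementation; once url_find_protocol finds it, it is stored in URLContext's prot

// ffio_fdopen() then stores the URLContext in AVIOInternal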

int ffurl_alloc(URLContext **puc, const char *filename, int flags,

const AVIOInterruptCB *int_cb)

{

const URLProtocol *p = NULL;

p = url_find_protocol(filename); // find the URLProtocol from filename, then store it in the URLContext

if (p)

return url_alloc_for_protocol(puc, p, filename, flags, int_cb);

}

// The AVIOContext is created inside ffio_fdopen() by avio_alloc_context

// ffio_fdopen creates an AVIOInternal and stores the URLContext in it

// ffio_fdopen also assigns the read, write, and seek callbacks used later. Calls made through AVIOContext's read, write, and seek reach the protocol-specific functions: the opaque argument is the AVIOInternal set up in ffio_fdopen, which holds the concrete protocol object, so the AVIOContext callbacks can forward to that protocol's own read, write, and seek.

int ffio_fdopen(AVIOContext **s, URLContext *h)

{

AVIOInternal *internal = NULL;

internal = av_mallocz(sizeof(*internal));

internal->h = h;

*s = avio_alloc_context(buffer, buffer_size, h->flags & AVIO_FLAG_WRITE,

internal, io_read_packet, io_write_packet, io_seek);

if(h->prot) {

(*s)->read_pause = io_read_pause;

(*s)->read_seek = io_read_seek;

if (h->prot->url_read_seek)

(*s)->seekable |= AVIO_SEEKABLE_TIME;

}

(*s)->short_seek_get = io_short_seek;

(*s)->av_class = &ff_avio_class;

}

AVIOContext *avio_alloc_context(

unsigned char *buffer,

int buffer_size,

int write_flag,

void *opaque,

int (*read_packet)(void *opaque, uint8_t *buf, int buf_size),

int (*write_packet)(void *opaque, uint8_t *buf, int buf_size),

int64_t (*seek)(void *opaque, int64_t offset, int whence))

{

AVIOContext *s = av_malloc(sizeof(AVIOContext));

ffio_init_context(s, buffer, buffer_size, write_flag, opaque,

read_packet, write_packet, seek);

return s;

}

int ffio_init_context(AVIOContext *s,

unsigned char *buffer,

int buffer_size,

int write_flag,

void *opaque,

int (*read_packet)(void *opaque, uint8_t *buf, int buf_size),

int (*write_packet)(void *opaque, uint8_t *buf, int buf_size),

int64_t (*seek)(void *opaque, int64_t offset, int whence))

{

...

s->opaque = opaque;

...

s->write_packet = write_packet;

s->read_packet = read_packet;

s->seek = seek;

...

s->last_time = AV_NOPTS_VALUE;

return 0;

}

// Later calls made through the AVIOContext arrive at these callbacks

static int io_read_packet(void *opaque, uint8_t *buf, int buf_size)

{

AVIOInternal *internal = opaque;

return ffurl_read(internal->h, buf, buf_size);

}

static int io_write_packet(void *opaque, uint8_t *buf, int buf_size)

{

AVIOInternal *internal = opaque;

return ffurl_write(internal->h, buf, buf_size);

}

static int64_t io_seek(void *opaque, int64_t offset, int whence)

{

AVIOInternal *internal = opaque;

return ffurl_seek(internal->h, offset, whence);

}

int ffurl_write(URLContext *h, const unsigned char *buf, int size)

{

......

return retry_transfer_wrapper(h, (unsigned char *)buf, size, size,

(int (*)(struct URLContext *, uint8_t *, int))

h->prot->url_write); // pass in the protocol's callback, which is then invoked

}

static inline int retry_transfer_wrapper(URLContext *h, uint8_t *buf,

int size, int size_min,

int (*transfer_func)(URLContext *h,

uint8_t *buf,

int size))

{

while (len < size_min) {

if (ff_check_interrupt(&h->interrupt_callback))

return AVERROR_EXIT;

ret = transfer_func(h, buf + len, size - len);

}

}
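The layering traced above (a generic I/O context holding an opaque pointer, plus adapter callbacks that forward to the concrete protocol) can be reduced to a standalone sketch. All `Demo*` and `demo_*` names are hypothetical, the in-memory "protocol" is invented for illustration, and the intermediate AVIOInternal wrapper is left out for brevity.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical protocol layer: knows how to read from one concrete source. */
typedef struct DemoURLContext {
    const char *data;      /* stands in for a socket or file */
    size_t      pos, len;
    int (*url_read)(struct DemoURLContext *h, unsigned char *buf, int size);
} DemoURLContext;

/* Hypothetical I/O layer: only sees an opaque pointer and a callback. */
typedef struct DemoIOContext {
    void *opaque;
    int (*read_packet)(void *opaque, unsigned char *buf, int size);
} DemoIOContext;

/* Concrete protocol implementation: copy from an in-memory buffer.
 * size is assumed to be non-negative. */
int mem_url_read(DemoURLContext *h, unsigned char *buf, int size)
{
    size_t left = h->len - h->pos;
    size_t n = left < (size_t)size ? left : (size_t)size;
    memcpy(buf, h->data + h->pos, n);
    h->pos += n;
    return (int)n;
}

/* Adapter with the generic signature; recovers the protocol from opaque. */
int demo_io_read_packet(void *opaque, unsigned char *buf, int size)
{
    DemoURLContext *h = opaque;
    return h->url_read(h, buf, size);
}

/* Wiring step, similar in spirit to ffio_fdopen(): store h as opaque. */
void demo_io_open(DemoIOContext *s, DemoURLContext *h)
{
    s->opaque = h;
    s->read_packet = demo_io_read_packet;
}

/* What a caller does: it never touches the protocol directly. */
int demo_io_read(DemoIOContext *s, unsigned char *buf, int size)
{
    return s->read_packet(s->opaque, buf, size);
}
```

The design choice is that the I/O layer depends only on the callback signature, so the same buffered reader works for files, sockets, or user-supplied callbacks.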

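Muxing and demuxing

Every I/O protocol has an input side and an output side. Output corresponds to muxing, that is, writing to a file; input corresponds to demuxing, that is, reading from a file.

Take the HLS protocol ff_hls_protocol as an example: it has a protocol context HLSContext, and both muxing and demuxing obtain the information they need from an HLSContext.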

const URLProtocol ff_hls_protocol = {

.name = "hls",

.url_open = hls_open,

.url_read = hls_read,

.url_close = hls_close,

.flags = URL_PROTOCOL_FLAG_NESTED_SCHEME,

.priv_data_size = sizeof(HLSContext),

};

typedef struct HLSContext {

AVClass *class;

AVFormatContext *ctx;

int n_variants;

struct variant **variants;

int n_playlists;

struct playlist **playlists;

int n_renditions;

struct rendition **renditions;

int cur_seq_no;

int live_start_index;

int first_packet;

int64_t first_timestamp;

int64_t cur_timestamp;

AVIOInterruptCB *interrupt_callback;

AVDictionary *avio_opts;

int strict_std_compliance;

char *allowed_extensions;

int max_reload;

int http_persistent;

int http_multiple;

AVIOContext *playlist_pb;

} HLSContext;

Muxing

AVOutputFormat ff_hls_muxer = {

.name = "hls",

.long_name = NULL_IF_CONFIG_SMALL("Apple HTTP Live Streaming"),

.extensions = "m3u8",

.priv_data_size = sizeof(HLSContext),

.audio_codec = AV_CODEC_ID_AAC,

.video_codec = AV_CODEC_ID_H264,

.subtitle_codec = AV_CODEC_ID_WEBVTT,

.flags = AVFMT_NOFILE | AVFMT_GLOBALHEADER | AVFMT_ALLOW_FLUSH | AVFMT_NODIMENSIONS,

.init = hls_init,

.write_header = hls_write_header,

.write_packet = hls_write_packet,

.write_trailer = hls_write_trailer,

.priv_class = &hls_class,

};

Demuxing

AVInputFormat ff_hls_demuxer = {

.name = "hls,applehttp",

.long_name = NULL_IF_CONFIG_SMALL("Apple HTTP Live Streaming"),

.priv_class = &hls_class,

.priv_data_size = sizeof(HLSContext),

.flags = AVFMT_NOGENSEARCH,

.read_probe = hls_probe,

.read_header = hls_read_header,

.read_packet = hls_read_packet,

.read_close = hls_close,

.read_seek = hls_read_seek,

};

static int read_data(void *opaque, uint8_t *buf, int buf_size)

{

open_input(c, v, v->cur_seg);

}

static int open_input(HLSContext *c, struct playlist *pls, struct segment *seg, AVIOContext **in)

{

open_url(pls->parent, in, seg->url, c->avio_opts, opts, &is_http);

}

static int open_url(AVFormatContext *s, AVIOContext **pb, const char *url,

AVDictionary *opts, AVDictionary *opts2, int *is_http_out)

{

HLSContext *c = s->priv_data;

AVDictionary *tmp = NULL;

const char *proto_name = NULL;

proto_name = avio_find_protocol_name(url);

s->io_open(s, pb, url, AVIO_FLAG_READ, &tmp);

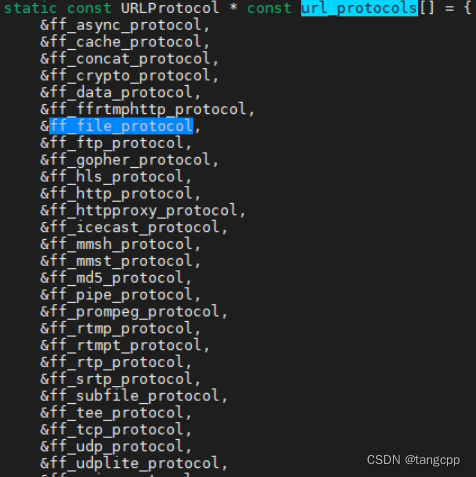

}3. url_protocols

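url_protocols is defined in protocol_list.c, but a freshly downloaded FFmpeg source tree contains no protocol_list.c. All you can find is that libavformat/protocols.c includes it (#include "libavformat/protocol_list.c"). So where does protocol_list.c come from?

protocol_list.c is generated as part of building FFmpeg: the configure script writes it. The codec list, the filter list, and the other list files are produced the same way. For every component enabled by a configure switch, the script writes a name of a fixed form into an array. Components whose switch is off are not in the list, so looking them up at run time fails.

FFmpeg supports many protocols and also lets users add their own, so the set keeps growing. Each protocol implementation carries its own code and data. If everything were compiled in, many unused protocols would be included and would take up space without doing any work. The same applies to codecs: FFmpeg supports a large number of native codecs plus third-party codec libraries, as well as rarely used filters, parsers, and so on. Including all of them would add unnecessary overhead, so FFmpeg uses configure switches to select the modules that are needed, and a script writes the resulting lists into the corresponding .c files. An entry in a list refers to a struct that is defined in that module's source file. Enabling a module therefore does two things: it adds the module's source file to the build, and it adds the struct defined there to the list. A module that is not built is never linked in or loaded, and this is how FFmpeg keeps its footprint under control.

The component lists defined in configure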

FILTER_LIST=$(find_filters_extern libavfilter/allfilters.c)

OUTDEV_LIST=$(find_things_extern muxer AVOutputFormat libavdevice/alldevices.c outdev)

INDEV_LIST=$(find_things_extern demuxer AVInputFormat libavdevice/alldevices.c indev)

MUXER_LIST=$(find_things_extern muxer AVOutputFormat libavformat/allformats.c)

DEMUXER_LIST=$(find_things_extern demuxer AVInputFormat libavformat/allformats.c)

ENCODER_LIST=$(find_things_extern encoder AVCodec libavcodec/allcodecs.c)

DECODER_LIST=$(find_things_extern decoder AVCodec libavcodec/allcodecs.c)

CODEC_LIST="$ENCODER_LIST $DECODER_LIST"

PARSER_LIST=$(find_things_extern parser AVCodecParser libavcodec/parsers.c)

BSF_LIST=$(find_things_extern bsf AVBitStreamFilter libavcodec/bitstream_filters.c)

HWACCEL_LIST=$(find_things_extern hwaccel AVHWAccel libavcodec/hwaccels.h)

PROTOCOL_LIST=$(find_things_extern protocol URLProtocol libavformat/protocols.c)

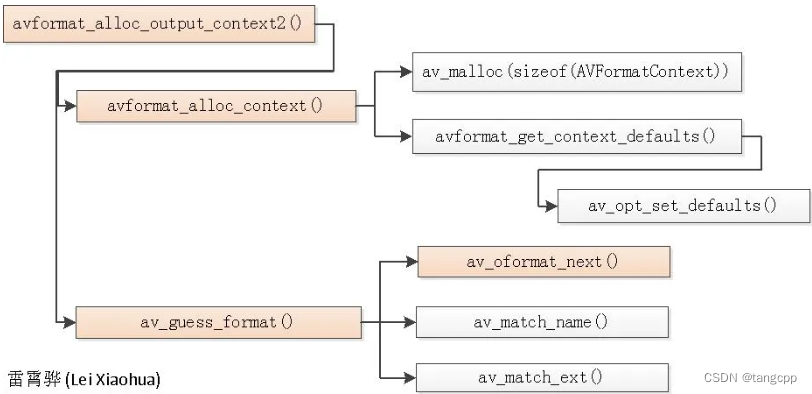
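The effect of those switches can be imitated in a few lines. This is a sketch only: FFmpeg generates its list files from configure, whereas the example below uses hypothetical `DEMO_CONFIG_*` macros and `#if` blocks directly in the source, and the `DemoProto` type is invented for illustration.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical switches; in FFmpeg the CONFIG_* macros come from configure. */
#define DEMO_CONFIG_FILE_PROTOCOL 1
#define DEMO_CONFIG_RTMP_PROTOCOL 0

typedef struct DemoProto { const char *name; } DemoProto;

/* A disabled component is not even compiled. */
#if DEMO_CONFIG_FILE_PROTOCOL
static const DemoProto demo_file = { "file" };
#endif
#if DEMO_CONFIG_RTMP_PROTOCOL
static const DemoProto demo_rtmp = { "rtmp" };
#endif

/* Only enabled entries appear in the NULL-terminated list. */
static const DemoProto *const demo_list[] = {
#if DEMO_CONFIG_FILE_PROTOCOL
    &demo_file,
#endif
#if DEMO_CONFIG_RTMP_PROTOCOL
    &demo_rtmp,
#endif
    NULL
};

/* Returns 1 if a protocol with this name was compiled in, else 0. */
int demo_has_protocol(const char *name)
{
    for (size_t i = 0; demo_list[i]; i++)
        if (strcmp(demo_list[i]->name, name) == 0)
            return 1;
    return 0;
}
```

With the switches as written, a lookup for `rtmp` fails at run time even though the code for it exists in the source, which matches the "not enabled, not found" behavior described above.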

4. AVFormatContext

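Each protocol has its own protocol object, and a user-defined protocol must likewise implement its own. The protocol layer only moves bytes; the container format is handled separately by a muxer or demuxer, and each open media file or stream is represented by one AVFormatContext.

AVIOContext belongs to AVFormatContext and acts as the file handle for that media file. The AVFormatContext must be created before the file is opened.

avformat_alloc_output_context2 can guess the format from the file extension and allocate the matching AVFormatContext.

5. Workflow for creating a media file

a. Create the format context AVFormatContext from the file name with avformat_alloc_output_context2.

b. With the AVFormatContext in hand, call avio_open to open the output, which yields the AVIOContext stored in the AVFormatContext. The AVIOContext also holds the protocol object for the underlying I/O (the URLProtocol referenced by URLContext).

c. Once the protocol object is open, create a media stream from the AVFormatContext and the codec:

AVStream* av_stream = avformat_new_stream(media_output_ctx_, codec);

For example, if codec is an H.264 codec and the AVFormatContext is an MP4 format context, avformat_new_stream adds an H.264 stream to the MP4 file.

d. After the stream is created, set the encoding parameters and open the encoder with avcodec_open2.

e. With this groundwork done, write the file header with avformat_write_header(), which writes the file's metadata. Then write media data with av_interleaved_write_frame(), and finish by writing the trailer with av_write_trailer, closing the AVIOContext opened earlier, and freeing the AVFormatContext.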

6. avcodec_send_frame, avcodec_send_packet, avcodec_receive_packet, and avcodec_receive_frame

static int encode_frame(AVCodecContext *c, AVFrame *frame)

{

AVPacket pkt = { 0 };

int ret;

int size = 0;

av_init_packet(&pkt);

ret = avcodec_send_frame(c, frame);

if (ret < 0)

return ret;

do {

ret = avcodec_receive_packet(c, &pkt);

if (ret >= 0) {

size += pkt.size;

av_packet_unref(&pkt);

} else if (ret < 0 && ret != AVERROR(EAGAIN) && ret != AVERROR_EOF)

return ret;

} while (ret >= 0);

return size;

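}

The states below are described for the decoding pair (avcodec_send_packet / avcodec_receive_frame); the encoding pair used in the code above behaves symmetrically.

send 0: send_packet returns 0. Normal state; the decoder accepted the input packet.

send EAGAIN: send_packet returns EAGAIN. The packet was not accepted; one or more frames must be read out before the same packet can be sent again.

send EOF: send_packet returns EOF. This state is reached only after NULL has been passed to send_packet, which tells the decoder that the input has ended.

receive 0: receive_frame returns 0. Normal state; one frame has been output.

receive EAGAIN: receive_frame returns EAGAIN. No frame could be output; more packets must be sent before a frame becomes available.

receive EOF: receive_frame returns EOF. After the send EOF state has been entered, one or more calls to receive_frame eventually return this value, meaning that all frames have been output.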
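The state table can be exercised with a toy model. The sketch below is not FFmpeg code: `DemoCodec`, `demo_send`, `demo_receive`, and the `DEMO_EOF` value are hypothetical, the "codec" holds a single pending input and doubles it, and only the return-code protocol is meant to resemble the send/receive API.

```c
#include <errno.h>
#include <stddef.h>

#define DEMO_EOF    (-1000)     /* hypothetical stand-in for AVERROR_EOF */
#define DEMO_EAGAIN (-EAGAIN)   /* negative errno, in the style of AVERROR(EAGAIN) */

/* Hypothetical codec with room for a single pending input. */
typedef struct DemoCodec {
    int has_input;   /* 1 while an input is waiting to be consumed */
    int value;       /* the pending input */
    int draining;    /* 1 after a NULL input signalled end of stream */
} DemoCodec;

/* Send one input; pass NULL to signal end of stream. */
int demo_send(DemoCodec *c, const int *in)
{
    if (c->draining)
        return DEMO_EOF;        /* nothing is accepted once flushing started */
    if (!in) {
        c->draining = 1;
        return 0;
    }
    if (c->has_input)
        return DEMO_EAGAIN;     /* caller must receive output first */
    c->value = *in;
    c->has_input = 1;
    return 0;
}

/* Receive one output, here simply the input doubled. */
int demo_receive(DemoCodec *c, int *out)
{
    if (c->has_input) {
        *out = c->value * 2;
        c->has_input = 0;
        return 0;
    }
    return c->draining ? DEMO_EOF : DEMO_EAGAIN;
}

/* Typical driver loop: send every input, reading output whenever the codec
 * answers EAGAIN, then send NULL and drain until EOF. Returns the count. */
int demo_process(DemoCodec *c, const int *in, int n, int *out)
{
    int produced = 0, tmp;
    for (int i = 0; i <= n; i++) {
        const int *item = i < n ? &in[i] : NULL;   /* final NULL flushes */
        while (demo_send(c, item) == DEMO_EAGAIN)
            if (demo_receive(c, &tmp) == 0)
                out[produced++] = tmp;
    }
    while (demo_receive(c, &tmp) == 0)
        out[produced++] = tmp;
    return produced;
}
```

The important property is that EAGAIN is not an error on either side: it only tells the caller to switch to the other half of the API before retrying.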

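7. Codecs

Just as url_protocols is defined in protocol_list.c, the codecs selected by the configure switches end up in the generated file pulled in with #include "libavcodec/codec_list.c".

avcodec_register_all links the entries of codec_list together so that a later lookup can traverse them.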
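The traversal idea can be shown in isolation. This is a simplified sketch with hypothetical names (`DemoCodecEntry`, `demo_codec_list`, `demo_codec_iterate`, `demo_find_by_name`) and made-up ids; it copies only the cursor-in-`opaque` pattern visible in av_codec_iterate.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical, reduced codec descriptor. */
typedef struct DemoCodecEntry {
    const char *name;
    int         id;   /* arbitrary ids for this sketch */
} DemoCodecEntry;

static const DemoCodecEntry demo_h264 = { "h264", 1 };
static const DemoCodecEntry demo_aac  = { "aac",  2 };

/* NULL-terminated list, playing the role of codec_list[]. */
static const DemoCodecEntry *const demo_codec_list[] = {
    &demo_h264, &demo_aac, NULL
};

/* The cursor lives in *opaque, so the caller needs no knowledge of the list. */
const DemoCodecEntry *demo_codec_iterate(void **opaque)
{
    uintptr_t i = (uintptr_t)*opaque;
    const DemoCodecEntry *c = demo_codec_list[i];
    if (c)
        *opaque = (void *)(i + 1);   /* advance only while entries remain */
    return c;
}

/* Lookup built on top of the iterator. */
const DemoCodecEntry *demo_find_by_name(const char *name)
{
    void *it = NULL;
    const DemoCodecEntry *c;
    while ((c = demo_codec_iterate(&it)))
        if (strcmp(c->name, name) == 0)
            return c;
    return NULL;
}
```

Because the iterator stops advancing at the terminating NULL, calling it again after the end keeps returning NULL instead of reading past the array.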

AVCodec * codec_list[] = {

};

void avcodec_register_all(void)

{

ff_thread_once(&av_codec_next_init, av_codec_init_next);

}

typedef struct AVCodec {

const char *name;

enum AVCodecID id;

struct AVCodec *next;

}

static void av_codec_init_next(void)

{

AVCodec *prev = NULL, *p;

void *i = 0;

while ((p = (AVCodec*)av_codec_iterate(&i))) {

if (prev)

prev->next = p;

prev = p;

}

}

const AVCodec *av_codec_iterate(void **opaque)

{

uintptr_t i = (uintptr_t)*opaque;

const AVCodec *c = codec_list[i];

ff_thread_once(&av_codec_static_init, av_codec_init_static);

if (c)

*opaque = (void*)(i + 1);

return c;

}

Opening the encoder

// The codec definition sets .priv_data_size = sizeof(X264Context)

// avcodec_open2 allocates that private data and then calls the codec's init function

int attribute_align_arg avcodec_open2(AVCodecContext *avctx, const AVCodec *codec, AVDictionary **options)

{

if (codec->priv_data_size > 0) {

if (!avctx->priv_data) {

avctx->priv_data = av_mallocz(codec->priv_data_size);

}

}

av_opt_set_dict(avctx->priv_data, &tmp);

ff_frame_thread_encoder_init(avctx, options ? *options : NULL);

ff_decode_bsfs_init(avctx);

ff_thread_init(avctx);

avctx->codec->init(avctx);

}

Encoding functions

typedef struct AVCodecContext {

const struct AVCodec *codec;

enum AVCodecID codec_id; /* see AV_CODEC_ID_xxx */

void *priv_data;

struct AVCodecInternal *internal;

void *opaque;

}

typedef struct AVCodec {

const char *name;

enum AVCodecID id;

struct AVCodec *next;

int (*send_frame)(AVCodecContext *avctx, const AVFrame *frame);

int (*receive_packet)(AVCodecContext *avctx, AVPacket *avpkt);

}

int attribute_align_arg avcodec_send_frame(AVCodecContext *avctx, const AVFrame *frame)

{

if (avctx->codec->send_frame)

return avctx->codec->send_frame(avctx, frame);

return do_encode(avctx, frame, &(int){0});

}

int attribute_align_arg avcodec_receive_packet(AVCodecContext *avctx, AVPacket *avpkt)

{

return avctx->codec->receive_packet(avctx, avpkt);

}

static int do_encode(AVCodecContext *avctx, const AVFrame *frame, int *got_packet)

{

if (avctx->codec_type == AVMEDIA_TYPE_VIDEO) {

ret = avcodec_encode_video2(avctx, avctx->internal->buffer_pkt, frame, got_packet);

} else if (avctx->codec_type == AVMEDIA_TYPE_AUDIO) {

ret = avcodec_encode_audio2(avctx, avctx->internal->buffer_pkt, frame, got_packet);

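}

}

x264 and x265 must be installed separately. Neither wrapper implements the send_frame / receive_packet callbacks; they only provide the encode2 callback, so encoding goes through do_encode.

Integrating the x264 encoding library into FFmpeg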

static const AVOption options[] = {...

{ "profile", "Set profile restrictions (cf. x264 --fullhelp) ", OFFSET(profile), AV_OPT_TYPE_STRING, { 0 }, 0, 0, VE},

......

}

static const AVCodecDefault x264_defaults[] = {......

{ "qmin", "-1" },

{ "qmax", "-1" },

......

}

static const AVClass x264_class = {

.class_name = "libx264",

.item_name = av_default_item_name,

.option = options,

.version = LIBAVUTIL_VERSION_INT,

};

AVCodec ff_libx264_encoder = {

.name = "libx264",

.long_name = NULL_IF_CONFIG_SMALL("libx264 H.264 / AVC / MPEG-4 AVC / MPEG-4 part 10"),

.type = AVMEDIA_TYPE_VIDEO,

.id = AV_CODEC_ID_H264,

.priv_data_size = sizeof(X264Context),

.init = X264_init,

.encode2 = X264_frame,

.close = X264_close,

.capabilities = AV_CODEC_CAP_DELAY | AV_CODEC_CAP_AUTO_THREADS,

.priv_class = &x264_class,

.defaults = x264_defaults,

.init_static_data = X264_init_static,

.caps_internal = FF_CODEC_CAP_INIT_CLEANUP,

.wrapper_name = "libx264",

};

// Structure that bridges to the x264 library (which must be installed separately)

typedef struct X264Context {

AVClass *class;

x264_param_t params;

x264_t *enc;

x264_picture_t pic;

uint8_t *sei;

int sei_size;

char *preset;

char *tune;

char *profile;

char *level;

int fastfirstpass;

char *wpredp;

char *x264opts;

float crf;

float crf_max;

int cqp;

......

char *x264_params;

} X264Context;

// Initialization: set the x264 encoding parameters, then call the x264 library function x264_encoder_open to open the encoder

static av_cold int X264_init(AVCodecContext *avctx)

{

X264Context *x4 = avctx->priv_data;

if (avctx->gop_size >= 0)

x4->params.i_keyint_max = avctx->gop_size;

x4->params.xxx = avctx->xxx;

x4->params.rc.i_aq_mode = x4->aq_mode;

x4->params.xxx = x4->xxx;

x4->enc = x264_encoder_open(&x4->params);

if (avctx->flags & AV_CODEC_FLAG_GLOBAL_HEADER) {

x264_nal_t *nal;

s = x264_encoder_headers(x4->enc, &nal, &nnal);

}

}

// Encoding calls the x264 library function x264_encoder_encode on the encoder x4->enc opened during initialization

static int X264_frame(AVCodecContext *ctx, AVPacket *pkt, const AVFrame *frame,

int *got_packet)

{

X264Context *x4 = ctx->priv_data;

x264_nal_t *nal;

x264_picture_t pic_out = {0};

x264_picture_init( &x4->pic );

do {

x264_encoder_encode(x4->enc, &nal, &nnal, frame? &x4->pic: NULL, &pic_out);

encode_nals(ctx, pkt, nal, nnal);

} while (!ret && !frame && x264_encoder_delayed_frames(x4->enc));

}

typedef struct{

int i_ref_idc; /* nal_priority_e */

int i_type; /* nal_unit_type_e */

int b_long_startcode;

int i_first_mb;

int i_last_mb;

uint8_t *p_payload;

int i_padding;

} x264_nal_t;

Integrating x265 encoding into FFmpeg

// H.265 encoder definition

AVCodec ff_libx265_encoder = {

.name = "libx265",

.long_name = NULL_IF_CONFIG_SMALL("libx265 H.265 / HEVC"),

.type = AVMEDIA_TYPE_VIDEO,

.id = AV_CODEC_ID_HEVC,

.init = libx265_encode_init,

.init_static_data = libx265_encode_init_csp,

.encode2 = libx265_encode_frame,

.close = libx265_encode_close,

.priv_data_size = sizeof(libx265Context),

.priv_class = &class,

.defaults = x265_defaults,

.capabilities = AV_CODEC_CAP_DELAY | AV_CODEC_CAP_AUTO_THREADS,

.wrapper_name = "libx265",

};

// Structure that bridges to the x265 encoding library

typedef struct libx265Context {

const AVClass *class;

x265_encoder *encoder;

x265_param *params;

const x265_api *api;

float crf;

int forced_idr;

char *preset;

char *tune;

char *profile;

char *x265_opts;

} libx265Context;

// Initialization obtains the x265 api object with the x265 library function x265_api_get; every later x265 operation goes through this api object.

static av_cold int libx265_encode_init(AVCodecContext *avctx)

{

libx265Context *ctx = avctx->priv_data;

ctx->api = x265_api_get(av_pix_fmt_desc_get(avctx->pix_fmt)->comp[0].depth);

ctx->params = ctx->api->param_alloc(); // api must be set before it is used

ctx->encoder = ctx->api->encoder_open(ctx->params);

}

// x265 encoding goes through the encoder ctx->encoder opened during initialization

static int libx265_encode_frame(AVCodecContext *avctx, AVPacket *pkt,

const AVFrame *pic, int *got_packet)

{

libx265Context *ctx = avctx->priv_data;

x265_picture x265pic;

x265_picture x265pic_out = { 0 };

x265_nal *nal;

ctx->api->encoder_encode(ctx->encoder, &nal, &nnal,

pic ? &x265pic : NULL, &x265pic_out);

}

Decoding functions

int attribute_align_arg avcodec_send_packet(AVCodecContext *avctx, const AVPacket *avpkt)

{

AVCodecInternal *avci = avctx->internal;

av_bsf_send_packet(avci->filter.bsfs[0], avci->buffer_pkt);

decode_receive_frame_internal(avctx, avci->buffer_frame);

}

static int decode_receive_frame_internal(AVCodecContext *avctx, AVFrame *frame)

{

AVCodecInternal *avci = avctx->internal;

if (avctx->codec->receive_frame)

ret = avctx->codec->receive_frame(avctx, frame);

else

ret = decode_simple_receive_frame(avctx, frame);

}

static int decode_simple_receive_frame(AVCodecContext *avctx, AVFrame *frame)

{

while (!frame->buf[0]) {

decode_simple_internal(avctx, frame);

}

}

static int decode_simple_internal(AVCodecContext *avctx, AVFrame *frame)

{

AVCodecInternal *avci = avctx->internal;

DecodeSimpleContext *ds = &avci->ds;

AVPacket *pkt = ds->in_pkt;

if (HAVE_THREADS && avctx->active_thread_type & FF_THREAD_FRAME) {

ret = ff_thread_decode_frame(avctx, frame, &got_frame, pkt);

} else {

ret = avctx->codec->decode(avctx, frame, &got_frame, pkt);

}

}

H.264 decoding

// H.264 decoder definition

AVCodec ff_h264_decoder = {

.name = "h264",

.long_name = NULL_IF_CONFIG_SMALL("H.264 / AVC / MPEG-4 AVC / MPEG-4 part 10"),

.type = AVMEDIA_TYPE_VIDEO,

.id = AV_CODEC_ID_H264,

.priv_data_size = sizeof(H264Context),

.init = h264_decode_init,

.close = h264_decode_end,

.decode = h264_decode_frame,

.capabilities = /*AV_CODEC_CAP_DRAW_HORIZ_BAND |*/ AV_CODEC_CAP_DR1 |

AV_CODEC_CAP_DELAY | AV_CODEC_CAP_SLICE_THREADS |

AV_CODEC_CAP_FRAME_THREADS,

.hw_configs = (const AVCodecHWConfigInternal*[]) {

#if CONFIG_H264_DXVA2_HWACCEL

HWACCEL_DXVA2(h264),

#endif

#if CONFIG_H264_D3D11VA_HWACCEL

HWACCEL_D3D11VA(h264),

#endif

#if CONFIG_H264_D3D11VA2_HWACCEL

HWACCEL_D3D11VA2(h264),

#endif

#if CONFIG_H264_NVDEC_HWACCEL

HWACCEL_NVDEC(h264),

#endif

#if CONFIG_H264_VAAPI_HWACCEL

HWACCEL_VAAPI(h264),

#endif

#if CONFIG_H264_VDPAU_HWACCEL

HWACCEL_VDPAU(h264),

#endif

#if CONFIG_H264_VIDEOTOOLBOX_HWACCEL

HWACCEL_VIDEOTOOLBOX(h264),

#endif

NULL

},

.caps_internal = FF_CODEC_CAP_INIT_THREADSAFE | FF_CODEC_CAP_EXPORTS_CROPPING,

.flush = flush_dpb,

.init_thread_copy = ONLY_IF_THREADS_ENABLED(decode_init_thread_copy),

.update_thread_context = ONLY_IF_THREADS_ENABLED(ff_h264_update_thread_context),

.profiles = NULL_IF_CONFIG_SMALL(ff_h264_profiles),

.priv_class = &h264_class,

};

static av_cold int h264_decode_init(AVCodecContext *avctx)

{

H264Context *h = avctx->priv_data;

h264_init_context(avctx, h);

if (avctx->extradata_size > 0 && avctx->extradata) {

ret = ff_h264_decode_extradata(avctx->extradata, avctx->extradata_size,

&h->ps, &h->is_avc, &h->nal_length_size,

avctx->err_recognition, avctx);

}

}

// During initialization the decoding parameters are all saved in H264Context

static int h264_init_context(AVCodecContext *avctx, H264Context *h)

{

int i;

h->avctx = avctx;

h->cur_chroma_format_idc = -1;

h->width_from_caller = avctx->width;

h->height_from_caller = avctx->height;

h->picture_structure = PICT_FRAME;

h->workaround_bugs = avctx->workaround_bugs;

h->flags = avctx->flags;

h->poc.prev_poc_msb = 1 << 16;

h->recovery_frame = -1;

h->frame_recovered = 0;

h->poc.prev_frame_num = -1;

h->sei.frame_packing.arrangement_cancel_flag = -1;

h->sei.unregistered.x264_build = -1;

h->next_outputed_poc = INT_MIN;

h->nb_slice_ctx = (avctx->active_thread_type & FF_THREAD_SLICE) ? avctx->thread_count : 1;

}

// Decode avpkt; the resulting AVFrame is returned through data

static int h264_decode_frame(AVCodecContext *avctx, void *data,

int *got_frame, AVPacket *avpkt)

{

const uint8_t *buf = avpkt->data;

int buf_size = avpkt->size;

H264Context *h = avctx->priv_data;

AVFrame *pict = data; // output frame

if (buf_size == 0)

return send_next_delayed_frame(h, pict, got_frame, 0); // an empty packet (avpkt->size == 0) flushes the buffered frames

// Before decoding, parse the SPS/PPS data carried as extradata

ff_h264_decode_extradata(side, side_size,

&h->ps, &h->is_avc, &h->nal_length_size,

avctx->err_recognition, avctx);

// Decode the NAL units

buf_index = decode_nal_units(h, buf, buf_size); // the actual decoding work

if (!h->cur_pic_ptr && h->nal_unit_type == H264_NAL_END_SEQUENCE) {

return send_next_delayed_frame(h, pict, got_frame, buf_index); // at the last packet (end of sequence), output a buffered frame

}

if (h->next_output_pic) {

ret = finalize_frame(h, pict, h->next_output_pic, got_frame); // one decode call may make more than one frame ready; this handles a frame that still needs to be output

}

}

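// Each NAL type (IDR slice, regular slice, SPS, PPS, supplemental enhancement information SEI) is decoded differently; if hardware decoding is configured, it takes priority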

static int decode_nal_units(H264Context *h, const uint8_t *buf, int buf_size)

{

AVCodecContext *const avctx = h->avctx;

for (i = 0; i < h->pkt.nb_nals; i++) { // loop over and decode every NAL

H2645NAL *nal = &h->pkt.nals[i];

switch (nal->type) {

case H264_NAL_IDR_SLICE:

case H264_NAL_SLICE:

ff_h264_queue_decode_slice(h, nal);

if (h->avctx->hwaccel) {

ret = avctx->hwaccel->decode_slice(avctx, nal->raw_data, nal->raw_size);

h->nb_slice_ctx_queued = 0;

} else

ret = ff_h264_execute_decode_slices(h);

break;

case H264_NAL_SEI:

ret = ff_h264_sei_decode(&h->sei, &nal->gb, &h->ps, avctx);

break;

case H264_NAL_SPS:

if (avctx->hwaccel && avctx->hwaccel->decode_params) {

ret = avctx->hwaccel->decode_params(avctx,

nal->type,

nal->raw_data,

nal->raw_size);

break;

}

case H264_NAL_PPS:

if (avctx->hwaccel && avctx->hwaccel->decode_params) {

ret = avctx->hwaccel->decode_params(avctx,

nal->type,

nal->raw_data,

nal->raw_size);

}

break;

}

ret = ff_h264_execute_decode_slices(h);

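}

}

Hardware acceleration

Like the other codecs, hardware encoding and decoding define their own set of interface structures, for example:

//vaapi_h264.c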

const AVHWAccel ff_h264_vaapi_hwaccel = {

.name = "h264_vaapi",

.type = AVMEDIA_TYPE_VIDEO,

.id = AV_CODEC_ID_H264,

.pix_fmt = AV_PIX_FMT_VAAPI,

.start_frame = &vaapi_h264_start_frame,

.end_frame = &vaapi_h264_end_frame,

.decode_slice = &vaapi_h264_decode_slice,

.frame_priv_data_size = sizeof(VAAPIDecodePicture),

.init = &ff_vaapi_decode_init,

.uninit = &ff_vaapi_decode_uninit,

.frame_params = &ff_vaapi_common_frame_params,

.priv_data_size = sizeof(VAAPIDecodeContext),

.caps_internal = HWACCEL_CAP_ASYNC_SAFE,

};

//vaapi_vp9.c

const AVHWAccel ff_vp9_vaapi_hwaccel = {

.name = "vp9_vaapi",

.type = AVMEDIA_TYPE_VIDEO,

.id = AV_CODEC_ID_VP9,

.pix_fmt = AV_PIX_FMT_VAAPI,

.start_frame = vaapi_vp9_start_frame,

.end_frame = vaapi_vp9_end_frame,

.decode_slice = vaapi_vp9_decode_slice,

.frame_priv_data_size = sizeof(VAAPIDecodePicture),

.init = ff_vaapi_decode_init,

.uninit = ff_vaapi_decode_uninit,

.frame_params = ff_vaapi_common_frame_params,

.priv_data_size = sizeof(VAAPIDecodeContext),

.caps_internal = HWACCEL_CAP_ASYNC_SAFE,

};

// Each hardware type has a generic hardware context type that wraps the concrete device context and frames context

const HWContextType ff_hwcontext_type_vaapi = {

.type = AV_HWDEVICE_TYPE_VAAPI,

.name = "VAAPI",

.device_hwctx_size = sizeof(AVVAAPIDeviceContext),

.device_priv_size = sizeof(VAAPIDeviceContext),

.device_hwconfig_size = sizeof(AVVAAPIHWConfig),

.frames_hwctx_size = sizeof(AVVAAPIFramesContext),

.frames_priv_size = sizeof(VAAPIFramesContext),

.device_create = &vaapi_device_create,

.device_derive = &vaapi_device_derive,

.device_init = &vaapi_device_init,

.device_uninit = &vaapi_device_uninit,

.frames_get_constraints = &vaapi_frames_get_constraints,

.frames_init = &vaapi_frames_init,

.frames_uninit = &vaapi_frames_uninit,

.frames_get_buffer = &vaapi_get_buffer,

.transfer_get_formats = &vaapi_transfer_get_formats,

.transfer_data_to = &vaapi_transfer_data_to,

.transfer_data_from = &vaapi_transfer_data_from,

.map_to = &vaapi_map_to,

.map_from = &vaapi_map_from,

.pix_fmts = (const enum AVPixelFormat[]) {

AV_PIX_FMT_VAAPI,

AV_PIX_FMT_NONE

},

};

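// Hardware accelerator initialization: the accelerator from the configuration is assigned to avctx->hwaccel. During decoding, avctx->hwaccel is checked first and used if it is not NULL, so hardware decoding takes priority whenever an accelerator is present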

static int hwaccel_init(AVCodecContext *avctx,

const AVCodecHWConfigInternal *hw_config)

{

const AVHWAccel *hwaccel;

hwaccel = hw_config->hwaccel;

avctx->hwaccel = hwaccel;

if (hwaccel->init) {

err = hwaccel->init(avctx);

}

}

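Hardware accelerator configuration

As with the other codecs and I/O protocols, accelerators must be selected with --enable before building. Once configured, the accelerator is added to the decoder, so the decoder supports that type of hardware decoding. Whether it actually works still depends on the hardware and the system.

// When does the hardware accelerator get initialized?

// Build-time configuration (Makefile)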

OBJS-$(CONFIG_H263_VAAPI_HWACCEL) += vaapi_mpeg4.o

OBJS-$(CONFIG_H263_VIDEOTOOLBOX_HWACCEL) += videotoolbox.o

OBJS-$(CONFIG_H264_D3D11VA_HWACCEL) += dxva2_h264.o

OBJS-$(CONFIG_H264_DXVA2_HWACCEL) += dxva2_h264.o

OBJS-$(CONFIG_H264_NVDEC_HWACCEL) += nvdec_h264.o

OBJS-$(CONFIG_H264_QSV_HWACCEL) += qsvdec_h2645.o

OBJS-$(CONFIG_H264_VAAPI_HWACCEL) += vaapi_h264.o

OBJS-$(CONFIG_H264_VDPAU_HWACCEL) += vdpau_h264.o

// The decoder definition fills in its hardware decoders according to the build configuration

AVCodec ff_h264_decoder = {

......

.hw_configs = (const AVCodecHWConfigInternal*[]) {

#if CONFIG_H264_DXVA2_HWACCEL

HWACCEL_DXVA2(h264),

#endif

#if CONFIG_H264_D3D11VA_HWACCEL

HWACCEL_D3D11VA(h264),

#endif

#if CONFIG_H264_D3D11VA2_HWACCEL

HWACCEL_D3D11VA2(h264),

#endif

#if CONFIG_H264_NVDEC_HWACCEL

HWACCEL_NVDEC(h264),

#endif

#if CONFIG_H264_VAAPI_HWACCEL

HWACCEL_VAAPI(h264),

#endif

#if CONFIG_H264_VDPAU_HWACCEL

HWACCEL_VDPAU(h264),

#endif

#if CONFIG_H264_VIDEOTOOLBOX_HWACCEL

HWACCEL_VIDEOTOOLBOX(h264),

#endif

NULL

},

}

// Macros that add entries to the hw_configs array: each one places an already defined hardware accelerator in hw_configs and fills in the AVCodecHWConfig public member.

#define HWACCEL_DXVA2(codec) \

HW_CONFIG_HWACCEL(1, 1, 1, DXVA2_VLD, DXVA2, ff_ ## codec ## _dxva2_hwaccel)

#define HWACCEL_D3D11VA2(codec) \

HW_CONFIG_HWACCEL(1, 1, 0, D3D11, D3D11VA, ff_ ## codec ## _d3d11va2_hwaccel)

#define HWACCEL_NVDEC(codec) \

HW_CONFIG_HWACCEL(1, 1, 0, CUDA, CUDA, ff_ ## codec ## _nvdec_hwaccel)

#define HWACCEL_VAAPI(codec) \

HW_CONFIG_HWACCEL(1, 1, 1, VAAPI, VAAPI, ff_ ## codec ## _vaapi_hwaccel)

#define HWACCEL_VDPAU(codec) \

HW_CONFIG_HWACCEL(1, 1, 1, VDPAU, VDPAU, ff_ ## codec ## _vdpau_hwaccel)

#define HWACCEL_VIDEOTOOLBOX(codec) \

HW_CONFIG_HWACCEL(1, 1, 1, VIDEOTOOLBOX, VIDEOTOOLBOX, ff_ ## codec ## _videotoolbox_hwaccel)

#define HWACCEL_D3D11VA(codec) \

HW_CONFIG_HWACCEL(0, 0, 1, D3D11VA_VLD, NONE, ff_ ## codec ## _d3d11va_hwaccel)

#define HWACCEL_XVMC(codec) \

HW_CONFIG_HWACCEL(0, 0, 1, XVMC, NONE, ff_ ## codec ## _xvmc_hwaccel)

// AVCodecHWConfigInternal has two members: the hardware acceleration configuration and the accelerator itself

typedef struct AVCodecHWConfigInternal {

AVCodecHWConfig public;

const AVHWAccel *hwaccel;

} AVCodecHWConfigInternal;

// Definition of the public member

typedef struct AVCodecHWConfig {

enum AVPixelFormat pix_fmt;

int methods;

enum AVHWDeviceType device_type;

} AVCodecHWConfig;

// Get the pixel formats available for hardware decoding

static enum AVPixelFormat get_pixel_format(H264Context *h, int force_callback)

{

switch (h->ps.sps->bit_depth_luma) {

case 8:

#if CONFIG_H264_VDPAU_HWACCEL

*fmt++ = AV_PIX_FMT_VDPAU;

#endif

#if CONFIG_H264_NVDEC_HWACCEL

*fmt++ = AV_PIX_FMT_CUDA;

#endif

#if CONFIG_H264_DXVA2_HWACCEL

*fmt++ = AV_PIX_FMT_DXVA2_VLD;

#endif

#if CONFIG_H264_D3D11VA_HWACCEL

*fmt++ = AV_PIX_FMT_D3D11VA_VLD;

*fmt++ = AV_PIX_FMT_D3D11;

#endif

#if CONFIG_H264_VAAPI_HWACCEL

*fmt++ = AV_PIX_FMT_VAAPI;

#endif

}

}

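// The hardware accelerator is initialized while the format is being chosen: if the configuration for the chosen format has a hardware accelerator, it is initialized at that point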

int ff_get_format(AVCodecContext *avctx, const enum AVPixelFormat *fmt)

{

enum AVPixelFormat *choices;

......

user_choice = avctx->get_format(avctx, choices); // get_format is a user callback; choices is handed to the user to pick a format

if (avctx->codec->hw_configs) {

for (i = 0;; i++) {

hw_config = avctx->codec->hw_configs[i];

if (!hw_config)

break;

if (hw_config->public.pix_fmt == user_choice)

break;

}

}

if (hw_config && hw_config->hwaccel) {

hwaccel_init(avctx, hw_config);

}

......

ret = user_choice;

return ret;

}
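The selection logic can be condensed into a standalone sketch: walk a NULL-terminated configuration array, match the format the user picked, and initialize the accelerator only if one is attached. The types and names (`DemoHWAccel`, `DemoHWConfig`, `demo_setup_hwaccel`, the format value 100) are hypothetical and much simpler than the real structures.

```c
#include <stddef.h>

/* Hypothetical, reduced accelerator descriptor. */
typedef struct DemoHWAccel {
    const char *name;
    int (*init)(void *ctx);     /* optional; may be NULL */
} DemoHWAccel;

/* Hypothetical config: the output format plus the accelerator producing it. */
typedef struct DemoHWConfig {
    int pix_fmt;
    const DemoHWAccel *hwaccel; /* may be NULL */
} DemoHWConfig;

static int demo_vaapi_init(void *ctx) { (void)ctx; return 0; }

static const DemoHWAccel  demo_vaapi_accel = { "demo_vaapi", demo_vaapi_init };
static const DemoHWConfig demo_vaapi_cfg   = { 100, &demo_vaapi_accel };

/* NULL-terminated array, playing the role of codec->hw_configs. */
const DemoHWConfig *const demo_configs[] = { &demo_vaapi_cfg, NULL };

/* Return the config matching the chosen format, or NULL if none matches. */
const DemoHWConfig *demo_find_hw_config(const DemoHWConfig *const *configs,
                                        int user_choice)
{
    if (!configs)
        return NULL;
    for (size_t i = 0; configs[i]; i++)
        if (configs[i]->pix_fmt == user_choice)
            return configs[i];
    return NULL;
}

/* Returns 1 if an accelerator was set up, 0 for software fallback,
 * or the negative error code reported by the accelerator's init. */
int demo_setup_hwaccel(void *ctx, const DemoHWConfig *const *configs,
                       int user_choice, const DemoHWAccel **active)
{
    const DemoHWConfig *cfg = demo_find_hw_config(configs, user_choice);
    *active = NULL;
    if (!cfg || !cfg->hwaccel)
        return 0;               /* guard against "no match" before dereferencing */
    if (cfg->hwaccel->init) {
        int err = cfg->hwaccel->init(ctx);
        if (err < 0)
            return err;
    }
    *active = cfg->hwaccel;
    return 1;
}
```

Separating the search from the setup makes the "no matching config" case explicit, so the caller can fall back to software decoding instead of dereferencing a NULL entry.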

const AVCodecHWConfig *avcodec_get_hw_config(const AVCodec *codec, int index)

{

int i;

if (!codec->hw_configs || index < 0)

return NULL;

for (i = 0; i <= index; i++)

if (!codec->hw_configs[i])

return NULL;

return &codec->hw_configs[index]->public;

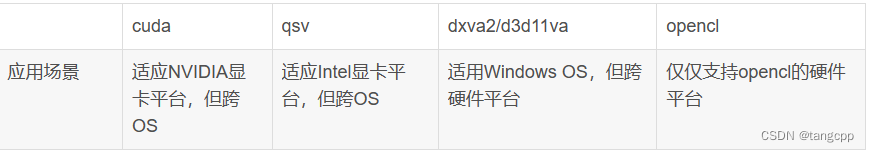

}FFMPEG里面定义的硬件加速器类型

static const char *const hw_type_names[] = {

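[AV_HWDEVICE_TYPE_CUDA] = "cuda", // NVIDIA's GPU-based parallel computing architecture: a hardware platform and instruction set comparable to x86 or Cell, but built on the GPU rather than a traditional CPU. The CUDA architecture can also back APIs such as OpenCL and DirectX

[AV_HWDEVICE_TYPE_DRM] = "drm", // DRM is the Linux kernel subsystem that manages interaction with graphics cards. User space uses the DRM API for 3D rendering, video decoding, GPU compute, and so on. Each GPU that DRM detects becomes a DRM device with its own device file /dev/dri/cardX

[AV_HWDEVICE_TYPE_DXVA2] = "dxva2", // DirectX Video Acceleration, Microsoft's API specification for video acceleration

[AV_HWDEVICE_TYPE_D3D11VA] = "d3d11va", // video acceleration on Direct3D 11, Microsoft's hardware rendering API

[AV_HWDEVICE_TYPE_OPENCL] = "opencl", // an open, royalty-free standard for general-purpose parallel programming on heterogeneous systems, initiated by Apple and developed together with many major vendors. It defines an API connecting software to hardware so that GPUs can be used for parallel computation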

[AV_HWDEVICE_TYPE_QSV] = "qsv",

[AV_HWDEVICE_TYPE_VAAPI] = "vaapi",

[AV_HWDEVICE_TYPE_VDPAU] = "vdpau",

[AV_HWDEVICE_TYPE_VIDEOTOOLBOX] = "videotoolbox",

[AV_HWDEVICE_TYPE_MEDIACODEC] = "mediacodec",

};

enum AVHWDeviceType av_hwdevice_find_type_by_name(const char *name){

int type;

for (type = 0; type < FF_ARRAY_ELEMS(hw_type_names); type++) {

if (hw_type_names[type] && !strcmp(hw_type_names[type], name))

return type;

}

return AV_HWDEVICE_TYPE_NONE;

}

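// The accelerator name given by the user determines hw_pix_fmt, the hardware pixel format the user wants. During initialization the decoder passes out every hardware pixel format it currently supports for the user to choose from; if the wanted one is not among them, an error is returned.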

static enum AVPixelFormat get_hw_format(AVCodecContext *ctx,

const enum AVPixelFormat *pix_fmts){

const enum AVPixelFormat *p;

for (p = pix_fmts; *p != -1; p++) {

if (*p == hw_pix_fmt)

return *p;

}

return AV_PIX_FMT_NONE;

}

int av_hwdevice_ctx_init(AVBufferRef *ref){

AVHWDeviceContext *ctx = (AVHWDeviceContext*)ref->data;

if (ctx->internal->hw_type->device_init) {

ctx->internal->hw_type->device_init(ctx);

}

}

(Screenshots from the CSDN blog post "视频编解码硬件方案漫谈_d3d11和dxva2" by 江海细流)

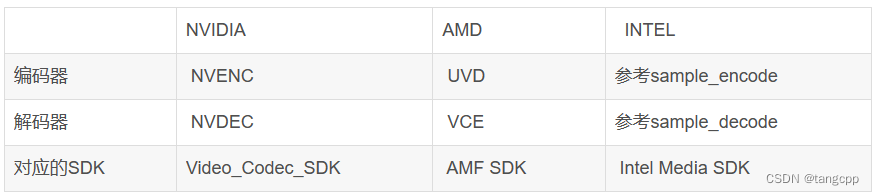

Vendor SDKs

FFmpeg integration of vendor SDKs

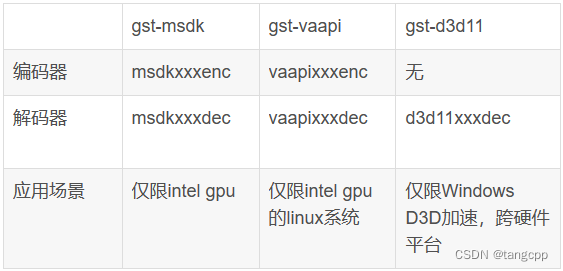

FFmpeg applications

GStreamer applications

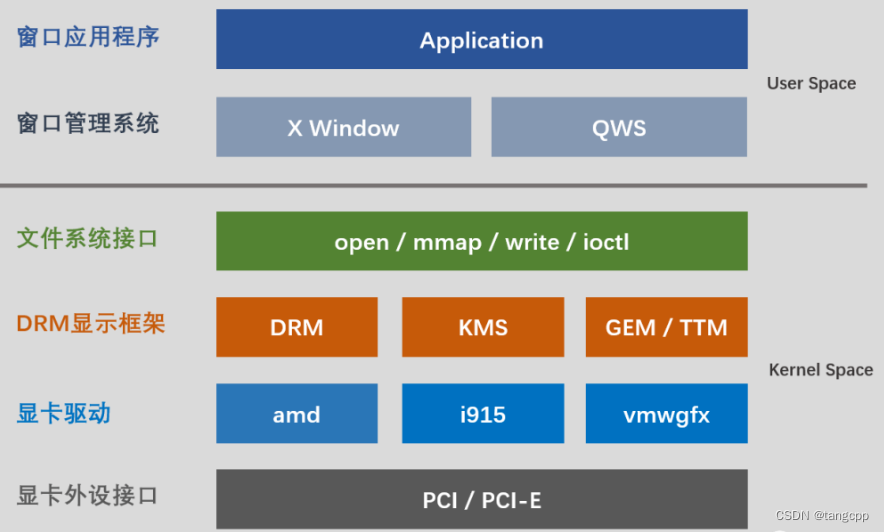

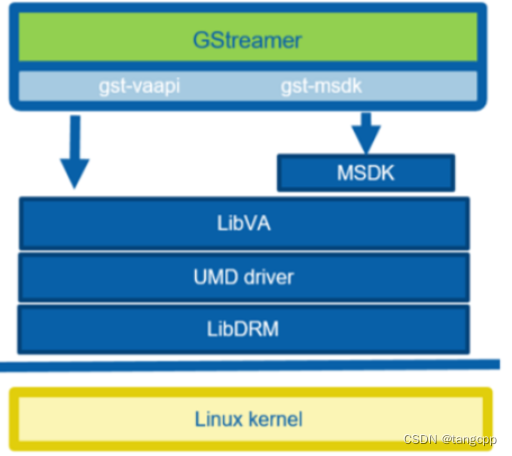

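DRM on Linux

Direct Rendering Manager

DRM manages the graphics card drivers and exposes interfaces for video memory management, parameter configuration, and so on, so that user space can program and control the graphics card.

libva is the VA-API implementation provided by Intel. On top of DRM, it wraps the features of Intel's own graphics hardware in its own API.

FFmpeg hardware decoding example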

int main(int argc, char *argv[])

{

AVFormatContext *input_ctx = NULL;

int video_stream, ret;

AVStream *video = NULL;

AVCodecContext *decoder_ctx = NULL;

AVCodec *decoder = NULL;

AVPacket packet;

enum AVHWDeviceType type;

int i;

if (argc < 4) {

fprintf(stderr, "Usage: %s <device type> <input file> <output file>\n", argv[0]);

return -1;

}

type = av_hwdevice_find_type_by_name(argv[1]);

if (type == AV_HWDEVICE_TYPE_NONE) {

fprintf(stderr, "Device type %s is not supported.\n", argv[1]);

fprintf(stderr, "Available device types:");

while((type = av_hwdevice_iterate_types(type)) != AV_HWDEVICE_TYPE_NONE)

fprintf(stderr, " %s", av_hwdevice_get_type_name(type));

fprintf(stderr, "\n");

return -1;

}

/* open the input file */

if (avformat_open_input(&input_ctx, argv[2], NULL, NULL) != 0) {

fprintf(stderr, "Cannot open input file '%s'\n", argv[2]);

return -1;

}

if (avformat_find_stream_info(input_ctx, NULL) < 0) {

fprintf(stderr, "Cannot find input stream information.\n");

return -1;

}

/* find the video stream information */

ret = av_find_best_stream(input_ctx, AVMEDIA_TYPE_VIDEO, -1, -1, &decoder, 0);

if (ret < 0) {

fprintf(stderr, "Cannot find a video stream in the input file\n");

return -1;

}

video_stream = ret;

for (i = 0;; i++) {

const AVCodecHWConfig *config = avcodec_get_hw_config(decoder, i);

if (!config) {

fprintf(stderr, "Decoder %s does not support device type %s.\n",

decoder->name, av_hwdevice_get_type_name(type));

return -1;

}

if (config->methods & AV_CODEC_HW_CONFIG_METHOD_HW_DEVICE_CTX &&

config->device_type == type) {

hw_pix_fmt = config->pix_fmt;

break;

}

}

if (!(decoder_ctx = avcodec_alloc_context3(decoder)))

return AVERROR(ENOMEM);

video = input_ctx->streams[video_stream];

if (avcodec_parameters_to_context(decoder_ctx, video->codecpar) < 0)

return -1;

decoder_ctx->get_format = get_hw_format;

if (hw_decoder_init(decoder_ctx, type) < 0)

return -1;

if ((ret = avcodec_open2(decoder_ctx, decoder, NULL)) < 0) {

fprintf(stderr, "Failed to open codec for stream #%u\n", video_stream);

return -1;

}

/* open the file to dump raw data */

output_file = fopen(argv[3], "w+");

/* actual decoding and dump the raw data */

while (ret >= 0) {

if ((ret = av_read_frame(input_ctx, &packet)) < 0)

break;

if (video_stream == packet.stream_index)

ret = decode_write(decoder_ctx, &packet);

av_packet_unref(&packet);

}

/* flush the decoder */

packet.data = NULL;

packet.size = 0;

ret = decode_write(decoder_ctx, &packet);

av_packet_unref(&packet);

if (output_file)

fclose(output_file);

avcodec_free_context(&decoder_ctx);

avformat_close_input(&input_ctx);

av_buffer_unref(&hw_device_ctx);

return 0;

}

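// The codec context has a hardware device context field, ctx->hw_device_ctx, which must be set during initialization, before the codec is opened

// The AVHWDeviceContext is passed through the data pointer of the AVBufferRef stored in AVCodecContext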

static int hw_decoder_init(AVCodecContext *ctx, const enum AVHWDeviceType type)

{

int err = 0;

if ((err = av_hwdevice_ctx_create(&hw_device_ctx, type,

NULL, NULL, 0)) < 0) {

fprintf(stderr, "Failed to create specified HW device.\n");

return err;

}

ctx->hw_device_ctx = av_buffer_ref(hw_device_ctx);

return err;

}
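The function above hands the codec context its own reference with av_buffer_ref() while the program keeps the original one. The sketch below illustrates that shared-ownership idea with a deliberately simplified, single-threaded reference counter; every `Demo*` and `demo_*` name is hypothetical, and the real AVBufferRef implementation differs (for example, it is thread-safe and frees the payload through a callback).

```c
#include <assert.h>
#include <stdlib.h>

/* Shared state: one counter and one payload pointer (not owned here). */
typedef struct DemoBuffer {
    int   refcount;
    void *data;
} DemoBuffer;

/* Each owner holds its own small handle that points at the shared state. */
typedef struct DemoRef {
    DemoBuffer *buffer;
} DemoRef;

DemoRef *demo_ref_create(void *data)
{
    DemoBuffer *buffer = malloc(sizeof(*buffer));
    DemoRef *ref = malloc(sizeof(*ref));
    if (!buffer || !ref) {
        free(buffer);
        free(ref);
        return NULL;
    }
    buffer->refcount = 1;
    buffer->data = data;
    ref->buffer = buffer;
    return ref;
}

/* New handle to the same shared state; bumps the counter. */
DemoRef *demo_ref(const DemoRef *src)
{
    assert(src != NULL);
    DemoRef *ref = malloc(sizeof(*ref));
    if (!ref)
        return NULL;
    ref->buffer = src->buffer;
    ref->buffer->refcount++;
    return ref;
}

/* Drops one handle; the shared state is freed with the last handle. */
void demo_unref(DemoRef **ref)
{
    if (!ref || !*ref)
        return;
    if (--(*ref)->buffer->refcount == 0)
        free((*ref)->buffer);
    free(*ref);
    *ref = NULL;
}

int demo_refcount(const DemoRef *ref)
{
    assert(ref != NULL);
    return ref->buffer->refcount;
}

/* Mirrors the example: one global handle plus one held by the codec context. */
int demo_walkthrough(void)
{
    int device = 42;                               /* stand-in payload */
    DemoRef *global_ref = demo_ref_create(&device);
    if (!global_ref)
        return -1;
    DemoRef *codec_ref = demo_ref(global_ref);     /* like av_buffer_ref(hw_device_ctx) */
    if (!codec_ref) {
        demo_unref(&global_ref);
        return -1;
    }
    int while_shared = demo_refcount(global_ref);  /* two owners */
    demo_unref(&codec_ref);                        /* codec context gives up its handle */
    int after_codec = demo_refcount(global_ref);   /* one owner left */
    demo_unref(&global_ref);                       /* like av_buffer_unref(&hw_device_ctx) */
    return while_shared * 10 + after_codec;
}
```

Because each owner releases only its own handle, the order in which the codec context and the program drop their references does not matter.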

int av_hwdevice_ctx_create(AVBufferRef **pdevice_ref, enum AVHWDeviceType type,

const char *device, AVDictionary *opts, int flags)

{

AVBufferRef *device_ref = NULL;

AVHWDeviceContext *device_ctx;

int ret = 0;

device_ref = av_hwdevice_ctx_alloc(type);

if (!device_ref) {

ret = AVERROR(ENOMEM);

goto fail;

}

device_ctx = (AVHWDeviceContext*)device_ref->data;

if (!device_ctx->internal->hw_type->device_create) {

ret = AVERROR(ENOSYS);

goto fail;

}

ret = device_ctx->internal->hw_type->device_create(device_ctx, device,

opts, flags);

if (ret < 0)

goto fail;

ret = av_hwdevice_ctx_init(device_ref);

if (ret < 0)

goto fail;

*pdevice_ref = device_ref;

return 0;

fail:

av_buffer_unref(&device_ref);

*pdevice_ref = NULL;

return ret;

}

VP9

AVCodec ff_vp9_decoder = {

.name = "vp9",

.long_name = NULL_IF_CONFIG_SMALL("Google VP9"),

.type = AVMEDIA_TYPE_VIDEO,

.id = AV_CODEC_ID_VP9,

.priv_data_size = sizeof(VP9Context),

.init = vp9_decode_init,

.close = vp9_decode_free,

.decode = vp9_decode_frame,

.capabilities = AV_CODEC_CAP_DR1 | AV_CODEC_CAP_FRAME_THREADS | AV_CODEC_CAP_SLICE_THREADS,

.caps_internal = FF_CODEC_CAP_SLICE_THREAD_HAS_MF,

.flush = vp9_decode_flush,

.init_thread_copy = ONLY_IF_THREADS_ENABLED(vp9_decode_init_thread_copy),

.update_thread_context = ONLY_IF_THREADS_ENABLED(vp9_decode_update_thread_context),

.profiles = NULL_IF_CONFIG_SMALL(ff_vp9_profiles),

.bsfs = "vp9_superframe_split",

.hw_configs = (const AVCodecHWConfigInternal*[]) {

#if CONFIG_VP9_DXVA2_HWACCEL

HWACCEL_DXVA2(vp9),

#endif

#if CONFIG_VP9_D3D11VA_HWACCEL

HWACCEL_D3D11VA(vp9),

#endif

#if CONFIG_VP9_D3D11VA2_HWACCEL

HWACCEL_D3D11VA2(vp9),

#endif

#if CONFIG_VP9_NVDEC_HWACCEL

HWACCEL_NVDEC(vp9),

#endif

#if CONFIG_VP9_VAAPI_HWACCEL

HWACCEL_VAAPI(vp9),

#endif

NULL

},

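};

II. Build, installation, and configuration

Build only the FFmpeg static libraries against the x264 static library bundled with pccodec, and turn on the H.265 decoder with --enable-decoder=hevc. (Decoding uses FFmpeg directly; encoding H.265 additionally requires installing the x265 library and passing --enable-libx265.)

Enable x264 and x265, and pass --disable-lzma.

Build configuration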

ubuntu@ubuntu:/home/xxx/ffmpeg$ ./configure --prefix=/tang/lib/ffmpeg41 --bindir=/tang/lib/ffmpeg41/bin/ --enable-pic --target-os=linux --arch=x86_64 --enable-gpl --enable-static --enable-nonfree --disable-stripping --enable-ffmpeg --enable-ffplay --enable-ffprobe --enable-libmp3lame --enable-libx264 --enable-libx265 --enable-decoder=h264 --enable-decoder=hevc --enable-decoder=mp3 --enable-decoder=mjpeg --enable-decoder=mjpegb --enable-decoder=jpeg2000 --enable-decoder=jpegls --enable-demuxer=mjpeg --enable-protocol=file --enable-avfilter --enable-bsf=aac_adtstoasc --enable-libfdk-aac --disable-x86asm --enable-shared --disable-lzma --extra-cflags='-I/home/xxx/ffmpeg/ffbuild/include/ -I/home/xxx/dev/src/codec_output/include/x264/' --extra-ldflags='-L/usr/local/lib -L/home/xxx/ffmpeg/ffbuild/lib/ -L/home/xxx/dev/src/codec_output/lib'

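Configure: ./configure --prefix=/usr/local/ --enable-shared --with-pic

--prefix=/usr/local/ffmpeg  Install to the given directory (default /usr/local)

--enable-gpl  Allow GPL-licensed code (default off)

--enable-small  Optimize for size instead of speed

--enable-nonfree  Allow non-free code; the resulting libraries and binaries cannot be redistributed

--enable-libfdk-aac  Enable AAC encoding via libfdk-aac (default off)

--enable-libx264  Enable H.264 encoding via libx264 (default off; also requires --enable-gpl)

--enable-filter=delogo  Enable the delogo (logo/watermark removal) filter

--enable-debug  Sets the debug level passed to the compiler (e.g. gcc); it does not control FFmpeg's own log level

--disable-optimizations  Disable compiler optimizations

--enable-libmp3lame  Enable MP3 encoding via LAME

--disable-asm  Disable all assembly optimizations

--enable-pic  Build position-independent code

--enable-pthreads  Use pthreads for multithreading (recent configure scripts detect pthreads automatically; --disable-pthreads turns it off)

--enable-libopus  Enable Opus encoding via libopus

--enable-libspeex  Enable Speex encoding via libspeex

--enable-libvorbis  Enable Vorbis encoding via libvorbis; a native implementation also exists (default off)

--enable-static  Build static libraries (default on)

--enable-shared  Build shared libraries (default off)

--enable-librtmp  Pull RTMP streams through librtmp (default off)

Third-party libraries that go into the FFmpeg shared libraries must themselves be compiled with PIC; otherwise linking fails with symbol relocation errors.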

Install fdk-aac:

wget -O fdk-aac.tar.gz https://github.com/mstorsjo/fdk-aac/tarball/master

./configure --prefix="/home/xxx/ffmpeg/ffbuild/" --disable-shared

make

make install

make distclean

Install libx264 and libmp3lame:

apt-get install libx264-dev

apt-get install libmp3lame-dev

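Subtitle library: apt-get install libass-dev

If ffmpeg then fails to start with the error below, check which libass version is actually installed:

/ffmpeg: error while loading shared libraries: libass.so.9: cannot open shared object file: No such file or directory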

root@ubuntu:/home/xxx/ffmpeg# dpkg -l |grep libass

ii libass-dev:amd64 0.10.1-3ubuntu1 amd64 development files for libass

ii libass4:amd64 0.10.1-3ubuntu1 amd64 library for SSA/ASS subtitles rendering

x264 / x265

x264 download: Index of /pub/videolan/x264/snapshots/

root@ubuntu:/home/xxx/x264-snapshot-20191217-2245-stable#

./configure --prefix=/home/xxx/ffmpeg/ffbuild --enable-shared --enable-static --disable-asm

Static library only: ./configure --prefix=/home/xxx/ffmpeg/ffbuild --enable-static --disable-asm

x265 download: http://ftp.videolan.org/pub/videolan/x265

https://bitbucket.org/multicoreware/x265_git/src/master/ (official repository)

http://ftp.videolan.org/pub/videolan/x265/ (release downloads)

http://ftp.videolan.org/pub/videolan/x265/x265_3.2.tar.gz (this version worked)

Build x265:

PATH=/wwz-build/bin/:$PATH cmake -G "Unix Makefiles" -DCMAKE_INSTALL_PREFIX=/wwz-build/ffmpeg_build -DENABLE_SHARED=on ../../source

PATH=/wwz-build/bin:$PATH make

make install

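After unpacking, go to the ./x265_2.9/build/linux directory and run the script

./make-Makefiles.bash

Error: make-Makefiles.bash: line 3: ccmake: command not found

The cmake-curses-gui package needs to be installed:

apt-get install cmake-curses-gui

Then run ./make-Makefiles.bash again (an options screen pops up partway through; nothing needs to be changed, press q to quit)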

make

make install

Build error log:

- /tang/lib/libvideotranscoder.so: undefined reference to `x264_encoder_open_142'

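Steps: built the newly downloaded x264 and installed it under /usr/local/, then built and installed FFmpeg to /tang/lib/ffmpeg41/lib successfully. Copied the FFmpeg static libraries into the build's library directory with cp /tang/lib/ffmpeg41/lib/*.a ../dev/src/codec_output/lib/, then built and installed pccodec successfully. Building mp4-convert then failed with undefined reference to `x264_encoder_open_142'.

Cause: when the FFmpeg static libraries were copied into the build directory, the headers of the new x264 were not copied with them, so the new x264 library was used together with the old headers. The old header refers to the function x264_encoder_open_142, which the new library no longer provides, so linking fails.

- To work around problem 1, x264 was built as a static library only. FFmpeg's configure then could not find x264 and reported opencl.c:(.text+0xd1): undefined reference to `dlopen'

Cause: x264 was built static-only, but FFmpeg was being built as both static and shared libraries, and the shared build could not find a matching x264 shared library. (dlopen itself lives in libdl, so a missing -ldl on the link line may also be involved; this was not verified here.)

- To work around problem 1, FFmpeg's configure was pointed at the library directories, including the one holding the old x264 library: --extra-ldflags='-L/home/xxx/ffmpeg/ffbuild/lib/ -L/home/xxx/dev/pccodec_v4_hevc/src/codec_output/lib/'. Configure still reported that x264 could not be found.

Cause: only the library paths were added, not the header paths: --extra-cflags='-I/home/xxx/ffmpeg/ffbuild/include/ -I/home/xxx/dev/src/codec_output/include/x264/'

- After building FFmpeg, the static libraries were copied and packaged, and the result crashed at run time. My own local package did not crash, but the H.264 encoder could not be opened.

Cause of the crash: mismatched x264 libraries. 鹏宇's package used my FFmpeg libraries, but he compiled against his own x264 library and headers.

Why the H.264 encoder could not be opened: I had not downloaded and built x264 before building FFmpeg, so the build picked up the older x264 shared library that came with the OpenCV installation. The version is probably too old; at run time it prints: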

x264 [error]: baseline profile doesn't support 4:0:0

[libx264 @ 0x162cd00] Error setting profile baseline.

[libx264 @ 0x162cd00] Possible profiles: baseline main high high10 high422 high444

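- When FFmpeg's configure needs several include or library directories, put them inside one quoted string and give every directory its own -I or -L, for example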

--extra-cflags='-I/home/xxx/ffmpeg/ffbuild/include/ -I/home/xxx/dev/src/codec_output/include/x264/'

--extra-ldflags='-L/home/xxx/ffmpeg/ffbuild/lib/ -L/home/xxx/dev/src/codec_output/lib/'

- When FFmpeg's configure fails, check the log: ffbuild/config.log
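The first error in this log, the undefined reference to `x264_encoder_open_142', comes from a header that pastes a build number into the function name, so a header and a library from different builds disagree about which symbol exists. The sketch below reproduces that mechanism with token pasting; the `DEMO_*` macros and `demo_open` are hypothetical names chosen for illustration, with DEMO_BUILD playing the role of x264's X264_BUILD.

```c
#include <assert.h>
#include <string.h>

/* Hypothetical build number baked into the header. */
#define DEMO_BUILD 142

/* Two-level macros so DEMO_BUILD is expanded before pasting/stringizing. */
#define DEMO_GLUE1(x, y) x##y
#define DEMO_GLUE2(x, y) DEMO_GLUE1(x, y)
#define DEMO_STR1(x) #x
#define DEMO_STR2(x) DEMO_STR1(x)

/* Callers write demo_open(); the preprocessor rewrites it to demo_open_142(). */
#define demo_open DEMO_GLUE2(demo_open_, DEMO_BUILD)

/* The "library" side: only the versioned symbol is actually defined. */
int demo_open(void)
{
    return DEMO_BUILD;
}

/* The symbol name the linker will look for, given this header. */
const char *demo_open_symbol(void)
{
    return DEMO_STR2(demo_open);
}

/* Small self-check tying the pieces together. */
void demo_self_check(void)
{
    assert(strcmp(demo_open_symbol(), "demo_open_142") == 0);
    assert(demo_open() == 142);
}
```

If the header said 142 while the library had been compiled with a different build number, the call site would ask for demo_open_142 and the library would export a differently numbered symbol, which is the kind of link failure recorded above. Keeping headers and libraries from the same build avoids it.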

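III. FFmpeg encoding parameters

/* AVCodecContext acts like an abstract base class: a concrete encoder implementation gives its fields meaning. This listing targets an old FFmpeg API; AVStream.codec and several fields set below (for example lmin/lmax, rc_qsquish, rc_strategy, luma_elim_threshold) were later deprecated or removed, so check avcodec.h for your version. */

pCodecCtxEnc = video_st->codec;

// Codec ID. H.264 is hard-coded here; it could also be taken from the codec ID stored in video_st

pCodecCtxEnc->codec_id = AV_CODEC_ID_H264;

// Type of media the encoder handles

pCodecCtxEnc->codec_type = AVMEDIA_TYPE_VIDEO;

// Target average bitrate in bits per second; a higher bitrate gives a larger file

pCodecCtxEnc->bit_rate = 200000;

// Number of bits the stream may deviate from the target bitrate; a larger value gives rate control more freedom

pCodecCtxEnc->bit_rate_tolerance = 4000000;

// Frame size of the encoded video, in pixels

pCodecCtxEnc->width = 640;

pCodecCtxEnc->height = 480;

// Time base: the unit, in seconds, in which frame timestamps are expressed. For fixed-frame-rate video it is 1/frame rate.

// It is a fraction because many frame rates are not integers, e.g. NTSC runs at 30000/1001 (about 29.97) fps, giving a time base of {1001, 30000}

pCodecCtxEnc->time_base = (AVRational){1, 25}; // 25 fps

// Pixel format, i.e. the color space and layout used to represent each pixel

pCodecCtxEnc->pix_fmt = AV_PIX_FMT_YUV420P; // spelled PIX_FMT_YUV420P in very old releases

// GOP size: at most one I-frame every 250 frames; fewer I-frames give a smaller file

pCodecCtxEnc->gop_size = 250;

// Maximum number of B-frames allowed between two non-B-frames

// 0 disables B-frames

// More B-frames generally give a smaller file

pCodecCtxEnc->max_b_frames = 0;

// Motion estimation pre-pass setting

pCodecCtxEnc->pre_me = 2;

// Minimum and maximum Lagrange multiplier

// The Lagrange multiplier weights bitrate against distortion in rate-distortion optimization (cost = distortion + lambda * rate)

pCodecCtxEnc->lmin = 1;

pCodecCtxEnc->lmax = 5;

// Minimum and maximum quantizer

pCodecCtxEnc->qmin = 10;

pCodecCtxEnc->qmax = 50;

// The quantizer q varies between qmin and qmax;

// qblur controls how strongly that variation is smoothed over time, range 0.0 to 1.0, 0 means no smoothing

pCodecCtxEnc->qblur = 0.0;

// Spatial complexity masking strength, range 0.0 to 1.0

pCodecCtxEnc->spatial_cplx_masking = 0.3;

// Comparison function used by the motion estimation pre-pass

pCodecCtxEnc->me_pre_cmp = 2;

// How q is kept within [qmin, qmax]: 0 clips it, 1 uses a continuous function

pCodecCtxEnc->rc_qsquish = 1;

// Quantizer scale factor between I/P-frames and B-frames; the larger it is, the lower the B-frame quality

// B-frame q = q of the previous P-frame * b_quant_factor + b_quant_offset

pCodecCtxEnc->b_quant_factor = 1.25;

// Quantizer offset between I/P-frames and B-frames; the larger it is, the lower the B-frame quality

pCodecCtxEnc->b_quant_offset = 1.25;

// Quantizer scale factor between P-frames and I-frames

// I-frame q = q of the previous P-frame * i_quant_factor + i_quant_offset

pCodecCtxEnc->i_quant_factor = 0.8;

pCodecCtxEnc->i_quant_offset = 0.0;

// Rate control strategy; see the API documentation for the allowed values

pCodecCtxEnc->rc_strategy = 2;

// B-frame placement strategy

pCodecCtxEnc->b_frame_strategy = 0;

// Luma and chroma elimination thresholds

pCodecCtxEnc->luma_elim_threshold = 0;

pCodecCtxEnc->chroma_elim_threshold = 0;

// DCT algorithm selection (see the FF_DCT_* constants); the choices are optimized for different CPU instruction sets, and 0 selects automatically

pCodecCtxEnc->dct_algo = 0;

// Masking strength for very bright and very dark areas; 0 disables it

pCodecCtxEnc->lumi_masking = 0.0;

pCodecCtxEnc->dark_masking = 0.0;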
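The B-frame formula quoted in the comments above (previous P-frame quantizer times b_quant_factor plus b_quant_offset) can be checked with a little arithmetic. The sketch below is only a worked example of that formula with the values from this listing; the `demo_*` helpers are hypothetical, and clamping the result to [qmin, qmax] afterwards is an assumption made for illustration rather than a description of what any particular encoder does internally.

```c
#include <assert.h>

/* B-frame quantizer derived from the previous P-frame quantizer,
 * following the formula given in the comments of the listing above. */
double demo_b_frame_q(double last_p_q, double b_quant_factor,
                      double b_quant_offset)
{
    assert(b_quant_factor > 0.0);
    return last_p_q * b_quant_factor + b_quant_offset;
}

/* Assumed final step for illustration: keep q inside [q_min, q_max]. */
double demo_clamp_q(double q, double q_min, double q_max)
{
    assert(q_min <= q_max);
    if (q < q_min)
        return q_min;
    if (q > q_max)
        return q_max;
    return q;
}
```

With the listing's b_quant_factor = 1.25 and b_quant_offset = 1.25, a P-frame quantizer of 20 gives a B-frame quantizer of 26.25. A P-frame quantizer of 40 gives 51.25, which the assumed clamp would limit to qmax = 50.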

IV. Command cheat sheet

Cut out a time range: ffmpeg -i 1648188435981154.mp4 -vcodec copy -acodec copy -bsf:a aac_adtstoasc -ss 00:00:00 -to 00:10:00 ./out.mp4 -y

Extract the audio of an MP4 as MP3: ffmpeg -i test.mp4 -ss 00:00:00 -t 00:00:50.0 -q:a 0 -map a test.mp3 (without -t 00:00:50.0 it runs to the end of the file)

ffmpeg -i 1676372559580756.mp4 -vn -acodec copy 1676372559580756.aac

ffmpeg -i 1676372559580756.aac -acodec libmp3lame 1676372559580756.mp3 (with my self-built FFmpeg this fails with Unknown encoder 'libmp3lame'. Installing libmp3lame afterwards does not help; FFmpeg most likely has to be reconfigured with libmp3lame enabled and rebuilt.)

If your own FFmpeg build is missing components, you can download a prebuilt one:

John Van Sickle - FFmpeg Static Builds

wget https://johnvansickle.com/ffmpeg/releases/ffmpeg-release-amd64-static.tar.xz

tar -xvf ffmpeg-release-amd64-static.tar.xz

ffmpeg-5.1.1-amd64-static/ffmpeg -i 1676372559580756.aac -acodec libmp3lame 1676372559580756.mp3
