In linear regression with multiple variables, the value ranges of different features can vary greatly.

This makes gradient descent take a long time to converge.

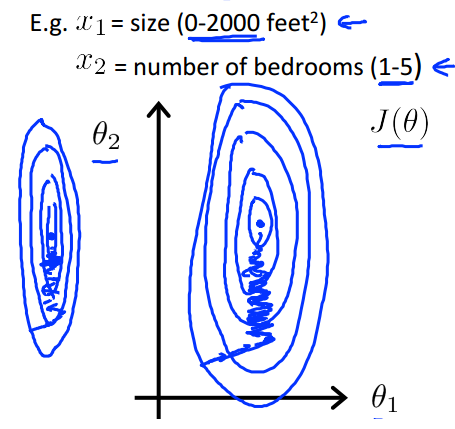

In the house price example, it can look something like this:

The contours of the cost function J(θ) become tall, skinny ellipses, so gradient descent follows a slow zigzag path toward the minimum.

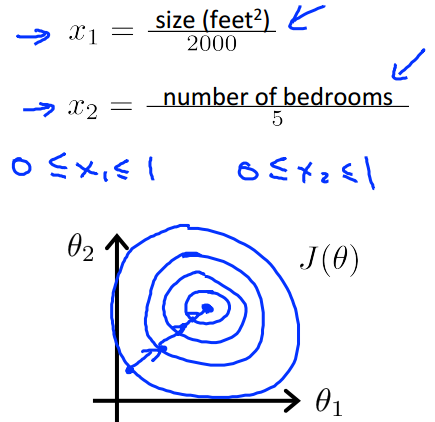

The basic idea for handling this problem is to make sure all features are on a similar scale.
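One simple way to put features on a similar scale is to divide each feature by its largest absolute value, which maps every feature into the range [-1, 1]. The sketch below assumes that rule (the exact formula from the course figure is not reproduced in the text); the `scale_by_max` name and the house data are hypothetical.

```python
def scale_by_max(rows):
    """Divide each feature (column) by its largest absolute value.

    rows: list of samples, each a list of feature values.
    Returns a new list of rows with every feature in [-1, 1].
    """
    n_features = len(rows[0])
    # Largest absolute value per column; fall back to 1.0 for an all-zero column.
    maxima = [max(abs(r[j]) for r in rows) or 1.0 for j in range(n_features)]
    return [[r[j] / maxima[j] for j in range(n_features)] for r in rows]


# Hypothetical house data: [size in square feet, number of bedrooms]
houses = [[2104, 3], [1416, 2], [1534, 3], [852, 2]]
print(scale_by_max(houses))
```

Without scaling, size ranges over hundreds or thousands while bedrooms range over single digits; after dividing, both columns lie between 0 and 1 for this non-negative data.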

After scaling, the contours of the cost function become closer to circles, which lets gradient descent converge in far fewer iterations.

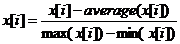
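To see the effect on convergence, the sketch below runs batch gradient descent on a tiny hypothetical data set twice: once on the raw features and once on mean-normalized features. The data, the exact linear rule that generates the prices, the learning rates, the tolerance, and all function names are assumptions chosen for illustration.

```python
# Hypothetical house data: [size in square feet, number of bedrooms] -> price.
X_raw = [[2104, 3], [1416, 2], [1534, 3], [852, 2]]
# Prices generated from an assumed exact linear rule, so the minimum cost is 0.
y = [100 + 0.1 * size + 20 * beds for size, beds in X_raw]


def mean_normalize(rows):
    """Replace each feature x_j with (x_j - mean_j) / (max_j - min_j)."""
    m, n = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / m for j in range(n)]
    spans = [(max(r[j] for r in rows) - min(r[j] for r in rows)) or 1.0 for j in range(n)]
    return [[(r[j] - means[j]) / spans[j] for j in range(n)] for r in rows]


def predict(theta, x):
    """Hypothesis h(x) = theta_0 + theta_1 * x_1 + ... + theta_n * x_n."""
    return theta[0] + sum(t * v for t, v in zip(theta[1:], x))


def cost(theta, X, y):
    """Squared-error cost J(theta) = 1/(2m) * sum((h(x) - y)^2)."""
    errors = [predict(theta, xi) - yi for xi, yi in zip(X, y)]
    return sum(e * e for e in errors) / (2 * len(y))


def iterations_to_converge(X, y, alpha, tol=1e-6, max_iters=10_000):
    """Batch gradient descent starting from theta = 0.

    Returns the number of iterations needed to bring J(theta) below tol,
    or None if that does not happen within max_iters.
    """
    m, n = len(X), len(X[0])
    theta = [0.0] * (n + 1)
    for it in range(1, max_iters + 1):
        errors = [predict(theta, xi) - yi for xi, yi in zip(X, y)]
        # Compute every partial derivative first, then update all parameters simultaneously.
        grad = [sum(errors) / m] + [
            sum(e * xi[j] for e, xi in zip(errors, X)) / m for j in range(n)
        ]
        theta = [t - alpha * g for t, g in zip(theta, grad)]
        if cost(theta, X, y) < tol:
            return it
    return None


# Unscaled: alpha has to be tiny, or the large size values make the updates blow up.
unscaled = iterations_to_converge(X_raw, y, alpha=1e-7)
# Scaled: every feature spans roughly [-0.5, 0.5], so a much larger alpha is stable.
scaled = iterations_to_converge(mean_normalize(X_raw), y, alpha=1.0)
print("unscaled:", unscaled)
print("scaled:  ", scaled)
```

With these assumed settings, the unscaled run should hit the iteration cap without reaching the tolerance (so it returns `None`): the learning rate must stay small enough for the size feature, which leaves progress along the other parameter directions extremely slow. The scaled run tolerates a learning rate of 1.0 and reaches the tolerance well within the cap.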

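Another frequently used formula is mean normalization:

$$x_j := \frac{x_j - \mu_j}{\max(x_j) - \min(x_j)}$$

where $\mu_j$ is the average value of feature $j$ over the training set. It makes every feature range roughly from -0.5 to 0.5.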
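The -0.5 to 0.5 range matches mean normalization, x_j := (x_j - μ_j) / (max_j - min_j), where μ_j is the mean of feature j; the sketch below assumes that formula. Subtracting the mean centers each feature at 0, and dividing by the range makes its spread exactly 1, so values fall roughly between -0.5 and 0.5. The `mean_normalize` name and the house data are hypothetical.

```python
def mean_normalize(rows):
    """Mean normalization: x_j := (x_j - mu_j) / (max_j - min_j) for every feature j."""
    m, n = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / m for j in range(n)]
    # Range of each feature; 1.0 guards against division by zero for a constant column.
    spans = [(max(r[j] for r in rows) - min(r[j] for r in rows)) or 1.0 for j in range(n)]
    return [[(r[j] - means[j]) / spans[j] for j in range(n)] for r in rows]


# Hypothetical house data: [size in square feet, number of bedrooms]
houses = [[2104, 3], [1416, 2], [1534, 3], [852, 2]]
normalized = mean_normalize(houses)
for row in normalized:
    print([round(v, 3) for v in row])
```

A common variant divides by the standard deviation instead of the range; the values are then no longer confined to about [-0.5, 0.5]. Either way, the same μ_j and denominator computed on the training set should be applied to any new input before making a prediction.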

This material comes from the Machine Learning course on Coursera.
