For Spark cluster deployment, see: http://blog.csdn.net/tototuzuoquan/article/details/74481570

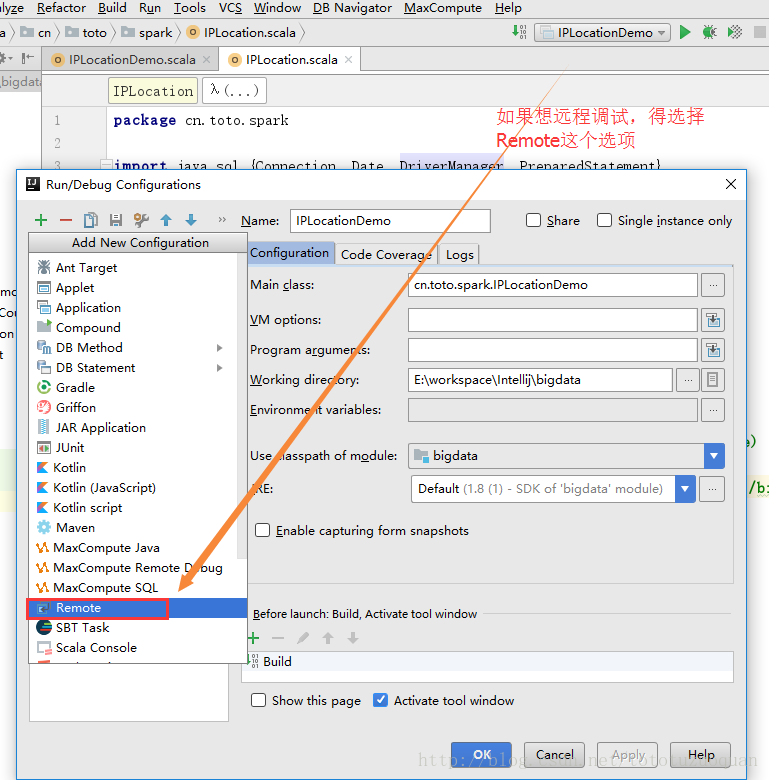

I. Spark remote debugging configuration:

# Debug the Master: add the SPARK_MASTER_OPTS variable to spark-env.sh on the master node

export SPARK_MASTER_OPTS="-Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=y,address=10000"

# Start the Master

sbin/start-master.sh

# Debug the Worker: add the SPARK_WORKER_OPTS variable to spark-env.sh on the worker node

export SPARK_WORKER_OPTS="-Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=y,address=10001"

# Start the Worker

sbin/start-slave.sh 1 spark://hadoop1:7077
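
The Master and Worker settings above differ only in the debug port, so the option string could be produced by one small helper in spark-env.sh. This is a minimal sketch, not something Spark provides: the function name `jdwp_opts` and its optional second argument are assumptions made for illustration, while the ports and JDWP flags are the ones used in this article.

```shell
# Hypothetical helper (not part of Spark): print the JDWP options for one JVM.
# $1 = debug port, $2 = suspend flag (y or n; defaults to y, as in this article).
jdwp_opts() {
  printf '%s' "-Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=${2:-y},address=$1"
}

# Same values as the spark-env.sh lines above: 10000 for the Master, 10001 for the Worker.
export SPARK_MASTER_OPTS="$(jdwp_opts 10000)"
export SPARK_WORKER_OPTS="$(jdwp_opts 10001)"

# Print the resulting values so they can be checked before starting the daemons.
echo "$SPARK_MASTER_OPTS"
echo "$SPARK_WORKER_OPTS"
```

With suspend=y the JVM waits at startup until a debugger attaches to the port. Passing n as the second argument, for example `jdwp_opts 10000 n`, lets the daemon start normally while still accepting a debugger connection later.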

# Debug spark-submit + app (the driver)

bin/spark-submit --class cn.itcast.spark.WordCount --master spark://hadoop1:7077 --driver-java-options "-Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=y,address=10002" /root/wc.jar hdfs://mycluster/wordcount/input/2.txt hdfs://mycluster/out2

# Debug spark-submit + app + executor

bin/spark-submit --class cn.itcast.spark.WordCount --master spark://hadoop1:7077 --conf "spark.executor.extraJavaOptions=-Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=y,address=10003" --driver-java-options "-Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=y,address=10002" /root/wc.jar hdfs://mycluster/wordcount/input/2.txt hdfs://mycluster/out2

II. Write a program to debug

Next:
